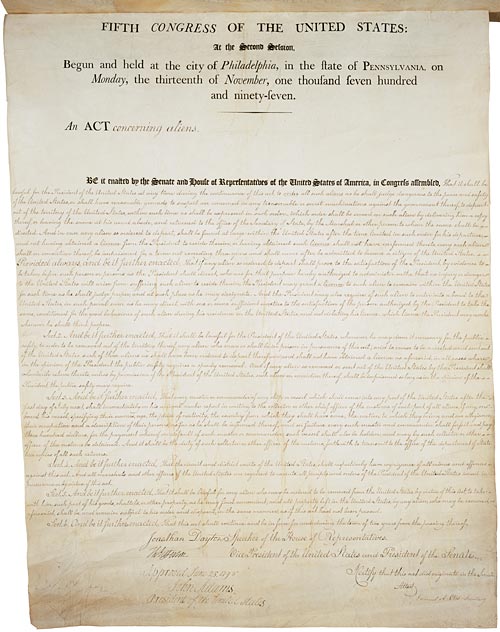

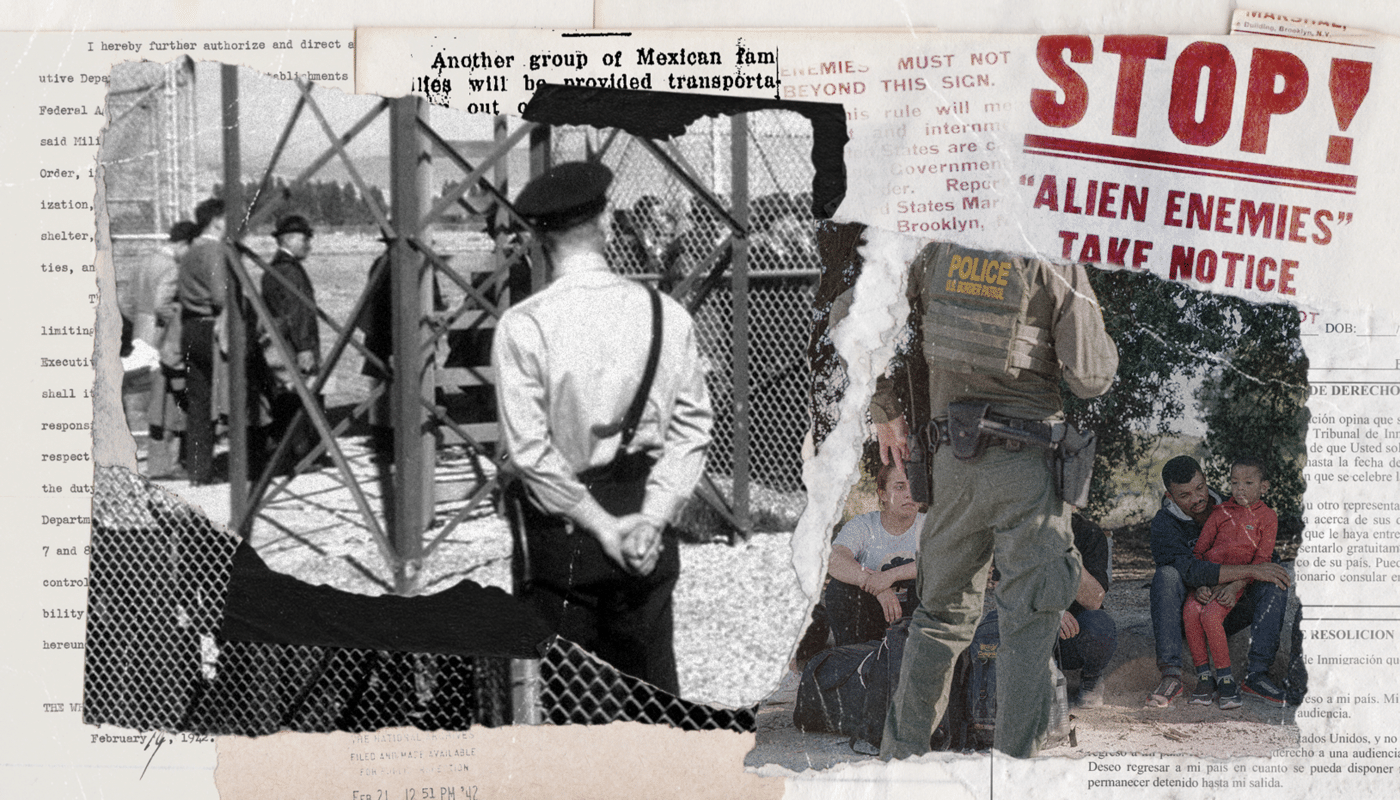

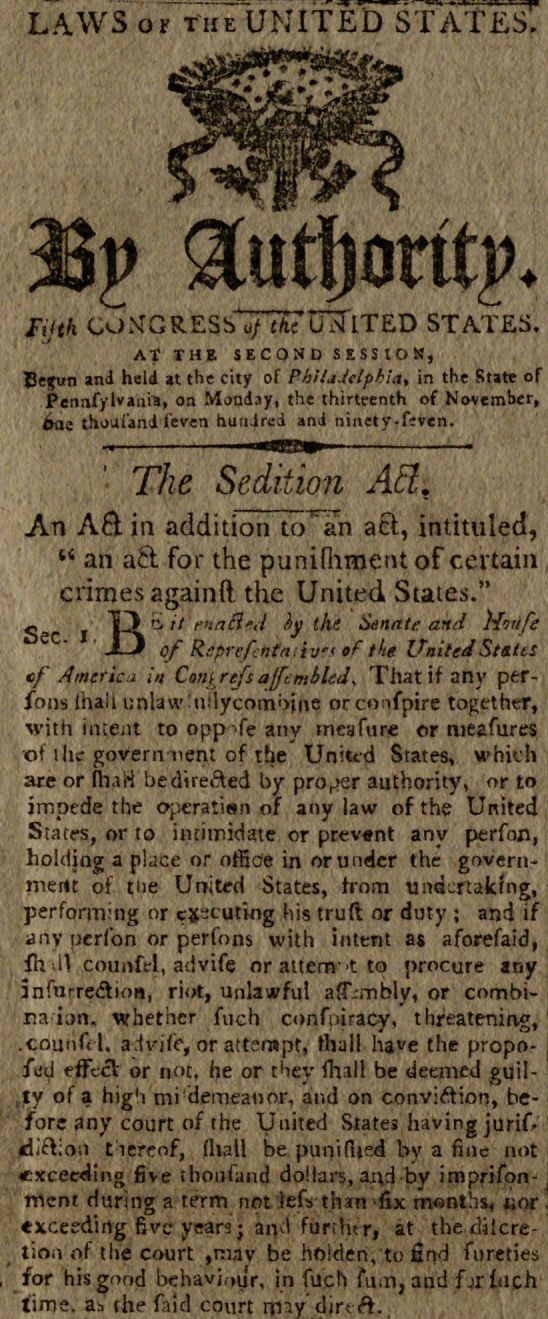

The Alien Enemies Act of 1798 is one of the most enduring pieces of American legislation. It grants the President the authority to detain or deport noncitizens who are natives or citizens of a country with which the United States is at war, or which has carried out or threatened an invasion or predatory incursion. While its origins are rooted in the era of muskets and handwritten letters, the implementation of such high-stakes legal powers in the 21st century has shifted largely to the realm of technology. Today, the “threat” is no longer just physical; it is also digital. In an age of cyber-warfare and algorithmic vetting, the Alien Enemies Act serves as a foundational case study for how modern digital security, artificial intelligence, and data analytics define the concept of national safety.

The Evolution of National Security: From Physical Borders to Digital Vetting

When the Alien Enemies Act was first drafted, identifying a “hostile” individual was a matter of physical documentation and overt association. In the modern tech landscape, this process has been digitized. The transition from physical oversight to digital surveillance represents a tectonic shift in how government agencies utilize technology to enforce 18th-century statutes.

The Architecture of Digital Borders

Modern national security relies on a complex stack of technologies designed to monitor and regulate movement. This “digital border” comprises biometric databases, facial recognition software, and API integrations that connect security databases across agencies and countries. For tech professionals, the Alien Enemies Act is no longer a historical footnote; it is a prompt for the development of robust vetting software that can process millions of data points in milliseconds.

The Role of Cloud Computing in Legal Enforcement

To manage the vast amounts of data required to identify individuals under the purview of national security laws, government agencies rely on hyper-scale cloud providers. These platforms host sensitive datasets that include travel history, financial transactions, and social media activity. The integration of cloud technology allows for the real-time application of legal mandates, moving the enforcement of the 1798 Act from the courtroom to the server room.

Predictive Analytics and the Algorithmic “Enemy”

The most significant technological trend impacting the interpretation of the Alien Enemies Act is the rise of predictive analytics. Software tools now allow for the “scoring” of individuals based on their digital footprint, effectively automating the identification process that was once performed by human agents.

Machine Learning and Threat Assessment

Machine learning (ML) models are now trained on historical security data to identify patterns that might suggest a security risk. In the context of the Alien Enemies Act, these AI tools can analyze vast amounts of unstructured data to determine if an individual’s digital behavior aligns with known threats from hostile nations. However, this raises significant questions regarding algorithmic bias. If the training data is flawed, the AI may disproportionately flag individuals based on nationality or ethnicity, creating a digital echo of the controversies that have surrounded the Act for centuries.
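One way to make the bias concern concrete is to compare how often a model flags each group. The following is a minimal sketch under stated assumptions, not a description of any real vetting system: the group labels, the sample data, and the function names `flag_rates` and `disparity_ratio` are all hypothetical.

```python
from collections import defaultdict


def flag_rates(records):
    """Return the fraction of flagged records for each group.

    `records` is an iterable of (group_label, is_flagged) pairs.
    """
    totals = defaultdict(int)
    flagged = defaultdict(int)
    for group, is_flagged in records:
        totals[group] += 1
        if is_flagged:
            flagged[group] += 1
    return {group: flagged[group] / totals[group] for group in totals}


def disparity_ratio(rates):
    """Ratio of the highest to the lowest group flag rate (1.0 means parity)."""
    lowest = min(rates.values())
    highest = max(rates.values())
    if lowest == 0:
        # Avoid division by zero: parity only if no group is flagged at all.
        return float("inf") if highest > 0 else 1.0
    return highest / lowest


# Hypothetical model outputs: (group label, was the record flagged?)
sample = [
    ("A", True), ("A", False), ("A", False), ("A", False),
    ("B", True), ("B", True), ("B", False), ("B", False),
]

rates = flag_rates(sample)
ratio = disparity_ratio(rates)
print(rates, ratio)
```

A ratio well above 1.0 does not prove the model is biased, but it is a signal that the training data and features deserve a closer audit before the scores are used for anything consequential.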

The Big Data Aggregation Engine

The efficacy of national security tech depends on data aggregation. Companies specializing in “big data” analytics provide the software infrastructure necessary to collect information from public records, the dark web, and private databases. By correlating IP addresses with physical locations and social media handles, these tools build a “digital twin” of an individual, making it very difficult for anyone to remain anonymous in the eyes of the law. This level of technical oversight is a far cry from 1798, yet it is the primary engine behind modern enforcement.

Cybersecurity and the Privacy Paradox

The potential invocation of the Alien Enemies Act in a modern context creates a unique set of challenges for digital security and personal privacy. As the government increases its technological capabilities to monitor “enemies,” the demand for defensive tech—such as encryption and decentralized apps—grows among civilians and residents.

Encryption as a Defensive Tech Trend

For those concerned about government overreach enabled by the Act, end-to-end encryption (E2EE) has become the gold standard. Messaging apps like Signal and hardware-based encryption tools serve as a technical barrier against mass surveillance. From a digital security perspective, this creates a “cat and mouse” game: as the state adopts more sophisticated decryption and forensic tools, the tech community responds with more robust privacy protocols.
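The core idea behind end-to-end encryption is that only the two endpoints ever hold the key. The sketch below illustrates that idea with a toy Diffie-Hellman key agreement. It is an illustration only: the parameters `P` and `G` and the helper names are assumptions chosen for readability, the modulus is far too small for real use, and this is not the protocol Signal or any production app uses.

```python
import hashlib
import secrets

# Toy parameters (assumptions for illustration; NOT secure for real use).
P = 2**127 - 1  # a Mersenne prime, far smaller than real deployments require
G = 3


def keypair():
    """Generate a (private, public) pair for the toy key agreement."""
    private = secrets.randbelow(P - 2) + 1  # random integer in 1..P-2
    public = pow(G, private, P)
    return private, public


def shared_key(own_private, peer_public):
    """Derive a 32-byte key from our private value and the peer's public value."""
    secret = pow(peer_public, own_private, P)
    return hashlib.sha256(secret.to_bytes(16, "big")).digest()


# Each endpoint generates its own pair and publishes only the public half.
alice_private, alice_public = keypair()
bob_private, bob_public = keypair()

# Both sides arrive at the same key without ever transmitting it.
alice_key = shared_key(alice_private, bob_public)
bob_key = shared_key(bob_private, alice_public)
print(alice_key == bob_key)
```

A relay server in this sketch would see only `alice_public` and `bob_public`, which is why intercepting traffic at the server does not by itself reveal message contents.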

The Vulnerability of Centralized Databases

One of the primary tech risks associated with laws like the Alien Enemies Act is the creation of massive, centralized “watchlists.” These databases are prime targets for state-sponsored hackers from the very nations the Act aims to protect against. A breach of a government vetting system doesn’t just compromise national security; it exposes the private data of thousands of individuals to foreign intelligence services. This highlights a critical lesson in cybersecurity: the tools used for state surveillance are often the most significant points of failure.

The Future of AI Ethics and Administrative Law

As we look toward the future, the intersection of 18th-century law and 21st-century technology requires a new framework for AI ethics. The automation of legal enforcement under the Alien Enemies Act poses a risk of “black box” justice, where individuals are detained or deported based on algorithms that no human fully understands.

Mitigating Bias in Security Software

Developers and data scientists are now under pressure to create “explainable AI” (XAI). In the context of security software, XAI aims to ensure that if a system flags an individual under a national security statute, the logic behind that decision is transparent and auditable. This is essential for maintaining the rule of law in an era where software code often functions as a legal authority.
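The simplest form of explainability is a model whose score can be broken down into per-feature contributions. The sketch below assumes a hypothetical linear scoring rule; the feature names, weights, threshold, and the function `explain_score` are invented for illustration and do not describe any actual system.

```python
def explain_score(weights, features, threshold):
    """Score a record with a linear rule and return an auditable breakdown.

    Each feature's contribution is weight * value, so the final score is
    fully accounted for by the listed contributions.
    """
    contributions = {
        name: weights[name] * value for name, value in features.items()
    }
    score = sum(contributions.values())
    # List the largest contributions first so a reviewer sees what drove the result.
    ranked = dict(
        sorted(contributions.items(), key=lambda item: abs(item[1]), reverse=True)
    )
    return {"score": score, "flagged": score >= threshold, "contributions": ranked}


# Hypothetical weights and one hypothetical record.
weights = {"document_mismatch": 5, "incomplete_form": 2, "late_submission": 1}
features = {"document_mismatch": 1, "incomplete_form": 0, "late_submission": 3}

result = explain_score(weights, features, threshold=6)
print(result)
```

Real models are rarely this simple, but the design goal is the same: every flag should come with a record that a human reviewer can inspect and challenge.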

Decentralized Identity (DID) and Sovereignty

A burgeoning trend in the tech space is Decentralized Identity (DID), often built on blockchain technology. DID allows individuals to own and control their own identification data, sharing only what is necessary with government entities. In a scenario where the Alien Enemies Act is invoked, DID could provide a layer of protection, preventing the wholesale seizure of digital identities by centralized authorities. This shift toward “sovereign tech” represents a direct response to the potential for state-level legal powers to infringe upon digital rights.
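The "sharing only what is necessary" idea can be sketched with salted hash commitments, one common building block for selective disclosure. This is a simplified illustration under assumptions: the claim names and the helpers `commit`, `issue`, `disclose`, and `verify` are hypothetical, and a real credential system would also have the issuer digitally sign the digest set, which is omitted here.

```python
import hashlib
import secrets


def commit(name, value, salt):
    """Hash one claim together with a random salt so it cannot be guessed."""
    return hashlib.sha256(f"{salt}|{name}|{value}".encode()).hexdigest()


def issue(claims):
    """Create per-claim salts and the set of digests a verifier would trust.

    In a real system the issuer would sign this digest set (omitted here).
    """
    salts = {name: secrets.token_hex(16) for name in claims}
    digests = {commit(name, value, salts[name]) for name, value in claims.items()}
    return salts, digests


def disclose(claims, salts, name):
    """Holder reveals exactly one claim along with its salt."""
    return {"name": name, "value": claims[name], "salt": salts[name]}


def verify(disclosure, digests):
    """Verifier recomputes the digest and checks it against the trusted set."""
    digest = commit(disclosure["name"], disclosure["value"], disclosure["salt"])
    return digest in digests


# Hypothetical credential with two claims; only one is revealed.
claims = {"age_over_18": "true", "country_of_birth": "Exampleland"}
salts, digests = issue(claims)

shown = disclose(claims, salts, "age_over_18")
print(verify(shown, digests))
```

The verifier learns the one disclosed claim and nothing about the others, because the remaining digests cannot be reversed without their salts.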

Conclusion: Balancing Innovation and Authority

The Alien Enemies Act of 1798 remains a powerful tool in the executive arsenal, but its modern expression is shaped largely by technology. From the AI algorithms that identify threats to the cybersecurity protocols that protect (or expose) personal data, technology has become the medium through which this historical law operates.

For tech professionals, entrepreneurs, and digital security experts, the lesson is clear: software is never neutral. The tools we build to monitor, categorize, and secure our digital world are the same tools that will be used to enforce the laws of the land, no matter how old those laws may be. As we continue to innovate in the fields of AI and big data, the ethical imperative to protect privacy and ensure algorithmic fairness becomes more than just a corporate value—it becomes a fundamental requirement for a free and secure society. In the digital age, the “enemy” is often not a person or a nation, but the potential for our own technology to be used against the principles of justice and transparency.
