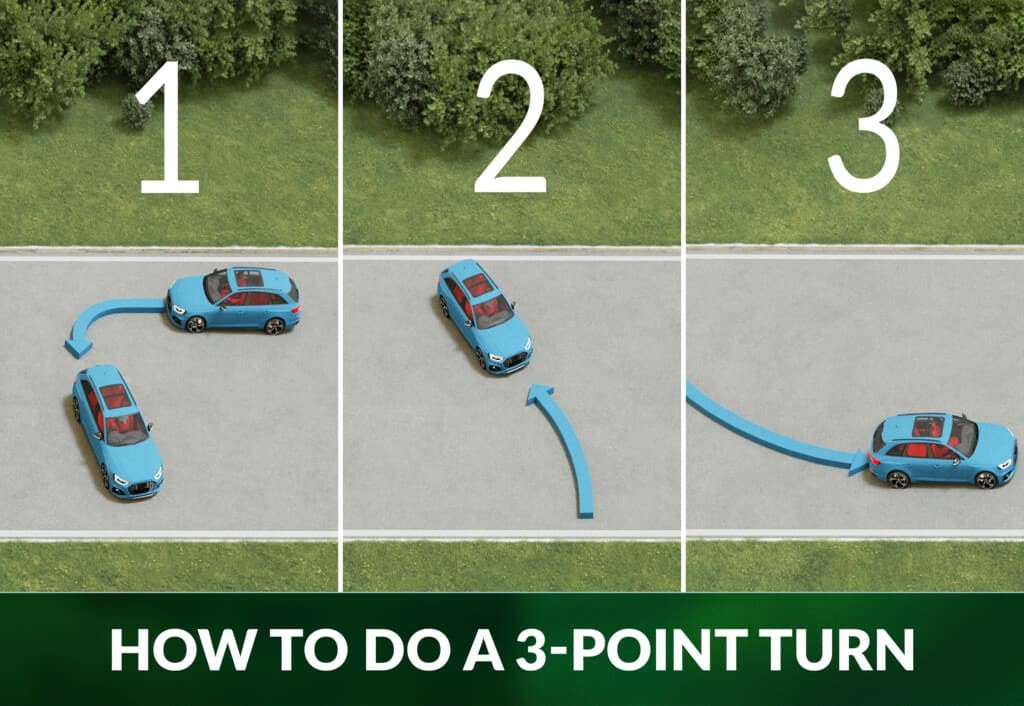

In the lexicon of driving schools and manual vehicle operation, a “Y-turn”—frequently referred to as a three-point turn—is a fundamental maneuver used to reverse a vehicle’s direction in a space too narrow for a U-turn. However, in the rapidly evolving landscape of technology, the Y-turn has transcended its status as a basic human skill to become a critical benchmark in the fields of robotics, artificial intelligence (AI), and autonomous vehicle (AV) development.

As we move toward a future defined by self-driving cars and automated logistics, the ability of a machine to execute a precise Y-turn represents more than just a navigational feat; it is a testament to the sophistication of its path-planning algorithms, sensor fusion, and real-time processing capabilities. This article explores the technical intricacies of the Y-turn within the tech sector, detailing how modern software and hardware work in tandem to master one of the most challenging maneuvers in the digital mobility space.

The Mechanics of the Y-Turn in Modern Robotics

At its core, a Y-turn is a non-holonomic maneuver. In robotics, a non-holonomic system is one whose motion is constrained so that it has fewer controllable degrees of freedom than its pose has in total. A car's pose has three (x, y, and heading), but the controller commands only two inputs (speed and steering angle). A standard wheeled robot or vehicle cannot slide sideways; it can only roll forward or backward while turning. This constraint makes the Y-turn a complex geometric puzzle for software developers.
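The constraint described above can be sketched with the kinematic bicycle model, a common simplification of car-like motion. This is a minimal illustration, not any particular vendor's implementation; the `Pose` class, the `step` function, and the 2.7 m wheelbase are assumptions chosen for the example.

```python
import math
from dataclasses import dataclass


@dataclass
class Pose:
    x: float      # metres
    y: float      # metres
    theta: float  # heading in radians, 0 = facing +x


def step(pose, speed, steer, wheelbase=2.7, dt=0.1):
    """Advance a kinematic bicycle model by one time step.

    speed is signed (negative = reverse); steer is the front-wheel angle
    in radians (positive = left). The wheelbase is an assumed value.
    """
    # The position can only change along the current heading:
    # there is no term that moves the vehicle sideways.
    x = pose.x + speed * math.cos(pose.theta) * dt
    y = pose.y + speed * math.sin(pose.theta) * dt
    # The heading changes only while the vehicle is rolling.
    theta = pose.theta + (speed / wheelbase) * math.tan(steer) * dt
    return Pose(x, y, theta)


# Facing +x with the wheels turned left: the first step still moves the
# car straight ahead; only the heading starts to rotate.
pose = step(Pose(0.0, 0.0, 0.0), speed=1.0, steer=0.5)
print(pose)
```

Because lateral position changes only indirectly, by first rotating the heading and then rolling, reversing direction in a narrow space takes several forward and backward legs.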

From Manual Maneuvers to Algorithmic Precision

For a human, a Y-turn is intuitive. We look, we steer, and we adjust based on visual feedback. For a robot, every segment of that “Y” shape must be calculated as a series of coordinates and steering angles. The tech stack responsible for this involves the “Perception-Planning-Action” loop. The “Perception” layer identifies the boundaries of the road, the “Planning” layer calculates the optimal trajectory to avoid curbs, and the “Action” layer translates those coordinates into torque for the motors and angles for the steering rack.

The Role of LiDAR and Ultrasonic Sensors

To execute a Y-turn safely, a machine requires a 360-degree, high-fidelity view of its surroundings. This is typically achieved through sensor fusion, which combines readings from several sensor types into one picture. LiDAR (Light Detection and Ranging) provides a precise 3D point cloud of the environment, measuring the distance to obstacles. Ultrasonic sensors act as short-range “feelers,” detecting low-lying objects or curbs that longer-range sensors such as LiDAR or cameras might miss. The Y-turn serves as a stress test for these sensors, as the vehicle must switch its primary focus from the front to the rear and sides in rapid succession.
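A deliberately simplified sketch of the fusion idea follows: for each direction around the vehicle, keep the most conservative (nearest) obstacle distance that any sensor reports. Production stacks generally use probabilistic filters and occupancy grids instead; the sector names, the `fuse_ranges` function, and the 3 m ultrasonic cutoff are assumptions made for illustration.

```python
def fuse_ranges(lidar, ultrasonic, ultrasonic_max=3.0):
    """Fuse per-sector obstacle distances (metres) conservatively.

    lidar and ultrasonic map a sector name to the nearest detected
    distance, or None when nothing was detected. Ultrasonic readings
    beyond ultrasonic_max are ignored as unreliable (assumed cutoff).
    """
    fused = {}
    for sector in sorted(set(lidar) | set(ultrasonic)):
        candidates = []
        lidar_range = lidar.get(sector)
        if lidar_range is not None:
            candidates.append(lidar_range)
        ultra_range = ultrasonic.get(sector)
        if ultra_range is not None and ultra_range <= ultrasonic_max:
            candidates.append(ultra_range)
        # Trust whichever sensor reports the closer obstacle.
        fused[sector] = min(candidates) if candidates else None
    return fused


# The LiDAR sees a wall 2 m behind the car, but the ultrasonic sensor
# picks up a low curb at 0.4 m that the LiDAR beam passed over.
readings = fuse_ranges(
    lidar={"front": 5.0, "rear": 2.0},
    ultrasonic={"rear": 0.4, "left": 10.0},
)
print(readings)
```

Taking the minimum is the cautious choice during a tight maneuver: a false alarm costs a little time, while a missed curb costs a wheel.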

Autonomous Vehicles and the “Three-Point” Challenge

While highway driving (essentially staying between two lines at a steady speed) is handled comparatively well by today's Level 2 and Level 3 systems, the Y-turn remains a significant “edge case” in urban navigation. It is a maneuver that usually occurs in unstructured environments, such as narrow residential streets or dead ends, where standardized road markings are often absent.

Solving the Edge Cases of Urban Navigation

Tech giants and AV startups like Waymo, Tesla, and Cruise treat the Y-turn as a high-stakes problem-solving exercise. In an urban setting, a Y-turn is rarely performed in a vacuum. The software must account for “dynamic obstacles”—the cyclist approaching from the blind spot, the pedestrian stepping off the curb, or another vehicle waiting for the maneuver to finish. The ability of an AI to predict the intent of these external actors while calculating its own multi-point turn is a major focus of current deep learning research.

Machine Learning and Trajectory Prediction

Many modern AV stacks use learned models such as Recurrent Neural Networks (RNNs) and Transformers to predict how a scene will evolve over the next few seconds. When an autonomous car initiates a Y-turn, it isn’t just following a static path. It repeatedly re-evaluates its plan against those predictions, asking “What if?” What if the car behind me moves closer? What if I lose traction on this gravel? This constant re-evaluation is what separates a basic automated system from a truly intelligent autonomous agent.
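The “What if?” check can be illustrated with a much simpler predictor than a neural network. The sketch below swaps in a constant-velocity model (a common baseline, not the learned models named above) and tests whether a predicted actor comes too close to the planned path. The function names, the 0.5 s time step, and the 1.5 m safety radius are all assumptions.

```python
import math


def predict(position, velocity, t):
    """Constant-velocity prediction of an actor's position after t seconds."""
    return (position[0] + velocity[0] * t, position[1] + velocity[1] * t)


def first_conflict(plan, actor_pos, actor_vel, dt=0.5, safety_radius=1.5):
    """Return the first time (seconds) at which the actor is predicted to
    come within safety_radius of the planned ego position, or None.

    plan is a list of (x, y) ego positions at t = 0, dt, 2*dt, ...
    """
    for i, (ego_x, ego_y) in enumerate(plan):
        t = i * dt
        actor_x, actor_y = predict(actor_pos, actor_vel, t)
        if math.hypot(ego_x - actor_x, ego_y - actor_y) < safety_radius:
            return t
    return None


# A cyclist approaching from the side at 3 m/s crosses the planned path.
plan = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0)]
print(first_conflict(plan, actor_pos=(2.0, 3.0), actor_vel=(0.0, -3.0)))
```

A planner would run a check like this on every cycle and pause or replan the maneuver whenever a conflict time is returned.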

Software Architecture: Building the Logic for a Y-Turn

Behind the physical movement of the wheels lies a sophisticated software architecture. To solve a Y-turn, developers rely on advanced mathematics and pathfinding logic that can handle the constraints of physics and the unpredictability of the real world.

Pathfinding Algorithms: A*, Dijkstra, and Beyond

In computer science terms, the Y-turn is a search problem. Graph-search algorithms like A* (A-Star), which extends Dijkstra's algorithm with a heuristic, or sampling-based planners like the Rapidly-exploring Random Tree (RRT) are often employed to find a path from Point A to Point B within a constrained space. For a Y-turn, the algorithm must find a sequence of “states” (position and orientation) that connects the initial heading to the desired opposite heading without the vehicle’s “footprint” intersecting with any obstacles. This is computationally demanding to do in real time, especially when the vehicle must adjust its plan mid-maneuver due to new sensor data.
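As a rough illustration of the search described above, the sketch below runs A* over states of (x, y, heading, gear) using six motion primitives: forward or reverse, each with left, straight, or right steering. Everything numeric is an assumption chosen for the example: a 5 m turning radius, 15-degree heading steps, and a corridor in which the vehicle's reference point must stay within 3 m of the centerline. The vehicle is treated as a point rather than a full footprint, which a real planner would not do.

```python
import heapq
import math

R = 5.0                  # assumed minimum turning radius (m)
STEP = math.radians(15)  # heading change per steering primitive
HALF_WIDTH = 3.0         # reference point must keep |y| <= HALF_WIDTH
X_LIMIT = 10.0           # keeps the search region finite
GOAL_K = 12              # 12 * 15 degrees = 180 degrees

# (gear, steer): gear +1 forward / -1 reverse; steer +1 left, 0 straight, -1 right
PRIMITIVES = [(g, s) for g in (1, -1) for s in (1, 0, -1)]


def follow(x, y, k, gear, steer):
    """Follow one primitive exactly and return the new (x, y, heading index)."""
    theta = k * STEP
    if steer == 0:
        dist = gear * R * STEP
        return x + dist * math.cos(theta), y + dist * math.sin(theta), k
    theta_new = theta + gear * steer * STEP
    x += (R / steer) * (math.sin(theta_new) - math.sin(theta))
    y += (R / steer) * (math.cos(theta) - math.cos(theta_new))
    return x, y, (k + gear * steer) % 24


def plan(start=(0.0, -1.5, 0)):
    """A* that minimises the number of primitives, then gear changes.

    The heuristic is the number of 15-degree steps still needed to face
    the opposite way. Checking only the end of each primitive is enough
    here because x and y are monotonic along every 15-degree arc.
    """
    x, y, k = start
    counter = 0  # unique tie-breaker so the heap never compares move lists
    heap = [(abs(GOAL_K - k), 0, counter, 0, x, y, k, 0, [])]
    closed = set()
    while heap:
        _, changes, _, steps, x, y, k, gear, moves = heapq.heappop(heap)
        if k == GOAL_K:
            return moves
        key = (round(x, 3), round(y, 3), k, gear)
        if key in closed:
            continue
        closed.add(key)
        for g, s in PRIMITIVES:
            nx, ny, nk = follow(x, y, k, g, s)
            if abs(ny) > HALF_WIDTH or abs(nx) > X_LIMIT:
                continue  # would leave the corridor
            n_changes = changes + (1 if gear != 0 and g != gear else 0)
            counter += 1
            f = steps + 1 + abs(GOAL_K - nk)
            heapq.heappush(heap, (f, n_changes, counter, steps + 1,
                                  nx, ny, nk, g, moves + [(g, s)]))
    return None


def gear_changes(moves):
    """Count switches between forward and reverse in a move list."""
    return sum(1 for a, b in zip(moves, moves[1:]) if a[0] != b[0])


moves = plan()
print(len(moves), "primitives,", gear_changes(moves), "gear changes")
```

With these assumed numbers, a single-sweep U-turn would need 10 m of lateral room (twice the turning radius) against the 6 m available, so the planner has to split the turn into legs separated by gear changes, which is the Y-turn pattern.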

Real-Time Data Processing and Latency

One of the greatest technical hurdles in executing a Y-turn is latency. The total time it takes for a sensor to detect an obstacle, the software to process it, and the mechanical system to react must be kept very short, typically measured in milliseconds. In a Y-turn, the vehicle is often inches away from obstacles. High-performance onboard computing, often powered by specialized AI chips from companies like NVIDIA, is required to ensure that the “Y” doesn’t turn into a collision. The optimization of these software stacks is a primary area of competition in the tech industry today.
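A quick back-of-the-envelope calculation shows why latency matters when clearances are measured in inches. The function name and the example speed and latency figures below are illustrative assumptions, not measurements from any real system.

```python
def distance_during_latency(speed_kmh, latency_ms):
    """Metres travelled between an obstacle appearing and the vehicle
    beginning to react, at constant speed."""
    speed_m_per_s = speed_kmh / 3.6
    return speed_m_per_s * latency_ms / 1000.0


# At a parking-lot crawl of 5 km/h, compare a few end-to-end latencies.
for latency_ms in (10, 100, 500):
    metres = distance_during_latency(5.0, latency_ms)
    print(f"{latency_ms:>4} ms -> {metres:.3f} m ({metres / 0.0254:.1f} in)")
```

At 5 km/h, 100 ms of end-to-end delay corresponds to roughly 0.14 m (about 5.5 inches) of travel before any reaction begins, which is already comparable to the clearance available in a tight turn.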

The Future of Maneuverability: Beyond the Traditional Y-Turn

As we look toward the future of technology, the very concept of the Y-turn may become a relic of the “manual era.” Innovation in vehicle hardware is beginning to catch up with the capabilities of the software, potentially rendering the traditional three-point maneuver obsolete.

Omni-Directional Wheels and 4-Wheel Steering

New hardware is changing the geometry of mobility. The “CrabWalk” mode of the GMC Hummer EV uses four-wheel steering to move the vehicle diagonally at low speed, while the 90-degree rotating wheels in Hyundai Mobis’s “e-Corner” system allow a vehicle to move sideways or spin in place (similar to a “tank turn”). For a robot or a car that can pivot in place, a Y-turn is replaced by a simple rotation. From a software perspective, this simplifies the path-planning logic significantly, because the non-holonomic constraints that force a multi-point turn are largely relaxed.

AI and the Convergence of Fleet Management

In the future, the Y-turn won’t just be an isolated event; it will be a coordinated effort. Through Vehicle-to-Everything (V2X) communication, a car performing a Y-turn will be able to broadcast its intentions to all surrounding tech—other cars, smart traffic lights, and even the smartphones of pedestrians. This networked approach transforms the Y-turn from a difficult technical maneuver into a choreographed element of a smart city’s traffic flow.

Conclusion

The Y-turn, once a simple requirement for a teenager’s driver’s license, has become a fascinating intersection of geometry, physics, and high-level computation. In the tech industry, mastering the Y-turn is a milestone that signals a company’s readiness to handle the complexities of the real world. Whether it is a warehouse robot navigating a tight corner or a robo-taxi turning around in a narrow alley, the execution of this maneuver is a clear indicator of how far our AI and hardware integration has come.

As we continue to push the boundaries of what is possible in digital and physical mobility, the technical challenges posed by the Y-turn will continue to drive innovation in sensor technology, algorithmic efficiency, and vehicle design. The “turn” we are witnessing today is not just about changing the direction of a vehicle—it is about the tech industry’s pivot toward a fully autonomous, highly efficient future.
