The release of Meta’s Llama 3 marked a pivotal moment in the evolution of open-weights large language models (LLMs). By providing a suite of models that compete with proprietary systems like GPT-4 and Claude 3 on many public benchmarks, Meta has broadened access to high-tier artificial intelligence. However, for developers, researchers, and tech enthusiasts, the most critical question remains: which size is right for the task? The Llama 3 family uses one transformer architecture scaled to different hardware budgets, resulting in distinct “sizes” characterized by their parameter counts.

Understanding the Llama 3 sizes (the 8B and 70B models from the original release, plus the massive 405B model that arrived with Llama 3.1) is essential for optimizing compute resources and achieving peak performance in AI applications. This article explores the technical nuances of these sizes, the hardware required to run them, and how they fit into the broader modern technology stack.

The Foundation of Llama 3: Understanding Parameter Scaling

In the world of LLMs, “size” refers to the number of parameters the model possesses. Parameters are the internal weights that the model learns during training; they determine how the model processes input and generates output. With Llama 3, Meta trained each model on far more data than earlier compute-optimal scaling estimates would call for, so that even the smaller models punch well above their weight class.

The Significance of 8 Billion Parameters

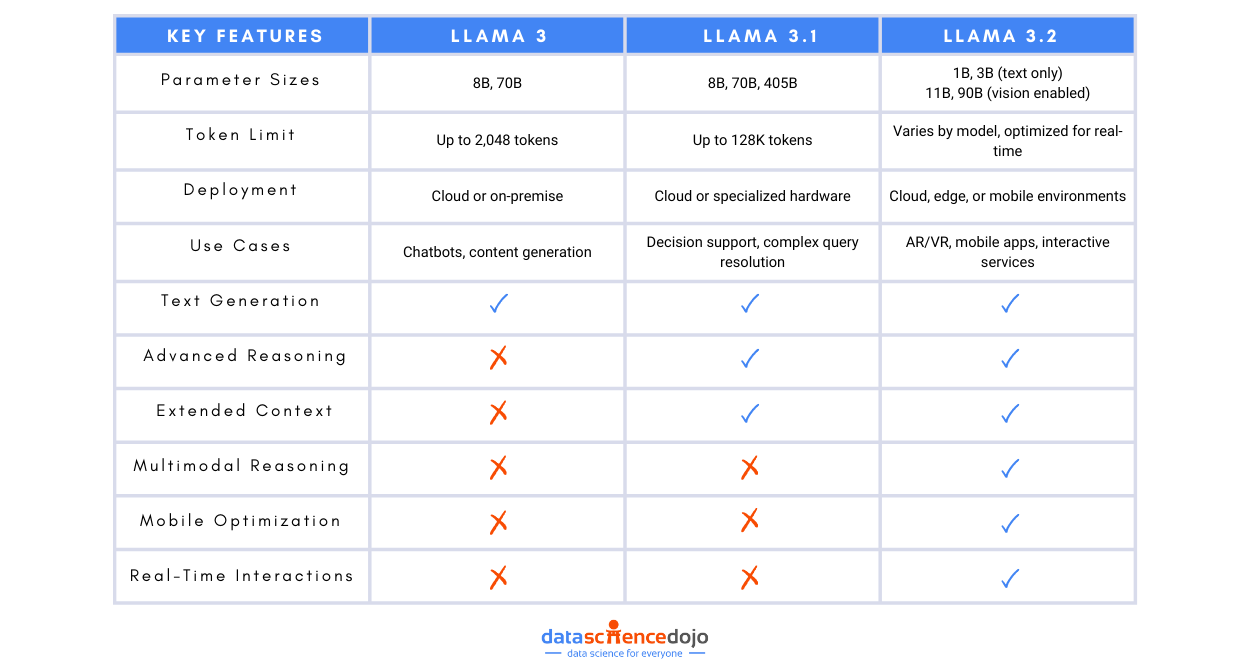

The 8B (8 billion) parameter model represents the “entry-level” size for Llama 3, but the term “entry-level” is deceptive. Unlike previous generations where small models were often seen as mere toys for experimentation, the Llama 3 8B model was trained on over 15 trillion tokens. This massive amount of data allows the 8B model to post benchmark results that previously required models several times its size. In the tech world, this is a significant breakthrough for “edge AI,” where models run locally on laptops or, with aggressive quantization, mobile devices rather than in massive data centers.
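To put the 15 trillion token figure in perspective, the short calculation below divides it by the nominal parameter count. The comparison value of about 20 tokens per parameter is an assumption: it is a commonly cited compute-optimal rule of thumb, not a number from this article or from Meta.

```python
# Rough training-data-to-size ratio for the 8B model.
TRAINING_TOKENS = 15e12          # "over 15 trillion tokens" (from the text above)
PARAMS_8B = 8e9                  # nominal parameter count of the 8B model
RULE_OF_THUMB_TOKENS_PER_PARAM = 20  # assumption: common compute-optimal heuristic


def tokens_per_parameter(tokens: float, params: float) -> float:
    """Return how many training tokens were seen per model parameter."""
    return tokens / params


ratio = tokens_per_parameter(TRAINING_TOKENS, PARAMS_8B)
overtraining_factor = ratio / RULE_OF_THUMB_TOKENS_PER_PARAM

print(f"{ratio:.0f} tokens per parameter")
print(f"about {overtraining_factor:.0f}x the rule-of-thumb ratio")
```

Under these assumptions the 8B model saw on the order of 1,900 tokens per parameter, which is one plausible explanation for why it performs so far above what its size alone would suggest.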

The Leap to 70 Billion and 405 Billion

As we move up to the 70B and the flagship 405B models, the parameter count grows by roughly 9x and 50x relative to the 8B model. The 70B model is widely considered the “sweet spot” for enterprise applications, offering a balance between sophisticated reasoning and manageable operational costs. The 405B model, on the other hand, represents Meta’s attempt to create a frontier-class model that can compete directly with the largest closed-source models in existence. These larger sizes use the same decoder-only transformer design as the 8B model, but with more layers and wider hidden dimensions, allowing them to handle nuanced linguistic patterns and complex coding tasks that smaller models might struggle to parse.
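The way depth and width translate into parameter counts can be sketched with a small calculator. The layer counts, hidden sizes, head counts, and feed-forward widths below are assumptions taken from the publicly released model configurations rather than from this article, and the formula ignores minor details, so treat the output as an estimate.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LlamaConfig:
    """Architecture hyperparameters (assumed values, see lead-in)."""
    vocab_size: int
    hidden: int
    layers: int
    heads: int
    kv_heads: int
    ffn: int


def count_parameters(cfg: LlamaConfig) -> int:
    """Estimate the parameter count of a Llama-style decoder."""
    head_dim = cfg.hidden // cfg.heads
    kv_dim = cfg.kv_heads * head_dim
    # Query and output projections are hidden x hidden; key and value
    # projections are smaller because several query heads share one KV head.
    attention = 2 * cfg.hidden * cfg.hidden + 2 * cfg.hidden * kv_dim
    mlp = 3 * cfg.hidden * cfg.ffn       # gate, up, and down projections
    norms = 2 * cfg.hidden               # two normalization layers per block
    per_layer = attention + mlp + norms
    embeddings = 2 * cfg.vocab_size * cfg.hidden  # input table + output head
    final_norm = cfg.hidden
    return cfg.layers * per_layer + embeddings + final_norm


CONFIGS = {
    "8B": LlamaConfig(128_256, 4096, 32, 32, 8, 14_336),
    "70B": LlamaConfig(128_256, 8192, 80, 64, 8, 28_672),
    "405B": LlamaConfig(128_256, 16_384, 126, 128, 8, 53_248),
}

for name, cfg in CONFIGS.items():
    print(f"{name}: {count_parameters(cfg) / 1e9:.2f}B parameters")
```

The same function covers all three sizes; only the numbers in the configuration change, which is the sense in which the family shares one design.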

The Llama 3 8B: Efficiency and Edge Computing

The 8B model is perhaps the most influential size for the developer community. Its primary advantage is accessibility. In an era where high-end H100 GPUs are scarce and expensive, the ability to run a highly capable LLM on consumer-grade hardware is a game changer.

Hardware Requirements for 8B Deployment

One of the most attractive features of the Llama 3 8B is its relatively low VRAM (Video RAM) requirement. At 16-bit precision (FP16 or BF16), the weights alone occupy approximately 16GB (8 billion parameters at 2 bytes each), with additional memory needed for activations and the attention cache. However, through a process called quantization, which stores the model’s weights at lower precision, the 8B version can be run on GPUs with as little as 6GB to 8GB of VRAM. This makes it compatible with mid-range gaming laptops and standard desktop GPUs like the NVIDIA RTX 3060 or 4070. For developers, this means the ability to prototype, deploy, and (with parameter-efficient methods) fine-tune AI locally without incurring cloud compute costs.
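The arithmetic behind these figures is simple enough to sketch. The helper below is a hypothetical illustration that counts weight storage only; real deployments also need room for activations, the attention cache, and framework overhead, and quantized formats carry a little extra metadata.

```python
def weight_memory_gb(num_params: float, bits_per_weight: float) -> float:
    """Memory for the weights alone, in decimal gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


# Nominal 8B model at common precisions.
for bits in (16, 8, 4):
    print(f"8B at {bits}-bit: ~{weight_memory_gb(8e9, bits):.0f} GB of weights")
```

At 4 bits the weights come to roughly 4GB, which is why a 6GB to 8GB card leaves enough headroom to run the model.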

Ideal Use Cases for the Compact Model

The 8B model excels in specialized, narrow tasks. Because it is fast and has low latency, it is ideal for:

- Customer Support Chatbots: Handling routine queries with high speed.

- Local Content Generation: Assisting writers or developers with drafts and code snippets without sending data to an external server.

- Summarization: Processing individual documents or short emails efficiently.

- Edge Devices: Integrating AI into IoT (Internet of Things) devices where internet connectivity may be intermittent.

The 70B Model: The Enterprise Workhorse

While the 8B model is great for local tasks, the Llama 3 70B is designed for heavy lifting. It is the size most likely to be used by tech companies to power their internal tools and customer-facing AI features.

Advanced Reasoning and Multilingual Support

With roughly nine times as many parameters as the 8B model, the 70B model has far more capacity to store knowledge and represent patterns. In practice this shows up as stronger performance on logic, mathematics, and programming tasks. Unlike the 8B model, which might occasionally lose the thread of a very long and complex instruction, the 70B model maintains high coherence across long-form outputs. It is also significantly more capable in multilingual environments, handling translations and cultural nuances with greater precision than the smaller variant.

Infrastructure and Deployment at Scale

Deploying the 70B model requires professional-grade infrastructure. In its uncompressed 16-bit form, the weights alone take roughly 140GB of VRAM, necessitating multiple A100 or H100 GPUs working in tandem. Even with 4-bit quantization (about 35GB of weights), it typically requires two 24GB GPUs (like the RTX 3090/4090) or a single 48GB enterprise GPU (like the A6000). For most businesses, this model is accessed via cloud providers like AWS, Azure, or Google Cloud, where it can be scaled elastically based on demand. It is a strong choice for companies that need near-frontier capability while maintaining control over their model weights.
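A rough sizing helper makes the GPU counts above easier to reproduce. This is a sketch, not a capacity-planning tool: the function name and the 20% overhead factor for activations and the attention cache are assumptions chosen for illustration, and real requirements depend on batch size and context length.

```python
import math


def gpus_needed(num_params: float, bits_per_weight: float,
                gpu_vram_gb: float, overhead: float = 1.2) -> int:
    """Estimate how many GPUs are needed to hold a model.

    overhead is an assumed multiplier on top of the raw weight size.
    """
    weights_gb = num_params * bits_per_weight / 8 / 1e9
    return math.ceil(weights_gb * overhead / gpu_vram_gb)


print("70B, 4-bit, 24GB cards:", gpus_needed(70e9, 4, 24))
print("70B, 4-bit, 48GB card: ", gpus_needed(70e9, 4, 48))
print("70B, 16-bit, 80GB cards:", gpus_needed(70e9, 16, 80))
```

With the assumed overhead, the 4-bit model fits on two 24GB cards or one 48GB card, matching the figures in the text, while the 16-bit model needs several 80GB data center GPUs.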

The 405B Frontier: Challenging the AI Giants

With the introduction of the 405B model in the Llama 3.1 release, Meta officially entered the “Frontier Model” space. This size is a behemoth, designed to push the boundaries of what open-weights AI can achieve. It is not just about having more parameters; it is about the capabilities that appear at this massive scale.

Training Sophistication and Data Throughput

The 405B model was trained on a massive compute cluster of up to roughly 16,000 H100 GPUs. The “size” here isn’t just a number; it represents a vast library of knowledge and reasoning paths. This model is well suited to “synthetic data generation,” meaning its outputs can be used to train smaller models. In the tech ecosystem, this creates a “distillation” effect: the 405B model acts as a teacher, generating high-quality data that can be used to make future versions of the 8B and 70B models even smarter.
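The teacher-student idea can be sketched as a small data-collection loop. Everything here is hypothetical: `build_distillation_set`, the `teacher` callable, and the length filter are illustrative names and choices, and `stub_teacher` stands in for a real call to a hosted 405B model. Meta's actual pipeline is not described in this article.

```python
import json
from typing import Callable, Iterable


def build_distillation_set(prompts: Iterable[str],
                           teacher: Callable[[str], str],
                           min_chars: int = 20) -> list:
    """Collect (prompt, response) pairs from a teacher model.

    Responses shorter than min_chars are dropped as a crude quality filter.
    """
    records = []
    for prompt in prompts:
        answer = teacher(prompt).strip()
        if len(answer) < min_chars:
            continue  # skip empty or trivially short generations
        records.append({"prompt": prompt, "response": answer})
    return records


def to_jsonl(records: list) -> str:
    """Serialize records as JSON Lines, a common fine-tuning input format."""
    return "\n".join(json.dumps(record) for record in records)


def stub_teacher(prompt: str) -> str:
    """Placeholder for a large-model call; returns a canned response."""
    return f"Step-by-step answer to: {prompt}"


dataset = build_distillation_set(["Explain quantization in one paragraph."],
                                 stub_teacher)
print(to_jsonl(dataset))
```

In a real setup the resulting file would feed a supervised fine-tuning run for the smaller model, usually after much stricter filtering than a length check.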

Benchmarking Against Proprietary Models

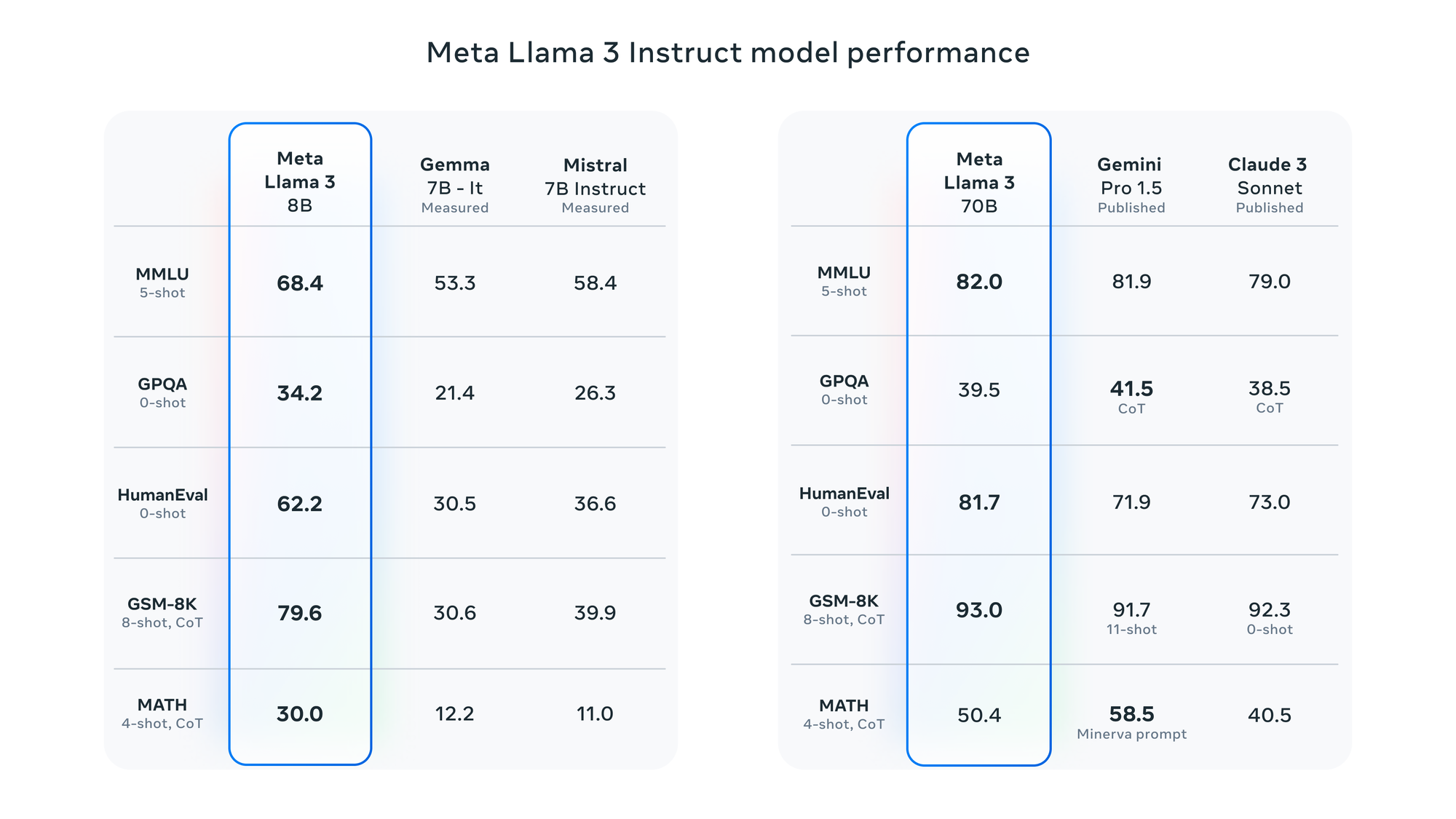

In technical benchmarks (such as MMLU, GSM8K, and HumanEval), Meta’s reported results put the Llama 3.1 405B in the same range as GPT-4o and Claude 3.5 Sonnet. This is a monumental shift in the tech landscape. Previously, the “best” models were always hidden behind APIs. With the 405B size, organizations can now host a frontier-class AI on their own private servers and keep their data in-house. This is particularly critical for sectors like defense, healthcare, and high-finance, where data privacy is non-negotiable.

Architectural Innovations Across All Sizes

Regardless of the parameter count, all Llama 3 sizes share a common architectural DNA that makes them more efficient than their predecessors (Llama 2 and Llama 1).

Grouped Query Attention (GQA)

A major technical upgrade in Llama 3 is the standard use of Grouped Query Attention (GQA) across all sizes, including the 8B. In Llama 2, GQA was reserved for the larger models. In GQA, several query heads share a single set of key and value heads, which shrinks the key-value cache that the “attention” mechanism (the part of the model that decides which earlier tokens matter) must keep in memory during generation. The result is faster inference and better handling of longer context windows, so even the smaller models can process information quickly without a massive spike in memory usage.
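The memory effect of sharing key and value heads can be estimated directly. The sketch below assumes the 8B model's published shape (32 layers, 32 query heads, 8 key-value heads, head dimension 128) and an 8,192-token sequence at 16-bit precision; these figures are assumptions not stated in this article, and the function names are illustrative.

```python
def kv_head_for_query(query_head: int, n_heads: int, n_kv_heads: int) -> int:
    """Return the index of the shared KV head used by a given query head."""
    group_size = n_heads // n_kv_heads
    return query_head // group_size


def kv_cache_bytes(layers: int, n_kv_heads: int, head_dim: int,
                   seq_len: int, bytes_per_value: int = 2) -> int:
    """Size of the key-value cache for one sequence (keys plus values)."""
    return 2 * layers * n_kv_heads * head_dim * seq_len * bytes_per_value


GIB = 1024 ** 3
gqa = kv_cache_bytes(layers=32, n_kv_heads=8, head_dim=128, seq_len=8192)
mha = kv_cache_bytes(layers=32, n_kv_heads=32, head_dim=128, seq_len=8192)

print(f"GQA cache (8 KV heads):  {gqa / GIB:.0f} GiB")
print(f"MHA cache (32 KV heads): {mha / GIB:.0f} GiB")
print("query heads 0-7 map to KV heads:",
      [kv_head_for_query(q, 32, 8) for q in range(8)])
```

Under these assumptions the cache is four times smaller with GQA than with one key-value head per query head, and the saving repeats for every concurrent sequence being served.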

Tokenizer and Vocabulary Enhancements

Meta increased the vocabulary size to roughly 128k tokens in Llama 3, up from 32k in Llama 2. This means the model can “read” and “write” more efficiently, using fewer tokens to represent the same words or code. For the user, this translates to faster output generation and more text fitting into the same context window. Whether you are using the 8B or the 405B, the underlying “language” the model speaks is more compact, which also helps with the long, specialized terms common in fields like medicine or legal analysis.
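A toy example shows why a larger vocabulary yields shorter token sequences. Note the assumptions: this greedy longest-match tokenizer and both vocabularies are made up for illustration, and Llama 3's real tokenizer is a byte-pair-encoding scheme that works differently in detail.

```python
def greedy_tokenize(text: str, vocab: set) -> list:
    """Split text by always taking the longest vocabulary entry that matches.

    Single characters are always accepted as a fallback.
    """
    max_len = max(len(entry) for entry in vocab)
    tokens = []
    i = 0
    while i < len(text):
        for size in range(min(max_len, len(text) - i), 0, -1):
            piece = text[i:i + size]
            if size == 1 or piece in vocab:
                tokens.append(piece)
                i += size
                break
    return tokens


text = "def tokenize(text):"
small_vocab = set(text) | {"def", "text"}                   # few multi-char entries
large_vocab = small_vocab | {" tokenize", "(text", "):"}    # more merged pieces

small_tokens = greedy_tokenize(text, small_vocab)
large_tokens = greedy_tokenize(text, large_vocab)
print(len(small_tokens), "tokens with the small vocabulary")
print(len(large_tokens), "tokens with the large vocabulary")
```

Fewer tokens per sentence means fewer generation steps for the same output, which is where the speed benefit comes from.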

Conclusion: Navigating the Llama 3 Ecosystem

The variety of Llama 3 sizes reflects the diverse needs of the modern technological landscape. There is no longer a “one-size-fits-all” approach to AI. The 8B model has democratized local AI, allowing developers to innovate on their own terms. The 70B model has provided a robust, enterprise-grade solution for those who need a balance of power and efficiency. Finally, the 405B model has shattered the ceiling for open-weights performance, proving that open models can compete with the most advanced proprietary systems in the world.

As AI continues to integrate into every facet of software and digital security, choosing the right Llama 3 size will be a defining decision for tech leaders. By matching the model size to the specific hardware constraints and cognitive requirements of the task, developers can build faster, smarter, and more secure applications than ever before.
