In the realm of physics, the standard unit of measure for energy is the Joule. However, as we navigate the complexities of the twenty-first century, energy has transcended the laboratory to become the fundamental currency of the technology sector. From the lithium-ion batteries in our pockets to the sprawling server farms powering global artificial intelligence, understanding the units of measure for energy—and how they are applied—is critical for anyone navigating the current tech landscape.

As technology advances, our reliance on energy grows not just in quantity but in the precision with which we measure it. In the tech industry, we rarely speak of Joules in isolation. Instead, we discuss Watt-hours (Wh), Thermal Design Power (TDP), and Power Usage Effectiveness (PUE). These metrics define the limits of our gadgets, the sustainability of our software, and the scalability of our digital infrastructure.

1. The Core Metrics: From Joules to Watt-Hours

To understand energy in tech, one must first distinguish between energy and power. While often used interchangeably in casual conversation, they represent different dimensions of technological performance.

Understanding the Joule and the Watt

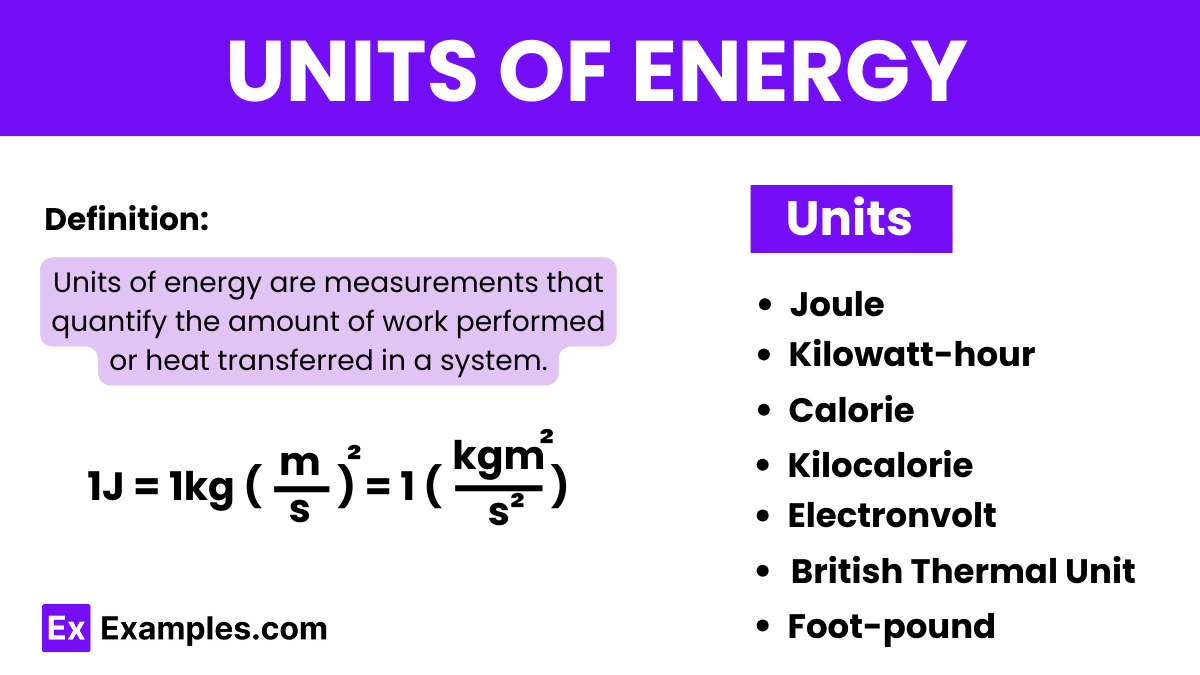

The Joule (J) is the International System of Units (SI) measure for energy. In technical terms, one Joule is the work done by a force of one Newton acting through a distance of one meter. In the context of electronics, a more practical unit is the Watt (W), which measures power—the rate at which energy is transferred. One Watt is defined as one Joule per second. For hardware engineers, the Watt is the primary concern because it dictates how much heat a component will generate and how much electricity it will draw from a source at any given moment.
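The relationship between the two units can be sketched in a few lines of Python. This is a minimal illustration, not a measurement tool; the function names and the 15 W chip are made up for the example.

```python
SECONDS_PER_HOUR = 3600

def energy_joules(power_watts: float, seconds: float) -> float:
    """Energy (J) = power (W) x time (s), because 1 W = 1 J/s."""
    return power_watts * seconds

def joules_to_watt_hours(joules: float) -> float:
    """Convert Joules to Watt-hours (1 Wh = 3600 J)."""
    return joules / SECONDS_PER_HOUR

# Hypothetical 15 W chip running flat out for 2 hours.
energy = energy_joules(15, 2 * SECONDS_PER_HOUR)
print(energy, joules_to_watt_hours(energy))  # 108000 30.0
```

Power tells you how fast energy is flowing at a given moment; energy is what accumulates over time.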

The Significance of the Watt-Hour (Wh)

In consumer technology, particularly for mobile devices and laptops, the Joule is too small a unit for practical use. Instead, the industry relies on the Watt-hour (Wh). A Watt-hour is the energy used by a one-watt load running for one hour, which equals 3,600 Joules. When you look at the specifications of a high-end laptop, you might see a “99.9 Wh battery.” This unit is crucial because it gives a clear picture of capacity: divide it by the device’s average power draw and you get an estimate of how long the device can run. Unlike Milliampere-hours (mAh), which measure only charge, Watt-hours account for voltage, making them the more reliable “unit of measure” for comparing energy capacity across different tech platforms.
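To see why voltage matters, here is a small sketch that converts a mAh rating to Wh and estimates runtime. The 5,000 mAh capacity, the 3.85 V nominal voltage, and the 20 W average draw are illustrative assumptions, not figures from any particular device.

```python
def mah_to_wh(capacity_mah: float, nominal_voltage: float) -> float:
    """Wh = (mAh / 1000) x V. Charge alone says nothing without voltage."""
    return capacity_mah / 1000 * nominal_voltage

def runtime_hours(capacity_wh: float, avg_power_w: float) -> float:
    """Idealized runtime: stored energy divided by average power draw."""
    if avg_power_w <= 0:
        raise ValueError("average power must be positive")
    return capacity_wh / avg_power_w

# Assumed phone battery: 5000 mAh at a 3.85 V nominal voltage (about 19.25 Wh).
phone_wh = mah_to_wh(5000, 3.85)

# The 99.9 Wh laptop battery from the text at an assumed 20 W average draw
# lasts roughly 5 hours.
laptop_hours = runtime_hours(99.9, 20)
print(round(phone_wh, 2), round(laptop_hours, 2))
```

Real runtimes come out lower because of conversion losses and because batteries are rarely drained to zero, so treat the result as an upper bound.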

Thermal Design Power (TDP) in Microchips

For semiconductor giants like Intel, AMD, and Nvidia, one of the most closely watched metrics is Thermal Design Power (TDP). Measured in Watts, TDP represents the amount of heat a chip is expected to generate under a sustained, vendor-defined workload, and therefore the heat its cooling system must be able to remove. It is a measure of power rather than energy, and it is often treated as a rough proxy for how much electricity a chip draws. A lower TDP does not by itself mean a chip does more work per Watt, but it does mean less heat to dissipate, which allows thinner laptop designs and longer battery life without bulky cooling systems.

2. Energy Density and the Evolution of Mobile Hardware

The progression of mobile technology is a history of mastering energy density. As our devices become more powerful, the challenge lies in cramming more energy into smaller physical spaces while maintaining safety and efficiency.

The Role of Lithium-Ion and Energy Density

A battery’s performance is often summarized by its energy density, expressed in Watt-hours per kilogram (Wh/kg) or Watt-hours per liter (Wh/L). This metric determines how much “tech” we can fit into a smartphone. High energy density makes it practical to power vibrant OLED screens, high-refresh-rate displays, and faster processors. The tech industry is currently in a race to move beyond traditional lithium-ion toward solid-state batteries, which promise to as much as double the energy density and so could roughly double the battery life of our gadgets without increasing their size.
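The arithmetic behind energy density is simple enough to sketch. The cell below (15 Wh, 60 g, 0.025 L) is a hypothetical example, and the doubled density is the promise described above, not a measured result.

```python
def gravimetric_density(energy_wh: float, mass_kg: float) -> float:
    """Energy density by mass, in Wh/kg."""
    return energy_wh / mass_kg

def volumetric_density(energy_wh: float, volume_l: float) -> float:
    """Energy density by volume, in Wh/L."""
    return energy_wh / volume_l

def capacity_for_volume(density_wh_per_l: float, volume_l: float) -> float:
    """Energy (Wh) that fits into a fixed volume at a given density."""
    return density_wh_per_l * volume_l

# Hypothetical cell: 15 Wh, 0.06 kg, 0.025 L.
wh_per_kg = gravimetric_density(15, 0.06)   # about 250 Wh/kg
wh_per_l = volumetric_density(15, 0.025)    # about 600 Wh/L

# Same physical space, twice the density: about twice the stored energy.
doubled = capacity_for_volume(2 * wh_per_l, 0.025)
print(round(wh_per_kg), round(wh_per_l), round(doubled))
```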

Efficiency per Cycle: The Longevity Metric

Energy in tech isn’t just about how much you have; it’s about how long you can keep it. The “cycle life” of a battery is a vital measure of long-term energy sustainability. A cycle is defined as one full discharge and recharge. Modern tech companies set capacity-retention targets, for example that after 1,000 cycles a device still retains 80% of its original energy capacity. This focus on the longevity of energy storage is a key component of hardware reliability.
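A retention target like this is easy to check in code. The sketch below also adds partial discharges up into full-cycle equivalents, which is a common counting convention but an assumption here, since the definition above only covers full discharges. All numbers are illustrative.

```python
def equivalent_full_cycles(discharge_fractions: list[float]) -> float:
    """Add up partial discharges (each 0.0 to 1.0) into full-cycle equivalents.

    Assumed convention: two 50% discharges count as one cycle.
    """
    return sum(discharge_fractions)

def capacity_retention(current_wh: float, original_wh: float) -> float:
    """Fraction of the original energy capacity that is still available."""
    return current_wh / original_wh

def meets_target(current_wh: float, original_wh: float,
                 min_retention: float = 0.80) -> bool:
    """True if the battery still holds at least the target share of capacity."""
    return capacity_retention(current_wh, original_wh) >= min_retention

# Hypothetical battery: 50 Wh when new, 41 Wh after heavy use.
print(equivalent_full_cycles([0.5, 0.5, 1.0]))  # 2.0
print(meets_target(41, 50))                     # True
```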

The Impact of 5G and Wireless Energy Loss

As we move toward a more connected world, the way we measure energy loss in wireless transmission becomes paramount. 5G technology, while faster, initially required more energy to maintain signal strength compared to 4G. Engineers typically express this as energy per unit of data transferred, for example Joules per gigabit (radio power in Watts divided by throughput in gigabits per second). Optimizing this ratio is essential for preventing the “battery drain” often associated with new network standards, ensuring that high-speed connectivity doesn’t come at the cost of device endurance.

3. Infrastructure Scale: Energy Metrics in Data Centers and AI

While individual gadgets use small amounts of energy, the infrastructure supporting them—the cloud—is an energy behemoth. Here, the units of measure shift from the micro to the macro, focusing on efficiency at scale.

Power Usage Effectiveness (PUE)

For giants like Google, Amazon (AWS), and Microsoft, the gold standard for measuring energy efficiency is Power Usage Effectiveness (PUE). PUE is the ratio of the total energy a data center consumes to the energy delivered to its computing equipment; everything above 1.0 is cooling, power conversion, and other overhead. A PUE of 1.0 is the theoretical perfect score, meaning all energy goes directly to the computing equipment. Most modern data centers strive for a PUE of 1.2 or lower. This metric is the heartbeat of the tech industry’s sustainability efforts.
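As a quick worked example, PUE is total facility energy divided by the energy used by the computing (IT) equipment. The kWh figures below are invented for illustration.

```python
def pue(total_facility_kwh: float, it_equipment_kwh: float) -> float:
    """Power Usage Effectiveness = total facility energy / IT equipment energy."""
    if it_equipment_kwh <= 0:
        raise ValueError("IT equipment energy must be positive")
    return total_facility_kwh / it_equipment_kwh

def overhead_kwh(total_facility_kwh: float, it_equipment_kwh: float) -> float:
    """Energy spent on cooling, power conversion, lighting and so on."""
    return total_facility_kwh - it_equipment_kwh

# Hypothetical month: 1200 kWh drawn by the facility, 1000 kWh reaching servers.
print(pue(1200, 1000))           # 1.2
print(overhead_kwh(1200, 1000))  # 200
```

A PUE of 1.2 means that for every kWh of computing, another 0.2 kWh goes to overhead.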

The Energy Cost of Artificial Intelligence

The rise of Large Language Models (LLMs) has introduced a new, daunting energy metric: “Kilowatt-hours per training run.” Training a frontier model on the scale of GPT-4 is widely estimated to require millions of kWh, although exact figures are rarely published. Tech analysts now look at “FLOPS per Watt” (Floating-Point Operations per Second per Watt) to measure the efficiency of AI hardware. As AI becomes integrated into every app and software tool, the ability to perform more calculations with less energy is the primary hurdle for the next generation of AI tools.
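Both metrics reduce to simple arithmetic. The sketch below is a back-of-the-envelope estimate only: the accelerator count, power draw, run length, PUE, and throughput are all hypothetical placeholders, not figures for any real model or chip.

```python
def flops_per_watt(flops: float, power_w: float) -> float:
    """Hardware efficiency: floating-point operations per second per Watt."""
    return flops / power_w

def training_energy_kwh(num_accelerators: int, avg_power_w: float,
                        hours: float, pue: float = 1.0) -> float:
    """Rough training-run energy: devices x power x time, scaled by facility PUE."""
    return num_accelerators * avg_power_w * hours * pue / 1000

# Hypothetical run: 1000 accelerators at 500 W each for 30 days (720 h), PUE 1.2.
run_kwh = training_energy_kwh(1000, 500, 720, pue=1.2)
print(round(run_kwh))  # 432000

# Hypothetical accelerator: 1e15 FLOPS at 700 W.
print(f"{flops_per_watt(1e15, 700):.2e} FLOPS/W")
```

The estimate ignores CPUs, networking, storage, and failed or repeated runs, so real totals would be higher.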

Grid Interaction and Megawatts (MW)

Hyperscale data centers are no longer measured by their square footage, but by their power capacity in Megawatts (MW). A 100 MW data center is a massive facility that draws as much power as tens of thousands of homes. In the tech world, energy is now a supply-chain constraint. The ability to secure “renewable Megawatts” is a competitive advantage, leading tech companies to become some of the world’s largest corporate buyers of green energy.
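The comparison with homes can be sanity-checked with a short calculation. The average household draw of 1.2 kW used below is an assumption (roughly 10,500 kWh per year); the real figure varies widely by country and climate.

```python
HOURS_PER_YEAR = 8760

def equivalent_homes(facility_mw: float, avg_home_kw: float = 1.2) -> float:
    """Number of average homes drawing the same power as the facility.

    avg_home_kw is an assumed average household draw, not a measured value.
    """
    return facility_mw * 1000 / avg_home_kw

def annual_energy_gwh(facility_mw: float, utilization: float = 1.0) -> float:
    """Energy used in a year (GWh) at a given average utilization."""
    return facility_mw * HOURS_PER_YEAR * utilization / 1000

print(round(equivalent_homes(100)))  # 83333
print(annual_energy_gwh(100))        # 876.0
```

Note the shift in units: Megawatts describe the facility's power capacity, while Gigawatt-hours describe the energy it actually consumes over a year.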

4. Green Coding: Software as an Energy Variable

One of the most significant shifts in the modern tech landscape is the realization that software itself has an energy footprint. “Green Coding” is the practice of writing algorithms that minimize the computational resources—and thus the energy—required to execute a task.

Computational Complexity and Energy Consumption

In software development, “Big O notation” describes how an algorithm’s running time or memory use grows with the size of its input. Tech leads are now translating that algorithmic efficiency directly into energy units. A poorly written loop in a mobile app can cause the CPU to “wake up” unnecessarily, consuming Milliwatts that add up across millions of users. By optimizing code, developers can reduce the energy load on the device’s battery and the server’s processor.
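The “unnecessary wake-up” problem can be illustrated with a small sketch that contrasts a polling loop with a blocking wait. It counts wake-ups in application code as a stand-in for energy; it does not measure actual power, and the helper names are made up for this example.

```python
import threading
import time

def busy_poll(flag: threading.Event, interval_s: float = 0.001) -> int:
    """Wake up every interval to check the flag; return the number of wake-ups."""
    wakeups = 0
    while not flag.is_set():
        wakeups += 1
        time.sleep(interval_s)
    return wakeups

def blocking_wait(flag: threading.Event) -> int:
    """Block until signalled; the application code resumes exactly once."""
    flag.wait()
    return 1

def run(waiter, delay_s: float = 0.1) -> int:
    """Signal the flag after delay_s and report how often the waiter woke up."""
    flag = threading.Event()
    timer = threading.Timer(delay_s, flag.set)
    timer.start()
    result = waiter(flag)
    timer.join()
    return result

if __name__ == "__main__":
    print("polling wake-ups: ", run(busy_poll))
    print("blocking wake-ups:", run(blocking_wait))
```

Both versions react to the same event, but the polling loop keeps interrupting the CPU's idle state while it waits, which is the pattern that drains batteries at scale.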

The Energy Footprint of Digital Security

Some digital security mechanisms, most visibly blockchain consensus, are notoriously energy-intensive. The energy use of the “Proof of Work” mechanism behind some cryptocurrencies is reported in “Terawatt-hours (TWh) per year,” and a single network’s consumption is often compared to that of entire nations. This has led to a tech-wide push toward “Proof of Stake” and other more energy-efficient validation methods, where security rests no longer on raw computational power, but on economic stake and cryptographic design.

Telemetry and Energy Monitoring Tools

Modern software suites now include energy telemetry. Developers use tools to measure “Joules per transaction” or “CPU cycles per request.” By monitoring these metrics, companies can identify “energy leaks” in their applications. This practice is becoming standard in app development, as users are increasingly sensitive to apps that “drain” their devices.
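A metric such as “Joules per transaction” can be approximated as average power multiplied by elapsed time, divided by the number of transactions. The sketch below takes the power figure as an input because reading real power counters is platform-specific; `assumed_power_w` and the sample handler are hypothetical.

```python
import time
from typing import Callable, Sequence

def joules_per_transaction(avg_power_w: float, elapsed_s: float,
                           transactions: int) -> float:
    """Energy per transaction = (average power x elapsed time) / count."""
    if transactions <= 0:
        raise ValueError("need at least one transaction")
    return avg_power_w * elapsed_s / transactions

def measure(handler: Callable[[int], object], requests: Sequence[int],
            assumed_power_w: float) -> float:
    """Time a batch of requests and estimate Joules per request.

    assumed_power_w is a placeholder for a reading from a real power meter
    or hardware counter.
    """
    start = time.perf_counter()
    for request in requests:
        handler(request)
    elapsed = time.perf_counter() - start
    return joules_per_transaction(assumed_power_w, elapsed, len(requests))

# 50 W average draw, 10 s of work, 1000 transactions -> 0.5 J each.
print(joules_per_transaction(50, 10, 1000))  # 0.5

# Hypothetical workload: summing a range per request at an assumed 10 W.
print(measure(lambda n: sum(range(n)), [10_000] * 100, assumed_power_w=10.0))
```

Tracking this number across releases is one way to spot an “energy leak” before users notice it.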

5. The Future: Quantum Computing and New Energy Paradigms

As we look toward the future of technology, our traditional units of measure for energy may need to evolve to account for the strange world of quantum mechanics and hyper-efficient computing.

Quantum Advantage and Cryogenic Energy

Many quantum computers, such as those built on superconducting qubits, operate at temperatures colder than deep space. Here, the energy metric isn’t just about the electricity going into the processor, but the massive amount of energy required for “cryogenic cooling.” The “energy-per-qubit” is an emerging metric that researchers use to judge the energy cost of reaching Quantum Advantage, the milestone at which a quantum computer outperforms classical supercomputers on specific tasks.

Photonic Computing: Energy at the Speed of Light

One of the most exciting trends in tech is photonic computing, which uses light (photons) instead of electricity (electrons) to process data. Because photons generate almost no heat, the “energy-per-bit” transferred could drop by orders of magnitude. In this niche, the unit of measure shifts toward “femtojoules per bit.” If successful, this would represent the greatest leap in energy efficiency since the invention of the transistor.
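To get a feel for the scale of “femtojoules per bit,” energy per bit is simply power divided by data rate. The 1 mW link running at 10 Gbps below is a hypothetical example, not a figure for any real photonic device.

```python
FEMTO = 1e-15  # 1 femtojoule = 1e-15 Joules

def energy_per_bit_joules(power_w: float, bits_per_second: float) -> float:
    """Energy per bit (J) = power (J/s) / data rate (bit/s)."""
    if bits_per_second <= 0:
        raise ValueError("data rate must be positive")
    return power_w / bits_per_second

def energy_per_bit_fj(power_w: float, bits_per_second: float) -> float:
    """The same quantity expressed in femtojoules per bit."""
    return energy_per_bit_joules(power_w, bits_per_second) / FEMTO

# Hypothetical link: 1 mW at 10 Gbps works out to about 100 fJ per bit.
print(round(energy_per_bit_fj(1e-3, 10e9)))  # 100
```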

Conclusion: Energy as the Ultimate Tech Constraint

Whether we are measuring the Watt-hours in a wearable device’s battery or the Megawatts drawn by a global data center, the units of measure for energy and power are the ultimate yardstick of technological progress. In tech, energy is no longer a background utility; it is a design constraint, a software requirement, and a corporate responsibility. As we continue to push the boundaries of AI, mobile hardware, and digital infrastructure, our ability to measure, manage, and minimize energy consumption will define the next era of innovation. The future of tech is not just about how much data we can process, but how efficiently we can turn energy into intelligence.
