In the rapidly evolving landscape of modern technology, the word “neuron” has migrated from the laboratory of the neurobiologist to the server rooms of Silicon Valley. While biology identifies the multipolar neuron as the most common type in the human central nervous system, the technological revolution has birthed a digital successor: the artificial neuron. In the context of technology, software engineering, and artificial intelligence, understanding the most common type of neuron involves deconstructing the building blocks of the neural networks that power everything from smartphone facial recognition to complex generative AI tools like Large Language Models (LLMs).

This article explores the “most common” digital neurons—specifically the Rectified Linear Unit (ReLU) based artificial neuron—and how these units form the backbone of the contemporary tech stack.

The Blueprint of Intelligence: Understanding the Artificial Neuron

To understand what the most common type of neuron is in the tech world, we must first examine the transition from biological inspiration to digital application. In technology, an artificial neuron is a mathematical function conceived as a model of biological neurons.

From Biological Inspiration to Digital Computation

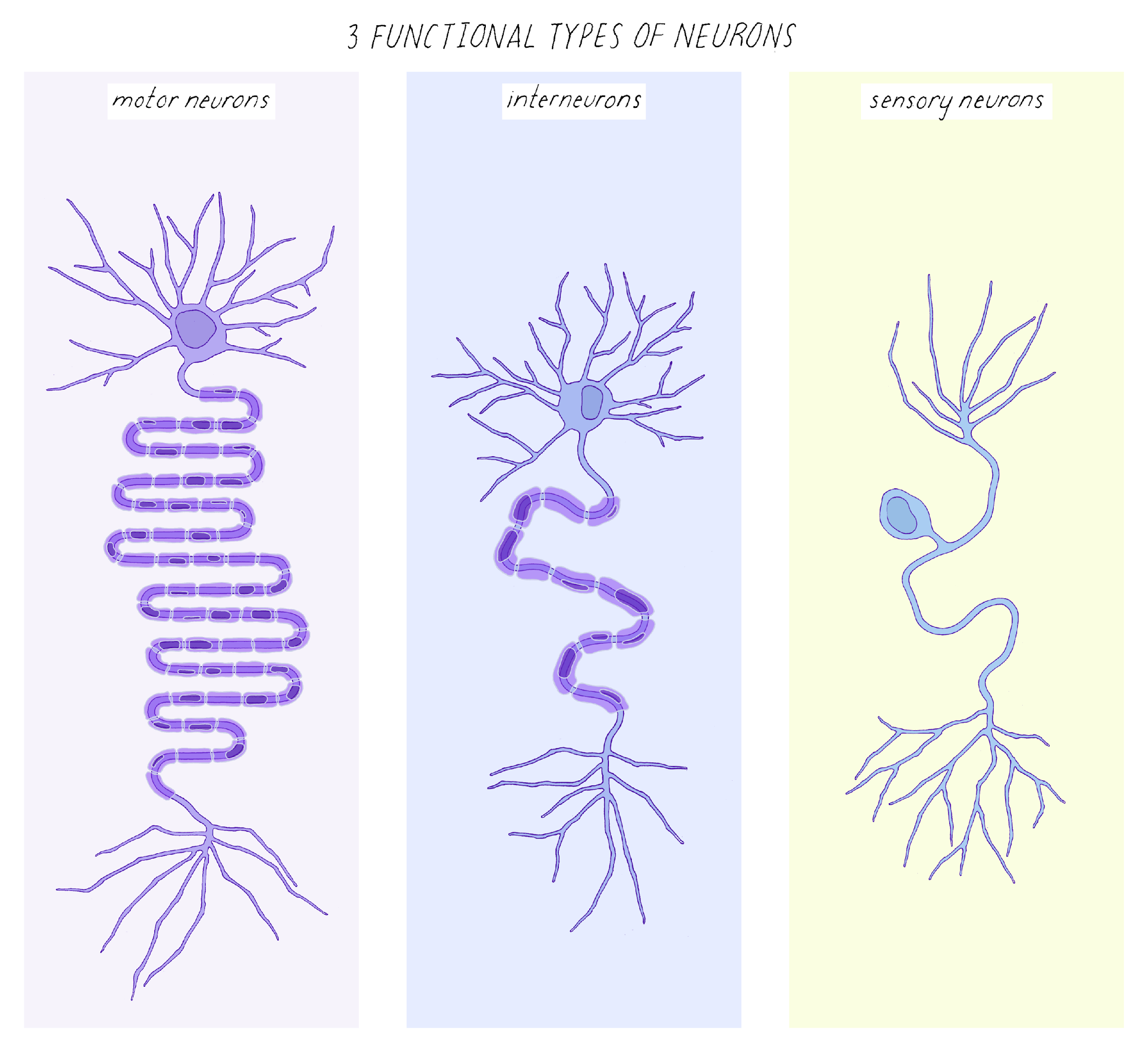

The quest to create “Thinking Machines” led early computer scientists to mimic the structure of the human brain. Just as a biological neuron receives chemical signals through dendrites and fires an electrical pulse down an axon, a digital neuron receives numerical inputs, processes them, and produces an output. While the biological multipolar neuron is characterized by its multiple dendrites and a single axon, its digital counterpart is characterized by its “weights” (one per input) and a single “bias.” This structural mimicry has allowed software developers to build systems that recognize patterns in images, audio, and text that are impractical to capture with hand-written rules.

The Fundamental Logic: Input, Weight, and Summation

The most common structural form of a digital neuron involves three stages: receiving the inputs, computing a weighted sum (plus a bias term), and applying an activation function. In this tech-centric framework, the “most common” neuron isn’t a physical entity but a specific configuration of code. Every time you interact with an AI tool, millions of these digital neurons are each calculating the dot product of an input vector and a weight vector; a whole layer of neurons does this at once as a single matrix multiplication. This process is the foundational “pulse” of modern software, acting as the primary gatekeeper for data processing in machine learning models.
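The weighted sum and activation described above can be sketched in a few lines of Python. This is a minimal illustration, not production code: the function names `relu` and `neuron` and the example numbers are assumptions chosen for readability.

```python
def relu(z):
    # Pass positive values through unchanged; clamp everything else to zero.
    return max(0.0, z)


def neuron(inputs, weights, bias, activation=relu):
    # Hypothetical helper: one artificial neuron with one weight per input.
    if len(inputs) != len(weights):
        raise ValueError("inputs and weights must have the same length")
    # Weighted sum (dot product) plus the bias term.
    z = sum(x * w for x, w in zip(inputs, weights)) + bias
    # The activation function decides what the neuron finally outputs.
    return activation(z)


# Illustrative values only.
output = neuron([1.0, 2.0, 3.0], [0.5, -0.25, 0.25], bias=0.25)
print(output)
```

A real framework performs the same arithmetic for an entire layer at once using matrix operations, which is what makes the computation fast on GPUs.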

Defining the Standard: The Multilayer Perceptron (MLP) and Its Components

In the realm of AI tools and software architecture, the Multilayer Perceptron (MLP) is one of the most widely used architectures in which these neurons reside. Within an MLP, the “type” of neuron is often defined by its position and its activation behavior.

The Perceptron: The Original Building Block

The Perceptron is the ancestor of all modern digital neurons. Developed in the late 1950s, it was a linear classifier that represented the simplest type of “neuron” in tech history. However, the standard “most common” neuron used today has evolved significantly. Modern software no longer relies on the hard binary output of the original Perceptron, whose step function provides no useful gradient to learn from; instead, it uses continuous activation functions that are differentiable (almost everywhere), which allows for “learning” through a process called backpropagation. This evolution is what transitioned AI from a theoretical gadget into a functional tech powerhouse.
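To make the original Perceptron concrete, here is a small sketch of its binary step output and the classic error-driven update rule, trained on the logical AND function. The names `step`, `predict`, `train_perceptron`, and `AND_GATE`, along with the learning rate and epoch count, are illustrative assumptions rather than details taken from any particular historical implementation.

```python
def step(z):
    # The Perceptron's binary output: fire (1) or stay silent (0).
    return 1 if z >= 0 else 0


def predict(weights, bias, x):
    return step(sum(w * xi for w, xi in zip(weights, x)) + bias)


def train_perceptron(samples, epochs=20, lr=1.0):
    # Start from zero weights and nudge them whenever a prediction is wrong.
    weights = [0.0] * len(samples[0][0])
    bias = 0.0
    for _ in range(epochs):
        for x, target in samples:
            error = target - predict(weights, bias, x)
            weights = [w + lr * error * xi for w, xi in zip(weights, x)]
            bias += lr * error
    return weights, bias


# Logical AND is linearly separable, so a single Perceptron can learn it.
AND_GATE = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
weights, bias = train_perceptron(AND_GATE)
predictions = [predict(weights, bias, x) for x, _ in AND_GATE]
print(predictions)
```

Because the step function jumps from 0 to 1, this rule only works for a single layer. Stacking layers requires the smoother activation functions discussed later in this article.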

Why the Hidden Layer Neuron is the “Most Common” in Practice

When we quantify neurons in modern technology, the vast majority exist within the “hidden layers” of deep learning models. A large language model such as GPT-3 has 175 billion parameters, and most of those weights and biases belong to its hidden layers. These are the workhorses of the digital age. Unlike input neurons (which merely pass data along) or output neurons (which provide the final result), hidden layer neurons perform the heavy lifting of feature extraction. They are the “most common” because of the sheer scale required to map complex data relationships in modern apps and gadgets.
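A quick back-of-the-envelope count shows why hidden neurons dominate. The sketch below assumes a small, hypothetical fully connected network (784 inputs, two hidden layers of 512 neurons, 10 outputs); the layer sizes and the helper name `count_parameters` are made up for illustration.

```python
def count_parameters(layer_sizes):
    # Each fully connected layer has one weight per (input, neuron) pair
    # plus one bias per neuron.
    counts = []
    for fan_in, fan_out in zip(layer_sizes, layer_sizes[1:]):
        counts.append(fan_in * fan_out + fan_out)
    return counts


# Hypothetical network: input, hidden, hidden, output.
layer_sizes = [784, 512, 512, 10]
per_layer = count_parameters(layer_sizes)

hidden_neurons = sum(layer_sizes[1:-1])
output_neurons = layer_sizes[-1]
hidden_parameters = sum(per_layer[:-1])
total_parameters = sum(per_layer)

print("hidden neurons:", hidden_neurons, "output neurons:", output_neurons)
print("share of parameters feeding hidden neurons:",
      round(hidden_parameters / total_parameters, 3))
```

Even in this toy network, the hidden neurons outnumber the output neurons by roughly a hundred to one, and the gap widens as models grow.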

The Activation Function: The “Brain” of the Artificial Neuron

If we define the “type” of a neuron by its operational logic, then the Rectified Linear Unit (ReLU), together with its close variants, is arguably the most common type of neuron in the technology sector today.

ReLU (Rectified Linear Unit): The Industry Workhorse

In modern software development and AI training, the ReLU activation function is the gold standard. A ReLU neuron is defined by a simple mathematical rule: if the input is negative or zero, the output is zero; if the input is positive, the output is exactly that input. This simplicity is its greatest strength. Before ReLU became popular, neurons typically used “Sigmoid” or “Tanh” functions, which require computing exponentials and are prone to a technical flaw known as the “vanishing gradient problem”: their slope is close to zero for large positive or negative inputs, so the training signal shrinks as it passes back through many layers. By using ReLU, tech companies can train deeper networks faster, making it the most common component in the neural architecture of current AI tools.
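The difference in gradient behavior can be illustrated with a deliberately simplified model: a chain of single-neuron layers whose local derivatives are multiplied together, ignoring the weights entirely. The helper `chained_gradient` and the depth of 10 are assumptions made for this sketch; real backpropagation also multiplies by weight values.

```python
import math


def relu_grad(z):
    # Slope of ReLU: 1 for positive inputs, 0 otherwise.
    return 1.0 if z > 0 else 0.0


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def sigmoid_grad(z):
    # Slope of the sigmoid; it peaks at 0.25 when z is 0.
    s = sigmoid(z)
    return s * (1.0 - s)


def chained_gradient(grad_fn, z, depth):
    # Simplified picture of backpropagation: multiply the same local
    # slope once per layer (weights are ignored in this sketch).
    return grad_fn(z) ** depth


depth = 10
print("ReLU, active neuron:  ", chained_gradient(relu_grad, 1.0, depth))
print("Sigmoid, best case:   ", chained_gradient(sigmoid_grad, 0.0, depth))
```

Even at its steepest point the sigmoid shrinks the signal by a factor of four per layer, while an active ReLU passes it through unchanged. The flip side is that a ReLU neuron whose input stays negative contributes no gradient at all.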

Sigmoid and Tanh: The Legacy of Non-Linearity

While ReLU is the most common, it is important to recognize the legacy types. Sigmoid neurons were the previous standard, common in early neural networks and basic predictive apps. They map input values to a range between 0 and 1, loosely mimicking the “on/off” firing of biological cells, while Tanh maps inputs to a range between -1 and 1. Today, however, most tutorials and reference models built with frameworks like PyTorch or TensorFlow use ReLU or one of its variants (like Leaky ReLU or GELU) in their hidden layers, because these functions make very deep networks practical to train.
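For comparison, the legacy functions and two ReLU variants can be written directly from their standard definitions. This is a sketch using only Python's `math` module; the slope of 0.01 for Leaky ReLU is a commonly used value and is an assumption here, not something the article specifies.

```python
import math


def sigmoid(z):
    # Squashes any input into the range (0, 1).
    return 1.0 / (1.0 + math.exp(-z))


def tanh(z):
    # Squashes any input into the range (-1, 1).
    return math.tanh(z)


def leaky_relu(z, negative_slope=0.01):
    # Like ReLU, but lets a small fraction of negative inputs through.
    return z if z > 0 else negative_slope * z


def gelu(z):
    # Gaussian Error Linear Unit, exact form using the error function.
    return 0.5 * z * (1.0 + math.erf(z / math.sqrt(2.0)))


for z in (-2.0, 0.0, 2.0):
    print(z, sigmoid(z), tanh(z), leaky_relu(z), gelu(z))
```

Printing the values for a few inputs makes the contrast visible: Sigmoid and Tanh flatten out at the extremes, while the ReLU variants keep growing with positive inputs.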

Specialization in Modern Tech: CNNs, RNNs, and Transformers

As we move toward specialized tech applications—such as digital security, image processing, and translation apps—the most common neuron type shifts slightly to accommodate specific data formats.

Convolutional Neurons for Visual Recognition

In the world of gadgets like smart cameras and self-driving cars, the “Convolutional Neuron” is the dominant type. These neurons are organized into layers that act as filters, scanning images for specific patterns like edges, textures, or shapes. If you use an app that automatically sorts your photos, you are utilizing a Convolutional Neural Network (CNN). This specific type of neural processing is what enables software to “see” and interpret the physical world.
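The idea of a filter scanning an image can be shown with a tiny hand-written convolution. The sketch below assumes a grayscale image stored as a list of lists, no padding, and a stride of one; the function name `conv2d`, the 3x4 image, and the edge-detecting kernel are illustrative choices. As in most deep learning libraries, the kernel is applied without flipping.

```python
def conv2d(image, kernel):
    # Slide the kernel over every position where it fully fits
    # and record the weighted sum at each position.
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    output = []
    for i in range(out_h):
        row = []
        for j in range(out_w):
            total = 0
            for a in range(kh):
                for b in range(kw):
                    total += image[i + a][j + b] * kernel[a][b]
            row.append(total)
        output.append(row)
    return output


# Toy image: dark on the left (0), bright on the right (1).
image = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]
# This kernel responds where brightness increases from left to right.
vertical_edge = [
    [-1, 1],
    [-1, 1],
]
feature_map = conv2d(image, vertical_edge)
print(feature_map)
```

The filter produces its strongest response in the column where dark meets bright and zero everywhere else. In a trained CNN, the kernel values are learned from data rather than written by hand.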

Recurrent Units and the Evolution of Attention Mechanisms

For technologies that deal with sequences, such as voice assistants or real-time translation tools, Recurrent Neural Network (RNN) neurons were once the most common. These neurons have a “memory” component, allowing them to pass information from one step of a sequence to the next. However, since its introduction in 2017, the “Transformer” architecture has largely taken over. In this context, the most common “neuron” is part of an Attention Mechanism, which allows the software to weigh the importance of every piece of the input at once rather than processing it one step at a time. This is the tech that powers the current generative AI boom.
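The “weighing” performed by attention can be sketched for a single query using scaled dot-product attention, the form used in Transformers. The function names and the tiny two-dimensional vectors below are assumptions for illustration; real models use learned projections and many attention heads.

```python
import math


def softmax(scores):
    # Turn raw scores into positive weights that sum to 1.
    highest = max(scores)
    exps = [math.exp(s - highest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(query, keys, values):
    # Score each key by its similarity to the query, scaled by sqrt(dimension).
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    weights = softmax(scores)
    # Blend the values according to the attention weights.
    dim = len(values[0])
    output = [sum(w * v[d] for w, v in zip(weights, values)) for d in range(dim)]
    return weights, output


# Hypothetical toy data: three items, two dimensions each.
query = [1.0, 0.0]
keys = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0]]
values = [[10.0, 0.0], [0.0, 10.0], [5.0, 5.0]]

weights, output = attention(query, keys, values)
print(weights, output)
```

The key most similar to the query receives the largest weight, and all three items are considered in one pass instead of one after another.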

The Future of Neural Processing: Hardware and Neuromorphic Computing

The discussion of the most common type of neuron is not limited to software. As we look at the future of gadgets and digital security, we are seeing the rise of physical neurons in silicon.

Moving Beyond Software: Physical Neurons in Silicon

Neuromorphic computing is a burgeoning field in tech where hardware is designed to mimic the biological brain’s efficiency. Instead of simulating neurons through traditional CPU or GPU instructions, companies are developing “Neuromorphic Chips.” These chips contain physical circuits that act as neurons. This shift represents the next frontier in AI tools, promising to reduce the massive energy consumption currently required by data centers. In this specialized niche, the “Spiking Neuron” is the most common type, sending discrete pulses of electricity only when a specific threshold is met, much like our own brains.
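The threshold behavior of a spiking neuron can be approximated in software with a leaky integrate-and-fire model, one of the simplest spiking neuron models. The sketch below is an assumption-laden toy: the function name `simulate_lif`, the threshold, the leak factor, and the input values are all illustrative and do not describe any specific neuromorphic chip.

```python
def simulate_lif(input_currents, threshold=1.0, leak=0.5, reset=0.0):
    # The membrane potential decays each step (leak), accumulates the
    # incoming current, and emits a spike once it crosses the threshold.
    potential = reset
    spikes = []
    for current in input_currents:
        potential = potential * leak + current
        if potential >= threshold:
            spikes.append(1)
            potential = reset  # After firing, start over.
        else:
            spikes.append(0)
    return spikes


# A steady input that is too weak to fire immediately but builds up over time.
spikes = simulate_lif([0.6] * 6)
print(spikes)
```

The neuron stays silent most of the time and only fires when enough input has accumulated, which is the property that makes spiking hardware attractive for saving energy.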

Scaling Intelligence: The Impact on Modern App Ecosystems

The ubiquity of the artificial neuron has fundamentally changed the app economy. Developers no longer need to write every rule for a piece of software; they simply need to choose the right “type” of neural architecture and provide the data. This has led to an explosion in AI-powered tools that handle everything from digital security (detecting fraudulent patterns) to personalized content feeds. As these neurons become even more integrated into our gadgets, the line between biological processing and digital computation continues to blur.

In conclusion, while biology identifies the multipolar neuron as the most common, the technological landscape has crowned the ReLU-based artificial neuron as its most prevalent equivalent. Through its role in deep learning, its efficiency in software training, and its presence in the billions of parameters of modern AI, the digital neuron is the fundamental unit of the 21st-century tech revolution. Whether you are using a simple app or a sophisticated AI tool, you are relying on a massive network of these digital neurons to navigate the modern world.
