In the landscape of software engineering, data science, and digital architecture, precision is the currency of success. While the average person uses the terms “number” and “numeral” interchangeably in daily conversation, the distinction between the two is foundational to how computers process information, how databases are structured, and how algorithms interact with the physical world. For tech professionals, distinguishing between an abstract mathematical value and its symbolic representation is not merely a pedantic exercise; it is a prerequisite for writing clean code, ensuring data integrity, and building scalable systems.

The Abstract vs. The Concrete: Defining the Core Concepts

To understand the difference, one must first view mathematics through the lens of computer science. At its core, a number is a concept—an abstract mathematical object used to count, measure, or label. It represents an amount or a magnitude. For example, the concept of “five” exists independently of how we write it; it is a quantity that remains constant whether we are counting five servers, five bytes, or five users.

Conversely, a numeral is a symbol or a group of symbols used to represent a number. It is the visual or digital “label” we apply to the abstract concept. The numeral “5” is a character, a glyph that we have culturally agreed represents the value of five. In the world of technology, this distinction is the bridge between human-readable interfaces and machine-executable logic.

Numbers as Data Points and Logic

In programming, a number is usually stored as a primitive data type. Whether it is an integer (a whole number) or a floating-point number (a value with a fractional part), the computer treats it as a value that can be subjected to arithmetic operations. When a developer performs an operation like x + y, the processor manipulates the underlying values, the numbers, to produce a new result. The computer does not “see” the shape of the numeral; it calculates with the magnitude of the number.

Numerals as Strings and Symbols

In contrast, a numeral is frequently handled as a string or a character. When you type your phone number into a web form, the system is generally not treating that input as a “number” (a mathematical value you would add or subtract). Instead, it treats it as a sequence of “numerals” (symbols). This is why a ZIP code like “02138” is stored as a string: if it were stored as a numerical value, the leading zero would be mathematically irrelevant and discarded, leaving “2138”, which is no longer a valid ZIP code.
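The ZIP code case can be sketched in a few lines of Python. The variable names are illustrative only, and the fixed width of five is an assumption based on the “02138” example above.

```python
# A ZIP code is a sequence of numerals, not a quantity to do arithmetic on.
zip_as_text = "02138"

# Converting it to an integer keeps the value but drops the leading zero.
zip_as_number = int(zip_as_text)

print(zip_as_number)      # 2138
print(len(zip_as_text))   # 5: the string still holds all five numerals

# Once only the integer is stored, the original form can be rebuilt
# only if the intended width (assumed here to be 5) is known.
restored = str(zip_as_number).zfill(5)
print(restored)           # 02138
```

Storing the value as text from the start avoids having to remember the padding rule at all.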

Computational Logic: How Systems Process Numerals vs. Values

The way a computer handles the distinction between numerals and numbers determines the efficiency and accuracy of a software system. At the hardware level, computers do not understand numerals at all; they understand electrical states (on and off), which we represent using the binary numeral system (0 and 1).

Data Types and Memory Allocation

When a software engineer defines a variable, they must choose how to store the data. This choice reflects the numeral-number divide. If a value is stored as an int or float, the system allocates a specific amount of memory to hold a binary representation of that number. This allows for high-speed hardware-level arithmetic.

However, if a value is stored as a char or string, the system allocates memory for the numeral. In this state, the “5” is encoded using a standard like ASCII or UTF-8. In both encodings, the numeral “5” is represented by the byte value 53. If a developer mistakenly tries to add the numeral “5” (byte 53) to the number 5, the outcome depends on the language: a strictly typed language raises an error, while a weakly typed language like JavaScript silently concatenates the two and produces “55”, a common source of bugs.
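A minimal Python sketch of the numeral/number mismatch described above. Python takes the “throw an error” path rather than returning “55”; the variable names are illustrative.

```python
numeral = "5"   # a character: the symbol
number = 5      # an integer: the value

# The numeral is stored as a character code; in ASCII that code is 53.
print(ord(numeral))                    # 53
print(list(numeral.encode("ascii")))   # [53]

# Python refuses to mix the two types instead of guessing what was meant.
try:
    result = numeral + number
except TypeError as exc:
    result = None
    print("TypeError:", exc)

# Making the conversion explicit states which meaning is intended.
as_sum = int(numeral) + number    # arithmetic on numbers
as_text = numeral + str(number)   # concatenation of numerals
print(as_sum)    # 10
print(as_text)   # 55
```

The explicit `int(...)` or `str(...)` call is the point where the code decides whether it is working with a value or with a symbol.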

Floating-Point Arithmetic and Precision Issues

The transition from numerals to numbers becomes particularly complex when dealing with decimals. Because floating-point hardware stores values in binary, many decimal fractions cannot be represented exactly. For example, 0.1 is a simple numeral in our decimal system, but in binary it is an infinitely repeating fraction, so the computer stores the nearest value it can represent. This discrepancy between the numeral we see and the number the computer stores can lead to rounding errors in financial software or scientific simulations, which is why understanding the underlying numerical representation is vital for high-stakes tech development.
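The rounding effect is easy to reproduce. The sketch below uses Python's standard `decimal` module as one possible way to keep decimal numerals exact; it is an illustration, not a recommendation for every workload.

```python
from decimal import Decimal
import math

# Binary floating point stores the nearest representable value to 0.1 and 0.2.
total = 0.1 + 0.2
print(total)          # 0.30000000000000004
print(total == 0.3)   # False

# Decimal(0.1) shows the exact value that the float 0.1 actually holds.
print(Decimal(0.1))

# Option 1: compare floats with a tolerance instead of exact equality.
print(math.isclose(total, 0.3))   # True

# Option 2: build Decimals from numerals (strings) to keep base-10 exactness.
exact_total = Decimal("0.1") + Decimal("0.2")
print(exact_total == Decimal("0.3"))   # True
```

Note that `Decimal("0.1")` is built from the numeral, while `Decimal(0.1)` is built from the already-rounded float, which is why the two behave differently.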

Encoding the World: From ASCII to Unicode

The history of digital technology is, in many ways, the history of perfecting how we represent numbers through numerals. As technology became global, the need to represent different numeral systems became a significant engineering challenge.

The Digital Representation of Numerical Glyphs

Early computing relied heavily on ASCII (American Standard Code for Information Interchange), which provided a standard set of numerals for the English-speaking world (0-9). However, as technology spread, it became clear that the “Arabic” numerals used in the West were only one way to represent numbers.

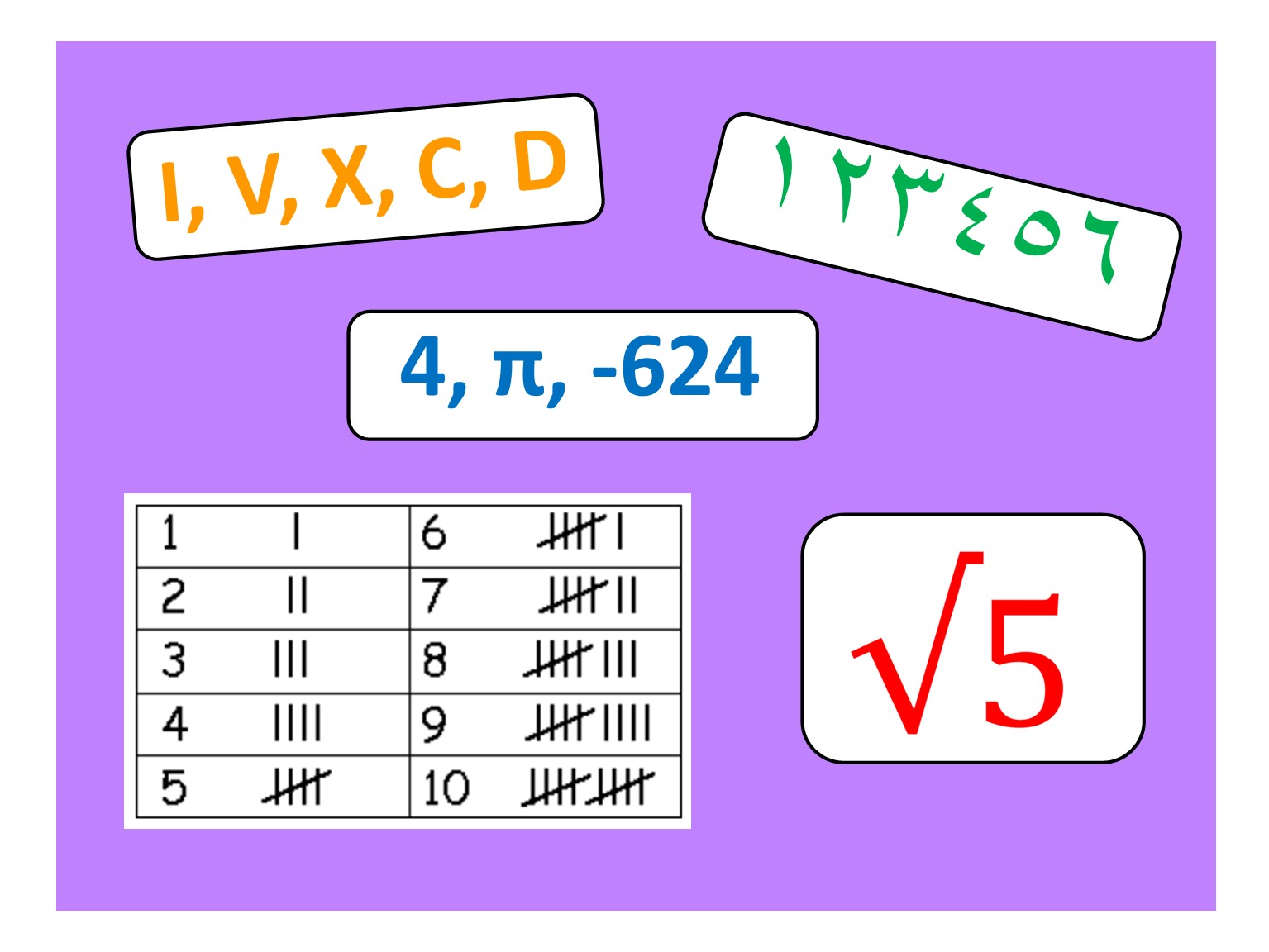

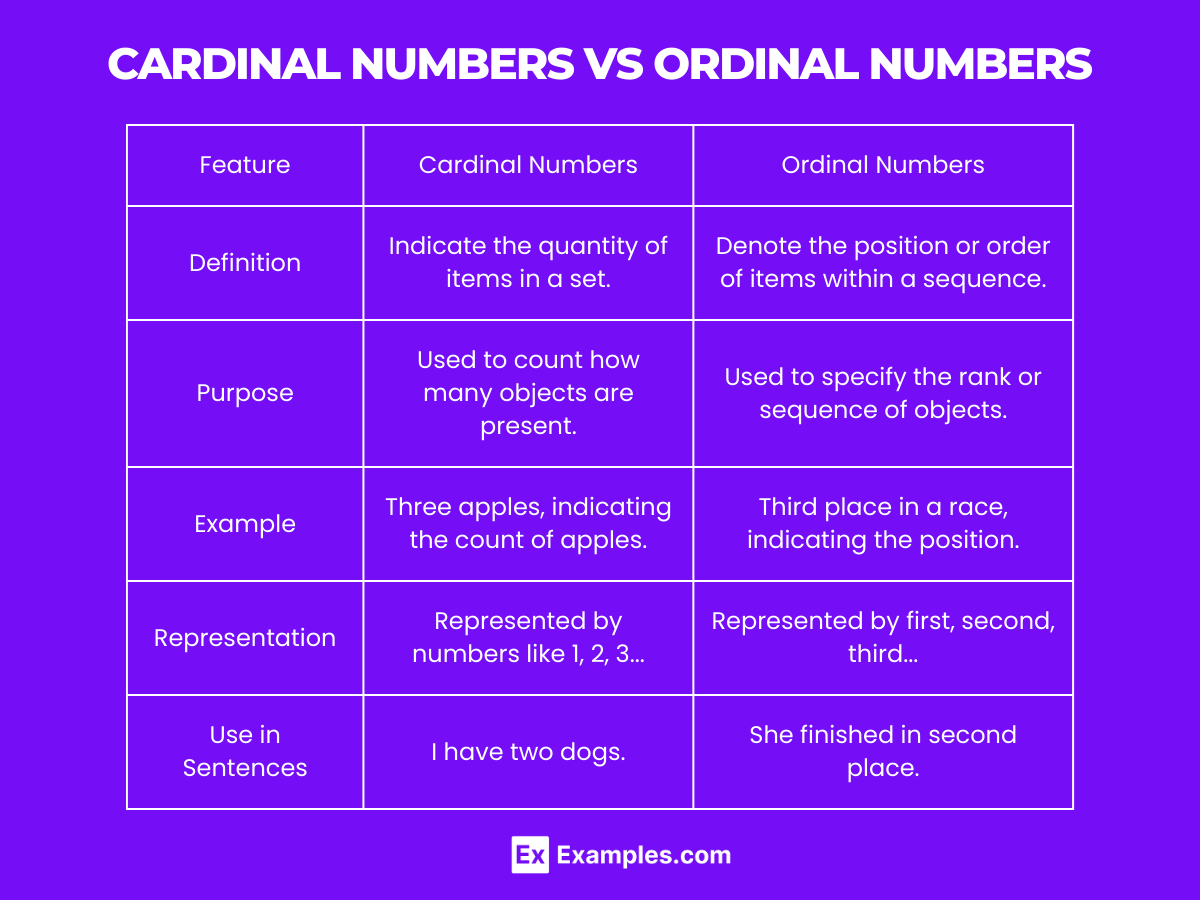

Unicode was developed to solve this, assigning a distinct code point to each digit in a wide range of scripts. For instance, the number five can be represented by the Western numeral “5”, the Roman numeral “V”, the Arabic-Indic numeral “٥”, or the Devanagari numeral “५”. While all these numerals look different and are stored as different byte sequences, they all point to the same mathematical number.
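As a rough illustration, Python's standard `unicodedata` module can report the numeric value attached to each of these characters. One assumption to flag: the Roman numeral here is the dedicated character U+2164 (Ⅴ), not the Latin letter “V”, which carries no numeric value in Unicode.

```python
import unicodedata

# Four different numerals for the same number, written as escapes for clarity.
numerals = {
    "Western": "5",
    "Arabic-Indic": "\u0665",     # ٥
    "Devanagari": "\u096b",       # ५
    "Roman (U+2164)": "\u2164",   # Ⅴ
}

for name, glyph in numerals.items():
    value = unicodedata.numeric(glyph)       # numeric value assigned by Unicode
    encoded = glyph.encode("utf-8").hex()    # the bytes differ per numeral
    print(f"{name}: U+{ord(glyph):04X}, utf-8 bytes {encoded}, value {value}")

# Every numeral resolves to the same number.
values = {unicodedata.numeric(glyph) for glyph in numerals.values()}
print(values)   # {5.0}

# int() accepts decimal digits from any script.
print(int("\u0665"), int("\u096b"))   # 5 5
```

The Roman numeral character has a numeric value but is not a decimal digit, so `int("\u2164")` raises `ValueError` even though `unicodedata.numeric` returns 5.0 for it.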

Localization and Internationalization (i18n)

For modern tech brands and global platforms, “Internationalization” (i18n) is a critical focus. A well-designed app must be able to display numerals that are culturally appropriate for the user while maintaining the same underlying numerical data. When a user in Riyadh sees a date or a price, the system may display it using Eastern Arabic numerals. The backend logic continues to calculate the number using standard binary operations, but the UI layer translates that value into the appropriate numeral for the user’s locale. This separation of “value” and “view” is a hallmark of sophisticated software architecture.
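The separation of “value” and “view” can be sketched with a small, hypothetical display helper. The function name `display_for_locale` and the rule “any locale tag starting with `ar` gets Eastern Arabic digits” are simplifying assumptions for illustration; production code would normally rely on a localization library and locale data rather than a hand-written table.

```python
# Hypothetical mapping from Western digits to Eastern Arabic (Arabic-Indic) digits.
WESTERN_TO_ARABIC_INDIC = str.maketrans(
    "0123456789",
    "\u0660\u0661\u0662\u0663\u0664\u0665\u0666\u0667\u0668\u0669",
)

def display_for_locale(value: int, locale_tag: str) -> str:
    """Render a number as numerals for the given locale (toy rule, see lead-in)."""
    text = str(value)
    if locale_tag.startswith("ar"):
        return text.translate(WESTERN_TO_ARABIC_INDIC)
    return text

# The backend computes with the number; the locale plays no part here.
price = 120 + 30

# Only the presentation layer chooses which numerals to show.
print(display_for_locale(price, "en-US"))   # 150
print(display_for_locale(price, "ar-SA"))   # ١٥٠
```

The stored value never changes; only the string handed to the UI does.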

Security and Input Validation: Why Distinguishing Matters

In the realm of digital security, the confusion between numerals and numbers is a frequent vector for vulnerabilities. Security protocols rely on the strict validation of data types to prevent malicious actors from exploiting a system.

Sanitization and SQL Injection Prevention

One of the most common web vulnerabilities is SQL injection. This occurs when a system takes text provided by a user (like an ID number) and splices it into a command string rather than treating it as a static value. If the system fails to enforce that the input is a strictly defined “number” and instead accepts it as a flexible “numeral/string,” an attacker can input malicious code disguised as a numeral. For example, instead of entering “105”, they might enter “105; DROP TABLE Users;”. Proper “typing” (forcing the system to convert the numeral into a number before processing) rejects such input, and it works best alongside parameterized queries, which keep user data out of the command text altogether.
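A minimal sketch of both defenses, using Python's built-in `sqlite3` module with an in-memory database. The table layout, the sample row, and the `find_user` helper are assumptions made up for this example.

```python
import sqlite3

# Illustrative in-memory database with one user.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")
conn.execute("INSERT INTO users (id, name) VALUES (105, 'alice')")

def find_user(raw_id: str):
    """Look up a user name from untrusted text input (hypothetical helper)."""
    # Step 1: convert the numerals to a number; anything else is rejected.
    try:
        user_id = int(raw_id)
    except ValueError:
        return None
    # Step 2: bind the value as a parameter instead of building SQL text.
    row = conn.execute(
        "SELECT name FROM users WHERE id = ?", (user_id,)
    ).fetchone()
    return row[0] if row else None

print(find_user("105"))                      # alice
print(find_user("105; DROP TABLE Users;"))   # None
```

The malicious input never reaches the database because it fails the conversion, and even a value that passes is sent as data through the `?` placeholder rather than as part of the command.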

UI/UX Design: Handling Human Input vs. Machine Logic

From a User Experience (UX) perspective, the distinction is equally important. When a user enters a credit card number, they are entering a series of numerals. These numerals often include spaces or hyphens for readability (e.g., 4111 1111 1111 1111). A robust system must be able to take those formatted numerals, strip away the non-numeric symbols, and validate the resulting sequence using algorithms like the Luhn formula. The designer must recognize that while the human wants to see a formatted numeral, the machine needs a raw numerical sequence to perform validation.
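The strip-then-validate flow might look like the following sketch. The function names are illustrative, and the normalizer only handles the spaces and hyphens mentioned above.

```python
def normalize_card_number(raw: str) -> str:
    """Remove the spaces and hyphens people add for readability."""
    return raw.replace(" ", "").replace("-", "")

def luhn_is_valid(digits: str) -> bool:
    """Check a sequence of ASCII digits with the Luhn formula."""
    if not digits.isascii() or not digits.isdigit():
        return False
    total = 0
    # Walk from the rightmost digit, doubling every second one.
    for index, char in enumerate(reversed(digits)):
        d = int(char)
        if index % 2 == 1:
            d *= 2
            if d > 9:
                d -= 9   # same as adding the two digits of the product
        total += d
    return total % 10 == 0

formatted = "4111 1111 1111 1111"
raw = normalize_card_number(formatted)
print(raw)                  # 4111111111111111
print(luhn_is_valid(raw))   # True
print(luhn_is_valid(normalize_card_number("4111-1111-1111-1112")))   # False
```

Note that the card number stays a string throughout: the Luhn check works on individual numerals, and the sequence as a whole is never treated as one large quantity.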

The Future of Numeric Computation in AI and Quantum Systems

As we move into the era of Artificial Intelligence (AI) and Quantum Computing, the relationship between numerals and numbers is evolving once again.

Vector Embeddings and Numerical Weights

In Large Language Models (LLMs) like GPT-4, the distinction becomes fascinatingly blurred. AI does not process numerals as symbols in the traditional sense; it converts numerals into “tokens” and then into high-dimensional vectors (lists of numbers). When an AI “understands” a number, it isn’t just looking at a numeral; it is analyzing the number’s relationship to other concepts in a multi-dimensional space. The “weight” of a neuron in a neural network is a number that determines how the model behaves, but that number is never seen as a numeral by the end-user.

Quantum Superposition and the End of Binary Numerals

Quantum computing changes how information is represented at the hardware level. While classical computers use bits that are either 0 or 1, quantum computers use qubits, which can exist in a superposition of both states until they are measured, at which point they still yield ordinary 0s and 1s. The numbers that describe a qubit's state before measurement are probability amplitudes rather than simple binary digits. We may find ourselves needing new “numerals” or symbolic systems to describe these probabilistic numbers, further distancing the abstract mathematical concept from our traditional methods of representation.

Conclusion: The Developer’s Duality

In the world of technology, the numeral is the map, and the number is the territory. A successful technologist must be a master of both. They must understand the nuances of the numeral—how it is encoded, displayed, and validated—while never losing sight of the number it represents—the raw, mathematical truth that drives logic and computation.

Whether you are optimizing a database, securing a web application, or training a machine learning model, remember that the “5” on your screen is just a symbol. The true power lies in the number behind it, and the clarity with which you distinguish the two will define the robustness of the digital worlds you build.
