The effort to understand the human brain has long been a guiding reference point for computing. From the earliest “logic gates” to the modern complexity of Large Language Models (LLMs), engineers have repeatedly looked to biological processes for ideas about building more efficient machines. One influential neurobiological account of what the brain does during sleep is the Activation-Synthesis Theory. Originally proposed by psychiatrists J. Allan Hobson and Robert McCarley in 1977, this theory suggests that dreams are the result of the brain attempting to make sense of random neural activity during REM sleep.

In the world of technology, this theory is more than just a psychological curiosity; it offers a useful analogy for how artificial intelligence handles noise, how generative algorithms synthesize new content, and how neural networks “learn” through stochastic processes. By examining the Activation-Synthesis Theory through a technological lens, we can better understand where neuro-inspired computing and digital intelligence may be heading.

Decoding the Mechanism: From Biological Spikes to Data Processing

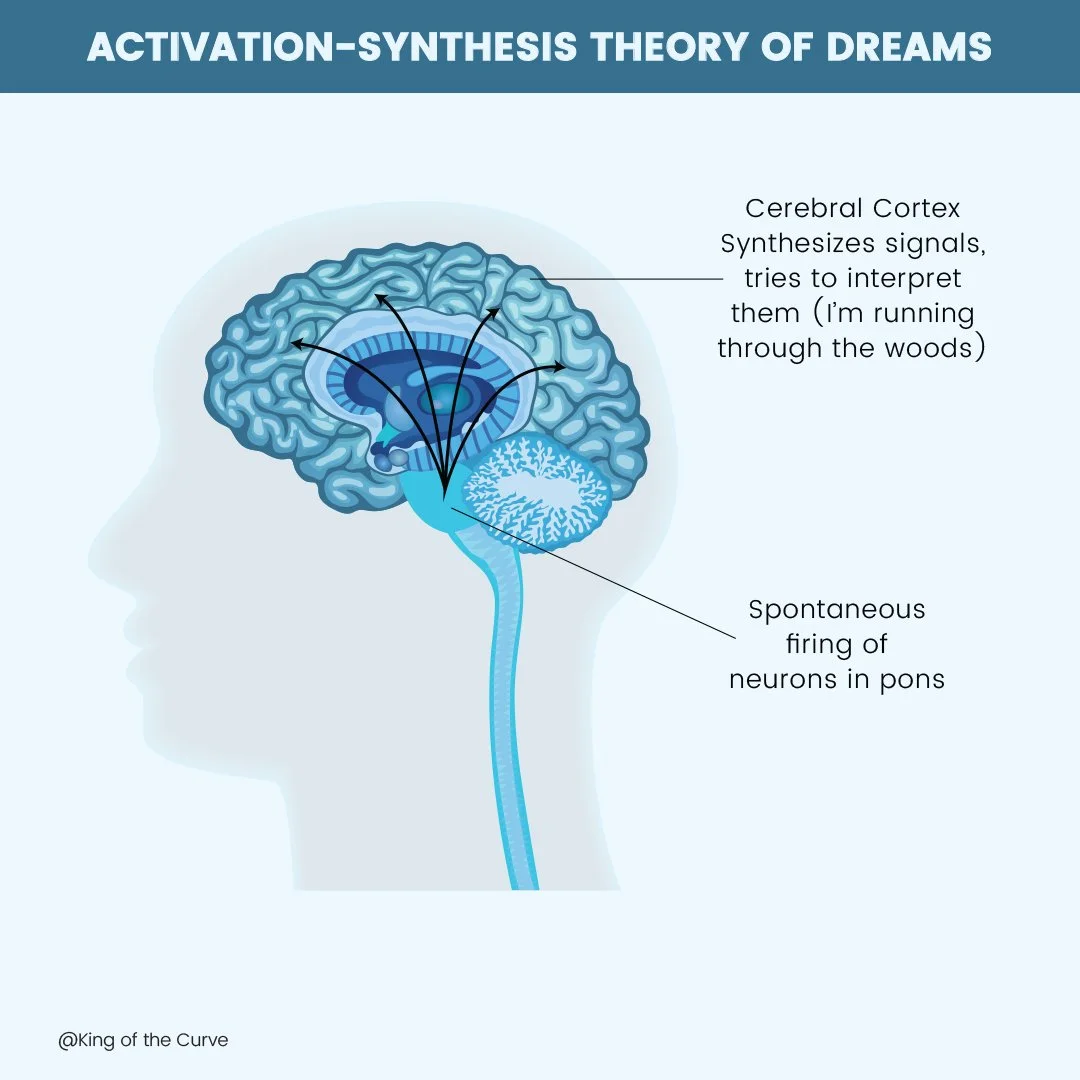

To understand the technological implications of Activation-Synthesis, one must first understand its two-part mechanism. At its core, the theory posits that the brain operates like a sophisticated data-processing unit that never truly “shuts off.”

The Activation Phase: Random Input and Signal Noise

In biological terms, the “activation” phase occurs when the brainstem sends random electrical impulses to the cerebral cortex during REM sleep. These signals are not structured information; they are effectively “neural noise.” In the tech world, the closest parallels are stochastic resonance, where a moderate amount of noise helps a system detect weak signals, and the deliberate injection of noise to keep a system from stagnating.

In software engineering and data science, “noise” is often seen as an enemy, but the activation phase suggests that random input can serve a useful purpose. Just as the brain “fires” random neurons to keep its circuitry active, long-running computing systems often use background checks to confirm that components remain responsive. In AI training, adding Gaussian noise to the training inputs acts as a regularizer: it helps the model become more robust and reduces the risk of “overfitting,” where the model memorizes its training data instead of learning patterns that generalize.
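As a minimal sketch of the noise-injection idea, the snippet below adds zero-mean Gaussian noise to a list of feature values using only the Python standard library. The function name `add_gaussian_noise`, the `noise_std` value, and the sample data are illustrative assumptions, not taken from any particular framework.

```python
import random

def add_gaussian_noise(features, noise_std, rng):
    """Return a copy of `features` with zero-mean Gaussian noise added to each value."""
    return [value + rng.gauss(0.0, noise_std) for value in features]

# Hypothetical feature column: 1000 evenly spaced values between 0 and 1.
clean = [i / 999 for i in range(1000)]

rng = random.Random(0)  # fixed seed so the sketch is reproducible
noisy = add_gaussian_noise(clean, noise_std=0.05, rng=rng)

# In training, fresh noise would typically be drawn every epoch, so the
# model never sees exactly the same inputs twice.
```

The noise scale is a tuning choice: too little has no effect, while too much can drown out the signal the model is supposed to learn.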

The Synthesis Phase: Pattern Recognition and Narrative Construction

The “synthesis” phase is where the magic—and the technology—happens. Once the cortex receives these random signals, it attempts to fulfill its primary function: making sense of data. It pulls from stored memories and emotional states to “synthesize” a coherent narrative, resulting in what we perceive as a dream.

This is loosely analogous to inference in machine learning. When a trained model is given a prompt (a form of activation), its learned weights and biases (the counterpart of stored memories) are applied to that input to synthesize a response that follows a learned pattern. Seen this way, the Activation-Synthesis Theory treats the brain as a kind of “inference engine” that keeps operating even in the absence of external sensory input.
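To make the inference analogy concrete, here is a small sketch of a single forward pass: fixed parameters are applied to an input and turned into class probabilities. The weights, biases, and function names are hypothetical values chosen for illustration; a real model would have learned its parameters from data and would be far larger.

```python
import math

def softmax(logits):
    """Convert raw scores into probabilities that sum to 1."""
    peak = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(v - peak) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]

def infer(inputs, weights, biases):
    """Apply fixed (already learned) parameters to an input vector."""
    logits = [
        sum(w * x for w, x in zip(row, inputs)) + b
        for row, b in zip(weights, biases)
    ]
    return softmax(logits)

# Hypothetical parameters standing in for a trained model's "memories".
WEIGHTS = [[2.0, -1.0], [-1.0, 2.0], [0.5, 0.5]]
BIASES = [0.0, 0.0, -1.0]

probs = infer([1.0, 0.0], WEIGHTS, BIASES)
best_class = probs.index(max(probs))  # index of the most probable class
```

Nothing is learned during this call: the parameters stay fixed, and only the input (the “activation”) changes from one request to the next.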

Parallel Architectures: How Activation-Synthesis Informs Artificial Intelligence

The bridge between neuroscience and AI technology is built on the idea of synthesis. Modern generative AI, such as Stable Diffusion or GPT-4, operates on principles that are eerily similar to Hobson and McCarley’s framework.

Generative AI and the “Dreaming” Machine

When we look at generative image models, the process of “diffusion” is a close technological parallel to activation-synthesis. A diffusion model starts with a field of pure digital noise (activation). Through a series of iterative denoising steps, the model “synthesizes” an image by removing a little of that noise at each step, guided by patterns it learned from its training data.
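The noise-to-structure idea can be sketched in one dimension. The example below is not a diffusion model: it uses unadjusted Langevin dynamics, a related sampling method, with a score function written down by hand for a known Gaussian target. A real diffusion model would instead learn its denoiser from data with a neural network and follow a noise schedule. All names and constants here are assumptions for illustration.

```python
import math
import random

TARGET_MEAN = 3.0   # hypothetical "learned" pattern the samples should match
TARGET_STD = 0.5

def score(x):
    """Gradient of the log-density of the target Gaussian at x."""
    return -(x - TARGET_MEAN) / TARGET_STD ** 2

def refine_from_noise(n_samples=1000, n_steps=300, step=0.01, seed=0):
    """Start from pure noise and repeatedly nudge samples toward the target."""
    rng = random.Random(seed)
    # Activation: broad, structureless noise.
    samples = [rng.gauss(0.0, 5.0) for _ in range(n_samples)]
    for _ in range(n_steps):
        # Synthesis: drift toward structure, plus a little fresh noise.
        samples = [
            x + step * score(x) + math.sqrt(2.0 * step) * rng.gauss(0.0, 1.0)
            for x in samples
        ]
    return samples

samples = refine_from_noise()
mean = sum(samples) / len(samples)
```

After enough steps the samples cluster around `TARGET_MEAN` with a spread close to `TARGET_STD`, even though every one of them began as unrelated noise.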

If you ask an AI to generate a “city in the clouds,” it isn’t “thinking” in the human sense. It is taking a random seed and refining it into a structure that is consistent with both the prompt and its learned parameters. The parallel with the Activation-Synthesis Theory is strong: the “noise” provides the spark, and the “architecture” provides the meaning. This is also why AI “hallucinations,” where a language model produces confident but false information, are so fascinating; they resemble a dream in which the synthesis engine has prioritized narrative coherence over factual accuracy.

Neural Networks and Noise-Based Learning

Artificial Neural Networks (ANNs) use layers of “neurons” to process information. One of the most significant challenges in machine learning is ensuring these networks can generalize beyond the examples they were trained on. Developers use a technique called Dropout, where a randomly chosen subset of neurons is temporarily “turned off” at each training step.

This intentional introduction of randomness keeps the network from relying too heavily on any single neuron, so the remaining “circuitry” has to learn redundant, more general representations. This mirrors the activation-synthesis idea that the brain must constantly deal with internal “static” to maintain its ability to process complex reality. The same goal, resilience to noise, matters for neural architectures that must function in “noisy” real-world environments, such as autonomous driving or real-time signal processing.
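The dropout step itself is short. The sketch below shows the common “inverted dropout” variant, in which surviving activations are scaled up during training so that nothing needs to change at inference time. The function signature and the sample activations are assumptions for illustration; deep learning frameworks ship their own dropout layers.

```python
import random

def dropout(activations, drop_prob, rng, training=True):
    """Inverted dropout: zero random units and rescale the survivors.

    `drop_prob` is assumed to be in the range [0, 1).
    """
    if not training or drop_prob == 0.0:
        return list(activations)  # inference: pass everything through unchanged
    keep_prob = 1.0 - drop_prob
    return [
        a / keep_prob if rng.random() < keep_prob else 0.0
        for a in activations
    ]

rng = random.Random(0)
layer_output = [1.0] * 10_000  # hypothetical activations from one layer
dropped = dropout(layer_output, drop_prob=0.5, rng=rng)
```

With `drop_prob=0.5`, roughly half of the values become zero and the rest are doubled, so the average activation stays about the same as before.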

Practical Applications in Modern Technology and Software Development

The transition from theory to application is where the Activation-Synthesis framework becomes a tool for developers, cybersecurity experts, and data architects.

Enhancing Predictive Algorithms through Stochastic Models

Predictive analytics, the tech behind everything from stock market forecasting to Netflix recommendations, relies on the ability to find signals within the noise. One technique that fits the activation-synthesis pattern is “synthetic data generation,” in which software simulates millions of random scenarios.

By activating these “random” scenarios, the software can synthesize potential outcomes that a human programmer might never have considered. A related practice is “Chaos Engineering,” used for example when stress-testing financial software: engineers intentionally introduce random failures (activation) to observe how the system recovers (synthesis). The goal is a kind of “digital immune system” that, like the biological brain, remains functional despite internal disruptions.
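A toy version of this kind of fault injection can be sketched in a few lines. The `FlakyService` class, the retry policy, and the 30% failure rate below are all hypothetical; real chaos experiments run against live or staging infrastructure with dedicated tooling rather than an in-process simulation like this one.

```python
import random

class FlakyService:
    """Hypothetical dependency that fails at a configurable random rate."""

    def __init__(self, failure_rate, rng):
        self.failure_rate = failure_rate
        self.rng = rng

    def call(self):
        if self.rng.random() < self.failure_rate:
            raise ConnectionError("injected failure")  # activation: random fault
        return "ok"

def call_with_retry(service, max_attempts=3):
    """Recovery logic under test: retry a failed call a limited number of times."""
    for attempt in range(1, max_attempts + 1):
        try:
            return service.call()
        except ConnectionError:
            if attempt == max_attempts:
                raise  # give up after the final attempt

def run_experiment(failure_rate=0.3, trials=1000, seed=42):
    """Return the fraction of requests that succeed despite injected faults."""
    service = FlakyService(failure_rate, random.Random(seed))
    successes = 0
    for _ in range(trials):
        try:
            call_with_retry(service)
            successes += 1
        except ConnectionError:
            pass
    return successes / trials

success_rate = run_experiment()
```

With three attempts and a 30% failure rate per call, a request fails only when all three attempts fail, so the measured success rate should land near 1 - 0.3³, or roughly 97%.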

Digital Security and Anomaly Detection

In the realm of digital security, the Activation-Synthesis Theory provides a model for sophisticated anomaly detection. Modern Security Information and Event Management (SIEM) tools are constantly bombarded with millions of data points (activation). The challenge is deciding which of these points represent a genuine threat and which are merely background noise (synthesis).

Advanced AI-driven security tools use a “synthesis engine” to create a baseline of “normal” system behavior. When incoming activity deviates significantly from that baseline, the system flags it as an anomaly. This is essentially a “nightmare” for the software: a recognition that the incoming signals may represent a threat to the integrity of the system, which triggers a defensive response.
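One of the simplest ways to express the baseline-and-deviation idea is a z-score check. The sketch below is an assumption-laden illustration, not how any particular SIEM product works: the metric, the sample history, and the threshold of three standard deviations are all hypothetical.

```python
import statistics

def fit_baseline(history):
    """Summarize 'normal' behavior as a mean and standard deviation."""
    return statistics.mean(history), statistics.pstdev(history)

def is_anomaly(value, mean, std, z_threshold=3.0):
    """Flag values more than `z_threshold` standard deviations from the mean."""
    if std == 0:
        return value != mean
    return abs(value - mean) / std > z_threshold

# Hypothetical metric: login attempts per minute during normal operation.
history = [98, 102, 97, 105, 100, 99, 101, 103, 96, 99]
mean, std = fit_baseline(history)

# Two ordinary readings and one extreme spike.
flags = [is_anomaly(v, mean, std) for v in (101, 95, 400)]
```

Here the first two readings fall within the normal range and only the spike to 400 is flagged. Production systems usually model many signals at once and update the baseline over time, which a single fixed mean and deviation cannot capture.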

The Future of Neuro-Inspired Computing

As we move toward the next generation of hardware, ideas that echo the Activation-Synthesis Theory are appearing in Neuromorphic Computing. Unlike conventional processors, neuromorphic chips are designed to mimic the structure and event-driven signaling of the human brain.

Biologically Plausible AI Models

Current AI models are computationally expensive. However, the human brain is incredibly energy-efficient, partly because it doesn’t process every piece of data with equal intensity. The brain “samples” noise and synthesizes only what is necessary.

Tech giants are now investing in “spiking neural networks” (SNNs). In these systems, a neuron only transmits a signal when its accumulated input crosses a threshold, much like the “activation” spikes in the brainstem. Because such systems only “activate” and “synthesize” when relevant patterns emerge, future gadgets and wearables could run capable AI processing locally on the device, rather than relying on the cloud.
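The threshold behavior can be illustrated with a leaky integrate-and-fire neuron, one of the simplest spiking neuron models. The parameter values below (threshold, leak factor, input currents) are arbitrary choices for demonstration, and actual neuromorphic hardware implements this kind of dynamics in circuitry rather than in a Python loop.

```python
def simulate_lif(input_current, threshold=1.0, leak=0.9, reset=0.0):
    """Simulate a leaky integrate-and-fire neuron over discrete time steps.

    Returns a list with 1 where the neuron spiked and 0 elsewhere.
    """
    potential = reset
    spikes = []
    for current in input_current:
        # Integrate: old charge leaks away, new input is added.
        potential = leak * potential + current
        if potential >= threshold:
            spikes.append(1)   # fire only when the threshold is crossed
            potential = reset  # then start accumulating again
        else:
            spikes.append(0)   # stay silent, transmitting nothing
    return spikes

strong = simulate_lif([0.5] * 10)   # sustained strong input
weak = simulate_lif([0.05] * 50)    # input too weak to ever reach threshold
```

With the strong input the neuron fires on every third step, while the weak input never produces a spike because the leak cancels out the accumulation. That silence is where the potential energy savings come from: no spike means no downstream work.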

The Convergence of Neuroscience and High-Performance Computing

The final frontier of this theory lies in “Digital Twin” technology. By creating digital replicas of human neural pathways, researchers can simulate the activation-synthesis process to test how different drugs or stimuli affect human cognition. This requires massive high-performance computing (HPC) power to simulate the trillions of connections within the brain.

In this context, the Activation-Synthesis Theory is no longer just a way to explain dreams; it becomes a conceptual starting point for computational models of cognition. As our software becomes better at synthesizing reality from raw data, the line between “biological dreaming” and “machine inference” begins to blur.

Conclusion

The Activation-Synthesis Theory suggests that the “noise” in a system can be a feature rather than a bug. In technology, as in the human brain, the ability to take random, unorganized input and synthesize it into a coherent, actionable narrative is a hallmark of intelligence. Whether it is a generative AI creating art, a cybersecurity tool protecting a network, or a neuromorphic chip processing sensory data, the principles of activation and synthesis remain the same.

By embracing the lessons of this theory, the tech industry is complementing rigid, deterministic logic with a more fluid, biologically inspired approach to computing. The future of technology does not lie in the elimination of noise, but in our ability to build machines that can dream, synthesizing meaning from the chaos of the digital world.
