In the landscape of modern technology, the most complex systems often rest upon the simplest mathematical truths. At first glance, the question “What is the prime factorization of 21?” appears to be a rudimentary exercise in primary school arithmetic. The answer is straightforward: 21 is the product of two prime numbers, 3 and 7. However, in the realms of computer science, digital security, and algorithmic development, this simple decomposition represents the cornerstone of how we protect data and facilitate global communication.

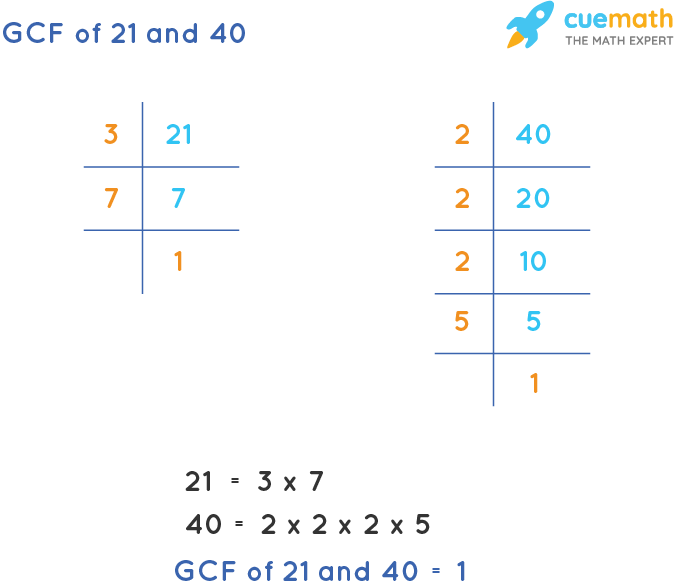

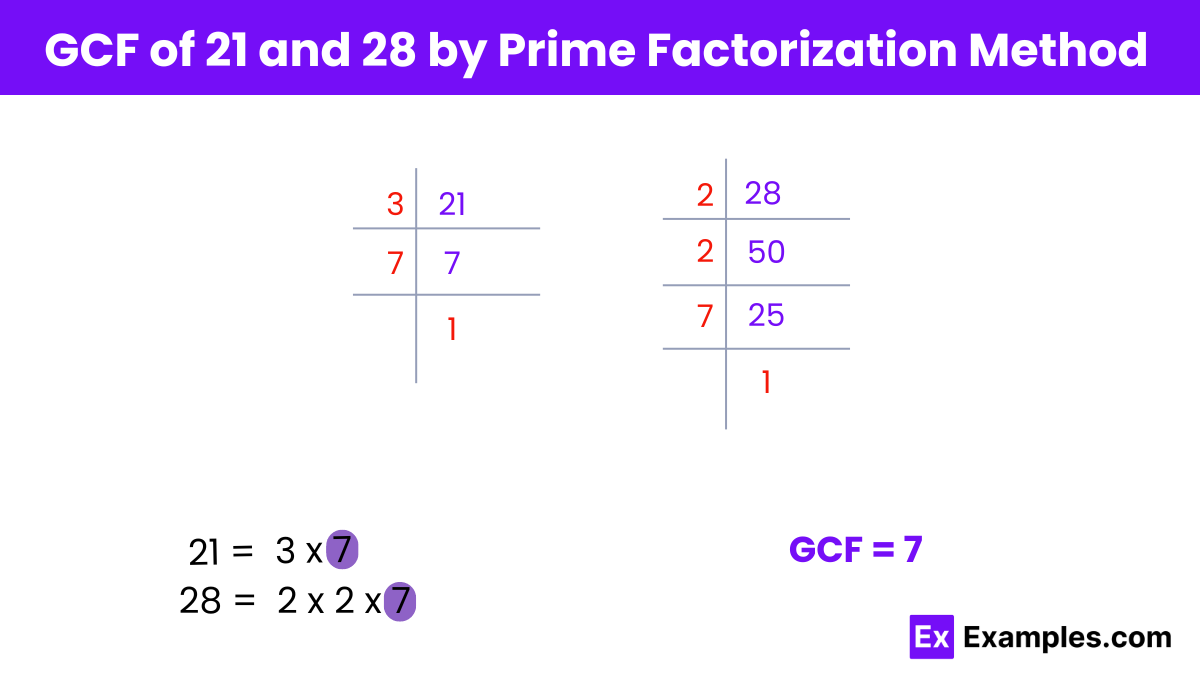

Prime factorization is the process of breaking a composite number down into the prime numbers that multiply together to give the original number. For 21, both 3 and 7 are prime (each has no positive divisors other than 1 and itself), so the process ends after a single step. Factoring 21 is trivial for a human or a basic calculator, but the same problem scaled up to numbers hundreds of digits long is what keeps much of our digital world secure.
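As a minimal sketch of the process just described, the following uses plain trial division. The function name `prime_factors` is chosen here for illustration and is not taken from any library.

```python
def prime_factors(n):
    """Return the prime factors of n (with multiplicity) by trial division."""
    if n < 2:
        raise ValueError("n must be an integer >= 2")
    factors = []
    divisor = 2
    # Only divisors up to sqrt(n) need testing; anything left over is prime.
    while divisor * divisor <= n:
        while n % divisor == 0:
            factors.append(divisor)
            n //= divisor
        divisor += 1
    if n > 1:
        factors.append(n)
    return factors

print(prime_factors(21))  # [3, 7]
```

For 21 the loop stops almost immediately: 3 divides it, and the remaining 7 is smaller than the square of the next candidate divisor, so it must be prime.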

The Algorithmic Significance of Prime Decomposition

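In the tech sector, prime factorization is not just a math problem; it is a computational challenge. Multiplying two primes is easy, while recovering them from their product is believed to be hard. Computer scientists call an operation with this kind of gap a “one-way function”; when a piece of secret information (here, the prime factors) makes the hard direction easy again, it is called a “trapdoor function.”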

From Simple Math to Computational Logic

When we look at $3 \times 7 = 21$, the computation is instantaneous. However, if a software engineer were to design an algorithm to find the factors of a much larger number, the “computational cost” would grow dramatically: naive trial division takes time exponential in the number of digits. In software development, we describe this kind of growth using Big O notation. Multiplying two n-digit numbers is cheap (O(n^2) with the schoolbook method), whereas no polynomial-time algorithm for factoring a large composite number is known on classical hardware. Factoring belongs to the complexity class NP (nondeterministic polynomial time), because a proposed factorization is easy to check, but it is not known to be NP-complete. This asymmetry is the bedrock upon which modern tech infrastructure is built.
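The gap can be written down roughly as follows, taking schoolbook multiplication and naive trial division as representative (not optimal) algorithms. The symbols $N$ (the integer), $n$ (its length in bits), $T_{\text{mult}}$, and $T_{\text{trial}}$ are notation introduced here for illustration:

$$
T_{\text{mult}}(n) = O(n^2), \qquad T_{\text{trial}}(N) = O\!\left(\sqrt{N}\right) = O\!\left(2^{n/2}\right) \text{ divisions}
$$

Faster algorithms exist on both sides, but the qualitative picture stays the same: multiplication is polynomial in $n$, while every known classical factoring method is super-polynomial in $n$.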

The Role of Primes in Data Structuring

Beyond security, prime numbers like 3 and 7 are used in software engineering for data integrity and optimization. Hash functions, which map data of arbitrary size to fixed-size values, frequently use prime numbers to reduce “collisions.” When a developer creates a hash table, choosing a prime number of buckets (such as 23, rather than a composite like 21) tends to give a more uniform distribution of data across the available slots, because keys that share a common factor with a composite table size pile up in a fraction of the buckets. A more even spread improves the performance of databases and search algorithms.
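A small experiment can illustrate the effect, assuming the simplest possible hash (`key % table_size`) and a deliberately awkward key set in which every key is a multiple of 3. The helper `bucket_counts` and the table sizes 21 and 23 are chosen here for illustration only.

```python
from collections import Counter

def bucket_counts(keys, table_size):
    """Count how many keys land in each bucket under key % table_size."""
    return Counter(key % table_size for key in keys)

keys = range(0, 300, 3)  # 100 keys that all share the factor 3

composite = bucket_counts(keys, 21)  # 21 = 3 * 7 shares a factor with the keys
prime = bucket_counts(keys, 23)      # 23 is prime, so it shares none

# (buckets used, size of the fullest bucket)
print(len(composite), max(composite.values()))  # 7 15
print(len(prime), max(prime.values()))          # 23 5
```

With 21 slots, only 7 are ever used and each holds about 14 keys; with 23 slots, every slot is used and none holds more than 5. Real hash tables use stronger hash functions, so the difference is usually less dramatic than in this contrived case.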

Prime Factorization as the Backbone of Digital Cryptography

The most critical application of prime factorization in technology today is in the field of cryptography, specifically the RSA (Rivest–Shamir–Adleman) encryption algorithm. Every time you make a purchase online, log into your banking app, or send an encrypted message, you are relying on the logic behind the factorization of numbers like 21.

RSA Encryption and the Power of Large Primes

The RSA algorithm generates a “public key” and a “private key.” The public key contains a modulus that is the product of two very large prime numbers, together with a public exponent. To understand this through our example, if 21 were the public modulus, 3 and 7 would be the secret primes from which the private key is computed. In a real-world scenario, these primes are not single digits; they are hundreds of digits long (a 2048-bit modulus is the product of two primes of roughly 1024 bits, or about 300 decimal digits, each). The security of RSA relies on the fact that while a computer can easily multiply two such primes, no known method lets a classical computer work backward from the product to the original primes in a reasonable timeframe.
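To make the example concrete, here is a toy sketch of textbook RSA with the modulus 21. The exponent `e = 5` and the message `2` are arbitrary choices made for this illustration. Real RSA uses far larger primes plus padding, so nothing here is secure.

```python
from math import gcd

p, q = 3, 7              # the secret primes
n = p * q                # public modulus: 21
phi = (p - 1) * (q - 1)  # Euler's totient of n: 12

e = 5                    # public exponent (illustrative); must be coprime to phi
assert gcd(e, phi) == 1
d = pow(e, -1, phi)      # private exponent: inverse of e mod phi (Python 3.8+)

message = 2                         # any integer in range(n)
ciphertext = pow(message, e, n)     # encrypt with the public key (n, e)
recovered = pow(ciphertext, d, n)   # decrypt with the private exponent d

print(n, e, d, ciphertext, recovered)  # 21 5 5 11 2
```

Anyone who can factor 21 into 3 and 7 can recompute `phi` and therefore `d`, which is exactly why the primes must stay secret. With a modulus this small, `d` even turns out equal to `e`, a reminder of how far a toy example is from a real key.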

Why Factorization Difficulty Dictates Digital Security

Digital security is essentially an arms race against computational power. As CPUs and GPUs become faster, the “length” of the primes used in factorization-based encryption must increase. Currently, 2048-bit keys are the standard. The reason we care about the prime factorization of a number like 21 is that it serves as the “Proof of Concept” for this entire security architecture. If an algorithm were discovered that could factor large numbers as easily as we factor 21, the entire framework of global digital privacy would collapse overnight.

Computational Challenges and Modern Sieve Methods

In tech tutorials and software optimization, the methods used to factor numbers are a major point of study. While “trial division” (testing every number up to the square root of 21) works for small integers, it is hopelessly inefficient for the massive integers used in enterprise-level software.
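To give a feel for that inefficiency, this sketch counts how many candidate divisors trial division tests before it hits the smallest factor. The function name and the sample numbers (each a product of two primes of similar size) are chosen here for illustration.

```python
def trial_division_steps(n):
    """Return (smallest_factor, candidates_tested) for an integer n >= 2."""
    tested = 0
    divisor = 2
    while divisor * divisor <= n:
        tested += 1
        if n % divisor == 0:
            return divisor, tested
        divisor += 1
    return n, tested  # nothing divides n up to sqrt(n), so n is prime

for n in (21, 10403, 1022117):  # 3*7, 101*103, 1009*1013
    print(n, trial_division_steps(n))
# 21 (3, 2)
# 10403 (101, 100)
# 1022117 (1009, 1008)
```

Each time the number gains two decimal digits, the work grows roughly tenfold. Extrapolated to a modulus hundreds of digits long, the count exceeds anything a computer could finish.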

The Evolution of Factoring Algorithms

To solve factorization problems more efficiently, computer scientists have developed sophisticated software tools and algorithms:

- Pollard’s Rho Algorithm: Useful for finding smaller factors in a large composite number.

- Quadratic Sieve: Historically significant, and still generally considered the fastest general-purpose method for numbers under roughly 100 digits.

- General Number Field Sieve (GNFS): The current “gold standard” for factoring large integers on classical hardware.

These tools represent the pinnacle of software engineering meeting theoretical mathematics. They require immense memory management and parallel processing capabilities, often distributed across thousands of server nodes in a cloud environment.
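Of the three methods listed, Pollard's rho is compact enough to sketch in a few lines. The version below uses Floyd cycle detection with the polynomial x^2 + c. The starting value 2 and the retry loop over `c` are simplifications assumed here, not a tuned implementation.

```python
from math import gcd

def pollard_rho(n):
    """Return a non-trivial factor of n, or None if none was found."""
    if n % 2 == 0:
        return 2 if n > 2 else None
    for c in range(1, n):                # retry with a new polynomial on failure
        x = y = 2
        d = 1
        while d == 1:
            x = (x * x + c) % n          # tortoise: one step
            y = (y * y + c) % n          # hare: two steps
            y = (y * y + c) % n
            d = gcd(abs(x - y), n)
        if d != n:                       # d == n means this c found nothing
            return d
    return None

print(pollard_rho(21))    # 3
print(pollard_rho(8051))  # 97  (8051 = 83 * 97)
```

In practice one would run a primality test first, since this sketch only gives up on a prime input after exhausting every value of `c`.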

AI and Machine Learning in Number Theory

We are now entering an era where AI tools are being applied to mathematical conjectures. AI isn’t currently used to “break” prime factorization. Where machine learning may help is in tuning the parameters of sieve algorithms, for example by predicting which polynomial choices are likely to yield factors more quickly. Improvements of this kind would push the boundary of what is computationally “factorable” and, in turn, push security experts toward more robust encryption standards.

The Quantum Frontier: Shor’s Algorithm and the Future

If the prime factorization of 21 is a small stepping stone, the advent of quantum computing is a tidal wave. The tech world is currently bracing for a “cryptographic apocalypse” known as Q-Day: the point at which a quantum computer becomes powerful enough to run Shor’s Algorithm against the keys in use today.

How Quantum Computing Changes the Game

In 1994, Peter Shor developed a quantum algorithm that can factor integers in polynomial time. On a sufficiently powerful, error-corrected quantum computer, factoring a massive RSA key would become a tractable computation rather than an infeasible one. The speedup does not come from trying every candidate factor at once. Instead, Shor’s algorithm reduces factoring to finding the period of the function a^x mod N, and uses quantum superposition and interference (via the quantum Fourier transform) to extract that period efficiently, something no known classical algorithm can do. Once the period is known, ordinary arithmetic recovers the factors.
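The classical half of Shor's algorithm can be sketched for N = 21. In the code below, the order-finding step is done by brute force, standing in for the part a quantum computer would accelerate. The function names and the base `a = 2` are choices made for this illustration.

```python
from math import gcd

def multiplicative_order(a, n):
    """Smallest r > 0 with a**r % n == 1, for n > 1 (brute-force stand-in
    for the quantum period-finding step)."""
    if gcd(a, n) != 1:
        raise ValueError("a must be coprime to n")
    r, value = 1, a % n
    while value != 1:
        value = (value * a) % n
        r += 1
    return r

def factor_from_order(n, a):
    """Classical post-processing: turn the order of a mod n into factors."""
    r = multiplicative_order(a, n)
    if r % 2 == 1:
        return None                  # odd order: retry with another base
    half = pow(a, r // 2, n)
    if half == n - 1:
        return None                  # trivial square root of 1: retry
    return gcd(half - 1, n), gcd(half + 1, n)

print(multiplicative_order(2, 21))  # 6
print(factor_from_order(21, 2))     # (7, 3)
```

The powers of 2 modulo 21 repeat with period 6, so 2^3 = 8 is a square root of 1, and gcd(8 - 1, 21) and gcd(8 + 1, 21) give 7 and 3. For a 2048-bit modulus the brute-force loop is hopeless, which is precisely the step the quantum routine replaces.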

Preparing for a Post-Quantum World

Because of this technological shift, the tech industry is currently pivoting toward “Post-Quantum Cryptography” (PQC). This involves developing new digital security standards that do not rely on the difficulty of prime factorization. Tech giants like Google, IBM, and Cloudflare are already testing lattice-based and code-based encryption methods. Even though 21 will always be $3 \times 7$, the era of relying on that specific mathematical “hardness” to protect our gadgets and apps is drawing to a close.

Practical Applications in Software Development and Data Integrity

While cryptography is the “glamour” side of factorization, the logic behind the prime factorization of 21 permeates everyday software development and digital infrastructure in more subtle ways.

Hashing and Data Verification

Software developers use related “one-way” logic to ensure data has not been tampered with. In blockchain technology, for example, the concept of mathematical proof is central. Bitcoin uses SHA-256, a hash function that has nothing to do with prime factorization, but the underlying principle is analogous: the operation is easy to compute in one direction and infeasible to reverse. If you change even one bit of the input, the resulting hash changes almost entirely. The factoring analogy is looser here, since changing 3 to 4 only moves the product from 21 to 28, but the shared idea is that the output is cheap to verify and hard to forge.
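The sensitivity of a hash to its input can be observed directly with Python's standard `hashlib` module. This sketch flips a single bit of a sample message and counts how many of the 256 digest bits change. The message text and the helper name are arbitrary choices for illustration.

```python
import hashlib

def differing_bits(a: bytes, b: bytes) -> int:
    """Count the bit positions at which the SHA-256 digests of a and b differ."""
    da = int.from_bytes(hashlib.sha256(a).digest(), "big")
    db = int.from_bytes(hashlib.sha256(b).digest(), "big")
    return bin(da ^ db).count("1")

original = b"3 x 7 = 21"
# Flip the lowest bit of the first byte; everything else stays identical.
flipped = bytes([original[0] ^ 0x01]) + original[1:]

print(differing_bits(original, flipped), "of 256 digest bits differ")
```

For a well-designed hash, roughly half of the output bits are expected to change, which is why even a tiny edit to the data is easy to detect.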

Optimizing Code for Mathematical Precision

In high-frequency trading (HFT) and FinTech software, timing and synchronization matter, and number theory can help. Algorithms that schedule periodic work in distributed systems sometimes use prime or mutually coprime intervals so that tasks rarely fire on the same tick, reducing the load spikes and contention that synchronized timers can cause. Two tasks with periods of 3 and 7 ticks, for example, coincide only once every 21 ticks. Thus, the prime factorization of 21 isn’t just a math fact; it’s a lesson in how values with no common factors create stable systems.
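The scheduling idea can be checked with a few lines of arithmetic. This sketch assumes two hypothetical periodic tasks that fire whenever the tick counter is a multiple of their period. The function names and the 50-tick horizon are illustrative.

```python
from math import gcd

def lcm(a, b):
    """Least common multiple: how often two periods line up."""
    return a * b // gcd(a, b)

def coincidences(period_a, period_b, horizon):
    """Ticks in 1..horizon at which both periodic tasks fire together."""
    return [t for t in range(1, horizon + 1)
            if t % period_a == 0 and t % period_b == 0]

print(lcm(3, 7), coincidences(3, 7, 50))  # 21 [21, 42]
print(lcm(3, 6), coincidences(3, 6, 50))  # 6 [6, 12, 18, 24, 30, 36, 42, 48]
```

Coprime periods such as 3 and 7 collide only at multiples of their product, while periods that share a factor, such as 3 and 6, collide four times as often over the same window.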

In conclusion, while the prime factorization of 21 is a simple “3 and 7,” it serves as a microcosm for the most vital challenges and triumphs in modern technology. From the way we encrypt our emails to the way we prepare for the quantum future, the study of prime numbers remains the invisible thread weaving together the fabric of our digital existence. As technology evolves, our appreciation for these fundamental mathematical building blocks only grows, reminding us that the most complex AI and software tools are, at their core, just very fast ways of doing arithmetic.
