In an era defined by rapid technological advancement, the term “algebraic” might evoke dusty textbooks and abstract equations for some. Yet, far from being a relic of academic pursuits, algebraic thinking is the invisible backbone supporting nearly every facet of our digital world. From the logic governing computer processors to the algorithms powering artificial intelligence and the cryptographic measures protecting our data, algebra is more than a prerequisite: it is the language in which much of technology is specified, analyzed, and improved.

Understanding “what is algebraic” is not merely about reciting definitions; it’s about grasping a powerful paradigm of abstraction and generalization that allows us to model complex systems, predict outcomes, and automate solutions. For anyone involved in tech – whether as a developer, data scientist, cybersecurity expert, or an aspiring innovator – recognizing the algebraic underpinnings of their field is crucial. It’s the key to debugging more efficiently, designing more robust systems, and pushing the boundaries of what’s possible in the digital frontier. This article delves into the essence of algebraic thought, its pervasive applications in technology, and why cultivating an algebraic intuition is indispensable for navigating and shaping the future of tech.

Beyond Numbers: The Essence of Algebraic Thinking

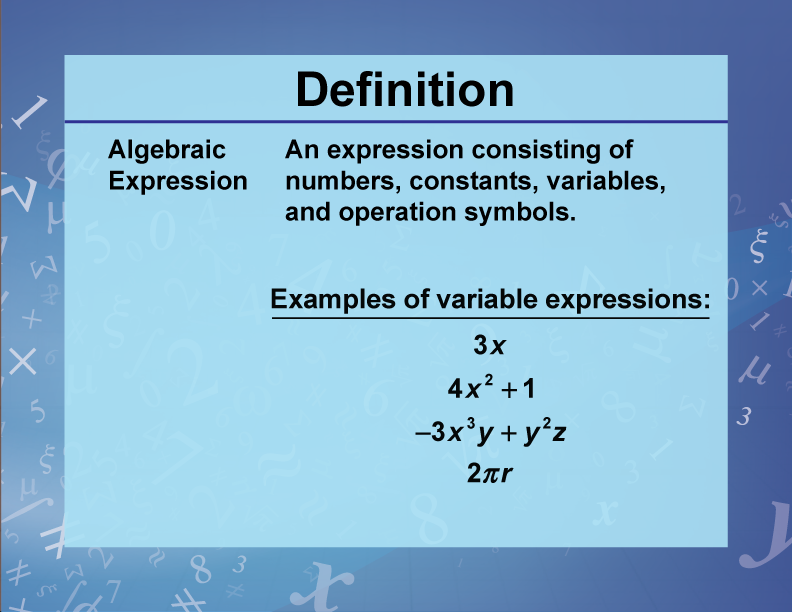

At its core, “algebraic” describes anything relating to algebra, the branch of mathematics concerned with symbols and the rules for manipulating them. Unlike arithmetic, which deals with specific numbers, algebra introduces variables (symbols representing unknown quantities or general elements) and structures (sets with defined operations). This shift from concrete numbers to abstract symbols is not just a mathematical curiosity; it provides the framework for computational logic and problem-solving in technology.

Abstraction and Generalization: Solving Problems Universally

The power of algebraic thinking lies in its ability to abstract specific instances into general rules. Instead of solving a problem for x = 5, algebra allows us to solve it for any x. This generalization is precisely what enables software engineers to write code that handles diverse inputs, or data scientists to build models that predict outcomes across varying datasets. When we define a function f(x) = x^2, we’re not just describing the square of a single number; we’re describing a rule that applies to any number. This ability to capture relationships and processes in a generalized form is the foundation of creating reusable code, scalable algorithms, and adaptable systems – cornerstones of modern tech development.
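As a small illustration of stating a rule once for every input, here is a sketch in Python. The function name `square` and the sample inputs are chosen for this example only.

```python
from fractions import Fraction

def square(x):
    """The rule f(x) = x^2, written once and valid for any numeric input."""
    return x * x

# The same rule applies unchanged to integers, floats, and exact fractions.
inputs = [5, -3, 0.5, Fraction(2, 3)]
results = [square(x) for x in inputs]
print(results)
```

Nothing in `square` mentions a particular number, which is what makes it reusable across all of these inputs.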

The Language of Relationships and Operations

Algebra provides a universal language for describing relationships between entities and defining operations on them. Whether it’s combining data points, transforming signals, or executing sequences of commands, these actions can often be expressed algebraically. For example, in database queries, operations like ‘union’ or ‘intersection’ have algebraic equivalents. In graphical user interfaces, transformations like scaling, rotation, and translation are performed using matrix algebra. By articulating these relationships algebraically, engineers gain precision, clarity, and the ability to apply rigorous mathematical analysis to their designs, ensuring reliability and efficiency in complex technological systems. This mathematical precision is what allows us to confidently build complex systems like operating systems or large-scale cloud infrastructure, knowing that the underlying logic is sound and predictable. It’s the difference between guessing how a system will behave and mathematically proving its behavior under various conditions.
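The scaling, rotation, and translation mentioned above can be sketched with 3x3 matrices in homogeneous coordinates, a common convention in 2D graphics. This is a minimal pure-Python sketch; the helper names (`matmul`, `scale`, `rotate`, `translate`, `apply`) are illustrative and not taken from any particular graphics library.

```python
import math

def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))]
            for i in range(len(a))]

def scale(sx, sy):
    return [[sx, 0, 0], [0, sy, 0], [0, 0, 1]]

def rotate(theta):
    c, s = math.cos(theta), math.sin(theta)
    return [[c, -s, 0], [s, c, 0], [0, 0, 1]]

def translate(tx, ty):
    return [[1, 0, tx], [0, 1, ty], [0, 0, 1]]

def apply(matrix, point):
    """Apply a 3x3 transform to a 2D point written as (x, y, 1)."""
    x, y = point
    column = matmul(matrix, [[x], [y], [1]])
    return (column[0][0], column[1][0])

# Compose three transforms into one matrix. The rightmost is applied first:
# scale by 2, then rotate by 90 degrees, then shift 10 units along x.
combined = matmul(translate(10, 0), matmul(rotate(math.pi / 2), scale(2, 2)))
moved = apply(combined, (1, 0))
print(moved)  # approximately (10, 2), up to floating-point rounding
```

Because the three steps collapse into a single matrix, the same `combined` transform can be applied to every point of a shape with one multiplication each.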

Algebra as the Foundation of Computer Science

The entire edifice of computer science is built upon algebraic principles. From the very lowest level of hardware logic to the highest levels of application development, algebraic structures provide the conceptual framework. Understanding this connection is vital for comprehending how computers process information, execute instructions, and manage complex operations.

Boolean Algebra: The Logic Gates of Computing

Perhaps the most direct and fundamental application of algebra in computing is Boolean algebra. Developed by George Boole in the 19th century, this system deals with binary variables (true/false, or 1/0) and logical operations (AND, OR, NOT, XOR). Every digital circuit, from the simplest transistor switch to the most complex microprocessor, operates on the principles of Boolean algebra. Logic gates, the elementary building blocks of digital circuits, directly implement these Boolean operations. A NAND gate, for instance, is an algebraic function that takes two binary inputs and outputs a 1 only if at least one input is 0. Without Boolean algebra, the very idea of a digital computer – a machine that processes information using binary states – would be inconceivable. It’s the bedrock upon which all subsequent computational advancements are made, dictating how electrons flow and how information is represented and manipulated at the hardware level.
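To make the NAND description concrete, the following sketch models bits as the integers 0 and 1 and builds NOT, AND, OR, and XOR out of NAND alone. The function names are chosen for this example; real hardware is of course described in circuit terms rather than Python.

```python
def nand(a, b):
    """Output 1 unless both inputs are 1."""
    return 1 - (a & b)

# NAND is functionally complete: the other basic gates can be built from it.
def not_(a):
    return nand(a, a)

def and_(a, b):
    return not_(nand(a, b))

def or_(a, b):
    return nand(not_(a), not_(b))

def xor_(a, b):
    n = nand(a, b)
    return nand(nand(a, n), nand(b, n))

# Print the full truth table: a, b, NAND, AND, OR, XOR.
for a in (0, 1):
    for b in (0, 1):
        print(a, b, nand(a, b), and_(a, b), or_(a, b), xor_(a, b))
```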

Data Structures and Algorithms: Abstracting Information

Beyond hardware, algebra permeates the software layer through data structures and algorithms. A data structure, such as an array, linked list, or tree, is essentially an algebraic model for organizing data in a way that allows for efficient operations. For example, the rules for inserting an element into a binary search tree, or traversing a graph, are defined by algorithmic steps that often have clear algebraic representations. Algorithms themselves are sequences of well-defined instructions, many of which involve algebraic manipulation. Sorting algorithms, search algorithms, and even seemingly simple arithmetic operations within a program are built on algebraic principles. The efficiency of these algorithms (how quickly they run and how much memory they consume) is frequently analyzed using algebraic notation such as Big O notation, which gives an asymptotic upper bound on how an algorithm’s running time or memory use grows with the size of its input. This allows developers to choose the most suitable approach for processing vast amounts of data.
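The binary search tree insertion rule mentioned above can be sketched in a few lines. This is a minimal, unbalanced tree that ignores duplicate keys; the names `Node`, `insert`, and `in_order` are illustrative.

```python
class Node:
    def __init__(self, key):
        self.key = key
        self.left = None
        self.right = None

def insert(root, key):
    """Insert key so that smaller keys stay left and larger keys stay right."""
    if root is None:
        return Node(key)
    if key < root.key:
        root.left = insert(root.left, key)
    elif key > root.key:
        root.right = insert(root.right, key)
    # Equal keys are ignored in this sketch.
    return root

def in_order(root):
    """Visit left subtree, node, right subtree: yields keys in sorted order."""
    if root is None:
        return []
    return in_order(root.left) + [root.key] + in_order(root.right)

root = None
for k in [50, 30, 70, 20, 40, 60, 80, 30]:
    root = insert(root, k)

keys = in_order(root)
print(keys)  # [20, 30, 40, 50, 60, 70, 80]
```

The ordering invariant is the "algebraic rule" here: as long as every insertion preserves it, an in-order traversal returns the keys sorted.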

Programming Languages: Syntax and Semantic Structures

Every programming language, from Python to C++ to JavaScript, possesses a formal grammar and syntax that can be described algebraically. The way variables are declared, expressions are evaluated, and control flows (like if-else statements or loops) are structured all follow a set of formal rules. Compilers and interpreters, which translate human-readable code into machine instructions, rely heavily on parsing these structures. The very concept of an ‘expression’ in programming (e.g., x + y * z) is inherently algebraic, defining an order of operations and the combination of values. Understanding the algebraic underpinnings of programming languages helps developers write cleaner, more efficient code with fewer errors, and, crucially, design new languages or extend existing ones with more confidence in their logical consistency.
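One way to see the structure behind an expression such as x + y * z is to look at the tree a parser builds from it. The sketch below uses Python's standard `ast` module; the variable values passed to `eval` are arbitrary and chosen for this example.

```python
import ast

source = "x + y * z"
tree = ast.parse(source, mode="eval")

# The root of the expression is the addition; the multiplication sits
# underneath it as the right operand, so it is evaluated first.
top = tree.body
print(type(top.op).__name__)        # Add
print(type(top.right.op).__name__)  # Mult

# Evaluate the parsed tree with sample values for the variables.
value = eval(compile(tree, "<expr>", "eval"), {"x": 2, "y": 3, "z": 4})
print(value)  # 14, i.e. 2 + (3 * 4)
```

The precedence rule is not applied at run time as a special case; it is already encoded in the shape of the tree.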

Powering AI and Machine Learning: The Algebraic Core

The revolutionary advancements in Artificial Intelligence and Machine Learning are almost entirely dependent on sophisticated algebraic principles. From processing vast datasets to training complex neural networks, algebra provides the mathematical framework that enables machines to learn, recognize patterns, and make intelligent decisions.

Linear Algebra: The Bedrock of Data Science

If there’s one branch of algebra that underpins modern AI, it’s linear algebra. Data, in the context of machine learning, is almost universally represented as vectors, matrices, and tensors, all of which are fundamental concepts in linear algebra. A grayscale image is a matrix of pixel intensities; a dataset of customer information is a matrix where rows are customers and columns are features. Operations like multiplying matrices, finding eigenvalues, or performing vector additions are at the heart of most machine learning algorithms. Principal Component Analysis (PCA), a common technique for dimensionality reduction, is computed from the eigenvectors and eigenvalues of the data’s covariance matrix. Support Vector Machines (SVMs) use hyperplanes (linear decision boundaries) defined by linear algebraic equations to classify data. Understanding linear algebra is not just beneficial for data scientists; it is essential for interpreting model behaviors, optimizing performance, and developing new AI techniques.
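The "rows are customers, columns are features" picture can be sketched without any external library. The feature values and scoring weights below are made up for illustration, and the helper `matvec` is a plain-Python stand-in for what a numerical library would normally do.

```python
# Each row is one customer; columns are hypothetical features:
# age, visits per month, monthly spend.
customers = [
    [25, 4, 120.0],
    [40, 1, 60.0],
    [31, 8, 300.0],
]

# Hypothetical weights for a linear score, one weight per feature.
weights = [0.1, 2.0, 0.01]

def matvec(matrix, vector):
    """Matrix-vector product: one dot product per row."""
    return [sum(m * v for m, v in zip(row, vector)) for row in matrix]

# One score per customer, computed in a single matrix-vector product.
scores = matvec(customers, weights)

# Transposing with zip(*rows) turns rows into columns, giving per-feature means.
column_means = [sum(col) / len(col) for col in zip(*customers)]

print(scores)
print(column_means)
```

In practice the same operations would typically be written with an array library, but the underlying algebra is exactly this.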

Neural Networks: Layers of Algebraic Transformations

Artificial Neural Networks (ANNs), the driving force behind deep learning, are essentially a series of algebraic transformations. Each “neuron” in a network takes a set of inputs, multiplies them by corresponding “weights” (coefficients), sums them up, adds a “bias” (a constant term), and then passes the result through an “activation function.” The whole step can be written as a single equation: output = activation(w · x + b). Stacking these neurons into layers creates a cascade of matrix multiplications and non-linear transformations. Training a neural network involves adjusting these weights and biases to minimize the difference between the network’s output and the desired outcome, an optimization problem typically solved with gradient descent, which combines calculus with these algebraic operations. Without a working understanding of the operations occurring within each layer, it is very difficult to design, train, or debug a modern deep learning model, whether for image recognition or natural language processing.
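The neuron described above (weighted sum, plus bias, through an activation) can be sketched directly. This is a minimal forward pass only, with a sigmoid activation and hand-picked weights chosen so the arithmetic is easy to follow; none of the numbers come from a trained model.

```python
import math

def sigmoid(z):
    """A common activation function that squashes any real number into (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def neuron(inputs, weights, bias):
    """Weighted sum of the inputs, plus bias, passed through the activation."""
    z = sum(w * x for w, x in zip(weights, inputs)) + bias
    return sigmoid(z)

def layer(inputs, weight_rows, biases):
    """A layer is several neurons reading the same inputs."""
    return [neuron(inputs, w, b) for w, b in zip(weight_rows, biases)]

x = [1.0, 2.0]

# Hidden layer with two neurons, then an output layer with one neuron.
hidden = layer(x, [[0.5, -0.25], [1.0, 1.0]], [0.0, -3.0])
output = layer(hidden, [[1.0, -1.0]], [0.0])

print(hidden)  # [0.5, 0.5] because both weighted sums come out to 0
print(output)  # [0.5]
```

Each call to `layer` is a matrix-vector product followed by an element-wise non-linearity, which is the "cascade" the paragraph refers to.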

Optimization and Gradient Descent: Finding the Best Solutions

Training a machine learning model is, at its core, an optimization problem: finding the set of parameters (weights and biases) that minimizes a “cost function” or “loss function.” This cost function is an algebraic expression that quantifies the error of a model’s predictions. The primary algorithm for solving this optimization problem is gradient descent, an iterative process that relies on calculating the gradient (a vector of partial derivatives) of the cost function with respect to its parameters. The gradient points in the direction of steepest ascent, so by repeatedly taking a small step in the opposite direction (the “descent”), the algorithm moves toward a minimum of the cost function; for non-convex functions this may be a local rather than a global minimum. Variants such as Stochastic Gradient Descent, Adam, and RMSprop change how the gradient is estimated or how the step size is adapted, with the aim of making training faster and more robust. The ability to represent these functions and their derivatives algebraically is what allows AI systems to learn and improve over time, transforming raw data into actionable intelligence.
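The descent loop can be sketched on a deliberately simple one-parameter cost function, (w - 3)^2, whose minimum is known to be at w = 3. The cost function, starting point, learning rate, and step count are all assumptions made for this illustration.

```python
def loss(w):
    """Toy cost function with its minimum at w = 3."""
    return (w - 3.0) ** 2

def grad(w):
    """Derivative of the loss with respect to w."""
    return 2.0 * (w - 3.0)

w = 0.0               # arbitrary starting guess
learning_rate = 0.1   # step size

for step in range(100):
    # Step against the gradient, i.e. downhill.
    w -= learning_rate * grad(w)

print(w, loss(w))  # w ends up very close to 3, loss very close to 0
```

With this learning rate the distance to the minimum shrinks by a constant factor each step; too large a rate would overshoot, which is one of the issues the adaptive variants try to manage.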

Securing Our Digital World: Algebra in Cryptography

In the realm of digital security, algebra moves from abstract concepts to practical shields, protecting our privacy, data integrity, and online transactions. Cryptography, the science of secure communication, is profoundly rooted in advanced algebraic structures, making it an indispensable tool for securing the modern internet.

Modular Arithmetic and Public-Key Encryption

One of the most significant applications of algebra in cryptography is modular arithmetic, a system of arithmetic for integers where numbers “wrap around” when reaching a certain value (the modulus). This seemingly simple algebraic concept is the foundation for widely used public-key encryption schemes like RSA (Rivest-Shamir-Adleman). RSA relies on the properties of modular exponentiation and on the computational difficulty of factoring the product of two large primes. When someone sends you an encrypted message, an algebraic operation using your public key transforms the data into unreadable ciphertext. Only the holder of the corresponding private key can perform the inverse operation to decrypt it. The security of this process hinges on the algebraic properties of numbers and the presumed computational infeasibility of certain inverse operations, providing a strong defense against eavesdropping.
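The public-key and private-key operations can be sketched with textbook-sized numbers. This is a toy illustration only: the primes are tiny, there is no padding, and it should never be used for real security. It assumes Python 3.8 or later, where the built-in `pow` accepts a negative exponent together with a modulus.

```python
# Toy RSA with small primes (illustration only, not secure).
p, q = 61, 53
n = p * q                  # public modulus: 3233
phi = (p - 1) * (q - 1)    # 3120

e = 17                     # public exponent, coprime with phi
d = pow(e, -1, phi)        # private exponent: modular inverse of e, 2753

message = 65                           # a message encoded as a number below n
ciphertext = pow(message, e, n)        # encrypt with the public key (e, n)
recovered = pow(ciphertext, d, n)      # decrypt with the private key (d, n)

print(ciphertext)  # 2790
print(recovered)   # 65
```

Anyone who could factor `n` back into 61 and 53 could recompute `d`; with real key sizes that factoring step is what is believed to be infeasible.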

Elliptic Curve Cryptography: Advanced Algebraic Security

Beyond RSA, more advanced cryptographic methods leverage complex algebraic structures. Elliptic Curve Cryptography (ECC) is a prime example. ECC employs the algebraic structure of elliptic curves over finite fields to create highly secure cryptographic keys that are significantly smaller than RSA keys for the same level of security. This makes ECC particularly valuable for resource-constrained devices like smartphones and embedded systems, and for applications where bandwidth is limited. The security of ECC relies on the difficulty of solving the “elliptic curve discrete logarithm problem,” another computationally challenging algebraic problem. Understanding the group theory and field theory inherent in these algebraic structures is critical for designing and analyzing the next generation of cryptographic protocols, from secure communication channels to blockchain technologies.
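The group structure behind ECC can be sketched on a very small curve. The example below uses the curve y^2 = x^3 + 2x + 2 over the integers modulo 17 with base point (5, 1), a common classroom-sized example; the secret values 3 and 7 are arbitrary. It is far too small to be secure and assumes Python 3.8 or later for the modular inverse via `pow`.

```python
P_MOD = 17       # field prime (toy size)
A, B = 2, 2      # curve: y^2 = x^3 + A*x + B (mod P_MOD)
G = (5, 1)       # base point on the curve

def add(p1, p2):
    """Add two curve points; None represents the point at infinity."""
    if p1 is None:
        return p2
    if p2 is None:
        return p1
    x1, y1 = p1
    x2, y2 = p2
    if x1 == x2 and (y1 + y2) % P_MOD == 0:
        return None  # a point plus its negation
    if p1 == p2:
        slope = (3 * x1 * x1 + A) * pow((2 * y1) % P_MOD, -1, P_MOD) % P_MOD
    else:
        slope = (y2 - y1) * pow((x2 - x1) % P_MOD, -1, P_MOD) % P_MOD
    x3 = (slope * slope - x1 - x2) % P_MOD
    y3 = (slope * (x1 - x3) - y1) % P_MOD
    return (x3, y3)

def scalar_mult(k, point):
    """Compute k * point with the double-and-add method."""
    result = None
    addend = point
    while k:
        if k & 1:
            result = add(result, addend)
        addend = add(addend, addend)
        k >>= 1
    return result

# A Diffie-Hellman style exchange: each side combines its own secret
# with the other side's public point and arrives at the same result.
alice_secret, bob_secret = 3, 7
alice_public = scalar_mult(alice_secret, G)
bob_public = scalar_mult(bob_secret, G)
shared_by_alice = scalar_mult(alice_secret, bob_public)
shared_by_bob = scalar_mult(bob_secret, alice_public)
print(shared_by_alice == shared_by_bob)  # True
```

Recovering `alice_secret` from `alice_public` and `G` is the elliptic curve discrete logarithm problem; on this toy curve it is trivial by brute force, which is exactly why real curves use much larger fields.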

Hashing Algorithms: Data Integrity Through Algebraic Functions

Hashing algorithms are another cornerstone of digital security, used for verifying data integrity, storing passwords securely, and constructing blockchain ledgers. A hash function takes an input (or “message”) and returns a fixed-size sequence of bytes, usually displayed as a hexadecimal string, which is the “hash value” or “digest.” Cryptographic hash functions are designed to be one-way: it’s easy to compute the hash from the input, but computationally infeasible to reverse the process and derive the input from the hash. Furthermore, even a tiny change in the input should result in a drastically different hash value (the “avalanche effect”). These properties are achieved through a combination of algebraic operations, bitwise manipulations, and iterative transformations. The Secure Hash Algorithm (SHA) families, which include SHA-256, are widely used examples. Because any change to the data changes the digest, tampering can be detected by recomputing the hash and comparing it with a trusted value, which underpins trust in digital transactions and records.
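The fixed-size output and the avalanche effect can be observed with Python's standard `hashlib` module. The two message strings below are made up for this example; the helper names are illustrative.

```python
import hashlib

def sha256_hex(text):
    """Return the SHA-256 digest of a string as 64 hexadecimal characters."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()

def differing_bits(hex_a, hex_b):
    """Count how many of the 256 digest bits differ between two digests."""
    return bin(int(hex_a, 16) ^ int(hex_b, 16)).count("1")

# Two messages that differ in a single character.
original = sha256_hex("transfer 100 to alice")
tampered = sha256_hex("transfer 900 to alice")

print(original)
print(tampered)
print(differing_bits(original, tampered), "of 256 bits differ")
```

Running this typically shows roughly half of the bits changing, even though only one character of the input was altered.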

Cultivating Algebraic Intuition: Essential for Tomorrow’s Tech Innovators

Given the pervasive influence of algebraic thinking across all domains of technology, cultivating an “algebraic intuition” is no longer just a mathematical luxury; it’s a fundamental skill for anyone aspiring to innovate and lead in the tech industry. This intuition goes beyond memorizing formulas; it involves a deep understanding of abstraction, generalization, and problem-solving through symbolic manipulation.

Problem-Solving Paradigms: From Equations to Code

An algebraic mindset trains individuals to break down complex problems into smaller, manageable components, identify underlying patterns, and express relationships in a precise, formal manner. This mirrors the process of developing software: defining variables, designing functions, constructing data models, and implementing algorithms. Whether you’re debugging a tricky piece of code, designing a new API, or optimizing a database query, the ability to mentally model the system as a set of interacting algebraic equations or transformations will lead to more elegant and robust solutions. It allows for a more abstract view of the problem, enabling designers to focus on logic and structure rather than getting bogged down in specific instances.

Understanding System Dynamics and Scalability

Algebraic thinking also provides a powerful lens for understanding how systems behave, scale, and interact. Equations and models can predict the performance of an algorithm as data volume increases, or how changes in network parameters will affect latency. When a tech professional thinks algebraically, they can anticipate bottlenecks, design for future growth, and make informed decisions about architectural choices. For example, understanding how an algorithm’s complexity (often expressed algebraically as O(n^2) or O(log n)) impacts its scalability is crucial for building systems that can handle real-world demands without collapsing under load. This predictive power, rooted in algebraic analysis, is invaluable for building resilient and high-performing tech solutions.
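To see how O(n^2) and O(log n) diverge as input grows, the sketch below simply counts steps. The two routines are stand-ins chosen for this illustration: an all-pairs comparison, as a naive duplicate check might do, and a binary search over sorted data.

```python
def count_pair_comparisons(items):
    """Compare every pair once, as a naive duplicate check would: O(n^2)."""
    steps = 0
    for i in range(len(items)):
        for j in range(i + 1, len(items)):
            steps += 1
    return steps

def binary_search_steps(sorted_items, target):
    """Count loop iterations of a binary search on sorted data: O(log n)."""
    steps = 0
    lo, hi = 0, len(sorted_items) - 1
    while lo <= hi:
        steps += 1
        mid = (lo + hi) // 2
        if sorted_items[mid] == target:
            break
        if sorted_items[mid] < target:
            lo = mid + 1
        else:
            hi = mid - 1
    return steps

# Grow the input by 10x each time and watch how the step counts respond.
for n in (10, 100, 1000):
    data = list(range(n))
    print(n, count_pair_comparisons(data), binary_search_steps(data, n - 1))
```

Each tenfold increase in `n` multiplies the pairwise count by about a hundred, while the binary search needs only a few more steps.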

The Future: Quantum Computing and Advanced Algebraic Structures

Looking ahead, the importance of algebraic intuition is only set to grow. Emerging fields like quantum computing rely heavily on advanced linear algebra, group theory, and abstract algebra to describe quantum states, operations, and algorithms. Quantum bits (qubits) and quantum gates operate on fundamentally different algebraic principles than classical bits and logic gates. Developing quantum software or designing quantum algorithms will require a sophisticated grasp of these more complex algebraic structures. Similarly, advancements in AI, such as explainable AI or new forms of machine learning, will continue to push the boundaries of algebraic modeling. For the next generation of tech innovators, embracing and mastering algebraic thought will be paramount to unlocking the full potential of these transformative technologies and shaping the digital world of tomorrow.
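As a taste of the linear algebra involved, a single qubit can be sketched as a two-component vector and a gate as a 2x2 matrix. This is a bare numerical illustration using the standard Hadamard gate; it restricts itself to real amplitudes for simplicity, whereas amplitudes are complex numbers in general, and it is not how quantum software frameworks are actually used.

```python
import math

# The Hadamard gate as a 2x2 matrix.
s = 1 / math.sqrt(2)
H = [[s, s],
     [s, -s]]

def apply_gate(gate, state):
    """Apply a gate to a state vector: an ordinary matrix-vector product."""
    return [sum(g * a for g, a in zip(row, state)) for row in gate]

zero = [1.0, 0.0]                      # the basis state |0>
superposition = apply_gate(H, zero)    # equal mix of |0> and |1>

# Measurement probabilities are the squared magnitudes of the amplitudes.
probabilities = [abs(a) ** 2 for a in superposition]

# Applying the Hadamard gate twice returns the original state.
back = apply_gate(H, superposition)

print(probabilities)  # approximately [0.5, 0.5]
print(back)           # approximately [1.0, 0.0]
```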

In conclusion, “what is algebraic” transcends a mere definition; it represents a foundational mode of thought that has enabled the creation of our digital world and continues to drive its evolution. From the fundamental binary logic of computers to the sophisticated algorithms of AI and the cryptographic foundations of cybersecurity, algebra is the invisible thread weaving through technological innovation. For those building, securing, and envisioning the future of technology, cultivating a strong algebraic intuition is not just an advantage; it is a necessity.
