In the world of technology, everything we interact with—from the sleek interface of a smartphone to the complex algorithms driving generative AI—is built upon a foundation of mathematics. While we often discuss high-level concepts like “machine learning” or “cloud architecture,” the fundamental building block of these innovations is the numerical expression. At its core, a numerical expression in mathematics is a combination of numbers and operation symbols (such as addition, subtraction, multiplication, and division) that represents a specific value.

However, in the context of modern technology, a numerical expression is much more than a classroom exercise. It is the DNA of code, the logic of hardware, and the language of data. To understand how software functions and how AI “thinks,” one must first understand how numerical expressions are structured and processed within the digital ecosystem.

The Foundations of Logic: Defining Numerical Expressions in Programming

In the realm of software development, numerical expressions serve as the primary vehicle for data manipulation. While a pure mathematician might view $5 + (3 \times 2)$ as a static problem to be solved, a software engineer views it as a dynamic instruction set.

Syntax and Semantics in Code

In programming languages like Python, C++, or Java, numerical expressions must follow strict syntactical rules to be interpreted correctly by a compiler or interpreter. In handwritten math, a misplaced parenthesis might lead to a minor error that a reader can work around. In code, it produces a “Syntax Error” that stops the program from compiling or running at all. The expression is the most basic unit of work. When a programmer writes total_cost = price * quantity, the expression price * quantity computes a value, and assigning that value to total_cost updates the state of the application.

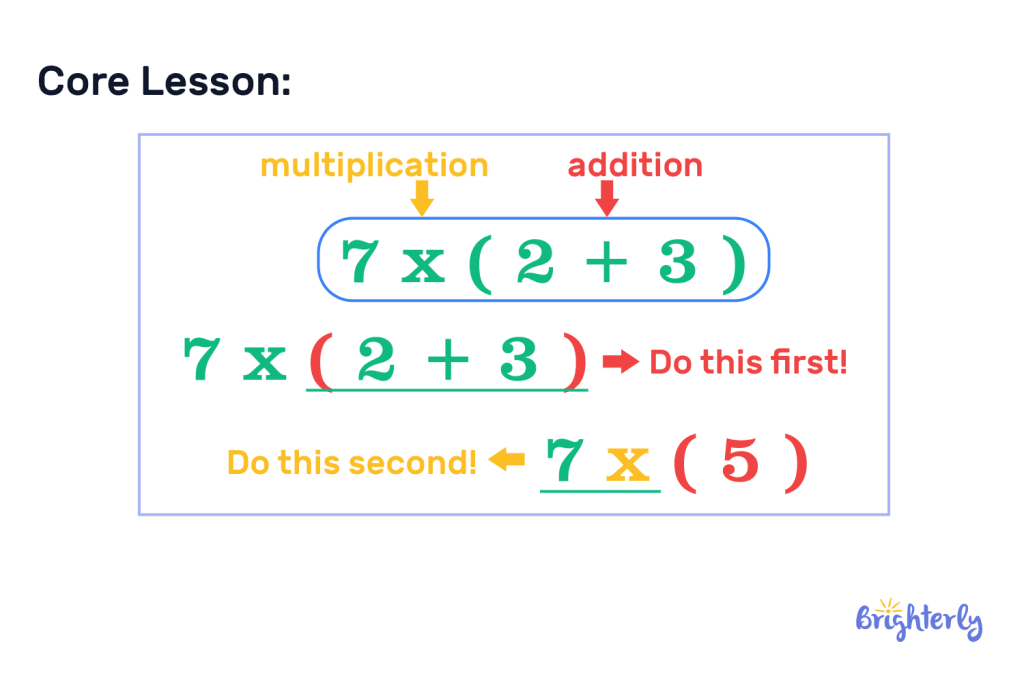

The Order of Operations: From PEMDAS to Computational Logic

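Computers follow a strict hierarchy when evaluating numerical expressions, similar to the PEMDAS (Parentheses, Exponents, Multiplication, Division, Addition, Subtraction) rule learned in school. In programming, this hierarchy is called “operator precedence,” and it is paired with associativity rules: multiplication and division share one precedence level and are evaluated left to right, as are addition and subtraction. Understanding this is critical for software reliability and digital security. A missing or misplaced pair of parentheses changes what an expression computes, and the grouping of operations also determines how large intermediate results become, so a poorly grouped expression can cause an integer overflow or a logic flaw that an attacker may be able to exploit.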
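To make operator precedence concrete, here is a minimal Python sketch. The variable names are illustrative only, and the rules shown are Python's; other languages follow similar but not identical precedence tables.

```python
# Multiplication binds tighter than addition.
without_parens = 5 + 3 * 2   # evaluated as 5 + (3 * 2)
with_parens = (5 + 3) * 2    # parentheses force the addition first

# In Python, exponentiation binds tighter than unary minus.
negated_square = -2 ** 2     # evaluated as -(2 ** 2)

# Division and multiplication share one level and run left to right.
left_to_right = 8 / 4 * 2    # evaluated as (8 / 4) * 2

print(without_parens, with_parens, negated_square, left_to_right)
# prints: 11 16 -4 4.0
```

The same numbers and operators give different values depending only on grouping, which is why explicit parentheses are often worth the extra characters.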

Numerical Expressions in Data Science and Big Data

We live in the era of Big Data, where companies process petabytes of information every second. At this scale, the numerical expression evolves from a simple calculation into a sophisticated tool for pattern recognition and statistical analysis.

Algorithmic Efficiency and Big O Notation

When data scientists analyze large datasets, they use mathematical expressions to describe the efficiency of an algorithm. This is often expressed through “Big O Notation,” which describes how the execution time of an algorithm grows as the input size increases. For instance, an expression like $O(n^2)$ tells a developer that running time grows with the square of the input size: doubling the number of users roughly quadruples the work. This is quadratic growth, not exponential growth, but it is still steep enough to cripple a platform as it scales. By choosing algorithms with better growth rates, tech companies can substantially reduce server costs and energy consumption.
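A rough way to see the difference between growth rates is to count steps directly. The sketch below uses two hypothetical helper functions (not from any library) that count the loop iterations of an $O(n)$ pass and an $O(n^2)$ nested pass.

```python
def count_linear_steps(n):
    """Count the steps of a single pass over n items: O(n)."""
    steps = 0
    for _ in range(n):
        steps += 1
    return steps


def count_quadratic_steps(n):
    """Count the steps of pairing every item with every item: O(n^2)."""
    steps = 0
    for _ in range(n):
        for _ in range(n):
            steps += 1
    return steps


for n in (10, 100, 1000):
    print(n, count_linear_steps(n), count_quadratic_steps(n))
```

Each tenfold increase in `n` multiplies the linear count by 10 and the quadratic count by 100.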

Processing Large Datasets with Vectorization

Modern data processing tools, such as NumPy or Pandas, use vectorized expressions. Instead of evaluating a numerical expression one element at a time in an interpreted loop, these libraries apply a single expression across an entire array, potentially millions of numbers, inside optimized compiled code. On modern processors that code can use “Single Instruction, Multiple Data” (SIMD) instructions, which operate on several values per instruction. This style of computation is part of what makes large-scale weather forecasting, financial modeling, and genome sequencing practical. Without the ability to scale numerical expressions across hardware, our most advanced tech tools would grind to a halt.
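As a small illustration, the sketch below compares a vectorized NumPy expression with the equivalent element-by-element loop. The prices and quantities are made-up sample values, and whether SIMD instructions are actually used depends on the NumPy build and the CPU.

```python
import numpy as np

# Made-up sample data for illustration.
prices = np.array([19.99, 5.49, 102.00, 0.99])
quantities = np.array([3, 10, 1, 25])

# One vectorized expression: element-wise multiplication over the arrays.
totals = prices * quantities

# The same calculation written as an explicit Python loop.
loop_totals = [p * q for p, q in zip(prices, quantities)]

print(totals)
print(np.allclose(totals, loop_totals))
```

Both versions compute the same values; the vectorized form simply hands the loop to compiled code, which matters more as arrays grow.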

The Role of Expressions in AI and Machine Learning

The current boom in Artificial Intelligence is, in many ways, a triumph of high-speed numerical expression evaluation. When we talk about a “Neural Network,” we are essentially describing a massive web of interconnected numerical expressions.

Neural Network Weights and Biases

In a machine learning model, every “neuron” evaluates a specific numerical expression: $y = f(wx + b)$. Here, $w$ represents the weight, $x$ is the input data, $b$ is the bias, and $f$ is an activation function that shapes the output. The “learning” process in AI involves adjusting the values of $w$ and $b$ until the expression yields the most accurate result. Every time ChatGPT generates a sentence or a self-driving car identifies a stop sign, billions of these numerical expressions are being evaluated in milliseconds on specialized hardware like GPUs (Graphics Processing Units).
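The neuron expression $y = f(wx + b)$ can be sketched in a few lines of Python. The choice of a sigmoid for $f$ and the input values are assumptions made for illustration; real networks use many inputs per neuron and a variety of activation functions.

```python
import math


def sigmoid(z):
    """A common activation function that squashes z into (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def neuron(x, w, b, f=sigmoid):
    """Evaluate y = f(w * x + b) for a single scalar input."""
    return f(w * x + b)


# Here w * x + b = 0.5 * 2.0 - 1.0 = 0.0, and sigmoid(0.0) = 0.5.
y = neuron(x=2.0, w=0.5, b=-1.0)
print(y)  # prints: 0.5
```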

Optimization Functions and Gradient Descent

To train an AI, developers use “Loss Functions,” which are numerical expressions that measure the difference between the AI’s prediction and the expected answer. Through a process called Gradient Descent, the system uses calculus-based expressions (derivatives of the loss) to adjust the weights step by step and reduce this error. How well that error is minimized, together with the quality of the training data, shapes how accurate and useful a model’s outputs are. In tech, the quality of your numerical expressions has a direct effect on the quality of your software.
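The following is a minimal sketch of gradient descent, assuming a one-parameter model $y = wx$ and a mean squared error loss. The data, learning rate, and step count are invented for illustration and are not tuned for any real task.

```python
# Toy data generated by the rule y = 2 * x, so the best weight is 2.0.
xs = [1.0, 2.0, 3.0, 4.0]
ys = [2.0, 4.0, 6.0, 8.0]


def loss(w):
    """Mean squared error between predictions w * x and targets y."""
    return sum((w * x - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


def gradient(w):
    """Derivative of the loss with respect to w."""
    return sum(2 * (w * x - y) * x for x, y in zip(xs, ys)) / len(xs)


w = 0.0               # initial guess
learning_rate = 0.01  # assumed step size
for _ in range(200):
    w -= learning_rate * gradient(w)  # step against the gradient

print(w, loss(w))
```

Each iteration moves `w` a small distance in the direction that lowers the loss, so the weight approaches 2.0 and the loss approaches zero.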

Tools and Languages: How Modern Software Interprets Math

The bridge between a human-readable numerical expression and the binary logic of a computer is built by specialized software tools. These tools ensure that mathematical concepts are translated into physical electrical pulses within a processor.

High-Level vs. Low-Level Languages

High-level languages like Python are designed to make numerical expressions look as close to human math as possible. For example, calculating a square root is simply math.sqrt(16). However, at the “low level”—closer to the hardware—these expressions are broken down into Assembly language and eventually binary code. A simple addition expression in a mobile app might involve dozens of low-level steps where the CPU moves numbers between “registers” to perform the calculation.
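One way to glimpse the lower-level steps behind a simple expression is Python's standard `dis` module. This sketch assumes the CPython interpreter; it shows bytecode for Python's virtual machine rather than CPU assembly, and the exact instruction names differ between Python versions. The function name `add` is a hypothetical example.

```python
import dis
import math


def add(a, b):
    """A one-line addition expression in a high-level language."""
    return a + b


root = math.sqrt(16)
print(root)  # prints: 4.0

# List the lower-level instructions the interpreter runs for `a + b`.
instructions = [ins.opname for ins in dis.get_instructions(add)]
print(instructions)
```

Even this single `+` expands into separate load, add, and return instructions, a small-scale version of what happens again when the work reaches the CPU.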

The Role of Compilers and Interpreters

A compiler acts as a translator. When you write a complex numerical expression in a piece of software, the compiler analyzes it to find the most efficient way for the hardware to execute it. Modern “Just-In-Time” (JIT) compilers can even rewrite numerical expressions on the fly to optimize performance based on the specific chip (Intel, AMD, or ARM) the software is running on. This level of technical abstraction allows developers to focus on building features while the tools handle the mathematical heavy lifting.
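As a sketch of the kind of analysis a compiler performs, the example below implements constant folding, one simple optimization, using Python's standard `ast` module. The `ConstantFolder` class and `fold` function are hypothetical names written for this illustration; production compilers apply many more transformations. It requires Python 3.9 or later for `ast.unparse`.

```python
import ast
import operator

# Operators this sketch knows how to pre-compute.
_OPS = {ast.Add: operator.add, ast.Sub: operator.sub, ast.Mult: operator.mul}


class ConstantFolder(ast.NodeTransformer):
    """Replace arithmetic on two numeric literals with its result."""

    def visit_BinOp(self, node):
        self.generic_visit(node)  # fold the sub-expressions first
        op = _OPS.get(type(node.op))
        if (
            op is not None
            and isinstance(node.left, ast.Constant)
            and isinstance(node.right, ast.Constant)
            and isinstance(node.left.value, (int, float))
            and isinstance(node.right.value, (int, float))
        ):
            folded = ast.Constant(value=op(node.left.value, node.right.value))
            return ast.copy_location(folded, node)
        return node


def fold(source):
    """Parse an expression, fold its constants, and return new source."""
    tree = ast.parse(source, mode="eval")
    tree = ast.fix_missing_locations(ConstantFolder().visit(tree))
    return ast.unparse(tree)


print(fold("price * (2 + 3 * 4)"))  # prints: price * 14
```

The sub-expression `2 + 3 * 4` never changes, so it is computed once ahead of time, leaving a single multiplication to run.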

Digital Security: Mathematical Expressions in Encryption

Perhaps the most critical application of numerical expressions in the modern world is in the field of cybersecurity. Every time you log into a bank account or send an encrypted message, your data is protected by complex mathematical expressions.

Cryptographic Hashing and the RSA Algorithm

Public-key encryption relies on “trapdoor functions”: numerical expressions that are easy to calculate in one direction but computationally infeasible to reverse without a specific key. The RSA algorithm, long one of the most widely used public-key schemes on the web, is based on the difficulty of factoring the product of two large prime numbers. Its core is modular exponentiation: a message $m$ is encrypted as $c = m^e \bmod n$ and decrypted as $m = c^d \bmod n$, where $n$ is the product of the two primes, $e$ is the public exponent, and $d$ is the private exponent. If an attacker cannot factor $n$ and so cannot recover $d$, your data remains safe.
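The arithmetic behind RSA can be sketched with deliberately tiny primes. This is a toy illustration only: the numbers are far too small to be secure, and real implementations add padding and other safeguards that are omitted here. The modular inverse via `pow(e, -1, phi)` requires Python 3.8 or later.

```python
# Toy RSA with tiny primes. Never use numbers this small in practice.
p, q = 61, 53
n = p * q                # public modulus
phi = (p - 1) * (q - 1)  # used to derive the private exponent
e = 17                   # public exponent, chosen coprime with phi
d = pow(e, -1, phi)      # private exponent: modular inverse of e

message = 65
ciphertext = pow(message, e, n)    # encryption: modular exponentiation
recovered = pow(ciphertext, d, n)  # decryption: modular exponentiation

print(n, d, ciphertext, recovered)
```

Computing `ciphertext` from `message` is fast for anyone holding `e` and `n`; going backwards without `d` requires factoring `n`, which is trivial here but believed infeasible for moduli thousands of bits long.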

The Future: Quantum Computing and Breaking Expressions

The tech world is currently racing toward the era of Quantum Computing. The expressions behind today’s public-key encryption, including RSA, may become vulnerable to “Shor’s Algorithm,” a quantum algorithm that can factor large numbers efficiently on a sufficiently powerful quantum computer. As a result, researchers and tech giants are developing “Post-Quantum Cryptography,” which builds encryption on problems believed to be hard even for quantum computers, such as those from lattice-based mathematics. In the world of digital security, much of the battle is fought through the evolution of numerical expressions.

Conclusion: The Ubiquity of Math in Technology

While the question “what is a numerical expression in math” might seem like a basic inquiry into arithmetic, its implications in the tech industry are profound. Numerical expressions are the silent engines of our digital lives. They are the logic gatekeepers in our software, the architects of our AI, the optimizers of our big data, and the guardians of our digital privacy.

As we move further into a future dominated by automation and advanced computation, the ability to construct, interpret, and optimize these expressions remains the most valuable skill in the technological landscape. Whether you are a software developer, a data scientist, or a tech enthusiast, recognizing the power of the numerical expression is key to understanding how our modern world truly functions. From the simplest “1 + 1” to the most complex neural network weight, math remains the ultimate source code of innovation.
