In the rapidly evolving landscape of Artificial Intelligence (AI) and Machine Learning (ML), the ability of a computer to “see” and interpret the world is one of the most significant breakthroughs of the 21st century. At the heart of this visual revolution lies a deceptively simple geometric concept: the bounding box. Whether you are looking at a self-driving car navigating a busy intersection or a security camera identifying an intruder, bounding boxes are the invisible frameworks making those decisions possible.

A bounding box is an imaginary rectangle that serves as a point of reference for object detection and helps machine learning models categorize objects within an image or video frame. While it may look like a simple box drawn around a car or a person, it represents a complex bridge between raw pixel data and high-level cognitive understanding.

Understanding the Fundamentals: What Exactly is a Bounding Box?

To understand a bounding box, one must first understand the goal of computer vision. Computers do not see images as we do; they see a grid of numbers representing color and intensity. For an AI to identify an object—say, a bicycle—it needs to know exactly which pixels belong to that bicycle.

The Geometry of Annotation

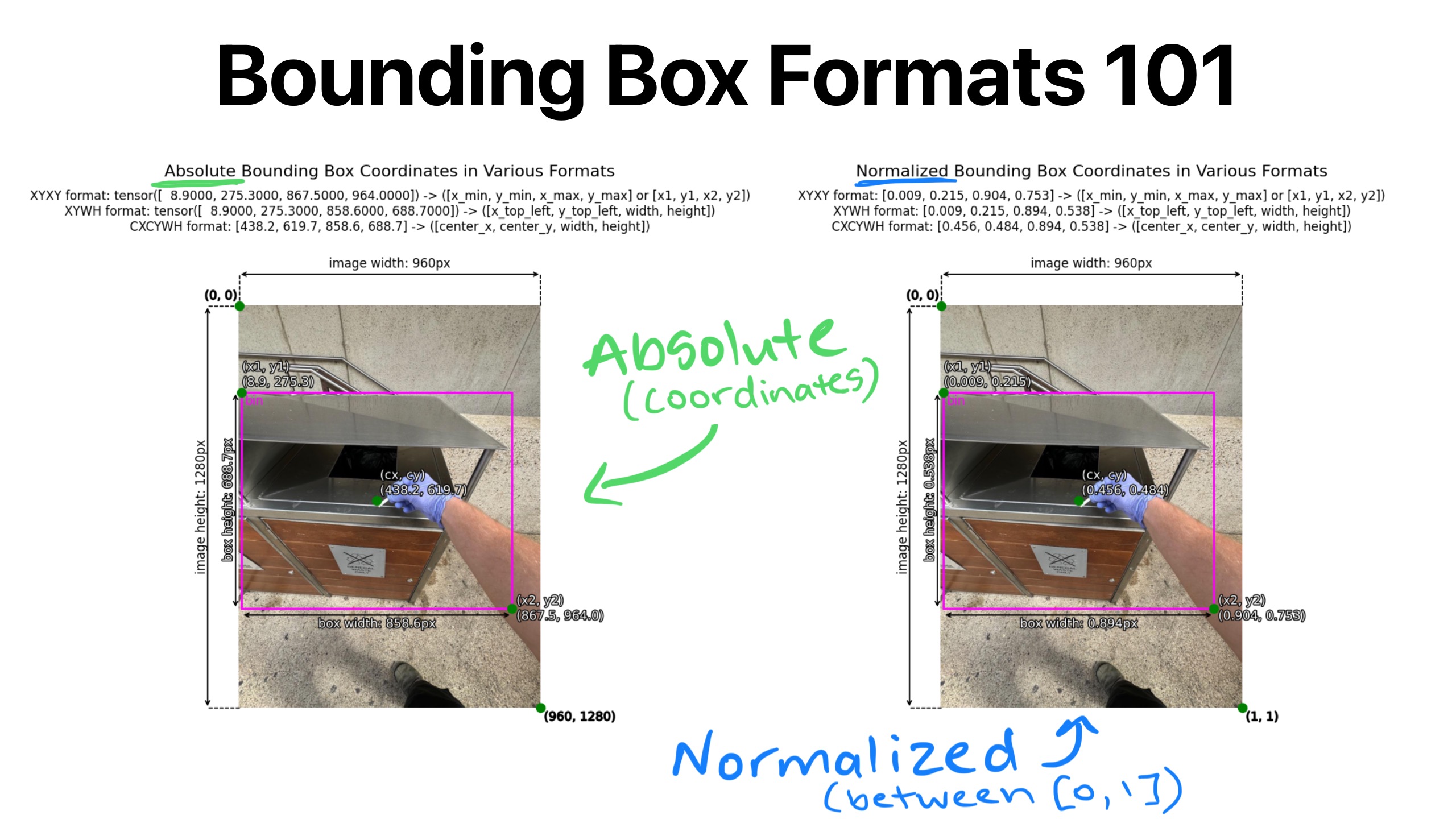

A bounding box is the simplest way to describe the spatial location of an object. In a 2D digital space, a bounding box is defined by its X and Y coordinates. Usually, this is represented in one of two ways:

- Min-Max Coordinates: Defining the top-left corner (x-min, y-min) and the bottom-right corner (x-max, y-max).

- Centroid Representation: Defining the center point of the object (x, y) along with its width and height.

By using these coordinates, a machine learning model can isolate a specific area of an image to analyze its contents. This process is the primary step in “image annotation,” where human or automated labelers draw these boxes to create training data for AI.

How Bounding Boxes Function in Data Labeling

Data labeling is the “teaching” phase of AI development. If you want a model to recognize a “stop sign,” you must show it thousands of images where stop signs are clearly enclosed in bounding boxes and labeled as such. Over time, the neural network learns the patterns—the red octagon, the white lettering—contained within those specific coordinates. The bounding box acts as a focus tool, telling the algorithm: “Ignore the sky, ignore the road; look only at what is inside this rectangle.”

The Role of Bounding Boxes in Machine Learning and AI Training

In the realm of supervised learning, bounding boxes serve as the “Ground Truth.” This term refers to the reality that we want the AI to recognize. Without accurate bounding boxes, a model would have no way to verify if its predictions are correct.

Training Object Detection Models

Object detection is a two-part challenge for a computer: classification (what is the object?) and localization (where is the object?). Bounding boxes solve the localization problem. During training, a model makes a “guess” about where an object is. It draws its own internal bounding box and compares it to the ground truth box provided by human annotators.

Through a process called backpropagation, the model adjusts its internal weights to minimize the difference between its predicted box and the actual box. Popular architectures like YOLO (You Only Look Once), SSD (Single Shot Detector), and Faster R-CNN rely heavily on these rectangular annotations to refine their spatial awareness.

Intersection over Union (IoU): Measuring Accuracy

How do researchers know if a bounding box is “good”? They use a metric called Intersection over Union (IoU). IoU measures the overlap between the predicted bounding box and the ground truth box.

- An IoU of 1.0 means the predicted box perfectly matches the human-annotated box.

- An IoU of 0 means there is no overlap at all.

Generally, a prediction is considered “successful” if the IoU is greater than 0.5. This mathematical rigor allows developers to quantify the progress of their AI tools and ensure that the software is becoming more precise over time.

Types of Bounding Boxes and Their Specific Use Cases

While the standard 2D rectangle is the most common, different technological challenges require different types of bounding boxes. The choice of box depends entirely on the complexity of the environment the AI is expected to operate in.

2D Bounding Boxes vs. 3D Cuboids

The standard 2D bounding box is ideal for flat images, such as those found in document processing or simple surveillance. However, in industries like robotics and autonomous driving, 2D isn’t enough. These systems need to understand depth, volume, and orientation.

This is where 3D bounding boxes, or “cuboids,” come into play. A cuboid adds a Z-axis to the coordinates, allowing the AI to perceive how much space an object occupies in a three-dimensional environment. This is critical for a self-driving car to determine not just that a truck is in front of it, but how far away that truck is and at what angle it is parked.

Rotated Bounding Boxes for Complex Orientations

Standard bounding boxes are “axis-aligned,” meaning their edges are always horizontal and vertical. But what if you are analyzing satellite imagery of ships in the ocean or aerial views of warehouses? Objects are rarely perfectly aligned with the camera’s axis.

Rotated bounding boxes allow the annotator to tilt the box to fit the object’s actual orientation. This significantly reduces the “noise” (extra background pixels) captured inside the box, providing the AI with much cleaner data for training.

Practical Applications: From Self-Driving Cars to Modern E-commerce

The application of bounding boxes extends far beyond theoretical research. They are currently powering some of the most disruptive technologies in the modern economy.

Autonomous Vehicles and Safety

For a self-driving car, every frame of video from its cameras must be processed in milliseconds. Bounding boxes are used to track pedestrians, cyclists, other vehicles, and traffic lights. By identifying these objects within boxes, the car’s onboard computer can predict their trajectories. If a bounding box labeled “Pedestrian” moves closer to the vehicle’s path, the AI triggers the braking system.

Medical Imaging and Diagnostics

In the healthcare tech sector, bounding boxes are literal life-savers. Radiologists use AI-assisted tools to scan X-rays, MRIs, and CT scans. Bounding boxes are used to highlight potential tumors, fractures, or anomalies. By training on thousands of annotated medical images, the AI can flag suspicious regions for a human doctor to review, significantly increasing the speed and accuracy of early diagnoses.

Visual Search in Retail

E-commerce giants use bounding boxes to power “visual search” features. When you take a photo of a pair of shoes and the app finds a matching product, a bounding box is working behind the scenes. The AI identifies the object in your photo, draws a box around it to isolate it from your living room floor, and then compares the features within that box to a massive database of products.

Challenges and Best Practices in Bounding Box Annotation

Despite their utility, bounding boxes are not perfect. The “quality” of an AI tool is directly tethered to the quality of the bounding boxes it was trained on.

Dealing with Occlusion and Truncation

In the real world, objects are rarely fully visible. A person might be standing behind a lamp post (occlusion), or a car might be half-way out of the camera frame (truncation).

- Occlusion: How should an annotator draw a box? Should they box only the visible parts or the “imagined” whole person? Consistency is key here; most tech standards require boxing the entire estimated area of the object to help the AI learn that objects can exist even when partially hidden.

- Truncation: If only the tail of a dog is visible at the edge of the frame, the bounding box must be labeled as “truncated” so the model doesn’t learn that a dog is simply a tail.

The Importance of Labeling Consistency

The biggest threat to AI performance is “noisy data.” If one human annotator draws a tight box around a car’s tires, but another leaves a wide margin of road around the car, the AI will become confused. High-performing tech companies invest heavily in strict annotation guidelines and multi-stage quality assurance (QA) to ensure that every bounding box is drawn with pixel-perfect precision.

As we look toward the future, we see the emergence of “instance segmentation,” where instead of boxes, we use pixel-perfect outlines. However, because bounding boxes are computationally “cheap” and easy to produce at scale, they remain the industry standard for the vast majority of AI applications. They are the scaffolding upon which the future of intelligent machines is being built, providing the necessary structure for software to navigate, understand, and interact with our physical world.

aViewFromTheCave is a participant in the Amazon Services LLC Associates Program, an affiliate advertising program designed to provide a means for sites to earn advertising fees by advertising and linking to Amazon.com. Amazon, the Amazon logo, AmazonSupply, and the AmazonSupply logo are trademarks of Amazon.com, Inc. or its affiliates. As an Amazon Associate we earn affiliate commissions from qualifying purchases.