In the rapidly evolving landscape of modern technology, the phrase “I see” has transitioned from a simple human affirmation of understanding into a complex technical milestone. When we ask, “What does ‘I see’ mean?” in a digital context, we are no longer discussing human philosophy; we are exploring the frontiers of Computer Vision (CV), Machine Learning (ML), and the sophisticated software architectures that allow silicon and code to interpret the physical world.

This transformation of “seeing” from a biological process to a computational one represents one of the most significant shifts in the tech industry. For a machine to “see” is to process, analyze, and categorize visual data in a way that generates actionable insights. Understanding this process is essential for anyone navigating the current trends in AI tools, software development, and digital innovation.

Decoding Machine Vision: How Computers “See” the World

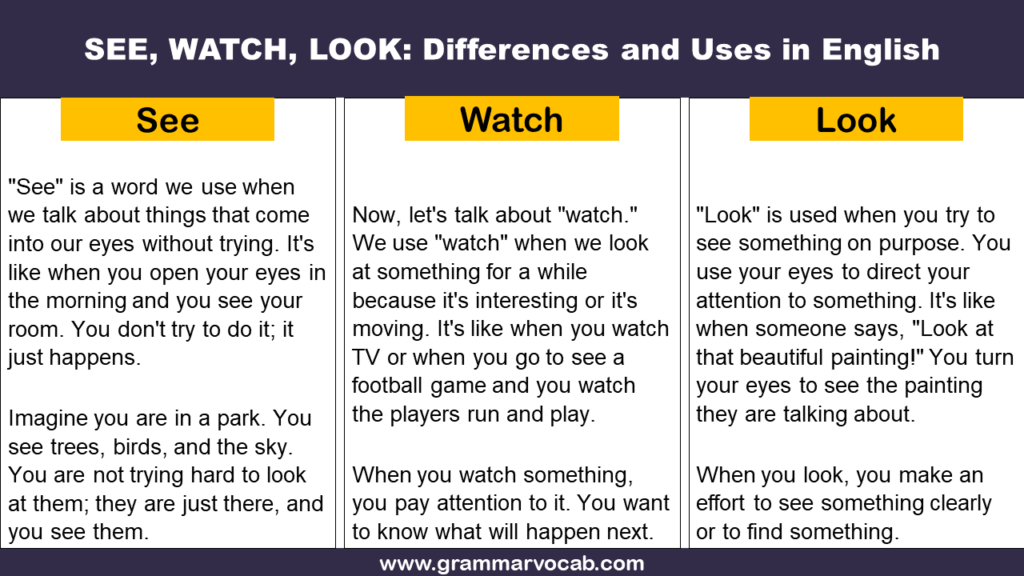

To understand what “I see” means for a computer, one must first dismantle the illusion that a camera works like a human eye. In a person, the eye’s lens focuses light onto the retina and the brain’s biological neural network interprets the resulting signals; a computer, by contrast, receives the world as a massive grid of numerical values.

From Pixels to Patterns: The Basics of Image Processing

At its most fundamental level, a computer’s visual “sight” is a mathematical operation. Every digital image is composed of pixels, and each pixel is represented by a set of numbers giving its color intensity, typically one value each for red, green, and blue (RGB). When software says it “sees” an object, it means it has found a pattern in these numbers that it associates with that object.
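To make the “grid of numbers” idea concrete, here is a minimal Python sketch that stores a tiny 2×2 RGB image as nested lists and converts each pixel to a single brightness value. The weights (0.299, 0.587, 0.114) are the ITU-R BT.601 luma coefficients, one common convention among several; the function name `to_grayscale` is illustrative.

```python
# A 2x2 RGB image: each pixel is an (R, G, B) tuple with values 0-255.
image = [
    [(255, 0, 0), (0, 255, 0)],      # red, green
    [(0, 0, 255), (255, 255, 255)],  # blue, white
]

def to_grayscale(rgb_image):
    """Convert a grid of RGB pixels to a grid of brightness values (0-255).

    Uses the ITU-R BT.601 luma weights, one common convention.
    """
    return [
        [round(0.299 * r + 0.587 * g + 0.114 * b) for (r, g, b) in row]
        for row in rgb_image
    ]

gray = to_grayscale(image)
print(gray)  # [[76, 150], [29, 255]]
```

To the computer, the “red pixel” is nothing more than the number 76 in this grayscale grid; every higher-level notion of seeing is built on arithmetic over values like these.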

In early computer vision systems, this was done through “hard-coded” rules. Developers would write code telling the computer to look for specific shapes or edges. Modern systems have moved far beyond this: today, “seeing” involves learning features such as textures, gradients, and spatial relationships directly from data, because they are far too complex to specify by hand.
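A hard-coded rule of the older kind might look like the sketch below: mark a spot as a vertical edge whenever brightness jumps sharply between horizontally adjacent pixels. The threshold of 50 and the function name `vertical_edges` are arbitrary choices for illustration, not taken from any particular system.

```python
def vertical_edges(gray, threshold=50):
    """Hand-coded rule: True wherever brightness jumps by more than
    `threshold` between a pixel and its right-hand neighbour."""
    edges = []
    for row in gray:
        edges.append([
            abs(row[x + 1] - row[x]) > threshold
            for x in range(len(row) - 1)
        ])
    return edges

# A dark region on the left meets a bright region on the right.
gray = [
    [10, 12, 200, 205],
    [11, 13, 198, 202],
]
print(vertical_edges(gray))  # [[False, True, False], [False, True, False]]
```

The rule works on this clean example, but a fixed threshold breaks down under shadows, textures, and changing lighting, which is one reason learned features replaced rules like it.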

The Role of Neural Networks in Visual Interpretation

The true breakthrough in digital perception came with the advent of Convolutional Neural Networks (CNNs). These are a class of deep neural networks specifically designed to process grid-like data such as pixels. When an AI model “sees,” it passes an image through a stack of layers, each of which slides small learned filters across its input.
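The core operation inside a convolutional layer is a small kernel sliding across the image and computing a weighted sum at each position, usually followed by a nonlinearity such as ReLU. The sketch below implements a single “valid” convolution (no padding, stride 1) and ReLU in plain Python. Like most deep learning libraries, it actually computes cross-correlation (the kernel is not flipped). In a real CNN the kernel weights are learned during training; here they are fixed by hand to a vertical-edge pattern purely for illustration.

```python
def conv2d(image, kernel):
    """'Valid' 2D convolution (cross-correlation, as in most DL libraries)."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    out = []
    for y in range(out_h):
        row = []
        for x in range(out_w):
            # Weighted sum of the kh x kw patch under the kernel.
            total = 0
            for i in range(kh):
                for j in range(kw):
                    total += image[y + i][x + j] * kernel[i][j]
            row.append(total)
        out.append(row)
    return out

def relu(feature_map):
    """Replace negative responses with zero."""
    return [[max(0, v) for v in row] for row in feature_map]

# Hand-set (not learned) kernel: responds positively to dark-to-bright edges.
kernel = [
    [-1, 0, 1],
    [-1, 0, 1],
    [-1, 0, 1],
]
# A bright vertical stripe: dark-to-bright on its left, bright-to-dark on its right.
image = [
    [0, 9, 9, 0, 0],
    [0, 9, 9, 0, 0],
    [0, 9, 9, 0, 0],
]
print(relu(conv2d(image, kernel)))  # [[27, 0, 0]]
```

Before ReLU the responses are `[[27, -27, -27]]`; ReLU keeps only the dark-to-bright edge. Stacking many such learned filters, layer after layer, is what lets a CNN build up from edges to shapes to objects.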

The initial layers might detect simple patterns such as horizontal or vertical edges. Deeper layers combine those edges into shapes like circles or corners. Even deeper layers begin to respond to specific features such as a nose, a wheel, or a leaf. By the time the data reaches the final layer, the software can assign a high probability to a single label and, in effect, conclude “I see a Golden Retriever.” This isn’t “seeing” in the conscious sense; it is an impressive feat of statistical pattern matching.
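The “probability” at the end of an image classifier typically comes from a softmax function, which turns the final layer’s raw scores (logits) into positive values that sum to 1. The labels and scores below are made up for illustration.

```python
import math

def softmax(logits):
    """Convert raw scores into a probability distribution."""
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

labels = ["Golden Retriever", "Labrador", "cat"]
logits = [4.0, 2.5, 0.5]  # hypothetical final-layer scores
probs = softmax(logits)
best = max(range(len(labels)), key=lambda i: probs[i])
print(labels[best], round(probs[best], 2))  # Golden Retriever 0.8
```

A score of about 0.8 expresses the model’s confidence relative to the classes it was trained on; it is not a guarantee, and raw softmax outputs are not always well calibrated.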

Practical Applications: Where “I See” Becomes a Tool

The phrase “I see” takes on its most practical meaning when applied to software and gadgets that interact with our daily lives. From the smartphone in your pocket to the autonomous vehicles on the road, computer vision is the engine driving the next generation of digital tools.

Assistive Technology for the Visually Impaired

One of the most profound applications of computer vision is in the realm of accessibility. Apps like Microsoft’s “Seeing AI” or Google’s “Lookout” use the smartphone camera to narrate the world for users who are blind or have low vision.

In this niche, “I see” means translation. The software identifies a currency note, reads a restaurant menu out loud, or describes the facial expressions of a person standing in front of the user. Here, the technology acts as a bridge, converting visual data into auditory information, effectively redefining what it means to “see” through the lens of software.

Autonomous Systems and Robotic Navigation

In the world of hardware and gadgets, “I see” is the foundational requirement for autonomy. For a self-driving car or a delivery drone to operate safely, it needs a detailed, often 360-degree, understanding of its environment. This is typically achieved through a combination of cameras, LiDAR (Light Detection and Ranging), and radar, although some systems rely mainly on cameras.
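Pulsed time-of-flight LiDAR, a common design, measures distance by timing a laser pulse’s round trip: the range is the speed of light multiplied by the elapsed time, divided by two because the light travels out and back. A minimal sketch, with an illustrative function name:

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458  # exact, by SI definition

def lidar_range_m(round_trip_s):
    """Distance (metres) to a target from a pulse's round-trip time (seconds)."""
    return SPEED_OF_LIGHT_M_PER_S * round_trip_s / 2

# A return after 200 nanoseconds corresponds to a target about 30 m away.
print(round(lidar_range_m(200e-9), 2))  # 29.98
```

The tiny time scales involved (nanoseconds per few metres) are why LiDAR depends on very precise timing hardware.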

In this context, “I see” translates to spatial awareness. The vehicle is not just identifying a “pedestrian” or a “stop sign”; it is calculating distances, predicting how objects will move, and identifying “drivable space.” This real-time processing requires substantial computational power and edge computing, where the “seeing” happens locally on the device rather than on a distant cloud server, so split-second decisions are not delayed by a network round trip.
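Predicting movement can start from a simple constant-velocity assumption: estimate an object’s velocity from two successive positions, then extrapolate. Production systems use far richer models (for example, Kalman filters and learned trajectory predictors); this sketch, with hypothetical function names and made-up coordinates in metres in the vehicle’s frame, only shows the basic idea.

```python
def estimate_velocity(p_prev, p_curr, dt):
    """Velocity (m/s) from two positions observed dt seconds apart."""
    return ((p_curr[0] - p_prev[0]) / dt, (p_curr[1] - p_prev[1]) / dt)

def predict_position(p, v, t):
    """Constant-velocity extrapolation t seconds into the future."""
    return (p[0] + v[0] * t, p[1] + v[1] * t)

# Hypothetical pedestrian track: x = metres ahead, y = metres to the left.
prev, curr = (20.0, 3.0), (20.0, 2.75)
v = estimate_velocity(prev, curr, dt=0.25)   # walking toward the lane centre
future = predict_position(curr, v, t=2.0)
print(v, future)  # (0.0, -1.0) (20.0, 0.75)
```

In two seconds the pedestrian is predicted to be almost directly ahead of the vehicle, which is exactly the kind of inference a planner needs before the pedestrian actually arrives there.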

The Evolution of Semantic Understanding

The tech industry is now shifting the definition of “I see” from simple identification to “semantic understanding.” It is no longer enough for an AI tool to identify a cat; the next frontier is understanding what the cat is doing and why it matters in the context of the image.

Beyond Identification: Contextual Recognition

Contextual recognition is the difference between a security camera identifying a “person” and a smart security system identifying “a person attempting to pick a lock.” The latter requires a temporal understanding—analyzing a sequence of frames to interpret intent.
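One simple way to move from single-frame labels toward a temporal judgment is a sliding-window vote: raise an alert only when a per-frame detector reports the same event in most of the recent frames, so a single spurious frame does not trigger it. Real activity-recognition systems typically use models that consume whole frame sequences; the label strings, window size, and threshold here are hypothetical.

```python
from collections import deque

def make_event_detector(target, window=5, min_hits=4):
    """Return an update function that ingests one per-frame label at a time
    and reports whether `target` appeared in at least `min_hits` of the
    last `window` frames."""
    recent = deque(maxlen=window)  # oldest frames fall off automatically

    def update(frame_label):
        recent.append(frame_label == target)
        return sum(recent) >= min_hits

    return update

detect = make_event_detector("hands_at_lock")  # hypothetical label
frames = ["person", "hands_at_lock", "hands_at_lock", "person",
          "hands_at_lock", "hands_at_lock", "hands_at_lock"]
alerts = [detect(label) for label in frames]
print(alerts)  # [False, False, False, False, False, True, True]
```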

Modern software can use “scene graphs” to map out the relationships between objects. If a computer “sees” a plate, a fork, and a napkin on a table, it can infer the context of “dining.” This kind of object-level understanding helps power visual search tools like Google Lens or Pinterest Lens, which let users search for “outfits similar to this” or “where to buy this chair,” turning raw visual data into commercial and functional utility.
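A scene graph can be stored as (subject, relation, object) triples. The sketch below infers a “dining” context with a hand-written rule; the triples, relation names, and rules are hypothetical, and practical systems generally learn both the graph and the inference rather than hard-coding them.

```python
# Hypothetical detections from one image, as (subject, relation, object) triples.
scene_graph = [
    ("plate", "on", "table"),
    ("fork", "next_to", "plate"),
    ("napkin", "on", "table"),
]

# Hypothetical rules: a context applies when all of its cue objects are present.
CONTEXT_RULES = {
    "dining": {"plate", "fork", "napkin"},
    "office_work": {"laptop", "keyboard", "desk"},
}

def objects_in(graph):
    """Collect every object appearing as the subject or object of a relation."""
    found = set()
    for subject, _relation, obj in graph:
        found.update((subject, obj))
    return found

def infer_contexts(graph):
    present = objects_in(graph)
    return [name for name, cues in CONTEXT_RULES.items() if cues <= present]

print(infer_contexts(scene_graph))  # ['dining']
```

This rule only checks which objects are present; a richer rule could also use the relations themselves, for example requiring the plate to be on the table.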

Multimodal Models: Combining Sight and Language

The most recent trend in AI—led by models like GPT-4o and Gemini—is multimodality. In these systems, “I see” is integrated with “I speak” and “I think.” A user can show a picture of a broken bicycle derailleur to an AI tool and ask, “How do I fix this?”

The AI must recognize the part, identify the bike and component where possible, understand the mechanical problem depicted, and then generate a step-by-step text or voice tutorial. This fusion of computer vision and large language models (LLMs) represents the current state of the art in machine perception. “I see” has become the input for a complex reasoning engine.
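In practice, a multimodal request simply bundles the image and the question into one message. The request body below follows the message format documented for OpenAI’s Chat Completions API (a text part plus an `image_url` part carrying a base64 data URL); treat it as a sketch and check the current API reference before relying on it. The helper name `build_vision_request` is hypothetical, the image bytes are a placeholder, and nothing is sent over the network.

```python
import base64

def build_vision_request(image_bytes, question, model="gpt-4o"):
    """Build a Chat Completions-style request body pairing an image with a question."""
    encoded = base64.b64encode(image_bytes).decode("ascii")
    return {
        "model": model,
        "messages": [
            {
                "role": "user",
                "content": [
                    {"type": "text", "text": question},
                    {
                        "type": "image_url",
                        "image_url": {"url": f"data:image/jpeg;base64,{encoded}"},
                    },
                ],
            }
        ],
    }

# Placeholder bytes stand in for a real photo of the derailleur.
body = build_vision_request(b"placeholder-jpeg-bytes", "How do I fix this?")
print([part["type"] for part in body["messages"][0]["content"]])  # ['text', 'image_url']
```

Sending the body requires an authenticated HTTP POST to the provider’s endpoint; that step is omitted here.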

The Challenges and Ethics of Digital Perception

While the technological strides are impressive, the phrase “I see” also brings with it significant challenges regarding digital security, privacy, and algorithmic integrity. As we grant machines the power to observe, we must also consider the implications of that observation.

Addressing Bias in Visual Algorithms

One of the most critical issues in the tech world today is algorithmic bias. Because machine vision models are trained on datasets curated by humans, they often inherit human prejudices and gaps in coverage. If facial recognition software is trained primarily on one demographic, its ability to “see” and identify individuals from other demographics can be significantly degraded.

In the tech sector, “seeing” correctly requires diverse data. Engineers and data scientists are currently focused on “de-biasing” these models to ensure that when a software claims “I see,” it is doing so with accuracy and equity across all human populations.
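Checking whether a model “sees” equally well for everyone usually starts with disaggregated evaluation: computing accuracy separately for each group instead of reporting one overall number. The records below are toy data, not real results, and the group names are placeholders.

```python
from collections import defaultdict

def accuracy_by_group(records):
    """records: iterable of (group, predicted_id, true_id) tuples."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for group, predicted, actual in records:
        total[group] += 1
        correct[group] += int(predicted == actual)
    return {g: correct[g] / total[g] for g in total}

# Toy evaluation results (not real data).
records = [
    ("group_a", "id1", "id1"), ("group_a", "id2", "id2"),
    ("group_a", "id3", "id3"), ("group_a", "id4", "id5"),
    ("group_b", "id6", "id6"), ("group_b", "id7", "id8"),
    ("group_b", "id9", "id1"), ("group_b", "id2", "id2"),
]
scores = accuracy_by_group(records)
gap = max(scores.values()) - min(scores.values())
print(scores, gap)  # {'group_a': 0.75, 'group_b': 0.5} 0.25
```

An overall accuracy of 62.5% on this toy data would hide the fact that one group is served noticeably worse; the per-group gap is what de-biasing work aims to shrink.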

Privacy and the Data Used for Training

Every time a device “sees,” there is a question of where that data goes. Digital security is a paramount concern for users of smart home cameras, AR glasses, and photo-storage apps. The “I see” of a modern gadget is often backed by cloud infrastructure that stores and analyzes visual data and may use it to improve the model.

The tech industry is currently grappling with the balance between functionality and privacy. “On-device AI” is gaining traction as one answer: the visual processing happens locally, so the images need not leave the user’s device. This substantially reduces privacy exposure, since the device can still “see” and provide services without sending the user’s visual life to a remote server.

The Future of Digital Sight: Spatial Computing

Looking forward, the definition of “I see” will be further expanded by the rise of Spatial Computing and Augmented Reality (AR). Devices like the Apple Vision Pro or Meta Quest 3 are transforming the screen from a flat surface into an immersive environment.

In the realm of spatial computing, “I see” means the integration of the digital and the physical. The software must see the walls of your room, the surface of your desk, and the movement of your hands to overlay digital information accurately onto the real world. This requires a level of visual precision and low-latency processing that was out of reach for consumer devices a decade ago.
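At its core, anchoring a virtual object to the room means projecting a 3D point, expressed in the camera’s coordinate frame, onto 2D pixel coordinates. A minimal pinhole-camera sketch follows; the focal lengths and principal point are made-up intrinsics, the axis convention (x right, y down, z forward) is the common one used by OpenCV, and real headsets additionally handle lens distortion, head tracking, and per-eye rendering.

```python
def project_point(point_3d, fx, fy, cx, cy):
    """Project a 3D point (X, Y, Z) in camera coordinates (metres;
    x right, y down, z forward) to pixel coordinates (u, v)
    using the pinhole camera model."""
    x, y, z = point_3d
    if z <= 0:
        raise ValueError("point is behind the camera")
    u = fx * x / z + cx
    v = fy * y / z + cy
    return (u, v)

# Hypothetical intrinsics for a 1280x720 camera.
fx = fy = 800.0
cx, cy = 640.0, 360.0

# A virtual label anchored 0.5 m to the right and 2 m in front of the camera.
print(project_point((0.5, 0.0, 2.0), fx, fy, cx, cy))  # (840.0, 360.0)
```

Because the projection divides by depth, small errors in estimating where the wall or desk actually is translate directly into virtual content that drifts or “swims,” which is why precision and latency matter so much.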

Ultimately, what does “I see” mean in the world of technology? It means the successful bridge between raw physical light and digital logic. It is the process of turning pixels into data, data into information, and information into action. Whether it is helping a blind user navigate a city, allowing a car to drive itself, or enabling a professional to manipulate 3D models in mid-air, the ability for technology to “see” is the cornerstone of the modern digital revolution.

aViewFromTheCave is a participant in the Amazon Services LLC Associates Program, an affiliate advertising program designed to provide a means for sites to earn advertising fees by advertising and linking to Amazon.com. Amazon, the Amazon logo, AmazonSupply, and the AmazonSupply logo are trademarks of Amazon.com, Inc. or its affiliates. As an Amazon Associate we earn affiliate commissions from qualifying purchases.