The term “disparate impact” has migrated from the courtrooms of civil rights law into the server rooms of Silicon Valley. As we lean more heavily on automated systems, machine learning models, and complex algorithms to manage everything from recruitment to cybersecurity, the risk of unintentional discrimination grows with every decision we hand over to them. For tech leaders, developers, and digital strategists, understanding disparate impact is no longer just a legal necessity; it is a fundamental component of ethical software design and sustainable technological innovation.

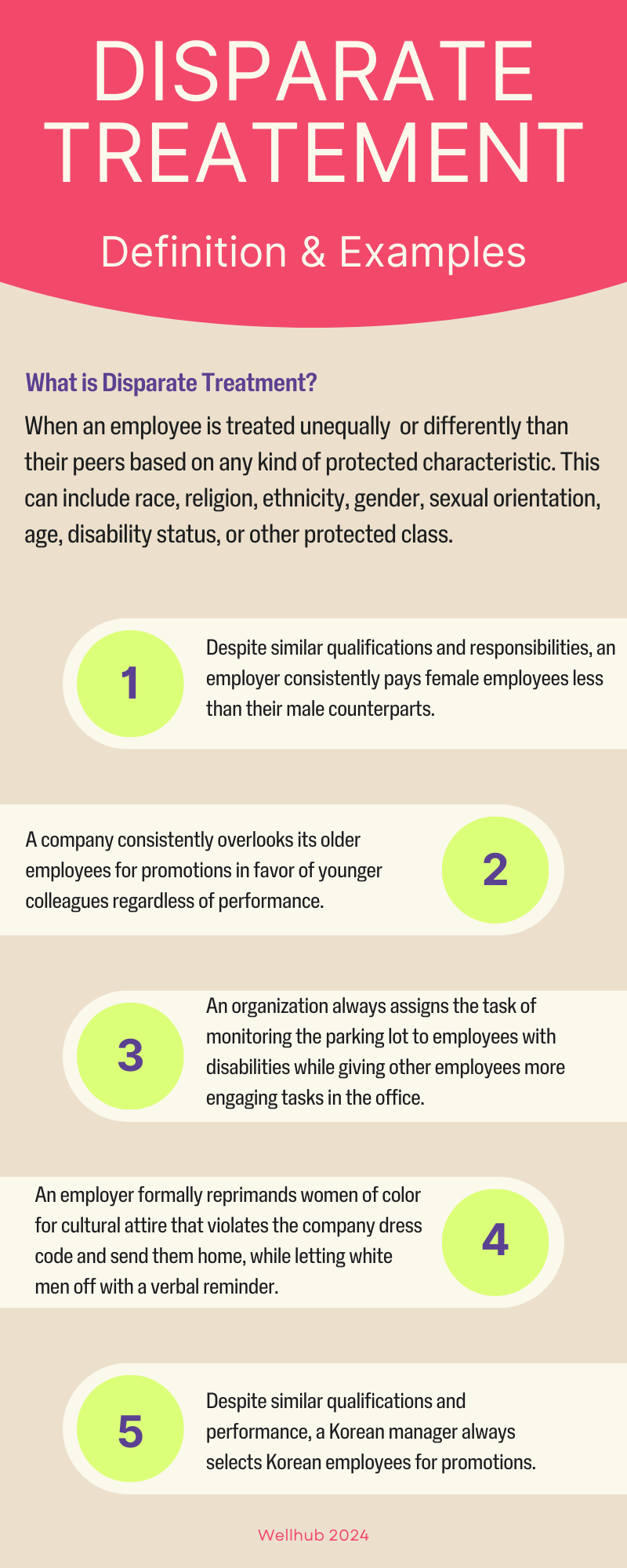

At its core, disparate impact refers to a situation where a practice, policy, or (in our case) an algorithm appears neutral on the surface but has a disproportionately negative effect on a group of people defined by a protected characteristic such as race, gender, or age. In the tech sector, it is usually discussed under the broader heading of “algorithmic bias.” Unlike “disparate treatment,” which involves intentional discrimination, disparate impact is often an accidental byproduct of data sets, coding priorities, or historical biases embedded in the training data.

The Technological Evolution of Disparate Impact

To understand why disparate impact is a critical tech issue, we must first look at how it manifests within digital ecosystems. We have moved beyond simple “if-then” logic into the realm of black-box neural networks, where the path from input to output is not always transparent.

From Legal Theory to Algorithmic Reality

Historically, disparate impact was used to challenge employment tests or housing policies that unfairly excluded certain demographics. In the 21st century, the “test” is often an automated screening tool. When a software developer builds a recommendation engine, they are essentially creating a digital filter. If that filter is designed using historical data that reflects past societal inequities, the software will tend to replicate those inequities unless someone actively corrects for them. This shift from manual to digital decision-making means that bias can now be scaled at the speed of the cloud, affecting millions of users simultaneously.

The Hidden Nature of “Neutral” Code

Code is often viewed as objective because it relies on mathematics. However, algorithms are built by humans and trained on human-generated data. When a developer creates a feature for a software application, they might not explicitly include “gender” as a variable. Yet, the system might learn to associate certain behaviors or preferences with gender, eventually leading to a disparate impact. This “neutral” code becomes a mask for underlying systemic issues, making it difficult for users and even the creators to identify where the bias began.

Why the Tech Sector Faces Unique Risks

The tech industry is uniquely vulnerable to disparate impact because of the iterative nature of software development. Agile methodologies prioritize speed and deployment, sometimes at the expense of comprehensive bias auditing. Furthermore, the global reach of digital tools means that a minor bias in a social media algorithm or a credit-scoring API can have profound socioeconomic consequences across different cultures and jurisdictions. Tech companies are now finding themselves in a position where they must act as both innovators and social regulators.

How Data Bias Fuels Disparate Impact in Software

The engine behind most modern software is data. If the data is flawed, the output—and the resulting impact on the user base—will be flawed as well. Understanding the mechanics of data bias is the first step toward mitigating disparate impact.

The “Garbage In, Garbage Out” Problem

In machine learning, the quality of the training data determines the efficacy of the model. If a tech company develops an AI tool to predict employee performance but trains it on a decade of data from a male-dominated industry, the AI will likely “conclude” that masculine traits are indicators of success. This results in a disparate impact against female candidates. Even though the software was never told to prefer men, it learned to do so because the “garbage” (biased historical data) it was fed dictated its logic.

Proxy Variables: When Metadata Masks Identity

One of the most complex challenges in digital security and data science is the “proxy variable.” Even if a dataset is scrubbed of sensitive information like race or religion, other data points can act as proxies. For instance, a user’s zip code, browsing history, or even their choice of smartphone can be highly correlated with their socioeconomic status or ethnic background. If an automated insurance app uses zip codes to determine premiums, it may unintentionally create a disparate impact by charging higher rates to minority communities, effectively digitizing the practice of redlining.
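One rough way to check whether a "neutral" field is acting as a proxy is to ask how much easier it becomes to guess the protected attribute once that field is known. The sketch below does this with plain counting. The function name `proxy_strength` and the records are hypothetical and made up for illustration; a real audit would use a proper statistical test on real data.

```python
from collections import Counter, defaultdict

def proxy_strength(feature_values, protected_values):
    """Return (baseline_accuracy, accuracy_given_feature).

    baseline: accuracy of always guessing the most common protected value.
    given feature: accuracy of guessing the most common protected value
    within each feature value. A large gap hints that the feature is a proxy.
    """
    n = len(feature_values)
    baseline = Counter(protected_values).most_common(1)[0][1] / n

    by_feature = defaultdict(Counter)
    for f, p in zip(feature_values, protected_values):
        by_feature[f][p] += 1
    correct = sum(c.most_common(1)[0][1] for c in by_feature.values())
    return baseline, correct / n

# Hypothetical, made-up records: zip code and demographic group label
zip_codes = ["10001", "10001", "10001", "10001",
             "20002", "20002", "20002", "20002"]
groups = ["A", "A", "A", "B", "B", "B", "B", "A"]

baseline, with_zip = proxy_strength(zip_codes, groups)
print(f"baseline={baseline:.2f}, knowing zip={with_zip:.2f}")
# prints: baseline=0.50, knowing zip=0.75
```

In this toy data, knowing the zip code lifts the guess from a coin flip to 75% accuracy, which is the kind of gap that would justify a closer look before the field is used for pricing.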

Case Study: Automated Recruitment and Hiring Tools

Many HR tech platforms use AI to scan thousands of resumes in seconds. In one high-profile case, a major tech corporation realized its recruiting tool was penalizing resumes that included the word “women’s” (as in “women’s chess club captain”). The algorithm had observed that most successful hires in the company’s history were men and thus deduced that “women’s” was a negative indicator. This is a textbook example of disparate impact in tech: a tool designed to increase efficiency ended up narrowing the talent pool through unintended discrimination.
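The mechanism is easy to reproduce in miniature. The sketch below is not the tool from the case above; it is a deliberately naive scorer, with hypothetical data and a hypothetical function name `token_log_odds`, that learns a weight for each resume token from past hiring outcomes. Because the made-up history is skewed, the token “women's” ends up with a negative weight even though gender is never an input.

```python
import math
from collections import Counter

def token_log_odds(resumes, smoothing=1.0):
    """Score each token by the log-odds of appearing in hired vs. rejected resumes."""
    hired, rejected = Counter(), Counter()
    n_hired = n_rejected = 0
    for tokens, was_hired in resumes:
        if was_hired:
            n_hired += 1
            hired.update(set(tokens))      # count each token once per resume
        else:
            n_rejected += 1
            rejected.update(set(tokens))

    scores = {}
    for tok in set(hired) | set(rejected):
        # Add-one style smoothing keeps the ratio finite for unseen tokens
        p_hired = (hired[tok] + smoothing) / (n_hired + 2 * smoothing)
        p_rejected = (rejected[tok] + smoothing) / (n_rejected + 2 * smoothing)
        scores[tok] = math.log(p_hired / p_rejected)
    return scores

# Hypothetical, made-up hiring history: (resume tokens, was hired?)
history = [
    (["python", "chess"], True),
    (["python", "captain"], True),
    (["java", "chess"], True),
    (["python", "women's", "chess"], False),
    (["java", "women's", "captain"], False),
    (["java"], False),
]

scores = token_log_odds(history)
for tok, s in sorted(scores.items(), key=lambda kv: kv[1]):
    print(f"{tok:10s} {s:+.2f}")
```

The scorer has no notion of gender; it only sees that one token co-occurs with rejections in the history it was given.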

Measuring and Mitigating Algorithmic Bias

Recognizing the problem is only half the battle. The tech industry requires rigorous, quantitative methods to measure disparate impact and robust frameworks to mitigate it.

Quantitative Metrics for Fairness (The Four-Fifths Rule)

The tech world is beginning to adopt legal metrics to evaluate software fairness. One such metric is the “Four-Fifths Rule,” a rule of thumb from U.S. employment-selection guidelines: if the selection rate for any group is less than 80% of the rate for the group with the highest selection rate, that gap is generally treated as evidence of disparate impact. It is a screening heuristic rather than a definitive legal test, so a ratio below 0.8 is a signal to investigate, not a verdict. Developers can integrate these calculations directly into their testing environments, so that every software update is audited for demographic parity before it goes live.
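As a minimal sketch of how such a check might sit in a test suite, the functions below compute selection rates per group and flag any group whose rate falls below four-fifths of the highest rate. The function names, group labels, and counts are all hypothetical.

```python
def selection_rates(outcomes):
    """outcomes maps group -> (number selected, number of applicants)."""
    return {group: selected / total for group, (selected, total) in outcomes.items()}

def impact_ratios(outcomes):
    """Each group's selection rate divided by the highest group's rate."""
    rates = selection_rates(outcomes)
    best = max(rates.values())
    if best == 0:
        # Nobody was selected in any group, so there is no gap to measure
        return {group: 1.0 for group in rates}
    return {group: rate / best for group, rate in rates.items()}

def flag_four_fifths(outcomes, threshold=0.8):
    """Return the groups whose impact ratio falls below the threshold."""
    return [g for g, ratio in impact_ratios(outcomes).items() if ratio < threshold]

# Hypothetical screening results: (selected, applicants) per group
outcomes = {"group_a": (48, 80), "group_b": (12, 40)}

print(selection_rates(outcomes))   # group_a: 0.6, group_b: 0.3
print(flag_four_fifths(outcomes))  # ['group_b']
```

Here group_b is selected at half the rate of group_a (0.3 / 0.6 = 0.5), so it is flagged. A check like this could fail a build or open a review ticket, depending on how strict the team wants the gate to be.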

Adversarial Testing and Bias Audits

Just as digital security teams use “red teaming” to find vulnerabilities in a firewall, AI ethics teams are now using adversarial testing to find biases. This involves intentionally trying to trick the algorithm into making a biased decision. By stress-testing the software with diverse edge cases, developers can identify hidden disparate impacts that wouldn’t be visible in a standard QA (Quality Assurance) process. Bias audits are becoming a standard part of the software development lifecycle (SDLC) for major platforms.
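One simple form of adversarial testing is a counterfactual swap: change only a demographic cue in the input and see whether the score moves. The sketch below assumes a text-scoring model; `toy_score` is a hypothetical stand-in for the system under test, and the swap list and tolerance are illustrative choices.

```python
def swap_tokens(text, original, replacement):
    """Replace whole tokens equal to `original` with `replacement`."""
    return " ".join(replacement if tok == original else tok for tok in text.split())

def counterfactual_gaps(score_fn, texts, swaps, tolerance=0.0):
    """Report inputs whose score shifts by more than `tolerance` after a swap."""
    findings = []
    for text in texts:
        for original, replacement in swaps:
            variant = swap_tokens(text, original, replacement)
            if variant == text:
                continue  # the term does not occur in this input
            gap = score_fn(variant) - score_fn(text)
            if abs(gap) > tolerance:
                findings.append((text, variant, gap))
    return findings

def toy_score(text):
    """Hypothetical biased model, used only to exercise the test harness."""
    tokens = text.split()
    score = 0.5
    if "captain" in tokens:
        score += 0.2
    if "women's" in tokens:
        score -= 0.3
    return score

texts = ["women's chess club captain", "robotics team captain"]
swaps = [("women's", "men's")]

findings = counterfactual_gaps(toy_score, texts, swaps, tolerance=0.05)
for original, variant, gap in findings:
    print(f"{original!r} -> {variant!r}: score change {gap:+.2f}")
```

Only the first input is reported, because it is the only one where a swap applies, and its score rises once the gendered term is replaced. A clean model would produce an empty list.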

Human-in-the-Loop: Restoring Accountability to Automated Systems

To combat the cold logic of an algorithm, many tech firms are implementing “human-in-the-loop” (HITL) systems. This approach ensures that while technology does the heavy lifting, final decisions—especially those affecting human livelihoods—are reviewed by diverse human teams. This adds a layer of empathy and context that code currently lacks. In the context of digital security, HITL can prevent automated fraud detection systems from unfairly flagging users based on unconventional but legitimate behavior patterns.
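A human-in-the-loop policy can be as simple as a routing rule: the model may finalize clearly favorable outcomes, while anything adverse or uncertain goes to a reviewer. The sketch below illustrates that idea for a fraud score. The thresholds, field names, and the `route` function are assumptions made for this example, not a recommended configuration.

```python
from dataclasses import dataclass

@dataclass
class Decision:
    subject_id: str
    outcome: str            # "approve" or "needs_review"
    decided_by: str         # "model" or "human_queue"
    priority: str = "none"  # review priority for queued cases

def route(subject_id, fraud_probability, auto_approve_below=0.2, urgent_above=0.9):
    """Let the model approve clear cases; never let it finalize an adverse outcome."""
    if not 0.0 <= fraud_probability <= 1.0:
        raise ValueError("fraud_probability must be between 0 and 1")
    if fraud_probability < auto_approve_below:
        return Decision(subject_id, "approve", "model")
    # Ambiguous and high-risk cases both go to people; only the priority differs
    priority = "urgent" if fraud_probability >= urgent_above else "normal"
    return Decision(subject_id, "needs_review", "human_queue", priority)

# Hypothetical users and model scores
for user, p in [("user-1", 0.05), ("user-2", 0.55), ("user-3", 0.97)]:
    print(route(user, p))
```

The design choice worth noting is the asymmetry: automation is allowed to say yes on its own, but a flag that could lock someone out always passes through a human.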

Navigating the Regulatory Landscape of Digital Equity

As disparate impact becomes a central theme in technology, governments around the world are moving to codify digital fairness into law. Tech companies must stay ahead of these regulations to avoid massive fines and reputational damage.

Global Standards: The EU AI Act and NIST Framework

The European Union’s AI Act is one of the most ambitious attempts to regulate algorithmic harms, including disparate impact. It categorizes AI systems by risk level and imposes strict transparency and oversight obligations on “high-risk” tools, such as those used in education, employment, and law enforcement. Similarly, in the United States, the National Institute of Standards and Technology (NIST) has released the voluntary AI Risk Management Framework, which gives tech companies a roadmap for managing bias and lists “valid and reliable” and “fair with harmful bias managed” among the characteristics of trustworthy AI systems.

The Responsibility of Developers vs. Users

A growing debate in the tech community centers on where the responsibility for disparate impact lies. Is it the developer who wrote the code, or the enterprise user who deployed it? Current trends suggest a shared responsibility model. Software providers are increasingly expected to provide “Model Cards”—essentially a nutrition label for AI—that disclose how the model was trained and where its potential biases lie, allowing users to make informed decisions about deployment.
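To make the “nutrition label” idea concrete, here is a minimal sketch of a model card as a data structure that can be exported alongside a model. The field names and example values are hypothetical; published model card templates include more sections than this.

```python
import json
from dataclasses import dataclass, field, asdict

@dataclass
class ModelCard:
    model_name: str
    intended_use: str
    training_data: str
    evaluated_groups: list = field(default_factory=list)
    known_limitations: list = field(default_factory=list)

    def to_json(self):
        """Serialize the card so it can ship with the model artifact."""
        return json.dumps(asdict(self), indent=2)

# Hypothetical card for an illustrative resume-screening model
card = ModelCard(
    model_name="resume-screener-demo",
    intended_use="Rank resumes for recruiter review; not for automatic rejection.",
    training_data="Ten years of historical applications from a single employer.",
    evaluated_groups=["gender", "age band"],
    known_limitations=[
        "Historical hires skew male; selection-rate gaps were observed.",
        "Not evaluated on resumes written in languages other than English.",
    ],
)

print(card.to_json())
```

An enterprise user reading this card would know, before deployment, which groups were tested and which gaps the provider already knows about.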

Future-Proofing Software Against Disparate Impact Claims

For a tech business to thrive in this new era, it must build “fairness by design.” This involves diversifying the engineering teams so that different perspectives are present during the initial brainstorming phases. It also requires a commitment to transparency. When a software’s decision-making process is “explainable,” it is much easier to defend against claims of disparate impact. Companies that prioritize ethical tech will find themselves at a competitive advantage, as both regulators and consumers demand more equitable digital experiences.

The meaning of disparate impact has evolved from a niche legal doctrine into a defining challenge for the 21st-century tech industry. As we continue to automate the world, the responsibility to ensure that our algorithms do not perpetuate the biases of the past rests squarely on the shoulders of those building the future. By integrating fairness into the code itself, we can ensure that technology remains a tool for universal progress rather than a mechanism for systemic exclusion.
