Gravity is the invisible force that governs the motion of celestial bodies and keeps our feet planted firmly on the ground. In the realm of classical physics, the answer to the question “what are the units of gravity” is straightforward: it is measured as acceleration in meters per second squared ($m/s^2$) or as gravitational field strength in Newtons per kilogram ($N/kg$). However, as we transition from the physical world into the digital frontier, the “units” of gravity take on a multifaceted role.

In modern technology—ranging from high-fidelity video game engines and aerospace simulations to the micro-electromechanical systems (MEMS) in our smartphones—gravity is no longer just a constant in a textbook. It is a programmable variable, a sensor input, and a foundational element of user experience. Understanding how these units are translated into code and hardware is essential for engineers, developers, and tech enthusiasts alike.

The Mathematical Foundation: Translating $m/s^2$ into Computational Logic

At the heart of any software that simulates the physical world lies Earth’s standard gravity, defined as exactly $9.80665 m/s^2$ and usually rounded to $9.81 m/s^2$. In tech, this value is simplified or extended depending on the precision the application requires.

The Standard Model in Coding and Simulation

In most software environments, gravity is treated as a constant vector. For a developer working in a 3D environment, the “unit” of gravity is a downward acceleration that changes an object’s velocity over time. In a Cartesian coordinate system where the y-axis points up, this is represented as the vector $(0, -9.81, 0)$.

The challenge for software engineers is not just the number, but the integration. To move an object realistically, the engine must calculate the change in position, which for constant acceleration follows $d = v_0t + \frac{1}{2}at^2$. Here, the units of gravity (acceleration) must be synchronized with the software’s “ticks” or “frames.” Because gravity is expressed per second squared, each update multiplies it by the time elapsed since the previous frame (about $1/60$ of a second at 60 frames per second), so that an object falls at the same rate regardless of hardware performance.
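The frame-rate point can be made concrete with a minimal sketch. It assumes a y-up coordinate system and a semi-implicit Euler update, which is one common choice and not necessarily what any particular engine uses; `simulate_fall` and `GRAVITY_Y` are illustrative names.

```python
GRAVITY_Y = -9.81  # m/s^2, assuming +y is "up"


def simulate_fall(duration_s, fps):
    """Drop an object from rest and return its y position after duration_s."""
    dt = 1.0 / fps                      # seconds per frame
    steps = round(duration_s * fps)
    y, vy = 0.0, 0.0
    for _ in range(steps):
        vy += GRAVITY_Y * dt            # m/s^2 * s -> change in velocity (m/s)
        y += vy * dt                    # m/s * s   -> change in position (m)
    return y


exact = 0.5 * GRAVITY_Y * 2.0 ** 2      # closed form: d = 1/2 a t^2 with v0 = 0
for fps in (30, 60, 240):
    y = simulate_fall(2.0, fps)
    print(f"{fps:>3} fps: y = {y:.3f} m (closed form {exact:.3f} m)")
```

Because every update is scaled by `dt`, the three frame rates land close to the same position, and the remaining gap to the closed-form value shrinks as the frame rate rises. Dropping the `dt` factor would make the fall speed depend directly on how many frames the hardware can render.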

Precision and Floating-Point Variables

In high-precision tech sectors, such as satellite positioning or autonomous vehicle calibration, the rounded $9.81 m/s^2$ is insufficient. Engineers work in much finer increments such as the “Gravity Unit” (GU), a unit borrowed from gravimetry, where $1 GU = 10^{-6} m/s^2$ (one micrometer per second squared).

To handle these tiny increments, software typically uses double-precision floating-point variables. This limits “rounding drift,” where small errors in repeated calculations accumulate over time and can eventually cause serious errors in navigation systems. In the world of tech, the “unit” of gravity is often a test of a system’s computational accuracy.
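Rounding drift is easy to reproduce. The sketch below adds 1 GU a million times, once in ordinary Python floats (64-bit) and once with every intermediate result rounded to 32 bits. The `to_f32` helper is an illustrative stand-in for a single-precision variable, built on the standard `struct` module.

```python
import struct


def to_f32(x):
    """Round a 64-bit Python float to the nearest 32-bit float value."""
    return struct.unpack("f", struct.pack("f", x))[0]


GU = 1e-6            # one gravity unit in m/s^2
STEPS = 1_000_000

gu32 = to_f32(GU)
total64 = 0.0
total32 = 0.0
for _ in range(STEPS):
    total64 += GU                        # double-precision accumulation
    total32 = to_f32(total32 + gu32)     # single-precision accumulation

expected = GU * STEPS                    # 1.0 m/s^2
err64 = abs(total64 - expected)
err32 = abs(total32 - expected)
print(f"float64 error: {err64:.3e} m/s^2")
print(f"float32 error: {err32:.3e} m/s^2")
```

The single-precision total drifts by many orders of magnitude more than the double-precision one, which is why long-running accumulations are usually kept in doubles or summed with a compensated method.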

Gravity in Game Development: Beyond the Physical Constant

In the gaming industry, gravity is less about physical truth and more about “game feel.” While the units remain technically $m/s^2$, developers often manipulate these units to create more engaging experiences.

Unity and Unreal Engine Implementation

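In professional game engines like Unity or Unreal Engine 5, gravity is a global setting. The defaults approximate Earth: Unity uses $(0, -9.81, 0)$ in $m/s^2$, while Unreal works in centimeters and defaults to $-980 cm/s^2$ along its vertical axis. Shipped games, however, often move away from these values. Because human perception of digital motion is different from real-world perception, developers often use “floaty” or “heavy” gravity.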

In these environments, gravity units are often scaled. For example, a character might have a “gravity scale” of $2.0$, effectively doubling the acceleration from $9.81 m/s^2$ to $19.62 m/s^2$ so that jumps rise and fall faster and feel more responsive. This manipulation of units is a core part of technical design, allowing developers to craft unique planetary environments or superhuman physics without rewriting the underlying engine code.
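As a rough illustration of what a gravity scale does to a jump, the sketch below uses the constant-acceleration formulas for time to apex and apex height. The function name `jump_profile` and the 6 m/s launch speed are illustrative assumptions, not values from any engine.

```python
BASE_GRAVITY = 9.81  # m/s^2


def jump_profile(jump_speed, gravity_scale=1.0):
    """Return (effective g, time to apex, apex height) for a vertical jump."""
    g = BASE_GRAVITY * gravity_scale
    time_to_apex = jump_speed / g              # v = v0 - g t reaches 0 at the apex
    apex_height = jump_speed ** 2 / (2.0 * g)  # h = v0^2 / (2 g)
    return g, time_to_apex, apex_height


for scale in (1.0, 2.0):
    g, t, h = jump_profile(jump_speed=6.0, gravity_scale=scale)
    print(f"scale {scale}: g = {g:.2f} m/s^2, apex after {t:.3f} s at {h:.3f} m")
```

With the same launch speed, doubling the scale halves both the time to the apex and the height reached, so designers who want a snappier jump of the same height usually raise the launch speed along with the gravity scale.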

Custom Gravity Fields and UX Design

Modern tech allows for “localized” gravity units. In a game like Gravity Rush or Super Mario Galaxy, the units of gravity are not a global constant but a dynamic field. Tech-wise, this involves calculating the “up” vector for every individual object based on its proximity to a gravitational source. The units don’t change, but their orientation does. The engine must recompute this vector for each affected object every frame, a cost that grows with the number of objects and gravitational sources in the scene.
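A minimal sketch of the per-object “up” calculation, assuming a single point-like gravity source and a fixed magnitude. This is an illustration of the idea, not how either game named above implements it, and `local_gravity` is a hypothetical helper.

```python
import math


def local_gravity(obj_pos, source_pos, magnitude=9.81):
    """Return (gravity_vector, up_vector) for an object near a point source."""
    dx, dy, dz = (s - o for s, o in zip(source_pos, obj_pos))
    dist = math.sqrt(dx * dx + dy * dy + dz * dz)
    if dist == 0.0:
        raise ValueError("object is at the source; direction is undefined")
    down = (dx / dist, dy / dist, dz / dist)      # unit vector toward the source
    up = tuple(-c for c in down)                  # opposite of the pull
    gravity = tuple(magnitude * c for c in down)  # same magnitude, new direction
    return gravity, up


planet_center = (0.0, 0.0, 0.0)
for position in ((0.0, 10.0, 0.0), (10.0, 0.0, 0.0)):
    gravity, up = local_gravity(position, planet_center)
    print(position, "-> gravity", gravity, "up", up)
```

An object on top of the planet is pulled along negative y, while one on its side is pulled along negative x. The magnitude stays at 9.81 in both cases; only the direction changes.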

Hardware Integration: Accelerometers and MEMS Technology

We carry devices capable of measuring gravitational units in our pockets every day. Smartphones, tablets, and wearables rely on Micro-Electromechanical Systems (MEMS) to interpret the world around them.

Measuring ‘g’ in Mobile Devices

For a smartphone, the unit of gravity is often expressed as ‘g’ (where $1g$ is Earth’s standard gravity). Accelerometers inside these devices use microscopic silicon structures that bend under the force of gravity. The “unit” recorded is the displacement of these structures, which is then converted into a digital signal.

This technology enables features we take for granted, such as screen rotation, step counting, and image stabilization. When you tilt your phone, the accelerometer detects a change in how the $1g$ pull is split across its three axes ($X, Y, Z$). The software then calculates the device’s orientation from those components.
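The orientation step can be sketched with basic trigonometry. The example assumes readings in units of g from a device at rest, with an axis convention in which a device lying flat and face-up reads roughly $(0, 0, 1)$. Real platforms differ in axis conventions and signs, so treat `tilt_from_accel` as illustrative.

```python
import math


def tilt_from_accel(ax, ay, az):
    """Estimate (pitch, roll) in degrees from a static reading in g."""
    pitch = math.degrees(math.atan2(-ax, math.hypot(ay, az)))
    roll = math.degrees(math.atan2(ay, az))
    return pitch, roll


readings = {
    "flat, face up": (0.0, 0.0, 1.0),
    "rolled onto one edge": (0.0, 1.0, 0.0),
    "rolled halfway": (0.0, math.sin(math.radians(45)), math.cos(math.radians(45))),
}
for label, (ax, ay, az) in readings.items():
    pitch, roll = tilt_from_accel(ax, ay, az)
    print(f"{label}: pitch = {pitch:.1f} deg, roll = {roll:.1f} deg")
```

This only works while the device is not otherwise accelerating, since the calculation assumes the measured vector is gravity alone.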

Calibration and Noise Reduction

A major tech hurdle in measuring gravity units at the hardware level is “noise.” Vibrations, walking, or even the device’s own internal components can create “jitter” in the data. To solve this, developers use low-pass filters, Kalman filters, and in some cases machine-learning models to isolate the constant pull of gravity from temporary motion of the device. A related process, sensor fusion, combines data from the accelerometer, gyroscope, and magnetometer to create a stable “gravity vector” that the OS can use for AR (Augmented Reality) applications.
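The sketch below uses an exponential low-pass filter, a much simpler stand-in for the Kalman filtering mentioned above, to show the basic idea of separating a slowly changing gravity vector from fast jitter. The smoothing factor `alpha` and the synthetic samples are assumptions chosen for illustration.

```python
def isolate_gravity(samples, alpha=0.9):
    """Split accelerometer samples (m/s^2) into a gravity estimate and residual motion."""
    gravity = list(samples[0])           # seed the estimate with the first reading
    linear = []
    for sample in samples:
        # Keep most of the previous estimate, blend in a little of the new reading.
        gravity = [alpha * g + (1.0 - alpha) * s for g, s in zip(gravity, sample)]
        # Whatever the slow estimate does not explain is treated as device motion.
        linear.append([s - g for s, g in zip(sample, gravity)])
    return gravity, linear


# Synthetic data: gravity along z plus +/-0.5 m/s^2 of alternating jitter on x.
samples = [(0.5 if i % 2 == 0 else -0.5, 0.0, 9.81) for i in range(200)]
gravity, linear = isolate_gravity(samples)
print("estimated gravity vector:", [round(c, 3) for c in gravity])
```

The estimate settles near $(0, 0, 9.81)$ while the jitter ends up in the residual. A higher `alpha` smooths more but reacts more slowly when the device is actually rotated, which is the trade-off that gyroscope-assisted fusion is meant to ease.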

Aerospace Tech and Space Exploration Simulations

When we move beyond Earth’s atmosphere, the units of gravity become a dynamic variable essential for the survival of spacecraft and satellites.

Orbital Mechanics and N-Body Simulations

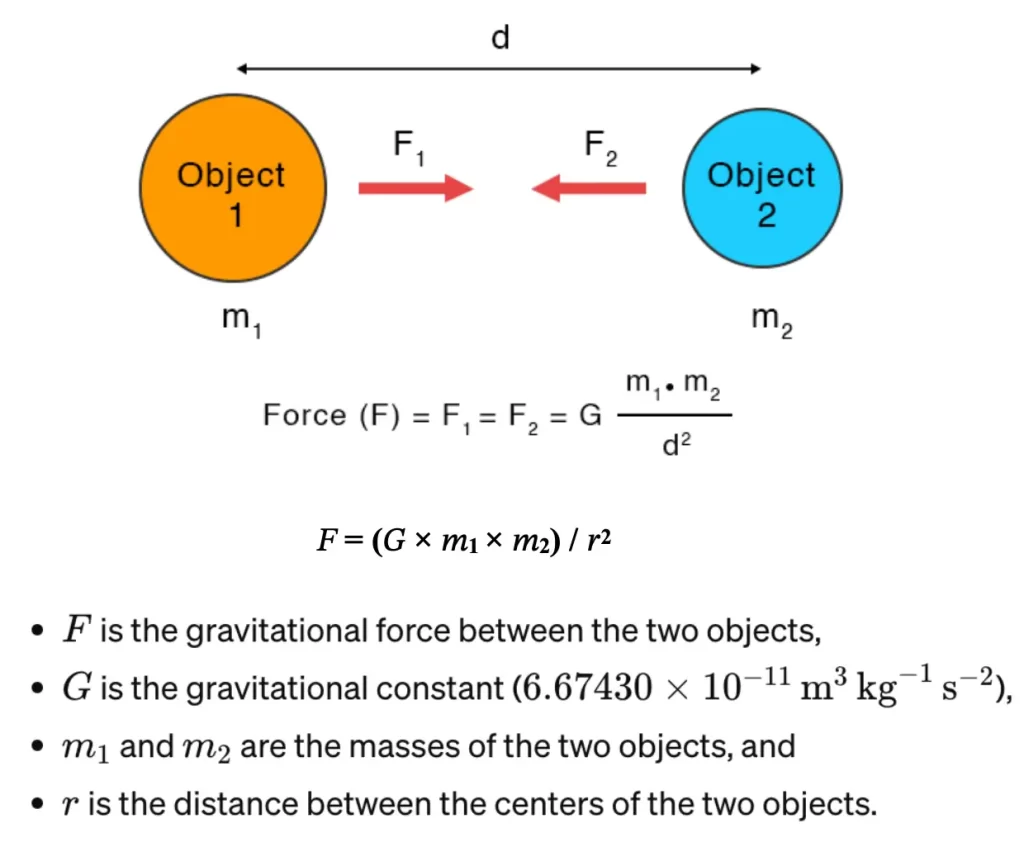

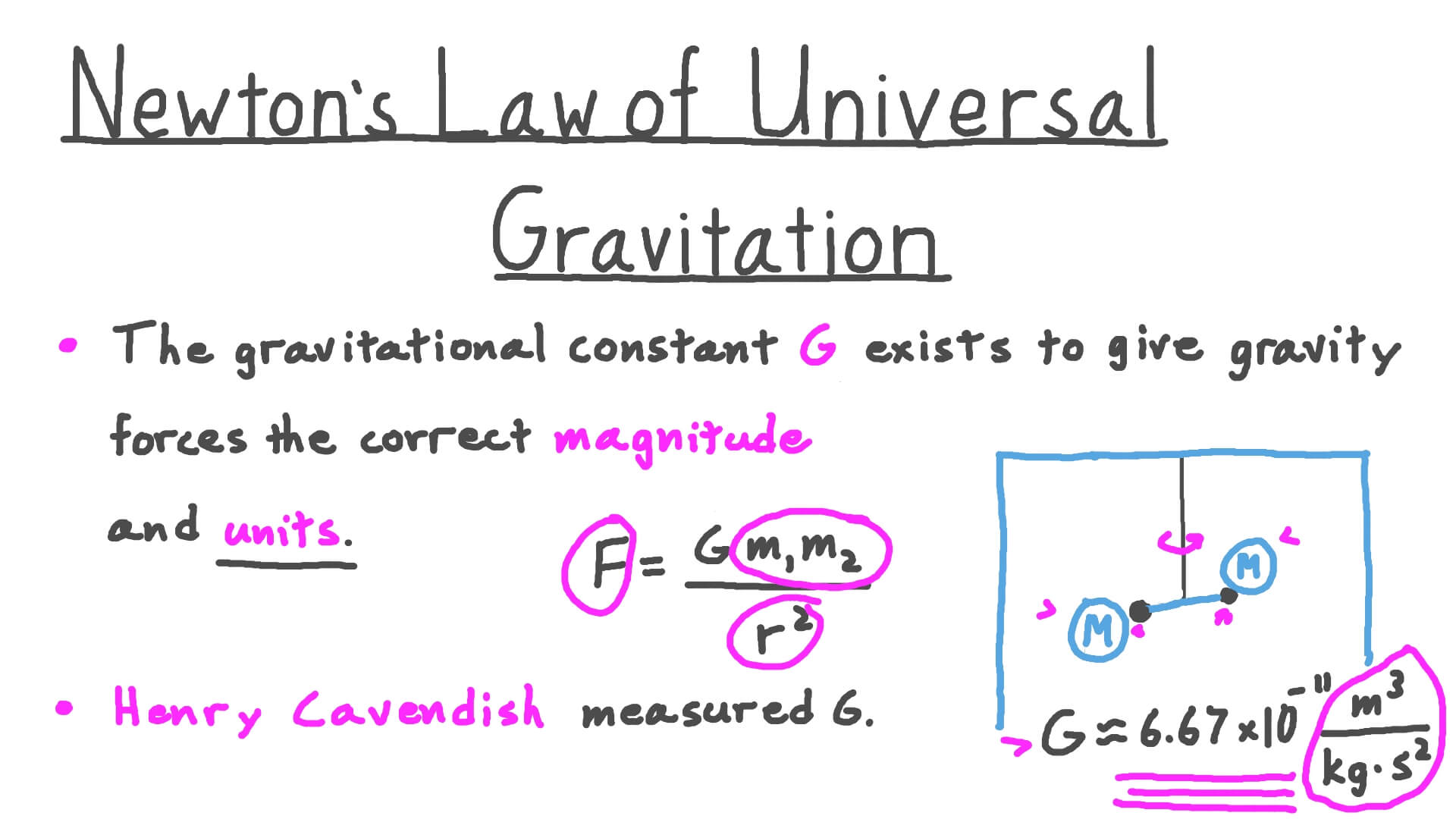

In aerospace tech, gravity is calculated using the Universal Law of Gravitation: $F = G \frac{m_1 m_2}{r^2}$. Here, the units are Newtons ($N$), but for trajectory software, the focus is on “Delta-V” (change in velocity).

SpaceX and NASA use N-body simulations to predict how a spacecraft will respond to the combined gravitational pull of the Earth, Moon, and Sun. Because gravity follows an inverse-square law, the pull drops off rapidly with distance: doubling the distance cuts it to a quarter. Software like GMAT (General Mission Analysis Tool) must evaluate these forces along trajectories spanning millions of kilometers with enough accuracy that a craft can “slingshot” around a planet, using the planet’s gravity to change speed without burning propellant.
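The core step of such a simulation is summing the inverse-square pull of each body. The sketch below shows only that step, with point masses at fixed positions and rounded textbook constants. It is not a trajectory propagator, it does not reflect how GMAT is implemented, and `gravitational_acceleration` is an illustrative name.

```python
import math

G = 6.674e-11  # gravitational constant, m^3 kg^-1 s^-2

# (mass in kg, position in m); rounded values, Moon placed on the x-axis.
BODIES = {
    "earth": (5.972e24, (0.0, 0.0, 0.0)),
    "moon": (7.348e22, (3.844e8, 0.0, 0.0)),
}


def gravitational_acceleration(pos, bodies):
    """Sum the acceleration (m/s^2) on a craft at pos from every body."""
    ax = ay = az = 0.0
    for mass, (bx, by, bz) in bodies.values():
        dx, dy, dz = bx - pos[0], by - pos[1], bz - pos[2]
        r2 = dx * dx + dy * dy + dz * dz
        r = math.sqrt(r2)
        a = G * mass / r2                 # inverse-square magnitude
        ax += a * dx / r                  # directed toward the body
        ay += a * dy / r
        az += a * dz / r
    return ax, ay, az


def magnitude(v):
    return math.sqrt(sum(c * c for c in v))


earth_only = {"earth": BODIES["earth"]}
surface = magnitude(gravitational_acceleration((0.0, 6.371e6, 0.0), earth_only))
doubled = magnitude(gravitational_acceleration((0.0, 2 * 6.371e6, 0.0), earth_only))
print(f"at Earth's surface: {surface:.2f} m/s^2; at twice that radius: {doubled:.2f} m/s^2")
```

With only Earth included, the result at one Earth radius is close to the familiar surface value, and at twice the radius it falls to a quarter of that. Adding the Moon or Sun to the dictionary changes the total without changing the code.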

The Role of AI in Predicting Gravitational Anomalies

The Earth is not a perfect sphere, and its gravity is not uniform. The “units” of gravity actually vary depending on whether you are over an ocean trench or a mountain range. Tech companies now use AI and machine learning to analyze data from satellites like GRACE (Gravity Recovery and Climate Experiment).

By processing “gravitational anomalies”—minute changes in the $9.81 m/s^2$ constant—AI can map changes in the Earth’s ice sheets or underground water reservoirs. This is a prime example of how physics units are transformed into “Big Data,” providing actionable insights for climate tech and geological surveying.

The Future of Gravitational Computing: Quantum and VR

As we look toward the future, the way technology interacts with gravitational units is set to become even more complex and immersive.

Quantum Computing and Gravity

One of the greatest challenges in modern science is reconciling gravity with quantum mechanics. In the tech world, researchers are exploring whether quantum computers can simulate models of “quantum gravity.” Classical hardware struggles with the size of the calculations needed to model gravity at a subatomic scale, and quantum bits (qubits) may be better suited to the probabilistic nature of these problems. Understanding the “units” of gravity at this scale remains a long-term research goal, though it might eventually inform fields such as materials science and energy production.

VR/AR Immersion and Haptic Gravity

In Virtual and Augmented Reality, the goal is to trick the brain into believing the digital world is real. A current frontier in VR tech is “haptic gravity.” This involves using wearable exoskeletons or specialized controllers that apply physical resistance to the user’s limbs, simulating the units of weight and gravity of a virtual object.

If you pick up a virtual lead ball in a VR simulation, the tech calculates the required counter-force in Newtons to match the gravitational units of that object’s mass. This level of immersion requires ultra-low latency; if the haptic feedback lags behind the visual gravity by even a few milliseconds, the illusion is broken and the user may experience motion sickness.
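The counter-force itself is simple arithmetic: weight is mass times gravitational acceleration. The sketch below works it through for a solid lead sphere, using lead’s density of about 11,340 kg/m³. The 5 cm radius and the helper names are assumptions made for the example.

```python
import math

STANDARD_GRAVITY = 9.80665   # m/s^2
LEAD_DENSITY = 11340.0       # kg/m^3, approximate


def sphere_mass(radius_m, density):
    """Mass in kg of a solid sphere."""
    return density * (4.0 / 3.0) * math.pi * radius_m ** 3


def weight_newtons(mass_kg, g=STANDARD_GRAVITY):
    """Force in newtons a haptic device would need to present as the object's weight."""
    return mass_kg * g


mass = sphere_mass(0.05, LEAD_DENSITY)
force = weight_newtons(mass)
print(f"lead ball: {mass:.2f} kg, weight {force:.1f} N")
```

Passing a different `g` gives the force for the same ball on a virtual Moon or a game world with scaled gravity.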

Conclusion

While the question “what are the units of gravity” can be answered by a high school physics student, its application in the technology sector reveals a deep well of complexity. From the $m/s^2$ constant that stabilizes a digital character’s jump to the $1g$ measurement that allows an iPhone to orient its screen, gravity units are a fundamental building block of the modern digital experience.

As tech continues to evolve—moving into the realms of space tourism, quantum simulation, and total VR immersion—our ability to measure, manipulate, and simulate these units will define the next generation of innovation. Gravity is the constant that connects the physical and digital worlds, and mastering its units is the key to building more realistic, efficient, and awe-inspiring technology.
