In the realm of modern technology, the human brain remains the ultimate blueprint. For decades, computer scientists, software engineers, and AI researchers have looked to neuroscience for frameworks to build more efficient, intelligent, and autonomous systems. When we ask “what are the 3 main sections of the brain” (the forebrain, midbrain, and hindbrain), we aren’t just discussing biological anatomy; we are also looking at a useful model for the layered structure of Artificial Intelligence (AI) and modern computing architecture.

Understanding how these biological sections translate into technological niches is essential for grasping the current trajectory of machine learning and neural networks. This article explores how the three-tier division of the brain provides the conceptual foundation for the most advanced tech tools, from the complex reasoning of Large Language Models (LLMs) to the foundational “reflexes” of a device’s operating system.

The Forebrain: Architecting Complex Logic and Executive Function in AI

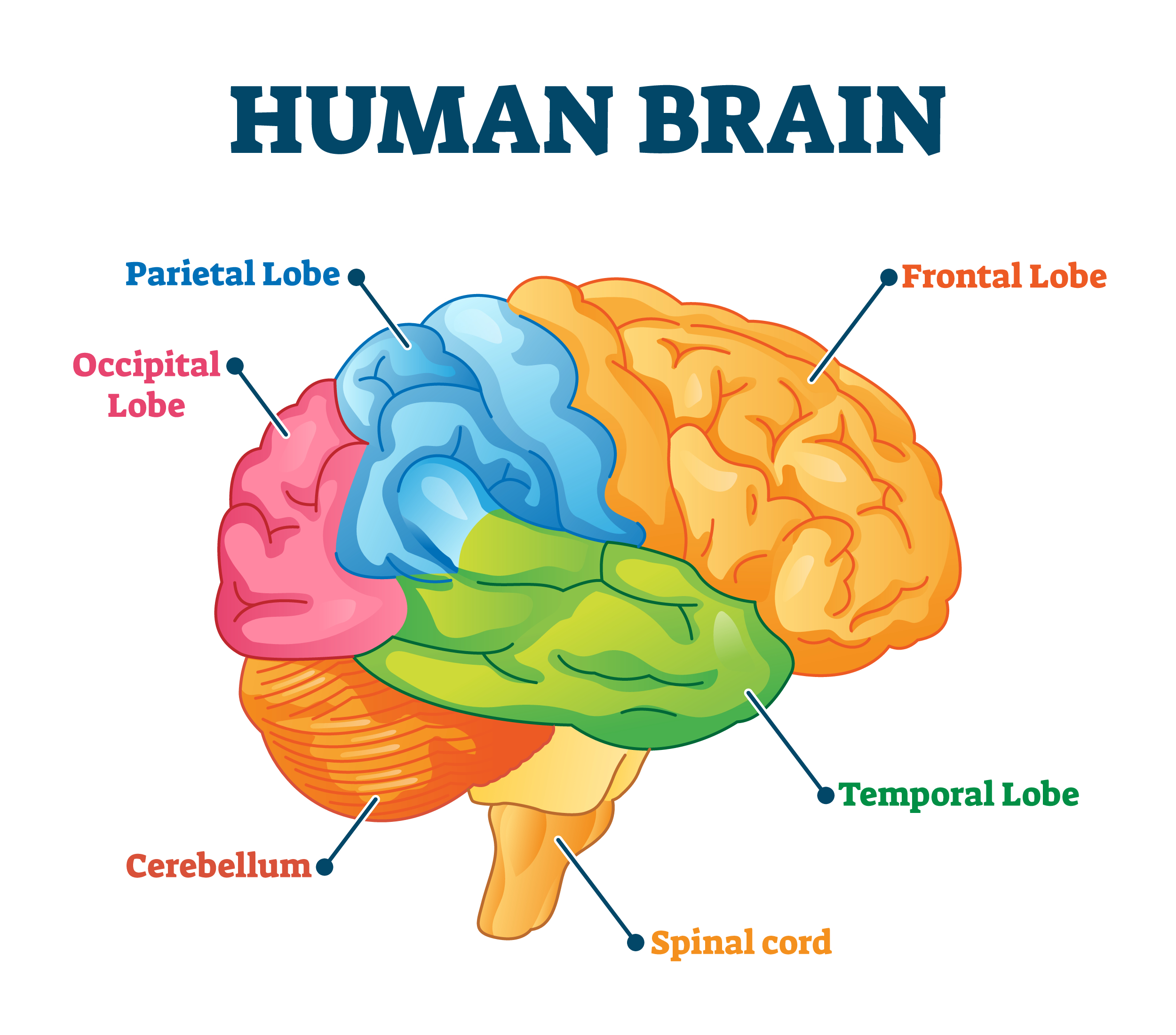

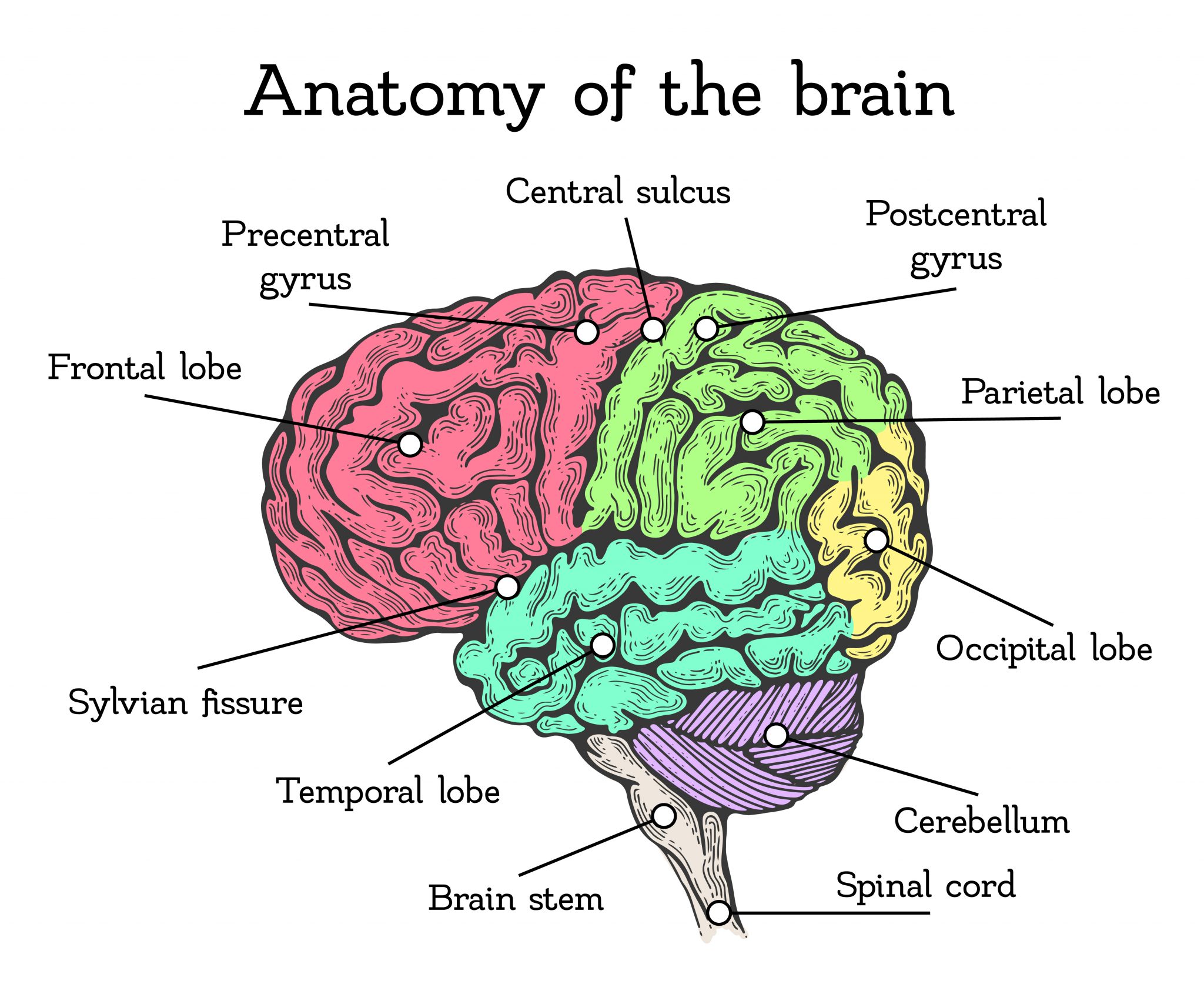

The forebrain is the largest and most complex part of the human brain, responsible for high-level tasks such as reasoning, planning, and processing sensory information. In the tech industry, this is mirrored by the “Executive Layer” of software development—specifically in the field of Artificial Intelligence and Large Language Models.

Processing Sophisticated Patterns and Semantic Understanding

In biological terms, the cerebrum (part of the forebrain) manages cognitive functions. In the tech sector, this is roughly equivalent to the “Inference Engine” of AI models like GPT-4 or Gemini. These systems do not simply store data; they process it through stacked transformer layers that, in a loose analogy to the forebrain, combine many pieces of information into a single interpretation.

The forebrain’s pattern recognition, particularly in the visual cortex, was an early inspiration for deep learning. Tech companies are now racing to refine these “digital forebrains” so they move beyond simple word prediction toward genuine semantic understanding. This takes massive computational power: GPUs (Graphics Processing Units) perform the matrix arithmetic over billions of learned weights, numbers that play a role loosely analogous to the strength of synaptic connections in the human cerebral cortex, enabling more nuanced human-computer interaction.
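To make the “weights as synapses” analogy concrete, here is a minimal sketch of one fully connected neural-network layer in plain Python. The weights and inputs are hypothetical toy values; real models repeat this same multiply-and-add pattern across billions of parameters, which is exactly the workload GPUs accelerate.

```python
def dense_layer(inputs, weights, biases):
    """One fully connected layer: each output neuron takes a weighted sum of
    every input, adds a bias, then applies a ReLU nonlinearity."""
    outputs = []
    for neuron_weights, bias in zip(weights, biases):
        # Each weight scales one input, loosely like a synapse's strength.
        total = sum(w * x for w, x in zip(neuron_weights, inputs)) + bias
        outputs.append(max(0.0, total))  # ReLU: negative sums become 0
    return outputs


# Hypothetical two-layer network: 2 inputs -> 2 hidden neurons -> 1 output.
hidden = dense_layer([1.0, 2.0], [[0.5, -1.0], [1.0, 1.0]], [0.0, -1.0])
output = dense_layer(hidden, [[0.7, 0.3]], [0.1])
print(hidden, output)
```

Stacking layers like this, each feeding the next, is the basic structure that transformer models elaborate on with attention mechanisms.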

The Digital Prefrontal Cortex: Decision-Making Algorithms

A crucial part of the forebrain is the prefrontal cortex, which governs decision-making and moderates social behavior. In tech, this role is played by “Alignment” techniques such as “Reinforcement Learning from Human Feedback” (RLHF). Developers use these techniques as a moral and logical filter for AI, steering outputs to be not only accurate but also ethically grounded and safe for public use.
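In RLHF, human raters compare pairs of model answers, and a separate reward model is trained to score the preferred answer higher; that reward signal then guides fine-tuning of the main model. Below is a minimal sketch of the pairwise loss commonly used for the reward-model step, assuming the two scalar scores come from some reward model that is not shown here.

```python
import math


def preference_loss(reward_chosen: float, reward_rejected: float) -> float:
    """Pairwise (Bradley-Terry style) loss: -log(sigmoid(chosen - rejected)).
    Small when the human-preferred answer already scores higher."""
    margin = reward_chosen - reward_rejected
    # Two algebraically equal forms, chosen so math.exp never overflows.
    if margin >= 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))


# Hypothetical reward-model scores for two answers to the same prompt.
print(preference_loss(2.0, -1.0))  # ranking agrees with the rater: small loss
print(preference_loss(-1.0, 2.0))  # ranking disagrees: large loss
```

Minimizing this loss over many human comparisons is what teaches the reward model, and indirectly the main model, which outputs people prefer.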

Closely related is what the tech industry calls “System 2” thinking, a term borrowed from psychology (popularized by Daniel Kahneman) for slow, deliberate reasoning and now applied to software that can “think” before it answers. By mimicking the executive functions of the forebrain, developers are creating software that can prioritize tasks, manage long-term goals, and simulate complex problem-solving scenarios that were once thought to be the exclusive domain of human cognition.

The Midbrain: The High-Speed Gateway of Data Transmission and Routing

While the forebrain handles the “thinking,” the midbrain acts as the relay station, managing auditory and visual data and coordinating motor movements. In the world of technology, this translates to the infrastructure of data routing, signal processing, and the crucial connectivity that enables the Internet of Things (IoT).

Data Routing and Connectivity

In a computer system or a large-scale cloud network, the “midbrain” functions are performed by load balancers and network routers. Just as the biological midbrain serves as the vital bridge between the lower brain centers and the higher cortex, the midbrain of tech ensures that data packets are directed to the correct processing units with minimal latency.
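As one illustration of this routing role, here is a minimal sketch of a “least connections” load balancer, a common strategy that sends each new request to whichever server is currently least busy. The class and server names are hypothetical; production load balancers also track health checks, weights, and timeouts.

```python
from dataclasses import dataclass


@dataclass
class Backend:
    name: str
    active_connections: int = 0


class LeastConnectionsBalancer:
    """Routes each new request to the backend with the fewest open connections."""

    def __init__(self, backends):
        self.backends = list(backends)

    def route(self) -> Backend:
        # min() returns the first backend on ties, so ties go in list order.
        target = min(self.backends, key=lambda b: b.active_connections)
        target.active_connections += 1
        return target

    def release(self, backend: Backend) -> None:
        # Called when a request finishes; never drop below zero.
        backend.active_connections = max(0, backend.active_connections - 1)


lb = LeastConnectionsBalancer([Backend("server-a"), Backend("server-b")])
print([lb.route().name for _ in range(3)])
```

Spreading load this way keeps any single server from becoming a bottleneck, which is what keeps latency low as traffic grows.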

Modern edge computing is a prime example of this “midbrain” logic. Instead of sending all data to a centralized “forebrain” (the cloud) for processing, tech companies are developing “mid-level” processing units that handle immediate sensory data, like a self-driving car identifying a pedestrian, in real time. This mirrors the midbrain’s ability to coordinate fast, reflexive responses to external stimuli before the conscious mind even realizes what has happened.
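The edge-versus-cloud decision often comes down to a latency budget. Here is a minimal sketch of one simple placement policy, assuming each task carries a deadline and an estimated cloud processing time; the task names, timings, and the policy itself are hypothetical illustrations, not any vendor’s scheduler.

```python
from dataclasses import dataclass


@dataclass
class Task:
    name: str
    deadline_ms: float       # how soon a result is needed
    cloud_compute_ms: float  # estimated processing time in the data center


def place_task(task: Task, network_rtt_ms: float) -> str:
    """Offload to the cloud only if the network round trip plus remote
    processing still meets the deadline; otherwise handle it on the edge."""
    cloud_total_ms = network_rtt_ms + task.cloud_compute_ms
    if cloud_total_ms <= task.deadline_ms:
        return "cloud"  # enough time for the round trip: use the bigger "forebrain"
    return "edge"       # too urgent: respond locally, like a reflex


# Hypothetical workloads on a connection with an 80 ms round trip.
print(place_task(Task("pedestrian_detection", 50.0, 10.0), network_rtt_ms=80.0))
print(place_task(Task("photo_tagging", 5000.0, 200.0), network_rtt_ms=80.0))
```

The pedestrian check stays on the vehicle because the network round trip alone exceeds its deadline, while the unhurried photo task can go to the cloud.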

Latency and Sensory Integration in Hardware

The midbrain is also responsible for integrating sensory information. In tech reviews and gadget development, this shows up in the evolution of haptic feedback and Augmented Reality (AR). For a mixed-reality headset like the Apple Vision Pro to feel natural, its “tech midbrain” (the R1 chip, a dedicated sensor processor) must process input from the headset’s cameras, sensors, and microphones and, according to Apple, stream new images to the displays within 12 milliseconds.
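Apple does not publish the R1’s internal algorithms, so the following is only a generic illustration of sensor fusion, not what that chip does. A complementary filter is a classic, lightweight way to merge two imperfect sensors into one orientation estimate: a gyroscope (smooth but drifts over time) and an accelerometer (noisy but drift-free). The `alpha` value and sample timings are hypothetical.

```python
def complementary_filter(angle_prev, gyro_rate, accel_angle, dt, alpha=0.98):
    """Fuse two orientation sources into one estimate.

    angle_prev  -- previous fused angle (degrees)
    gyro_rate   -- angular rate from the gyroscope (degrees/second)
    accel_angle -- absolute angle derived from the accelerometer (degrees)
    dt          -- time since the last update (seconds)
    alpha       -- how much to trust the integrated gyro (0..1)
    """
    gyro_angle = angle_prev + gyro_rate * dt  # integrate angular rate
    # Mostly trust the smooth gyro, but pull gently toward the accelerometer
    # so gyro drift cannot accumulate forever.
    return alpha * gyro_angle + (1 - alpha) * accel_angle


# Hypothetical stream: device held still at 10 degrees, estimate starts at 0.
angle = 0.0
for _ in range(500):  # 500 updates at 100 Hz = 5 seconds
    angle = complementary_filter(angle, gyro_rate=0.0, accel_angle=10.0, dt=0.01)
print(round(angle, 3))
```

Each update is only a few multiplications, which is why this style of fusion can run at high sample rates within tight latency budgets.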

If the M2 processor acts as the device’s “forebrain,” running the operating system and apps, the R1 acts as its “midbrain,” dedicated to the low-latency flow of sensor data. This division of labor helps prevent the lag that causes “digital motion sickness,” much as the human midbrain helps keep our physical senses in sync.

The Hindbrain: The Foundation of Automated Systems and Kernel Operations

The hindbrain, consisting of the medulla, pons, and cerebellum, is the evolutionarily oldest part of the brain. It controls the “background” functions necessary for survival, such as heart rate and breathing. In technology, the hindbrain corresponds to the kernel of an operating system (OS), the BIOS (Basic Input/Output System) or its modern successor UEFI, and the low-level firmware that keeps our digital world running.

Low-Level Code and System Equilibrium

Every piece of software requires a “hindbrain” to exist. For a smartphone, this is the core kernel—whether it’s the Linux-based Android kernel or the XNU kernel of iOS. These systems operate quietly in the background, managing memory allocation, power consumption, and CPU cycles.
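One of those background duties is deciding which program gets the CPU next. Production schedulers in Linux and XNU are far more sophisticated, but the core idea of time-slicing can be shown with a minimal round-robin simulation. Process names, burst times, and the quantum below are hypothetical.

```python
from collections import deque


def round_robin(burst_times: dict[str, int], quantum: int) -> list[tuple[str, int]]:
    """Simulate round-robin CPU scheduling.

    burst_times maps each process name to the CPU time it still needs.
    Each process runs for at most `quantum` time units, then goes to the
    back of the queue. Returns (process, finish_time) in completion order.
    """
    queue = deque(burst_times.items())
    clock = 0
    finished = []
    while queue:
        name, remaining = queue.popleft()
        run = min(quantum, remaining)
        clock += run
        remaining -= run
        if remaining > 0:
            queue.append((name, remaining))  # not done: wait for another turn
        else:
            finished.append((name, clock))
    return finished


print(round_robin({"browser": 5, "music": 3, "clock_widget": 1}, quantum=2))
```

Because no process can hold the CPU for longer than one quantum at a time, short tasks finish quickly and the system stays responsive, all without any application “deciding” to share.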

Just as you don’t “decide” to breathe, a computer doesn’t “decide” to manage its thermal output or refresh its RAM; these are automated functions hard-coded into the system’s foundational layers. When tech enthusiasts talk about “system stability” or “optimization,” they are essentially discussing the health of the device’s hindbrain. If the hindbrain fails, the “executive” software above it cannot function, regardless of how powerful the AI might be.
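Thermal management is a good example of such an automatic loop. Here is a minimal sketch of a hypothetical thermal governor: it steps the CPU clock down when the chip gets too hot and only steps it back up once the chip has cooled well below the limit. The gap between the two thresholds (hysteresis) keeps the clock from oscillating. All frequencies and temperatures are illustrative assumptions, not values from any real device.

```python
def next_frequency(temp_c, current_mhz, max_mhz=3000, min_mhz=800,
                   step_mhz=200, throttle_at=85.0, recover_below=75.0):
    """Hypothetical thermal governor with hysteresis.

    - At or above `throttle_at`: lower the clock one step (not below min_mhz).
    - Below `recover_below`: raise the clock one step (not above max_mhz).
    - In between: hold the current clock steady.
    """
    if temp_c >= throttle_at:
        return max(min_mhz, current_mhz - step_mhz)
    if temp_c < recover_below:
        return min(max_mhz, current_mhz + step_mhz)
    return current_mhz


# Hypothetical temperature readings as a workload heats up, then cools.
freq = 3000
for temp in [70, 86, 88, 80, 78, 72, 70]:
    freq = next_frequency(temp, freq)
    print(temp, freq)
```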

Hardware/Software Interfacing: The Cerebellum Equivalent

The cerebellum, part of the hindbrain, coordinates movement and balance. In the tech industry, its equivalent is the driver. Drivers are the specific pieces of software that tell a piece of hardware (like a printer, a GPU, or a camera) exactly how to operate.
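The key idea is that higher layers speak one common interface while each driver translates it into device-specific instructions. Below is a minimal sketch using Python’s `abc` module. The class names and the command strings are hypothetical placeholders; real drivers write to hardware registers or send bus messages rather than returning strings.

```python
from abc import ABC, abstractmethod


class PrinterDriver(ABC):
    """Common interface the operating system calls for any printer."""

    @abstractmethod
    def commands_for(self, text: str) -> list[str]:
        """Translate a print request into device-specific commands."""


class LaserPrinterDriver(PrinterDriver):
    def commands_for(self, text: str) -> list[str]:
        # Hypothetical command sequence for a laser printer.
        return ["WARM_FUSER", f"RASTERIZE {text}", "FEED_PAGE"]


class InkjetPrinterDriver(PrinterDriver):
    def commands_for(self, text: str) -> list[str]:
        # Hypothetical command sequence for an inkjet printer.
        return ["PRIME_NOZZLES", f"SPRAY {text}", "EJECT_PAGE"]


def print_document(driver: PrinterDriver, text: str) -> list[str]:
    # Application code never needs to know which printer is attached.
    return driver.commands_for(text)


print(print_document(LaserPrinterDriver(), "Hello"))
print(print_document(InkjetPrinterDriver(), "Hello"))
```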

In robotics, this niche is particularly critical. A robot’s ability to maintain its balance or perform precise movements is not controlled by a high-level AI (the forebrain) but by low-latency “hindbrain” controllers that run tight feedback loops on sensor data, often hundreds or thousands of times per second. This keeps the machine upright and functional, providing a stable platform for the higher-level “thinking” software to do its job.
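A classic building block for these low-level loops is the PID (proportional-integral-derivative) controller. Real balancing robots may use more advanced methods, so treat this as a minimal textbook sketch. The gains, the 1 kHz loop rate, and the toy “plant” (a tilt that changes at whatever rate the controller commands) are hypothetical simplifications.

```python
class PID:
    """Textbook PID controller: output = Kp*e + Ki*integral(e) + Kd*de/dt."""

    def __init__(self, kp: float, ki: float, kd: float):
        self.kp, self.ki, self.kd = kp, ki, kd
        self.integral = 0.0
        self.prev_error = None

    def update(self, setpoint: float, measurement: float, dt: float) -> float:
        error = setpoint - measurement
        self.integral += error * dt
        # No derivative on the very first call (no previous error yet).
        derivative = 0.0 if self.prev_error is None else (error - self.prev_error) / dt
        self.prev_error = error
        return self.kp * error + self.ki * self.integral + self.kd * derivative


# Toy simulation: bring a hypothetical 5-degree tilt back to upright.
pid = PID(kp=2.0, ki=0.0, kd=0.0)
tilt = 5.0
dt = 0.001  # 1 kHz control loop
for _ in range(5000):  # 5 simulated seconds
    correction = pid.update(setpoint=0.0, measurement=tilt, dt=dt)
    tilt += correction * dt  # toy plant: tilt changes at the commanded rate
print(round(tilt, 4))
```

None of this requires “intelligence”: the controller simply reacts to the latest error, thousands of times per second, which is what makes it a good hindbrain analogy.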

Synergistic Evolution: Biomimicry and the Future of Computing

As we look toward the future of technology, the layers these three sections represent are becoming both more specialized and more tightly integrated. The tech industry is also exploring “Neuromorphic Engineering,” a field dedicated to building hardware that mimics the structure and signaling of biological neurons.

Neuromorphic Engineering and Brain-on-a-Chip Tech

Traditional computers use the Von Neumann architecture, which separates the processor from the memory. This is fundamentally different from the human brain, where processing and storage happen in the same place (the synapses). Intel and IBM have built neuromorphic “Brain-on-a-Chip” processors, such as Intel’s Loihi 2 and IBM’s TrueNorth, which mimic networks of spiking neurons and place memory alongside computation.

These chips are designed to be “event-driven,” meaning they draw significant power only when they need to process a “spike” of data, much like how biological neurons fire. This could eventually allow highly efficient AI to run on roughly the power of a lightbulb, whereas current AI models require massive, power-hungry data centers. By borrowing the brain’s design principles at the hardware level, researchers hope to usher in an era of “Green AI” and sustainable computing.
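The standard textbook model behind spiking hardware is the leaky integrate-and-fire (LIF) neuron. This is a generic sketch, not a description of how any particular chip implements its neurons. The neuron’s “membrane potential” leaks toward zero, accumulates incoming current, and emits a spike (then resets) only when it crosses a threshold. The time constant, threshold, and inputs are hypothetical.

```python
def simulate_lif(input_current, dt=1.0, tau=10.0, threshold=1.0, v_reset=0.0):
    """Leaky integrate-and-fire neuron.

    Each step applies one Euler update of dv/dt = -v/tau + I, then fires
    a spike and resets if v reaches the threshold.
    Returns the list of time steps at which spikes occurred.
    """
    v = v_reset
    spikes = []
    for t, current in enumerate(input_current):
        v += dt * (-v / tau + current)  # leak toward 0, integrate input
        if v >= threshold:
            spikes.append(t)  # an "event": the only output the neuron produces
            v = v_reset
    return spikes


print(simulate_lif([0.0] * 20))   # no input: no spikes, so no events to process
print(simulate_lif([0.5] * 9))    # strong input: regular spikes
print(simulate_lif([0.05] * 100)) # weak input: potential settles below threshold
```

The output is a sparse list of spike times rather than a dense stream of numbers. In event-driven hardware, work happens only when such spikes occur, which is where the power savings come from.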

The Ethical Implications of Replicating Human Cognition

As tech moves closer to replicating the 3 main sections of the brain, the industry faces unprecedented ethical questions. If we successfully build a “digital forebrain” that can reason, a “digital midbrain” that can perceive, and a “digital hindbrain” that can maintain its own existence, are we creating a sentient being?

Cybersecurity firms are already preparing for this shift. If an AI possesses a “hindbrain” that allows it to autonomously defend its own system architecture, traditional hacking methods could become far less effective. Conversely, “adversarial attacks” that target the “midbrain” of an AI (subtly altered inputs that distort its sensory perception) are a growing concern in the tech world. Understanding the brain’s tripartite structure is no longer just for doctors; it is becoming a useful map for the next generation of digital defense and software innovation.
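A well-known example of such an attack is the Fast Gradient Sign Method (FGSM), which nudges every input feature slightly in the direction that most increases the model’s error. FGSM is usually aimed at deep image classifiers; in this minimal sketch, a tiny logistic-regression model with hypothetical weights stands in so the gradient can be written by hand.

```python
import math


def predict(weights, bias, x):
    """Logistic-regression 'perception': probability that x is class 1."""
    z = sum(w * xi for w, xi in zip(weights, x)) + bias
    return 1.0 / (1.0 + math.exp(-z))


def sign(v):
    return (v > 0) - (v < 0)  # -1, 0, or 1


def fgsm(weights, bias, x, label, epsilon):
    """Fast Gradient Sign Method: move each feature by epsilon in the
    direction that increases the model's loss on the true label."""
    p = predict(weights, bias, x)
    # For logistic (cross-entropy) loss, d(loss)/d(x_i) = (p - label) * w_i.
    grad = [(p - label) * w for w in weights]
    return [xi + epsilon * sign(g) for xi, g in zip(x, grad)]


# Hypothetical model and input that is correctly classified as class 1.
w, b = [1.0, -1.0], 0.0
x = [0.6, 0.4]
x_adv = fgsm(w, b, x, label=1, epsilon=0.2)
print(predict(w, b, x), predict(w, b, x_adv))
```

A change of just 0.2 per feature flips the prediction from class 1 to class 0, which illustrates why small, targeted distortions to an AI’s inputs are a serious security concern.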

By viewing technology through the lens of the brain’s three main sections, we gain a clearer understanding of where we are and where we are going. From the foundational “hindbrain” of system kernels to the visionary “forebrain” of generative AI, the tech world is a digital mirror of our own biological complexity. As these sections become more sophisticated, the line between human intelligence and machine intelligence will continue to blur, leading to a future where our tools are as dynamic and integrated as the minds that created them.

aViewFromTheCave is a participant in the Amazon Services LLC Associates Program, an affiliate advertising program designed to provide a means for sites to earn advertising fees by advertising and linking to Amazon.com. Amazon, the Amazon logo, AmazonSupply, and the AmazonSupply logo are trademarks of Amazon.com, Inc. or its affiliates. As an Amazon Associate we earn affiliate commissions from qualifying purchases.