To understand what the Bible was written in, one must look beyond the mere ink and parchment of antiquity and view it through the lens of information technology. At its core, the Bible is a massive dataset—a collection of 66 books that serves as a complex architecture of history, philosophy, and law. To the modern technologist, the languages used to draft these texts represent the “source code” of Western civilization.

The Bible was not written in a single language, nor was it composed using a single medium. Instead, it was developed using a specific linguistic tech stack: Biblical Hebrew, Aramaic, and Koine Greek. Understanding these languages and the physical hardware they were stored on provides a fascinating insight into how information was processed, transmitted, and secured across millennia.

The Core “Programming” Languages: Hebrew, Aramaic, and Greek

Just as modern software relies on specific languages like Python, C++, or Java to perform different functions, the biblical authors utilized the linguistic tools of their eras to optimize for their target audiences and logistical constraints.

Biblical Hebrew: The Foundation Layer

The vast majority of the Old Testament was written in Biblical Hebrew. From a technological perspective, Hebrew is a “root-based” language. Most words are derived from a three-letter consonantal root. This creates a highly efficient system of data storage and retrieval. In Hebrew, a single root can generate a wide array of related concepts, allowing for deep semantic density. This made it the perfect “foundation layer” for the intricate laws and genealogies that form the bedrock of the text.

Aramaic: The Regional Protocol

While Hebrew was the language of the religious elite and the Israelites, Aramaic served as the lingua franca—the regional protocol—of the Near East for centuries. Certain portions of the books of Daniel and Ezra were written in Aramaic. In the context of technology, Aramaic acted as a bridge between different cultural operating systems, facilitating trade and diplomacy across the Babylonian and Persian Empires. It was the language of connectivity, used when the message needed to reach a broader, more diverse administrative network.

Koine Greek: The Universal Interface

The New Testament was written almost exclusively in Koine Greek. If Hebrew was the language of the “backend” architecture, Koine Greek was the “frontend” user interface. Following the conquests of Alexander the Great, Greek became the universal standard for communication. It was precise, expressive, and highly scalable. Because Koine Greek was the common tongue of the Mediterranean world, it allowed the “data” of the New Testament to be uploaded and synchronized across the Roman Empire’s vast infrastructure with unprecedented speed.

The Hardware of Antiquity: Papyri, Parchment, and the Evolution of the Codex

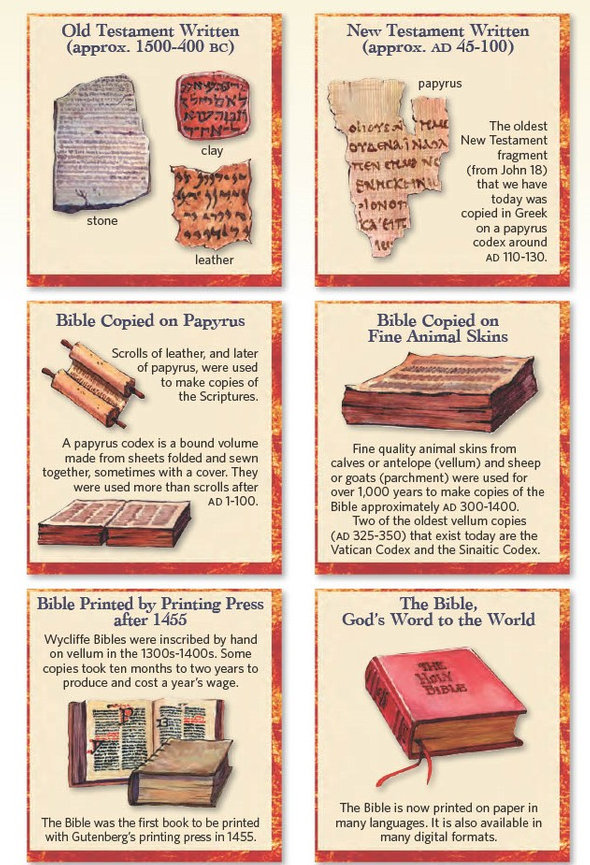

When we ask what the Bible was written in, we must also consider the physical hardware. The transition from oral tradition to physical storage was a significant technological leap that required durable materials and innovative formatting.

From Scrolls to Codices: The First Data Compression

Initially, the biblical texts were stored on scrolls. While effective for storage, scrolls offered “serial access” only; if you wanted to read a passage at the end of the book of Isaiah, you had to manually unroll the entire document.

The invention of the codex (the precursor to the modern book) was a revolutionary shift in data management. The codex allowed for “random access.” By binding sheets of papyrus or parchment together between covers, readers could jump directly to a specific page or section. This significantly improved the “user experience” and made the Bible a much more portable and searchable database.

Chemical Composition and Material Longevity

The “hardware” used—papyrus (made from reeds) and parchment (made from processed animal skins)—had to be resilient enough to survive centuries. Modern digital security often focuses on bit-rot and server degradation, but ancient scribes faced the physical degradation of their storage media. The ink itself was a technological feat, often made from carbon (soot) mixed with gum arabic, ensuring that the “code” remained legible despite exposure to humidity and time.

Modern Tech Tools: How AI and Imaging Are Decoding the “Unreadable”

Today, the question of “what the Bible was written in” is being answered by cutting-edge software and AI tools. We are no longer limited to what the human eye can see on a faded scrap of sheepskin.

Multispectral Imaging and Virtual Unrolling

Many ancient biblical fragments are too fragile to be physically opened. Technologists are now using multispectral imaging (MSI) to recover text that has faded into invisibility. By capturing images at different wavelengths of light—including infrared and ultraviolet—software can enhance the contrast between the ink and the material, bringing “deleted” data back to life.

Furthermore, “virtual unrolling” technology uses micro-CT scans to see inside charred or fused scrolls (such as those found at En-Gedi). AI algorithms then analyze the 3D data to identify layers of parchment and map the ink, digitally flattening the scroll so it can be read on a computer screen without ever touching the physical artifact.

Machine Learning for Textual Criticism

Textual criticism—the science of comparing different manuscript copies to find the original reading—is being transformed by machine learning. AI tools can now scan thousands of digitized manuscripts, identifying patterns of scribal errors and categorizing “families” of texts. This algorithmic approach allows scholars to reconstruct the original “source code” of the Bible with a level of precision that was impossible just twenty years ago.

Digital Security and the “Blockchain” of Manuscript History

The transmission of the Bible throughout history shares remarkable similarities with modern distributed ledger technology, such as blockchain.

Decentralized Preservation

One of the reasons we have such high confidence in the original languages of the Bible is the decentralized nature of its preservation. There was no single “central server” that held all the data. Instead, thousands of copies were distributed across different geographical nodes—from Egypt to Rome to Byzantium.

If a manuscript was corrupted or destroyed in one location, the data survived in dozens of others. This “distributed network” made it virtually impossible to alter the core text without the discrepancy being flagged by other nodes in the network. The sheer volume of manuscripts (over 5,800 in Greek alone) acts as a cryptographic proof of the text’s integrity.

Verifying Authenticity in a Digital Age

In the modern era, digital security protocols are used to protect the integrity of digitized biblical manuscripts. Digital watermarking and hashing are employed to ensure that high-resolution images of the Dead Sea Scrolls or the Codex Sinaiticus are not tampered with. As we move further into the age of Deepfakes and AI-generated content, these digital security measures are essential for maintaining the “provenance” of the world’s most influential text.

The Future of Biblical Linguistics: Real-Time Translation and Neural Networks

The study of what the Bible was written in is not a static field; it is rapidly evolving through the use of neural networks and advanced translation software.

Neural Machine Translation (NMT)

Traditional translation relied on word-for-word replacement, which often failed to capture the nuances of ancient Hebrew or Greek syntax. Modern NMT models, trained on massive corpora of ancient texts, can now understand the context and “intent” of a passage. This is leading to a new generation of translations that are not only more accurate but also more attuned to the specific linguistic “flavor” of the original languages.

The Democratization of Ancient Languages

In the past, understanding what the Bible was written in required years of specialized academic training. Today, apps and software suites like Logos Bible Software or Accordance have democratized this information. Anyone with a smartphone can access interlinear bibles, morphological databases, and Greek/Hebrew lexicons. This tech-driven accessibility ensures that the “source code” of the Bible remains open and transparent to a global audience.

By viewing the Bible through the lens of technology, we see that it is more than just a collection of ancient words. It is a masterclass in information architecture, durable storage, and decentralized security. From the root-based logic of Hebrew to the AI-driven recovery of charred scrolls, the story of what the Bible was written in is a testament to the enduring power of human communication and technological innovation.

aViewFromTheCave is a participant in the Amazon Services LLC Associates Program, an affiliate advertising program designed to provide a means for sites to earn advertising fees by advertising and linking to Amazon.com. Amazon, the Amazon logo, AmazonSupply, and the AmazonSupply logo are trademarks of Amazon.com, Inc. or its affiliates. As an Amazon Associate we earn affiliate commissions from qualifying purchases.