Applying artificial intelligence (AI) to diagnostic imaging promises to change how respiratory illnesses are identified. Pneumonia remains one of the most significant challenges for global healthcare systems and a leading cause of hospitalization and mortality. However, as medical software developers and data scientists work to automate the interpretation of chest X-rays (CXR) and CT scans, they keep running into a persistent technical hurdle: the “mimic” problem. For software, identifying what can be mistaken for pneumonia is not just a clinical question; it is also a problem of pattern recognition, algorithmic bias, and signal processing.

The Digital Stethoscope: How AI Interprets Pulmonary Pathologies

The transition from manual radiological review to computer-aided diagnosis (CAD) has changed how lung disease is assessed. Modern diagnostic tools rely heavily on deep learning (DL), specifically convolutional neural networks (CNNs), to identify opacities in lung tissue that suggest infection. However, the software does not “see” a virus or a bacterium; it interprets variations in pixel intensity and spatial distribution.

The Role of Convolutional Neural Networks (CNNs)

CNNs are the backbone of modern medical imaging software. These algorithms are designed to detect features—edges, textures, and shapes—within a digital image. When a software suite is trained to identify pneumonia, it looks for “consolidation,” which appears as white or hazy patches on an otherwise dark lung field. The technological challenge arises because many different physical conditions produce nearly identical pixel signatures. For a machine-learning model, the distinction between a bacterial infection and a non-infectious structural change in the lung is often a matter of subtle statistical probability rather than definitive visual evidence.
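
As a rough illustration of the feature detection described above, here is a minimal sketch of the sliding-window operation at the core of a convolutional layer, written in plain Python. The toy image and the hand-picked vertical-edge kernel are assumptions made for this example; a real CNN learns its kernels from data and stacks many such layers.

```python
def convolve2d(image, kernel):
    """Valid-mode 2D cross-correlation, the operation CNN layers apply."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    output = []
    for r in range(out_h):
        row = []
        for c in range(out_w):
            acc = 0
            for i in range(kh):
                for j in range(kw):
                    acc += image[r + i][c + j] * kernel[i][j]
            row.append(acc)
        output.append(row)
    return output


# Toy 4x6 "lung field": dark pixels (0) on the left, a bright patch (9) on the right.
toy_image = [
    [0, 0, 0, 9, 9, 9],
    [0, 0, 0, 9, 9, 9],
    [0, 0, 0, 9, 9, 9],
    [0, 0, 0, 9, 9, 9],
]

# Hand-picked kernel that responds to dark-to-bright transitions (illustrative only).
vertical_edge = [
    [-1, 0, 1],
    [-1, 0, 1],
    [-1, 0, 1],
]

feature_map = convolve2d(toy_image, vertical_edge)
print(feature_map)  # → [[0, 27, 27, 0], [0, 27, 27, 0]]
```

The filter responds strongly wherever dark tissue meets a bright patch, whatever the cause of that patch. That is the mimic problem in miniature: the response carries no information about whether the brightness comes from infection, fluid, or collapse.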

Data Heterogeneity and the “Black Box” Problem

One of the most significant issues in tech-driven diagnostics is the “Black Box” nature of AI. When a software tool flags a chest X-ray as “positive for pneumonia,” it is often difficult for developers to determine exactly which features led to that conclusion. If the training data contains confounding signals, such as artifacts specific to an X-ray machine manufacturer or variations in patient positioning, the AI may learn to associate these technical quirks with the disease itself. The result can be a much higher error rate when the software is deployed across hospital networks that use different hardware.
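
One common way to probe a black-box model is occlusion sensitivity: hide one patch of the image at a time and record how much the output score drops. The sketch below is a toy version under stated assumptions. `shortcut_model` is a hypothetical stand-in for a trained network that has latched onto the top-left corner of the image (where a scanner tag might sit) rather than the lung field.

```python
def occlusion_map(image, score_fn, patch=2, fill=0):
    """Return, per patch, how much the score drops when that patch is hidden."""
    base = score_fn(image)
    height, width = len(image), len(image[0])
    drops = []
    for r in range(0, height, patch):
        row = []
        for c in range(0, width, patch):
            occluded = [list(line) for line in image]  # copy so the input is untouched
            for i in range(r, min(r + patch, height)):
                for j in range(c, min(c + patch, width)):
                    occluded[i][j] = fill
            row.append(base - score_fn(occluded))
        drops.append(row)
    return drops


def shortcut_model(image):
    """Hypothetical model that only looks at the top-left 2x2 corner."""
    return (image[0][0] + image[0][1] + image[1][0] + image[1][1]) / 4


toy_image = [
    [1, 1, 0, 0],  # bright corner: stands in for a burned-in scanner tag
    [1, 1, 0, 0],
    [0, 0, 1, 1],  # bright region: stands in for a real opacity
    [0, 0, 1, 1],
]

print(occlusion_map(toy_image, shortcut_model))  # → [[1.0, 0.0], [0.0, 0.0]]
```

Hiding the real opacity changes nothing, while hiding the corner wipes out the score. A map like this gives developers a first hint that the model is keying on a technical artifact instead of pathology.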

Common Mimics: Why Algorithms Struggle to Differentiate

To understand what can be mistaken for pneumonia in a technological context, we must examine the specific pathologies that produce similar visual data. For software to be effective, it must be trained to distinguish the “infiltrates” of pneumonia from several other conditions that occupy the same region of “feature space” in an image.

Pulmonary Edema vs. Pneumonia

Pulmonary edema, often caused by congestive heart failure, involves fluid buildup in the lungs. On a digital radiograph, this fluid appears as hazy opacities that can look strikingly similar to the consolidation found in pneumonia. From a software perspective, the “ground truth” labels used to train the AI are often the source of confusion. If a model is trained on a dataset where edema cases were mislabeled or where the clinical context was missing, the AI will likely generate a false positive for pneumonia. Advanced diagnostic apps are now attempting to solve this by incorporating “temporal analysis”—comparing a current scan with previous images to see how quickly the fluid has accumulated.
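
The temporal comparison mentioned above can be sketched as a simple rate calculation. This is a minimal illustration that assumes an upstream step (not shown, and hypothetical here) has already reduced each scan to an `opacity_fraction`, the share of the lung field judged opaque. How to interpret the resulting rate is a clinical question this sketch does not attempt to answer.

```python
from datetime import datetime


def opacity_change_per_day(prior, current):
    """Rate of change in opacity fraction between two scans.

    Each argument is a (timestamp, opacity_fraction) pair.
    """
    (t0, f0), (t1, f1) = prior, current
    days = (t1 - t0).total_seconds() / 86400
    if days <= 0:
        raise ValueError("current scan must be later than prior scan")
    return (f1 - f0) / days


# Made-up example values: two scans taken 12 hours apart.
prior_scan = (datetime(2024, 1, 1, 8, 0), 0.10)
current_scan = (datetime(2024, 1, 1, 20, 0), 0.30)

rate = opacity_change_per_day(prior_scan, current_scan)
print(f"{rate:.2f} opacity fraction per day")  # prints "0.40 opacity fraction per day"
```

A rate like this would be one extra input feature alongside the image itself, not a diagnosis on its own.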

Atelectasis and the “Grey Area” of Lung Collapse

Atelectasis occurs when a portion of the lung collapses or fails to inflate properly. This results in an area of increased density that appears white on an X-ray, mimicking the focal consolidation of pneumonia. For a developer building a CAD tool, atelectasis represents an “edge case.” The software must be sophisticated enough to detect volume loss and shifts in the surrounding lung structures (like the diaphragm or mediastinum) to distinguish collapse from infection. Without these secondary spatial markers, the algorithm lacks the “situational awareness” required for an accurate diagnosis.

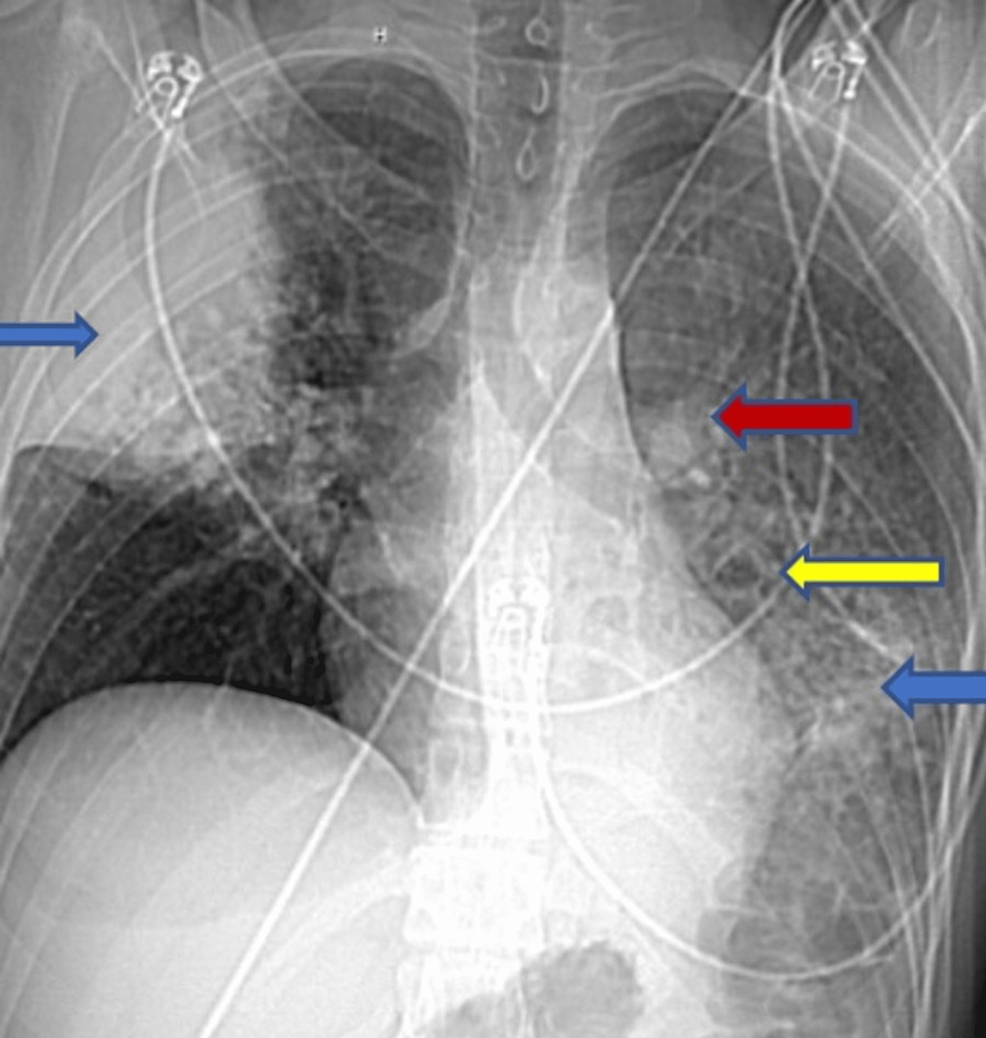

Pleural Effusion: When Fluid Masks Inflammation

Pleural effusion—the buildup of fluid in the space between the lung and the chest wall—is another common mimic. In a 2D X-ray, this fluid can overlap with the lung tissue, creating a shadow that masks the underlying structures. High-end AI tools now use 3D reconstruction from CT scans to “segment” the fluid away from the lung parenchyma. This technological layering allows the software to see through the effusion to determine if an actual infection exists beneath it, a process that requires immense computational power and sophisticated segmentation algorithms.

Technical Limitations and the Risk of False Positives

The accuracy of diagnostic software is only as good as the infrastructure it runs on and the data it consumes. When we ask what can be mistaken for pneumonia, we must look at the technical constraints of the current hardware and the socio-technical biases inherent in software development.

Image Resolution and Hardware Constraints

The signal-to-noise ratio is a critical factor in digital diagnostics. Low-resolution portable X-ray machines, often used in intensive care units, produce images with significant noise. In these lower-quality digital files, normal vascular markings or even the patient’s overlying skin folds can be misinterpreted by an algorithm as the “ground-glass opacities” associated with viral pneumonia (such as COVID-19 pneumonia). Tech companies are currently investing in “image enhancement AI” that preprocesses these files to remove noise before the diagnostic algorithm ever sees them, but this adds another layer of complexity and a potential point of failure.
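
To make the preprocessing idea concrete, here is a minimal sketch that uses a classical 3x3 median filter as a stand-in for the learned “image enhancement AI” described above. It is a different and much simpler technique, chosen only because it fits in a few lines. The toy image is made up.

```python
from statistics import median


def median_filter3(image):
    """Replace each interior pixel with the median of its 3x3 neighbourhood.

    Border pixels are copied unchanged to keep the sketch short.
    """
    height, width = len(image), len(image[0])
    output = [list(row) for row in image]
    for r in range(1, height - 1):
        for c in range(1, width - 1):
            window = [image[r + i][c + j] for i in (-1, 0, 1) for j in (-1, 0, 1)]
            output[r][c] = median(window)
    return output


# Uniform 5x5 patch with a single noisy spike in the middle.
noisy = [[10] * 5 for _ in range(5)]
noisy[2][2] = 255

cleaned = median_filter3(noisy)
print(cleaned[2][2])  # → 10
```

The same smoothing that removes the spike could also erase a small but genuine finding, which is one way a preprocessing stage becomes the extra point of failure mentioned above.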

Bias in Training Datasets

A persistent problem in the AI industry is overfitting to unrepresentative training data. If a diagnostic tool is trained primarily on data from a specific demographic or a specific type of high-end imaging hardware, it may develop a narrow definition of what pneumonia looks like. When this software is used in a different environment, for instance a rural clinic with older digital sensors, the discrepancies in image quality can lead the AI to report patterns of infection where none exist. This is a variant of the classic “garbage in, garbage out” problem: the training data may be clean, but it does not represent the images the tool will actually encounter, a weakness that affects many AI-driven medical tools today.
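
One practical check for this kind of bias is to break evaluation results down by deployment site instead of reporting a single pooled figure. The sketch below computes a per-site false positive rate. The site names and records are invented for illustration and do not come from any real evaluation.

```python
from collections import defaultdict


def false_positive_rate_by_site(records):
    """records: iterable of (site, has_pneumonia, predicted_pneumonia) tuples."""
    false_positives = defaultdict(int)
    negatives = defaultdict(int)
    for site, truth, predicted in records:
        if not truth:  # only truly negative cases can yield false positives
            negatives[site] += 1
            if predicted:
                false_positives[site] += 1
    return {site: false_positives[site] / count for site, count in negatives.items()}


# Invented records: (site, ground truth, model prediction).
records = [
    ("urban_hospital", False, False),
    ("urban_hospital", False, False),
    ("urban_hospital", False, True),
    ("urban_hospital", False, False),
    ("urban_hospital", True, True),
    ("rural_clinic", False, True),
    ("rural_clinic", False, True),
    ("rural_clinic", False, False),
    ("rural_clinic", False, True),
    ("rural_clinic", True, True),
]

print(false_positive_rate_by_site(records))
# → {'urban_hospital': 0.25, 'rural_clinic': 0.75}
```

A pooled false positive rate of 0.5 would hide the fact that the tool behaves very differently on the two sets of hardware.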

The Future of Precision Diagnostics: Multi-Modal AI Integration

To move beyond the limitations of simple image recognition, the tech industry is shifting toward “multi-modal” diagnostic tools. This involves moving away from looking at a chest X-ray in isolation and instead building a comprehensive digital profile of the patient.

Combining Imaging with Clinical Electronic Health Records (EHR)

The most promising software solutions currently under development do not just look at pixels; they ingest data from the patient’s Electronic Health Record (EHR). By integrating lab results (such as white blood cell counts), vital signs (temperature and oxygen saturation), and medical history into the neural network, the AI can weigh the visual evidence of an X-ray against the clinical reality. If the image shows a shadow that could be pneumonia, but the patient has no fever and a history of heart failure, the multi-modal AI can deprioritize a pneumonia diagnosis in favor of pulmonary edema. This “context-aware” computing is the frontier of medical software.
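
The weighing of image evidence against clinical context can be sketched as a simple late-fusion score: a logistic function over the image model's output plus a few EHR-derived flags. Everything numeric here is an assumption for illustration. The feature names, weights, and bias are hand-set and not clinically validated; a real system would learn them from labeled data.

```python
import math

# Illustrative, hand-set weights (assumptions, not learned or validated values).
WEIGHTS = {
    "image_score": 3.0,             # output of the imaging model, in [0, 1]
    "fever": 1.5,                   # 1 if febrile, else 0
    "elevated_wbc": 1.0,            # 1 if white blood cell count is elevated, else 0
    "heart_failure_history": -2.0,  # 1 if documented history, else 0
}
BIAS = -2.5


def pneumonia_probability(features):
    """Combine the image score with clinical flags through a logistic function."""
    z = BIAS + sum(WEIGHTS[name] * features[name] for name in WEIGHTS)
    return 1 / (1 + math.exp(-z))


# Two hypothetical patients with the same image score but different context.
febrile_patient = {"image_score": 0.8, "fever": 1, "elevated_wbc": 1, "heart_failure_history": 0}
cardiac_patient = {"image_score": 0.8, "fever": 0, "elevated_wbc": 0, "heart_failure_history": 1}

p_febrile = pneumonia_probability(febrile_patient)
p_cardiac = pneumonia_probability(cardiac_patient)
print(p_febrile > 0.5, p_cardiac < 0.5)  # → True True
```

With identical pixels, the clinical context alone moves the two patients to opposite sides of the 0.5 mark, which is the behaviour the paragraph above describes.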

Real-Time Monitoring and Predictive Analytics

Beyond static diagnosis, the next generation of respiratory tech focuses on “predictive analytics.” Wearable sensors and smart beds in hospitals are now being integrated with AI dashboards to monitor patient breathing patterns and oxygenation in real time. By applying machine learning to these continuous data streams, software can identify early signs of lung distress before they become visible on a radiograph. This proactive approach aims to reduce the “diagnostic lag” that often leads to complications, so that what might be mistaken for a minor cough is flagged as a developing pathology before it requires emergency intervention.
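
As a simplified picture of monitoring a continuous data stream, the sketch below flags oxygen saturation readings that fall well below a rolling baseline. It is a plain threshold rule, not the machine learning the paragraph above refers to. The window size, the drop threshold, and the readings are assumptions chosen for illustration and carry no clinical meaning.

```python
from collections import deque


def flag_drops(readings, window=5, drop=3.0):
    """Return indices of readings at least `drop` below the mean of the previous `window` readings."""
    recent = deque(maxlen=window)
    flagged = []
    for index, value in enumerate(readings):
        if len(recent) == window and sum(recent) / window - value >= drop:
            flagged.append(index)
        recent.append(value)  # the deque discards the oldest reading automatically
    return flagged


# Made-up oxygen saturation stream (percent), one reading per interval.
spo2_stream = [97, 98, 97, 97, 98, 97, 93, 92]

print(flag_drops(spo2_stream))  # → [6, 7]
```

A learned model would replace the fixed threshold with patterns drawn from many patients, but the streaming structure (keep a short history, compare each new reading against it) stays the same.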

In conclusion, the question of what can be mistaken for pneumonia in the digital age is as much a technical challenge as it is a medical one. As we refine our AI models, improve our data labeling processes, and move toward integrated, multi-modal systems, the “mimics” that once baffled simple algorithms are becoming easier to tell apart. The future of healthcare technology lies in this convergence, where the precision of software meets the complexity of human biology, with the aim of reducing errors and basing every diagnosis on the full spectrum of available digital evidence.
