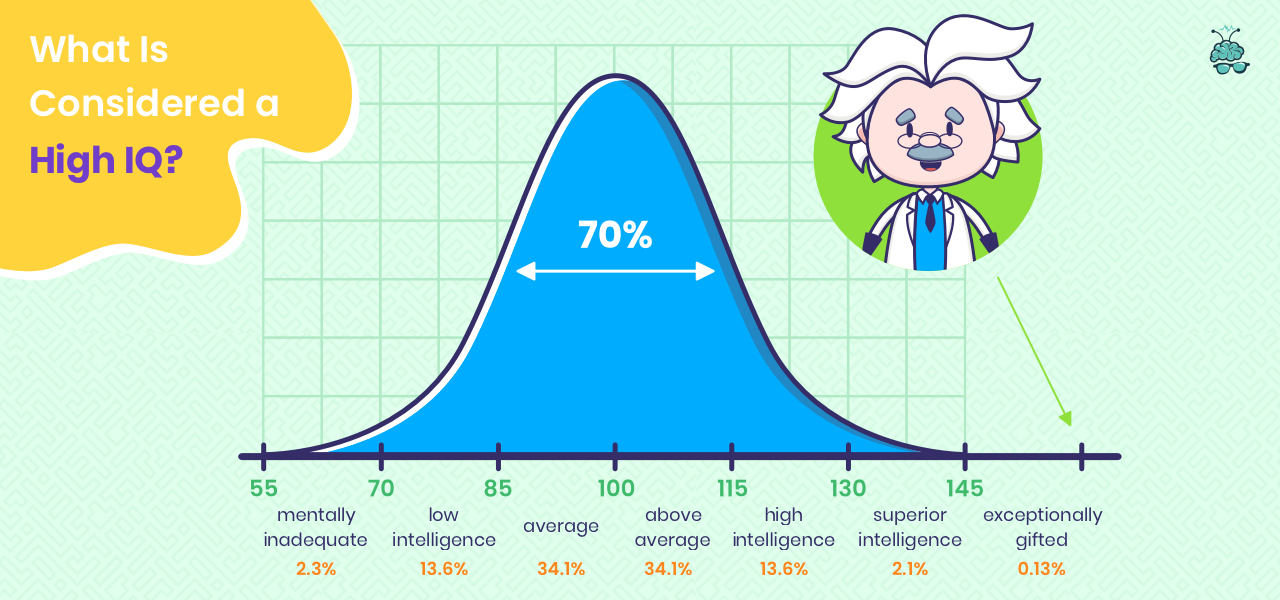

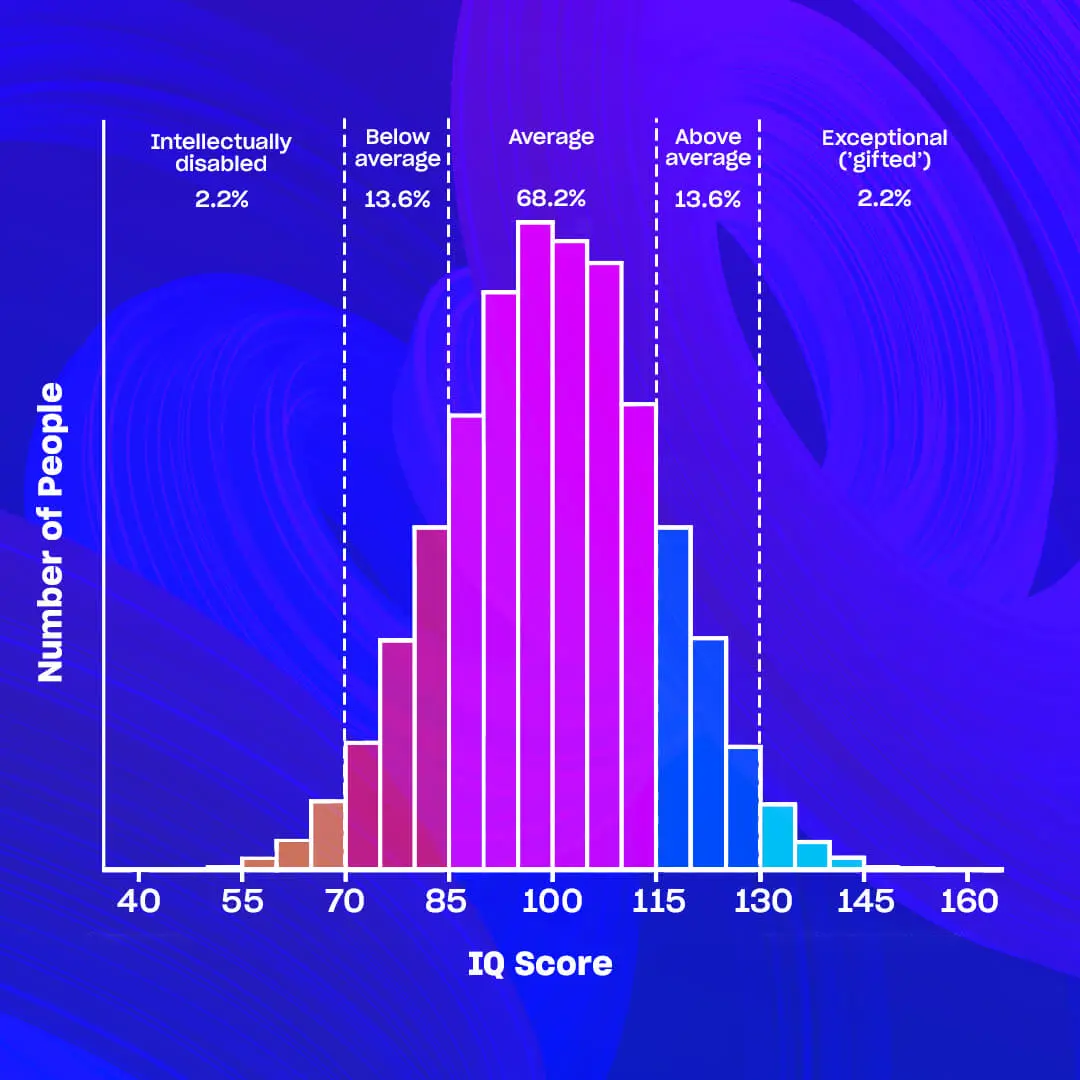

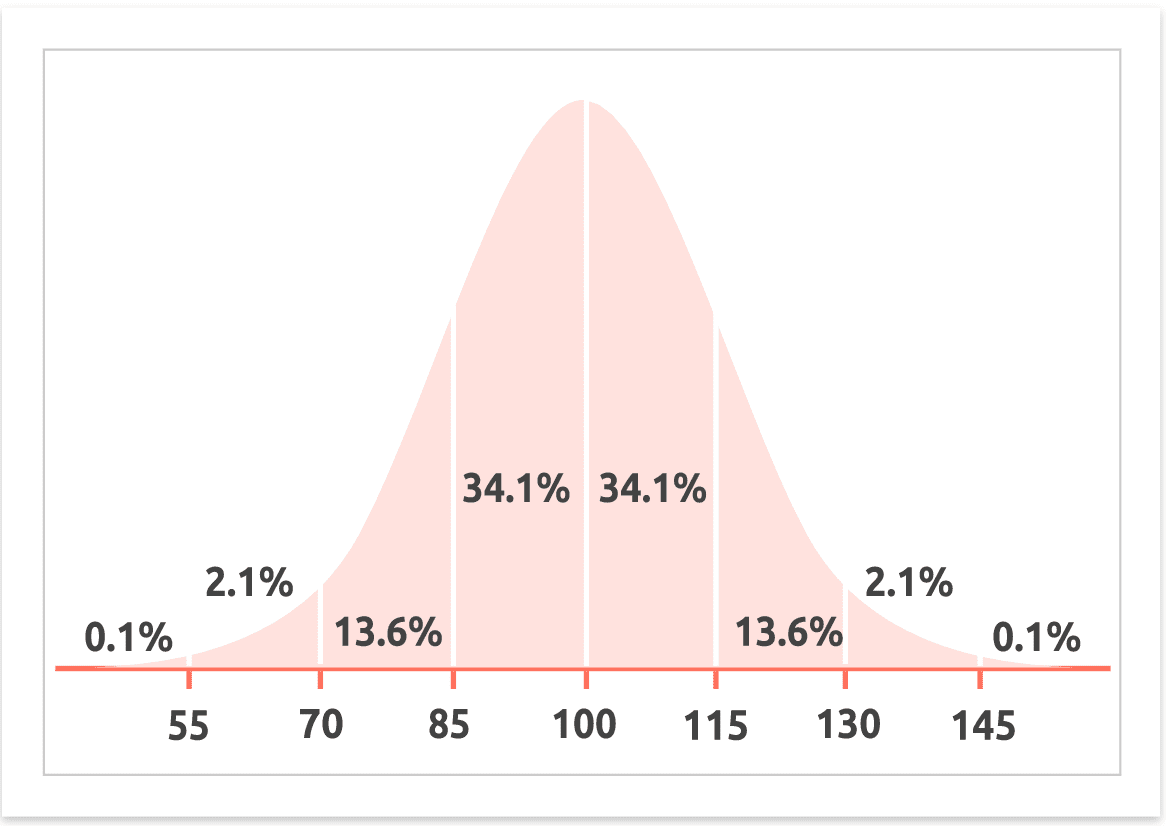

In the landscape of psychometrics, the figure “100” serves as the definitive anchor. By design, the average IQ (Intelligence Quotient) is standardized to 100: raw test results are rescaled so that the mean score of the norming population sits at 100, conventionally with a standard deviation of 15. However, as we navigate the third decade of the 21st century, the conversation surrounding “average intelligence” is no longer confined to psychology textbooks. It has migrated into the realms of high-speed computing, neural networks, and digital transformation.

In an era where software can out-calculate human mathematicians and AI tools can draft complex legal briefs in seconds, the definition of an “average” cognitive benchmark is undergoing a massive shift. To understand what an average IQ means today, we must look through the lens of technology—specifically how we measure it, how we augment it, and how artificial intelligence is challenging our monopoly on “intelligence” itself.

The Technological Evolution of the IQ Metric

For over a century, the measurement of human intelligence was a manual, often laborious process involving paper booklets, stopwatches, and physical blocks. Today, the “average” is no longer a static number derived from a one-time exam; it is a data point generated through sophisticated digital psychometrics.

From Paper Tests to Digital Psychometrics

The transition from analog to digital testing has changed how the average IQ is measured. Modern testing software uses Computerized Adaptive Testing (CAT) algorithms. Unlike traditional linear tests, where every participant answers the same questions, CAT systems adjust the difficulty of questions in real time based on the user’s previous answers.

If a user answers several questions correctly, the software serves a more difficult item; if they struggle, the algorithm provides a simpler one. This approach allows a more precise estimate of each test-taker’s ability level while reducing the time required for assessment. Furthermore, digital platforms can collect metadata, such as response latency (how long a person pauses before answering), which provides insight into cognitive processing speed that paper tests could not easily capture.
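The adaptive loop described above can be sketched in a few lines. This is a simplified staircase-style illustration, not the maximum-likelihood item response theory estimation that production CAT systems typically use. The item bank, the `answer_fn` callback, and the step sizes are all hypothetical choices made for the example.

```python
def run_adaptive_test(item_difficulties, answer_fn, num_items=10):
    """Administer items adaptively and return (ability_estimate, administered).

    item_difficulties: hypothetical item bank, one difficulty value per item.
    answer_fn: callable that takes a difficulty and returns True if the
               test-taker answers that item correctly.
    """
    ability = 0.0   # start in the middle of the (arbitrary) difficulty scale
    step = 1.0      # how far the estimate moves after each answer
    remaining = list(item_difficulties)
    administered = []

    for _ in range(min(num_items, len(remaining))):
        # Pick the unused item whose difficulty is closest to the current estimate.
        item = min(remaining, key=lambda d: abs(d - ability))
        remaining.remove(item)

        correct = answer_fn(item)
        administered.append((item, correct))

        # A correct answer raises the estimate (harder next item);
        # a wrong answer lowers it (easier next item).
        ability += step if correct else -step
        step = max(step * 0.7, 0.1)  # shrink the step so the estimate settles

    return ability, administered


# Simulated test-taker who solves every item at or below difficulty 1.2.
bank = [x * 0.5 for x in range(-6, 7)]  # difficulties from -3.0 to 3.0
estimate, log = run_adaptive_test(bank, lambda d: d <= 1.2, num_items=8)
print(round(estimate, 2), log[:3])
```

Because each item is chosen near the current estimate, the test spends most of its questions around the level where the person starts to fail, which is why an adaptive test can be shorter than a fixed-form one.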

How Algorithms Are Eliminating Human Bias in Testing

One of the historical criticisms of IQ testing has been cultural and socioeconomic bias. Technology is playing a pivotal role in refining the average by creating “culture-fair” digital assessments. Using non-verbal, pattern-recognition software based on Raven’s Progressive Matrices, tech developers are creating tools that bypass language barriers and educational disparities.

By leveraging large datasets, psychometricians can now norm scores across far broader populations than paper-era samples allowed. Well-built norming procedures help the “100” benchmark reflect a broad, global population rather than a narrow demographic, making the data more actionable for international organizations and tech-driven recruitment agencies.
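As a rough illustration of what norming means in practice, the sketch below rescales a raw test score against a reference sample so that the sample mean maps to 100. It assumes the conventional standard deviation of 15 and a simple linear rescaling; the function name and the sample data are made up for the example, and real norming procedures are considerably more involved.

```python
from statistics import mean, pstdev


def to_iq_scale(raw_score, norm_sample, target_mean=100.0, target_sd=15.0):
    """Rescale a raw score so the norming sample has mean 100 and SD 15."""
    sample_mean = mean(norm_sample)
    sample_sd = pstdev(norm_sample)
    if sample_sd == 0:
        raise ValueError("norming sample has no variance")
    # z is the distance from the sample mean in standard deviations.
    z = (raw_score - sample_mean) / sample_sd
    return target_mean + target_sd * z


# Hypothetical raw scores from a reference sample.
reference = [10, 20, 30, 40, 50]
print(to_iq_scale(30, reference))  # a raw score at the sample mean maps to 100
```

The key point is that an IQ score is relative: the same raw score produces a different IQ if the reference sample changes.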

Benchmarking Machine Intelligence Against Human Averages

As we discuss the average IQ of a person, we inevitably reach a point of comparison: How does human intelligence stack up against the silicon-based intelligence we’ve created? This comparison is at the heart of current technology trends, as researchers attempt to quantify the “IQ” of Large Language Models (LLMs).

The Turing Test vs. IQ: Measuring AI’s Cognitive Power

Historically, the Turing Test was the gold standard for AI intelligence—could a machine trick a human into thinking it was also human? However, as AI has evolved, researchers have begun administering standard human IQ tests to models like GPT-4, Claude, and Gemini.

Some reported evaluations suggest that recent generative AI models have surpassed the “average” human IQ of 100 in specific domains, such as verbal reasoning and pattern recognition, with some results equivalent to an IQ of 120 or higher. This shift is significant because it recontextualizes what we consider “average.” If a tool on your smartphone possesses a “verbal IQ” higher than about 90% of the population, the value of the average human IQ shifts from “computational ability” toward “creative and emotional synthesis.”
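The link between an IQ of 120 and “higher than 90% of the population” can be checked with a short calculation. The sketch below assumes the conventional scale (mean 100, standard deviation 15) and normally distributed scores; the function name is illustrative.

```python
from statistics import NormalDist

# Conventional IQ scale: mean 100, standard deviation 15 (an assumption here).
IQ_SCALE = NormalDist(mu=100, sigma=15)


def iq_percentile(iq):
    """Return the percentage of the population scoring at or below `iq`."""
    return IQ_SCALE.cdf(iq) * 100


print(round(iq_percentile(100), 1))  # the average score sits at the 50th percentile
print(round(iq_percentile(120), 1))  # roughly the 91st percentile
```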

GPT-4 and the Quest for Artificial General Intelligence (AGI)

The tech industry is currently obsessed with the transition from Narrow AI to Artificial General Intelligence (AGI)—the point at which a machine can perform any intellectual task a human can. When we ask “what’s the average IQ of a person,” we are setting the baseline for what AGI must eventually beat.

Current AI tools are exceptional at synthesis and retrieval but still struggle with the “common sense” and spatial reasoning that the average human performs effortlessly. Developers use human IQ-style benchmarks to evaluate, and in some cases help tune, their models. By understanding the failure points of a person with an average IQ, they can aim to steer neural networks away from human-like cognitive biases, such as the gambler’s fallacy or confirmation bias, with the goal of a form of intelligence that is “average” in breadth but “superior” in logic.

Technology as a Cognitive Multiplier

In the modern tech ecosystem, the “average IQ” is increasingly becoming a measure of how well a person can use tools to augment their natural abilities. This is the concept of “Extended Cognition”—the idea that our gadgets and software are not just tools, but functional extensions of our minds.

AI Tools and the “Augmented IQ” Phenomenon

An individual with an average IQ of 100, when equipped with high-level AI tools, can often outperform a “genius” level individual (IQ 140+) who is working without technology. This “Augmented IQ” is a trend that is reshaping the workforce. Software like Notion for organization, ChatGPT for drafting, and specialized data analysis tools allow the average person to offload “lower-order” cognitive tasks (like memorization and basic calculation) to focus on “higher-order” strategy.

This creates a paradox in technology: as our tools get smarter, the necessity for a high “raw” IQ may decrease, while the necessity for “Digital Literacy” or “Prompt Engineering” increases. The average person today effectively operates at a much higher cognitive output level than an average person fifty years ago, thanks to the instant availability of the world’s collective knowledge via search engines and AI.

Productivity Software and the Modern Information Load

The average human brain is currently being tested by the sheer volume of data processed daily. Tech developers are responding to this by creating “Second Brain” software. Apps like Obsidian or Roam Research are designed to mimic the associative nature of the human brain, allowing users to map out thoughts in a digital web.

These tools are specifically designed to support the average cognitive load, preventing “burnout” and “information fatigue.” By externalizing our memory and categorization processes, technology is effectively raising the floor of what an average person can achieve in a professional environment, regardless of their standardized IQ score.

The Future of Cognitive Monitoring and Digital Security

As intelligence measurement moves further into the digital realm, it intersects with critical issues of digital security and ethical AI usage. The data derived from measuring a person’s intelligence is some of the most sensitive personal information an individual can possess.

Biometric Intelligence and Data Privacy

We are approaching a future where “IQ” might be estimated through biometric markers—eye-tracking software that measures how quickly you process information on a screen, or wearable tech that monitors neurological responses to stress. While this offers incredible insights for personalized learning and software UX design, it poses significant security risks.

If a person’s cognitive profile is stored on a cloud server, it becomes a target for hackers. Unlike a password, you cannot change your “cognitive fingerprint.” Tech companies should therefore prioritize “differential privacy” and end-to-end encryption for any platform that engages in cognitive testing or psychological profiling, so that the “average” person is protected from “brain-leaking” or cognitive discrimination.
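To make “differential privacy” concrete, here is a minimal sketch of the Laplace mechanism applied to an average test score. It assumes scores are clipped to a known range and that the number of participants is public. The function names, score range, and epsilon value are illustrative assumptions, not a description of any particular platform.

```python
import random


def laplace_noise(scale, rng):
    """Sample Laplace(0, scale) noise as the difference of two exponentials."""
    return rng.expovariate(1 / scale) - rng.expovariate(1 / scale)


def private_mean(scores, lower, upper, epsilon, rng=None):
    """Release the mean of `scores` with epsilon-differential privacy."""
    if not scores:
        raise ValueError("scores must not be empty")
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    rng = rng or random.Random()

    # Clip each score so one person can only move the mean by a bounded amount.
    clipped = [min(max(s, lower), upper) for s in scores]
    true_mean = sum(clipped) / len(clipped)

    # Changing one person's score shifts the mean by at most this much.
    sensitivity = (upper - lower) / len(clipped)
    return true_mean + laplace_noise(sensitivity / epsilon, rng)


# Hypothetical cohort of 1,000 scores, clipped to the range 40 to 160.
cohort = [100] * 1000
print(private_mean(cohort, lower=40, upper=160, epsilon=1.0, rng=random.Random(0)))
```

A smaller epsilon adds more noise and gives stronger privacy. With a large cohort the published average stays close to the true value, while any single person’s score has only a bounded influence on what is released.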

The Ethics of AI-Driven Talent Acquisition

Many tech startups are now using “gamified” cognitive assessments to replace traditional resumes. These apps use software to measure a candidate’s problem-solving speed and memory—essentially a digital IQ test. While this can lead to more meritocratic hiring, the reliance on these algorithms raises questions about “algorithmic transparency.”

Is the software penalizing someone for having an “average” IQ even if they possess “exceptional” soft skills? As technology continues to define and measure human intelligence, the tech industry has a responsibility to ensure that these tools are used to empower the average person rather than create a new digital divide.

In conclusion, while the average IQ of a person remains numerically fixed at 100, the context of that number is being radically rewritten by technology. From the way we measure intelligence using adaptive algorithms to the way we enhance it using AI tools, technology has turned IQ from a static trait into a dynamic, augmented capability. In the coming years, the most successful individuals will not necessarily be those with the highest raw IQ, but those who best understand how to interface their human intelligence with the growing power of the digital world.
