The human fascination with the passage of time is as old as civilization itself. For most of history, however, envisioning our future selves was left to the imagination or to a general resemblance to our ancestors. Today, the question “What will I look like in the future?” is no longer purely a matter of guesswork. It sits at the intersection of generative artificial intelligence, biometric data analysis, and predictive modeling.

In the tech sector, the ability to project physical aging is moving beyond viral smartphone filters and into the realms of high-level forensics, personalized healthcare, and the burgeoning metaverse. By leveraging massive datasets and sophisticated neural networks, technology is providing a window into our biological and digital futures.

The Evolution of Generative AI in Facial Aging

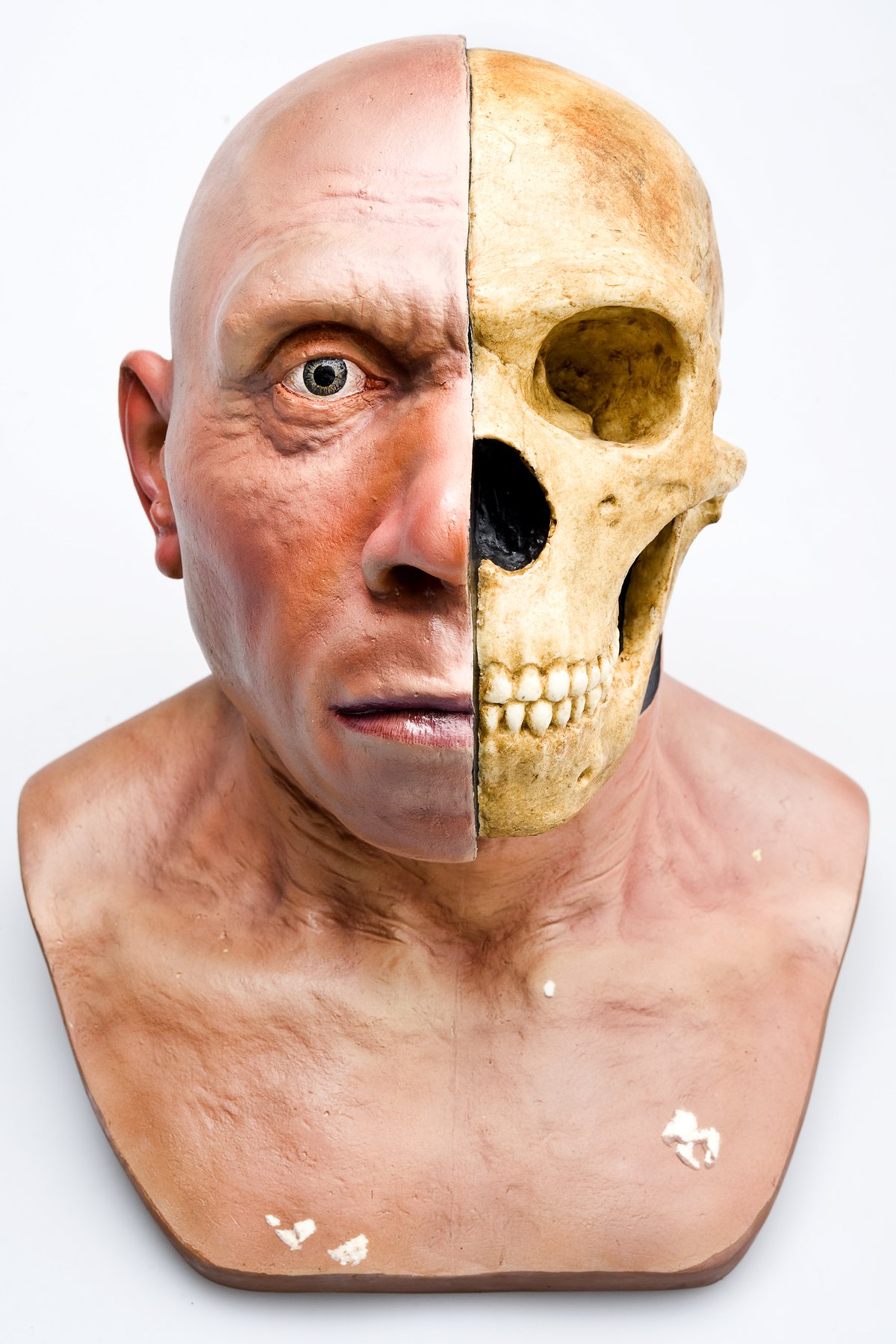

The transformation of a youthful face into an elderly one involves more than just adding wrinkles. It requires an understanding of bone density loss, skin elasticity, gravity’s effect on soft tissue, and even lifestyle-induced pigmentation. The technology driving these visualizations has evolved from simple image overlays to complex machine learning architectures.

From Basic Filters to Generative Adversarial Networks (GANs)

Early iterations of “age-me” apps used static masks—pre-designed textures of wrinkles or grey hair that were warped to fit the user’s facial landmarks. These were often unconvincing because they failed to account for the unique structural nuances of the individual.

The breakthrough came with the advent of Generative Adversarial Networks (GANs). A GAN consists of two neural networks trained against each other: a generator and a discriminator. The generator creates an image of what it “thinks” a person will look like at age 70, while the discriminator, which has been shown many real photographs of 70-year-olds, tries to tell the generated image apart from the real ones. Over many training iterations, the generator learns to produce images realistic enough to fool the discriminator. This technology also allows for “latent space” manipulation, where specific attributes (such as age, gender, or expression) can be adjusted largely independently while maintaining the core identity of the subject.
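To make the idea of “latent space” manipulation concrete, here is a minimal sketch in plain Python. It assumes that a face is encoded as a vector of numbers and that an “age” direction in that space has already been found; both the vector `z` and `age_direction` below are hypothetical toy values, and real models use hundreds of dimensions and a trained generator to turn the vector back into an image.

```python
import math

def unit(v):
    """Scale a vector to length 1."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]

def dot(a, b):
    """Dot product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))

def shift_along(z, direction, alpha):
    """Move latent code z by alpha units along a normalized attribute direction."""
    d = unit(direction)
    return [zi + alpha * di for zi, di in zip(z, d)]

# Hypothetical 4-dimensional latent code and "age" direction (toy values).
z = [0.5, -1.0, 0.25, 2.0]
age_direction = [0.0, 3.0, 0.0, 4.0]

# Push the code two units toward "older".
z_older = shift_along(z, age_direction, alpha=2.0)

# Coordinates the age direction does not touch stay exactly as they were,
# which is the toy analogue of "keeping the core identity".
print(z_older)
```

In this toy setup only the second and fourth coordinates change, because those are the only ones the assumed age direction points along. In a real model the directions are rarely this cleanly separated, which is why attributes can be adjusted only largely, not perfectly, independently.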

The Role of Big Data in Physiological Accuracy

The accuracy of predictive aging depends heavily on the diversity and volume of the training data. If an AI is trained only on a specific demographic, its predictions for others will be flawed. Tech companies are now utilizing “longitudinal datasets,” which are collections of images of the same individuals taken over several decades.

By analyzing how thousands of different faces age over 30 to 40 years, AI can identify patterns in “aging trajectories.” This allows the software to account for variations in skin aging across ethnic groups (for example, the photoprotective effect of melanin) and for structural differences in bone aging. The result is a personalized projection that respects the user’s unique biological heritage rather than applying a “one-size-fits-all” elderly mask.
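As a rough illustration of what an “aging trajectory” could mean in practice, the sketch below fits a straight line to one measured feature per person and then averages the slopes per group. Everything here is an assumption made for illustration: the `records` are synthetic, the “wrinkle score” is a hypothetical feature, and a real system would learn far richer, non-linear patterns from images.

```python
from statistics import mean

def slope(ages, scores):
    """Ordinary least-squares slope of score versus age for one person."""
    a_bar, s_bar = mean(ages), mean(scores)
    num = sum((a - a_bar) * (s - s_bar) for a, s in zip(ages, scores))
    den = sum((a - a_bar) ** 2 for a in ages)
    return num / den

# Synthetic records: (group label, age at each photo, hypothetical wrinkle score).
records = [
    ("group_a", [30, 40, 50, 60], [1.0, 2.0, 3.0, 4.0]),
    ("group_a", [25, 45, 65], [0.5, 2.5, 4.5]),
    ("group_b", [30, 50, 70], [1.0, 2.0, 3.0]),
]

def mean_slope_by_group(records):
    """Average the per-person slopes within each group."""
    by_group = {}
    for group, ages, scores in records:
        by_group.setdefault(group, []).append(slope(ages, scores))
    return {group: mean(slopes) for group, slopes in by_group.items()}

trajectories = mean_slope_by_group(records)
print(trajectories)
```

The point of the per-person fit is that it only works with longitudinal data: each slope comes from the same individual photographed at different ages, not from comparing different people of different ages.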

Beyond the Surface: Biometrics and Health Monitoring

While most people engage with aging technology for entertainment, the tech industry is integrating these visual outputs with deeper biological data. The future of “looking like ourselves” is increasingly tied to our “digital twins”—virtual representations of our physical bodies fed by real-time health metrics.

AI-Driven Health Predictions and External Manifestations

Current research in “age-tech” is looking at how lifestyle choices that are visible in our data impact our future appearance. If a wearable device tracks high levels of UV exposure, poor sleep patterns, or signs of chronic stress (which is associated with elevated cortisol), future AI models could integrate this data to show accelerated aging of the skin or a hollowed appearance of the peri-orbital area.

This transition from “chronological aging” (years lived) to “biological aging” (how much the body has worn down) is a significant trend in health tech. Companies are developing interfaces where users can move a slider to see how their face might look in 20 years if they smoke versus if they quit, or how their physique might change based on different fitness regimes. This “visual feedback loop” serves as a powerful psychological tool for behavioral change.
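The slider idea can be sketched as a small function that separates calendar years from the pace at which the body ages. This is only a toy model under stated assumptions: `pace` (biological years accrued per calendar year) and the scenario values below are hypothetical placeholders chosen to show the comparison, not clinical estimates.

```python
def projected_apparent_age(current_age, years_ahead, pace):
    """Apparent age after `years_ahead` calendar years.

    `pace` is biological years accrued per calendar year (1.0 = average).
    """
    if years_ahead < 0 or pace <= 0:
        raise ValueError("years_ahead must be >= 0 and pace must be > 0")
    return current_age + years_ahead * pace

# Hypothetical pace values, for illustration only.
SCENARIOS = {"continues smoking": 1.25, "quits smoking": 1.0}

def compare(current_age, years_ahead):
    """Project every scenario for the same person and time horizon."""
    return {
        name: projected_apparent_age(current_age, years_ahead, pace)
        for name, pace in SCENARIOS.items()
    }

result = compare(current_age=40, years_ahead=20)
print(result)
```

An aging-visualization tool would then render a face for each projected age, so that moving the slider changes the picture rather than just the number.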

Genomic Sequencing and Visual Simulation

The most advanced frontier in predicting our future appearance lies in the marriage of AI and genomics. DNA phenotyping, the process of predicting physical traits from genetic code, is a growing field. By analyzing specific markers in a person’s DNA, tech platforms can estimate the likelihood of hair loss patterns, certain skin conditions, and even the future distribution of body fat.

When this genetic data is fed into a 3D rendering engine, the “future self” becomes a high-fidelity simulation. This is no longer just an image; it is a data-backed projection of a biological reality, allowing researchers and individuals to visualize the long-term physical outcomes of their genetic predispositions.

The Virtual You: Avatars and the Metaverse Identity

As we spend more time in digital environments, the question “What will I look like in the future?” extends beyond our biological skin and into our digital presence. The technology of the metaverse and Augmented Reality (AR) is redefining identity through persistent, evolving avatars.

Photorealistic Avatars in AR/VR

The next generation of digital identity revolves around photorealistic avatars that can age in real time or be “reset” to any point in the user’s life. Companies like Meta and Epic Games (the latter through its MetaHuman Creator) are developing tools that allow for the creation of digital doubles with pores, peach fuzz, and realistic eye moisture.

In the future, your digital identity may not be a static image but a “living” asset. As the underlying hardware (like VR headsets with inward-facing cameras) tracks your expressions and movements, your avatar reflects your current state. Tech trends suggest a shift toward “identity persistence,” where your digital self ages alongside you, or perhaps matures at a rate you choose, creating a new form of digital self-expression.

The Concept of “Digital Twins”

A “Digital Twin” is a virtual model designed to accurately reflect a physical object—in this case, a human being. In the industrial tech world, digital twins are used to predict when a machine will break down. In the personal tech space, a digital twin of your body can be used to simulate the effects of time.

This technology allows for a sophisticated form of “future-casting.” By integrating data from electronic health records, wearable sensors, and environmental factors, a digital twin can show you not just your future face, but your future posture, gait, and overall physical vitality. This is the ultimate evolution of the “future self” inquiry: a comprehensive, interactive model that allows us to interact with our future selves in a 3D space.

Ethics, Privacy, and the Security of Your Future Face

The technology that allows us to see our future selves is built on some of our most sensitive data: our biometrics. As these tools become more ubiquitous, the tech industry must grapple with the ethical and security implications of predicting and simulating human appearances.

The Deepfake Dilemma and Identity Theft

The same GAN technology that creates a fun “old age” photo also underpins many deepfakes. If a piece of software can accurately predict what you will look like in 20 years, that “future face” becomes a piece of biometric data that could potentially be used to bypass future security systems.

As facial recognition becomes a primary security key for everything from banking to border control, the ability to generate a realistic likeness of an individual at any age presents a significant security risk. Tech companies are currently in an arms race to develop “liveness detection” and “deepfake forensics” to distinguish between a real human face and an AI-generated projection of a future self.

Data Ownership in the Age of AI Self-Portraits

Every time a user uploads their current face to an AI aging tool, they are contributing to a massive biometric database. The monetization of this data is a contentious issue in the tech world. Who owns the “future you”?

The terms of service for many viral aging apps grant the developers broad rights to use the uploaded images to train their models. As we move forward, parts of the tech community are calling for “Biometric Sovereignty,” where individuals have total ownership over their facial data and the generative models derived from it. Blockchain technology is being explored as a way to secure these digital identities, so that your “future look” cannot be generated or used without your explicit, cryptographically verified consent.
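What “cryptographically verified consent” might look like can be shown with a minimal sketch using Python’s standard `hmac` and `hashlib` modules. The function names, the `user_id` and `purpose` strings, and the demo key are all hypothetical. This sketch also swaps in a shared-secret HMAC for simplicity; a real consent system would more likely use public-key signatures so that anyone can verify a token without being able to forge one.

```python
import hashlib
import hmac

def sign_consent(secret_key: bytes, user_id: str, purpose: str) -> str:
    """Create a token binding one user to one specific use of their face data."""
    message = f"{user_id}|{purpose}".encode("utf-8")
    return hmac.new(secret_key, message, hashlib.sha256).hexdigest()

def consent_is_valid(secret_key: bytes, user_id: str, purpose: str, token: str) -> bool:
    """Recompute the token and compare in constant time."""
    expected = sign_consent(secret_key, user_id, purpose)
    return hmac.compare_digest(expected, token)

# Hypothetical demo key; never hard-code a real key.
key = b"demo-key-do-not-use-in-production"
token = sign_consent(key, "user-123", "age-progression")

# The token authorizes only the purpose it was issued for.
print(consent_is_valid(key, "user-123", "age-progression", token))
print(consent_is_valid(key, "user-123", "model-training", token))
```

Binding the token to a stated purpose is the design choice that matters here: consent to generate one aged portrait does not automatically become consent to train a model on the same image.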

Conclusion: The Convergence of Tech and Biological Reality

The question of what we will look like in the future has transitioned from a philosophical mystery to a technical output. Through the power of Generative AI, longitudinal data, and genomic integration, we are now able to gaze into a mirror that reflects not just where we have been, but where we are going.

As technology continues to bridge the gap between our physical bodies and our digital representations, the “future self” becomes a tool for health, a medium for digital expression, and a challenge for cybersecurity. We are entering an era where time is no longer a one-way street; through the lens of technology, we can visit our future selves, learn from them, and perhaps even change the reality of the face that eventually looks back at us from the physical mirror.
