The question of what was the “first” computer ever made is significantly more complex than it appears on the surface. Depending on how one defines a “computer”—whether as a mechanical calculator, a programmable machine, or an electronic digital processor—the answer shifts across centuries of human innovation. Today, we carry more processing power in our pockets than what was used to land humans on the moon, but the journey to the silicon chip began with gears, steam, and vacuum tubes. Understanding the first computer requires looking at the technological milestones that transitioned humanity from manual arithmetic to automated logic.

The Dawn of Calculation: Mechanical Precursors and Visionary Designs

Long before the advent of electricity, the conceptual foundations of computing were being laid by mathematicians and engineers who sought to automate the tedious process of manual calculation. For centuries, the word “computer” referred to a person, in later years often a woman, whose job was to work through long sequences of calculations by hand.

The Abacus and the Antikythera Mechanism

The earliest roots of computing can be traced back to the abacus, used by ancient civilizations for basic arithmetic. However, the most striking precursor is the Antikythera mechanism, discovered in a shipwreck at the turn of the 20th century. Dating back to approximately 150-100 BCE, this ancient Greek device is often cited as the world’s first analog computer. Used to predict astronomical positions and eclipses for calendar and astrological purposes, its complex system of bronze gears proved that the concept of “calculating through machinery” is millennia old.

Charles Babbage and the Analytical Engine

The transition toward what we recognize as a modern computer began in the 19th century with Charles Babbage, an English polymath often heralded as the “Father of the Computer.” In the 1820s, Babbage designed the Difference Engine, a mechanical device intended to calculate polynomial functions. While he never completed a full-scale working version in his lifetime, his designs were sound.

However, his most revolutionary concept was the Analytical Engine (1837). This was the first design for a general-purpose computer. Unlike previous machines that could only perform one specific task, the Analytical Engine featured an “Arithmetic Logic Unit” (the Mill), integrated memory (the Store), and control flow via punched cards—concepts that remain the bedrock of modern computer architecture. It was here that Ada Lovelace, the world’s first computer programmer, realized that the machine could do more than just math; it could process any symbolic information, marking the conceptual birth of software.
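
The Difference Engine's task can be made concrete. Machines of this kind tabulate a polynomial with the method of finite differences: once the first value and its differences are known, every further value follows from additions alone, which is the one operation geared wheels perform reliably. The sketch below is a modern Python illustration of that method, not a model of Babbage's mechanism; the function names and the sample polynomial are chosen here purely for illustration.

```python
def initial_differences(coeffs, start=0, step=1):
    """Return f(start) followed by its forward differences.

    coeffs lists the polynomial's coefficients, constant term first.
    This setup step is the only place multiplication is needed.
    """
    def f(x):
        return sum(c * x**i for i, c in enumerate(coeffs))

    # A polynomial of degree n needs n + 1 starting values.
    values = [f(start + k * step) for k in range(len(coeffs))]
    diffs = []
    while values:
        diffs.append(values[0])
        values = [b - a for a, b in zip(values, values[1:])]
    return diffs


def tabulate(diffs, count):
    """Produce `count` successive polynomial values using additions only."""
    diffs = list(diffs)
    table = []
    for _ in range(count):
        table.append(diffs[0])
        # Each column absorbs the column to its right, lowest order first.
        for i in range(len(diffs) - 1):
            diffs[i] += diffs[i + 1]
    return table


# Sample polynomial: f(x) = x^2 + x + 41
seed = initial_differences([41, 1, 1])
print(seed)               # [41, 2, 2]
print(tabulate(seed, 5))  # [41, 43, 47, 53, 61]
```

After the seed values are set, the inner loop never multiplies. That is why a purely mechanical adder could, in principle, print whole mathematical tables.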

Breaking the Digital Barrier: The First Electronic and Programmable Machines

The leap from mechanical gears to electronic pulses occurred during the mid-20th century, accelerated by the existential pressures of World War II. The need to calculate ballistics tables and crack enemy ciphers drove a rapid shift toward automation using vacuum tubes and electrical relays.

The Z3: Programmable Logic in Germany

In 1941, German engineer Konrad Zuse completed the Z3. Many historians consider it the first functional, fully automatic, programmable digital computer. While it relied on electromechanical relays rather than purely electronic components, it used binary floating-point numbers, built from the same 1s and 0s that govern modern computing. The original Z3 was destroyed during an Allied bombing raid in 1943, and wartime secrecy delayed its influence on computing elsewhere.

Colossus: The Codebreaker of Bletchley Park

In the United Kingdom, the push for a faster way to decrypt German high-level communications led to the creation of Colossus. Designed by Tommy Flowers and built with his team at the Post Office Research Station in Dollis Hill, the first Colossus was working by late 1943 and went into service at Bletchley Park in early 1944. It was the world’s first large-scale, electronic, digital, programmable computing device, and its first version used roughly 1,600 vacuum tubes to perform Boolean and counting operations. Because its existence was kept a state secret for decades after the war, Colossus did not receive the historical credit it deserved until the late 20th century, though its influence on British computing was profound.

The Birth of the Giant: ENIAC and the General-Purpose Revolution

While the Z3 and Colossus were monumental, the machine that truly ushered in the “Computer Age” in the public consciousness was the ENIAC (Electronic Numerical Integrator and Computer). Developed at the University of Pennsylvania’s Moore School of Electrical Engineering, ENIAC was unveiled in 1946.

The Scale and Power of ENIAC

Funded by the U.S. Army, ENIAC was a behemoth. It occupied a 30-by-50-foot room, weighed 30 tons, and utilized approximately 18,000 vacuum tubes. Unlike the Z3, it was fully electronic, meaning it had no moving parts that could slow down the processing speed. It was roughly 1,000 times faster than electromechanical machines of the era, capable of performing 5,000 additions per second.

ENIAC was not without its drawbacks. It was programmed by manually plugging in cables and setting switches, a process that could take days for a single problem. Furthermore, its vacuum tubes were notoriously unreliable; a tube would fail roughly every two days, requiring a specialized team to hunt down the fault. Despite these hurdles, ENIAC proved that a high-speed, general-purpose electronic computer was a viable reality.

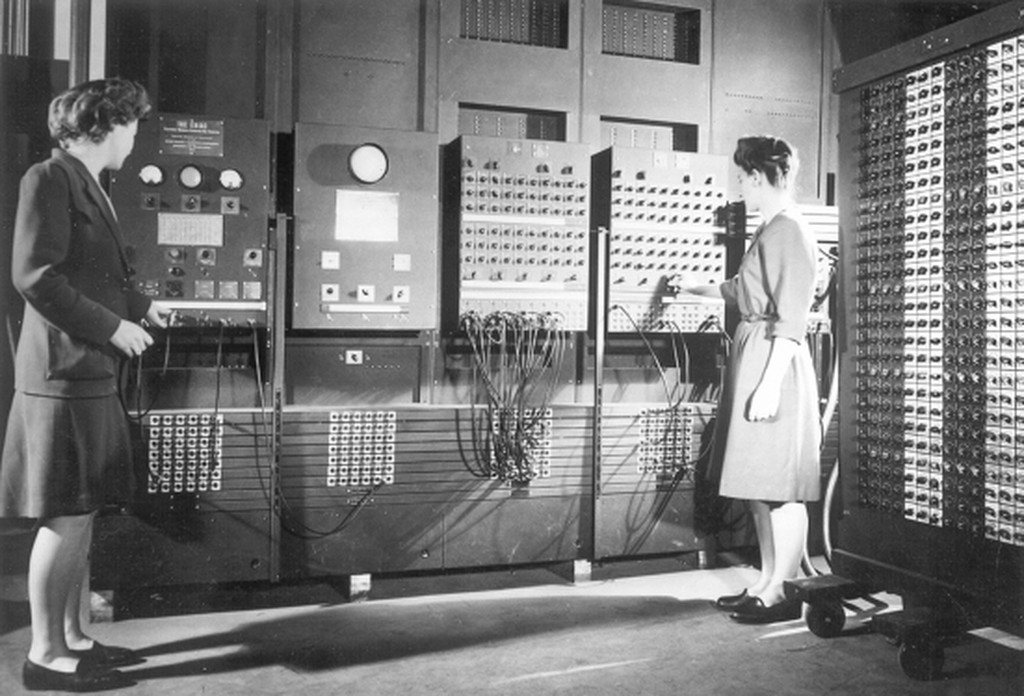

The Role of the “ENIAC Six”

A critical but often overlooked aspect of ENIAC’s history is the group of women who programmed it: Kay McNulty, Betty Jennings (later known as Jean Bartik), Betty Snyder, Marlyn Wescoff, Frances Bilas, and Ruth Lichterman. In an era where “hardware” was seen as the primary engineering feat, these women pioneered the logic of software. They translated mathematical equations into the physical patches and switch settings that allowed the machine to function, developing some of the earliest methods for structuring and debugging programs.

Stored Programs and the Transition to Commercialization

The primary limitation of ENIAC was its lack of an internal memory for storing programs. To change a task, the machine had to be physically rewired. The next logical step in technology was the “stored-program” computer, where instructions were held in the same memory as the data.

The Manchester Baby and EDVAC

In June 1948, the Small-Scale Experimental Machine (SSEM), nicknamed the “Manchester Baby,” became the first computer to run a stored program. This was a monumental shift; it meant that the computer was no longer just a calculator, but a flexible tool that could change its behavior purely through electronic input.

This concept had been set out in 1945 by John von Neumann in his influential “First Draft of a Report on the EDVAC.” The “von Neumann architecture,” consisting of a processing unit, a control unit, memory, and input/output, became the blueprint for almost every computer built from that point forward.
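
The stored-program idea is easier to see in a toy example. The sketch below is a hypothetical four-instruction machine written in Python. Its instruction names (`LOAD`, `ADD`, `STORE`, `HALT`) and memory layout are invented for illustration and do not reproduce the instruction set of the Manchester Baby or EDVAC. What it does share with them is the key property: instructions and data sit in the same memory, so changing the program means changing memory contents rather than rewiring anything.

```python
def run(memory):
    """Fetch-decode-execute loop over one shared memory list."""
    acc = 0  # accumulator: the single working register
    pc = 0   # program counter: address of the next instruction
    while True:
        op, arg = memory[pc]  # fetch the instruction stored at pc
        pc += 1
        if op == "LOAD":
            acc = memory[arg]
        elif op == "ADD":
            acc += memory[arg]
        elif op == "STORE":
            memory[arg] = acc
        elif op == "HALT":
            return memory
        else:
            raise ValueError(f"unknown instruction: {op}")


program = [
    ("LOAD", 4),   # address 0: acc = memory[4]
    ("ADD", 5),    # address 1: acc += memory[5]
    ("STORE", 6),  # address 2: memory[6] = acc
    ("HALT", 0),   # address 3: stop
    2,             # address 4: data
    3,             # address 5: data
    0,             # address 6: the result is written here
]

print(run(list(program))[6])  # 5

# "Reprogramming" is just another memory write: no cables are moved.
modified = list(program)
modified[1] = ("ADD", 4)
print(run(modified)[6])  # 4
```

On ENIAC, the equivalent of the one-line change to `modified[1]` meant replugging cables and resetting switches by hand.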

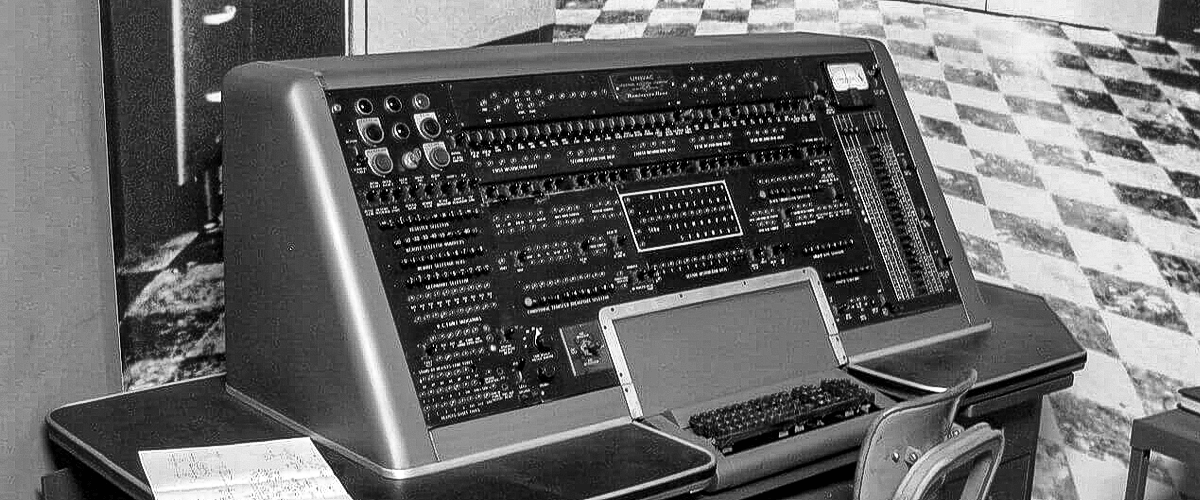

UNIVAC I: Bringing Computers to the Public Eye

The transition from military labs to the corporate world happened with the UNIVAC I (Universal Automatic Computer), delivered to the U.S. Census Bureau in 1951. It was the first commercial computer produced in the United States. The UNIVAC became a household name in 1952 when it correctly predicted the outcome of the Eisenhower-Stevenson presidential election on national television, using only a small percentage of the early returns. This event shifted the public perception of computers from “scary government brains” to indispensable tools for business and data analysis.

Defining the Legacy of Early Computing in the Modern Era

The journey from the mechanical gears of Babbage to the commercial success of the UNIVAC represents one of the most rapid technological evolutions in human history. Every modern innovation, from Artificial Intelligence to cloud computing, can trace its lineage back to these early machines.

From Vacuum Tubes to Transistors

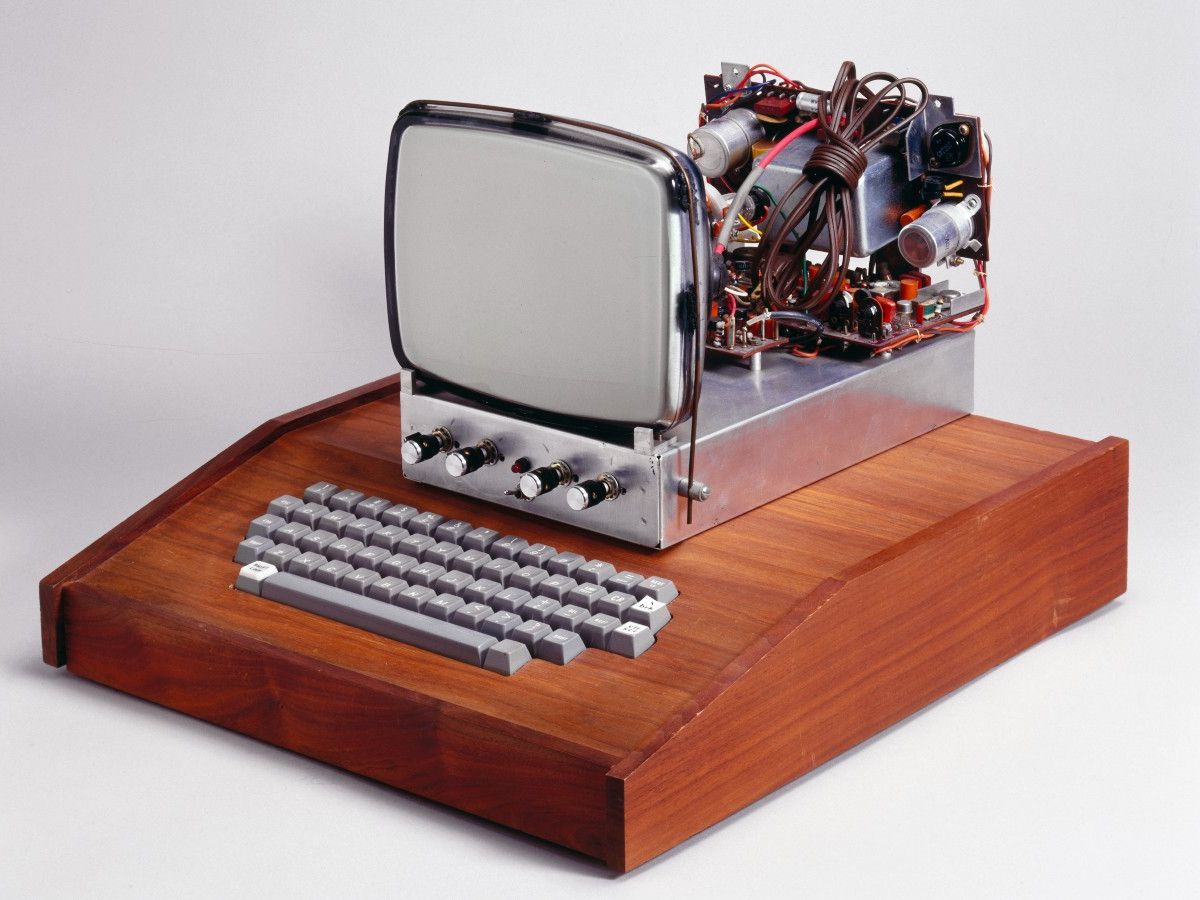

The era of the “first” computers began to draw to a close with the invention of the transistor at Bell Labs in 1947. Transistors eventually replaced vacuum tubes, allowing computers to become smaller, faster, cheaper, and more reliable. This transition led to the integrated circuit and, eventually, the microprocessor, which packed far more than the power of an ENIAC onto a chip smaller than a fingernail.

How the First Computers Shaped Modern Tech Trends

Today, the principles established by the pioneers of early computing remain relevant. We still use binary logic. We still utilize the stored-program concept. However, we have moved from asking “what can a computer calculate?” to “what can a computer learn?”

The first computers were built to answer specific needs such as ballistics, decryption, or census data, even when their designs were general-purpose. Modern technology has evolved into a general-purpose utility that powers our social interactions, our economies, and our scientific breakthroughs. Looking back at the massive, humming rooms of the 1940s, we see not just the “first” computers, but the beginnings of the digital world that defines the 21st century. The legacy of the first computer is not found in a single machine, but in the enduring human drive to automate intelligence and expand the boundaries of what is possible.
