Deconstructing Fog: A Meteorological Perspective for Digital Applications

Fog, often described as a cloud at ground level, is essentially a stratus cloud whose base sits at the Earth’s surface. It is composed of tiny water droplets or ice crystals suspended in the air near the ground. Its formation hinges on two linked conditions: relative humidity at or near 100%, and air that has cooled to (or very close to) its dew point, so that water vapor condenses into droplets. From a technological standpoint, understanding these meteorological principles is not merely an academic exercise; it is crucial for developing robust, data-driven solutions that interact with or mitigate the impacts of this common atmospheric phenomenon. Digital applications, whether for weather forecasting, autonomous navigation, or smart infrastructure, rely on accurate models of fog’s physical properties.
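As a rough illustration of the saturation condition, the sketch below estimates dew point from temperature and relative humidity using the Magnus approximation, then checks how close the air is to saturation. The constants are one commonly used Magnus parameter set, and the function names and the 1 °C spread threshold are assumptions made for this example, not an operational fog criterion.

```python
import math

# One commonly used Magnus parameter set for saturation over liquid water.
MAGNUS_A = 17.62
MAGNUS_B = 243.12  # degrees Celsius


def dew_point_c(temp_c: float, rel_humidity_pct: float) -> float:
    """Approximate dew point (deg C) from air temperature and relative humidity."""
    if not 0.0 < rel_humidity_pct <= 100.0:
        raise ValueError("relative humidity must be in (0, 100]")
    gamma = math.log(rel_humidity_pct / 100.0) + MAGNUS_A * temp_c / (MAGNUS_B + temp_c)
    return MAGNUS_B * gamma / (MAGNUS_A - gamma)


def dew_point_spread_c(temp_c: float, rel_humidity_pct: float) -> float:
    """Difference between air temperature and dew point; 0 means saturated."""
    return temp_c - dew_point_c(temp_c, rel_humidity_pct)


def fog_possible(temp_c: float, rel_humidity_pct: float, max_spread_c: float = 1.0) -> bool:
    """Hypothetical screening rule: flag near-saturated air (threshold is an assumption)."""
    return dew_point_spread_c(temp_c, rel_humidity_pct) <= max_spread_c
```

A small spread only indicates that the air is close to saturation; whether fog actually forms also depends on wind, cooling rate, and available condensation nuclei.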

The Anatomy of a Low-Lying Stratus

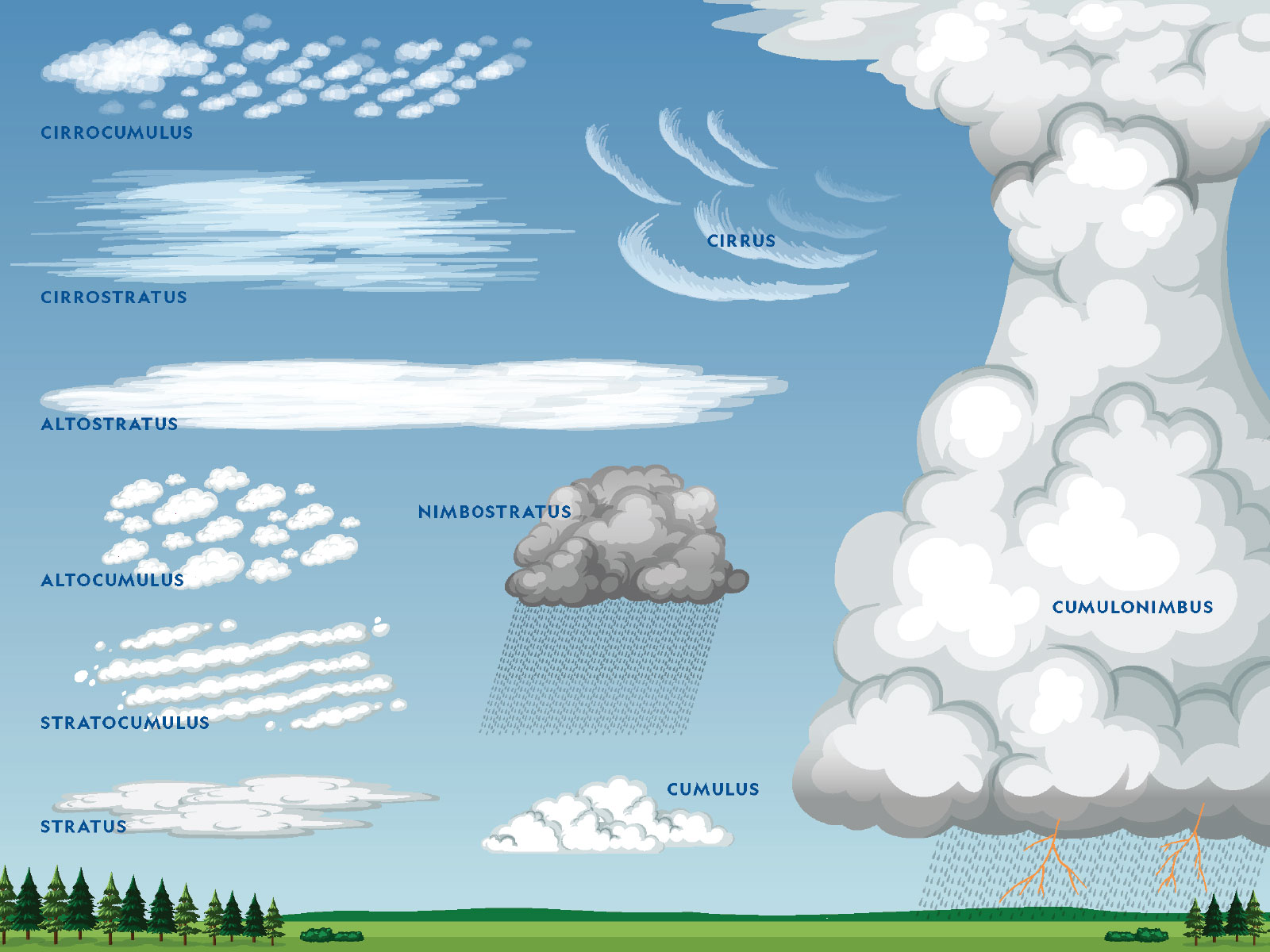

The classification of fog as a stratus cloud highlights its key characteristics: a uniform, gray layer that reduces horizontal visibility. Unlike cumulus clouds, which are associated with vertical development and convective activity, stratus clouds form in stable atmospheric conditions where air cools to its dew point. In the case of fog, this cooling often occurs through overnight radiative heat loss from the ground (radiation fog), the movement of moist air over a cooler surface (advection fog), or the lifting of moist air over terrain (upslope fog). Each type presents its own challenges for technological detection and prediction because formation mechanism, density, and persistence all differ. For developers of sensor systems and predictive algorithms, differentiating between these types and understanding their specific atmospheric precursors is vital for refining accuracy and enhancing real-world performance.

Microphysics of Droplet Formation and Visibility Challenges

The critical factor in fog’s impact on technology is visibility, which is directly related to the microphysics of the water droplets. These droplets are very small, typically from about 1 to a few tens of micrometers in diameter, and their concentration and size distribution determine the fog’s optical density. For the same liquid water content, many small droplets reduce visibility more than fewer large droplets, because the total scattering cross-section is larger. Technologies like LiDAR, radar, and optical cameras are all affected by these microscopic particles, though to different degrees. LiDAR beams are scattered and attenuated, optical cameras lose contrast as light is diffused, and even radar, particularly at millimeter wavelengths, can be attenuated by very dense fog. Therefore, advancements in computer vision, sensor fusion, and signal processing demand a solid understanding of how light and other electromagnetic waves interact with suspended droplets. This knowledge underpins the development of algorithms that can intelligently interpret impaired signals or even “see through” certain levels of atmospheric obscurity.
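The link between droplet size, liquid water content, and visibility can be sketched with two textbook approximations: an extinction coefficient for droplets of a single size (assuming an extinction efficiency of about 2, reasonable when droplets are much larger than the wavelength of visible light), and a Koschmieder-type visibility relation with a 5% contrast threshold. The function names and the single-size droplet assumption are simplifications introduced for this example; real fog has a droplet size distribution.

```python
import math

WATER_DENSITY_G_M3 = 1.0e6  # density of liquid water in g/m^3


def extinction_coefficient_per_m(lwc_g_m3: float, droplet_radius_um: float,
                                 q_ext: float = 2.0) -> float:
    """Extinction coefficient (1/m) for droplets of one radius.

    Derived from beta = N * pi * r^2 * Q_ext with
    LWC = N * (4/3) * pi * r^3 * rho_water.
    """
    radius_m = droplet_radius_um * 1e-6
    return 3.0 * q_ext * lwc_g_m3 / (4.0 * WATER_DENSITY_G_M3 * radius_m)


def visibility_m(beta_per_m: float, contrast_threshold: float = 0.05) -> float:
    """Koschmieder-type visibility: distance where contrast falls to the threshold."""
    if beta_per_m <= 0.0:
        raise ValueError("extinction coefficient must be positive")
    return -math.log(contrast_threshold) / beta_per_m


# Same liquid water content, two droplet sizes.
small = visibility_m(extinction_coefficient_per_m(0.1, 5.0))
large = visibility_m(extinction_coefficient_per_m(0.1, 10.0))
```

With 0.1 g/m³ of liquid water, this gives roughly 100 m of visibility for 5 µm droplets and roughly 200 m for 10 µm droplets, consistent with the point that smaller droplets obscure more at equal water content.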

AI-Driven Forecasting and Analytics in Fog Management

The unpredictable nature and localized occurrence of fog make it a significant challenge for various sectors, from aviation and shipping to intelligent transportation systems. Artificial intelligence (AI) and machine learning (ML) are revolutionizing the way we forecast, detect, and analyze fog, transforming it from a mere meteorological nuisance into a manageable data problem. By leveraging vast datasets and sophisticated algorithms, AI provides unprecedented capabilities for real-time monitoring and predictive modeling, enabling proactive decision-making that enhances safety and operational efficiency.

Predictive Models and Machine Learning for Atmospheric Conditions

AI’s strength lies in its ability to identify complex, non-linear patterns within massive datasets that human analysts might miss. For fog forecasting, ML models ingest a multitude of atmospheric variables: temperature, humidity, wind speed and direction, dew point, atmospheric pressure, and even aerosol concentrations. These models range from classical machine-learning methods such as decision trees and random forests to deep learning architectures such as recurrent neural networks (RNNs) and convolutional neural networks (CNNs). RNNs are well suited to time-series data, making them a natural fit for predicting the evolution of fog conditions over several hours, while CNNs can analyze spatial patterns in satellite imagery to identify areas prone to fog formation. Retraining these models regularly on new observations helps them adapt to changing conditions and can improve accuracy over time, leading to more reliable fog advisories and warnings.
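To make the idea of learning fog likelihood from atmospheric variables concrete, here is a deliberately small sketch. It uses plain logistic regression (a simpler stand-in, not one of the architectures named above) trained on synthetic data with two features: dew point spread and wind speed. The data generator, its coefficients, and all function names are assumptions for illustration; a real system would train on observed weather records.

```python
import math
import random


def sigmoid(z: float) -> float:
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    ez = math.exp(z)
    return ez / (1.0 + ez)


def make_synthetic_samples(n: int, seed: int = 0):
    """Generate SYNTHETIC (spread_c, wind_ms, fog_label) tuples; not real observations."""
    rng = random.Random(seed)
    samples = []
    for _ in range(n):
        spread_c = rng.uniform(0.0, 8.0)   # air temperature minus dew point
        wind_ms = rng.uniform(0.0, 10.0)
        # Assumed ground truth: fog is likelier with a small spread and light wind.
        p_fog = sigmoid(2.0 - 1.5 * spread_c - 0.3 * wind_ms)
        samples.append((spread_c, wind_ms, 1 if rng.random() < p_fog else 0))
    return samples


def train_logistic(samples, learning_rate: float = 0.05, epochs: int = 1000):
    """Fit weights [bias, w_spread, w_wind] by batch gradient descent on log loss."""
    w = [0.0, 0.0, 0.0]
    n = len(samples)
    for _ in range(epochs):
        grad = [0.0, 0.0, 0.0]
        for spread_c, wind_ms, label in samples:
            err = sigmoid(w[0] + w[1] * spread_c + w[2] * wind_ms) - label
            grad[0] += err
            grad[1] += err * spread_c
            grad[2] += err * wind_ms
        w = [wi - learning_rate * gi / n for wi, gi in zip(w, grad)]
    return w


def fog_probability(w, spread_c: float, wind_ms: float) -> float:
    """Predicted fog probability for one set of conditions."""
    return sigmoid(w[0] + w[1] * spread_c + w[2] * wind_ms)


weights = train_logistic(make_synthetic_samples(300))
```

The trained model should assign a higher fog probability to near-saturated, calm conditions than to dry, windy ones. Tree ensembles or neural networks would replace `train_logistic` when the relationships are non-linear.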

Data Synthesis from Satellite, Radar, and Ground Sensors

Effective AI-driven fog forecasting does not rely on a single data source; it depends on combining information from a diverse array of sensors. Satellite imagery, particularly from geostationary and polar-orbiting satellites, provides broad-scale insights into cloud cover and atmospheric moisture content. Spectral analysis of satellite data can help distinguish fog from other low cloud, sometimes before the fog is reported on the ground. Weather radar systems provide precipitation data, which can be an indirect indicator of fog potential. Complementing these macro-level observations are granular data points from ground-based sensors: networked weather stations providing localized temperature and humidity readings, visibility sensors (transmissometers), and LiDAR ceilometers that measure cloud base height and vertical aerosol profiles. AI algorithms act as integrators, fusing these disparate data streams while accounting for their differing biases and resolutions, to create a comprehensive, multi-dimensional view of the atmospheric conditions conducive to fog formation. This holistic data approach is critical for high-fidelity predictions and localized warnings.
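One simple way to picture fusion that accounts for sensor biases and differing precision is inverse-variance weighting: each source's estimate is bias-corrected and then weighted by how certain it is. The sketch below applies this to visibility estimates. The class, field names, and example numbers are hypothetical, and the method assumes independent, roughly Gaussian errors, which real sensor networks only approximate.

```python
from dataclasses import dataclass
from typing import Iterable, Tuple


@dataclass(frozen=True)
class VisibilityEstimate:
    source: str           # e.g. "transmissometer", "satellite" (labels are illustrative)
    visibility_m: float   # reported visibility
    std_dev_m: float      # assumed 1-sigma uncertainty of this source
    bias_m: float = 0.0   # assumed known systematic offset to subtract


def fuse_estimates(estimates: Iterable[VisibilityEstimate]) -> Tuple[float, float]:
    """Return (fused visibility, fused standard deviation) by inverse-variance weighting."""
    total_weight = 0.0
    weighted_sum = 0.0
    for est in estimates:
        if est.std_dev_m <= 0.0:
            raise ValueError(f"{est.source}: std_dev_m must be positive")
        weight = 1.0 / est.std_dev_m ** 2
        total_weight += weight
        weighted_sum += weight * (est.visibility_m - est.bias_m)
    if total_weight == 0.0:
        raise ValueError("at least one estimate is required")
    return weighted_sum / total_weight, (1.0 / total_weight) ** 0.5


fused_visibility, fused_std = fuse_estimates([
    VisibilityEstimate("transmissometer", 200.0, 10.0),
    VisibilityEstimate("satellite", 400.0, 30.0),
])
```

In this example the precise ground sensor dominates, so the fused value lands near 220 m rather than midway between the two readings.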

Navigating the Obscurity: Tech Solutions for Autonomous Systems

Fog presents one of the most significant environmental challenges for autonomous systems, including self-driving cars, drones, and delivery robots. Unlike clear conditions where optical sensors excel, dense fog severely degrades the performance of standard perception systems, potentially leading to dangerous navigation errors. Addressing this requires a multi-faceted technological approach, combining advanced sensor modalities with intelligent data processing and robust validation through extensive simulations.

Sensor Fusion and Advanced Perception Systems (LiDAR, Radar, Thermal)

To overcome the limitations of individual sensors in foggy conditions, autonomous systems employ sensor fusion, integrating data from multiple heterogeneous sensors. While conventional cameras struggle with low visibility due to light scattering, other sensors offer complementary strengths. Radar (Radio Detection and Ranging) systems operate at wavelengths far larger than fog droplets, so they are largely unaffected by fog and can accurately detect the presence, range, and velocity of objects. However, radar typically provides lower resolution and struggles with object classification. LiDAR (Light Detection and Ranging), which uses laser pulses, offers high-resolution 3D point clouds essential for detailed environmental mapping and object recognition, but its performance is severely degraded by dense fog due to signal attenuation and scattering. Thermal (infrared) cameras detect emitted heat rather than reflected visible light, which makes them useful for identifying people, animals, and running engines in fog: long-wave infrared radiation is generally scattered less by fog droplets than visible light, although it is still attenuated when the fog is dense. By fusing data from these diverse sensors (radar for robust detection, thermal for identifying warm objects, and LiDAR for detailed mapping when possible), autonomous systems can build a more complete and reliable perception of their surroundings, even under compromised visibility. Fusion algorithms then weight and combine these inputs, compensating for the weaknesses of each sensor in specific conditions.
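The idea of weighting sensors according to conditions can be sketched as follows. Each sensor gets a reliability score that decays as visibility drops, and the scores are normalized into fusion weights. The decay scales and the exponential form are invented for illustration only; they are not measured sensor characteristics, and a production system would calibrate such curves from data.

```python
import math
from typing import Dict, Optional

# HYPOTHETICAL visibility scales (meters): a sensor keeps about 63% of its
# clear-weather reliability when visibility equals its scale. None means the
# sensor is treated as unaffected by fog in this toy model.
FOG_SENSITIVITY_SCALE_M: Dict[str, Optional[float]] = {
    "camera": 300.0,
    "lidar": 150.0,
    "thermal": 60.0,
    "radar": None,
}


def sensor_reliability(sensor: str, visibility_m: float) -> float:
    """Toy reliability in [0, 1] that falls off as visibility decreases."""
    if visibility_m <= 0.0:
        raise ValueError("visibility must be positive")
    scale = FOG_SENSITIVITY_SCALE_M[sensor]
    if scale is None:
        return 1.0
    return 1.0 - math.exp(-visibility_m / scale)


def sensor_weights(visibility_m: float) -> Dict[str, float]:
    """Normalize reliabilities so the fusion weights sum to 1."""
    raw = {name: sensor_reliability(name, visibility_m) for name in FOG_SENSITIVITY_SCALE_M}
    total = sum(raw.values())
    return {name: value / total for name, value in raw.items()}


dense_fog_weights = sensor_weights(50.0)
clear_weights = sensor_weights(20000.0)
```

Under these assumed curves, radar and thermal dominate at 50 m visibility, while all four sensors contribute about equally in clear air.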

Real-time Data Processing for Enhanced Situational Awareness

The sheer volume of data generated by multiple high-fidelity sensors necessitates powerful, real-time processing capabilities. Edge computing plays a crucial role here, allowing immediate analysis of sensor data closer to the source, reducing latency and enabling rapid decision-making. AI-powered perception algorithms constantly filter noise, reconstruct object shapes from sparse or corrupted data, and track dynamic elements in the environment. Deep learning models, specifically trained on datasets containing foggy scenarios, learn to extract meaningful features from degraded sensor inputs. For instance, an AI might learn to combine a low-resolution radar detection with a faint thermal signature to confidently identify a pedestrian that would be invisible to an optical camera. This real-time processing also extends to path planning and behavioral prediction, where the system must continuously update its understanding of the environment and adjust its trajectory to safely navigate through areas of reduced visibility, prioritizing safety margins and lower speeds.
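The pedestrian example above, where two weak cues combine into a confident detection, can be illustrated with a naive Bayes style fusion in log-odds space. This sketch assumes each sensor reports its own posterior probability computed from the same prior, and that the sensors' errors are conditionally independent; both are simplifying assumptions, and the function names are hypothetical. Deep learning perception stacks learn such combinations implicitly rather than applying this formula.

```python
import math
from typing import Sequence


def logit(p: float) -> float:
    """Log-odds of a probability strictly between 0 and 1."""
    if not 0.0 < p < 1.0:
        raise ValueError("probability must be strictly between 0 and 1")
    return math.log(p / (1.0 - p))


def fuse_detections(sensor_probs: Sequence[float], prior: float = 0.5) -> float:
    """Combine per-sensor detection probabilities assuming independent evidence.

    Each sensor contributes its log-odds shift relative to the shared prior.
    """
    fused_logit = logit(prior) + sum(logit(p) - logit(prior) for p in sensor_probs)
    return 1.0 / (1.0 + math.exp(-fused_logit))


# A weak radar return and a faint thermal signature, each only mildly convincing.
pedestrian_confidence = fuse_detections([0.6, 0.7])
```

With a neutral prior, detections of 0.6 and 0.7 fuse to about 0.78, higher than either sensor alone. If the independence assumption fails (for example, both sensors are confused by the same reflective surface), this approach will overstate confidence.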

Simulation and Testing in Virtual Fog Environments

Given the inherent dangers of testing autonomous vehicles in real-world foggy conditions, virtual simulation environments are indispensable. These platforms create realistic digital twins of road networks and urban landscapes, complete with dynamically generated weather conditions, including varying densities and types of fog. Engineers can programmatically model the physical effects of fog on different sensor types: how light scatters, how radar signals reflect, and how thermal signatures might appear. This allows for rigorous testing and validation of perception algorithms and navigation stacks under a vast array of foggy scenarios, some of which would be impractical or unsafe to reproduce physically. By iterating on algorithms within these virtual proving grounds, developers can refine their systems, identify vulnerabilities, and gain confidence that autonomous systems are prepared to operate safely in real-world fog long before physical deployment.
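A common starting point for synthetic fog is the atmospheric scattering model, in which the light from a scene point is attenuated exponentially with distance and replaced by a uniform "airlight" term. The sketch below applies it to a single pixel intensity and also shows the analogous two-way attenuation for a LiDAR return. It assumes homogeneous fog with a single extinction coefficient; the function names and default airlight value are illustrative, and full simulators model multiple scattering and sensor noise as well.

```python
import math


def transmission(beta_per_m: float, distance_m: float) -> float:
    """Fraction of light surviving a one-way path through homogeneous fog."""
    if beta_per_m < 0.0 or distance_m < 0.0:
        raise ValueError("extinction coefficient and distance must be non-negative")
    return math.exp(-beta_per_m * distance_m)


def foggy_pixel(clear_intensity: float, distance_m: float, beta_per_m: float,
                airlight: float = 0.8) -> float:
    """Atmospheric scattering model: I = J * t + A * (1 - t), intensities in [0, 1]."""
    t = transmission(beta_per_m, distance_m)
    return clear_intensity * t + airlight * (1.0 - t)


def lidar_return_fraction(beta_per_m: float, range_m: float) -> float:
    """Two-way attenuation of a LiDAR pulse (out and back), ignoring backscatter clutter."""
    return transmission(beta_per_m, 2.0 * range_m)


# A dark object (intensity 0.2) seen at 100 m in fog with beta = 0.03 per meter.
observed = foggy_pixel(0.2, 100.0, 0.03)
```

At that distance the object's intensity is pulled almost entirely toward the airlight value, which is why distant objects fade into uniform gray in fog imagery.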

IoT and Smart Infrastructure: Granular Insights into Fog Phenomena

The Internet of Things (IoT) is transforming how we monitor and understand environmental phenomena like fog, moving from generalized regional forecasts to hyper-localized, real-time insights. By deploying a dense network of interconnected smart sensors, IoT infrastructure provides granular data that is critical for both immediate operational decisions and long-term climate analysis, enabling smarter responses to fog events across various industries.

Networked Weather Stations and Environmental Sensors

At the core of IoT-driven fog monitoring are networked weather stations and specialized environmental sensors. These devices are typically deployed in high-risk areas such as highways, airports, ports, and critical infrastructure. Beyond standard temperature, humidity, and wind sensors, these IoT nodes often include visibility sensors (transmissometers) that measure atmospheric transparency directly. Some incorporate LiDAR ceilometers, which use laser pulses to determine cloud base height and the vertical structure of aerosols, adding a vertical dimension to the picture of fog layers. Each sensor node collects data continuously, timestamping it and transmitting it wirelessly to a central processing unit or cloud platform. The density and geographical distribution of these networked sensors provide much finer spatial and temporal resolution than traditional weather station networks, allowing for the identification of fog pockets, rapid changes in visibility, and the real-time tracking of fog movement and dissipation.
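The collect, timestamp, and transmit cycle described above might produce a message like the one sketched here. The field names, node identifier, and JSON encoding are assumptions for illustration; actual deployments follow whatever schema and transport (for example MQTT or HTTP) their platform defines.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class FogReading:
    """One timestamped observation from a sensor node (hypothetical schema)."""
    node_id: str
    timestamp_utc: str
    temperature_c: float
    relative_humidity_pct: float
    visibility_m: float


def make_reading(node_id: str, temperature_c: float, relative_humidity_pct: float,
                 visibility_m: float, now: Optional[datetime] = None) -> FogReading:
    """Stamp a reading with the current UTC time in ISO 8601 format."""
    moment = now if now is not None else datetime.now(timezone.utc)
    return FogReading(node_id, moment.isoformat(), temperature_c,
                      relative_humidity_pct, visibility_m)


def encode_reading(reading: FogReading) -> bytes:
    """Serialize to compact UTF-8 JSON, ready to hand to a transport layer."""
    return json.dumps(asdict(reading), separators=(",", ":")).encode("utf-8")


payload = encode_reading(make_reading("highway-node-17", 4.2, 99.0, 180.0))
```

Using UTC timestamps at the node keeps readings from different locations comparable when they are merged centrally.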

Edge Computing for Localized Fog Detection

The volume and velocity of data generated by a vast IoT network necessitate efficient processing strategies. Edge computing plays a pivotal role in localized fog detection. Instead of sending all raw sensor data to a centralized cloud for processing, edge devices (gateways or even the sensors themselves with integrated processing capabilities) perform initial data analysis, filtering, and aggregation at the network’s periphery. For instance, an edge device connected to a visibility sensor on a highway might continuously monitor the data. If visibility drops below a predefined threshold, the edge device can immediately trigger an alert, activate roadside warning lights, or send a concise summary to a central traffic management system, rather than streaming raw, high-frequency data constantly. This approach significantly reduces latency, conserves bandwidth, and enables faster, more autonomous responses to localized fog events. It empowers smart infrastructure to react intelligently and promptly, enhancing safety for motorists, pilots, or maritime operators by providing immediate, context-aware information.
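The threshold logic described above can be sketched as a small state machine running on the edge device. This version adds hysteresis (separate thresholds for raising and clearing the alert) so that readings hovering near a single threshold do not toggle the warning lights repeatedly. The hysteresis, the 200 m and 300 m values, and the event names are assumptions chosen for the example rather than part of any standard.

```python
from typing import Optional


class FogAlertMonitor:
    """Emit an event only when the alert state changes, not on every reading."""

    def __init__(self, alert_below_m: float = 200.0, clear_above_m: float = 300.0):
        if clear_above_m <= alert_below_m:
            raise ValueError("clear_above_m must exceed alert_below_m")
        self.alert_below_m = alert_below_m
        self.clear_above_m = clear_above_m
        self.alert_active = False

    def update(self, visibility_m: float) -> Optional[str]:
        """Process one visibility reading; return an event name or None."""
        if not self.alert_active and visibility_m < self.alert_below_m:
            self.alert_active = True
            return "FOG_ALERT_ON"
        if self.alert_active and visibility_m > self.clear_above_m:
            self.alert_active = False
            return "FOG_ALERT_OFF"
        return None


monitor = FogAlertMonitor()
events = [monitor.update(v) for v in [500.0, 180.0, 220.0, 260.0, 320.0]]
```

Only two of the five readings produce an event to send upstream, which is the bandwidth saving the paragraph describes: the raw stream stays on the device, and the central system receives state changes.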

Beyond Prediction: Leveraging Digital Twins for Resilient Systems

While advanced forecasting and real-time monitoring address immediate operational needs, the long-term strategic challenge lies in building systems and infrastructure resilient to atmospheric events like fog. Digital Twin technology offers a revolutionary approach to simulate, analyze, and optimize the interaction of physical assets with complex environmental conditions, allowing for proactive design and validation.

Replicating Atmospheric Dynamics in Virtual Spaces

A digital twin is a virtual replica of a physical asset, process, or system. For phenomena like fog, this means creating a detailed, dynamic virtual model of a specific geographic area, complete with its terrain, infrastructure, and atmospheric properties. This virtual environment can simulate various types and densities of fog, approximating how light scatters, how visibility is affected, and how droplet microphysics shape those effects. Meteorological models and historical weather data feed into the digital twin, allowing it to predict how fog might form and evolve within that specific virtual space. Engineers can then use this digital environment to run “what-if” scenarios, observing the impact of different fog conditions on specific assets. For example, they can assess how fog tends to settle around a particular bridge, or how a new airport runway’s layout might affect visibility during typical fog events. This capability provides a platform for studying complex environmental interactions without the need for costly and time-consuming physical experiments.

Prototyping and Optimization of Fog-Resistant Technologies

The power of digital twins extends beyond mere observation; it facilitates the rapid prototyping and optimization of fog-resistant technologies. Before investing in physical prototypes, engineers can virtually test new sensor configurations, communication systems, or infrastructure designs within the digital twin. For instance, they might simulate a new anti-fog coating for an autonomous vehicle’s camera lens or evaluate the effectiveness of an advanced lighting system for an airport runway under various fog conditions. The digital twin can provide immediate feedback on performance, identifying potential flaws or areas for improvement. This iterative process of virtual design, simulation, and refinement significantly accelerates the development cycle, reduces costs, and ensures that when a technology moves to physical deployment, it has already been rigorously validated against a comprehensive spectrum of foggy scenarios. Ultimately, digital twins enable organizations to build more robust, adaptive, and resilient systems that can consistently perform even when confronted by the pervasive and challenging presence of fog.
