In the realm of mathematics, the factors of 16—1, 2, 4, 8, and 16—are elementary. However, in the world of technology, these numbers represent much more than simple divisors. They are the building blocks of digital architecture, the DNA of data structures, and the foundational pillars upon which modern software and hardware are built. From the way a processor handles information to the method by which a high-definition image is rendered on your screen, the factors of 16 dictate the efficiency, scalability, and security of our digital lives.

To understand the “factors of 16” within a technological context is to understand the language of computers. Machines do not think in tens; they think in powers of two. Because 16 is a perfect power of two ($2^4$), its factors represent the critical junctions where hardware meets software. This article explores how these five numbers define the landscape of modern tech.
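As a quick check of the claim above, the following minimal sketch lists the factors of 16 and confirms that each one is itself a power of two. The helper names `factors` and `is_power_of_two` are illustrative and not taken from any library.

```python
def factors(n: int) -> list[int]:
    """Return every positive integer that divides n with no remainder."""
    if n < 1:
        raise ValueError("n must be a positive integer")
    return [d for d in range(1, n + 1) if n % d == 0]


def is_power_of_two(n: int) -> bool:
    """A power of two has exactly one bit set, so n & (n - 1) clears it to 0."""
    return n > 0 and (n & (n - 1)) == 0


if __name__ == "__main__":
    for f in factors(16):
        print(f, is_power_of_two(f))
```

The same check fails for a number such as 12, whose factors include 3 and 6, which is one way to see why 16 fits binary hardware so neatly.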

The Mathematical Foundation: Why 16 is the “Golden Number” of Tech

At the most basic level, digital technology is binary. Every piece of data is a sequence of ones and zeros. However, binary is cumbersome for human developers to read and for systems to process in large chunks without a middle ground. This is where the importance of 16—and by extension, its factors—comes into play.

Base-2 and the Power of Hexadecimal

The number 16 is the basis for the hexadecimal system (Base-16), which serves as a human-friendly shorthand for binary code. While binary uses two symbols (0 and 1), hexadecimal uses sixteen (0–9 and A–F). Because 16 equals $2^4$, a single hexadecimal character represents exactly four bits (binary digits), and two characters represent one 8-bit byte. This relationship is why hexadecimal is the usual notation for memory addresses and for color codes in web design (e.g., #FFFFFF, where each pair of digits is one byte of red, green, or blue).
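The four-bits-per-digit relationship can be sketched in a few lines. The functions below are illustrative helpers (their names are assumptions, not part of any standard API): one splits a byte into its two nibbles and renders each as a hex digit, the other splits a web color into its three bytes.

```python
def byte_to_hex(value: int) -> str:
    """Render one byte (0-255) as two hex digits, one per nibble."""
    if not 0 <= value <= 0xFF:
        raise ValueError("expected a value in the range 0-255")
    high_nibble = value >> 4      # upper four bits
    low_nibble = value & 0x0F     # lower four bits
    digits = "0123456789ABCDEF"
    return digits[high_nibble] + digits[low_nibble]


def parse_hex_color(code: str) -> tuple[int, int, int]:
    """Split a color such as '#FFFFFF' into (red, green, blue) bytes."""
    hex_part = code.lstrip("#")
    if len(hex_part) != 6:
        raise ValueError("expected six hex digits")
    red, green, blue = (int(hex_part[i:i + 2], 16) for i in (0, 2, 4))
    return red, green, blue


if __name__ == "__main__":
    print(byte_to_hex(255))
    print(parse_hex_color("#FFFFFF"))
```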

The Role of 2 and 4 in Logic Gates

The factors 2 and 4 appear at the lowest levels of digital logic. A transistor acts as a binary switch with two states (the factor of 2). Transistors are combined into logic gates such as AND, OR, and XOR, each of which operates on individual bits. Gates are in turn wired into circuits such as adders that work on multi-bit groups, the smallest commonly named group being the “nibble” of four bits (the factor of 4). Stacking these circuits is what ultimately makes possible everything from a simple calculator app to the hardware that trains sophisticated AI models.
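To make the step from single-bit gates to a four-bit nibble concrete, here is a minimal software sketch of a ripple-carry adder. It models gates with Python's bitwise operators; the function names are hypothetical, and real hardware would implement the same logic in silicon rather than code.

```python
def full_adder(a: int, b: int, carry_in: int) -> tuple[int, int]:
    """Add three single bits using only XOR, AND, and OR gates."""
    partial = a ^ b
    sum_bit = partial ^ carry_in
    carry_out = (a & b) | (partial & carry_in)
    return sum_bit, carry_out


def add_nibbles(x: int, y: int) -> tuple[int, int]:
    """Add two 4-bit values bit by bit; return (4-bit sum, carry-out)."""
    if not (0 <= x <= 0xF and 0 <= y <= 0xF):
        raise ValueError("both inputs must fit in four bits")
    carry = 0
    result = 0
    for position in range(4):          # one full adder per bit of the nibble
        a = (x >> position) & 1
        b = (y >> position) & 1
        sum_bit, carry = full_adder(a, b, carry)
        result |= sum_bit << position
    return result, carry
```

Chaining four such adders gives a 16-bit adder, which is the sense in which wider hardware is built up from these smaller factors.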

Hardware Evolution: From 8-Bit Limitations to 16-Bit Power

The history of computing is often categorized by “bits,” which refer to the size of the data units a processor can handle. The movement through the factors of 16 highlights the evolution of hardware performance and the expansion of what machines are capable of achieving.

The Legacy of the 8-Bit Architecture

Before the ubiquity of 64-bit systems, the tech world was defined by the factor of 8. The 8-bit byte became the universal standard for measuring data. However, 8-bit systems had significant limitations: a single 8-bit value can represent only 256 distinct numbers, and although many 8-bit processors paired their 8-bit data path with a 16-bit address bus, that still capped directly addressable memory at 64 KB. These limits paved the way for the 16-bit revolution, which widened the data path to the full “16” and, together with larger address spaces, allowed for more complex operating systems and graphical user interfaces.

16-Bit Processing and Beyond

A 16-bit value can take 65,536 ($2^{16}$) distinct values, so a 16-bit address can select 65,536 unique memory locations. Many 16-bit processors went further by using addresses wider than their data registers; the Intel 8086, for example, combined 16-bit registers into 20-bit addresses to reach 1 MB. This era was a watershed moment for personal computing, supporting the shift from text-based interfaces to early versions of Windows and Mac OS. Today, even though 32-bit and 64-bit processing has become the norm, the same power-of-two logic applies, and many systems maintain “backward compatibility” so that older data structures still function within newer environments.
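The address counts quoted in this section follow directly from the number of address bits. A minimal sketch, with `address_space` as an illustrative helper name:

```python
def address_space(address_bits: int) -> int:
    """Number of distinct locations an address of the given width can select."""
    if address_bits < 0:
        raise ValueError("address width cannot be negative")
    return 2 ** address_bits


if __name__ == "__main__":
    for bits in (8, 16, 32):
        print(f"{bits}-bit address: {address_space(bits):,} locations")
```

Each additional address bit doubles the reachable memory, which is why moving from 8 to 16 address bits multiplies the address space by 256 rather than by 2.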

Bus Width and Data Transfer Rates

In hardware design, “bus width” refers to how many bits of data can be transferred simultaneously between the CPU and memory. These widths are almost always powers of two, such as 8, 16, 32, or 64 bits, so they are factors or multiples of 16. A 64-bit bus, for instance, moves as much data per transfer as four 16-bit buses working in parallel. Matching bus width to the processor's word size is one of the ways hardware engineers reduce bottlenecks between fast memory, storage, and the processor.

Software Development and Data Structure Efficiency

For software engineers, the factors of 16 are not just theoretical; they are practical tools used to optimize code and manage resources. Efficient software relies on “memory alignment,” the practice of storing data at addresses that are multiples of a specific power of two, most commonly 8 or 16 bytes.

Memory Padding and Alignment

When a program runs, the CPU fetches data from RAM. To speed this up, processors read memory in fixed-size “blocks.” If a piece of data starts at an address that is a multiple of its alignment (16, for example), it falls entirely within one block and can typically be fetched in a single access. If the data is misaligned and straddles a block boundary, the CPU might need two or more accesses to retrieve it. Compilers and developers use “padding,” unused bytes inserted between fields, to ensure that data structures align with boundaries such as 16 bytes, which can noticeably improve the performance of high-demand applications like video games and real-time data analytics tools.
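The padding arithmetic can be sketched as follows. This is a minimal illustration, assuming the alignment is a power of two; `align_up` and `padding_needed` are hypothetical helper names, and real compilers apply the same rounding automatically when laying out structures.

```python
def align_up(offset: int, alignment: int) -> int:
    """Round offset up to the next multiple of a power-of-two alignment."""
    if alignment <= 0 or alignment & (alignment - 1):
        raise ValueError("alignment must be a positive power of two")
    # Adding (alignment - 1) and clearing the low bits rounds up in one step.
    return (offset + alignment - 1) & ~(alignment - 1)


def padding_needed(offset: int, alignment: int) -> int:
    """Bytes of padding required before the next aligned address."""
    return align_up(offset, alignment) - offset


if __name__ == "__main__":
    print(align_up(13, 16), padding_needed(13, 16))
```

The bit-mask trick only works because the alignment is a power of two, which is one practical reason alignments are chosen from the factors and multiples of 16.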

The “Nibble” and Data Compression

The “nibble” (4 bits, a factor of 16) also appears in data compression. Some audio formats, such as ADPCM, store each sample as a 4-bit code, and many image and video codecs divide each frame into blocks of 8×8 or 16×16 pixels before removing redundancy. Working in these small power-of-two units is part of what lets software compress a massive 4K video file into a streamable format without a significant visible loss of quality.
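One simple way to see why 4-bit units save space is to pack two small values into each byte. The sketch below is illustrative only: it is not a real codec, and the names `pack_nibbles` and `unpack_nibbles` are assumptions made for this example.

```python
def pack_nibbles(values: list[int]) -> bytes:
    """Store two 4-bit values per byte, high nibble first."""
    if any(not 0 <= v <= 0xF for v in values):
        raise ValueError("every value must fit in four bits")
    padded = list(values) + [0] * (len(values) % 2)   # pad odd-length input
    return bytes(
        (padded[i] << 4) | padded[i + 1] for i in range(0, len(padded), 2)
    )


def unpack_nibbles(data: bytes, count: int) -> list[int]:
    """Recover the first `count` 4-bit values from packed bytes."""
    nibbles = []
    for byte in data:
        nibbles.append(byte >> 4)
        nibbles.append(byte & 0x0F)
    return nibbles[:count]
```

Values that would each occupy a full byte when stored naively take half the space once packed, provided they never exceed 15.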

Grid Systems in UI/UX Design

The number 16 also shows up on the visual side of tech. Browsers default to a 16px base font size, which is why 1rem usually equals 16 pixels, and body text at that size is highly legible on digital displays. Spacing systems are commonly built on fractions of that base: Tailwind CSS's default spacing scale, for example, steps in units of 0.25rem (4px), giving increments such as 4, 8, 12, and 16 pixels. Strictly speaking these are multiples of 4 rather than factors of 16 (12 does not divide 16), but they keep every measurement tied to the same 16px root. This creates a rhythmic, mathematically consistent UI that scales predictably across different screen resolutions.

Cybersecurity: 16 at the Core of Encryption

In the digital age, security is perhaps the most critical application of these mathematical principles. Modern encryption standards, such as AES (Advanced Encryption Standard), are built directly upon the logic of 16.

AES-128 and Block Ciphers

AES-128 is a widely used standard for securing sensitive data. AES operates on 128-bit blocks; dividing 128 by 8 gives 16, so each block is exactly 16 bytes, which the cipher arranges as a 4×4 grid of bytes called the state. The encryption process involves a “substitution box” (S-box), a lookup table of 256 byte values that is conventionally drawn as a 16×16 matrix, with one hexadecimal digit of the input byte selecting the row and the other selecting the column. With a 128-bit key there are $2^{128}$ possible keys, which makes a brute-force search computationally infeasible with current technology.
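The block and table geometry described above can be sketched without implementing the cipher itself. The code below only shows how 16 bytes map onto the 4×4 state (filled column by column, as in the AES specification) and how a byte splits into S-box row and column indices. It performs no encryption, and the function names are illustrative assumptions.

```python
BLOCK_SIZE = 16  # AES block size in bytes (128 bits)


def split_into_blocks(data: bytes) -> list[bytes]:
    """Cut already-padded data into 16-byte blocks."""
    if len(data) % BLOCK_SIZE:
        raise ValueError("data length must be a multiple of 16 bytes")
    return [data[i:i + BLOCK_SIZE] for i in range(0, len(data), BLOCK_SIZE)]


def block_to_state(block: bytes) -> list[list[int]]:
    """Arrange 16 bytes as a 4x4 state, filling one column at a time."""
    if len(block) != BLOCK_SIZE:
        raise ValueError("an AES block is exactly 16 bytes")
    return [[block[row + 4 * col] for col in range(4)] for row in range(4)]


def sbox_coordinates(byte: int) -> tuple[int, int]:
    """Row and column of a byte in the 16x16 S-box table layout."""
    if not 0 <= byte <= 0xFF:
        raise ValueError("expected a value in the range 0-255")
    return byte >> 4, byte & 0x0F
```

For real encryption, a vetted cryptography library should be used instead of hand-written code.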

Hashing and Hexadecimal Outputs

When you create a password, a well-designed system doesn’t store the password itself; it stores a “hash” of it. Hashes (like SHA-256) are almost always displayed as hexadecimal strings. Because each hexadecimal character encodes four bits, a 256-bit SHA-256 digest fits in exactly 64 characters, a compact, standardized format for very large numbers. A hash or security key might look like a random string of letters and numbers, but it is simply a large binary value written in base 16.
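The relationship between digest size and hexadecimal length is easy to verify with Python's standard `hashlib` module. This sketch uses plain SHA-256 purely to illustrate the encoding; the wrapper name `sha256_hex` is an assumption for this example, and production password storage normally relies on a salted, deliberately slow algorithm instead.

```python
import hashlib


def sha256_hex(message: bytes) -> str:
    """Return the SHA-256 digest of message as a hexadecimal string."""
    return hashlib.sha256(message).hexdigest()


if __name__ == "__main__":
    digest = sha256_hex(b"abc")
    # 256 bits / 4 bits per hex character = 64 characters
    print(len(digest), digest)
```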

The Future of Scaling: From 16 to Quantum

As we look toward the future of technology—specifically Quantum Computing and Cloud Scalability—the factors of 16 continue to serve as the benchmark for growth.

Cloud Elasticity and Virtualization

In cloud computing (AWS, Azure, Google Cloud), virtual machine “instances” are typically offered in sizes that double from one tier to the next, so configurations such as 2, 4, 8, or 16 vCPUs and 16 GB of RAM are common. These power-of-two increments make it easier for providers to partition physical servers into virtual machines without leaving fragments of unused capacity, which helps limit wasted energy and processing power.

Quantum Bits (Qubits) and Superposition

While classical computers use bits that are either 0 or 1, quantum computers use qubits, which can exist in a superposition of both states. Qubits are fragile, so researchers are developing “error correction” codes that spread one logical qubit across many physical qubits. Because measuring a qubit always yields an ordinary classical bit, the results of a quantum computation still have to be handed back to conventional hardware. Even in the strange world of quantum mechanics, the need to interface with our current binary digital infrastructure remains a practical challenge for engineers.

Conclusion: The Invisible Logic

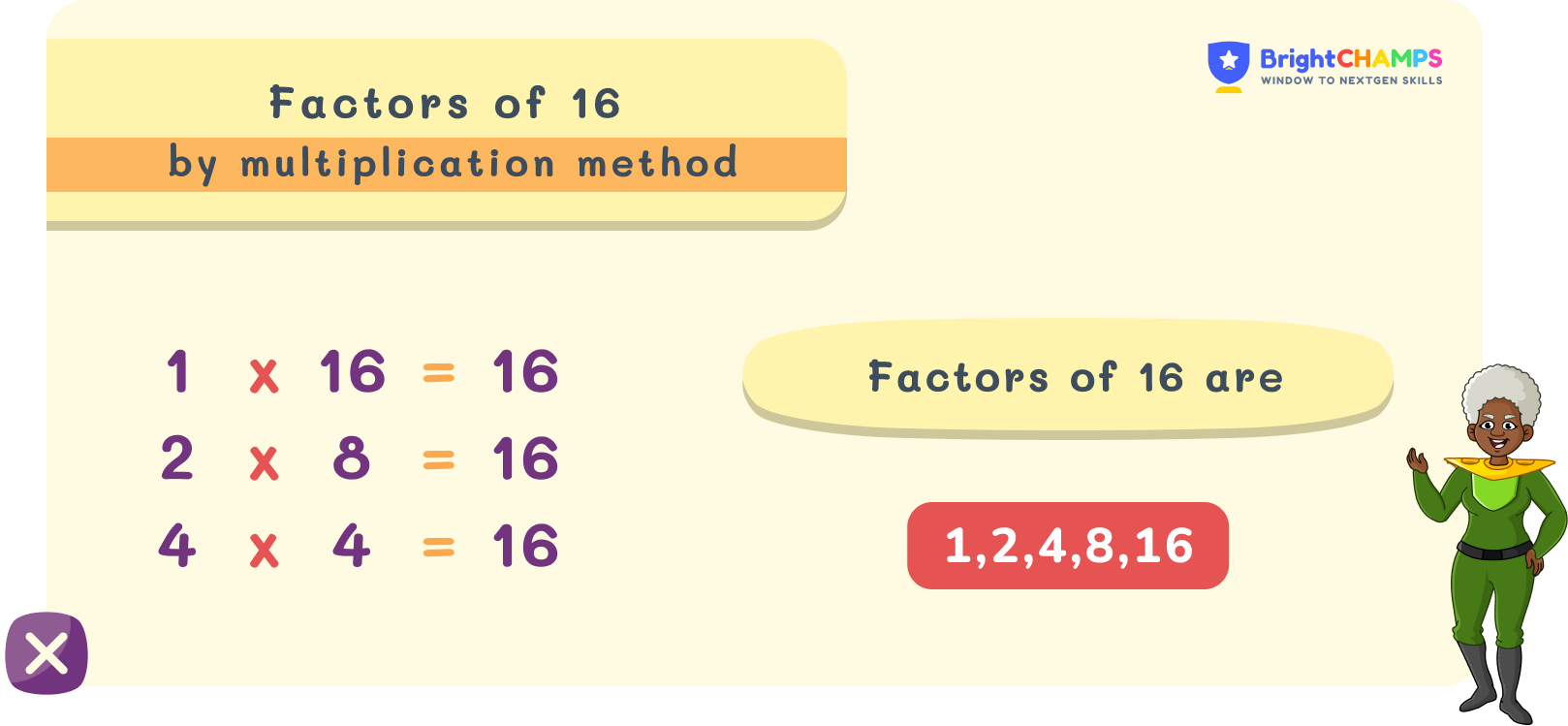

“What are the factors of 16?” might seem like a simple math query, but in the tech industry, these factors are the invisible scaffolding of the modern world. The factors 1, 2, 4, 8, and 16 shape the limits of our hardware, the efficiency of our software, the consistency of our interfaces, and the strength of our security.

As we continue to push the boundaries of Artificial Intelligence and global connectivity, these numbers will remain constant. They remind us that no matter how complex our technology becomes, it is always rooted in the elegant, logical simplicity of mathematics. Understanding these factors is not just about division; it is about understanding the very fabric of the digital universe.
