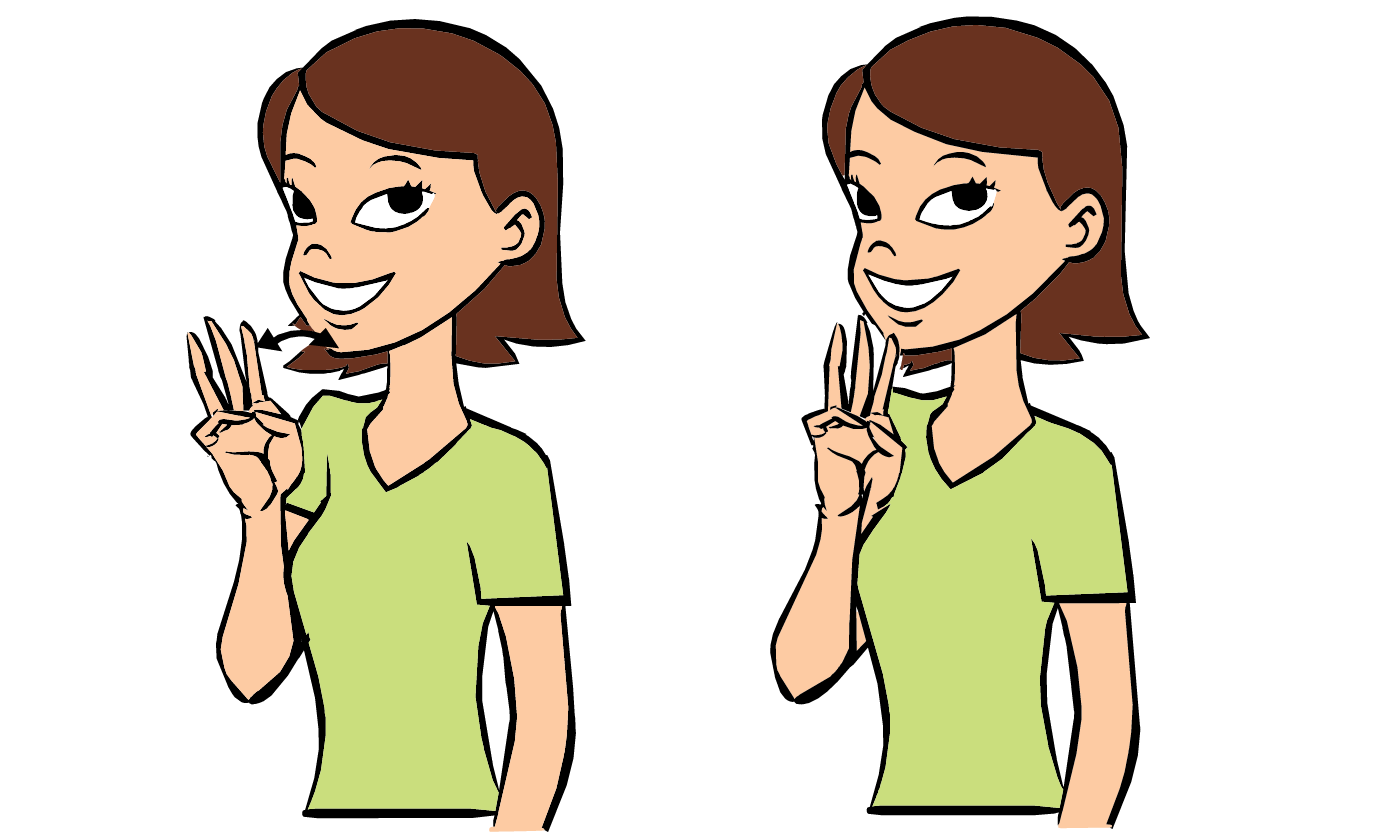

In the realm of human communication, few concepts are as fundamental as “water.” In American Sign Language (ASL), the sign is deceptively simple: the index, middle, and ring fingers are extended to form a “W,” while the thumb and pinky tuck away, and the index finger taps the chin. Yet, as simple as this gesture appears to the human eye, it represents a frontier of immense complexity for modern technology.

As we move deeper into an era defined by Artificial Intelligence (AI), machine learning, and spatial computing, the question of “what is water in sign language” is no longer just a linguistic inquiry. It is a data challenge. For software developers, UX designers, and AI researchers, “water” is a benchmark for gesture recognition, a test case for haptic feedback, and a cornerstone of the movement toward a more inclusive digital ecosystem.

The Evolution of ASL Learning: From Textbooks to Immersive Apps

The journey of learning a basic sign like “water” has undergone a radical digital transformation. Traditionally, students relied on two-dimensional diagrams or static photos in textbooks, which often failed to capture the fluid motion and spatial depth required for clear communication.

The Anatomy of a Sign: Digital Visualization of “Water”

Modern educational software has moved beyond the static image. Today, high-definition video libraries and 3D avatars allow learners to view the sign for “water” from multiple angles, in some cases through a full 360 degrees. This shift is crucial because sign language is inherently three-dimensional. A digital avatar can demonstrate the precise contact point of the “W” handshape against the chin, a detail that might be lost in a 2D sketch. Apps like ASL Bloom or Lingvano use high-quality visuals to convey hand orientation and movement speed, two technical components of the sign, far more precisely than a printed diagram can.

Gamification and Interactive Feedback Loops

Educational technology thrives on engagement, and sign language education is no exception. By integrating gamification, developers have turned the memorization of signs into an interactive experience. Using the front-facing cameras on smartphones, some apps employ basic computer vision to track a user’s hand as they attempt the sign for “water.” If the fingers are misaligned or the “W” is held too far from the face, the software can provide real-time corrective feedback. This creates a “low-stakes” environment where technology acts as a private tutor, bridging the gap between passive observation and active muscle memory.
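As a rough illustration of that kind of corrective feedback, the sketch below turns the gap between the index fingertip and the chin into a short hint. It assumes a vision library has already supplied both points in normalized image coordinates, along with a face height for scale. The function name, the tolerance, and the message wording are hypothetical and not taken from any particular app.

```python
from math import dist


def chin_feedback(fingertip, chin, face_height, tolerance=0.15):
    """Return a hint based on how far the fingertip is from the chin.

    fingertip, chin: (x, y) points in the same coordinate system.
    face_height: forehead-to-chin distance, used so the check does not
                 depend on how close the user sits to the camera.
    tolerance: hypothetical cutoff, as a fraction of face_height.
    """
    if face_height <= 0:
        raise ValueError("face_height must be positive")

    gap = dist(fingertip, chin) / face_height  # scale-independent distance
    if gap <= tolerance:
        return "Good: finger is at the chin."
    if gap <= 3 * tolerance:
        return "Close: bring your hand a little nearer to your chin."
    return "Too far: move your hand up to your chin."


if __name__ == "__main__":
    print(chin_feedback((0.50, 0.70), (0.50, 0.62), face_height=0.30))
```

Dividing by the face height is one simple way to keep the hint consistent whether the learner is near the camera or far from it.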

AI and Machine Learning: Bridging the Communication Gap

The most significant technological breakthrough regarding sign language lies in the field of Computer Vision (CV) and Natural Language Processing (NLP). Teaching a machine to recognize the sign for “water” is a masterclass in data labeling and neural network training.

Computer Vision and Hand Gesture Recognition

To a computer, the sign for “water” is not a concept; it is a series of coordinates in three-dimensional space. Hand-tracking frameworks such as Google’s MediaPipe use “hand landmarking” to identify 21 key points on a human hand. When a user signs “water,” a recognition model can analyze the distance between the fingertips and the palm, the curvature of the knuckles, and the proximity of the hand to the face.
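To make the coordinate view concrete, here is a minimal sketch of a rule-based check for the “W” handshape. It assumes the 21 landmarks arrive as a list of (x, y, z) tuples indexed the way MediaPipe's hand model numbers them (0 for the wrist, 8 for the index fingertip, and so on). The function names and the `touch_ratio` threshold are illustrative assumptions, and a production recognizer would more likely learn these rules from data.

```python
from math import dist

# Indices follow MediaPipe's 21-point hand model.
WRIST, MIDDLE_MCP = 0, 9
THUMB_TIP, PINKY_TIP = 4, 20
# (fingertip, PIP joint) index pairs for the four fingers
INDEX, MIDDLE, RING, PINKY = (8, 6), (12, 10), (16, 14), (20, 18)


def is_extended(landmarks, finger):
    """A finger counts as extended if its tip is farther from the wrist than its PIP joint."""
    tip, pip = finger
    wrist = landmarks[WRIST]
    return dist(landmarks[tip], wrist) > dist(landmarks[pip], wrist)


def is_w_handshape(landmarks, touch_ratio=0.5):
    """Heuristic check: index, middle, ring up; pinky curled; thumb resting near the pinky tip."""
    hand_size = dist(landmarks[WRIST], landmarks[MIDDLE_MCP])
    if hand_size == 0:
        return False  # degenerate input, nothing to measure against

    three_up = all(is_extended(landmarks, f) for f in (INDEX, MIDDLE, RING))
    pinky_down = not is_extended(landmarks, PINKY)
    # Thumb-to-pinky gap, measured relative to hand size (threshold is a guess).
    thumb_on_pinky = (
        dist(landmarks[THUMB_TIP], landmarks[PINKY_TIP]) < touch_ratio * hand_size
    )
    return three_up and pinky_down and thumb_on_pinky
```

A handshape check like this covers only one part of the sign. Confirming “water” would also require the hand's position at the chin and the tapping motion.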

A central technical challenge is “occlusion,” which occurs when one part of the hand hides another from the camera’s view. Robustness is a second challenge: Convolutional Neural Networks (CNNs) are trained on thousands of variations of the sign for “water” so that the AI can recognize it regardless of the user’s skin tone, lighting conditions, or sleeve length. This level of technical sophistication is what makes real-time sign-to-text translation tools possible.

Real-Time Translation Algorithms and Big Data

The goal of many tech giants is to create a “universal translator” for sign language. This involves more than just recognizing a single sign like “water”; it requires the AI to understand “prosody”—the rhythm and flow of a sentence. In ASL, the sign for “water” might be modified to indicate “the water is flowing” or “a large body of water” through the speed and breadth of the movement.
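Speed and breadth of movement can be measured directly from tracked positions. The sketch below is one hedged way to do it, assuming the tracker yields time-ordered `(t_seconds, x, y)` samples for a single point on the hand. The function name and the returned keys are hypothetical, and the numbers it produces are only features a model might consume, not an interpretation of meaning.

```python
from math import dist


def motion_summary(samples):
    """Summarize a hand trajectory given time-ordered (t_seconds, x, y) samples."""
    if len(samples) < 2:
        raise ValueError("need at least two samples")

    points = [(x, y) for _, x, y in samples]
    duration = samples[-1][0] - samples[0][0]

    # Total distance travelled along the path, segment by segment.
    path_length = sum(dist(a, b) for a, b in zip(points, points[1:]))

    # Breadth: the larger side of the bounding box around the movement.
    xs, ys = zip(*points)
    breadth = max(max(xs) - min(xs), max(ys) - min(ys))

    mean_speed = path_length / duration if duration > 0 else 0.0
    return {
        "duration": duration,
        "path_length": path_length,
        "mean_speed": mean_speed,
        "breadth": breadth,
    }


if __name__ == "__main__":
    print(motion_summary([(0.0, 0.0, 0.0), (0.5, 3.0, 4.0), (1.0, 0.0, 0.0)]))
```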

Data scientists are currently building large datasets to train Large Language Models (LLMs) to interpret these variations. By treating sign language as a visual language with its own syntax, rather than a code for spoken English, developers are working toward software that can translate complex ASL sentences into fluent English text or speech in real time, helping to break down barriers in workplaces and digital boardrooms.

Hardware Innovations: Haptics, VR, and Wearables

While software handles the interpretation, hardware is redefining the sensory experience of sign language. The tech industry is looking toward a future where communication is not just seen or heard, but felt.

Virtual Reality and Spatial Learning

Spatial computing platforms like the Meta Quest and Apple Vision Pro are revolutionizing how we interact with linguistic data. In a VR environment, a user can “see” the sign for “water” in a fully immersive 3D space. This is particularly effective for learning “directional signs” or signs that require specific spatial placement. VR allows for “embodied learning,” where the user’s digital hands must hit specific spatial triggers to successfully complete a sign. This high-tech approach accelerates the learning curve by reinforcing the “spatial grammar” that is unique to sign languages.

Smart Gloves and Sensory Feedback

Beyond cameras, wearable technology offers a different path toward translation. “Smart gloves” equipped with flex sensors and accelerometers can capture the physical movement of the fingers as they form the “W” for “water.” This data is then transmitted via Bluetooth to a smartphone app that vocalizes the word.
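One simple way to turn flex-sensor readings into a handshape is nearest-template matching, sketched below. The assumptions are that each of the five sensors has been normalized to a bend value between 0 (straight) and 1 (fully bent), ordered thumb to pinky. The templates, the `max_distance` cutoff, and the function name are all hypothetical. Real gloves would need per-user calibration, and the accelerometer data would still be needed to know that the hand is at the chin.

```python
from math import dist

# Bend values per finger, ordered thumb, index, middle, ring, pinky.
# 0.0 = straight, 1.0 = fully bent. These templates are illustrative guesses.
TEMPLATES = {
    "W": (1.0, 0.0, 0.0, 0.0, 1.0),
    "fist": (1.0, 1.0, 1.0, 1.0, 1.0),
    "open": (0.0, 0.0, 0.0, 0.0, 0.0),
}


def nearest_handshape(reading, templates=TEMPLATES, max_distance=0.6):
    """Return the name of the closest template, or None if nothing is close enough."""
    name, template = min(
        templates.items(), key=lambda item: dist(reading, item[1])
    )
    return name if dist(reading, template) <= max_distance else None


if __name__ == "__main__":
    print(nearest_handshape((0.9, 0.1, 0.05, 0.15, 0.85)))
```

Returning `None` for ambiguous readings lets the app stay silent rather than vocalize a wrong word.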

Furthermore, haptic feedback—vibrations sent to the wearer’s skin—can be used to correct form. If a student is learning the sign for “water” and their hand position is slightly off, the glove can provide a localized “nudge” to guide the hand into the correct posture. While still largely in the prototype and research phase, these gadgets represent the cutting edge of assistive technology, turning the human body into a direct input device for digital communication.
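The corrective “nudge” can be thought of as a per-finger comparison between the current posture and a target posture. The following sketch assumes five normalized bend values (0 for straight, 1 for fully bent, ordered thumb to pinky) and returns which fingers would need a cue. The function name, the tolerance, and the cue labels are hypothetical, and how a cue maps to an actual vibration pattern is left open.

```python
FINGERS = ("thumb", "index", "middle", "ring", "pinky")


def haptic_cues(reading, target, tolerance=0.2):
    """Map each out-of-tolerance finger to the correction it needs."""
    cues = {}
    for finger, actual, wanted in zip(FINGERS, reading, target):
        error = actual - wanted
        if error > tolerance:
            cues[finger] = "straighten"  # finger is bent more than the target
        elif error < -tolerance:
            cues[finger] = "bend"  # finger is straighter than the target
    return cues


if __name__ == "__main__":
    w_target = (1.0, 0.0, 0.0, 0.0, 1.0)  # illustrative target for the "W" handshape
    print(haptic_cues((0.9, 0.1, 0.5, 0.1, 0.9), w_target))
```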

The Future of Digital Accessibility and Universal Design

As we look toward the future of software development, the integration of sign language recognition is becoming a standard of “Universal Design.” Tech companies are realizing that accessibility is not a niche feature but a core component of a robust digital identity.

Integrating ASL into Global Software Standards

The push for digital security and identity verification is beginning to look at gesture-based biometrics. Imagine a world where a specific sequence of signs—perhaps including the sign for “water” or other common nouns—serves as a secondary form of authentication. Beyond security, we are seeing the rise of “Sign Language APIs” (Application Programming Interfaces). These allow third-party developers to easily integrate ASL recognition into everything from customer service kiosks to smart home devices. Soon, you might be able to sign “water” to a smart refrigerator to check your filtration status or order a delivery.

Ethical AI and the Nuances of Non-Manual Markers

A critical tech-focused discussion in the ASL community involves the ethics of AI. Sign language is not just about hands; it involves facial expressions and body shifts (non-manual markers). A person signing “water” as a question versus “water” as a command uses different eyebrow positions.
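A small sketch shows why the hands alone are not enough. In ASL, raised eyebrows commonly mark a yes/no question, so the same recognized sign can translate differently depending on a facial feature. The code assumes a face tracker supplies a `brow_raise` score between 0 and 1. That score, the threshold, and all names here are hypothetical, and real non-manual markers involve far more than a single number.

```python
from dataclasses import dataclass


@dataclass
class SignObservation:
    gloss: str         # label of the recognized manual sign, e.g. "WATER"
    brow_raise: float  # hypothetical 0..1 score from a face tracker


def to_english(obs, question_threshold=0.3):
    """Render one sign as a question or a statement based on eyebrow position."""
    word = obs.gloss.capitalize()
    if obs.brow_raise >= question_threshold:
        return f"{word}?"  # raised brows: treat as a yes/no question
    return f"{word}."      # neutral brows: treat as a plain statement


if __name__ == "__main__":
    print(to_english(SignObservation("WATER", 0.6)))
    print(to_english(SignObservation("WATER", 0.0)))
```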

The next frontier for tech developers draws on “affective computing,” AI that can read facial expressions, to provide a more accurate translation. This requires a commitment to ethical data sourcing, so that the AI is trained on a diverse range of deaf and hard-of-hearing signers and avoids “algorithmic bias.” By focusing on these technical nuances, the industry can help ensure that technology doesn’t just “mimic” signs but captures the depth of the language.

Conclusion: The Binary Behind the Breath of Life

What is water in sign language? In the context of technology, it is a triumph of data processing, a masterpiece of computer vision, and a testament to the power of inclusive design. We have moved from a time when the deaf community was digitally sidelined to an era where their primary mode of communication is driving some of the most exciting innovations in AI and hardware.

As we continue to refine the algorithms that recognize the tap of a finger against a chin, we are doing more than just teaching a computer a word. We are engineering a world where technology acts as a seamless bridge, ensuring that the most fundamental concepts of human life—like “water”—can flow freely between the physical and digital worlds, regardless of how they are expressed. In this tech-driven future, the sign for “water” is not just a gesture; it is a signal of a more connected, accessible, and intelligent global society.
