In the modern digital landscape, sound is more than just a sensory experience; it is a complex data set that must be meticulously measured, managed, and optimized. Whether you are a software developer building a communication app, a content creator mastering a video for YouTube, or a hardware engineer designing the latest high-fidelity headphones, understanding the unit of measure for loudness is critical. While most people are familiar with the term “decibel,” the technology of sound has evolved to include much more sophisticated metrics that account for human perception, digital limits, and algorithmic normalization.

This article explores the technical nuances of how we measure loudness, the transition from analog to digital metrics, and the software tools that have revolutionized the way we interact with audio technology.

The Fundamentals of Sound Measurement: The Decibel (dB)

To understand loudness in technology, one must start with the decibel (dB). However, unlike a meter or a gram, a decibel is not an absolute unit of measurement. Instead, it is a ratio—a way of expressing the relationship between two values of power or pressure. In the realm of tech and hardware, the decibel is the cornerstone of audio engineering.

The Decibel (dB) Scale and Logarithmic Logic

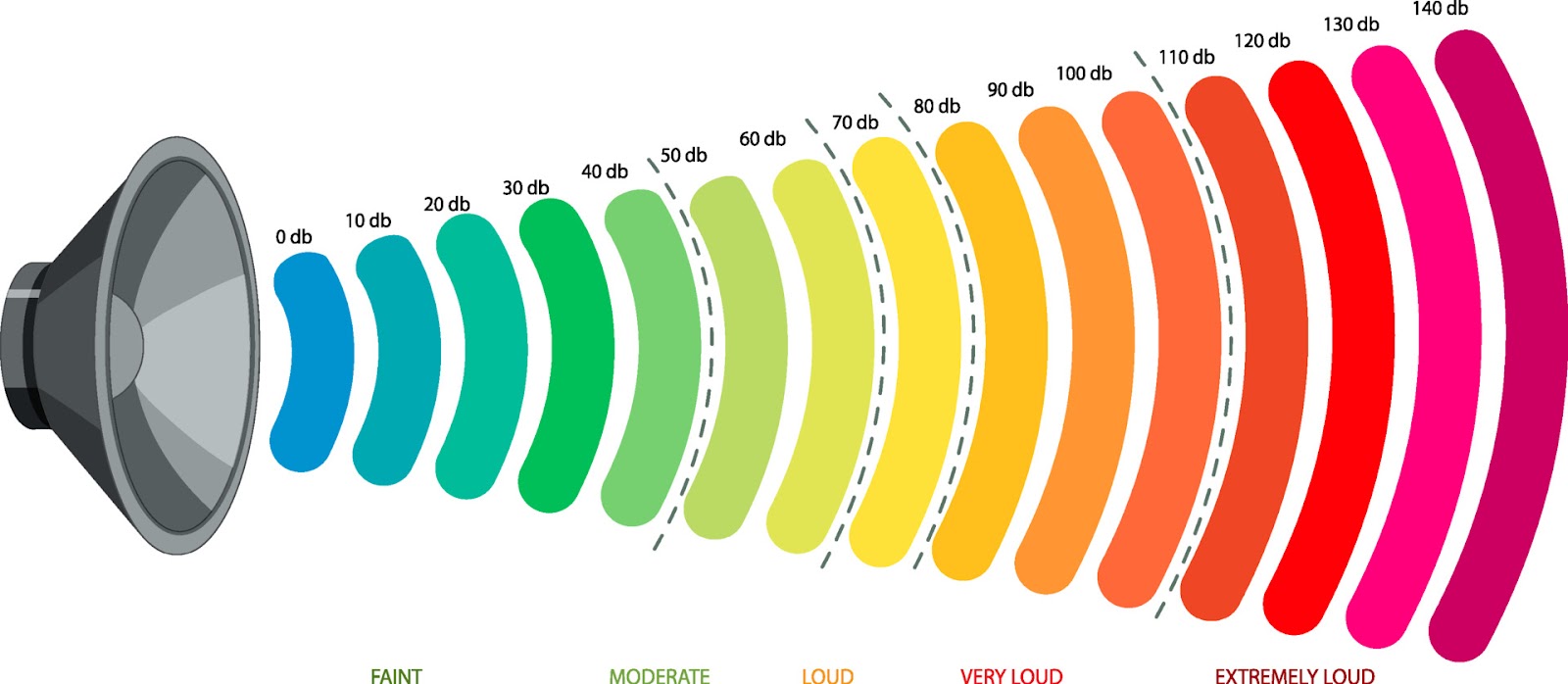

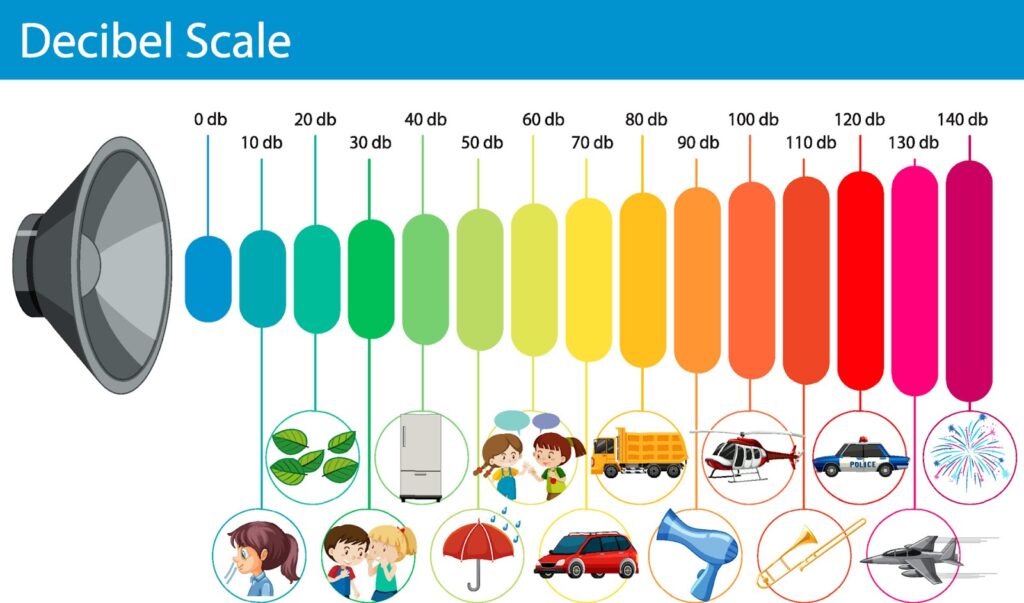

Human hearing is remarkably versatile, capable of detecting the faint sound of a leaf falling and the deafening roar of a jet engine. Because the range of sound pressure the human ear can handle is so vast, a linear scale (like 1 to 1,000,000) would be unwieldy for software and hardware interfaces. Therefore, technology utilizes a logarithmic scale.

In this scale, every increase of 10 dB represents a tenfold increase in signal intensity. To the human ear, however, a 10 dB increase is generally perceived as a “doubling” of loudness. This distinction between physical intensity and perceived volume is the first hurdle software engineers must overcome when designing audio sliders and volume controls in apps and operating systems.
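The relationship between ratios and decibels can be sketched in a few lines of Python. This is an illustrative sketch, not a reference implementation: the function names and the -60 dB slider floor are assumptions chosen for the example, not values taken from any particular operating system.

```python
import math


def power_ratio_to_db(ratio: float) -> float:
    """Convert a power (intensity) ratio to decibels."""
    return 10.0 * math.log10(ratio)


def amplitude_ratio_to_db(ratio: float) -> float:
    """Convert an amplitude (pressure or voltage) ratio to decibels."""
    return 20.0 * math.log10(ratio)


def db_to_amplitude(db: float) -> float:
    """Convert decibels back to a linear amplitude factor."""
    return 10.0 ** (db / 20.0)


def slider_to_gain(position: float, floor_db: float = -60.0) -> float:
    """Map a slider position in [0, 1] to a linear gain with a dB taper.

    floor_db is a hypothetical choice: the attenuation applied just above
    the bottom of the slider. Position 0 is treated as full mute.
    """
    if position <= 0.0:
        return 0.0
    position = min(position, 1.0)
    return db_to_amplitude(floor_db * (1.0 - position))


if __name__ == "__main__":
    print(power_ratio_to_db(10.0))       # tenfold intensity
    print(amplitude_ratio_to_db(2.0))    # doubled amplitude
    print(slider_to_gain(0.5))           # halfway up the slider
```

A slider that maps position to decibels rather than directly to linear gain tends to feel more even to the listener, which is the design choice this sketch illustrates.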

dBSPL vs. dBFS: Physical vs. Digital

In the tech world, we distinguish between “Sound Pressure Level” (dBSPL) and “Full Scale” (dBFS).

- dBSPL measures sound in the physical world—the vibrations hitting a microphone or leaving a speaker.

- dBFS is the standard in digital software. In a digital system, 0 dBFS is the highest level a fixed-point signal can represent; anything that tries to exceed it is “clipped,” which produces digital distortion. Levels are therefore written as negative numbers (e.g., -6 dBFS, -12 dBFS), because they are measured relative to that digital ceiling.
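As a rough illustration of the dBFS idea, the sketch below computes the peak level of a block of samples. It assumes floating-point samples where full scale is 1.0, which is a common convention but not the only one; the function name is made up for this example.

```python
import math


def peak_dbfs(samples) -> float:
    """Return the peak level of float samples (full scale = 1.0) in dBFS.

    Silence, or an empty block, is reported as negative infinity.
    """
    peak = max((abs(s) for s in samples), default=0.0)
    if peak <= 0.0:
        return float("-inf")
    return 20.0 * math.log10(peak)


if __name__ == "__main__":
    # A block whose largest sample is half of full scale sits about 6 dB
    # below the digital ceiling.
    print(peak_dbfs([0.0, 0.5, -0.25]))
```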

Digital Audio and the Evolution of LUFS

As technology moved from analog tape to digital streaming, the decibel alone became insufficient for ensuring a consistent user experience. This led to the development of LUFS (Loudness Units relative to Full Scale), which is now the industry standard for measuring “integrated loudness” in software, broadcasting, and streaming services.

Why Peak Normalization Failed

In the early days of digital audio, software used “peak normalization.” This approach looks for the highest transient (the loudest split-second spike) and turns the whole file up until that spike hits 0 dBFS. However, it does not account for “perceived loudness.” A song with one loud drum hit but very quiet vocals would still sound quiet overall, even though its peak sits at the limit.
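The weakness of peak normalization is easy to reproduce. The sketch below normalizes two made-up signals to the same peak and then compares their RMS levels, used here only as a crude stand-in for perceived loudness. The signals and function names are assumptions for illustration.

```python
import math


def peak_normalize(samples, target_dbfs: float = 0.0):
    """Scale samples so that the largest absolute value hits target_dbfs."""
    peak = max(abs(s) for s in samples)
    if peak == 0.0:
        return list(samples)
    gain = 10.0 ** (target_dbfs / 20.0) / peak
    return [s * gain for s in samples]


def rms_dbfs(samples) -> float:
    """Return the RMS level in dB relative to full scale (1.0)."""
    mean_square = sum(s * s for s in samples) / len(samples)
    if mean_square <= 0.0:
        return float("-inf")
    return 10.0 * math.log10(mean_square)


# One loud spike over quiet material versus a consistently dense signal.
spiky = [0.05] * 999 + [0.5]
dense = [0.5 if i % 2 == 0 else -0.5 for i in range(1000)]

spiky_norm = peak_normalize(spiky)
dense_norm = peak_normalize(dense)

if __name__ == "__main__":
    # Both now share the same peak, yet their average energy differs widely.
    print(max(abs(s) for s in spiky_norm), rms_dbfs(spiky_norm))
    print(max(abs(s) for s in dense_norm), rms_dbfs(dense_norm))
```

Both files end up with identical peaks, but the spiky one carries far less average energy, which is why it still sounds quiet.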

This discrepancy led to the “Loudness Wars,” where developers and engineers used aggressive compression software to make audio as loud as possible, often destroying the dynamic range and quality of the sound in the process.

The Rise of LUFS and the K-Weighting Filter

LUFS (also known as LKFS) was developed to solve this. Unlike a raw peak or RMS reading, a LUFS measurement first passes the signal through a “K-weighting” filter, which approximates the human ear’s frequency response. Our ears are more sensitive to certain mid-to-high frequencies (where human speech resides) and less sensitive to very low bass. The scale itself is still decibel-based: a change of 1 loudness unit corresponds to a change of 1 dB.

By using LUFS, software can measure the “average” loudness over time, providing a value that truly reflects how loud a human will perceive the audio. This technological shift has allowed platforms like Spotify, Apple Music, and Netflix to automatically normalize all content to a specific LUFS level, ensuring that users don’t have to reach for their volume remote every time a new song or show starts.
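To make the K-weighting idea concrete, here is a deliberately simplified sketch of a loudness measurement. It is not a compliant meter: it handles a single mono channel, works only at 48 kHz, and skips the gating that the full integrated-loudness measurement applies. The two sets of filter coefficients are the 48 kHz values published in ITU-R BS.1770; the function names are made up for this example.

```python
import math

# Biquad coefficients (b0, b1, b2, a1, a2) for a 48 kHz sample rate,
# as published in ITU-R BS.1770. Other sample rates need other values.
SHELF_48K = (1.53512485958697, -2.69169618940638, 1.19839281085285,
             -1.69065929318241, 0.73248077421585)
HIGHPASS_48K = (1.0, -2.0, 1.0,
                -1.99004745483398, 0.99007225036621)


def biquad(samples, coeffs):
    """Apply one second-order IIR filter section (direct form I)."""
    b0, b1, b2, a1, a2 = coeffs
    x1 = x2 = y1 = y2 = 0.0
    out = []
    for x in samples:
        y = b0 * x + b1 * x1 + b2 * x2 - a1 * y1 - a2 * y2
        x2, x1 = x1, x
        y2, y1 = y1, y
        out.append(y)
    return out


def ungated_loudness_mono(samples) -> float:
    """K-weight a mono 48 kHz signal and return an ungated loudness value.

    Simplification: no gating and no multichannel weighting, so this will
    not match a real meter on material with long quiet passages.
    """
    weighted = biquad(biquad(samples, SHELF_48K), HIGHPASS_48K)
    mean_square = sum(s * s for s in weighted) / len(weighted)
    if mean_square <= 0.0:
        return float("-inf")
    return -0.691 + 10.0 * math.log10(mean_square)


def sine(frequency_hz: float, seconds: float, rate: int = 48000):
    """Generate a full-scale sine wave for testing."""
    count = int(seconds * rate)
    return [math.sin(2.0 * math.pi * frequency_hz * n / rate)
            for n in range(count)]


if __name__ == "__main__":
    # BS.1770 uses a full-scale 997 Hz sine in one channel as its reference
    # signal, specified to read -3.01 LKFS.
    print(ungated_loudness_mono(sine(997.0, 2.0)))
```

For real work, an established loudness meter or library is the safer choice, since gating and multichannel handling matter on actual program material.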

Tech Tools for Measuring and Managing Loudness

The transition to sophisticated loudness metering has birthed an entire industry of software tools, AI-driven plugins, and hardware monitors designed to help technicians achieve the perfect “loudness footprint.”

Digital Audio Workstations (DAWs) and Professional Plugins

Modern software like Adobe Audition, Ableton Live, and Logic Pro incorporates advanced metering suites. Dedicated hardware meters such as the TC Electronic Clarity M and software plugins such as Youlean Loudness Meter give developers and creators a real-time visualization of their audio’s loudness. These tools don’t just show a bouncing bar; they provide histograms and “Loudness Range” (LRA) data, which tells the user how much contrast exists between the quietest and loudest parts of the audio.

AI-Driven Mastering Tools

Artificial Intelligence has become a major force in audio technology. Platforms like Landr or iZotope Ozone use machine learning algorithms to analyze a track and automatically adjust its loudness to meet the technical specifications of various streaming platforms. These tools draw on large collections of reference tracks and apply EQ and compression far faster than a human could, a task that used to take engineers hours of manual work. This democratization of tech allows even amateur app developers to produce professional-grade audio for their products.

The “Loudness War” and Streaming Algorithms

The way we consume media today is governed by the algorithms of tech giants. Understanding how these companies use loudness units is essential for anyone working in digital media or hardware manufacturing.

How Spotify, YouTube, and Apple Normalize Audio

Each major platform has a “target” loudness. For instance, Spotify targets approximately -14 LUFS. If a tech enthusiast uploads a podcast that is too loud (e.g., -8 LUFS), playback is turned down by about 6 dB so that it lands at -14 LUFS. Conversely, quiet material is turned up, with a limiter or a cap on the boost so that the added gain does not push peaks into clipping.
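The arithmetic behind this kind of normalization is simple enough to sketch. The -14 LUFS default mirrors the Spotify figure mentioned above; the -1 dBFS peak ceiling and the function names are assumptions for illustration and do not describe any platform’s actual backend.

```python
def normalization_gain_db(measured_lufs: float,
                          target_lufs: float = -14.0) -> float:
    """Gain in dB needed to move a track from its measured loudness to the target."""
    return target_lufs - measured_lufs


def capped_gain_db(measured_lufs: float,
                   peak_dbfs: float,
                   target_lufs: float = -14.0,
                   ceiling_dbfs: float = -1.0) -> float:
    """Like normalization_gain_db, but never boosts peaks above the ceiling.

    ceiling_dbfs is a hypothetical safety margin. Attenuation is never
    capped, only positive gain.
    """
    gain = normalization_gain_db(measured_lufs, target_lufs)
    headroom = ceiling_dbfs - peak_dbfs
    return min(gain, headroom) if gain > 0.0 else gain


def db_to_linear(db: float) -> float:
    """Convert a gain in dB to a linear multiplier for the samples."""
    return 10.0 ** (db / 20.0)


if __name__ == "__main__":
    # A loud upload at -8 LUFS is turned down by 6 dB.
    print(normalization_gain_db(-8.0))
    # A quiet track at -20 LUFS with peaks at -3 dBFS can only gain 2 dB
    # before its peaks would reach the assumed -1 dBFS ceiling.
    print(capped_gain_db(-20.0, peak_dbfs=-3.0))
```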

This algorithmic intervention has removed much of the incentive behind the “Loudness War.” Because the software will turn down anything that exceeds the target, there is little technical advantage left in making audio “hyper-loud.” Instead, the tech encourages maintaining “dynamic range,” which results in better sound quality for the end-user.

Impact on Gadget Design and Audio Hardware

This standardization also affects the hardware side of technology. Smartphone manufacturers and smart speaker designers (like Sonos or Bose) can optimize their internal amplifiers and Digital-to-Analog Converters (DACs) because they know the approximate loudness level of the incoming stream. When the software handles the normalization, the hardware can focus on delivering clarity and punch without worrying about unexpected signal spikes that could damage small, sensitive speaker drivers.

Future Trends in Audio Technology

As we move toward more immersive digital environments, the way we measure and perceive loudness is undergoing another transformation.

Spatial Audio and Object-Based Metering

With the rise of Apple’s Spatial Audio and Dolby Atmos, loudness measurement is moving from two channels (stereo) to multi-directional, object-based audio. In a 360-degree soundstage, loudness isn’t just about volume; it’s about “presence” and “distance.” New software tools are being developed to measure “binaural loudness,” ensuring that a sound coming from “behind” the user in a VR environment doesn’t feel jarringly louder than a sound in front of them.

Real-Time Digital Security and Hearing Protection

Digital security and health-tech are also integrating loudness measurement. Modern operating systems, such as iOS and Android, now include “Headphone Safety” features. These tools use real-time decibel monitoring to track a user’s exposure to high-volume audio over time. If the software detects that the “dose” of sound has reached a level that could cause permanent hearing damage, it automatically sends a notification and throttles the hardware’s output. This is a prime example of how units of measure for loudness are being used not just for entertainment, but for user well-being and proactive health tech.
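The idea of a sound “dose” can be illustrated with a small calculation. The sketch below is a generic model and not how iOS or Android implement their features: it assumes the NIOSH recommended exposure limit of 85 dBA for 8 hours with a 3 dB exchange rate, meaning every 3 dB increase halves the permitted listening time. The function names are made up for this example.

```python
def allowed_hours(level_db: float,
                  reference_db: float = 85.0,
                  reference_hours: float = 8.0,
                  exchange_db: float = 3.0) -> float:
    """Permitted daily exposure time at a given level.

    Defaults follow the NIOSH recommended exposure limit (an assumption
    here; a phone vendor may use different reference values).
    """
    return reference_hours / 2.0 ** ((level_db - reference_db) / exchange_db)


def dose_percent(exposures) -> float:
    """Accumulated dose for a list of (level_db, hours) listening sessions.

    100 percent means the daily allowance has been used up.
    """
    return 100.0 * sum(hours / allowed_hours(level)
                       for level, hours in exposures)


if __name__ == "__main__":
    # Two hours at 88 dB plus four hours at 85 dB uses the full allowance.
    print(dose_percent([(88.0, 2.0), (85.0, 4.0)]))
    # At 100 dB the allowance shrinks to a quarter of an hour.
    print(allowed_hours(100.0))
```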

Conclusion

The unit of measure for loudness has evolved from a simple physical calculation to a complex digital algorithm. While the decibel remains the fundamental building block, LUFS has become the operational standard for the modern tech ecosystem. By leveraging these metrics, software developers, AI tools, and hardware engineers are able to create a seamless, safe, and high-quality auditory experience across the billions of devices we use every day. As AI and spatial audio continue to advance, our ability to measure and manipulate sound data will only become more precise, further blurring the line between the physical world and the digital one.
