In an era defined by ubiquitous technology, where data flows at light speed and devices seamlessly connect our world, a fundamental property of matter often goes unacknowledged: electric charge. From the smallest microchip to the largest power grid, the manipulation and understanding of electric charge are at the very heart of how modern technology functions. To truly grasp the essence of electronics, computing, and telecommunications, one must first understand their most basic ingredient, charge, and its standardized unit of measurement. The International System of Units (SI) provides a universal language for science and engineering, ensuring consistency and precision across the globe. For electric charge, this unit is the Coulomb.

This article delves into the significance of the Coulomb, exploring its definition, its historical journey, and its indispensable role in the vast landscape of contemporary technology. We’ll uncover why this seemingly abstract unit is the bedrock upon which our digital world is built, influencing everything from battery life to the very architecture of artificial intelligence.

The Fundamental Nature of Electric Charge

Electric charge is not merely a technical term; it’s a fundamental property of matter, just like mass. Without it, the universe as we know it would be dramatically different, and certainly, our technological advancements would be non-existent. Understanding its nature is the first step towards appreciating its measurement.

Defining Charge: A Core Concept in Electronics

At its most basic, electric charge is an intrinsic property of subatomic particles that causes them to experience a force when placed in an electromagnetic field. There are two types of electric charge: positive and negative. Protons carry a positive charge, while electrons carry a negative charge. Neutrons, as their name suggests, are electrically neutral. The fundamental principle is simple yet profound: like charges repel each other, and opposite charges attract. This interplay of forces is what drives countless phenomena, from the stickiness of static electricity to the intricate operations within a microprocessor. In the context of electronics, the movement of these charged particles, particularly electrons, constitutes electric current, the lifeblood of our devices.

Historical Context: From Static Electricity to Modern Electronics

The exploration of electric charge began millennia ago, with ancient Greeks observing static electricity, like amber attracting feathers when rubbed. However, it wasn’t until the 18th century that figures like Benjamin Franklin contributed significantly, coining the terms “positive” and “negative” charge. A pivotal moment came with the work of Charles-Augustin de Coulomb in the late 18th century. Through meticulous experiments, Coulomb quantified the force between two charged objects, establishing what is now known as Coulomb’s Law. His groundbreaking work laid the mathematical foundation for understanding electrostatics, paving the way for Maxwell’s equations and the subsequent explosion of electrical engineering. Without this rigorous early scientific inquiry, the development of motors, generators, and eventually, the digital revolution, would have been impossible. The evolution from philosophical curiosity to precise scientific measurement underscores humanity’s relentless pursuit of understanding the natural world, a pursuit that directly fuels technological progress.

Why Measurement Matters: Precision in the Digital Realm

In any scientific or engineering discipline, accurate and standardized measurement is paramount. Imagine trying to build a complex circuit if every engineer used a different unit for charge, or if the definition varied from lab to lab. Chaos would ensue, and interoperability would be a dream. The SI system was established to prevent this very scenario, providing a coherent set of units that are globally recognized and precisely defined. For electric charge, the Coulomb ensures that engineers in Tokyo can design components that will flawlessly integrate with systems built by engineers in Berlin. This standardization fosters collaboration, accelerates innovation, and guarantees the reliability and safety of technological products. From calibrating sensitive scientific instruments to ensuring the consistent performance of consumer electronics, the precision offered by the SI unit for charge is an invisible yet indispensable backbone of the modern tech landscape.

The Coulomb: The SI Unit of Electric Charge

Having established the foundational importance of charge, we now turn our attention to its specific SI unit: the Coulomb. This unit, named in honor of Charles-Augustin de Coulomb, is central to quantifying electric charge in all its manifestations.

Defining the Coulomb: More Than Just a Number

A Coulomb (symbolized as ‘C’) is defined through the ampere (A), the SI unit for electric current: one Coulomb is the amount of electric charge transported by a constant current of one Ampere in one second, so 1 C = 1 A·s. This relationship is expressed by the formula Q = I * t, where Q is charge in Coulombs, I is current in Amperes, and t is time in seconds. Since the 2019 revision of the SI, the Ampere is itself defined by fixing the elementary charge at exactly 1.602176634 x 10⁻¹⁹ C, so one Coulomb also corresponds to an exact number of elementary charges.

To put its magnitude into perspective, a single electron carries an incredibly small charge of approximately -1.602 x 10⁻¹⁹ Coulombs. Conversely, one Coulomb represents the charge of approximately 6.242 x 10¹⁸ electrons. This immense number highlights that in most practical technological applications, we are dealing with a vast collective flow of charge carriers rather than individual electrons. The Coulomb provides a manageable scale to quantify these vast quantities of charge, allowing engineers to design circuits and systems effectively.
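The figure of roughly 6.242 x 10¹⁸ electrons per Coulomb can be checked with a few lines of Python. This is a minimal sketch; the constant and function names are illustrative, and the value of the elementary charge is the one fixed by the 2019 SI revision.

```python
# Elementary charge in coulombs (exact by definition since the 2019 SI revision).
ELEMENTARY_CHARGE_C = 1.602176634e-19


def elementary_charges_in(charge_c: float) -> float:
    """Return how many elementary charges make up the magnitude of charge_c."""
    return abs(charge_c) / ELEMENTARY_CHARGE_C


# Number of electrons whose combined charge has a magnitude of one coulomb.
electrons_per_coulomb = elementary_charges_in(1.0)
print(f"{electrons_per_coulomb:.3e}")  # prints 6.242e+18
```

The sign is dropped on purpose: the count depends only on the magnitude of the charge, not on whether it is positive or negative.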

Deriving the Coulomb: A Relationship with Current and Time

The derivation of the Coulomb from the Ampere and second is a testament to the interconnectedness of SI units. Before 2019, the Ampere was defined through the force between two parallel current-carrying wires; since the 2019 revision of the SI, it is defined by fixing the numerical value of the elementary charge. In both cases, charge is expressed as current multiplied by time, which suits practice: counting individual particles is impractical, and in electronics it’s usually the flow of charge (current) that is directly measured and controlled, rather than static charge accumulation. Understanding that Q=I*t allows engineers to calculate battery capacities, energy consumption, and the behavior of dynamic circuits. For instance, a battery rated at 1 Ampere-hour (Ah) delivers 3600 Coulombs of charge over a full discharge (1A * 3600s = 3600C), providing a direct link between a common consumer specification and the fundamental SI unit.
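The Q = I * t relationship and the Ampere-hour conversion can be sketched in Python as follows. The function names are illustrative, and the example assumes an idealized constant current.

```python
SECONDS_PER_HOUR = 3600


def charge_coulombs(current_a: float, time_s: float) -> float:
    """Q = I * t for a constant current I (amperes) flowing for t seconds."""
    return current_a * time_s


def amp_hours_to_coulombs(capacity_ah: float) -> float:
    """Convert a capacity in ampere-hours to coulombs."""
    return charge_coulombs(capacity_ah, SECONDS_PER_HOUR)


def milliamp_hours_to_coulombs(capacity_mah: float) -> float:
    """Convert a capacity in milliampere-hours to coulombs."""
    return amp_hours_to_coulombs(capacity_mah / 1000)


print(amp_hours_to_coulombs(1.0))        # 1 Ah expressed in coulombs
print(milliamp_hours_to_coulombs(2000))  # a 2000 mAh cell expressed in coulombs
```

Real batteries do not deliver a perfectly constant current, so the result is best read as a nominal figure rather than a measured one.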

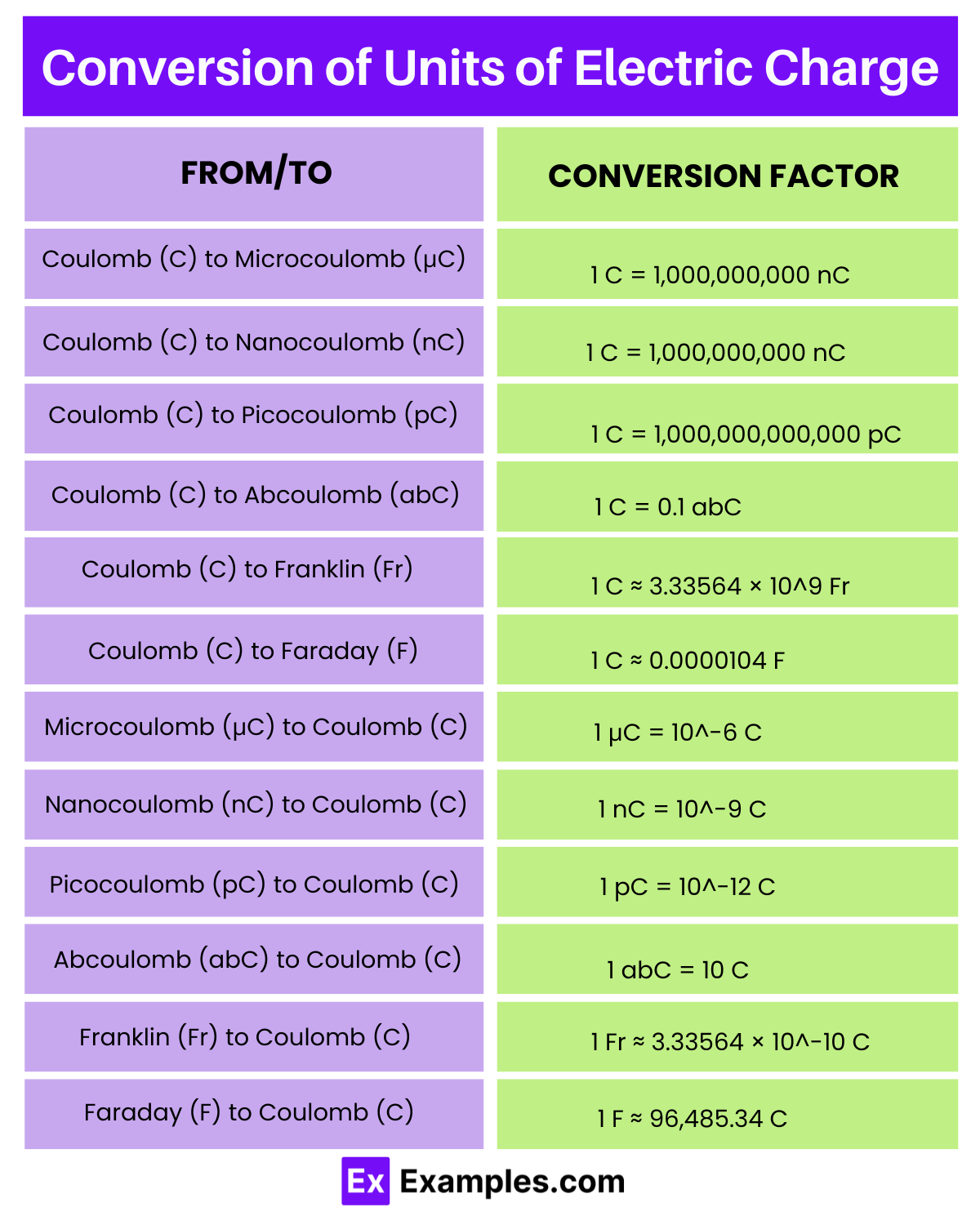

Practical Magnitude: Understanding ‘Small’ and ‘Large’ Charges

While a single Coulomb represents a massive number of elementary charges, in everyday technological contexts, we encounter both fractions of Coulombs and multiples of them. For example, static electricity built up on a person might be in the microcoulomb (µC) range. A typical AA alkaline battery has a capacity of around 2000-3000 mAh, which translates to 7200-10800 Coulombs. A lightning bolt, a dramatic natural phenomenon of charge transfer, can involve charges ranging from 5 to 300 Coulombs.

Understanding these magnitudes helps engineers design components suitable for specific applications. A circuit designed to handle minute sensor signals will manage microcoulomb charges, while power electronics for electric vehicles must efficiently handle currents of hundreds of Amperes, that is, hundreds of Coulombs per second. This practical application of the Coulomb scale is vital for everything from designing robust power supplies to developing sensitive medical diagnostic equipment.

Charge in Modern Technology: Applications and Implications

The Coulomb is not just a theoretical concept; its principles are woven into the very fabric of our technological infrastructure. From the power source that fuels our mobile phones to the intricate logic gates that perform calculations, electric charge is the active agent.

Powering Our Devices: Batteries and Capacitors

At the core of virtually all portable electronics are batteries, which are essentially sophisticated charge storage devices. A battery’s capacity, often expressed in Ampere-hours (Ah) or milliampere-hours (mAh), directly indicates how much charge it can deliver over time. Knowing the charge capacity in Coulombs (or a related unit) allows engineers to estimate battery life, optimize power consumption, and design efficient charging systems. Similarly, capacitors are components designed to store electric charge and release it rapidly. They are ubiquitous in electronic circuits, used for smoothing power supplies, filtering signals, and storing energy for flash photography or sudden power demands. The capacitance of a component, measured in Farads, directly relates to its ability to store charge (Q = C * V, where C is capacitance and V is voltage). Understanding the dynamics of charge storage and discharge in these components is critical for designing reliable, long-lasting, and high-performance devices.
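As a rough illustration of Q = C * V, the following Python sketch computes the charge held by a capacitor and how long that charge would last at a fixed draw. The component values (100 µF, 5 V, 1 mA) are hypothetical, and the constant-current discharge is an idealization: in a real circuit the voltage, and usually the current, falls as the capacitor empties.

```python
def stored_charge_c(capacitance_f: float, voltage_v: float) -> float:
    """Q = C * V: charge in coulombs on a capacitor charged to voltage_v."""
    return capacitance_f * voltage_v


def constant_current_discharge_time_s(charge_c: float, current_a: float) -> float:
    """t = Q / I: time to remove charge_c at an idealized constant current."""
    return charge_c / current_a


# Hypothetical example values, chosen only for illustration.
capacitance_f = 100e-6   # 100 microfarads
voltage_v = 5.0          # charged to 5 volts
load_current_a = 1e-3    # drained at 1 milliampere

charge = stored_charge_c(capacitance_f, voltage_v)
print(f"stored charge: {charge * 1e6:.0f} microcoulombs")
print(f"idealized discharge time: "
      f"{constant_current_discharge_time_s(charge, load_current_a):.2f} s")
```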

Data Transmission and Processing: The Flow of Electrons

The entire digital world relies on the controlled movement and presence of electric charge. In semiconductors, the fundamental building blocks of microprocessors and memory chips, information is represented and processed by the presence or absence of charge carriers (electrons or ‘holes’). Transistors, the tiny switches within integrated circuits, control the flow of charge, allowing for the creation of logic gates that perform computations. In CMOS (Complementary Metal-Oxide-Semiconductor) logic, prevalent in modern CPUs, binary 0s and 1s are represented by voltage levels, which are held by the charge on the small capacitances of circuit nodes; DRAM goes a step further and stores each bit as charge on a dedicated tiny capacitor. Data transmission, whether through copper wires or fiber optics, also involves the manipulation of electromagnetic fields, which are fundamentally linked to the movement of charge. The speed and efficiency of our communication networks and computing devices are directly tied to how effectively we can control and detect minuscule amounts of charge.

Digital Security and Data Integrity

While seemingly less direct, the principles of charge are also critical for digital security and data integrity. Reliable storage of data in non-volatile memory (like flash drives or SSDs) depends on trapping or releasing charge in tiny cells, and maintaining its state despite external interference. Data corruption can occur if these charge states are inadvertently altered. In cryptography and secure communication, the physical properties of charge and electromagnetic signals are considered to prevent side-channel attacks, where information might be leaked through unintended emissions or power fluctuations. Ensuring the stability of power supplies and the integrity of signal transmission, both deeply rooted in the management of electric charge, is paramount to protecting sensitive data and maintaining the reliability of our digital infrastructure.

Advanced Technologies: Quantum Computing and Beyond

Looking to the future, the control of charge becomes even more precise and critical. In the nascent field of quantum computing, the fundamental units of information, qubits, can sometimes be represented by the spin state or charge state of individual electrons. Manipulating these quantum charges with extreme precision at near absolute zero temperatures is at the forefront of scientific and technological innovation. Furthermore, in areas like nanotechnology and bioelectronics, understanding and controlling charge at the molecular level is opening doors to revolutionary materials, sensors, and interfaces between biological systems and electronics. The Coulomb, and the fundamental principles it represents, continues to be a cornerstone for these cutting-edge frontiers.

Related Concepts and Units in Electrical Engineering

To fully appreciate the role of the Coulomb, it’s essential to understand how it interlaces with other fundamental electrical quantities and their respective SI units, forming a coherent framework for electrical engineering.

Current, Voltage, and Resistance: The Ohm’s Law Triangle

The relationship between charge, current, voltage, and resistance forms the bedrock of circuit analysis. As previously mentioned, current (I), measured in Amperes (A), is the rate of charge flow (I = Q/t). Voltage (V), measured in Volts (V), represents the electric potential difference between two points, essentially the “push” or “pressure” that drives charge. Resistance (R), measured in Ohms (Ω), is the opposition to the flow of charge. These three are intricately linked by Ohm’s Law (V = I * R), a fundamental principle in electronics. Understanding how charge flow (current) responds to potential difference (voltage) when impeded by resistance is crucial for designing everything from simple LED circuits to complex power distribution systems.
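The relations I = Q/t and V = I * R can be tried out with a short Python sketch. The LED example uses hypothetical values (a 5 V supply, a 2 V forward voltage, a 20 mA target current) and treats the LED's forward voltage as constant, which is a common simplification rather than an exact model.

```python
def current_a(charge_c: float, time_s: float) -> float:
    """I = Q / t: average current when charge_c flows in time_s seconds."""
    return charge_c / time_s


def voltage_v(current: float, resistance_ohm: float) -> float:
    """Ohm's Law, V = I * R."""
    return current * resistance_ohm


def led_series_resistor_ohm(supply_v: float, led_forward_v: float,
                            led_current_a: float) -> float:
    """R = (V_supply - V_led) / I: resistor that sets the LED current.

    Assumes a fixed LED forward voltage (a simplification).
    """
    return (supply_v - led_forward_v) / led_current_a


# 3600 C flowing in one hour is an average current of 1 A.
print(current_a(3600, 3600))

# Hypothetical LED circuit: 5 V supply, 2 V forward voltage, 20 mA target.
print(f"{led_series_resistor_ohm(5.0, 2.0, 0.020):.0f} ohms")
```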

Energy and Power: Watts and Joules in Relation to Charge

The work done by electric charge and the rate at which it’s done are quantified by energy and power. Electrical energy (E) is measured in Joules (J), and it represents the capacity to do work. It is directly related to charge and voltage (E = Q * V). Electrical power (P), measured in Watts (W), is the rate at which energy is transferred or consumed (P = E/t = V * I). Since current (I) is the flow of charge (Q/t), power can be seen as the rate at which charge is moved across a voltage difference. This connection is vital for assessing the efficiency of electronic devices, designing power supplies, and managing energy consumption in large data centers, where even small inefficiencies in charge utilization can lead to massive energy waste.
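To make E = Q * V and P = V * I concrete, here is a minimal Python sketch that estimates the energy in a battery from its charge capacity. The 2500 mAh capacity and 1.5 V figure are assumed example values, and the calculation treats the voltage as constant, whereas a real cell's voltage sags as it discharges.

```python
SECONDS_PER_HOUR = 3600


def energy_joules(charge_c: float, voltage_v: float) -> float:
    """E = Q * V: energy to move charge_c across a potential difference."""
    return charge_c * voltage_v


def power_watts(voltage_v: float, current_a: float) -> float:
    """P = V * I: rate of energy transfer."""
    return voltage_v * current_a


# Assumed example cell: 2500 mAh at a nominal, constant 1.5 V.
capacity_ah = 2.5
nominal_voltage_v = 1.5

charge_c = capacity_ah * SECONDS_PER_HOUR           # Q = I * t
energy_j = energy_joules(charge_c, nominal_voltage_v)
energy_wh = energy_j / SECONDS_PER_HOUR             # joules to watt-hours

print(f"charge: {charge_c:.0f} C")
print(f"energy: {energy_j:.0f} J ({energy_wh:.2f} Wh)")
print(f"power at 0.1 A: {power_watts(nominal_voltage_v, 0.1):.2f} W")
```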

Capacitance and Inductance: Storing and Controlling Charge

Beyond resistance, circuits also contain elements that store energy in electric fields (capacitors) or magnetic fields (inductors). Capacitance (C), measured in Farads (F), is a component’s ability to store electric charge (Q = C * V). It plays a crucial role in filtering, timing, and energy storage in circuits. Inductance (L), measured in Henrys (H), is a component’s ability to store energy in a magnetic field when current flows through it. While not directly a measure of charge, inductance influences the rate of change of current (and thus charge flow) in a circuit (V = L * dI/dt). Together, capacitors and inductors, along with resistors, form the basis of all complex electronic circuits, enabling sophisticated signal processing, radio frequency communication, and advanced control systems—all fundamentally reliant on the controlled manipulation of electric charge.
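The inductor relation V = L * dI/dt can be approximated numerically by replacing the derivative with a change in current over a short interval. The sketch below does this in Python; the 10 mH inductance and the 2 A change over 1 ms are hypothetical values, and the result is the average voltage over that interval.

```python
def inductor_voltage_v(inductance_h: float, delta_current_a: float,
                       delta_time_s: float) -> float:
    """V = L * dI/dt, with dI/dt approximated by delta_current / delta_time."""
    return inductance_h * delta_current_a / delta_time_s


# Hypothetical example: a 10 mH inductor whose current rises by 2 A in 1 ms.
v = inductor_voltage_v(10e-3, 2.0, 1e-3)
print(f"average inductor voltage: {v:.1f} V")
```

The faster the current is forced to change, the larger the voltage across the inductor, which is why switching inductive loads abruptly can produce voltage spikes.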

Conclusion

The question “What is the SI unit for charge?” leads us down a fascinating path, revealing the Coulomb as more than just a scientific term. It is a foundational concept, a precise measurement, and an invisible enabler of the digital world we inhabit. From the elementary attraction and repulsion of subatomic particles to the complex algorithms executed by advanced AI, the movement and control of electric charge are omnipresent. The Coulomb, as the standardized unit for this fundamental property, provides the common language that allows scientists and engineers worldwide to innovate, build, and connect.

As technology continues to advance at an astonishing pace, pushing the boundaries from nanoscale electronics to quantum computing, the principles governing electric charge will remain at the forefront. The Coulomb, a legacy of rigorous scientific inquiry, continues to be the bedrock upon which the future of technology is meticulously constructed, ensuring precision, interoperability, and endless possibilities in our ever-evolving digital age.
