The landscape of generative artificial intelligence has expanded far beyond the utility-driven applications of productivity and coding assistance. As Large Language Models (LLMs) become more sophisticated, a new niche has emerged: immersive, roleplay-centric conversational interfaces. At the forefront of this movement is Janitor AI, a platform that has gained significant traction for its flexibility, advanced character customization, and technical architecture. Unlike general-purpose assistants built for workplace productivity, Janitor AI is designed around character-driven, creative conversation with comparatively few content restrictions.

The Architecture and Core Functionality of Janitor AI

To understand what Janitor AI is, one must first look at its structural framework. It is essentially a front-end interface that allows users to connect with various back-end Large Language Models. While many users interact with it simply as a website, it functions as a gateway: the site collects the character definition and chat history, sends them to a large model, and displays the fluent, human-like text that the model returns.

Understanding the Role of APIs and LLM Integration

Janitor AI does not operate in a vacuum. Historically, the platform relied heavily on third-party Application Programming Interfaces (APIs). By allowing users to plug in their own API keys from providers like OpenAI (GPT-3.5 and GPT-4) or Anthropic, Janitor AI provides a bridge between raw computational power and a user-friendly roleplay environment. This decoupling of the interface from the model allows for a modular experience where the user can choose the “brain” behind their chatbot based on their specific needs for logic, creativity, or speed.
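To make the decoupling concrete, here is a minimal sketch of how a front end might turn user-supplied connection settings into a request for an OpenAI-style chat completions endpoint. The `BackendConfig` class and `build_chat_request` function are hypothetical names chosen for illustration, not Janitor AI's actual code, and other providers (Anthropic, for example) use a different request format. Nothing is sent over the network here; the function only assembles the request.

```python
from dataclasses import dataclass


@dataclass
class BackendConfig:
    """Hypothetical connection settings a user might enter in a front end."""
    base_url: str  # API root of the provider, or of a proxy in front of it
    api_key: str   # user-supplied key; never hard-code a real one
    model: str     # model identifier understood by the chosen backend


def build_chat_request(config: BackendConfig, user_text: str) -> dict:
    """Assemble the URL, headers, and JSON body for one chat turn."""
    return {
        "url": config.base_url.rstrip("/") + "/chat/completions",
        "headers": {
            "Authorization": f"Bearer {config.api_key}",
            "Content-Type": "application/json",
        },
        "body": {
            "model": config.model,
            "messages": [{"role": "user", "content": user_text}],
        },
    }


# Swapping the "brain" only means changing the config, not the interface code.
config = BackendConfig("https://api.openai.com/v1", "sk-PLACEHOLDER", "gpt-4")
request = build_chat_request(config, "Hello!")
print(request["url"])
```

Because the interface only depends on the config object, pointing it at a different model or a different compatible server is a settings change rather than a code change.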

The Rise of JanitorLLM

As the platform grew, the development team recognized the need for a native solution to circumvent the limitations and strict content policies of external providers. This led to the development of JanitorLLM, the platform’s proprietary model. JanitorLLM is specifically tuned for roleplay and narrative consistency. Tuning of this kind typically means “fine-tuning” a base model on datasets rich in dialogue and descriptive prose, so that the AI is better at holding a character’s voice and personality over long interactions.

Integration with KoboldAI and Reverse Proxies

For tech-savvy users and those seeking to run models locally, Janitor AI supports integration with KoboldAI. This is a crucial feature for the “self-hosting” community. By using a reverse proxy or a local server, users can run open-source models (like Llama or Mistral) on their own hardware while using the Janitor AI interface. This provides a layer of technical autonomy that is rarely seen in mainstream AI applications.

Advanced Customization and Character Engineering

The primary draw of Janitor AI is its depth of character creation. In technical terms, this is prompt engineering at a granular level: the platform lets users define a character’s personality through a set of detailed descriptors and behavioral guidelines that are passed to the model as text.

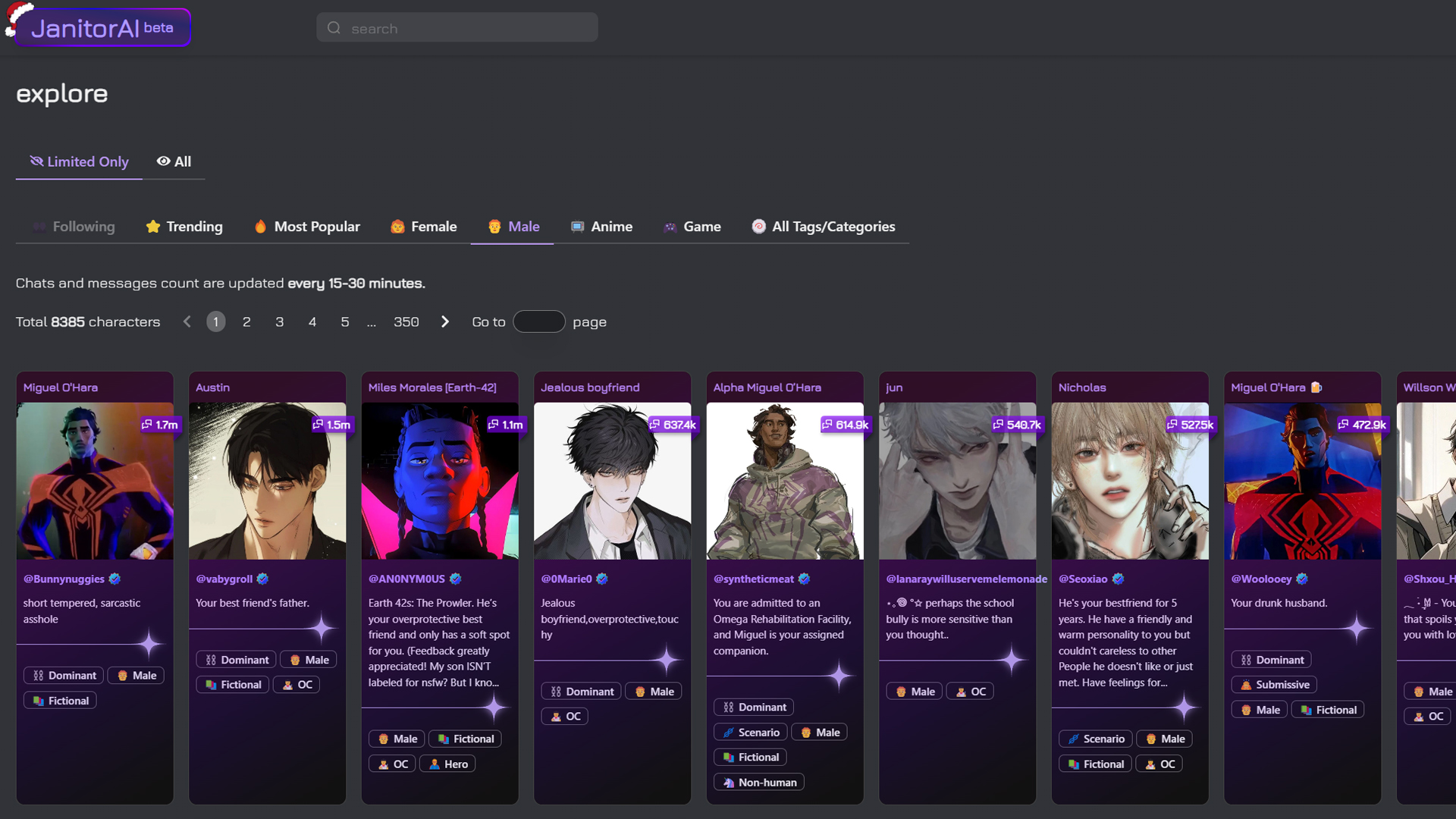

Defining Character Parameters and Metadata

When a user creates a “bot” on Janitor AI, they aren’t just writing a bio; they are configuring how an LLM will behave for that character. This includes the “Core Persona,” which acts as the system prompt, and “Scenario” tags, which set the physical and temporal context of the interaction. From a technical standpoint, these inputs are typically placed at the start of the model’s context, so every generated response is conditioned on them. This strongly steers the output toward the defined character traits, although it does not guarantee that the model never drifts out of character.
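As a rough illustration of how persona and scenario fields could be combined into a system prompt, consider the sketch below. The function name, the field labels, and the wording of the instruction line are all assumptions made for this example; the platform's real prompt template is not public in this article.

```python
def build_system_prompt(name: str, persona: str, scenario: str = "") -> str:
    """Combine character fields into one system prompt string."""
    parts = [
        f"You are {name}. Stay in character at all times.",
        f"Persona: {persona.strip()}",
    ]
    # The scenario is optional, so only include it when the creator wrote one.
    if scenario.strip():
        parts.append(f"Scenario: {scenario.strip()}")
    return "\n\n".join(parts)


prompt = build_system_prompt(
    name="Captain Mara",
    persona="A dry-witted starship captain who never admits to being lost.",
    scenario="The ship has just dropped out of hyperspace in an uncharted system.",
)
print(prompt)
```

The resulting string would be sent as the first message of every request, which is why edits to a character's persona affect all later replies.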

The Logic of First Messages and Example Dialogues

A key component of the technology behind Janitor AI is the use of “few-shot prompting.” By providing a “First Message” and “Example Dialogues,” creators give the model a linguistic template. The AI analyzes the syntax, tone, and vocabulary of these examples to mirror them in its generated responses. This prevents the “generic assistant” tone often found in standard AI models and allows for the creation of characters ranging from historical figures to complex fictional archetypes.
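The few-shot idea can be sketched as a message list in which example exchanges appear as if they were earlier turns of the conversation. This is a simplified illustration using the common system/user/assistant role convention; the function name and the tuple format for examples are hypothetical, and the platform may format examples differently.

```python
from typing import Dict, List, Tuple

Message = Dict[str, str]


def build_few_shot_messages(
    system_prompt: str,
    examples: List[Tuple[str, str]],  # (user line, character reply) pairs
    first_message: str,
    user_text: str,
) -> List[Message]:
    """Place example dialogues before the live conversation as prior turns."""
    messages: List[Message] = [{"role": "system", "content": system_prompt}]
    for user_line, character_line in examples:
        messages.append({"role": "user", "content": user_line})
        messages.append({"role": "assistant", "content": character_line})
    # The first message is the character's opening line in the real chat.
    messages.append({"role": "assistant", "content": first_message})
    messages.append({"role": "user", "content": user_text})
    return messages


messages = build_few_shot_messages(
    system_prompt="You are Captain Mara, a dry-witted starship captain.",
    examples=[("Are we lost?", "Lost? We are exploring, ensign.")],
    first_message="Welcome aboard. Try not to touch anything.",
    user_text="Where are we headed, Captain?",
)
for message in messages:
    print(message["role"], "->", message["content"])
```

Since the model sees the example replies as things it has already said, it tends to continue in the same tone and vocabulary.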

Token Management and Context Windows

One of the technical challenges Janitor AI navigates is the “context window”: the amount of text, measured in tokens, that the model can take into account at once. Every message in a session consumes tokens, and once the window is full, older content has to be dropped or condensed. Platforms like Janitor AI address this with memory management techniques such as “summarization loops” (periodically replacing older exchanges with a shorter summary) or “pinned memories” (facts that are re-sent with every request), so that vital character information isn’t lost as the conversation progresses. This helps keep the narrative coherent even in sessions spanning hundreds of exchanges.
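A simple version of this budgeting can be sketched as follows: pinned entries are always kept, and the remaining budget is filled with the most recent messages. The token estimate used here (about one token per four characters) is a crude assumption for illustration only; a real system would use the model's own tokenizer, and the function names are hypothetical.

```python
from typing import List


def estimate_tokens(text: str) -> int:
    """Crude assumption: roughly one token per four characters."""
    return max(1, len(text) // 4)


def fit_to_context(pinned: List[str], history: List[str], budget: int) -> List[str]:
    """Keep all pinned entries, then as many recent messages as still fit."""
    remaining = budget - sum(estimate_tokens(entry) for entry in pinned)
    kept: List[str] = []
    # Walk backwards so the newest messages are considered first.
    for message in reversed(history):
        cost = estimate_tokens(message)
        if cost > remaining:
            break  # stop at the first message that no longer fits
        kept.append(message)
        remaining -= cost
    kept.reverse()  # restore chronological order
    return pinned + kept


pinned = ["Mara is the captain of the Kestrel."]
history = [
    "A long early scene that set up the voyage in great detail.",
    "Mara orders a course change.",
    "The ensign asks why.",
]
print(fit_to_context(pinned, history, budget=20))
```

A summarization step would slot in where the loop breaks: instead of discarding the older messages, they would be condensed into a short summary and stored as a new pinned entry.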

Technical Comparison: Janitor AI vs. Competitive Chatbot Platforms

In the competitive landscape of AI tools, Janitor AI occupies a unique position compared to giants like Character.AI or ChatGPT. The differentiation lies primarily in its approach to content moderation and model flexibility.

Filter Logic and Content Moderation Systems

The most significant technical distinction between Janitor AI and platforms like Character.AI is the approach to “NSFW” (Not Safe For Work) filters. While mainstream platforms implement hard-coded safety layers that intercept and block certain outputs, Janitor AI offers an “unfiltered” environment. This is achieved either by using models that have not been trained toward refusals through RLHF (Reinforcement Learning from Human Feedback) or similar alignment tuning, or by allowing the use of APIs that permit a broader range of creative expression.

Latency and Response Optimization

From a performance perspective, Janitor AI focuses on optimizing the “Time to First Token” (TTFT). For an immersive experience, users require near-instantaneous feedback. The platform utilizes efficient load balancing to manage the high volume of traffic, particularly when using its native JanitorLLM. By optimizing the inference engine, Janitor AI minimizes the lag that often plagues high-parameter models, providing a more “human” conversational tempo.
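Time to First Token is straightforward to measure on the client side when responses are streamed. The sketch below times how long it takes for the first chunk to arrive from any iterable of text chunks. The `fake_stream` generator is a stand-in that simulates server delay with `time.sleep`; it is not a real API client, and both function names are made up for this example.

```python
import time
from typing import Iterable, Iterator, Tuple


def measure_ttft(stream: Iterable[str]) -> Tuple[float, str]:
    """Return (seconds until the first chunk arrived, full response text)."""
    start = time.perf_counter()
    ttft = None
    chunks = []
    for chunk in stream:
        if ttft is None:
            ttft = time.perf_counter() - start  # first chunk has arrived
        chunks.append(chunk)
    if ttft is None:
        raise ValueError("stream produced no chunks")
    return ttft, "".join(chunks)


def fake_stream(delay: float) -> Iterator[str]:
    """Stand-in for a streaming response: waits, then yields two chunks."""
    time.sleep(delay)
    yield "Hello"
    yield ", traveler."


ttft, text = measure_ttft(fake_stream(0.05))
print(f"TTFT: {ttft * 1000:.0f} ms, response: {text!r}")
```

Measuring TTFT separately from total response time matters because streaming lets the user start reading while the rest of the reply is still being generated.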

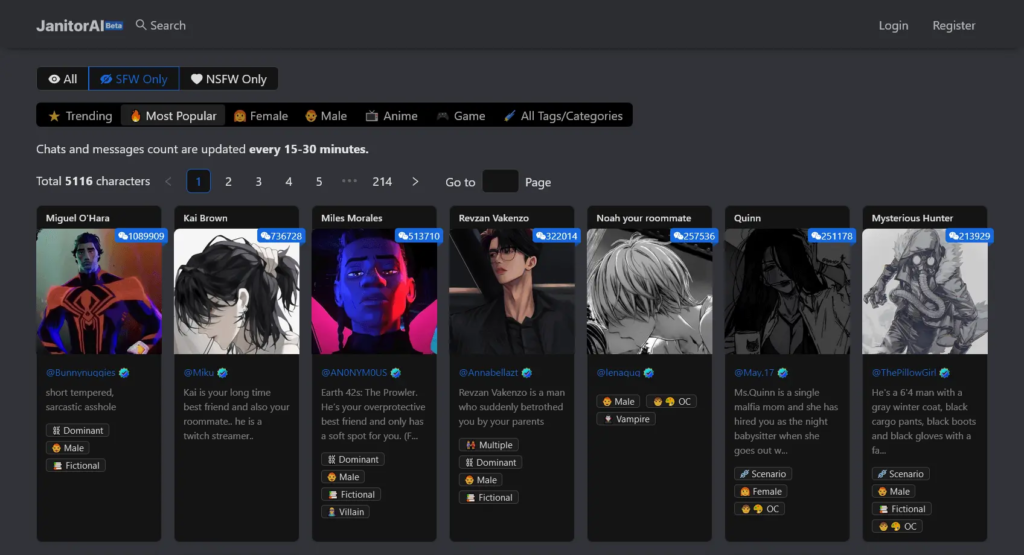

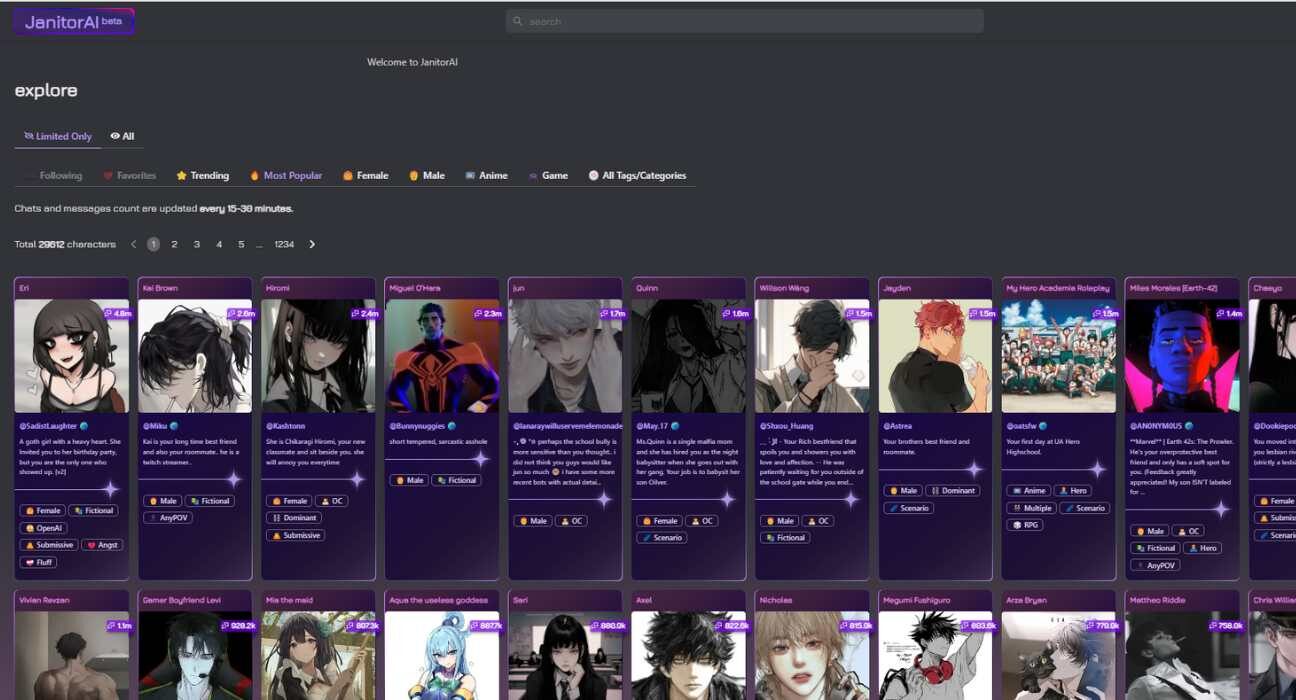

User Interface (UI) and User Experience (UX) Design

While the underlying technology is complex, the Janitor AI interface is designed for accessibility. The use of “Character Cards,” which contain all the metadata and prompts for a specific bot, allows for easy sharing and portability. This modular approach to AI entities is a significant trend in the tech industry, where “portable AI personalities” are becoming a standard for community-driven development.
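Portability of this kind usually comes down to serializing a character's fields into a plain file format such as JSON. The sketch below shows a round trip under that assumption. The `CharacterCard` fields are hypothetical and chosen to mirror the concepts discussed in this article; they are not the platform's actual card schema.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import List


@dataclass
class CharacterCard:
    """Hypothetical card layout; real card formats define their own fields."""
    name: str
    persona: str
    scenario: str = ""
    first_message: str = ""
    example_dialogues: List[str] = field(default_factory=list)


def export_card(card: CharacterCard) -> str:
    """Serialize a card to a JSON string that can be shared as a file."""
    return json.dumps(asdict(card), ensure_ascii=False, indent=2)


def import_card(text: str) -> CharacterCard:
    """Rebuild a card from JSON produced by export_card."""
    return CharacterCard(**json.loads(text))


card = CharacterCard(
    name="Captain Mara",
    persona="A dry-witted starship captain.",
    first_message="Welcome aboard. Try not to touch anything.",
    example_dialogues=["User: Are we lost?\nMara: We are exploring, ensign."],
)
shared = export_card(card)
print(import_card(shared) == card)
```

Because the card is just structured text, it can be posted, versioned, or imported into any other tool that understands the same fields.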

Digital Security and User Privacy in Generative AI

As with any platform involving high levels of personal interaction and data exchange, the technical security of Janitor AI is a primary concern for its developers and users alike.

Data Encryption and Conversation Privacy

Janitor AI handles a massive amount of conversational data. To protect user privacy, the platform employs industry-standard encryption protocols (SSL/TLS) for data in transit. Furthermore, for users connecting via external APIs (like OpenAI), Janitor AI acts as a pass-through, meaning the conversation logs are subject to the privacy policies of the API provider. The introduction of JanitorLLM has allowed for more localized data handling, giving the platform more control over how user logs are stored or anonymized.

The Risks of API Key Exposure

A unique security aspect of Janitor AI is the management of user-provided API keys. Since these keys are billing credentials (anyone who holds one can run up usage charges on the owner’s account), Janitor AI must ensure they are stored securely. Tech-literate users often advocate for “proxy” setups to add a layer of separation between their personal billing accounts and the platform, a practice that Janitor AI supports through its flexible connection settings.
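On the user's side, two habits reduce the risk of key exposure when running a proxy or script: reading the key from the environment rather than writing it into source files, and masking it whenever it is logged. The sketch below illustrates both. The environment variable name `LLM_API_KEY` and the helper names are assumptions for this example and say nothing about how Janitor AI itself stores keys.

```python
import os


def load_api_key(env_var: str = "LLM_API_KEY") -> str:
    """Read the key from the environment instead of hard-coding it."""
    key = os.environ.get(env_var, "")
    if not key:
        raise RuntimeError(f"Set the {env_var} environment variable first")
    return key


def mask_key(key: str, visible: int = 4) -> str:
    """Hide all but the last few characters so logs never show the full key."""
    if len(key) <= visible:
        return "*" * len(key)
    return "*" * (len(key) - visible) + key[-visible:]


# Placeholder value for demonstration only; a real key would be set outside the code.
os.environ.setdefault("LLM_API_KEY", "sk-PLACEHOLDER1234")
print("Using key:", mask_key(load_api_key()))
```

Setting a spending limit on the provider account is a useful complement, since masking only protects against accidental disclosure in logs and screenshots.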

Local Hosting and Open-Source Alternatives

For those who prioritize digital sovereignty, the tech community surrounding Janitor AI often explores the use of “Local LLMs.” By utilizing tools like LM Studio or Ollama in conjunction with Janitor’s interface, users can run the model itself on their own GPUs. Inference then happens on the user’s hardware rather than on a cloud provider’s servers, which offers far stronger privacy than a fully cloud-based setup, although how much data still passes through the hosted interface depends on how the connection is configured.
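For a sense of what talking to a local model looks like, the sketch below builds a request for Ollama's chat endpoint, which by default listens on `http://localhost:11434`. It assumes Ollama is installed and that a model has already been pulled; the model name `llama3` is only an example, and the helper name is hypothetical. The request is constructed but not sent, so the code runs without a server.

```python
import json
import urllib.request


def build_local_chat_request(
    prompt: str,
    model: str = "llama3",  # example name; use whichever model is installed
    host: str = "http://localhost:11434",
) -> urllib.request.Request:
    """Build (but do not send) a chat request for a local Ollama server."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "stream": False,  # ask for one complete JSON reply, not a stream
    }
    return urllib.request.Request(
        url=f"{host}/api/chat",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


request = build_local_chat_request("Introduce yourself in one sentence.")
print(request.get_method(), request.full_url)
# With a server running, the reply could be fetched like this:
# with urllib.request.urlopen(request) as response:
#     print(json.loads(response.read())["message"]["content"])
```

The target address is `localhost`, so when this request is sent directly from the user's machine the prompt and reply never leave it.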

The Future of Roleplay-Driven AI Technology

The success of Janitor AI signals a shift in how society interacts with software. We are moving from “AI as a tool” to “AI as a companion or creative partner.” Looking forward, several technological advancements are likely to define the next phase of this platform.

Multimodal Integration

The next frontier for Janitor AI is multimodality: the ability for the AI to process and generate not just text, but images and voice. We are already seeing the integration of image generators such as Stable Diffusion for character avatars and text-to-speech (TTS) engines for audible responses. This requires significantly more computational overhead but results in a far more immersive interface.

Scaling Infrastructure for Global Traffic

As Janitor AI’s user base grows into the millions, the technical challenge shifts toward scaling. This involves the use of distributed computing and edge AI to reduce latency for users across different geographic regions. The transition from a niche roleplay site to a robust AI ecosystem requires a backend capable of handling petabytes of conversational data and billions of daily tokens.

In conclusion, Janitor AI is more than just a chatbot website; it is a sophisticated demonstration of how Large Language Models can be tailored for specific, high-engagement niches. By providing a blend of proprietary modeling, API flexibility, and deep character customization, it has set a benchmark for the future of interactive digital media. As the technology continues to evolve, the line between algorithmic response and creative dialogue will only continue to blur, cementing Janitor AI’s place in the vanguard of the generative AI revolution.
