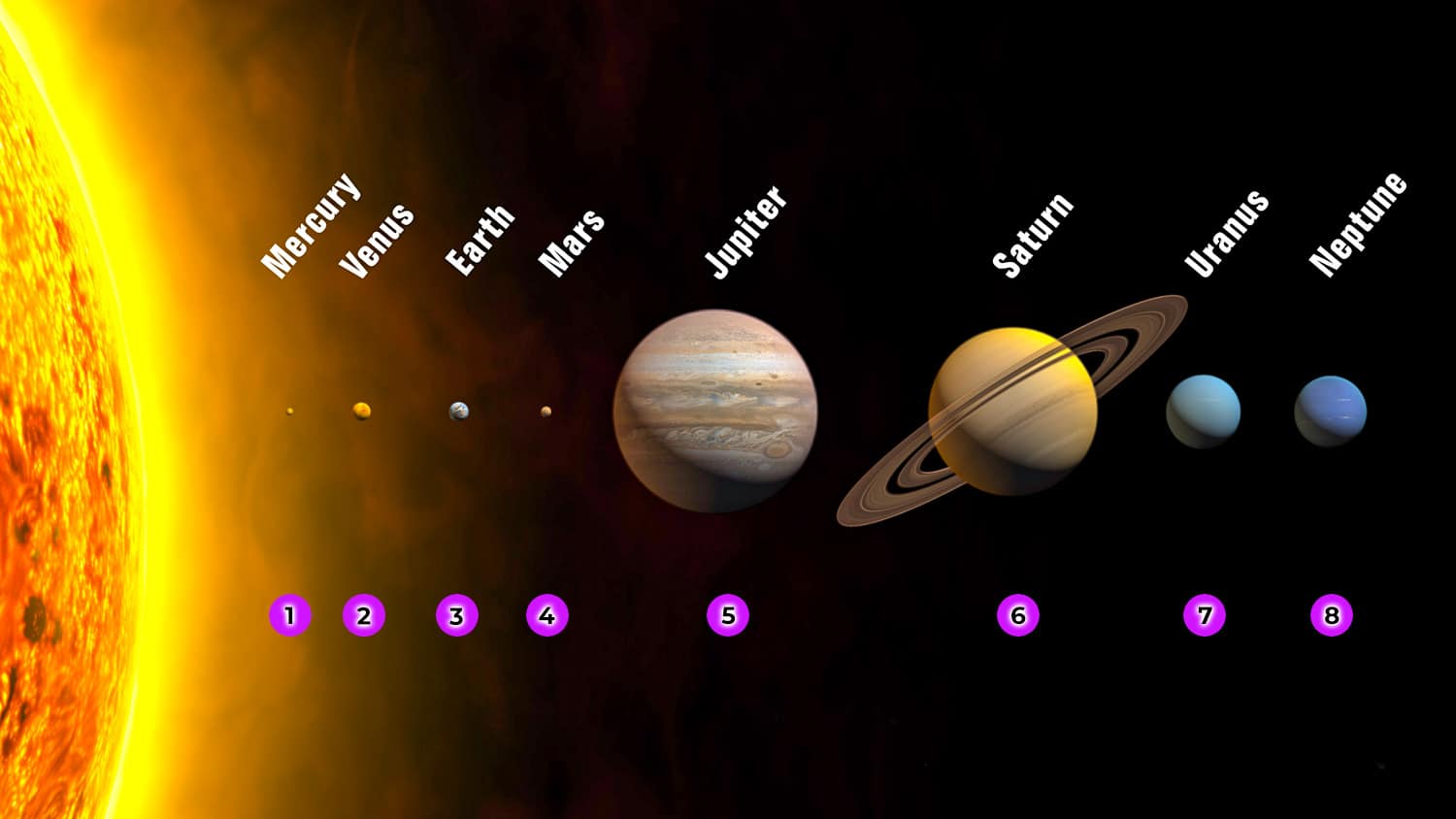

In the vast, expanding cosmos of the global technology landscape, we have long operated under a “Copernican” model where the Cloud was the sun—a massive, centralized source of power and storage around which all our devices and applications orbited. However, as the demand for real-time processing, artificial intelligence, and instantaneous data analysis reaches a fever pitch, the industry is witnessing a dramatic gravitational shift. We are no longer content to send data millions of digital miles away to a centralized data center and wait for a response. Instead, we are building the “planet closest to the sun.” In technological terms, this is Edge Computing: the infrastructure that lives at the absolute frontier of data generation.

Just as Mercury endures the most intense heat and energy due to its proximity to the sun, Edge Computing operates in the high-pressure environment where physical reality meets digital processing. By moving computation away from distant cloud servers and placing it directly on or near the devices generating the data, we are redefining the limits of latency, bandwidth, and autonomous intelligence.

1. Defining the Proximity: The Architecture of Edge Computing

To understand why the “planet closest to the sun” is the most vital piece of the modern tech stack, we must first define the architectural shift from centralized to decentralized systems. For the past decade, the prevailing trend was “Cloud First.” Whether it was a photo uploaded to Instagram or a complex financial algorithm, the data traveled from the device, through various gateways, to a massive server farm, and back.

The Shift from Centralized Clouds to Localized Power

While the cloud offers nearly unlimited storage and massive scalability, it is bound by the laws of physics, specifically the speed of light. For applications like autonomous driving or robotic surgery, a 100-millisecond delay in data transmission can be the difference between success and catastrophe. Edge computing addresses this by bringing “the sun” (the processing power) to the “planet” (the local device).
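To put a number on the speed-of-light limit, here is a rough back-of-the-envelope sketch. It assumes light in optical fiber travels at about two thirds of its vacuum speed, and the three distances are invented for illustration. It estimates only the physical floor on round-trip time; real networks add routing, queuing, and processing delay on top.

```python
# Back-of-the-envelope propagation delay. Distances and the fiber factor are assumptions.
SPEED_OF_LIGHT_KM_S = 299_792.458   # speed of light in vacuum, km/s
FIBER_FACTOR = 2 / 3                # assumed: light in fiber moves at roughly 2/3 of c


def min_round_trip_ms(distance_km: float) -> float:
    """Lower bound on round-trip time over fiber, ignoring routing and processing."""
    one_way_s = distance_km / (SPEED_OF_LIGHT_KM_S * FIBER_FACTOR)
    return 2 * one_way_s * 1000


if __name__ == "__main__":
    hypothetical_sites = [
        ("on-site edge node", 1),
        ("regional data center", 500),
        ("distant cloud region", 4000),
    ]
    for label, km in hypothetical_sites:
        print(f"{label:>22}: {min_round_trip_ms(km):7.3f} ms minimum round trip")
```

Under these assumptions, a server 4,000 km away costs roughly 40 ms per round trip before any work is done, while a node 1 km away costs about a hundredth of a millisecond.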

In this new paradigm, we see a tiered approach to technology. At the center remains the Heavy Cloud (Deep Storage and Training), but the “Inner Orbit” is now occupied by Edge Gateways and On-Device Processing. This proximity allows for “Mercury Logic”: the ability to filter, process, and act upon data in microseconds without ever needing to contact the home base.

Mercury Logic: Minimizing Latency in High-Heat Environments

In tech, “heat” is data density. Modern industrial environments, such as smart factories or 5G-enabled cities, generate terabytes of data every hour. Moving all this data to the cloud is not only slow but prohibitively expensive in terms of bandwidth. By implementing Edge Computing, companies can practice “Data Pruning” at the source. This means only the most critical insights are sent to the central cloud, while the immediate, operational decisions are handled locally. This efficiency is what allows modern technology to survive and thrive in data-saturated environments.
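As a minimal sketch of what “Data Pruning” at the source could look like, the snippet below reduces a batch of raw readings to a small summary plus any out-of-range values. The function name `prune_readings`, the thresholds, and the sample temperatures are all hypothetical; a real deployment would choose its own summary fields and alert rules.

```python
from statistics import mean


def prune_readings(readings, low, high):
    """Reduce one batch of sensor readings to a compact summary plus alerts.

    Only the returned dictionary would travel upstream; raw readings stay local.
    """
    if not readings:
        raise ValueError("readings must not be empty")
    # Keep the index and value of every reading outside the accepted range.
    alerts = [(i, r) for i, r in enumerate(readings) if not (low <= r <= high)]
    return {
        "count": len(readings),
        "mean": mean(readings),
        "min": min(readings),
        "max": max(readings),
        "alerts": alerts,
    }


batch = [70.1, 70.4, 69.8, 95.2, 70.0]   # made-up temperature samples
summary = prune_readings(batch, low=60.0, high=80.0)
print(summary)
```

Here five raw values become one small record, and the single abnormal reading (95.2 at index 3) is the only individual data point that leaves the device.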

2. The Hardware Revolution: Gadgets and Silicon at the Orbit’s Edge

The transition to an edge-centric world has necessitated a complete overhaul of hardware design. We are moving away from “thin clients” (devices that are essentially just screens for cloud services) and toward “thick” or “intelligent” devices equipped with specialized silicon designed to handle heavy workloads locally.

Specialized AI Chips and NPU Integration

The “planet closest to the sun” requires a specialized “atmosphere” to survive. In the tech world, this atmosphere is composed of Neural Processing Units (NPUs) and Application-Specific Integrated Circuits (ASICs). Companies like Apple, NVIDIA, and Qualcomm are no longer just building general-purpose CPUs; they are designing chips specifically for machine learning at the edge.

When you use Face ID on an iPhone or real-time language translation on a Google Pixel, the “planet” (your phone) is doing the heavy lifting. The AI model isn’t living in a data center in Virginia; it’s living in the silicon in your pocket. This shift in gadget architecture is a major driver behind the current boom in AI-capable hardware, as it improves privacy and speed by keeping the data on-device.

IoT Sensors: The Satellites of the Local Ecosystem

If the edge server is the planet, then Internet of Things (IoT) sensors are the satellites that feed it information. In a professional tech environment, these sensors are becoming increasingly sophisticated. We are seeing “Smart Sensors” that don’t just record temperature or vibration but actually analyze the waveforms locally using MicroPython or specialized firmware.

These devices represent the absolute furthest reaches of the tech ecosystem. By processing information at the sensor level, we reduce the “noise” of the digital universe, ensuring that the central processing unit only deals with “signal.” This hierarchy of hardware is essential for the scalability of the next generation of digital infrastructure.
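To make sensor-level analysis concrete, here is a small sketch that turns a raw vibration waveform into two numbers: its RMS amplitude and its dominant frequency. It uses a naive discrete Fourier transform and only the standard `math` module, so it is kept deliberately simple; production firmware would more likely use an optimized FFT. The 50 Hz test signal and the 1 kHz sample rate are assumed values for illustration.

```python
import math


def rms(samples):
    """Root-mean-square amplitude of a waveform."""
    return math.sqrt(sum(s * s for s in samples) / len(samples))


def dominant_frequency(samples, sample_rate_hz):
    """Frequency (Hz) of the strongest non-DC component, found with a naive DFT."""
    n = len(samples)
    best_bin, best_power = 0, 0.0
    for k in range(1, n // 2 + 1):   # skip k = 0, the constant (DC) offset
        re = sum(s * math.cos(2 * math.pi * k * i / n) for i, s in enumerate(samples))
        im = sum(s * math.sin(2 * math.pi * k * i / n) for i, s in enumerate(samples))
        power = re * re + im * im
        if power > best_power:
            best_bin, best_power = k, power
    return best_bin * sample_rate_hz / n


# Synthetic vibration signal: a 50 Hz sine sampled at 1 kHz for 0.2 s (assumed values).
RATE = 1000
signal = [math.sin(2 * math.pi * 50 * i / RATE) for i in range(200)]
report = {"rms": round(rms(signal), 3), "dominant_hz": dominant_frequency(signal, RATE)}
print(report)
```

The sensor would then transmit only the two-field report instead of all 200 samples, which is the “signal, not noise” idea in miniature.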

3. Digital Security in the Inner Orbit: Protecting the Frontier

One of the most significant challenges of moving closer to the data source is security. In the traditional cloud model, the “perimeter” was well-defined and comparatively easy to defend. You built a metaphorical wall around the data center. But when your processing power is scattered across thousands of edge devices (on street lamps, in cars, and in handheld gadgets), the attack surface grows with every node you deploy.

Protecting Data at the Source

Paradoxically, being the “planet closest to the sun” can actually enhance security if handled correctly. By processing data locally, sensitive information never has to travel across the open internet. For example, in digital healthcare, a wearable device that analyzes a patient’s heart rate locally and only sends a “distress signal” to the cloud is inherently more secure than one that streams raw biometric data 24/7.

The tech industry is currently adopting “Zero Trust” architectures to manage this. In a Zero Trust Edge environment, every device is treated as a potential threat, and every data packet must be verified, even if it is within the local network. This “Trust nothing, verify everything” approach is widely seen as the most practical way to secure the decentralized frontier.
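One small ingredient of “verify everything” is checking that each message really came from a known device and was not altered on the way. The sketch below does this with an HMAC-SHA256 tag from Python's standard library. The per-device key, the device ID, and the payload fields are hypothetical, and a real Zero Trust design would add much more (device certificates, key rotation, replay protection, encrypted transport).

```python
import hashlib
import hmac
import json


def sign_message(device_key: bytes, payload: dict) -> dict:
    """Attach an HMAC-SHA256 tag so the receiver can check origin and integrity."""
    body = json.dumps(payload, sort_keys=True).encode("utf-8")
    tag = hmac.new(device_key, body, hashlib.sha256).hexdigest()
    return {"body": body.decode("utf-8"), "tag": tag}


def verify_message(device_key: bytes, message: dict) -> bool:
    """Recompute the tag and compare in constant time; reject on any mismatch."""
    expected = hmac.new(
        device_key, message["body"].encode("utf-8"), hashlib.sha256
    ).hexdigest()
    return hmac.compare_digest(expected, message["tag"])


key = b"per-device-secret-for-illustration-only"   # hypothetical key
msg = sign_message(key, {"device_id": "sensor-17", "temp_c": 21.5})
print(verify_message(key, msg))         # genuine message

tampered = dict(msg, body=msg["body"].replace("21.5", "99.9"))
print(verify_message(key, tampered))    # body altered after signing
```

The genuine message verifies and the altered one is rejected, even though both arrive from inside the same local network.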

The Challenges of Decentralized Security Architectures

Despite the benefits, the logistical “heat” of managing security at the edge is intense. Firmware updates, patch management, and physical tampering protection become massive hurdles when you have 10,000 edge nodes instead of one central server. The rise of “Edge Orchestration” software tools—such as Kubernetes for the Edge—is the tech industry’s answer to this. These tools allow IT professionals to manage the entire “solar system” of devices from a single console, pushing security updates to the furthest planets in the blink of an eye.

4. The Expanding Gravity of the Edge: Future Use Cases and Industry Trends

As we look toward the next decade, the “gravity” of Edge Computing is pulling more industries into its orbit. The convergence of 5G, AI, and Edge Computing is creating a “perfect storm” of technological capability that will render traditional centralized models obsolete for many high-performance applications.

Autonomous Vehicles and Real-Time Processing

An autonomous vehicle is essentially a high-performance data center on wheels. It is perhaps the best example of the “planet closest to the sun.” To navigate a busy intersection, the car must process inputs from LiDAR, cameras, and radar in real-time. It cannot wait for a cloud server to tell it to hit the brakes.
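A quick worked calculation shows why the car cannot wait: it keeps moving while a decision is in flight. The sketch below assumes a constant speed of 100 km/h and three illustrative delays; both are chosen purely for the example.

```python
def distance_during_delay_m(speed_kmh: float, delay_ms: float) -> float:
    """Metres travelled at constant speed while waiting delay_ms for a decision."""
    speed_m_s = speed_kmh * 1000 / 3600
    return speed_m_s * delay_ms / 1000


for delay in (1, 10, 100):   # illustrative delays in milliseconds
    metres = distance_during_delay_m(100, delay)
    print(f"{delay:>4} ms at 100 km/h -> {metres:.2f} m")
```

At 100 km/h, a 100 ms wait means the vehicle travels roughly 2.8 m before it can react, compared with about 3 cm for a 1 ms on-board decision.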

The future of this tech lies in Vehicle-to-Everything (V2X) communication, where cars communicate not just with the cloud, but with the “Edge” built into the traffic lights and roads themselves. This localized network creates a high-speed, low-latency “inner circle” of communication that makes autonomous transport viable and safe.

The Role of 5G in Shrinking the Distance

5G is the gravitational force that keeps the edge ecosystem together. While 4G was built largely for people browsing the web, 5G was designed with machine-to-machine communication in mind. Its high bandwidth and low latency are the “connective tissue” that allows the planet closest to the sun to stay in constant sync with the rest of the galaxy.

With 5G, we are seeing the rise of “Private MEC” (Multi-access Edge Computing). Large enterprises, such as shipping ports or mining operations, are building their own private 5G networks to power edge-based automation. This allows them to maintain a “closed loop” of data, ensuring maximum speed and total control over their digital assets.

Conclusion: The New Solar System of Technology

The question “what is the planet closest to the sun?” yields a simple astronomical answer: Mercury. But in the professional world of technology, the answer is more profound. The “planet” closest to the source of data, energy, and action is the Edge.

We are moving away from an era of digital distance and toward an era of digital proximity. This shift is not merely a trend; it is a fundamental restructuring of how we build software, design hardware, and secure our digital lives. By embracing the power of Edge Computing, we are moving the “sun” of processing power to where it is needed most—right at the center of our lives, our businesses, and our devices. As we continue to innovate, the organizations that master this inner orbit will be the ones that exert the most gravity in the future tech economy.
