In the modern digital landscape, data is the lifeblood of every software application, artificial intelligence model, and enterprise system. However, the value of data is not inherent in its quantity, but in its accuracy and reliability. This is where data validation plays a critical role. Data validation is the technical process of ensuring that data entered into a system meets predefined standards of quality, format, and logic before it is processed or stored. Without rigorous validation, systems fall victim to the “Garbage In, Garbage Out” (GIGO) principle, where flawed input inevitably leads to erroneous output, compromised security, and system failures.

For software engineers, data scientists, and IT professionals, understanding the nuances of data validation is essential for building robust, scalable, and secure digital environments. This guide explores the foundational principles, technical implementations, and the evolving role of validation in the era of AI and Big Data.

Understanding the Core Foundations of Data Validation

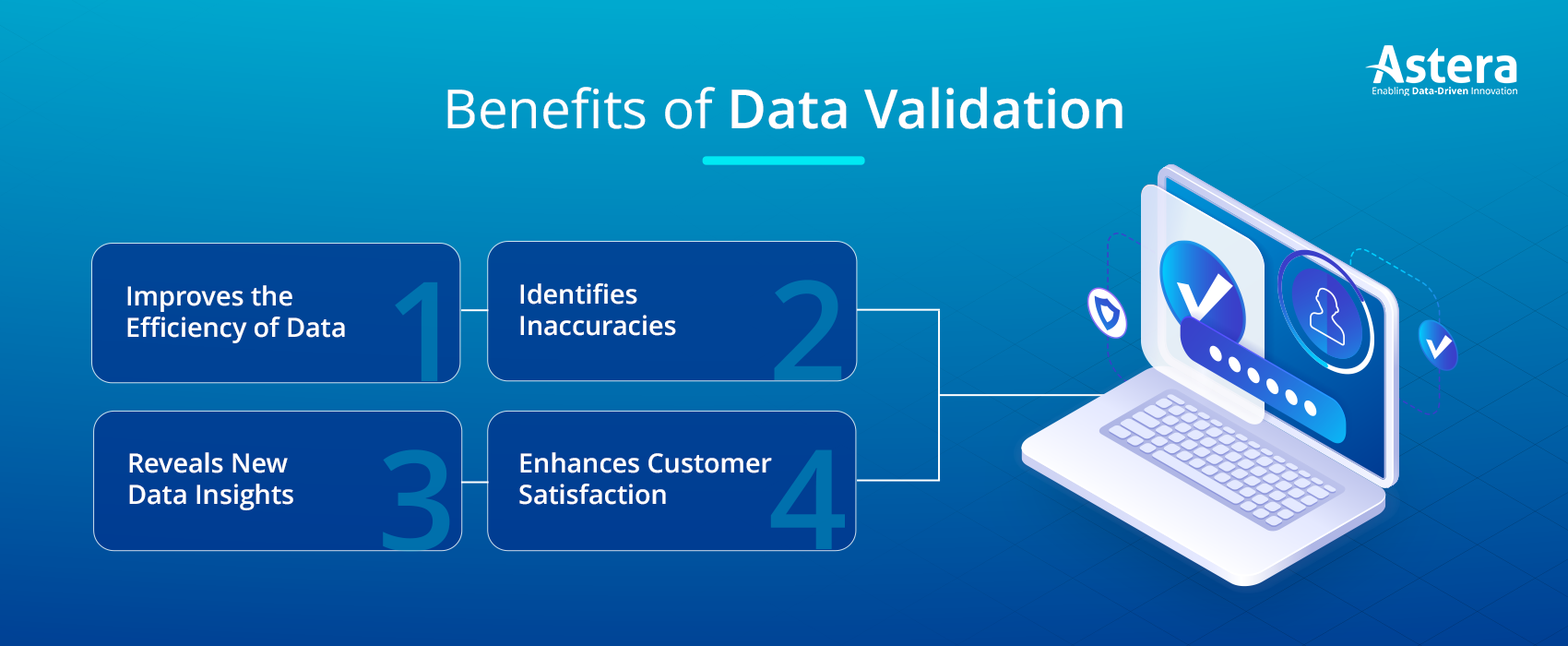

At its essence, data validation is a filter designed to catch errors at the earliest possible stage of the data lifecycle. It serves as a gatekeeper, ensuring that only “clean” data moves from the point of entry (such as a user interface or an API) to the storage layer (such as a database).

The “Garbage In, Garbage Out” (GIGO) Concept

In technology, the GIGO principle highlights that the integrity of a system’s output is strictly dependent on the integrity of its input. If a financial application accepts a negative number for a transaction amount, or if a healthcare database accepts a date of birth in the future, the resulting calculations and reports will be fundamentally flawed. Data validation acts as the primary defense mechanism against this degradation of system logic.

The Critical Distinction: Validation vs. Verification

While often used interchangeably, validation and verification serve different technical purposes. Data Validation checks if the data conforms to specific rules and formats (e.g., “Is this an email address?”). Data Verification, on the other hand, checks the accuracy or truthfulness of the data against a secondary source (e.g., “Does this email address actually belong to the user?”). In the technical workflow, validation almost always precedes verification.
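The distinction can be sketched in a few lines. This is a minimal illustration, not a production email check: the pattern is deliberately loose, and `confirmed_addresses` is a hypothetical stand-in for whatever secondary source (for example, a table of addresses that completed a confirmation link) a real system would consult.

```python
import re

# Deliberately loose pattern: exactly one "@", no whitespace, a dot in the domain.
EMAIL_PATTERN = re.compile(r"[^@\s]+@[^@\s]+\.[^@\s]+")


def is_valid_email(address):
    """Validation: does the string look like an email address?"""
    return EMAIL_PATTERN.fullmatch(address) is not None


def is_verified_email(address, confirmed_addresses):
    """Verification: is the address known to a secondary source?

    `confirmed_addresses` is a hypothetical set of lowercase addresses
    that have already been confirmed by their owners.
    """
    return address.lower() in confirmed_addresses


confirmed = {"ada@example.com"}
for candidate in ["ada@example.com", "bob@example.com", "not-an-email"]:
    # Validation runs first; verification only makes sense for valid input.
    valid = is_valid_email(candidate)
    verified = valid and is_verified_email(candidate, confirmed)
    print(candidate, valid, verified)
```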

Essential Types of Data Validation Checks in Software Engineering

To implement a comprehensive validation strategy, developers utilize several distinct types of checks. These technical constraints ensure that the data structure remains consistent and usable across different modules of an application.

Data Type and Format Checks

The most basic form of validation involves checking the data type. If a database field is configured as an integer, the validation logic must reject strings or floating-point numbers. Beyond basic types, format checks use Regular Expressions (Regex) to ensure complex strings follow a specific pattern. For example, a UUID (Universally Unique Identifier) or an IPv6 address must adhere to a strict character sequence to be technically valid.
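As a rough sketch of these checks in Python, the helpers below (all names are illustrative) cover a strict integer check, a Regex-based UUID format check, and an IPv6 check. For IPv6 the sketch leans on the standard library's `ipaddress` parser instead of a hand-written pattern, since the address syntax has many abbreviated forms that are easy to get wrong in a Regex.

```python
import ipaddress
import re

# Canonical 8-4-4-4-12 hexadecimal layout of a UUID string.
UUID_PATTERN = re.compile(
    r"[0-9a-fA-F]{8}-[0-9a-fA-F]{4}-[0-9a-fA-F]{4}-[0-9a-fA-F]{4}-[0-9a-fA-F]{12}"
)


def is_strict_int(value):
    """Type check: accept only integers (bool is excluded on purpose,
    because bool is a subclass of int in Python)."""
    return isinstance(value, int) and not isinstance(value, bool)


def is_uuid_string(value):
    """Format check: the whole string must match the UUID layout."""
    return isinstance(value, str) and UUID_PATTERN.fullmatch(value) is not None


def is_ipv6_string(value):
    """Format check delegated to the standard-library parser."""
    try:
        ipaddress.IPv6Address(value)
        return True
    except ValueError:
        return False


print(is_strict_int(42), is_strict_int("42"), is_strict_int(4.2))
print(is_uuid_string("123e4567-e89b-12d3-a456-426614174000"))
print(is_ipv6_string("2001:db8::1"), is_ipv6_string("192.168.0.1"))
```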

Range and Constraint Validation

Range checks are vital for numerical and date-based data. For instance, a temperature sensor input might be validated to ensure the reading falls within the range the sensor can plausibly report (e.g., -50°C to 100°C). Constraints are also applied to string lengths, for example ensuring that a password meets a minimum length for security or that a “Username” field does not exceed a maximum character count that would break the UI or exceed database column limits.
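A minimal sketch of both kinds of constraint follows. The temperature bounds come from the example above; the function names and the specific length limits are assumptions chosen for illustration.

```python
def check_temperature_celsius(reading, low=-50.0, high=100.0):
    """Range check: True only for a real number inside [low, high].

    NaN fails automatically, because every comparison with NaN is False.
    """
    if isinstance(reading, bool) or not isinstance(reading, (int, float)):
        return False
    return low <= reading <= high


def check_length(text, min_len, max_len):
    """Length constraint for string fields such as passwords or usernames."""
    return isinstance(text, str) and min_len <= len(text) <= max_len


print(check_temperature_celsius(21.5))   # inside the range
print(check_temperature_celsius(150))    # above the upper bound
print(check_length("correct horse battery", min_len=12, max_len=128))
print(check_length("x" * 300, min_len=1, max_len=30))
```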

Consistency and Cross-Field Validation

Consistency checks look at the relationship between multiple data points. A classic example is a flight booking system: the “Return Date” must logically occur after the “Departure Date.” Cross-field validation ensures that the internal logic of a data record is coherent. In complex enterprise resource planning (ERP) systems, this might involve checking that a “Tax ID” matches the “Country of Origin” format.
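The flight booking rule could be sketched as below. The `Booking` type and its field names are hypothetical, and the sketch assumes same-day returns are acceptable because plain dates carry no time of day; a stricter system would compare full timestamps.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Booking:
    departure_date: date
    return_date: date


def validate_booking(booking):
    """Cross-field check: return a list of error messages (empty if coherent)."""
    errors = []
    # Assumption: a return on the same calendar day is allowed.
    if booking.return_date < booking.departure_date:
        errors.append("return_date must not be earlier than departure_date")
    return errors


good = Booking(departure_date=date(2025, 3, 1), return_date=date(2025, 3, 8))
bad = Booking(departure_date=date(2025, 3, 8), return_date=date(2025, 3, 1))
print(validate_booking(good))
print(validate_booking(bad))
```

Each field here is individually valid; only the comparison between them reveals the problem, which is why this check cannot be folded into per-field type or range validation.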

Uniqueness and Referential Integrity

In database management, uniqueness checks prevent the creation of duplicate records, such as two users having the same primary email address. Referential integrity, often enforced at the database schema level, ensures that a piece of data (like a Customer ID in an Order table) actually exists in the parent table (the Customer table). This prevents “orphan records” which can lead to application crashes and data corruption.
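To make this concrete, here is a small sketch using Python's built-in `sqlite3` module with an in-memory database. The table and column names are illustrative. Note that SQLite only enforces foreign keys once `PRAGMA foreign_keys = ON` has been issued for the connection; other database engines behave differently.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("PRAGMA foreign_keys = ON")  # SQLite enforces foreign keys only when enabled

with conn:  # commit the schema and seed row together
    conn.execute(
        "CREATE TABLE customer (id INTEGER PRIMARY KEY, email TEXT NOT NULL UNIQUE)"
    )
    conn.execute(
        "CREATE TABLE orders (id INTEGER PRIMARY KEY, "
        "customer_id INTEGER NOT NULL REFERENCES customer(id))"
    )
    conn.execute("INSERT INTO customer (id, email) VALUES (1, 'a@example.com')")


def try_insert(sql, params):
    """Run one insert; return False if the database rejects it."""
    try:
        with conn:  # commits on success, rolls back on error
            conn.execute(sql, params)
        return True
    except sqlite3.IntegrityError:
        return False


# Order for an existing customer: accepted.
print(try_insert("INSERT INTO orders (customer_id) VALUES (?)", (1,)))
# Order for a customer that does not exist: rejected (would be an orphan record).
print(try_insert("INSERT INTO orders (customer_id) VALUES (?)", (999,)))
# Second customer with the same email: rejected by the UNIQUE constraint.
print(try_insert("INSERT INTO customer (email) VALUES (?)", ("a@example.com",)))
```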

Strategic Implementation: Client-Side vs. Server-Side Validation

A robust technical architecture does not rely on a single point of validation. Instead, it employs a multi-layered approach that balances user experience with backend security.

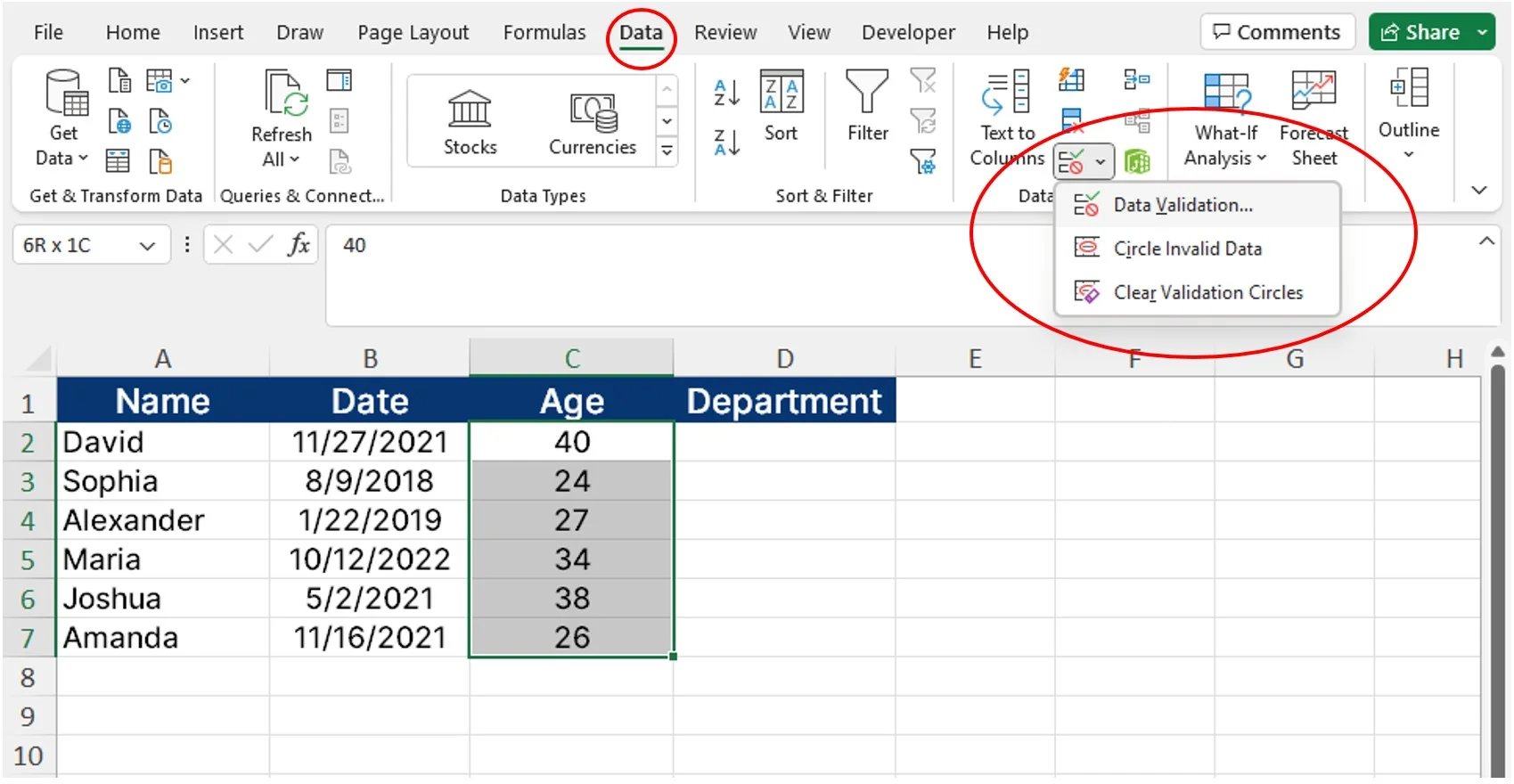

Enhancing User Experience via Client-Side Validation

Client-side validation occurs within the user’s browser or the mobile application interface before the data is even sent to a server. Using JavaScript or framework-specific tools (like React Hook Form or Vue’s Vuelidate), developers provide instant feedback to the user. For example, if a user forgets the “@” symbol in an email field, the UI can highlight the error in real-time. This reduces unnecessary server load and improves the “snappiness” of the application.

Ensuring Security through Server-Side Validation

While client-side validation is excellent for UX, it is never sufficient for security. Because client-side code can be bypassed by malicious actors using tools like Postman or custom scripts, the server must perform its own independent validation. Server-side validation is the final authority; it sanitizes inputs to prevent code injection and ensures that the data strictly adheres to the business logic before it touches the database.

The API Layer: Validating JSON and XML Payloads

In modern microservices architectures, data validation often centers on the API layer. Developers use Schema Validation (such as JSON Schema or OpenAPI specifications) to define exactly what an incoming request should look like. If a microservice receives a JSON payload missing a required field or containing an incorrect data type, the API gateway can automatically reject the request with a 400 Bad Request error, protecting the downstream services from processing invalid data.
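The idea can be illustrated with a hand-rolled check that uses only the standard library. This is a simplified stand-in for real JSON Schema or OpenAPI validation, not an implementation of either; `ORDER_SCHEMA`, its fields, and `validate_payload` are hypothetical names invented for the example.

```python
import json

# Hypothetical schema: field name -> (accepted Python types, required?)
ORDER_SCHEMA = {
    "customer_id": ((int,), True),
    "amount": ((int, float), True),
    "note": ((str,), False),
}


def validate_payload(raw_body, schema):
    """Return (status_code, errors) for a raw JSON request body."""
    try:
        payload = json.loads(raw_body)
    except json.JSONDecodeError:
        return 400, ["body is not valid JSON"]
    if not isinstance(payload, dict):
        return 400, ["body must be a JSON object"]

    errors = []
    for field, (accepted_types, required) in schema.items():
        if field not in payload:
            if required:
                errors.append(f"missing required field: {field}")
            continue
        value = payload[field]
        # JSON true/false become Python bools, which would otherwise pass as int.
        if isinstance(value, bool) or not isinstance(value, accepted_types):
            errors.append(f"wrong type for field: {field}")
    return (400, errors) if errors else (200, [])


print(validate_payload('{"customer_id": 1, "amount": 9.5}', ORDER_SCHEMA))
print(validate_payload('{"amount": "9.5"}', ORDER_SCHEMA))
```

In practice a dedicated schema validator is usually preferable, since it also handles nested objects, enumerations, and string formats that this sketch ignores.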

Data Validation in the Age of Artificial Intelligence and Big Data

As we shift toward data-driven decision-making and machine learning (ML), the stakes for data validation have never been higher. In these contexts, validation extends beyond simple form fields into the realm of statistical integrity and high-volume data streams.

Validating Large-Scale Datasets for Machine Learning

Machine learning models are only as good as the data they are trained on. Technical teams implement “Data Pipelines” where validation occurs at every stage of the ETL (Extract, Transform, Load) process. This includes checking for missing values (nulls), identifying outliers that could skew the model, and ensuring that the distribution of data in the training set matches the distribution in the real-world production environment.
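The three checks named above can be sketched with the standard library alone. The choices here are assumptions made for brevity: outliers are flagged with the common 1.5 × IQR rule, and the distribution comparison is reduced to a single number (how far the production mean has moved, measured in training standard deviations). Real pipelines typically use richer statistical tests.

```python
from statistics import mean, pstdev, quantiles


def count_missing(rows, column):
    """Count rows where the column is absent or None."""
    return sum(1 for row in rows if row.get(column) is None)


def find_outliers_iqr(values, k=1.5):
    """Return values outside [Q1 - k*IQR, Q3 + k*IQR]."""
    q1, _, q3 = quantiles(values, n=4)
    spread = q3 - q1
    low, high = q1 - k * spread, q3 + k * spread
    return [v for v in values if v < low or v > high]


def mean_shift(train_values, production_values):
    """Shift of the production mean, in units of the training standard deviation."""
    scale = pstdev(train_values)
    if scale == 0:
        raise ValueError("training values have zero variance")
    return abs(mean(production_values) - mean(train_values)) / scale


rows = [{"age": 30}, {"age": None}, {"name": "no age key"}]
print(count_missing(rows, "age"))
print(find_outliers_iqr([10, 11, 12, 11, 10, 12, 11, 95]))
print(mean_shift([1, 2, 3, 4, 5], [11, 12, 13, 14, 15]))
```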

Schema Drift and Real-Time Monitoring

In Big Data environments, “Schema Drift” occurs when the source data format changes without notice (e.g., a third-party API adds a new field or changes a data type). Data validation libraries like Great Expectations or Pandera allow teams to write automated tests for their data. Run inside a pipeline and connected to alerting, these checks flag data that deviates from the expected schema, allowing engineers to fix the issue before it impacts downstream analytics or AI predictions.
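The core of a drift check is a comparison between what arrived and what was expected. The sketch below is a plain-Python illustration of that comparison and does not use the API of any particular validation library; `EXPECTED_SCHEMA` and both function names are hypothetical.

```python
# Hypothetical expected schema: field name -> expected Python type.
EXPECTED_SCHEMA = {"id": int, "email": str, "signup_date": str}


def detect_schema_drift(record, expected):
    """Compare one incoming record against the expected schema."""
    missing = sorted(set(expected) - set(record))
    unexpected = sorted(set(record) - set(expected))
    type_changed = sorted(
        field
        for field in set(expected) & set(record)
        if not isinstance(record[field], expected[field])
    )
    return {"missing": missing, "unexpected": unexpected, "type_changed": type_changed}


def has_drift(report):
    """True if any category of drift was found."""
    return any(report.values())


# The upstream source now sends "id" as a string, adds "plan", drops "signup_date".
incoming = {"id": "1", "email": "a@example.com", "plan": "pro"}
report = detect_schema_drift(incoming, EXPECTED_SCHEMA)
print(report)
print(has_drift(report))
```

A pipeline step could call `has_drift` on a sample of each batch and raise an alert or halt the load when it returns `True`.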

Automating Validation within CI/CD Pipelines

Modern DevOps practices integrate data validation into the Continuous Integration/Continuous Deployment (CI/CD) pipeline. Just as software code is tested for bugs, data “contracts” are tested to ensure that new code deployments do not break existing data structures. Automated testing suites verify that migrations and API updates maintain data integrity across the entire tech stack.

Digital Security and the Protective Role of Validation

Data validation is not just about keeping data “clean”; it is a fundamental pillar of digital security. Many of the most common cyberattacks exploit weaknesses in how a system handles input.

Mitigating Injection Attacks and Buffer Overflows

One of the most dangerous security threats is SQL Injection (SQLi), where an attacker enters malicious SQL commands into an input field to gain unauthorized access to a database. Two defenses work together here: input validation rejects or sanitizes values that do not match the expected format, and parameterized queries send user input to the database separately from the SQL text, so it is always treated as literal data rather than executable code. Similarly, validating the length of input helps prevent “Buffer Overflow” attacks, where an attacker sends more data than a memory buffer can hold in order to crash the system or overwrite adjacent memory.
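The difference between building SQL by string concatenation and using a parameterized query can be demonstrated with Python's built-in `sqlite3` module. The table, the function names, and the 64-character limit are assumptions for the example; the unsafe function is included only to show the failure mode.

```python
import sqlite3

MAX_NAME_LENGTH = 64  # assumed limit for this example

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (name TEXT, is_admin INTEGER)")
conn.executemany("INSERT INTO users VALUES (?, ?)", [("alice", 0), ("root", 1)])


def find_user_unsafe(name):
    """VULNERABLE: user input is pasted directly into the SQL text."""
    query = f"SELECT name FROM users WHERE name = '{name}'"
    return conn.execute(query).fetchall()


def find_user_safe(name):
    """Length check plus a parameterized query (the ? placeholder)."""
    if not isinstance(name, str) or len(name) > MAX_NAME_LENGTH:
        raise ValueError("name is missing or too long")
    return conn.execute("SELECT name FROM users WHERE name = ?", (name,)).fetchall()


payload = "x' OR '1'='1"
# The unsafe version turns the payload into "... WHERE name = 'x' OR '1'='1'",
# which matches every row in the table.
print(len(find_user_unsafe(payload)))
# The safe version looks for a user literally named "x' OR '1'='1" and finds none.
print(len(find_user_safe(payload)))
```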

Maintaining Compliance and Data Governance

In highly regulated technical sectors like FinTech or HealthTech, data validation supports legal obligations. Regulations such as GDPR and HIPAA require that personal data be kept accurate and handled securely. Automated validation checks help ensure that sensitive information (like Social Security numbers or credit card data) is formatted correctly and masked where necessary, reducing the risk of accidental data exposure and helping the organization meet its data governance standards.
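As an illustration of "formatted correctly and masked where necessary", the sketch below validates the NNN-NN-NNNN layout of a US Social Security number before masking it, and applies the Luhn checksum commonly used to catch mistyped card numbers. The function names and the masking style are assumptions; none of this is taken from a specific compliance standard, and passing these checks does not prove a number was actually issued.

```python
import re

SSN_PATTERN = re.compile(r"\d{3}-\d{2}-\d{4}")


def mask_ssn(ssn):
    """Validate the NNN-NN-NNNN layout, then reveal only the last four digits."""
    if not SSN_PATTERN.fullmatch(ssn):
        raise ValueError("SSN is not in the NNN-NN-NNNN format")
    return "***-**-" + ssn[-4:]


def luhn_valid(card_number):
    """Luhn checksum: double every second digit from the right, sum, mod 10."""
    cleaned = card_number.replace(" ", "").replace("-", "")
    if not (cleaned.isascii() and cleaned.isdigit() and 12 <= len(cleaned) <= 19):
        return False
    total = 0
    for index, char in enumerate(reversed(cleaned)):
        digit = int(char)
        if index % 2 == 1:
            digit *= 2
            if digit > 9:
                digit -= 9
        total += digit
    return total % 10 == 0


print(mask_ssn("123-45-6789"))            # ***-**-6789
print(luhn_valid("4111 1111 1111 1111"))  # widely used test number
print(luhn_valid("4111 1111 1111 1112"))  # last digit altered
```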

Conclusion: The Future of Technical Data Integrity

As technology continues to evolve, the methods we use to validate data are becoming more sophisticated. We are moving toward a future where “AI-driven validation” can automatically detect anomalies and suggest corrections in real-time, and where blockchain technology might provide immutable validation of data provenance.

However, the core principle remains the same: data is only useful if it is trustworthy. By implementing multi-layered validation strategies—from Regex patterns in a mobile app to schema enforcement in a cloud-native API—tech professionals can build systems that are not only functional but also resilient and secure. In the digital age, rigorous data validation is the ultimate safeguard against the chaos of unorganized information.
