The digital landscape is a rapidly evolving ecosystem where trends can emerge, peak, and dissipate in a matter of hours. While most viral sensations—like dance challenges or AI filters—are harmless fun, a darker side of digital culture occasionally surfaces. One such phenomenon that has caught the attention of tech developers, digital safety experts, and content moderators is the “chroming” trend.

From a technology and digital security perspective, chroming represents a significant challenge in how we manage algorithmic influence, content moderation, and the safety of digital natives. This article explores the technical mechanics behind the spread of this trend, the role of social media infrastructure in its proliferation, and the technological solutions being deployed to mitigate such risks.

1. The Digital Anatomy of a Dangerous Viral Trend

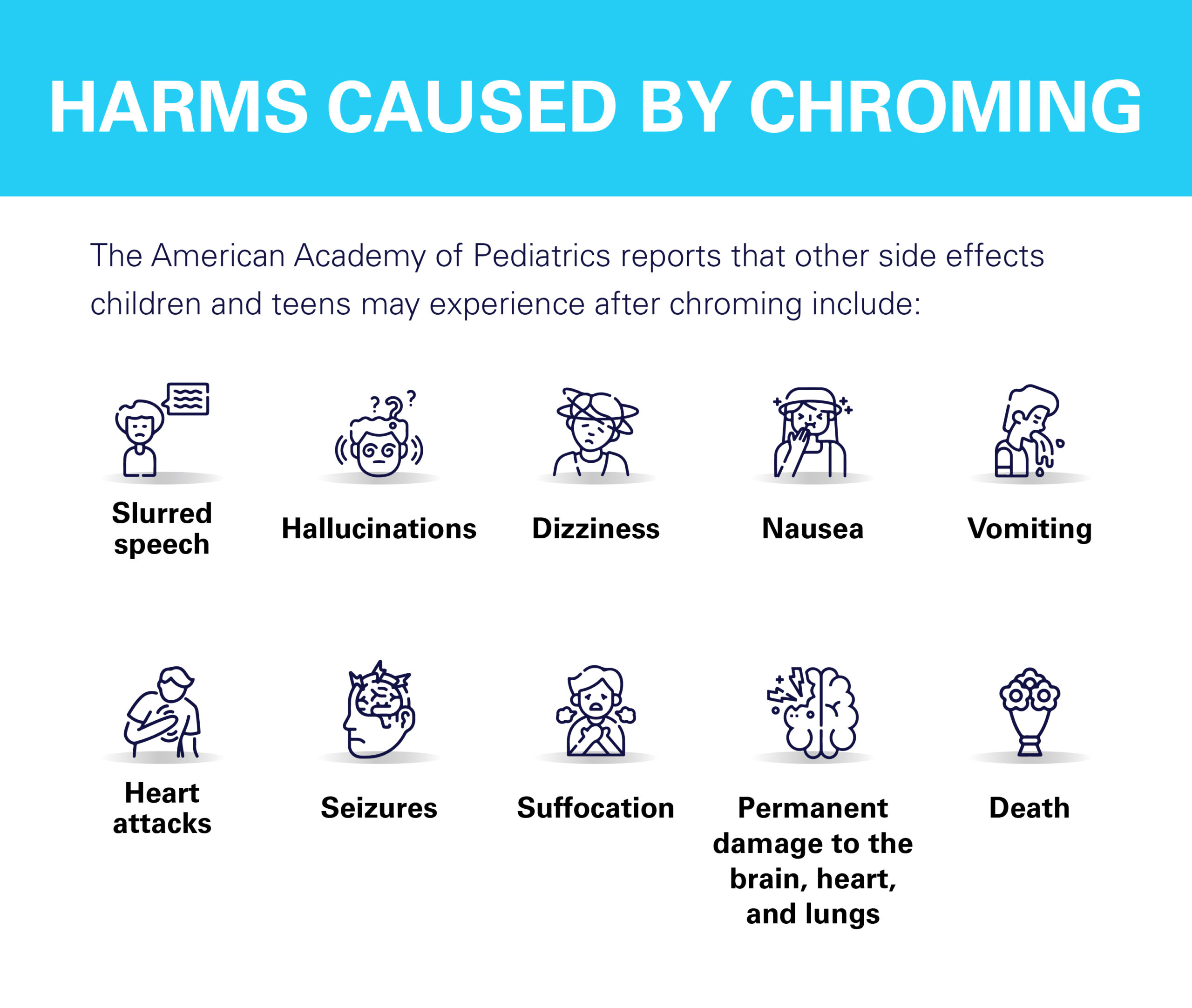

To understand chroming through a technological lens, one must first look at how it is categorized within the digital space. Chroming refers to the act of inhaling toxic fumes from household items—such as aerosol cans, metallic paints, or cleaning supplies—to achieve a temporary high. While the act itself is chemical, its “trend” status is purely digital.

The Role of the Recommendation Algorithm

The primary driver behind the visibility of chroming is the sophisticated recommendation engine used by platforms like TikTok, Instagram, and YouTube. These algorithms are designed to maximize user retention by serving content that generates high engagement. When a user interacts with a video—even out of curiosity or concern—the algorithm notes the “watch time” and begins serving similar content to that user and their demographic look-alikes. This creates an echo chamber where dangerous behaviors appear more normalized than they are in reality.
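The loop described above can be sketched in a few lines. This is a toy model, not any platform's actual ranking system: the topic labels, the completion-ratio signal, and the function names `update_interest` and `rank_candidates` are all assumptions made for illustration.

```python
from collections import defaultdict

def update_interest(interest, topic, watch_seconds, video_seconds):
    """Raise the interest score for a topic in proportion to how much of the video was watched."""
    completion = min(watch_seconds / video_seconds, 1.0)
    interest[topic] += completion
    return interest

def rank_candidates(interest, candidates):
    """Order candidate videos by the user's accumulated interest in each video's topic."""
    return sorted(candidates, key=lambda v: interest[v["topic"]], reverse=True)

# Hypothetical viewing history: one near-complete watch, one quick skip.
interest = defaultdict(float)
update_interest(interest, "risky_challenge", watch_seconds=28, video_seconds=30)
update_interest(interest, "cooking", watch_seconds=3, video_seconds=60)

candidates = [
    {"id": "a", "topic": "cooking"},
    {"id": "b", "topic": "risky_challenge"},
    {"id": "c", "topic": "gardening"},
]
feed = rank_candidates(interest, candidates)
print([v["id"] for v in feed])  # ['b', 'a', 'c']
```

The model has no notion of why the user watched: a concerned viewer and an enthusiastic one produce the same signal, which is the core of the problem.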

The Feedback Loop of Engagement Metrics

In the world of social media technology, metrics are king. Likes, shares, and comments act as signals to the platform’s backend that a piece of content is “valuable.” Unfortunately, controversial or high-risk content often generates massive engagement through “rage-baiting” or shock value. For developers, the challenge lies in teaching AI to distinguish between “popular healthy content” and “popular hazardous content,” as both may share similar metadata profiles in the early stages of virality.
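One way to picture the distinction is to combine the engagement signal with a separate risk estimate. The sketch below is illustrative only: the weights in `engagement_score` are invented, and `risk` stands in for the output of a hypothetical hazard classifier that the article does not describe.

```python
def engagement_score(likes, shares, comments):
    """Toy engagement signal; the weights are arbitrary assumptions."""
    return likes + 3 * shares + 2 * comments

def safety_adjusted_score(engagement, risk):
    """Scale engagement down by a risk estimate in [0, 1] from a hypothetical classifier."""
    if not 0.0 <= risk <= 1.0:
        raise ValueError("risk must be between 0 and 1")
    return engagement * (1.0 - risk)

# Two hypothetical videos with similar early engagement profiles.
dance = engagement_score(likes=1000, shares=100, comments=200)
hazard = engagement_score(likes=1200, shares=150, comments=400)

print(hazard > dance)  # True: raw engagement favors the hazardous video
print(safety_adjusted_score(hazard, risk=0.9) < safety_adjusted_score(dance, risk=0.05))  # True
```

On raw engagement alone the hazardous video wins. Only the added risk term reverses the order, and it is only as good as the classifier behind it.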

Peer Influence in Virtual Spaces

Digital trends thrive on the “social proof” provided by virtual peer groups. Unlike physical peer pressure, which is localized, digital peer pressure is global and persistent. A teenager in a small town can feel connected to a global “challenge,” using hashtags and “duet” features to take part in a collective yet dangerous experience.

2. Algorithmic Accountability and AI Content Moderation

As chroming and similar hazardous trends emerge, the burden of responsibility shifts toward the tech giants and their moderation infrastructure. The battle against dangerous trends is fought primarily with Artificial Intelligence and Machine Learning.

Computer Vision and Pattern Recognition

Modern social media platforms use Computer Vision (CV) to scan frames of uploaded video. For chroming, models can be trained to recognize specific visual cues: aerosol cans held near the face, containers of metallic paint, or physical signs of distress. When a model identifies these patterns, the platform can automatically limit the video’s reach (sometimes called a “shadow ban”) or flag it for human review.
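The step from classifier output to moderation action might look like the following sketch. The per-frame scores are assumed to come from a vision model that is not shown here, and the thresholds and action names are placeholders rather than values any platform is known to use.

```python
def video_risk(frame_scores):
    """Aggregate per-frame scores; one confident frame is enough to escalate the video."""
    return max(frame_scores, default=0.0)

def moderation_action(risk, review_at=0.5, limit_at=0.9):
    """Map a risk score in [0, 1] to an action; thresholds are illustrative assumptions."""
    if risk >= limit_at:
        return "limit_reach_and_review"
    if risk >= review_at:
        return "human_review"
    return "allow"

print(moderation_action(video_risk([0.10, 0.95, 0.20])))  # limit_reach_and_review
print(moderation_action(video_risk([0.10, 0.60])))        # human_review
print(moderation_action(video_risk([0.05, 0.10])))        # allow
```

Taking the maximum across frames favors catching brief hazardous moments, at the cost of more false alarms than an average would produce.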

Natural Language Processing (NLP) and Slang

Trend participants often use “leetspeak” or coded language to bypass keyword filters. Instead of using the word “chroming,” they might use emojis, deliberate misspellings, or niche hashtags. Tech companies employ Natural Language Processing (NLP) models that are constantly updated to understand shifting digital dialects. By analyzing the context of comments and captions, these tools can identify clusters of dangerous content that would otherwise fly under the radar.
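A very small part of this problem, undoing simple character substitutions before matching, can be sketched as follows. Production systems rely on learned language models rather than a lookup table. The substitution map and the one-word watchlist are assumptions chosen for the example.

```python
import re
import unicodedata

# Assumed substitution table for common leetspeak characters.
LEET_MAP = str.maketrans({
    "0": "o", "1": "i", "3": "e", "4": "a",
    "5": "s", "7": "t", "@": "a", "$": "s",
})

WATCHLIST = {"chroming"}  # illustrative; a real list would be curated and updated

def normalize(text):
    """Fold Unicode variants, undo leetspeak, strip separators, collapse repeated letters."""
    text = unicodedata.normalize("NFKC", text).lower()
    text = text.translate(LEET_MAP)
    text = re.sub(r"[^a-z]+", "", text)    # drop spaces, dots, digits, emoji
    return re.sub(r"(.)\1+", r"\1", text)  # "chroooming" -> "chroming"

def mentions_watchlist(text):
    """Return True if any normalized watchlist term appears in the normalized text."""
    norm = normalize(text)
    return any(normalize(term) in norm for term in WATCHLIST)

print(mentions_watchlist("#Chr0m1ng challenge"))     # True
print(mentions_watchlist("c.h.r.o.m.i.n.g"))         # True
print(mentions_watchlist("chrome polish tutorial"))  # False
```

Stripping separators means unrelated phrases can occasionally match across word boundaries. A match from a normalizer like this is better treated as a signal for further review than as a verdict.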

The Challenge of “False Positives”

A major technical hurdle in automated moderation is the risk of false positives. For example, a professional painter using spray-on chrome finish for an automotive tutorial might trigger the same AI flags as someone participating in the chroming trend. Refining these models to understand intent—rather than just the presence of an object—is one of the most complex frontiers in digital safety engineering.
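The trade-off can be made concrete with precision and recall. The labeled scores below are made up for illustration. In the scenario above, the painter's tutorial would be one of the harmless videos that still scores high.

```python
def confusion_counts(samples, threshold):
    """Count true positives, false positives, and false negatives at a flagging threshold."""
    tp = sum(1 for score, hazardous in samples if score >= threshold and hazardous)
    fp = sum(1 for score, hazardous in samples if score >= threshold and not hazardous)
    fn = sum(1 for score, hazardous in samples if score < threshold and hazardous)
    return tp, fp, fn

def precision_recall(tp, fp, fn):
    """Precision: share of flags that were correct. Recall: share of hazards that were flagged."""
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall

# (classifier score, truly hazardous?) for six hypothetical videos.
samples = [
    (0.95, True), (0.85, True), (0.80, False),
    (0.60, True), (0.55, False), (0.30, False),
]

loose = precision_recall(*confusion_counts(samples, threshold=0.5))
strict = precision_recall(*confusion_counts(samples, threshold=0.9))
print(loose)   # precision 0.6, recall 1.0
print(strict)  # precision 1.0, recall about 0.33
```

Raising the threshold removes the false positives in this sample but lets two of the three hazardous videos through, which is why threshold tuning alone cannot substitute for a model that understands intent.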

3. Digital Security: Strengthening the “Human Firewall”

In the realm of cybersecurity, we often talk about the “human firewall”—the idea that the user is the final line of defense. When it comes to the chroming trend, digital security isn’t just about protecting data; it’s about protecting the user’s physical well-being through digital literacy and protective software.

Parental Control Software and API Integration

The tech industry has seen a surge in “Safety-as-a-Service” tools. Apps like Bark or Qustodio use API integrations to monitor a child’s social media activity. These tools use their own proprietary AI to scan for keywords related to chroming and other inhalant abuse. This represents a secondary layer of digital security that operates independently of the social media platforms themselves, providing parents with alerts when their children are exposed to high-risk content.

Search Engine Intervention

Search engines such as Google and Bing apply safety interventions to some high-risk searches. When a user searches for terms related to chroming or how to “huff” household chemicals, the ranking can be adjusted to place educational resources, addiction hotlines, and medical warnings above instructional content. This is a deliberate “hard-coding” of ethics into the search architecture, putting human safety ahead of raw search relevance.
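As a rough sketch of the idea, and not a description of how any search engine implements it, a reranking step could move safety resources to the top when a query contains a high-risk term. The term list, the `kind` field, and the function names are all hypothetical.

```python
HIGH_RISK_TERMS = {"chroming", "huffing", "huff"}  # illustrative term list

def is_high_risk(query):
    """Return True if any whitespace-separated token of the query is on the term list."""
    tokens = set(query.lower().split())
    return bool(tokens & HIGH_RISK_TERMS)

def rerank(query, results):
    """For high-risk queries, move safety resources first and keep the original order otherwise."""
    if not is_high_risk(query):
        return list(results)
    # sorted() is stable and False sorts before True, so relative order is preserved.
    return sorted(results, key=lambda r: r["kind"] != "safety_resource")

results = [
    {"title": "Forum thread", "kind": "general"},
    {"title": "Poison control helpline", "kind": "safety_resource"},
    {"title": "News report", "kind": "general"},
    {"title": "Medical warning on inhalants", "kind": "safety_resource"},
]

ranked = rerank("what is chroming", results)
print([r["title"] for r in ranked])
# ['Poison control helpline', 'Medical warning on inhalants', 'Forum thread', 'News report']
```

A query such as "chrome wheel polish" contains no listed term, so its results pass through unchanged.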

Reporting Mechanisms as Community Defense

Digital security is increasingly a crowdsourced effort. Reporting tools have become more granular, allowing users to report content specifically for “Self-Harm” or “Dangerous Acts.” The backend data generated from these reports helps train the platform’s AI, making the detection of future trends faster and more accurate.

4. The Future of Digital Wellness and Tech Ethics

The rise of the chroming trend has sparked a broader conversation about “Tech Ethics” and the responsibility of software engineers. As we move further into the decade, we can expect to see technology evolve from being a passive host of content to an active protector of user health.

The Rise of “Safety by Design”

“Safety by Design” is a movement within the tech industry that advocates for embedding safety features into the very foundation of an app’s architecture. Under this approach, a feature like “Auto-play” or “Infinite Scroll” is audited before launch for how it might exacerbate the spread of dangerous trends like chroming. If the audit finds that a feature prioritizes engagement at the cost of user safety, the feature is re-engineered before release.

Blockchain and Content Provenance

One emerging tech solution for tracking dangerous trends is the use of blockchain for content provenance. By recording a digital fingerprint for each original video, platforms can more effectively track the spread of “re-uploaded” or “mirrored” content. If a video depicting chroming is banned, a shared fingerprint record could let participating platforms block copies of that same file automatically, easing the “Whack-A-Mole” problem currently faced by moderators. Copies that have been re-encoded or edited produce a different exact fingerprint, so this approach works best alongside similarity-based matching.
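The fingerprinting step on its own, without any blockchain or shared ledger, can be sketched with a cryptographic hash. The `Blocklist` class and the sample bytes are assumptions for illustration. A real deployment would also need a way to share the hash set across platforms.

```python
import hashlib

def fingerprint(data: bytes) -> str:
    """Return the SHA-256 hex digest of a file's bytes as an exact-match fingerprint."""
    return hashlib.sha256(data).hexdigest()

class Blocklist:
    """In-memory set of banned fingerprints (a stand-in for a shared record)."""

    def __init__(self):
        self._hashes = set()

    def ban(self, data: bytes) -> None:
        self._hashes.add(fingerprint(data))

    def is_banned(self, data: bytes) -> bool:
        return fingerprint(data) in self._hashes

original = b"\x00\x01example-video-bytes"
mirrored = bytes(original)        # byte-for-byte copy
reencoded = original + b"\x00"    # stands in for a re-encoded or trimmed copy

blocklist = Blocklist()
blocklist.ban(original)
print(blocklist.is_banned(mirrored))   # True
print(blocklist.is_banned(reencoded))  # False
```

The second result shows the limit of exact hashing: changing a single byte yields a different digest, so catching altered copies generally calls for perceptual or similarity-based hashing in addition.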

VR/AR and Immersive Education

On the positive side, technology is being used to combat the very trends it helps spread. Virtual Reality (VR) simulations are being developed for schools to show the physiological impact of inhalant abuse on the human brain in an immersive, high-impact way. By using the same high-tech tools that capture a teen’s attention (like VR and AR), educators can provide a powerful “digital vaccine” against peer-pressured trends.

5. Conclusion: Navigating the Double-Edged Sword

The chroming trend is a sobering reminder that technology is a double-edged sword. The same algorithms that connect us with global communities and provide endless entertainment can also end up promoting life-threatening behaviors, whether through deliberate human misuse or through the unintended mechanics of engagement-based AI.

From a tech perspective, the solution lies in a multi-layered approach. It requires more sophisticated AI that understands context, more robust parental control tools, and a fundamental shift in how we value engagement metrics. As we continue to integrate technology into every facet of our lives, the focus must shift from “what can this tech do” to “how can this tech protect.”

In the end, digital security is no longer just about firewalls and encryption keys; it is about the algorithms that govern our attention and the ethical frameworks that ensure the safety of those who navigate the digital world. By understanding the mechanics of trends like chroming, the tech community can better prepare for the next wave of viral challenges, ensuring that the future of the internet is as safe as it is innovative.
