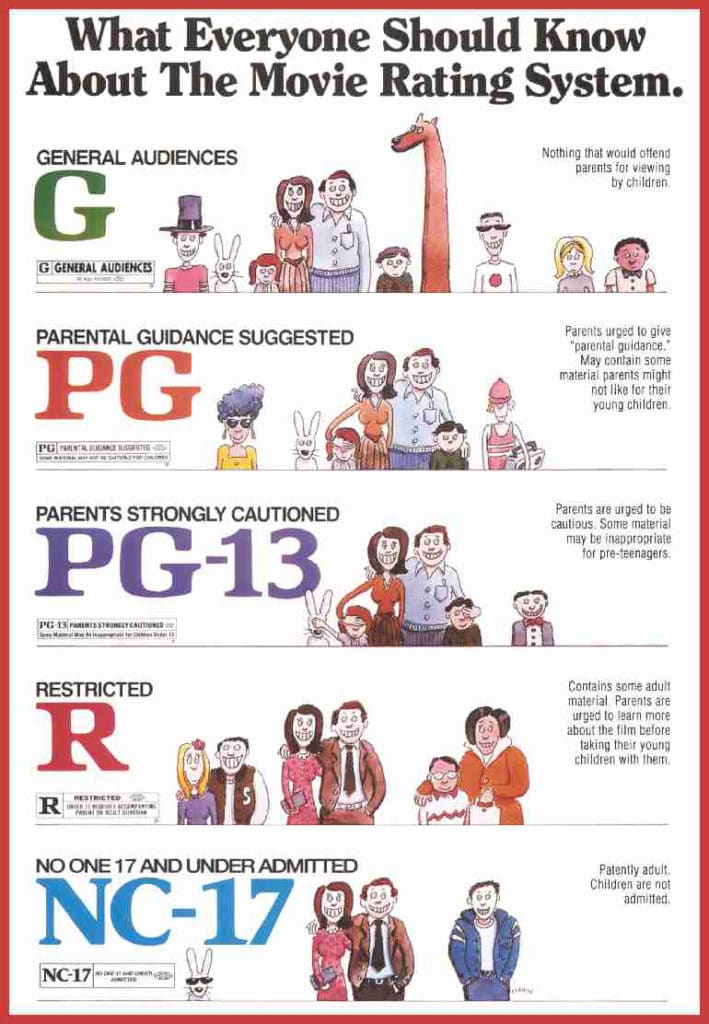

In the traditional sense, a “Rated G” classification has long been the hallmark of the Motion Picture Association (MPA), signifying that a piece of content is suitable for general audiences. It suggests that the material contains nothing that would offend parents for viewing by their children. However, as our consumption of media has migrated from the silver screen to a complex ecosystem of streaming services, mobile applications, and AI-driven platforms, the definition of “Rated G” has undergone a profound technological transformation.

In the digital age, a “G” rating is no longer just a sticker on a DVD case; it is a critical data point in the architecture of content moderation, a metadata tag for search algorithms, and a foundational element of parental control software. Understanding what “Rated G” means today requires an exploration of how technology classifies, filters, and delivers safe content to billions of users.

The Architecture of Digital Safety: How Apps and Software Implement Content Ratings

The transition from physical media to software-based platforms necessitated a more granular approach to content ratings. When we ask what “Rated G” means in a tech context, we are looking at the backend protocols that allow devices—smartphones, tablets, and smart TVs—to recognize and restrict content based on predefined safety tiers.

App Store Guidelines and Age-Appropriate Content

Platforms like the Apple App Store and Google Play Store do not use the MPA “G” rating, but they have developed digital equivalents. Apple’s “4+” rating, for instance, serves as the technological “G”: to qualify, an app must contain no objectionable material, no gambling, and no unmoderated social features. Google Play relies on IARC ratings, so labels such as ESRB “Everyone” or PEGI 3 play the same role there. This classification is more than a label; it is a software permission. When a parent turns on device restrictions (Screen Time’s content restrictions on iOS, for example), the operating system checks each app’s age rating against the allowed maximum. Apps above that limit are hidden and cannot be launched.
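The gate described above boils down to comparing two numbers. The sketch below is a minimal illustration that assumes age labels of the form “4+” and a per-profile maximum age. The app names and the profile limit are hypothetical, and real operating systems enforce this through their own parental-control frameworks, not through code like this.

```python
# Hypothetical sketch of an age-rating launch gate. Labels are treated as
# minimum ages; real store tiers and OS parental-control APIs differ.

def min_age(rating: str) -> int:
    """Convert an age label such as '4+' or '12+' into its minimum age."""
    return int(rating.strip().rstrip("+"))


def can_launch(app_rating: str, profile_max_age: int) -> bool:
    """Allow a launch only if the app's minimum age fits the profile's limit."""
    return min_age(app_rating) <= profile_max_age


# Hypothetical installed apps and their store age labels.
installed = {"Drawing Pad": "4+", "Puzzle Quest": "9+", "Chat Hub": "17+"}
child_limit = 4  # strictest setting: only "4+" apps may run

for name, rating in installed.items():
    status = "allowed" if can_launch(rating, child_limit) else "blocked"
    print(f"{name} ({rating}): {status}")
```

With the limit set to 4, only “Drawing Pad” is reported as allowed. Raising `child_limit` to 9 would also unlock “Puzzle Quest.”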

The Mechanics of Content Filtering and Metadata

In web browsers and search engines, “Rated G” translates into filtering features such as Google’s SafeSearch. Here, a page’s suitability is determined partly by metadata: publishers can add HTML meta tags (for example, a `rating` meta tag) that tell crawlers a page contains explicit material, and search engines combine these self-declared signals with their own classifiers. Pages that carry no such flag and that the classifiers judge to be benign stay visible in the filtered results used by educational software and restricted-access networks such as school Wi-Fi. This handshake between the content creator and the distribution platform keeps “General Audiences” content discoverable while adult-oriented material stays behind algorithmic filters.
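To make the metadata handshake concrete, the sketch below reads a page’s `rating` meta tags with Python’s standard `html.parser`. The two label values are the ones described in Google’s SafeSearch guidance for site owners (`adult` and the RTA label). Treat that set as an assumption to check against current documentation. A page without these tags is not thereby certified as G-rated; it has simply made no self-declaration.

```python
from html.parser import HTMLParser

# Labels that mark a page as explicit. "adult" and the RTA label follow
# Google's SafeSearch guidance for site owners; treat this set as an assumption.
ADULT_LABELS = {"adult", "rta-5042-1996-1400-1577-rta"}


class RatingMetaParser(HTMLParser):
    """Collects the content of every <meta name="rating" ...> tag on a page."""

    def __init__(self) -> None:
        super().__init__()
        self.ratings: list[str] = []

    def handle_starttag(self, tag, attrs):
        # Tag and attribute names arrive lowercased; values keep their case.
        if tag != "meta":
            return
        attr = dict(attrs)
        name = (attr.get("name") or "").lower()
        content = attr.get("content")
        if name == "rating" and content:
            self.ratings.append(content.strip().lower())


def is_flagged_adult(html: str) -> bool:
    """True if the page self-declares explicit content via a rating meta tag."""
    parser = RatingMetaParser()
    parser.feed(html)
    parser.close()
    return any(label in ADULT_LABELS for label in parser.ratings)
```

Self-closing tags such as `<meta ... />` are handled too, because `HTMLParser` routes them through `handle_starttag` by default.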

Artificial Intelligence and the Automation of Content Classification

One of the most significant shifts in the meaning of “Rated G” involves how content is reviewed. Historically, human committees watched films to determine their rating. Today, with YouTube reporting more than 500 hours of video uploaded every minute, human review of every upload is impractical. The “G” rating has increasingly become a product of machine learning (ML) and computer vision.

Automated Visual Recognition for Content Safety

Large platforms use AI models to scan video frames for prohibited content. To be classified as the equivalent of “Rated G” by an algorithm, a video must pass through one or more neural-network classifiers. These networks are trained to detect graphic violence, nudity, and even the presence of substances like alcohol or tobacco. If the AI detects a high probability of any restricted element, the content is automatically demoted from a “G” equivalent to a higher age bracket. For tech companies, “Rated G” is therefore a matter of probability: a confidence score (for example, 99.9%) that the content is benign, which must clear a threshold the platform sets.
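The threshold idea can be sketched in a few lines. This example assumes a hypothetical vision model that outputs, for each sampled frame, a probability per restricted category. It keeps the worst score seen for each category and grants the “G” equivalent only if every category clears the benign threshold. Treating “1 minus the worst score” as the confidence that a category is absent is a simplification; production systems calibrate and combine scores in more sophisticated ways.

```python
from typing import Dict, List, Tuple

# Hypothetical per-frame output of a vision classifier: for each sampled frame,
# the probability that each restricted category is present.
Frame = Dict[str, float]


def worst_case_scores(frames: List[Frame]) -> Dict[str, float]:
    """Highest score seen for each category across all sampled frames."""
    worst: Dict[str, float] = {}
    for frame in frames:
        for category, p in frame.items():
            worst[category] = max(worst.get(category, 0.0), p)
    return worst


def rate_video(frames: List[Frame], benign_threshold: float = 0.999) -> Tuple[str, List[str]]:
    """Return ("G", []) only if every category clears the benign threshold."""
    if not frames:
        return "unrated", []  # nothing analyzed, so no safety claim is made
    worst = worst_case_scores(frames)
    # Simplification: confidence that a category is absent is 1 - its worst score.
    flagged = sorted(c for c, p in worst.items() if 1.0 - p < benign_threshold)
    return ("G", []) if not flagged else ("restricted", flagged)
```

Using the worst frame rather than the average is deliberate. A single clearly violent frame should be enough to demote the whole video.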

Natural Language Processing in Sentiment and Language Analysis

It isn’t just about what users see; it’s about what they hear. Speech recognition transcribes audio, often in real time, and Natural Language Processing (NLP) then analyzes the text for profanity, hate speech, or mature themes. A “Rated G” status in podcasting or streaming audio is maintained through these automated transcripts. If the NLP engine identifies terms that fall outside the “General” safety parameters, the software automatically updates the metadata, triggering a “Mature” warning or hiding the content from restricted profiles.
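The simplest version of this pipeline is a whole-word check of the transcript against a term list. The minimal sketch below assumes a transcript has already been produced by a speech-recognition step. The blocklist and the `Episode` type are hypothetical. Real systems rely on much larger, locale-specific lists and on trained classifiers that understand context, which keyword matching cannot do.

```python
import re
from dataclasses import dataclass, field

# Hypothetical blocklist; real systems use much larger, locale-specific lists
# plus trained classifiers that understand context.
BLOCKED_TERMS = {"damn", "hell"}


@dataclass
class Episode:
    title: str
    transcript: str  # text produced by a speech-recognition step
    tags: set = field(default_factory=set)


def screen_transcript(ep: Episode) -> Episode:
    """Tag an episode 'mature' if its transcript contains a blocked term."""
    # Whole-word tokens, so "hello" does not match "hell".
    words = re.findall(r"[a-z']+", ep.transcript.lower())
    hits = BLOCKED_TERMS.intersection(words)
    ep.tags.add("mature" if hits else "general")
    return ep


def visible_to_child(ep: Episode) -> bool:
    """Restricted profiles only see episodes tagged 'general'."""
    return "general" in ep.tags and "mature" not in ep.tags
```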

The Role of Parental Control Tech and Digital Safety Ecosystems

For parents and educators, “Rated G” is a functional tool used to manage the “digital well-being” of minors. The technology behind it is often invisible but complex, combining device-level restrictions with cloud-based filtering.

User Profiles and Digital Permissions

Modern operating systems (Windows, macOS, Android, iOS) allow the creation of child accounts or profiles. When a profile is designated as such, the software environment changes, and the “Rated G” standard can act as a filter across the entire device. This can involve “whitelisting” (also called allowlisting), a strategy in which only verified “G” content is allowed through and everything else is blocked by default. It is a shift away from “blacklisting,” which blocks known-bad content and lets everything else through, toward a stricter default-deny model where the software treats everything as restricted unless it carries a verified safety tag.
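The difference between the two models shows up the moment something new appears. In the sketch below, the host names are placeholders under the reserved `.example` domain, and the two lists are hypothetical.

```python
# Hypothetical source lists; ".example" domains are placeholders.
VERIFIED_G = {"kids.example", "learn.example"}  # allowlist: verified safe
KNOWN_BAD = {"casino.example"}                  # blocklist: known unsafe


def blocklist_allows(host: str) -> bool:
    """Default-allow: anything not explicitly banned gets through."""
    return host.lower() not in KNOWN_BAD


def allowlist_allows(host: str) -> bool:
    """Default-deny: only explicitly verified sources get through."""
    return host.lower() in VERIFIED_G


for host in ["kids.example", "casino.example", "brand-new-site.example"]:
    print(f"{host}: blocklist={blocklist_allows(host)}, allowlist={allowlist_allows(host)}")
```

The unknown `brand-new-site.example` passes the blocklist but is stopped by the allowlist. That is why default-deny is considered the safer choice for child profiles, at the cost of blocking harmless sites until someone verifies them.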

The Integration of IoT and Smart Home Safety

As the Internet of Things (IoT) expands, the “Rated G” standard is being built into smart speakers and home automation. When a child asks a smart assistant to “play music” with explicit-content filtering enabled, the software doesn’t just search for the title; it also checks each result’s content flags before playing anything. Services like Spotify Kids or YouTube Kids are essentially software silos built entirely around the “Rated G” philosophy, using technology to keep the user inside a curated, safe environment.
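A filtered “play music” request can be sketched as a search that skips flagged results. This example assumes each catalog entry carries an explicit-content flag. Music catalogs such as Spotify’s expose a similar `explicit` field on tracks, but the catalog structure and the `play_request` function here are hypothetical. Entries with no flag are treated as explicit, so the filter fails closed.

```python
from typing import Dict, List, Optional


def play_request(query: str, catalog: List[Dict], kid_profile: bool) -> Optional[Dict]:
    """Return the first matching track, skipping explicit ones for kid profiles."""
    q = query.lower()
    for track in catalog:
        if q not in track["title"].lower():
            continue
        # Missing flag counts as explicit: unknown content is not played for kids.
        if kid_profile and track.get("explicit", True):
            continue
        return track
    return None  # nothing suitable found; the assistant would say so
```

For example, given a catalog containing both an explicit and a clean version of the same song, a kid profile gets the clean version, while an adult profile gets whichever appears first.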

The Impact of Rating Systems on Streaming Platform Algorithms

Streaming services like Netflix, Disney+, and Amazon Prime Video have reshaped how “Rated G” works through their recommendation engines. On these platforms, a “G” rating determines which titles a family profile can be shown at all, and therefore which titles can earn “watch time” and algorithmic visibility with younger viewers.

Personalization vs. Protection

The challenge for tech companies is balancing a personalized user experience with content safety. Algorithms are designed to keep users engaged, but for a “Rated G” profile, the algorithm is intentionally constrained. The software must ignore high-performing “Mature” content and find patterns within the “General” category instead. In practice, the “G” rating acts as a hard boundary, typically enforced by filtering the candidate pool before ranking rather than left for the model to learn. This ensures that the “Up Next” feature never drifts into PG-13 or R-rated territory because of a flaw in the recommendation logic.
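The “filter first, then rank” approach can be sketched as follows. The titles and engagement scores are hypothetical, and the MPA labels stand in for whatever tiers a platform uses internally. Because ineligible titles never enter the ranking step, even a very high engagement score cannot push an R-rated title into a G profile’s queue. Unrecognized ratings are excluded rather than guessed.

```python
from typing import Dict, List

# MPA labels ordered from least to most restrictive.
RATING_ORDER = {"G": 0, "PG": 1, "PG-13": 2, "R": 3, "NC-17": 4}


def up_next(candidates: List[Dict], max_rating: str = "G", k: int = 3) -> List[str]:
    """Pick the top-k titles by score, considering only titles within the ceiling."""
    ceiling = RATING_ORDER[max_rating]
    # Hard constraint first: unknown ratings sort above every ceiling and drop out.
    eligible = [
        c for c in candidates
        if RATING_ORDER.get(c["rating"], len(RATING_ORDER)) <= ceiling
    ]
    # Only then rank the survivors by the (hypothetical) engagement score.
    eligible.sort(key=lambda c: c["score"], reverse=True)
    return [c["title"] for c in eligible[:k]]
```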

Global Standardization through Software

One of the most difficult technical hurdles is that “Rated G” means different things in different countries. A film rated “G” in the US might be “6+” in another region. To handle this, global platforms keep mapping tables (a form of “matrix mapping”) in their databases. When a user logs in, the software determines their region, from account settings or IP-based geolocation, and maps that region’s rating labels onto a common internal tier such as the universal “G” standard. This keeps the software compliant with local laws while maintaining a consistent user experience across the globe.
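A mapping table of this kind can be as simple as a dictionary keyed by region and local label. The labels below are real regional ratings (US “G,” UK “U,” Australia “G,” Germany’s FSK 0 and FSK 6), but the tier each one maps to is an illustrative assumption. Platforms set their own equivalences, and these are rarely exact. Labels the table does not know are treated as the most restrictive tier.

```python
# Illustrative equivalences only; each platform sets its own, and they are rarely exact.
LOCAL_TO_TIER = {
    ("US", "G"): "general",
    ("GB", "U"): "general",
    ("AU", "G"): "general",
    ("DE", "FSK 0"): "general",
    ("US", "PG"): "young_children",
    ("DE", "FSK 6"): "young_children",
}
TIER_ORDER = ["general", "young_children", "teens", "adults"]


def to_tier(country: str, local_rating: str) -> str:
    """Map a regional label to an internal tier; unknown labels fail closed."""
    return LOCAL_TO_TIER.get((country.upper(), local_rating.strip()), "adults")


def allowed_for(country: str, local_rating: str, profile_max_tier: str = "general") -> bool:
    """True if the title's tier is at or below the profile's maximum tier."""
    return TIER_ORDER.index(to_tier(country, local_rating)) <= TIER_ORDER.index(profile_max_tier)
```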

Future Trends: Dynamic Ratings and Blockchain Verification

As we look toward the future, the concept of “Rated G” is becoming even more high-tech. We are moving away from static ratings toward dynamic, real-time content analysis.

Dynamic Content Modification

Some emerging software tools are capable of “Dynamic Rating.” Imagine a movie originally rated “PG-13,” where a software layer mutes profanity and obscures mature scenes in real time to create a “Rated G” experience. Such tools can rely on AI-driven “video inpainting” to modify content on the fly, letting users set their own safety levels in software settings instead of relying on a fixed rating from a board.
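The audio half of dynamic rating is easier to illustrate than inpainting. The sketch below assumes a speech recognizer has produced word-level timestamps in milliseconds, which is a format chosen here for illustration. It computes the padded intervals a player would silence around blocked words and merges intervals that overlap. Visual inpainting is out of scope for a sketch this size.

```python
from typing import List, Set, Tuple

# (word, start_ms, end_ms) as a speech recognizer might emit; format is assumed.
Word = Tuple[str, int, int]


def mute_intervals(words: List[Word], blocked: Set[str], pad_ms: int = 100) -> List[Tuple[int, int]]:
    """Return merged (start_ms, end_ms) spans to silence around blocked words."""
    spans = sorted(
        (max(0, start - pad_ms), end + pad_ms)
        for text, start, end in words
        if text.lower().strip(".,!?") in blocked
    )
    merged: List[Tuple[int, int]] = []
    for start, end in spans:
        if merged and start <= merged[-1][1]:
            # Overlaps the previous span: extend it instead of adding a new one.
            merged[-1] = (merged[-1][0], max(merged[-1][1], end))
        else:
            merged.append((start, end))
    return merged
```

Integer milliseconds avoid floating-point drift when padding and comparing timestamps, and merging keeps the player from toggling mute rapidly between back-to-back words.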

Verification through Blockchain and Decentralized IDs

To prevent the “spoofing” of content ratings, some developers are exploring blockchain technology. By recording a content’s rating on a decentralized ledger, the “Rated G” status becomes immutable and verifiable. This would prevent malicious actors from tagging mature software or videos as “G” to bypass filters. In this future, a “Rated G” tag would be a cryptographically signed certificate, ensuring that the tech ecosystem remains a trusted space for all users.
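The “signed certificate” part of this idea can be sketched with Python’s standard library. One important caveat: the example uses HMAC-SHA256, a shared-secret scheme, as a stand-in for a true digital signature. A real deployment would use public-key signatures (for example, Ed25519) so anyone can verify a rating without holding the signing key. The ledger itself is not modeled here. The record fields and key are hypothetical.

```python
import hashlib
import hmac
import json


def sign_rating(record: dict, key: bytes) -> str:
    """Produce a tag over a canonical JSON encoding of the rating record."""
    # sort_keys and fixed separators make the encoding deterministic.
    payload = json.dumps(record, sort_keys=True, separators=(",", ":")).encode()
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def verify_rating(record: dict, signature: str, key: bytes) -> bool:
    """Check the tag in constant time; any change to the record breaks it."""
    return hmac.compare_digest(sign_rating(record, key), signature)
```

If a malicious actor relabels a record from `"rating": "R"` to `"rating": "G"`, the original tag no longer verifies, which is exactly the spoofing scenario described above.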

Conclusion

In summary, “Rated G” has evolved from a simple movie rating into a complex technological framework. It is the language that software uses to talk to parents, the criteria that AI uses to sort through petabytes of data, and the barrier that protects young users in an increasingly digital world. For tech professionals and users alike, understanding the machinery behind the “G” rating is essential for navigating the modern media landscape safely and effectively. Whether it is through AI classification, API permissions, or algorithmic filtering, the “Rated G” standard remains our most important digital gatekeeper.

aViewFromTheCave is a participant in the Amazon Services LLC Associates Program, an affiliate advertising program designed to provide a means for sites to earn advertising fees by advertising and linking to Amazon.com. Amazon, the Amazon logo, AmazonSupply, and the AmazonSupply logo are trademarks of Amazon.com, Inc. or its affiliates. As an Amazon Associate we earn affiliate commissions from qualifying purchases.