In the current landscape of rapid digital transformation, the term “quantification” has moved beyond the realms of mathematics and physics to become the backbone of the technology industry. At its most fundamental level, quantification is the process of converting qualitative phenomena—thoughts, movements, temperature, or language—into discrete numerical values. In the world of tech, quantification is the bridge between the chaotic physical world and the structured digital universe.

Without quantification, there would be no Artificial Intelligence, no high-performance computing, and no data-driven decision-making. As we push the boundaries of what software and hardware can achieve, understanding the nuances of how we quantify data is no longer just for data scientists; it is essential for anyone navigating the modern tech ecosystem.

Understanding the Core of Quantification in Digital Ecosystems

To understand quantification in a technological context, we must first look at how computers perceive the world. Unlike humans, who perceive the world in gradients and continuous flows, computers operate in binary: 1s and 0s. Quantification is the mechanism that translates the “analog” reality we live in into the “digital” data that processors can manipulate.

From Qualitative Insights to Discrete Data

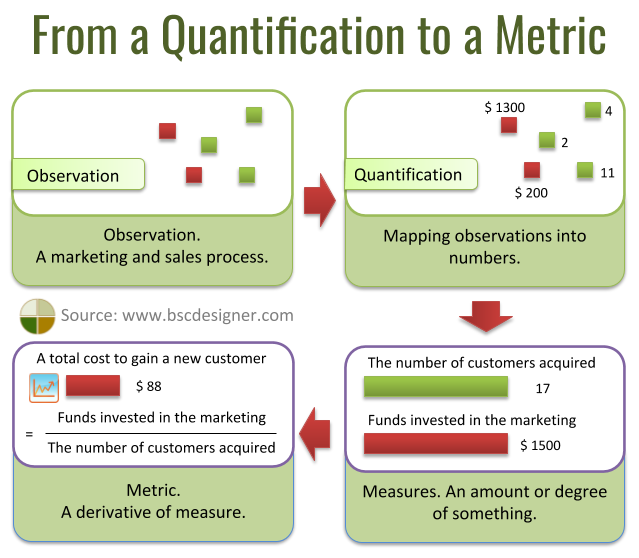

In software development and data science, the first step of any project is often identifying what needs to be quantified. A “fast” website is a qualitative description; a “page load time of 1.2 seconds” is a quantified metric. By assigning numbers to experiences, developers can create benchmarks. This transition from subjective observation to objective data allows for the creation of algorithms that can optimize performance based on real-world feedback.

Quantification also involves the categorization of unstructured data. For instance, in Natural Language Processing (NLP), a machine doesn’t “understand” the sentiment of a word like “excellent.” Instead, the word is quantified into a vector—a series of numbers in a high-dimensional space—that represents its relationship to other words. This mathematical representation is what allows AI to “comprehend” human language.
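As a rough illustration of the idea, the sketch below compares word vectors with cosine similarity. The three-dimensional vectors and their values are invented for this example (real embeddings are learned by a model and have far more dimensions), and `cosine_similarity` is a helper defined here, not part of any particular NLP library.

```python
import math

# Toy 3-dimensional embeddings; the values are made up for illustration.
# Real models learn vectors with hundreds or thousands of dimensions.
EMBEDDINGS = {
    "excellent": [0.9, 0.8, 0.1],
    "great": [0.8, 0.9, 0.2],
    "terrible": [-0.9, -0.7, 0.1],
}


def cosine_similarity(a, b):
    """Return the cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


sim_close = cosine_similarity(EMBEDDINGS["excellent"], EMBEDDINGS["great"])
sim_far = cosine_similarity(EMBEDDINGS["excellent"], EMBEDDINGS["terrible"])

print(f"excellent vs great:    {sim_close:.3f}")
print(f"excellent vs terrible: {sim_far:.3f}")
```

With these toy values, the two positive words score close to 1 and the opposite pair scores below 0, which is the kind of numeric relationship a model can work with.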

The Role of Binary Logic and Precision

Every piece of software relies on the precision of its quantification. In the early days of computing, storage and processing power were limited, meaning developers had to be extremely selective about how they quantified data. Today, while we have massive computational resources, the “granularity” of quantification remains a key technical challenge.

If you quantify a color with only 8 bits of data, you get 2^8 = 256 colors; if you use 24 bits, you get 2^24, or over 16 million. This leap in bit depth is part of what separates the blocky graphics of the 1980s from the photorealistic renders of modern GPUs. In many tech sectors, from digital imaging to audio engineering, the history of progress is in large part the history of increasing the resolution of our quantification.
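The arithmetic behind those figures is simply powers of two. The short sketch below shows it, along with one possible way of mapping a continuous value in the range 0 to 1 onto the available levels; `distinct_values` and `quantize_unit` are illustrative helper names, not functions from a graphics library.

```python
def distinct_values(bits):
    """Number of distinct values that can be encoded with the given bit count."""
    return 2 ** bits


def quantize_unit(value, bits):
    """Map a float in [0, 1] to the nearest integer level for this bit depth."""
    clamped = min(1.0, max(0.0, value))
    return round(clamped * (distinct_values(bits) - 1))


for bits in (1, 8, 16, 24):
    print(f"{bits:>2} bits -> {distinct_values(bits):,} distinct values")

# Full intensity maps to the highest level available at each bit depth.
print(quantize_unit(1.0, 8))   # highest 8-bit level
print(quantize_unit(1.0, 16))  # highest 16-bit level
```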

Model Quantization: Making AI Leaner and Faster

Perhaps the most significant application of quantification in the current “AI Summer” is a process known as Model Quantization. As Large Language Models (LLMs) like GPT-4 and Llama 3 grow in size, they require immense amounts of VRAM and computational power. Quantization is the primary technique used to make these massive models accessible on consumer-grade hardware.

Reducing Precision for High Performance

In deep learning, model weights have traditionally been stored as 32-bit floating-point numbers (FP32), although 16-bit formats are now common as well. FP32 values are highly precise but take up significant memory. Quantization involves converting these weights into lower-precision formats, such as 16-bit floats (FP16), 8-bit integers (INT8), or even 4-bit integers.
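To make the conversion concrete, here is a minimal sketch of one common scheme, symmetric per-tensor INT8 quantization, written in plain Python. It is an assumption chosen for illustration: production frameworks use more elaborate variants (per-channel scales, zero points, calibration data), and the weight values below are invented.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization of floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    # One scale for the whole tensor; fall back to 1.0 if all weights are zero.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float weights from the integers and the scale."""
    return [q * scale for q in quantized]


weights = [0.42, -1.27, 0.003, 0.9, -0.55]  # made-up example weights
q_weights, scale = quantize_int8(weights)
restored = dequantize(q_weights, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("int8 values:", q_weights)
print("scale:", scale)
print("largest round-trip error:", max_error)
```

Each weight now fits in one byte instead of four, at the cost of a rounding error of at most half the scale per weight.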

The goal is to reduce the “weight” of the model without significantly sacrificing its “intelligence” or accuracy. By quantizing the neural network’s parameters into smaller bit sizes, developers can reduce the memory needed for the weights by about 50% (FP16) or 75% (INT8) relative to FP32, and by up to 87.5% with 4-bit integers. This can allow a model that would normally require an enterprise-grade A100 GPU to run on a standard laptop or even a high-end smartphone.
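The savings follow directly from the bit widths. The back-of-the-envelope sketch below assumes a hypothetical model with 7 billion parameters and counts only the weights; it ignores activations, caches, and the small overhead of storing quantization scales.

```python
BITS_PER_WEIGHT = {"FP32": 32, "FP16": 16, "INT8": 8, "INT4": 4}


def weight_memory_gb(num_params, fmt):
    """Approximate weight storage in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * BITS_PER_WEIGHT[fmt] / 8 / 1e9


def savings_vs_fp32(fmt):
    """Fraction of weight memory saved relative to FP32."""
    return 1 - BITS_PER_WEIGHT[fmt] / BITS_PER_WEIGHT["FP32"]


NUM_PARAMS = 7_000_000_000  # hypothetical 7B-parameter model

for fmt in BITS_PER_WEIGHT:
    size = weight_memory_gb(NUM_PARAMS, fmt)
    saved = savings_vs_fp32(fmt)
    print(f"{fmt}: {size:5.1f} GB ({saved:.1%} smaller than FP32)")
```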

Edge Computing and the Need for Efficiency

The drive toward quantization is fueled by the rise of edge computing. We no longer want our AI to live exclusively in the cloud; we want it in our smartwatches, thermostats, and autonomous vehicles. These devices have strict power and thermal constraints.

Quantization allows for “inference at the edge.” By using INT8 quantization, a smart camera can run object detection algorithms locally in real time without overheating or draining its battery. This efficiency is crucial for privacy (data doesn’t have to leave the device) and latency (there is no network round trip to a server), making quantization a cornerstone of the Internet of Things (IoT).

Quantification in Software Development and Performance Monitoring

In the realm of DevOps and software engineering, quantification is the foundation of observability. Modern software stacks are incredibly complex, often consisting of hundreds of microservices interacting simultaneously. Without rigorous quantification, identifying a bottleneck or a security flaw would be like looking for a needle in a haystack.

Key Performance Indicators (KPIs) and System Metrics

Software engineers use quantification to monitor the “health” of their applications. This involves tracking specific metrics such as:

- Latency: The time it takes for a request to travel from the user to the server and back.

- Throughput: How many requests a system can handle per second.

- Error Rate: The percentage of requests that result in a failure.

By quantifying these metrics, teams can set Service Level Objectives (SLOs). For example, a team might decide that 99.9% of all requests must complete in under 200 ms. This numerical threshold provides a clear, testable standard for success, moving software management from guesswork toward a science.
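A check like this can be sketched in a few lines. The example below is a simplified illustration, not the API of any monitoring product: `slo_report` and `percentile` are hypothetical helpers, the latency samples are synthetic, and the 60-second window is an assumed measurement period.

```python
import math


def percentile(values, pct):
    """Nearest-rank percentile: smallest sample with at least pct% at or below it."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def slo_report(latencies_ms, failures, window_seconds, threshold_ms=200, target=0.999):
    """Summarize latency, throughput, and error rate for one measurement window."""
    total = len(latencies_ms)
    within = sum(1 for ms in latencies_ms if ms < threshold_ms)
    fraction_within = within / total
    return {
        "fraction_within": fraction_within,
        "slo_met": fraction_within >= target,
        "p99_ms": percentile(latencies_ms, 99),
        "throughput_rps": total / window_seconds,
        "error_rate": failures / total,
    }


# Synthetic sample: 997 fast requests and 3 slow ones in a 60-second window.
latencies = [120] * 997 + [450] * 3
report = slo_report(latencies, failures=2, window_seconds=60)

for name, value in report.items():
    print(f"{name}: {value}")
```

In this sample only 99.7% of requests finish under 200 ms, so the 99.9% objective is missed even though the 99th-percentile latency looks healthy.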

The Impact of Observability on User Experience (UX)

Quantification isn’t just about the back-end; it’s about the user. Companies now use “Quantified UX” to measure exactly how users interact with software. Heatmaps quantify where users click most often; session recordings quantify the “friction” points where users abandon a checkout process.

By turning human behavior into a set of quantifiable data points, tech companies can perform A/B testing, a controlled experiment applied to software improvement. If Version A of an app has a 5% higher conversion rate than Version B, and the sample is large enough for that difference to be statistically significant, the quantified data makes the decision for the product team. This data-driven approach has led to the hyper-optimized interfaces we see in modern social media and e-commerce platforms.
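Whether a difference in conversion rate is meaningful depends on how much data sits behind it. One standard way to check is a two-proportion z-test, sketched below with only the standard library. The visitor and conversion counts are invented, and the choice of this particular test is an assumption made for illustration; real experimentation platforms may use other methods.

```python
import math


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Return (z, two-sided p-value) for the difference between two conversion rates."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_a - p_b) / std_err
    # Two-sided tail probability of the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Same 5% relative lift (10.5% vs 10.0%), measured on two sample sizes.
z_small, p_small = two_proportion_z_test(21, 200, 20, 200)
z_large, p_large = two_proportion_z_test(10_500, 100_000, 10_000, 100_000)

print(f"200 users per variant:     z={z_small:.2f}, p={p_small:.3f}")
print(f"100,000 users per variant: z={z_large:.2f}, p={p_large:.5f}")
```

The same lift is indistinguishable from noise with 200 users per variant but clears the conventional 0.05 threshold with 100,000.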

Cybersecurity: Quantifying Risk and Threat Intelligence

In the world of digital security, quantification is the primary tool for risk management. Cybersecurity professionals are constantly faced with a deluge of potential threats. They cannot fix every single bug or block every single IP address, so they must quantify risk to prioritize their efforts.

The Common Vulnerability Scoring System (CVSS)

The tech industry uses the CVSS to quantify the severity of security vulnerabilities. Instead of saying a bug is “bad,” security researchers assign it a numerical score from 0.0 to 10.0 based on criteria like ease of exploitation and potential impact on data confidentiality.

A vulnerability with a quantified score of 9.8 (Critical) will receive immediate attention, while a 3.2 (Low) might be scheduled for a future update. This quantification allows global tech organizations to speak a common language and respond to threats with mathematical precision.
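The mapping from score to label can be expressed as a small lookup. The sketch below uses the qualitative severity bands from CVSS v3.x (None, Low, Medium, High, Critical); the finding names and scores in the list are hypothetical, and `cvss_severity` is an illustrative helper rather than part of an official tool.

```python
def cvss_severity(score):
    """Map a CVSS v3.x base score to its qualitative severity rating."""
    if not 0.0 <= score <= 10.0:
        raise ValueError("CVSS scores range from 0.0 to 10.0")
    if score == 0.0:
        return "None"
    if score <= 3.9:
        return "Low"
    if score <= 6.9:
        return "Medium"
    if score <= 8.9:
        return "High"
    return "Critical"


# Hypothetical findings: (name, base score). Not real vulnerabilities.
findings = [
    ("finding-a", 3.2),
    ("finding-b", 9.8),
    ("finding-c", 6.5),
]

# Work the queue from most to least severe.
prioritized = sorted(findings, key=lambda item: item[1], reverse=True)
for name, score in prioritized:
    print(f"{name}: {score} ({cvss_severity(score)})")
```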

Threat Modeling and Predictive Analytics

Modern security tools use quantification to detect anomalies. By establishing a “baseline” of normal network behavior (quantified in terms of data transfer volumes, login times, and geographic locations), AI-driven security software can identify outliers. If an employee who typically downloads 10 MB of data a day suddenly downloads 10 GB at 3:00 AM, the system detects a quantified deviation from the norm and triggers an alert. This proactive stance is only possible through the continuous quantification of network activity.
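A very simple version of this baseline check is a z-score: how many standard deviations an observation sits from the historical mean. The sketch below is a deliberately minimal assumption about how such a detector might work (commercial tools use far richer models); the download history is invented, and `download_z_score` and the threshold of 3 are illustrative choices.

```python
import statistics


def download_z_score(history_mb, observed_mb):
    """Standard deviations between an observation and the historical mean."""
    mean = statistics.mean(history_mb)
    stdev = statistics.stdev(history_mb)
    if stdev == 0:
        # Flat history: any change at all is infinitely unusual.
        return 0.0 if observed_mb == mean else float("inf")
    return (observed_mb - mean) / stdev


def is_anomalous(history_mb, observed_mb, threshold=3.0):
    """Flag observations more than `threshold` standard deviations from baseline."""
    return abs(download_z_score(history_mb, observed_mb)) > threshold


# Invented baseline: roughly 10 MB downloaded per day over the past week.
daily_history_mb = [9, 11, 10, 12, 8, 10, 10]

print(is_anomalous(daily_history_mb, 11))      # an ordinary day
print(is_anomalous(daily_history_mb, 10_000))  # 10 GB in one day
```

A fuller detector would also weigh the time of day and location mentioned above, not just volume.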

The Future of Quantification: Quantum Computing and Beyond

As we look toward the future, the nature of quantification itself is changing. The next frontier is quantum computing, which challenges our traditional binary understanding of data.

Qubits and the Shift in Quantifiable States

In classical computing, a bit is quantified as either 0 or 1. In quantum computing, a “qubit” can exist in a superposition of both states. Describing it requires a new kind of quantification, one based on complex-valued probability amplitudes whose squared magnitudes give the probability of measuring 0 or 1. The transition to quantum technology will require us to rethink how we approach cryptography, materials science, and optimization.
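The relationship between amplitudes and measurement outcomes can be shown with ordinary complex numbers. In the sketch below, a single-qubit state is described by two amplitudes, and the probability of each outcome is the squared magnitude of its amplitude after normalization. `measurement_probabilities` is an illustrative helper, not a call from a quantum computing framework, and nothing here simulates actual quantum hardware.

```python
import math


def measurement_probabilities(alpha, beta):
    """Return (P(0), P(1)) for a qubit with amplitudes alpha and beta.

    The amplitudes may be complex and need not be normalized on input.
    """
    norm = math.sqrt(abs(alpha) ** 2 + abs(beta) ** 2)
    if norm == 0:
        raise ValueError("at least one amplitude must be non-zero")
    alpha, beta = alpha / norm, beta / norm
    return abs(alpha) ** 2, abs(beta) ** 2


# Equal superposition: both outcomes are equally likely.
print(measurement_probabilities(1, 1))

# A complex phase changes the state but not these probabilities.
print(measurement_probabilities(1, 1j))

# Unequal amplitudes give unequal odds.
print(measurement_probabilities(3, 4j))
```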

The Ethical Implications of a Fully Quantified World

As our ability to quantify the world grows, so do the ethical challenges. We are reaching a point where we can quantify human emotions, gait, and even facial micro-expressions via AI. In a tech-driven society, there is a risk of “quantification bias”—the belief that if something cannot be measured or turned into a data point, it doesn’t matter.

The future of tech will require a balance. We must leverage the power of quantification to build faster, smarter, and more secure systems, while also recognizing the qualitative human values that numbers cannot fully capture.

Conclusion

Quantification is the silent engine of the digital age. It is the process that turns a flickering light into a high-definition movie, a string of text into an AI-generated essay, and a potential security threat into a manageable risk score. From the low-level model quantization that allows AI to run on our phones to the high-level system metrics that keep the internet stable, quantification is the language of technology. As we move forward, the companies and individuals who master the art of quantification—knowing what to measure, how to measure it, and how to act on that data—will be the ones who define the future of the tech landscape.
