In the modern digital landscape, the concept of security has evolved far beyond protecting databases from SQL injection or shielding networks from DDoS attacks. As our lives become increasingly tethered to the internet, the most vulnerable point of any system remains the human element. Online grooming is one of the most insidious forms of social engineering: technology is leveraged not to steal passwords, but to manipulate and exploit minors and compromise their safety. For developers, parents, and security professionals alike, understanding online grooming is an essential part of digital security. It is a sophisticated process that exploits the features of modern communication apps, gaming platforms, and social media algorithms to bypass traditional safety barriers.

The Mechanics of Online Grooming in the Modern Tech Landscape

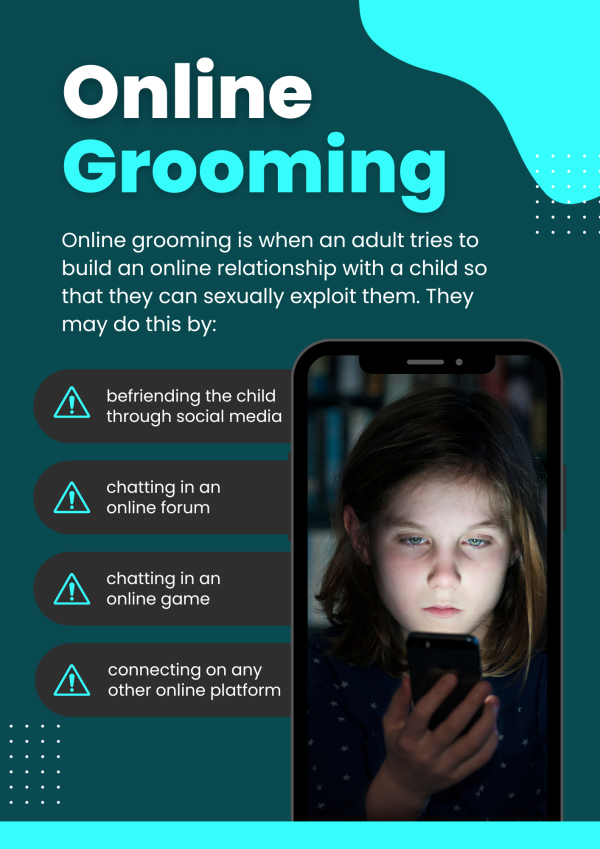

Online grooming is not a single event but a multi-stage process facilitated by digital tools. From a technological perspective, it can be viewed as a long-term social engineering campaign designed to build a “backdoor” into a child’s trust. Unlike traditional cybersecurity threats that rely on rapid exploits, grooming is a slow-burn operation that abuses the very features meant to enhance user connectivity.

How Social Engineering Bypasses Digital Barriers

At its core, grooming relies on social engineering: the psychological manipulation of people into performing actions or divulging confidential information. In the context of online grooming, the “information” sought is emotional intimacy and, ultimately, physical access. Predators use data-gathering techniques to identify vulnerable targets, analyzing public profiles to understand a child’s interests, location, and emotional state. By mirroring these interests, the groomer creates a false sense of familiarity. Once the child no longer sees them as a stranger, both technical safeguards and familiar advice such as “don’t talk to strangers” lose their force.

The Role of Anonymity and Identity Deception

The architecture of the internet provides a veil of anonymity that is central to the grooming process. Using burner accounts and stolen imagery, often while masking their location with Virtual Private Networks (VPNs), perpetrators create “sockpuppet” identities. This digital masquerade allows an adult to pose as a peer, a tactic known as “catfishing.” The tech industry’s struggle with identity verification, which must balance user privacy against safety, lets these deceptive profiles flourish across multiple platforms, and the conversations they start often migrate from public forums to private, encrypted messaging apps.

High-Risk Platforms and the Vulnerabilities of Interconnected Apps

The digital ecosystem is more interconnected than ever, and groomers exploit this fluidity to move targets through a “funnel.” The process often begins on high-traffic, public-facing platforms and transitions to more secluded, unmonitored digital spaces.

Gaming Ecosystems as Recruitment Hubs

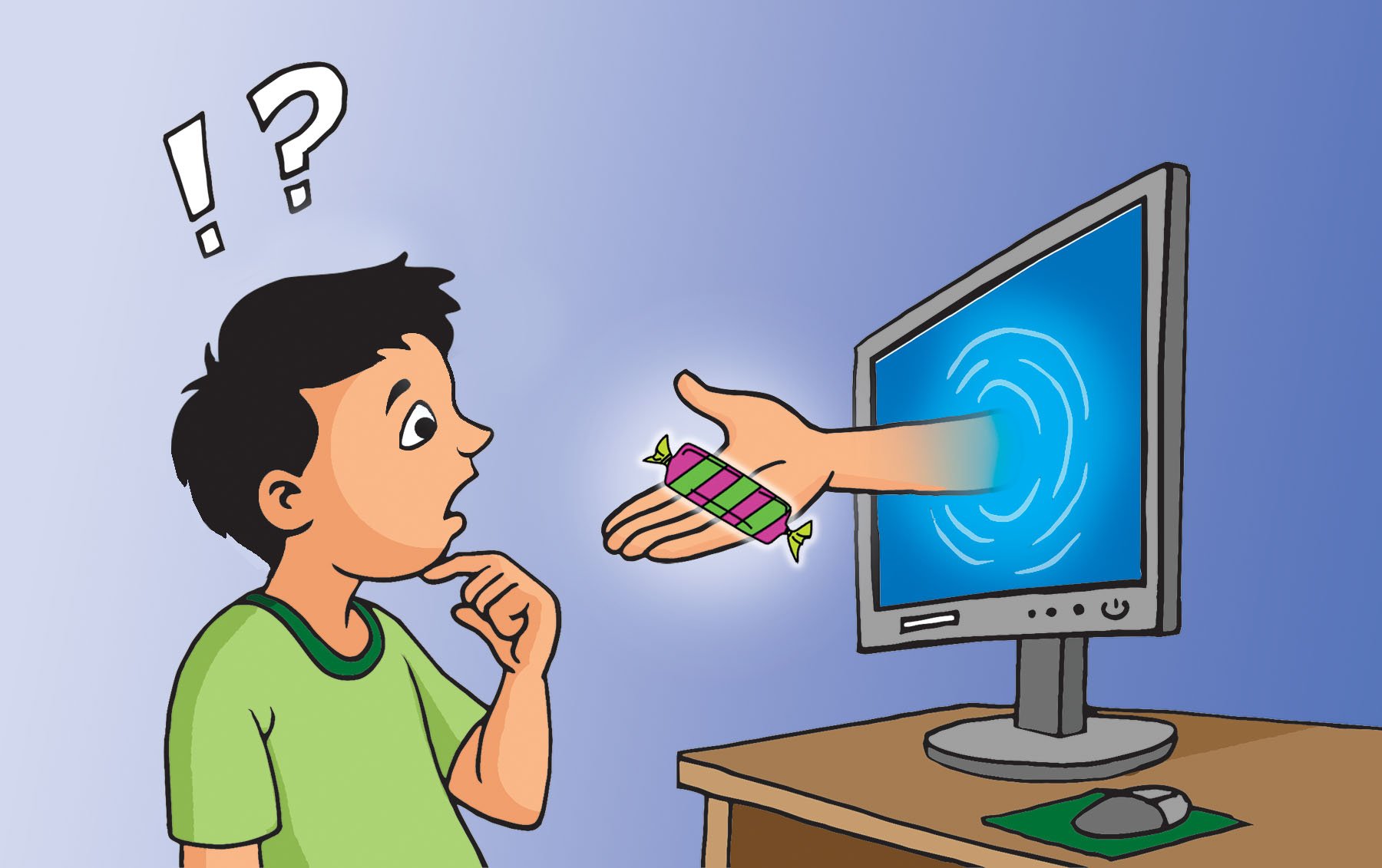

Modern gaming is no longer just about play; it is a massive social network. Platforms like Roblox and Minecraft, along with gaming-focused chat services like Discord, provide integrated voice and text chat that is often the first point of contact. Groomers frequently use in-game assets such as “skins,” virtual currency, or rare items as digital lures. By offering these high-value digital goods, the perpetrator establishes a “gift-giving” dynamic, a classic stage in the grooming process. In these immersive, chat-heavy environments, early grooming exchanges look like ordinary friendly play, which makes it difficult for automated moderation tools to distinguish legitimate friendship from predatory behavior.
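
One way moderation could complement chat analysis is by looking at transaction data. The sketch below is a hypothetical heuristic, not any platform's actual system: it assumes an invented `GiftEvent` record and flags accounts that send items to several minor accounts they have barely spoken to. The thresholds `min_recipients` and `max_prior_messages` are illustrative guesses.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class GiftEvent:
    """One in-game item or currency transfer (hypothetical event schema)."""
    sender: str
    recipient: str
    recipient_is_minor: bool
    prior_messages: int  # messages the pair exchanged before this gift


def flag_gift_patterns(events, min_recipients=3, max_prior_messages=5):
    """Return senders who gifted items to at least `min_recipients` distinct
    minor accounts with whom they had little or no prior conversation."""
    new_minor_recipients = defaultdict(set)
    for event in events:
        if event.recipient_is_minor and event.prior_messages <= max_prior_messages:
            new_minor_recipients[event.sender].add(event.recipient)
    return {
        sender
        for sender, recipients in new_minor_recipients.items()
        if len(recipients) >= min_recipients
    }


events = [
    GiftEvent("acct_a", "kid_1", True, 0),
    GiftEvent("acct_a", "kid_2", True, 1),
    GiftEvent("acct_a", "kid_3", True, 0),
    GiftEvent("acct_b", "kid_1", True, 40),   # long-standing friend
    GiftEvent("acct_c", "adult_1", False, 0),  # recipient is not a minor
]
print(flag_gift_patterns(events))  # {'acct_a'}
```

A flag like this is best treated as a reason for human review rather than automatic action, since siblings, classmates, or streamers running giveaways can produce the same pattern.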

The Double-Edged Sword of End-to-End Encryption

One of the most complex debates in digital security concerns End-to-End Encryption (E2EE). While E2EE is a cornerstone of digital privacy, protecting journalists and activists from surveillance, it also creates “dark corners” for groomers. When a conversation moves from a moderated platform like Instagram to an encrypted one like WhatsApp or Signal, server-side safety algorithms can no longer read the messages, and only tools running on the child’s own device retain any visibility, often limited. This transition is a critical “red flag” in the grooming cycle, because it signals a move to a space where the perpetrator can escalate the manipulation without fear of the platform’s automated detection.
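
Because the request to move off-platform is usually made while the conversation is still on the moderated service, it is one of the last moments server-side tooling can act. A minimal sketch, assuming a simple keyword approach rather than any platform's real classifier: flag messages that both name another messaging app and ask the recipient to continue there. The app list, the phrase list, and the function name `flags_platform_migration` are illustrative assumptions.

```python
import re

# Illustrative, non-exhaustive list of off-platform destinations.
OFF_PLATFORM_APP = re.compile(
    r"\b(whatsapp|signal|telegram|snapchat|snap|kik)\b", re.IGNORECASE
)
# Phrases that ask the other person to continue the conversation elsewhere.
CONTACT_REQUEST = re.compile(
    r"\b(add me|text me|message me|talk (?:on|over)|move to|switch to"
    r"|what'?s your (?:number|snap))\b",
    re.IGNORECASE,
)


def flags_platform_migration(message: str) -> bool:
    """True when a message both names another app and asks to move there.

    Requiring both signals avoids flagging ordinary uses of words such as
    "signal" ("my wifi signal is terrible").
    """
    return bool(OFF_PLATFORM_APP.search(message) and CONTACT_REQUEST.search(message))


for msg in ["add me on WhatsApp so we can talk privately",
            "whats your snap?",
            "my wifi signal is terrible",
            "gg, nice game"]:
    print(flags_platform_migration(msg), msg)
```

Keyword lists like this miss misspellings, curly apostrophes, and deliberate obfuscation ("w h a t s a p p"), which is one reason they are considered a weak signal on their own.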

The Technological Evolution of Grooming: AI and Deepfakes

As we move deeper into the era of artificial intelligence, the tools available for online grooming are becoming increasingly sophisticated. We are seeing a shift from manual manipulation to automated and synthetic threats that challenge our current understanding of digital safety.

Synthetic Media and Extortion

The rise of Generative AI has introduced “deepfakes” into the grooming process. Predators can now use AI to generate realistic images or videos that make their fake personas more convincing. More alarmingly, the same technology fuels “sextortion.” A groomer may use AI to fabricate explicit imagery of a victim without consent, or use a single photo the victim shared to convince them that they have already been compromised. This leverage is then used to coerce the victim into further compliance, creating a cycle of digital blackmail that is extremely difficult for a minor to break.

Automated Bots and Mass-Scale Targeting

While traditional grooming is a one-to-one process, AI-driven bots allow predators to cast a wider net. These bots can be programmed to scan social media comments and gaming forums for specific keywords indicating vulnerability (e.g., mentions of loneliness or family conflict). Once a potential target is identified, the bot can initiate a conversation using Natural Language Processing (NLP) to build rapport before a human predator takes over. This “automated reconnaissance” significantly increases the scale at which grooming can occur.

Proactive Digital Defense: Security Tools and Monitoring Software

In response to these escalating threats, the tech industry has developed a suite of digital defense mechanisms. Protecting against online grooming requires a multi-layered security stack that combines hardware, software, and behavioral analytics.

Advanced Parental Control Features

The first line of defense often lies in the Operating System (OS). Both Apple (iOS) and Google (Android) include parental controls, Screen Time and Family Link respectively, that allow app-specific restrictions and content limits. Apple’s “Communication Safety” feature goes further: it uses on-device machine learning to detect nudity in images and videos a child sends or receives, for example in Messages, blurs them, and offers warnings and resources, all without exposing the conversation to the service provider.

AI-Driven Content Filtering and Early Warning Systems

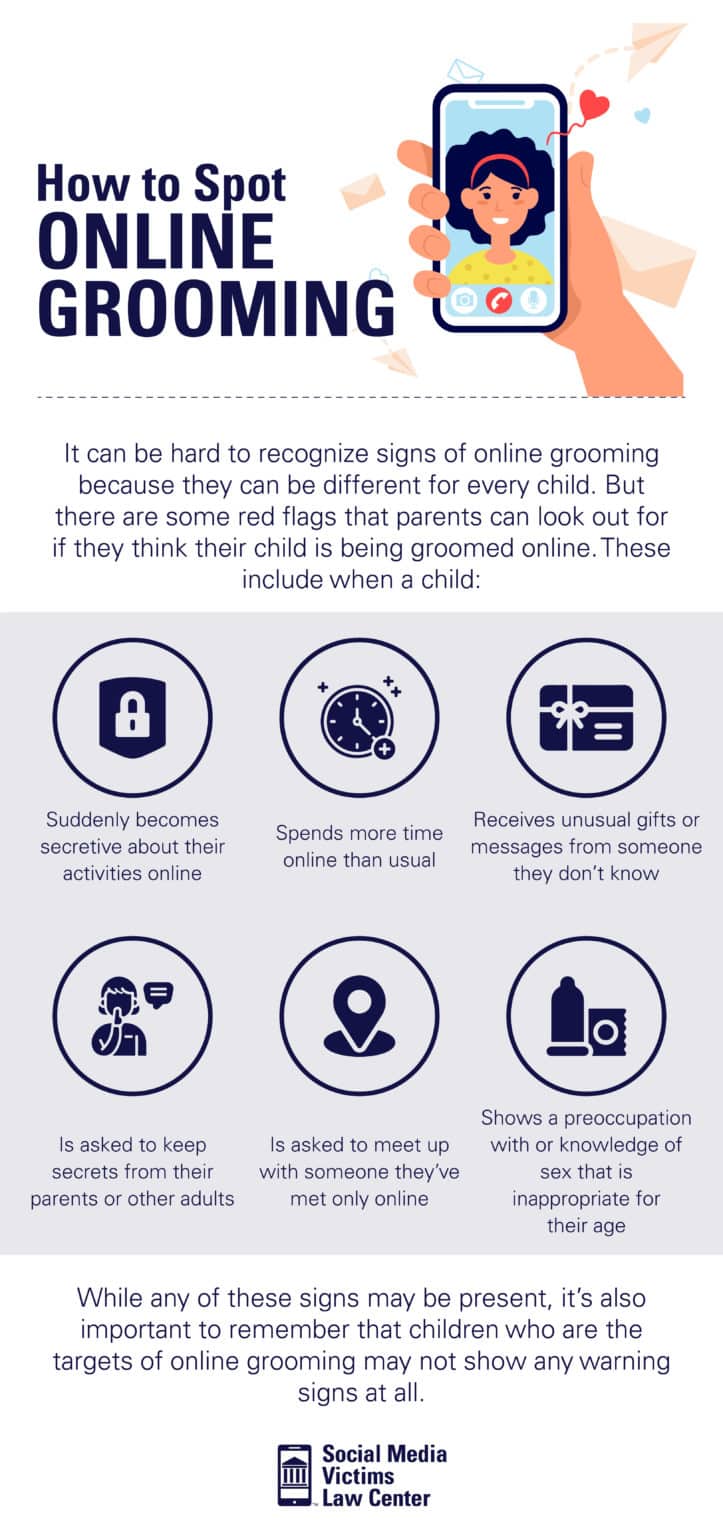

Third-party monitoring applications like Bark or Qustodio add another layer of protection. These tools do not just track location; they also analyze context. By examining language patterns across social media, email, and texts, they aim to recognize a “grooming linguistic profile”: shifts in tone, requests for secrecy, or a push toward “offline” meetings. This kind of proactive monitoring acts as an Intrusion Detection System (IDS) for a child’s social life, alerting guardians to potential threats before they escalate.
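
To make the IDS analogy concrete, here is a toy rule-based scorer for two of the signals mentioned (requests for secrecy and moves toward offline meetings). It is an assumption-laden sketch, not how Bark, Qustodio, or any specific product works internally; their methods are proprietary. The phrase patterns, the `WEIGHTS` values, and the alert `threshold` are all hypothetical.

```python
import re

# Illustrative phrase patterns for two warning signs.
SIGNALS = {
    "secrecy": re.compile(
        r"\b(don'?t tell (?:your|ur) (?:mom|dad|parents)|our (?:little )?secret"
        r"|keep (?:this|it) between us|delete (?:this|these|our) (?:chat|messages?))\b",
        re.IGNORECASE,
    ),
    "offline_meeting": re.compile(
        r"\b(meet (?:up|in person|irl)|where do you live|what school do you go to"
        r"|come over|pick you up)\b",
        re.IGNORECASE,
    ),
}
# Hypothetical weights: a request to meet counts more than secrecy alone.
WEIGHTS = {"secrecy": 2, "offline_meeting": 3}


def score_conversation(messages: list[str], threshold: int = 5) -> tuple[int, set[str], bool]:
    """Return (score, categories seen, alert?) for a whole conversation.

    Each category counts once, so at the default threshold an alert needs
    both secrecy and meeting signals: an escalation pattern rather than a
    single ambiguous phrase.
    """
    seen = {
        name
        for msg in messages
        for name, pattern in SIGNALS.items()
        if pattern.search(msg)
    }
    score = sum(WEIGHTS[name] for name in seen)
    return score, seen, score >= threshold


chat = ["hey how was practice", "this is our secret ok?", "we should meet up this weekend"]
print(score_conversation(chat))
```

Scoring the conversation as a whole, instead of message by message, mirrors the point that grooming is a multi-stage process: any single message may look harmless, while the combination is what signals risk.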

Building a Resilient Digital Infrastructure for Child Safety

Securing the digital world against grooming is not just the responsibility of the end-user; it requires a systemic change in how platforms are designed and regulated. The “Safety by Design” movement is gaining momentum as a necessary standard in software development.

Platform Accountability and Regulatory Compliance

Governments worldwide are introducing legislation, such as the UK’s Online Safety Act and various US state-level laws, that requires tech companies to take proactive steps against grooming. This includes age-verification technologies that must be both accurate and privacy-preserving. One promising technical approach is “Zero-Knowledge Proofs” (ZKPs), which let a user prove they are over a certain age without revealing their actual birthdate or identity documents to the platform.
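
A full zero-knowledge range proof is too involved for a short example. As a simpler illustration of the same goal (proving "at least N" without disclosing the exact age), the sketch below uses a hash-chain age attestation, which is *not* a ZKP. It assumes a trusted issuer that checks the user's age, gives the user a secret random seed, and signs the resulting commitment (signature handling is omitted). All function names are hypothetical.

```python
import hashlib
import hmac
import secrets


def hash_chain(value: bytes, steps: int) -> bytes:
    """Apply SHA-256 to `value` exactly `steps` times."""
    for _ in range(steps):
        value = hashlib.sha256(value).digest()
    return value


def issue_commitment(seed: bytes, age: int) -> bytes:
    """Issuer side: commit to the age as H^(age+1)(seed).

    A real deployment would have the issuer sign this value; omitted here.
    """
    return hash_chain(seed, age + 1)


def prove_age_at_least(seed: bytes, age: int, threshold: int) -> bytes:
    """User side: reveal H^(age+1-threshold)(seed), which does not expose `age` by itself."""
    if age < threshold:
        # Proving a higher threshold would require inverting SHA-256.
        raise ValueError("age is below the requested threshold")
    return hash_chain(seed, age + 1 - threshold)


def verify_age_at_least(commitment: bytes, proof: bytes, threshold: int) -> bool:
    """Verifier side: hashing the proof `threshold` more times must reach the commitment."""
    return hmac.compare_digest(hash_chain(proof, threshold), commitment)


seed = secrets.token_bytes(32)            # kept secret by the user
commitment = issue_commitment(seed, 21)   # issuer attests to age 21
proof = prove_age_at_least(seed, 21, 18)
print(verify_age_at_least(commitment, proof, 18))  # True
print(verify_age_at_least(commitment, proof, 19))  # False
```

The verifier sees only `commitment`, `proof`, and `threshold`. Unlike a genuine ZKP, presenting the same `proof` to two sites lets them link the user, and the whole scheme depends on trusting the issuer.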

The Importance of Digital Literacy in Cybersecurity

Ultimately, the most powerful firewall is an informed user. Digital literacy is a critical component of modern cybersecurity education. Just as employees are trained to recognize phishing emails, children and adolescents must be taught to recognize the “phishing” of their emotions. That means understanding how algorithms work, why “free” digital items usually come at a cost, and the technical ways someone can fake an identity.

Security practitioners often quote Bruce Schneier’s maxim that “security is a process, not a product,” and it is never more true than in the context of online grooming. By combining robust technological safeguards with comprehensive digital education, we can create a more secure environment where the benefits of connectivity are not overshadowed by the risks of exploitation.

As technology continues to advance, the methods of grooming will undoubtedly evolve. The tech community, including developers, security researchers, and policy advocates, must remain vigilant, ensuring that the platforms we build to bring the world together are not used as tools to tear lives apart. The fight against online grooming is a fundamental pillar of the broader mission to secure the digital frontier.

aViewFromTheCave is a participant in the Amazon Services LLC Associates Program, an affiliate advertising program designed to provide a means for sites to earn advertising fees by advertising and linking to Amazon.com. Amazon, the Amazon logo, AmazonSupply, and the AmazonSupply logo are trademarks of Amazon.com, Inc. or its affiliates. As an Amazon Associate we earn affiliate commissions from qualifying purchases.