In the realm of modern technology, logic and reasoning are not merely philosophical abstractions; they are the fundamental building blocks of every line of code, every silicon chip, and every artificial intelligence model currently reshaping our world. From the simplest calculator to the most sophisticated Large Language Model (LLM), the ability to process input, apply a set of rules, and derive a coherent output is what defines the “intelligence” of a machine.

To understand the trajectory of technology—where it began and where it is headed—one must first master the concepts of logic and reasoning as they apply to computational systems. This article explores how these human cognitive processes have been digitized, the evolution of algorithmic thought, and how the tech industry is bridging the gap between rigid binary rules and fluid, human-like inference.

The Architecture of Machine Thought: Defining Logic and Reasoning in Tech

At its core, “logic” in technology refers to the formal system of rules that govern how data is manipulated. If logic is the “grammar” of computing, then “reasoning” is the active process of using that grammar to solve a specific problem or reach a conclusion. In the digital world, this relationship is expressed through binary states and complex algorithmic structures.

Symbolic Logic: The Foundational “If-Then” Framework

The earliest iterations of computing relied heavily on symbolic logic. This is a form of reasoning where symbols represent specific data points or actions, and operators (AND, OR, NOT) define their relationships. This is best exemplified by the “if-then” statement. If a user enters the correct password, then the system grants access.

This deterministic approach makes behavior predictable: the same inputs always produce the same output. In software engineering, this is often referred to as “hardcoded logic.” It is rigid and inflexible, but that rigidity is what mission-critical systems, such as flight control software or medical device monitoring, depend on, because there is no room for “maybe.”
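The password example can be sketched as a hard-coded rule in a few lines of Python. The function name and its inputs are hypothetical, chosen only to show AND, OR, and NOT working together; this is not a real authentication scheme.

```python
def grant_access(password_ok: bool, account_locked: bool,
                 is_admin: bool, second_factor_ok: bool) -> bool:
    """Hard-coded rule: the same inputs always give the same answer."""
    # IF the password is correct AND the account is NOT locked
    # AND (the user is an admin OR the second factor passed),
    # THEN grant access.
    if password_ok and not account_locked and (is_admin or second_factor_ok):
        return True
    return False


print(grant_access(True, False, False, True))   # True
print(grant_access(True, True, True, True))     # False: the account is locked
```

There is no "maybe" here: every combination of inputs maps to exactly one outcome, which is what makes this style of logic easy to test exhaustively.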

Boolean Algebra and the Birth of Digital Circuits

To understand how logic manifests in hardware, we must look at Boolean algebra. Developed by George Boole in the mid-19th century, this mathematical logic uses only two values: true and false (1 and 0). Every processor inside every gadget you own is essentially a massive collection of logic gates (AND, OR, XOR, etc.) that execute Boolean logic at lightning speed.

Reasoning at this level is purely mechanical. The hardware does not “know” it is processing a video or a spreadsheet; it is simply passing electrical signals through a sequence of logic gates. This synergy between mathematical logic and physical hardware is what allowed for the transition from mechanical counting machines to the digital revolution.
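To make the idea concrete, here is a minimal sketch that simulates gates in software and wires them into a 4-bit adder. Real hardware does this with transistors rather than Python functions, and the function names are illustrative only.

```python
def AND(a, b): return a & b
def OR(a, b):  return a | b
def XOR(a, b): return a ^ b


def half_adder(a, b):
    """Add two bits; return (sum_bit, carry_bit)."""
    return XOR(a, b), AND(a, b)


def full_adder(a, b, carry_in):
    """Add two bits plus an incoming carry, using two half adders."""
    s1, c1 = half_adder(a, b)
    s2, c2 = half_adder(s1, carry_in)
    return s2, OR(c1, c2)


def add_4bit(x, y):
    """Ripple-carry addition of two 4-bit numbers; return (result, carry_out)."""
    result, carry = 0, 0
    for i in range(4):
        bit_sum, carry = full_adder((x >> i) & 1, (y >> i) & 1, carry)
        result |= bit_sum << i
    return result, carry


print(add_4bit(5, 6))  # (11, 0)
print(add_4bit(9, 8))  # (1, 1): 17 does not fit in 4 bits, so the carry is set
```

Nothing in the adder "knows" it is doing arithmetic. Addition emerges from the way the gates are connected.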

Reasoning in the Age of Artificial Intelligence

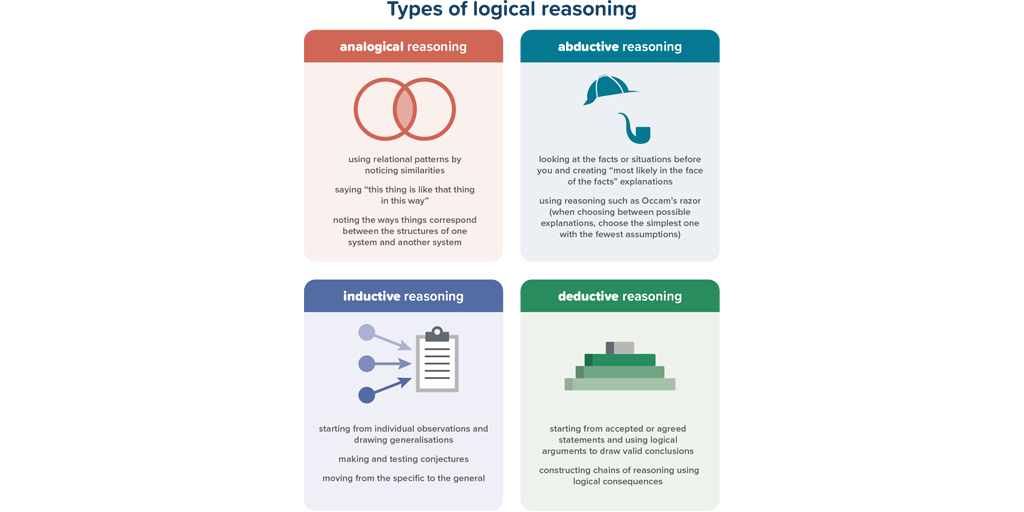

The shift from traditional software to Artificial Intelligence (AI) represents a monumental leap in how technology handles reasoning. While traditional software follows a roadmap (deductive reasoning), modern AI—specifically machine learning—attempts to navigate through observation (inductive reasoning).

Deductive vs. Inductive Reasoning in Machine Learning

In classical computing, we use deductive reasoning: we provide the rules (the code) and the data, and the machine produces the answer. However, the tech industry has moved toward inductive reasoning through Machine Learning (ML). In this model, we provide the data and the desired answers, and the machine “reasons” its way to the underlying rules.

For example, an image recognition tool isn’t given a list of rules for what a “cat” looks like. Instead, it processes millions of labeled images and inductively reasons that certain patterns of pixels consistently correlate with the label “cat.” This shift from programmed logic to learned reasoning is what has enabled breakthroughs in computer vision and natural language processing.
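The contrast can be shown with a deliberately tiny example, far simpler than image recognition. In the sketch below, the first function applies a rule a human wrote, while the second infers a rule (a single threshold) from labeled examples. The temperature data and the 30-degree cutoff are made up for illustration.

```python
def classify_by_rule(temp_c: float) -> bool:
    """Deductive: a human supplied the rule (hypothetical 30 degree cutoff)."""
    return temp_c >= 30.0


def learn_threshold(examples):
    """Inductive: choose the cutoff that misclassifies the fewest examples.

    examples is a list of (value, label) pairs, where label is True or False.
    """
    best_threshold, best_errors = None, len(examples) + 1
    for candidate in sorted(value for value, _ in examples):
        errors = sum((value >= candidate) != label for value, label in examples)
        if errors < best_errors:
            best_threshold, best_errors = candidate, errors
    return best_threshold


# Made-up labeled data: (temperature in Celsius, "did people call it hot?")
examples = [(12.0, False), (18.5, False), (24.0, False),
            (29.0, True), (33.0, True), (38.5, True)]

threshold = learn_threshold(examples)
print(threshold)  # 29.0
```

The learned cutoff differs from the hand-written one because it reflects the data rather than the programmer's assumption. Different data would produce a different rule.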

Large Language Models (LLMs) and Emergent Reasoning Capabilities

The current frontier of tech involves Large Language Models like GPT-4 or Claude. These systems do not possess a “logic engine” in the traditional sense; rather, they predict the most probable next token in a sequence. Yet, as these models scale, they exhibit what some researchers call “emergent reasoning.”

When an LLM solves a math problem it hasn’t seen before, it is performing a high-level form of probabilistic reasoning. It isn’t just reciting a database; it is synthesizing patterns to construct a logical argument. However, because this reasoning is statistical rather than symbolic, it can produce “hallucinations,” where the machine follows a linguistic pattern that is plausible in structure but factually incorrect.
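Next-token prediction can be illustrated with a toy bigram model that simply counts which word follows which. This is an assumption-laden simplification: production LLMs use neural networks over subword tokens, not word counts, but the principle of choosing a statistically likely continuation is the same.

```python
from collections import Counter, defaultdict


def train_bigram(text):
    """Count, for every word, how often each other word follows it."""
    counts = defaultdict(Counter)
    tokens = text.split()
    for current, following in zip(tokens, tokens[1:]):
        counts[current][following] += 1
    return counts


def predict_next(counts, token):
    """Return the most frequent follower of token, or None if it was never seen."""
    followers = counts.get(token)
    if not followers:
        return None
    return followers.most_common(1)[0][0]


corpus = "the cat sat on the mat . the cat ate the fish ."
model = train_bigram(corpus)

print(predict_next(model, "the"))    # cat
print(predict_next(model, "zebra"))  # None
```

The model answers "cat" because that pairing is frequent in its training text, not because it has checked any fact. That gap between likely and true is where hallucinations come from.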

Algorithmic Reasoning: How Software Solves Complex Problems

Beyond AI, logic and reasoning are the primary tools used in software architecture to manage complexity. As software systems grow to millions, and in some cases billions, of lines of code, maintaining logical consistency becomes a central challenge for developers and architects.

Heuristic Search and Optimization

Not all technical problems can be solved through brute-force logic. In scenarios like global logistics or network routing, the number of possible solutions is finite but grows so quickly with problem size that checking every one is impractical. Here, tech relies on “heuristic reasoning,” a practical approach to problem-solving that employs “rules of thumb” to find a satisfactory solution within a reasonable timeframe.

Algorithms like A* (used in pathfinding and route planning) use a heuristic, an estimate of the remaining cost to the goal, to decide which candidate path to explore next, so the most promising routes are examined first. Systems in other domains, such as high-frequency trading, rely on comparable shortcuts to act under tight time limits. This mimics human “common sense” reasoning, where we make the best possible decision with limited time and information.
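As a rough illustration, here is a compact A* search on a small grid. It assumes four-directional movement with a cost of 1 per step and uses Manhattan distance as the heuristic; the grid itself is invented for the example.

```python
import heapq


def a_star(grid, start, goal):
    """Return the shortest path length on a grid of 0 (free) and 1 (wall), or None."""
    def heuristic(cell):
        # Manhattan distance never overestimates the true cost on this kind of grid.
        return abs(cell[0] - goal[0]) + abs(cell[1] - goal[1])

    rows, cols = len(grid), len(grid[0])
    best_cost = {start: 0}
    frontier = [(heuristic(start), 0, start)]  # (estimated total, cost so far, cell)
    while frontier:
        _, cost, cell = heapq.heappop(frontier)
        if cell == goal:
            return cost
        if cost > best_cost.get(cell, float("inf")):
            continue  # a cheaper route to this cell was already found
        r, c = cell
        for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            if 0 <= nr < rows and 0 <= nc < cols and grid[nr][nc] == 0:
                new_cost = cost + 1
                if new_cost < best_cost.get((nr, nc), float("inf")):
                    best_cost[(nr, nc)] = new_cost
                    estimate = new_cost + heuristic((nr, nc))
                    heapq.heappush(frontier, (estimate, new_cost, (nr, nc)))
    return None


grid = [
    [0, 0, 0, 0],
    [1, 1, 0, 1],
    [0, 0, 0, 0],
    [0, 1, 1, 0],
]
print(a_star(grid, (0, 0), (3, 3)))  # 6
```

The heuristic does not change the answer here. It changes the order in which cells are explored, which is what saves time on large maps.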

Formal Verification: Ensuring Logic in Digital Security

In the world of digital security and blockchain, “logic” is a matter of safety. Formal verification is a technique where mathematical proofs are used to show that a piece of software satisfies a precise specification. By treating code like a mathematical object, engineers can prove that a system is free of certain classes of bugs, and therefore not exposed to the attacks that exploit them.

As we move toward a more decentralized internet (Web3) and autonomous vehicles, formal verification becomes one of the most rigorous applications of logic. It can rule out whole categories of “reasoning” errors made by human programmers, showing that the software behaves as specified under every condition the specification covers.
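Full formal verification relies on proof assistants or automated solvers, which are beyond a short example. A simpler relative of the idea is exhaustive checking: when the input space is small and finite, a program can test a specification against every possible input. The sketch below does this for a hypothetical 8-bit saturating add; both the function and its specification are invented for illustration.

```python
def saturating_add_u8(a: int, b: int) -> int:
    """Implementation under test: add two 8-bit values, clamping at 255."""
    total = a + b
    return 255 if total > 255 else total


def spec_holds(a: int, b: int, result: int) -> bool:
    """Specification: the result stays in range and equals min(a + b, 255)."""
    return 0 <= result <= 255 and result == min(a + b, 255)


def verify_exhaustively():
    """Return the first counterexample found, or None if the spec always holds."""
    for a in range(256):
        for b in range(256):
            if not spec_holds(a, b, saturating_add_u8(a, b)):
                return (a, b)
    return None


counterexample = verify_exhaustively()
print(counterexample)  # None: all 65,536 input pairs satisfy the specification
```

Unlike ordinary testing, which samples a few cases, this covers the entire input space. Proof-based tools aim for the same guarantee when the space is too large to enumerate.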

The Future of Cognitive Computing: Neuro-Symbolic AI

The tech industry is currently facing a divide: the rigid, reliable logic of symbolic AI versus the fluid, intuitive reasoning of neural networks. The future of technology lies in “Neuro-Symbolic AI,” an integrated approach that seeks to combine these two paradigms.

Bridging the Gap Between Intuition and Logic

Current AI models are excellent at intuition (recognizing faces, translating languages) but often struggle with basic logic (simple arithmetic, long-term planning). Neuro-symbolic systems aim to give AI a “dual-process” brain, similar to Daniel Kahneman’s “System 1” (fast, intuitive thinking) and “System 2” (slow, logical reasoning).

In this tech trend, the neural network handles the perception (seeing the world) while a symbolic layer handles the reasoning (applying rules and constraints to that perception). This could result in AI that can explain its reasoning, making it more transparent to human users.
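The division of labor can be sketched in miniature. In the example below, `perceive` is a stand-in for a neural network and returns hard-coded, hypothetical confidence scores, while a small rule table plays the role of the symbolic layer. The frame names, labels, and rules are all invented for illustration.

```python
def perceive(image_id):
    """Stand-in for a neural network: returns made-up confidence scores."""
    fake_outputs = {
        "frame_001": {"traffic_light_red": 0.94, "pedestrian": 0.08},
        "frame_002": {"traffic_light_red": 0.03, "pedestrian": 0.81},
        "frame_003": {"traffic_light_red": 0.05, "pedestrian": 0.02},
    }
    return fake_outputs[image_id]


# Symbolic layer: (detected fact, action, human-readable reason)
RULES = [
    ("traffic_light_red", "stop", "a red light was detected"),
    ("pedestrian", "stop", "a pedestrian was detected"),
]


def decide(image_id, threshold=0.5):
    """Turn scores into facts, then apply the rules in order."""
    scores = perceive(image_id)
    facts = {name for name, score in scores.items() if score >= threshold}
    for fact, action, reason in RULES:
        if fact in facts:
            return action, reason
    return "proceed", "no rule required stopping"


print(decide("frame_001"))  # ('stop', 'a red light was detected')
print(decide("frame_003"))  # ('proceed', 'no rule required stopping')
```

Because the decision comes from an explicit rule, the system can report which rule fired. That is the kind of explanation a purely neural pipeline does not provide by default.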

Ethical Reasoning and Algorithmic Bias

As we delegate more decisions to machines—from hiring processes to judicial sentencing—the “logic” used by these systems becomes a matter of ethics. Tech companies are now forced to program “ethical reasoning” into their tools. This involves creating frameworks that can detect and mitigate bias in data.

The challenge is that logic is objective, but reasoning is often influenced by the data it is fed. If the underlying data is biased, the machine’s reasoning will be logically consistent but ethically flawed. The next decade of tech development will be defined by how we build “reasoning” systems that align with human values and social logic.
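One simple way to detect this kind of bias is to compare how often a system selects people from different groups. The sketch below computes per-group selection rates and the gap between them, a measure often called demographic parity difference. The hiring decisions are fabricated for illustration, and a real audit would consider several fairness measures, not just this one.

```python
def selection_rates(decisions):
    """decisions is a list of (group, was_selected) pairs; return rate per group."""
    totals, selected = {}, {}
    for group, was_selected in decisions:
        totals[group] = totals.get(group, 0) + 1
        selected[group] = selected.get(group, 0) + int(was_selected)
    return {group: selected[group] / totals[group] for group in totals}


def parity_gap(decisions):
    """Difference between the highest and lowest group selection rates."""
    rates = selection_rates(decisions)
    return max(rates.values()) - min(rates.values())


# Made-up screening outcomes for two hypothetical applicant groups
decisions = ([("A", True)] * 6 + [("A", False)] * 4
             + [("B", True)] * 3 + [("B", False)] * 7)

print(selection_rates(decisions))       # {'A': 0.6, 'B': 0.3}
print(round(parity_gap(decisions), 2))  # 0.3
```

A gap of zero would mean both groups are selected at the same rate. A large gap does not prove unfairness on its own, but it flags a pattern that deserves a closer look at the training data.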

Conclusion: The Convergence of Mind and Machine

Logic and reasoning have evolved from the pages of philosophy books to the heart of the global economy. In technology, understanding these concepts is essential for navigating the complexities of software development, digital security, and the rapidly advancing field of Artificial Intelligence.

We have moved from an era of “Logic 1.0,” where machines were simple tools following strict rules, to “Reasoning 2.0,” where machines learn, adapt, and infer. As we look toward the future, the goal is to create technology that doesn’t just calculate, but truly understands the logical context of the world it inhabits. For tech professionals and enthusiasts alike, the mastery of logical structures remains the most valuable skill in an increasingly automated world. Through the lens of logic and reasoning, we are not just building faster computers; we are building a digital reflection of the human mind itself.
