In the contemporary landscape of rapid technological advancement, we are surrounded by devices that define our productivity, entertainment, and mobility. From the high-performance silicon chips in our smartphones to the massive battery arrays in electric vehicles (EVs), every piece of modern hardware is governed by a singular, vital metric: the kilowatt-hour (kWh).

Despite its ubiquity on utility bills and specification sheets, the term “kWh” remains frequently misunderstood. As we transition toward a more electrified, data-driven world—where smart homes, artificial intelligence, and sustainable energy tech dominate the conversation—understanding what a kWh is and how it functions within the tech ecosystem is no longer just for engineers. It is essential knowledge for anyone navigating the current digital frontier.

Understanding the Fundamentals: What Exactly is a kWh?

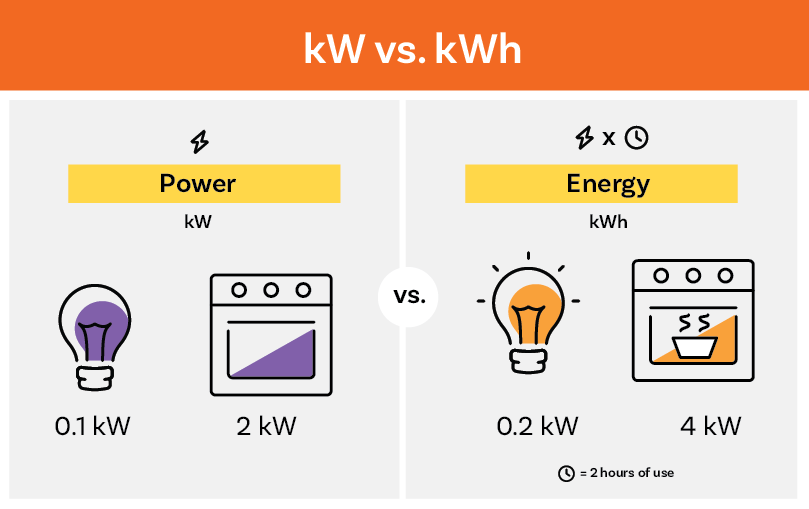

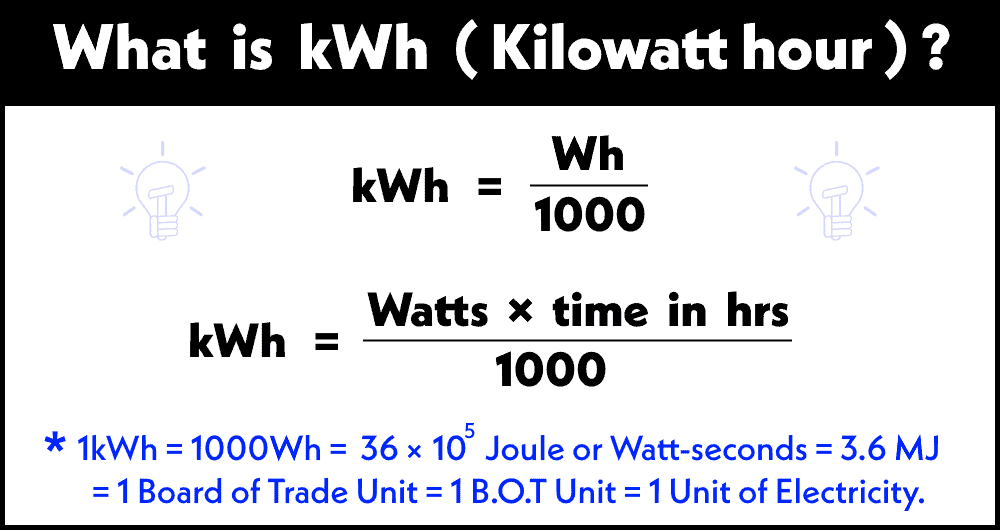

To understand the kilowatt-hour, one must first distinguish between power and energy. The two are often confused, but they are different physical quantities. Power, measured in watts (W) or kilowatts (kW), is the rate at which energy is used at a given moment. Energy, measured in kilowatt-hours (kWh), is the total amount used over a period of time: power multiplied by the duration for which it is drawn.

The Technical Distinction: kW vs. kWh

A helpful analogy for tech enthusiasts is data transfer. If “power” (kW) is the speed of your internet connection (e.g., 1 Gbps), then “energy” (kWh) is the total amount of data you download at that speed over an hour (1 Gbps sustained for a full hour works out to 450 GB).

Specifically, one kilowatt-hour is the energy used by a device drawing 1,000 watts for one hour. For example, a high-end gaming PC drawing a steady 500 watts consumes exactly 1 kWh over two hours. In hardware benchmarking and performance testing, this metric lets engineers quantify how efficiently a system works under varying workloads.
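The conversion is simple enough to express as a one-line function. This is a minimal sketch that assumes a constant power draw; real PCs fluctuate with load, so in practice you would use an average power figure.

```python
def energy_kwh(power_watts: float, hours: float) -> float:
    """Energy in kWh for a device drawing a constant power for a given time."""
    # 1 kWh = 1,000 W sustained for 1 h, so divide watt-hours by 1,000.
    return power_watts * hours / 1000.0

# The gaming-PC example from the text: a steady 500 W for 2 hours.
print(energy_kwh(500, 2))  # 1.0
```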

The Physics of Energy Consumption in Hardware

In the realm of semiconductor technology, energy consumption is a primary constraint. Every operation performed by a CPU or GPU involves switching transistors, which moves electrical charge, dissipates a tiny amount of energy, and generates heat. When billions of these operations occur every second, the cumulative energy requirement becomes significant. Tech manufacturers are constantly striving to improve “performance per watt,” a metric of how much computational work a chip completes for a given amount of energy (operations per second per watt is the same as operations per joule). This is why a modern Apple Silicon chip can outperform an older Intel processor while using significantly fewer kWh over a standard workday.
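A chip that finishes a job faster at lower power wins twice, because the energy for a task is average power multiplied by the time taken. The sketch below uses purely hypothetical power and runtime figures for two unnamed chips; it illustrates the arithmetic, not a benchmark of any real processor.

```python
JOULES_PER_KWH = 3_600_000  # 1 kWh = 1,000 W x 3,600 s

def task_energy_kwh(avg_power_watts: float, seconds: float) -> float:
    """Energy to finish one task: average power multiplied by time taken."""
    return avg_power_watts * seconds / JOULES_PER_KWH

# Hypothetical numbers for the same job on two chips.
efficient = task_energy_kwh(avg_power_watts=20, seconds=60)  # faster, lower power
older = task_energy_kwh(avg_power_watts=45, seconds=90)      # slower, higher power

print(f"efficient chip: {efficient:.6f} kWh, older chip: {older:.6f} kWh")
print(f"older chip uses {older / efficient:.2f}x the energy for the same work")
```

Note that the older chip draws 2.25 times the power but, because it also runs 1.5 times longer, ends up using well over three times the energy.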

kWh in Modern Gadgets and the Smart Home Ecosystem

As we move toward the “Internet of Things” (IoT), the kilowatt-hour has moved from the basement fuse box to our smartphone screens. Today’s smart homes are integrated ecosystems where software and hardware collaborate to monitor and optimize energy use in real time.

Measuring Efficiency in Home Automation

The rise of smart plugs and intelligent home hubs has democratized energy telemetry. Smart plugs measure the draw of whatever is plugged into them, while whole-home energy monitors (such as Sense or Emporia) watch the home’s electrical supply. Some monitors, Sense being the best-known example, use machine-learning algorithms to “listen” for the electrical signatures of individual appliances, such as the characteristic pattern in current draw when a refrigerator compressor or a microwave switches on. From these signatures, the software can estimate how many kWh each gadget is consuming.

This level of granular data is critical for the tech-savvy consumer. It allows for the identification of “vampire loads”: devices that consume energy even in standby mode. For instance, an older game console or a desktop computer in sleep mode might still pull a steady 10-20 watts. Around the clock, that adds up to roughly 88-175 kWh per device per year, so a household with several such idle gadgets can waste hundreds of kWh annually without noticing.
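The yearly figures above come from multiplying the standby draw by the hours in a year. A minimal sketch, assuming the device idles 24 hours a day, 365 days a year, at a constant draw:

```python
def annual_standby_kwh(standby_watts: float, hours_per_day: float = 24.0) -> float:
    """Yearly energy drawn by a device sitting in standby or sleep mode."""
    # watts x hours per day x days per year = watt-hours; divide by 1,000 for kWh
    return standby_watts * hours_per_day * 365 / 1000.0

# The 10-20 W range quoted above, for one device idling around the clock.
for watts in (10, 20):
    print(f"{watts} W standby -> {annual_standby_kwh(watts):.1f} kWh/year")
```

This prints 87.6 kWh/year for 10 W and 175.2 kWh/year for 20 W; lowering `hours_per_day` models a device that is unplugged part of the time.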

Energy Monitoring Software and IoT Integration

The integration of kWh tracking into software platforms like Home Assistant or Apple HomeKit represents a significant shift in home management. Modern “Energy Dashboards” provide visual analytics, showing peaks in consumption that correlate with specific automated routines. For a developer or tech hobbyist, this data is invaluable for tuning always-on servers and ensuring that home labs or NAS (Network Attached Storage) systems are running within their thermal and energy “sweet spots.”
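Most monitors report power (watts) at intervals, so a dashboard has to turn those readings into energy (kWh). One common approach is numerical integration with the trapezoidal rule. This is a generic sketch, not the code of Home Assistant, HomeKit, or any other platform, and the NAS readings are hypothetical.

```python
from datetime import datetime

def samples_to_kwh(samples: list[tuple[datetime, float]]) -> float:
    """Integrate (timestamp, watts) readings into kWh with the trapezoidal rule.

    Assumes readings are sorted by time and that power changes roughly
    linearly between consecutive samples.
    """
    total_wh = 0.0
    for (t0, p0), (t1, p1) in zip(samples, samples[1:]):
        hours = (t1 - t0).total_seconds() / 3600.0
        total_wh += (p0 + p1) / 2.0 * hours  # average power x elapsed time
    return total_wh / 1000.0

# Hypothetical hourly readings from a NAS: idle at 30 W, then busy at 90 W.
readings = [
    (datetime(2024, 1, 1, 0, 0), 30.0),
    (datetime(2024, 1, 1, 1, 0), 30.0),
    (datetime(2024, 1, 1, 2, 0), 90.0),
    (datetime(2024, 1, 1, 3, 0), 90.0),
]
print(f"{samples_to_kwh(readings):.3f} kWh")  # 0.180 kWh
```

Shorter sampling intervals track sudden spikes more faithfully; with hourly samples, a brief burst of activity between readings is simply missed.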

The Role of kWh in Electric Vehicles and Portable Power

Perhaps the most prominent use of the kilowatt-hour in modern tech marketing is found in the automotive and portable power sectors. For electric vehicles, the kWh is the equivalent of the “gallon of gas,” representing the capacity of the fuel tank.

Battery Capacity and Range Estimation

When you look at the specs for a Tesla Model 3 or a Rivian R1T, you will see battery sizes listed as 75 kWh or 135 kWh. Together with the vehicle’s efficiency, this capacity largely determines its range. The battery itself is overseen by the Battery Management System (BMS), a combination of monitoring electronics and firmware that tracks the health of individual lithium-ion cells and keeps the charge and discharge of those kilowatt-hours balanced and safe.

The efficiency of an EV is often measured in “watt-hours per mile” (Wh/mi). A highly efficient car might use only 250 Wh (0.25 kWh) to travel one mile. Reaching a figure that low depends on both aerodynamic design and the power-management firmware that controls how energy flows from the battery to the motors.
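Combining battery capacity with Wh/mi gives a rough range estimate. This sketch assumes the full listed capacity is usable and that consumption is constant. In reality, usable capacity is somewhat lower than the nameplate figure, and consumption varies with speed, temperature, and terrain.

```python
def estimated_range_miles(usable_kwh: float, wh_per_mile: float) -> float:
    """Range = usable battery energy (Wh) divided by consumption per mile."""
    return usable_kwh * 1000.0 / wh_per_mile

# A 75 kWh pack at the 250 Wh/mi efficiency quoted above.
print(estimated_range_miles(75, 250))  # 300.0

# A 135 kWh pack in a heavier vehicle at a hypothetical 480 Wh/mi.
print(estimated_range_miles(135, 480))  # 281.25
```

The second line shows why a bigger battery does not automatically mean more range: a larger, less aerodynamic vehicle can spend its extra kWh on higher consumption per mile.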

Fast Charging Tech and Throughput

The relationship between kW (the charging speed) and kWh (the battery size) is the defining challenge of modern charging infrastructure. When a vehicle “fast charges” at 250 kW, it is receiving energy at a rate that would add 250 kWh if sustained for a full hour, or roughly 4.2 kWh per minute. In practice no car holds that rate for long: because of thermal limits and chemical constraints, the charging software must throttle power as the battery fills up. Understanding the kWh capacity of a device, whether it’s a 100 kWh car battery or a 99 Wh (0.099 kWh) laptop power bank, is essential for determining how long it will last under load and how quickly it can be replenished.
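The effect of throttling can be illustrated with a simple model. The taper curve here is entirely hypothetical: power is held at peak until 50% state of charge (SoC), then falls linearly to 20% of peak at full. Real charging curves differ by vehicle, battery temperature, and charger, and charging losses are ignored.

```python
def charge_minutes(capacity_kwh: float, start_soc: float, end_soc: float,
                   peak_kw: float, taper_start: float = 0.5,
                   floor_fraction: float = 0.2, step: float = 0.01) -> float:
    """Estimate minutes to charge from start_soc to end_soc (fractions 0-1).

    Hypothetical taper model: power stays at peak_kw until the state of charge
    reaches taper_start, then falls linearly to floor_fraction * peak_kw at 100%.
    Charging losses are ignored.
    """
    minutes = 0.0
    soc = start_soc
    while soc < end_soc - 1e-9:
        chunk = min(step, end_soc - soc)  # fraction of the pack added this step
        if soc < taper_start:
            kw = peak_kw
        else:
            progress = (soc - taper_start) / (1.0 - taper_start)
            kw = peak_kw * (1.0 - (1.0 - floor_fraction) * progress)
        energy_kwh = capacity_kwh * chunk
        minutes += energy_kwh / kw * 60.0  # time = energy / power
        soc += chunk
    return minutes

# A 75 kWh pack charged from 10% to 80% on a 250 kW charger.
flat = charge_minutes(75, 0.10, 0.80, 250, taper_start=1.0)  # no taper: 12.6 min
tapered = charge_minutes(75, 0.10, 0.80, 250)                # hypothetical taper
print(f"no taper: {flat:.1f} min, with taper: {tapered:.1f} min")
```

Setting `taper_start=1.0` disables throttling, which recovers the idealized "capacity divided by power" estimate; the gap between the two results is the time cost of protecting the battery.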

Data Centers and the AI Energy Footprint

On a macro scale, the kilowatt-hour is the metric used to measure the impact of the world’s most powerful technologies: Large Language Models (LLMs) and cloud computing. The tech industry’s “hidden” energy consumption occurs in the massive data centers that house thousands of H100 GPUs and specialized AI accelerators.

The Massive kWh Requirements of Large Language Models

Training a model like GPT-4 requires an enormous amount of energy. It is estimated that training a state-of-the-art AI model can consume millions of kWh. This energy powers not only the silicon that performs the matrix multiplications but also the cooling systems that keep the hardware from overheating.
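A back-of-the-envelope estimate multiplies the number of accelerators by their average power draw and the length of the run, then scales the result by the facility's PUE (power usage effectiveness: total facility energy divided by IT equipment energy), which accounts for cooling and other overhead. Every number below is an assumption chosen for illustration, not a figure for GPT-4 or any real training run, and the sketch ignores CPUs, networking, and storage.

```python
def training_energy_kwh(num_gpus: int, gpu_kw: float, hours: float,
                        pue: float = 1.2) -> float:
    """Rough facility energy for a training run, in kWh.

    IT energy = accelerators x average power per accelerator (kW) x hours.
    PUE scales IT energy up to total facility energy (cooling, power delivery).
    """
    it_kwh = num_gpus * gpu_kw * hours
    return it_kwh * pue

# Hypothetical run: 10,000 GPUs averaging 0.7 kW each for 90 days, PUE of 1.2.
total = training_energy_kwh(num_gpus=10_000, gpu_kw=0.7, hours=90 * 24)
print(f"about {total / 1e6:.1f} million kWh")
```

Even with these rough assumptions, the result lands comfortably in the "millions of kWh" range the text describes.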

In this context, the kWh is a measure of “computational cost.” As AI companies look toward the future, the goal is to reduce the “kWh per query.” Every time a user interacts with a chatbot, a small amount of energy is consumed at a data center miles away; by some estimates it is roughly what an LED bulb uses in a few minutes.
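The comparison, and why small per-query figures still matter at scale, can be checked with two conversions. The 0.5 Wh per query and the 10 W bulb are hypothetical inputs; published per-query estimates vary widely depending on the model and the hardware.

```python
def led_minutes(query_wh: float, bulb_watts: float = 10.0) -> float:
    """Minutes a bulb of bulb_watts could run on the energy of one query."""
    return query_wh / bulb_watts * 60.0

def fleet_kwh(query_wh: float, queries: int) -> float:
    """Total energy for many queries, in kWh."""
    return query_wh * queries / 1000.0

per_query_wh = 0.5  # hypothetical per-query energy
print(f"{led_minutes(per_query_wh):.1f} minutes of a 10 W LED")  # 3.0 minutes
print(f"{fleet_kwh(per_query_wh, 1_000_000):,.0f} kWh per million queries")  # 500
```

A few minutes of LED light per query becomes hundreds of kWh per million queries, which is why providers focus on driving the per-query figure down.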

Sustainable Tech and Green Computing

Because of these massive requirements, tech giants like Google, Microsoft, and Amazon have become some of the largest corporate purchasers of renewable energy. Their stated goal is to match the kWh consumed by their servers with kWh generated from wind or solar sources. This has led to the development of “carbon-intelligent” software platforms that shift non-urgent computational tasks (like data backups or model training) to times of day when renewable energy is most abundant on the grid.
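The core scheduling idea can be sketched as a sliding-window search: given an hourly forecast of grid carbon intensity, pick the start hour that minimizes the average intensity over the job's duration. This is a generic illustration, not any company's actual platform. The forecast values are hypothetical, and the sketch assumes the job draws constant power, so its kWh are fixed and emissions scale with the intensity of the hours it runs in.

```python
def best_start_hour(intensity: list[float], job_hours: int) -> int:
    """Return the start hour whose window has the lowest total carbon intensity.

    intensity[i] is a forecast of grid carbon intensity (e.g. gCO2/kWh) for hour i.
    """
    if not 0 < job_hours <= len(intensity):
        raise ValueError("job must fit inside the forecast window")
    window = sum(intensity[:job_hours])
    best_sum, best_start = window, 0
    for start in range(1, len(intensity) - job_hours + 1):
        # Slide the window one hour: add the new hour, drop the oldest one.
        window += intensity[start + job_hours - 1] - intensity[start - 1]
        if window < best_sum:
            best_sum, best_start = window, start
    return best_start

# Hypothetical 12-hour forecast with a midday dip as solar output peaks.
forecast = [450, 430, 400, 380, 300, 220, 180, 200, 260, 350, 420, 460]
print(best_start_hour(forecast, job_hours=3))  # 5
```

Because the job's energy use is the same whenever it runs, moving it to the cleanest window reduces emissions without changing its kWh at all.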

Future Trends: Optimizing Every Watt

Looking ahead, the focus of the technology industry is shifting from pure power to extreme efficiency. The kilowatt-hour will remain the benchmark, but the way we interact with it will evolve through new materials and smarter algorithms.

Solid-State Batteries and Efficiency Breakthroughs

Many in the industry expect a transition from traditional lithium-ion batteries to solid-state batteries. This technology promises to pack more kWh into a smaller, lighter package. For the tech consumer, that could mean smartphones that last a week on a single charge and EVs that can travel 600 miles. The key tech metric to watch in the coming decade is energy density, or more precisely specific energy: the energy stored per unit of mass, usually quoted in watt-hours per kilogram (Wh/kg).
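Specific energy translates directly into weight: the cell mass needed for a given capacity is the capacity divided by Wh/kg. The two densities below are hypothetical round numbers used for comparison, and the sketch counts cells only; a real pack also carries housing, wiring, and cooling.

```python
def pack_mass_kg(capacity_kwh: float, wh_per_kg: float) -> float:
    """Cell mass needed to store capacity_kwh at a given specific energy."""
    return capacity_kwh * 1000.0 / wh_per_kg

# Hypothetical cell-level specific energies, for comparison only.
for label, density in (("cell A, 250 Wh/kg", 250), ("cell B, 400 Wh/kg", 400)):
    print(f"{label}: {pack_mass_kg(75, density):.0f} kg of cells for 75 kWh")
```

Under these assumptions, the same 75 kWh drops from 300 kg to 187.5 kg of cells, weight savings that can be spent on more capacity or a lighter vehicle.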

Gallium Nitride (GaN) and the Shrinking Power Brick

Another technological leap is the use of Gallium Nitride (GaN) in power electronics. Traditional silicon-based chargers lose a noticeable share of the energy they draw as heat. GaN chargers are more efficient, meaning more of the electricity drawn from the wall actually reaches your device. The energy your device needs stays the same, but less is wasted along the way, so fewer kWh are drawn from the wall and chargers can be smaller, faster, and cooler-running.
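Charger efficiency determines how much wall energy it takes to deliver a fixed amount to the device. The efficiencies below (85% and 93%) are hypothetical values for illustration, not measurements of specific products, and losses inside the device's own battery circuitry are ignored.

```python
def wall_energy_wh(delivered_wh: float, efficiency: float) -> float:
    """Energy drawn from the outlet to deliver delivered_wh to a device."""
    if not 0 < efficiency <= 1:
        raise ValueError("efficiency must be in (0, 1]")
    return delivered_wh / efficiency

battery_wh = 99.0  # the 99 Wh power bank mentioned earlier
for name, eff in (("older charger", 0.85), ("GaN charger", 0.93)):
    drawn = wall_energy_wh(battery_wh, eff)
    print(f"{name}: {drawn:.1f} Wh from the wall, "
          f"{drawn - battery_wh:.1f} Wh lost as heat")
```

The delivered 99 Wh is identical in both cases; only the waste, and therefore the total drawn from the wall, changes.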

In conclusion, the kilowatt-hour is far more than just a number on a utility bill. It is the fundamental unit of the digital age, a bridge between the physical laws of energy and the virtual worlds we build. Whether you are gauging the range of your next vehicle, optimizing your smart home’s energy footprint, or considering the environmental impact of artificial intelligence, the kWh is the essential metric that defines the limits and the potential of modern technology. Understanding it is the first step toward mastering the tools that power our lives.

aViewFromTheCave is a participant in the Amazon Services LLC Associates Program, an affiliate advertising program designed to provide a means for sites to earn advertising fees by advertising and linking to Amazon.com. Amazon, the Amazon logo, AmazonSupply, and the AmazonSupply logo are trademarks of Amazon.com, Inc. or its affiliates. As an Amazon Associate we earn affiliate commissions from qualifying purchases.