In the rapidly evolving landscape of artificial intelligence, specifically within the realm of Large Language Models (LLMs) like GPT-4, Claude, and Llama, the bridge between human intent and machine execution is “prompt engineering.” Among the various techniques used to refine this interaction, few-shot prompting stands out as one of the most powerful and technically significant methods. It represents a shift from simply asking a machine a question to providing it with a mini-framework for reasoning and pattern recognition.

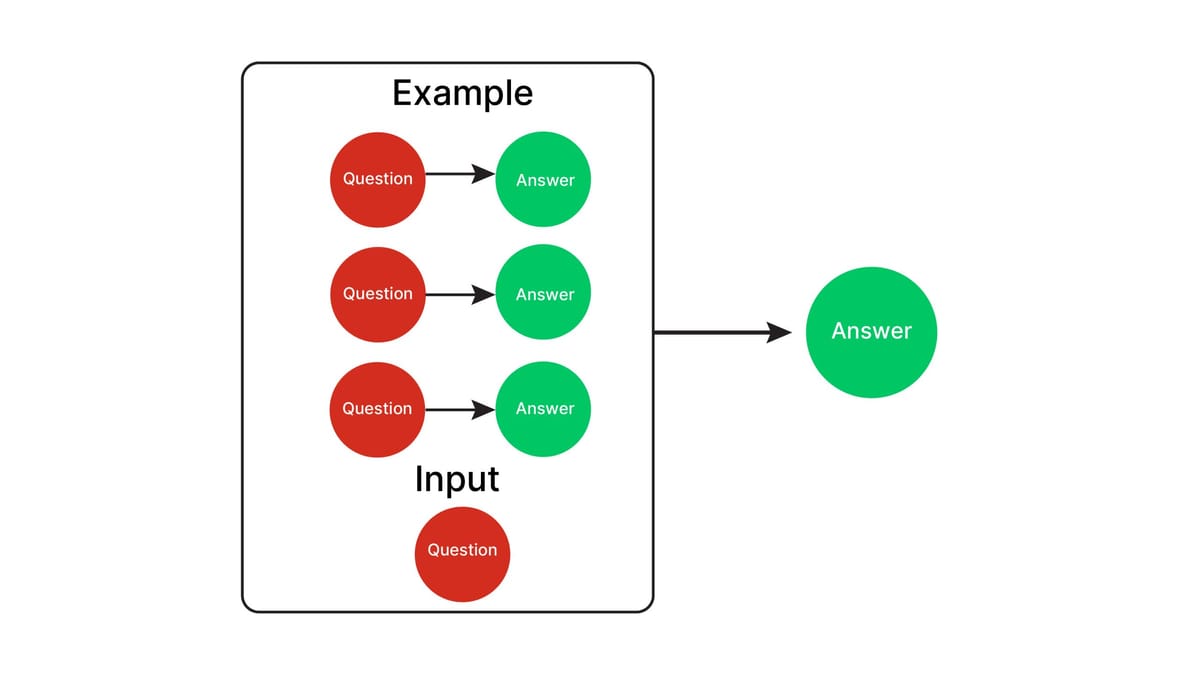

Few-shot prompting is a technique where a user provides a small number of examples (called “shots”) within the prompt to guide the model toward a specific output format or logic. This method leverages the model’s inherent ability for “in-context learning”—the capacity to understand and replicate patterns on the fly without changing its underlying neural weights.

The Mechanics of Few-Shot Prompting: How AI Learns in Real-Time

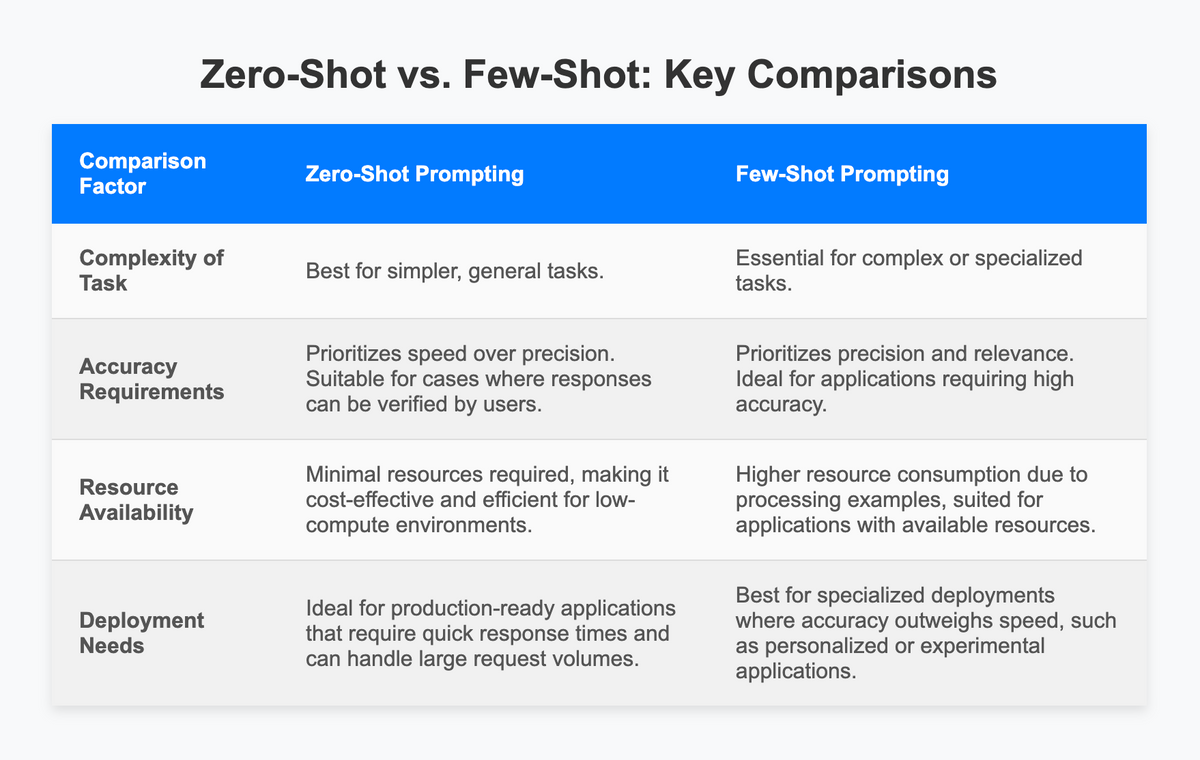

To understand few-shot prompting, one must first understand the spectrum of prompt complexity. At the most basic level is “zero-shot” prompting, where a model is given a task with no prior examples. While modern LLMs are very capable in zero-shot scenarios, they often struggle with highly specialized formats or nuanced logic. Few-shot prompting addresses this by showing the model worked examples, which connect the user’s intent to the model’s pre-trained knowledge.

Zero-Shot vs. Few-Shot: The Performance Gap

In a zero-shot scenario, you might ask an AI to “Translate English to French: ‘The cat is on the table.’” The model draws on what it learned in pre-training to provide the answer. However, if you require a very specific output, such as a JSON object containing the translation, the word count, and the sentiment, a zero-shot prompt might fail to adhere to the formatting. Few-shot prompting lets you provide two to five examples of this specific JSON structure, effectively “programming” the model’s behavior for that request.
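The JSON case described above can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed format: the field names (`translation`, `word_count`, `sentiment`), the example sentences, and the `build_few_shot_prompt` helper are all assumptions made for this sketch, and `word_count` is taken here to mean the number of words in the English input.

```python
import json

# Hypothetical worked examples; the field names are illustrative assumptions.
EXAMPLES = [
    ("Good morning.",
     {"translation": "Bonjour.", "word_count": 2, "sentiment": "neutral"}),
    ("I love this book.",
     {"translation": "J'adore ce livre.", "word_count": 4, "sentiment": "positive"}),
    ("The train is late again.",
     {"translation": "Le train est encore en retard.", "word_count": 5, "sentiment": "negative"}),
]


def build_few_shot_prompt(examples, query):
    """Return a prompt with one Input/Output pair per example, then the new input."""
    lines = ["Translate English to French. Reply with a JSON object only.", ""]
    for text, output in examples:
        lines.append(f"Input: {text}")
        # ensure_ascii=False keeps accented characters readable in the prompt.
        lines.append(f"Output: {json.dumps(output, ensure_ascii=False)}")
        lines.append("")
    # End on an open "Output:" so the model completes the pattern.
    lines.append(f"Input: {query}")
    lines.append("Output:")
    return "\n".join(lines)


prompt = build_few_shot_prompt(EXAMPLES, "The cat is on the table.")
print(prompt)
```

The resulting string can be sent to any chat or completion endpoint. Because every example ends in a JSON object with the same keys, the model has a consistent pattern to continue.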

The Role of In-Context Learning (ICL)

Few-shot prompting is powered by In-Context Learning. Unlike fine-tuning, which updates a model’s weights by training it on a new dataset, ICL happens entirely at inference time. The model processes the examples provided in the prompt and uses its attention mechanism to weigh the relationship between the inputs and outputs provided. This allows for immense flexibility, as the model can pivot from sentiment analysis to code generation from one request to the next, based purely on the examples it sees in its context window.

Tokenization and Context Windows

From a technical perspective, every example provided in a few-shot prompt consumes “tokens.” Since every LLM has a finite context window (the maximum number of tokens it can process at once), there is a strategic balance to strike. Developers must choose examples that are descriptive enough to guide the model but concise enough to leave room for the actual task at hand.
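One way to reason about this trade-off is to budget tokens before sending the prompt. The sketch below is hedged heavily: the four-characters-per-token figure is only a rough rule of thumb for English text, real tokenizers differ by model and language, and `estimate_tokens` and `fit_examples` are hypothetical helpers rather than part of any library.

```python
def estimate_tokens(text, chars_per_token=4):
    """Very rough token estimate; real tokenizers vary by model and language."""
    return max(1, len(text) // chars_per_token)


def fit_examples(examples, task_text, context_window, reserve_for_output):
    """Keep examples, in order, while the estimated prompt still fits the budget."""
    budget = context_window - reserve_for_output - estimate_tokens(task_text)
    kept = []
    for example in examples:
        cost = estimate_tokens(example)
        if cost > budget:
            break  # Stop at the first example that no longer fits.
        kept.append(example)
        budget -= cost
    return kept


shots = [
    "Input: Login button does nothing.\nOutput: ui",
    "Input: API call times out after 30 s.\nOutput: backend",
    "Input: Cannot connect to the database.\nOutput: database",
]
task = "Input: Page layout breaks on mobile.\nOutput:"
kept = fit_examples(shots, task, context_window=60, reserve_for_output=20)
print(f"{len(kept)} of {len(shots)} examples fit the estimated budget")
```

In practice the estimate would be replaced by the tokenizer that matches the target model, but the budgeting logic stays the same: reserve room for the task and the expected output first, then spend what is left on examples.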

Why Few-Shot Prompting is a Game-Changer for AI Performance

The transition from zero-shot to few-shot prompting is not merely about better formatting; it is about increasing the accuracy and reliability of AI tools in professional environments. In a production tech stack, reliability is one of the metrics that matters most, and few-shot prompting is one of the most accessible tools for improving it.

Precision in Complex Task Execution

For complex tasks—such as classifying legal documents or extracting medical data—a model needs to understand the boundaries of what is acceptable. By providing “shots” that demonstrate edge cases (e.g., how to handle a document that contains conflicting information), the developer can drastically reduce errors. The examples serve as a “ground truth” that anchors the model’s probabilistic predictions.

Overcoming Hallucinations and Improving Factuality

One of the greatest challenges in AI development is the “hallucination,” where the model generates plausible-sounding but false information. Few-shot prompting can mitigate this by establishing a rigorous pattern of output. If the examples provided always include a citation or a “null” response when information is missing, the model is statistically more likely to follow that pattern rather than inventing data to fill the gap.

Stylistic Control and Brand Voice

In the context of software-as-a-service (SaaS) products that use AI, maintaining a consistent “voice” is critical. Few-shot prompting allows developers to show the model exactly how to speak. Whether the requirement is technical and dry or conversational and empathetic, providing three examples of the desired tone is significantly more effective than providing a long list of abstract adjectives in a system prompt.

Best Practices for Crafting Effective Few-Shot Prompts

Effective few-shot prompting is an art backed by data science. Simply throwing examples at a model is not enough; the quality, order, and relevance of those examples determine the success of the output.

Selecting High-Quality, Representative Examples

The most important rule of few-shot prompting is that your examples must be diverse. If you are building a tool to categorize software bugs, your examples should not all be about “login errors.” You should include a UI bug, a backend timeout, and a database connection error. This diversity keeps the model from latching onto the surface details of a single kind of example and forming an overly narrow interpretation of the task.

The “Goldilocks” Number of Shots

While “few-shot” generally refers to anywhere from 1 to 10 examples, research suggests a point of diminishing returns. For most tasks, 3 to 5 examples provide the necessary context. Beyond that, the model may become overly focused on the specific content of the examples rather than the underlying logic, not to mention the increased latency and cost associated with higher token usage.

Maintaining Label Balance

In classification tasks, label balance is crucial. If you provide five examples of “Positive” sentiment and only one “Negative” example, the model’s predictions are likely to skew toward “Positive.” To avoid this, ensure that your examples reflect a balanced distribution of the possible outcomes you expect the model to generate.
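A quick check before sending the prompt can catch a skewed example set. The following is a minimal sketch; the `is_balanced` helper, the `tolerance` parameter, and the sample data are assumptions introduced for illustration.

```python
from collections import Counter


def is_balanced(examples, labels, tolerance=1):
    """True if no expected label appears more than `tolerance` times above the rarest.

    `examples` is a list of (text, label) pairs; `labels` lists every label the
    model is expected to produce, so a label with zero examples is also caught.
    """
    counts = Counter(label for _, label in examples)
    per_label = [counts[label] for label in labels]
    return max(per_label) - min(per_label) <= tolerance


skewed = [
    ("Great product!", "Positive"),
    ("Works perfectly.", "Positive"),
    ("Love it.", "Positive"),
    ("Fast shipping.", "Positive"),
    ("Highly recommend.", "Positive"),
    ("Broke after a day.", "Negative"),
]
balanced = skewed[:2] + [skewed[5], ("Terrible support.", "Negative")]

print(is_balanced(skewed, ["Positive", "Negative"]))
print(is_balanced(balanced, ["Positive", "Negative"]))
```

The first call reports the five-to-one set as unbalanced, and the second accepts the two-and-two set.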

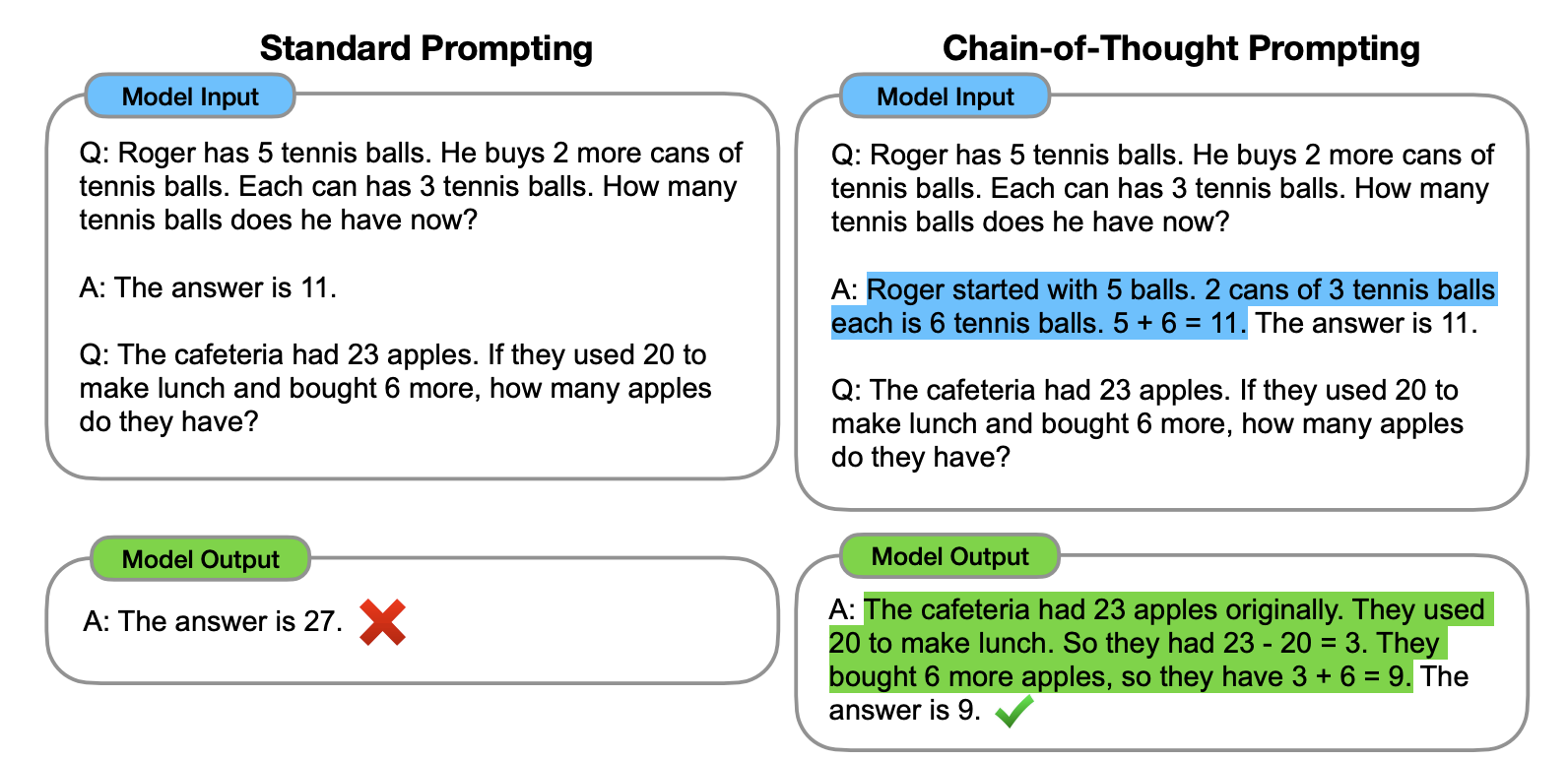

Advanced Techniques: Chain-of-Thought and Reasoning

As we push the boundaries of what AI tools can do, few-shot prompting has evolved into even more sophisticated techniques, most notably “Few-Shot Chain-of-Thought” (CoT) prompting.

Integrating Reasoning Steps

In standard few-shot prompting, you provide an Input and an Output. In Chain-of-Thought few-shot prompting, you provide an Input, a Reasoning Path, and then the Output. For example, if the task involves a complex math problem, the example would show the step-by-step breakdown of the logic before giving the final answer. This encourages the model to write out intermediate steps before committing to an answer, which tends to improve performance on logical and mathematical tasks.
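The Input, Reasoning Path, and Output structure can be sketched as a small prompt builder. The `Question:` / `Reasoning:` / `Answer:` labels, the worked examples, and the `build_cot_prompt` helper are assumptions chosen for this illustration; any consistent labeling would serve.

```python
# Hypothetical worked examples: (question, reasoning, answer).
COT_EXAMPLES = [
    (
        "A store sells pens at 3 for $2. How much do 12 pens cost?",
        "12 pens is 12 / 3 = 4 groups of three. Each group costs $2, so 4 * 2 = 8.",
        "$8",
    ),
    (
        "A train travels 60 km in 1.5 hours. What is its average speed?",
        "Average speed is distance divided by time: 60 / 1.5 = 40.",
        "40 km/h",
    ),
]


def build_cot_prompt(examples, question):
    """Show the reasoning before each answer, then leave the reasoning open."""
    blocks = []
    for q, reasoning, answer in examples:
        blocks.append(f"Question: {q}\nReasoning: {reasoning}\nAnswer: {answer}")
    # Ending on "Reasoning:" nudges the model to explain before it answers.
    blocks.append(f"Question: {question}\nReasoning:")
    return "\n\n".join(blocks)


cot_prompt = build_cot_prompt(
    COT_EXAMPLES, "Eggs cost $3 per dozen. How much do 30 eggs cost?"
)
print(cot_prompt)
```

The only difference from a standard few-shot prompt is the extra reasoning line in each example and the open `Reasoning:` cue at the end.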

Self-Consistency and Verification

Few-shot prompts can also be combined with self-consistency checks to make AI agents more robust. This involves sampling the model several times with the same few-shot examples, typically with some randomness in decoding so the responses can differ, and then taking a “majority vote” over the final answers or using a secondary “verifier” prompt to check the output. This is a common practice in building high-stakes tech tools where the cost of error is high.
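The majority-vote step can be sketched as follows. The model call is deliberately left abstract: `sample_fn` is a hypothetical stand-in for whatever function sends the prompt and returns a final answer, and the canned answers below exist only so the example runs without a model.

```python
from collections import Counter


def majority_vote(answers):
    """Return the most common answer and the share of samples that agree with it."""
    counts = Counter(answers)
    answer, votes = counts.most_common(1)[0]
    return answer, votes / len(answers)


def self_consistent_answer(sample_fn, prompt, n_samples=5):
    """Call sample_fn(prompt) n_samples times and vote on the returned answers."""
    answers = [sample_fn(prompt) for _ in range(n_samples)]
    return majority_vote(answers)


# Stand-in for a model sampled with some randomness: four runs agree, one does not.
canned = iter(["8", "8", "6", "8", "8"])
answer, agreement = self_consistent_answer(lambda prompt: next(canned), "few-shot prompt here")
print(answer, agreement)
```

The agreement share is a cheap signal in its own right: a low value suggests the prompt or the examples leave the task ambiguous, and the result may be worth routing to a verifier prompt or a human.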

The Future of Prompt Engineering in Large Language Models

As Large Language Models become more sophisticated, some argue that prompt engineering—and few-shot prompting specifically—might become obsolete. However, the current trajectory of AI research suggests the opposite.

From Manual Prompting to Automated Optimization

We are moving toward a future where “Auto-Prompting” tools (like DSPy) use few-shot techniques to optimize prompts automatically. These systems take a small set of human-defined examples and then iterate, testing many variations in the “shots” to find the set that scores highest on a chosen metric. In this scenario, few-shot prompting isn’t disappearing; it is becoming the foundational data layer for automated AI optimization.
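The core loop of such a search can be sketched in plain Python. This is not DSPy’s API or algorithm; it is a brute-force illustration of the idea, and every name in it (`toy_predict`, `accuracy`, `best_shot_set`, the sample data) is an assumption. The “model” is a toy that copies the label of the shot sharing the most words with the input, so the example runs on its own.

```python
from itertools import combinations


def toy_predict(shots, text):
    """Stand-in for a model call: copy the label of the shot sharing the most words."""
    words = set(text.lower().split())
    best = max(shots, key=lambda shot: len(words & set(shot[0].lower().split())))
    return best[1]


def accuracy(shots, dev_set, predict):
    """Fraction of dev_set items that predict() labels correctly using these shots."""
    correct = sum(predict(shots, text) == label for text, label in dev_set)
    return correct / len(dev_set)


def best_shot_set(candidates, dev_set, k, predict):
    """Score every k-sized subset of candidates and return the highest-scoring one."""
    return max(
        combinations(candidates, k),
        key=lambda shots: accuracy(shots, dev_set, predict),
    )


candidates = [
    ("login page crashes", "bug"),
    ("please add dark mode", "feature"),
    ("login button crashes app", "bug"),
]
dev_set = [
    ("app crashes on startup", "bug"),
    ("add export to csv", "feature"),
]

best = best_shot_set(candidates, dev_set, k=2, predict=toy_predict)
print(best, accuracy(best, dev_set, toy_predict))
```

Exhaustive search only works for a handful of candidates, since the number of subsets grows quickly. Real optimizers use smarter search strategies, but the ingredients are the same: candidate examples, a held-out set, and a metric.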

Impact on the AI Development Lifecycle

The ability to use few-shot prompting changes how software is built. Traditionally, changing a model’s behavior could require a weeks-long cycle of data collection and fine-tuning. Now, a software engineer can often change the behavior of an AI feature simply by updating the examples in a prompt. This agility is accelerating the pace of tech innovation, allowing for rapid prototyping and deployment of intelligent features that previously demanded far more time and data.

In conclusion, few-shot prompting is more than just a trick for getting better answers from a chatbot. It is a fundamental technique in the modern AI stack that leverages the power of in-context learning to provide precision, reliability, and logical depth. For tech professionals, developers, and AI enthusiasts, mastering the nuances of how many shots to use, which examples to select, and how to structure reasoning paths is the key to unlocking the true potential of generative artificial intelligence. As models grow in size and context windows expand, the “shots” we provide will become the primary way we steer the immense power of these digital brains.
