In the world of technology, efficiency is the currency of success. Whether you are browsing a website, running a complex simulation, or simply typing in a word processor, there is a silent, high-speed orchestrator working behind the scenes to ensure that instructions are executed in the correct order and that resources are allocated without conflict. This orchestrator is known as a “dispatcher.”

While the term “dispatcher” might evoke images of emergency services or logistics hubs, its role in computer science and software engineering is equally critical. At its core, a dispatcher is a specialized mechanism—either in hardware or software—responsible for routing instructions, managing process execution, and ensuring that various components of a system communicate effectively. This article explores the technical nuances of dispatchers, from the deepest layers of operating systems to the modern architectures of web development.

The Core Concept: The Operating System Dispatcher

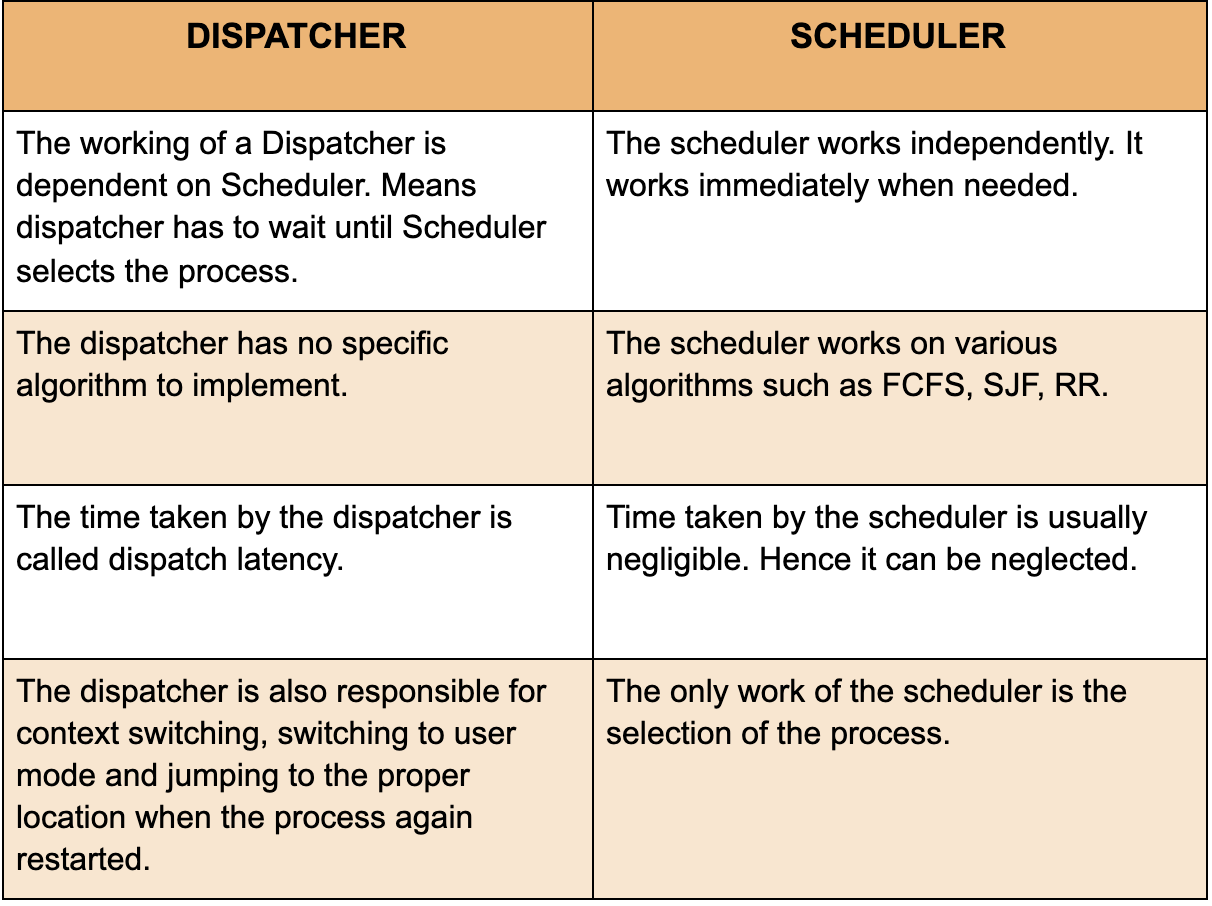

In the context of an Operating System (OS), the dispatcher is a fundamental component of the CPU scheduling process. To understand the dispatcher, one must first distinguish it from the “scheduler.” While the scheduler decides which process should run next, the dispatcher is the module that actually gives control of the CPU to the process selected by the short-term scheduler.

How the Scheduler and Dispatcher Work Together

The relationship between a scheduler and a dispatcher is a two-step dance. The scheduler acts as the brain, evaluating the priority of tasks in the “ready queue.” Once the brain decides which task is most urgent, the dispatcher acts as the muscle and performs the actual handover: it switches context, switches the CPU to user mode, and jumps to the right location in the selected program so that it can resume. These low-level operations happen in microseconds, yet they are the foundation of modern multitasking.
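The division of labor described above can be sketched in a few lines of Python. This is a toy model, not how any real kernel is written: the `Process`, `Scheduler`, and `Dispatcher` classes are hypothetical names, and the "lower number means more urgent" priority convention is an assumption made for the example.

```python
import heapq
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass(order=True)
class Process:
    priority: int                      # assumed convention: lower = more urgent
    name: str = field(compare=False)   # the name plays no part in ordering


class Scheduler:
    """Decides which process should run next (the 'brain')."""

    def __init__(self) -> None:
        self._ready_queue: List[Process] = []

    def add(self, process: Process) -> None:
        heapq.heappush(self._ready_queue, process)

    def pick_next(self) -> Optional[Process]:
        return heapq.heappop(self._ready_queue) if self._ready_queue else None


class Dispatcher:
    """Gives the CPU to whatever the scheduler selected (the 'muscle')."""

    def __init__(self) -> None:
        self.running: Optional[Process] = None

    def dispatch(self, process: Process) -> None:
        # A real dispatcher would switch context and jump into the
        # process here; this toy version only records the handover.
        self.running = process


scheduler = Scheduler()
dispatcher = Dispatcher()
scheduler.add(Process(priority=2, name="editor"))
scheduler.add(Process(priority=1, name="audio"))

chosen = scheduler.pick_next()
if chosen is not None:
    dispatcher.dispatch(chosen)
```

Keeping the two classes separate mirrors the text: the policy (which process) can change without touching the mechanism (how control is handed over).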

The Context Switch: The Heart of Multitasking

The most significant task of an OS dispatcher is the “context switch.” When the CPU stops executing one process to start another, the dispatcher must save the current state (the “context”) of the running process so that it can be resumed later. This context includes CPU registers, the program counter, and stack pointers.

After saving the state of the old process, the dispatcher loads the saved state of the new process. This seamless transition is what allows a computer to feel like it is doing a hundred things at once, even though a single CPU core runs only one thread of execution at any given instant (setting aside hardware multithreading).
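As a rough illustration of the save-then-load sequence, the sketch below models a context as a small record holding a program counter, a stack pointer, and a register map. The `Cpu`, `Context`, and `Process` types and the `context_switch` function are hypothetical; a real context switch is done in architecture-specific kernel code, not in Python.

```python
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class Context:
    program_counter: int = 0
    stack_pointer: int = 0
    registers: Dict[str, int] = field(default_factory=dict)


@dataclass
class Process:
    name: str
    saved: Context = field(default_factory=Context)


class Cpu:
    """Toy CPU holding the live state of whichever process is running."""

    def __init__(self) -> None:
        self.program_counter = 0
        self.stack_pointer = 0
        self.registers: Dict[str, int] = {}


def context_switch(cpu: Cpu, old: Process, new: Process) -> None:
    # 1. Save the live CPU state into the outgoing process's record.
    old.saved = Context(cpu.program_counter, cpu.stack_pointer, dict(cpu.registers))
    # 2. Load the incoming process's saved state onto the CPU.
    cpu.program_counter = new.saved.program_counter
    cpu.stack_pointer = new.saved.stack_pointer
    cpu.registers = dict(new.saved.registers)


cpu = Cpu()
a = Process("A")
b = Process("B", Context(program_counter=400, stack_pointer=9000, registers={"r0": 7}))

cpu.program_counter, cpu.registers["r0"] = 120, 42  # pretend A has been running
context_switch(cpu, a, b)  # A is paused, B takes over
context_switch(cpu, b, a)  # B is paused, A resumes exactly where it stopped
```

After the second switch the CPU holds A's original values again, which is the whole point: the paused process cannot tell it was ever interrupted.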

Measuring Dispatcher Performance: Dispatch Latency

The time it takes for the dispatcher to stop one process and start another is known as “dispatch latency.” Minimizing this latency is a primary goal for OS architects, because time spent switching is time in which no process does useful work. If the dispatch latency is too high, the system feels sluggish, and time-sensitive applications, such as video streaming or industrial control systems, may stutter or miss their deadlines. Kernel designers therefore work continually to reduce this overhead and keep task switching as close to instantaneous as possible.
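To get a feel for why this matters, a back-of-the-envelope calculation helps. The helper below assumes a simplified model in which every time slice is followed by exactly one dispatch; the function name and the numbers are illustrative, not measurements from any real system.

```python
def switching_overhead(time_slice_us: float, dispatch_latency_us: float) -> float:
    """Fraction of CPU time spent dispatching rather than running processes.

    Simplified model: each time slice is followed by one dispatch.
    """
    return dispatch_latency_us / (time_slice_us + dispatch_latency_us)


# Hypothetical figures: a 10 ms slice with a 10 microsecond dispatch
# costs roughly 0.1% of CPU time.
light = switching_overhead(time_slice_us=10_000, dispatch_latency_us=10)

# If the dispatch took 1 ms against a 9 ms slice, 10% of the CPU
# would be lost to switching alone.
heavy = switching_overhead(time_slice_us=9_000, dispatch_latency_us=1_000)
```

The same model shows the trade-off with slice length: shorter slices make the system feel more responsive but raise the share of time lost to dispatching.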

Dispatchers in Modern Web Development and Frameworks

Moving away from the hardware and into the realm of software engineering, the term “dispatcher” takes on a more structural meaning. In modern application design, particularly within complex front-end and back-end frameworks, a dispatcher serves as a central hub for managing data flow and application state.

The Flux and Redux Architecture

One of the most famous uses of the dispatcher pattern in recent years is within the Flux architecture, popularized by Facebook (Meta). In this model, the Dispatcher is a central registry through which all data must flow.

Unlike traditional Model-View-Controller (MVC) patterns where data can sometimes move in multiple directions, Flux enforces a strict unidirectional data flow. When a user interacts with a “View” (like clicking a button), an “Action” is created. This action is sent to the Dispatcher, which then broadcasts the action to every registered “Store.” Because there is only one Dispatcher, every state change passes through a single, observable point, which makes debugging and state management significantly easier for developers. Redux takes the idea a step further: it has no standalone dispatcher object, actions are passed to the store’s dispatch function, and that one store is the application’s “single source of truth.”
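The flow from action to dispatcher to stores can be sketched as follows. This is a minimal Python analogue written for illustration, loosely modeled on the register-and-dispatch idea; the class names, the `INCREMENT` action type, and the `by` field are assumptions, and it is not the actual Flux library.

```python
from typing import Any, Callable, Dict, List

Action = Dict[str, Any]
Callback = Callable[[Action], None]


class Dispatcher:
    """Central hub: every action goes to every registered callback."""

    def __init__(self) -> None:
        self._callbacks: List[Callback] = []

    def register(self, callback: Callback) -> None:
        self._callbacks.append(callback)

    def dispatch(self, action: Action) -> None:
        for callback in self._callbacks:
            callback(action)


class CounterStore:
    """Store that reacts only to the action types it cares about."""

    def __init__(self, dispatcher: Dispatcher) -> None:
        self.count = 0
        dispatcher.register(self._on_action)

    def _on_action(self, action: Action) -> None:
        if action["type"] == "INCREMENT":
            self.count += action.get("by", 1)


class HistoryStore:
    """Store that records every action type it sees, useful for debugging."""

    def __init__(self, dispatcher: Dispatcher) -> None:
        self.seen: List[str] = []
        dispatcher.register(lambda action: self.seen.append(action["type"]))


dispatcher = Dispatcher()
counter = CounterStore(dispatcher)
history = HistoryStore(dispatcher)

# A "view" would create these actions in response to user input.
dispatcher.dispatch({"type": "INCREMENT", "by": 2})
dispatcher.dispatch({"type": "RENAME", "name": "demo"})
```

Note that the dispatcher itself holds no application state; the stores do. The dispatcher only guarantees that every store sees every action, in the same order.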

Event Dispatchers in JavaScript and PHP

Beyond state management, dispatchers are frequently used in “Event-Driven Architecture.” In Node.js, the built-in EventEmitter class plays this role; in PHP, Symfony ships an EventDispatcher component. In both cases, the dispatcher allows different components of an application to communicate without being tightly coupled.

For instance, when a new user registers on a platform, a “UserRegistered” event is dispatched. The dispatcher doesn’t need to know what happens next; it simply notifies all registered “listeners.” One listener might send a welcome email, another might update a database, and a third might trigger a marketing analytics tool. This modularity is a hallmark of clean, scalable software design.
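The registration example above might look like this in miniature. The sketch is a generic Python illustration of the pattern, not the Symfony or Node.js API; `EventDispatcher`, `add_listener`, and the event payload shape are hypothetical names chosen for the example.

```python
from collections import defaultdict
from typing import Any, Callable, Dict, List

Event = Dict[str, Any]
Listener = Callable[[Event], None]


class EventDispatcher:
    def __init__(self) -> None:
        self._listeners = defaultdict(list)  # event name -> list of listeners

    def add_listener(self, event_name: str, listener: Listener) -> None:
        self._listeners[event_name].append(listener)

    def dispatch(self, event_name: str, event: Event) -> int:
        """Notify every listener for this event; return how many were called."""
        listeners = list(self._listeners.get(event_name, []))
        for listener in listeners:
            listener(event)
        return len(listeners)


side_effects: List[str] = []
dispatcher = EventDispatcher()

# Three independent listeners; none of them knows about the others.
dispatcher.add_listener("UserRegistered", lambda e: side_effects.append(f"email:{e['email']}"))
dispatcher.add_listener("UserRegistered", lambda e: side_effects.append(f"db:{e['email']}"))
dispatcher.add_listener("UserRegistered", lambda e: side_effects.append(f"analytics:{e['email']}"))

notified = dispatcher.dispatch("UserRegistered", {"email": "ada@example.com"})
```

Adding a fourth reaction to a registration means adding one more listener; the code that dispatches the event does not change.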

Managing State and Action Propagation

The dispatcher ensures that actions are processed in a predictable order. In complex web apps, several events might fire in quick succession, or one action might trigger another while the first is still being handled. A well-designed dispatcher manages these “collisions” by queuing actions or ensuring that one update finishes before the next begins. This prevents the “race conditions” that often lead to UI bugs where the screen doesn’t match the actual data in the background.
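One way to get this "finish one update before starting the next" behavior is to queue any action that arrives while another is still being processed. The sketch below is one possible design under that assumption; `QueuedDispatcher` and the action strings are hypothetical, and other dispatchers handle nested dispatches differently (for example by rejecting them outright).

```python
from collections import deque
from typing import Callable, Deque, List

Handler = Callable[[str], None]


class QueuedDispatcher:
    def __init__(self) -> None:
        self._handlers: List[Handler] = []
        self._queue: Deque[str] = deque()
        self._dispatching = False

    def register(self, handler: Handler) -> None:
        self._handlers.append(handler)

    def dispatch(self, action: str) -> None:
        self._queue.append(action)
        if self._dispatching:
            # Another action is mid-flight; the loop below will reach this one.
            return
        self._dispatching = True
        try:
            while self._queue:
                current = self._queue.popleft()
                for handler in self._handlers:
                    handler(current)
        finally:
            self._dispatching = False


order: List[str] = []
dispatcher = QueuedDispatcher()


def chain(action: str) -> None:
    # Handling "save" triggers a follow-up action while "save" is in progress.
    if action == "save":
        dispatcher.dispatch("notify")


dispatcher.register(chain)
dispatcher.register(order.append)
dispatcher.dispatch("save")
```

Without the queue, the nested call would run to completion first and the second handler would see "notify" before "save". With it, every handler finishes "save" before any handler sees "notify".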

Network Dispatching and Load Balancing

In the world of cloud computing and networking, the dispatcher takes the form of a load balancer or a traffic manager. Here, the “tasks” are not internal CPU processes but incoming requests from millions of users across the globe.

Distributing Traffic in High-Availability Systems

A network dispatcher sits at the edge of a server cluster. Its job is to receive an incoming HTTP request and “dispatch” it to the most appropriate server. If one server is overloaded, the dispatcher routes the request to a server with more available capacity. This supports high availability: if a single server fails its health checks, the dispatcher stops sending traffic to it, and ideally the end-user never notices a disruption.
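The routing rule described here (skip failed servers, prefer the one with the most spare capacity) can be sketched as below. The `Server` fields and the `dispatch` function are hypothetical, and real load balancers determine health through periodic probes rather than a boolean set by hand.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Server:
    name: str
    capacity: int          # maximum concurrent requests (assumed metric)
    active: int = 0        # requests currently in flight
    healthy: bool = True   # would be set by health checks in practice

    @property
    def free_slots(self) -> int:
        return self.capacity - self.active


def dispatch(servers: List[Server]) -> Server:
    """Send one request to the healthy server with the most spare capacity."""
    candidates = [s for s in servers if s.healthy and s.free_slots > 0]
    if not candidates:
        raise RuntimeError("no healthy server with spare capacity")
    target = max(candidates, key=lambda s: s.free_slots)
    target.active += 1
    return target


cluster = [
    Server("a", capacity=2, active=2),  # full, so it is skipped
    Server("b", capacity=4, active=1),
    Server("c", capacity=4),
]

first = dispatch(cluster)    # "c" has the most free slots
cluster[2].healthy = False   # simulate "c" failing a health check
second = dispatch(cluster)   # traffic moves to "b" without client involvement
```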

Algorithmic Dispatching: Round Robin vs. Least Connections

Network dispatchers choose a target server using one of several well-known algorithms:

- Round Robin: The dispatcher sends requests to servers in a simple rotating order.

- Least Connections: The dispatcher tracks the number of active connections on every server and sends the new request to the one currently handling the fewest.

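- IP Hashing: The dispatcher hashes the client’s IP address to pick a server, so the same client keeps reaching the same server as long as the server pool does not change. This is useful for “session persistence” (keeping a user logged in) when session data lives on the individual server.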
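The three strategies in the list above are small enough to sketch side by side. The server names and function names below are made up for the example, and production load balancers add weighting, health checks, and consistent hashing on top of these basic ideas.

```python
import hashlib
from itertools import cycle
from typing import Dict, Iterator, List

SERVERS = ["app-1", "app-2", "app-3"]  # hypothetical server pool


def round_robin(servers: List[str]) -> Iterator[str]:
    """Yield servers in a fixed rotating order, forever."""
    return cycle(servers)


def least_connections(active: Dict[str, int]) -> str:
    """Pick the server with the fewest active connections."""
    return min(active, key=active.get)


def ip_hash(client_ip: str, servers: List[str]) -> str:
    """Map a client IP to a server deterministically."""
    # A stable hash is used on purpose: Python's built-in hash() for
    # strings is randomized per process, which would break persistence.
    digest = hashlib.sha256(client_ip.encode("utf-8")).digest()
    return servers[int.from_bytes(digest[:8], "big") % len(servers)]


rotation = round_robin(SERVERS)
first_four = [next(rotation) for _ in range(4)]

quietest = least_connections({"app-1": 5, "app-2": 2, "app-3": 7})

sticky_a = ip_hash("203.0.113.9", SERVERS)
sticky_b = ip_hash("203.0.113.9", SERVERS)  # same client, same server
```

One caveat of the simple modulo approach in `ip_hash`: adding or removing a server changes the mapping for most clients, which is why larger systems often use consistent hashing instead.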

The Evolution of Dispatching in Cloud and Microservices

As we move toward a world of “serverless” computing and microservices, the role of the dispatcher is evolving into more abstract and automated forms. In these environments, dispatching happens at the orchestration layer.

Serverless Functions and Event-Driven Architecture

In a serverless environment like AWS Lambda or Google Cloud Functions, the “dispatcher” is part of the cloud provider’s infrastructure. When a specific trigger occurs, such as an image being uploaded to a storage bucket, the cloud’s internal dispatcher provisions an execution environment on demand (or reuses a warm one), runs the necessary code, and releases the environment once it sits idle. This “just-in-time” dispatching allows companies to scale with demand, within the provider’s concurrency limits, without managing physical hardware.

API Gateways as Modern-Day Dispatchers

In a microservices architecture, an API Gateway acts as the primary dispatcher for all incoming calls. Instead of a client needing to know the location of twenty different services (one for billing, one for user profiles, one for inventory), the client talks only to the Gateway. The Gateway dispatches each request to the correct microservice and, where needed, aggregates results from several services before sending a single response back. This provides a layer of security and abstraction that is essential for modern, high-scale software ecosystems.
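A gateway’s routing step can be approximated with a prefix table, as in the sketch below. Everything here is illustrative: the `ApiGateway` class, the path prefixes, and the response shape are assumptions, and the "services" are plain in-process functions standing in for what would be network calls.

```python
from typing import Any, Callable, Dict

Service = Callable[[str], Dict[str, Any]]


class ApiGateway:
    def __init__(self) -> None:
        self._routes: Dict[str, Service] = {}

    def register(self, prefix: str, service: Service) -> None:
        self._routes[prefix] = service

    def handle(self, path: str) -> Dict[str, Any]:
        # Longest matching prefix wins, so "/users/admin" can override "/users".
        for prefix in sorted(self._routes, key=len, reverse=True):
            if path.startswith(prefix):
                return {"status": 200, "body": self._routes[prefix](path)}
        return {"status": 404, "body": {"error": f"no service for {path}"}}


gateway = ApiGateway()
gateway.register("/billing", lambda path: {"service": "billing", "path": path})
gateway.register("/users", lambda path: {"service": "profiles", "path": path})

# The client only ever talks to the gateway, never to a service directly.
ok = gateway.handle("/billing/invoices/42")
missing = gateway.handle("/inventory/items")
```

Because clients depend only on the gateway’s paths, a service can be moved, split, or replaced by changing one `register` call.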

Conclusion: The Invisible Engine of Progress

Whether it is switching between threads in a high-speed gaming PC, managing the state of a complex React application, or routing global web traffic through a load balancer, the dispatcher remains a foundational concept in technology. It represents the transition from “what needs to be done” to “actually doing it.”

As AI and machine learning continue to integrate into system architectures, we can expect “intelligent dispatching” to become the next trend. Imagine a dispatcher that uses predictive analytics to move data closer to a user before they even request it, or an OS dispatcher that optimizes power consumption based on a user’s historical habits. By understanding the role of the dispatcher, we gain a deeper appreciation for the intricate layers of logic that keep our digital world running smoothly, efficiently, and reliably. For developers and tech enthusiasts alike, mastering the patterns of dispatching is not just a technical requirement—it is the key to building the high-performance systems of tomorrow.
