In sociology, the term “de facto racism” describes systemic inequality that exists in practice even though it is not mandated by law or stated explicitly in policy. Unlike “de jure” racism, which is discrimination written into law (such as the segregation statutes of the Jim Crow era), de facto racism is subtler, emerging from social patterns, economic disparities, and historical momentum. In the 21st century, this phenomenon has found a new and potent vessel: technology.

As artificial intelligence, machine learning, and automated decision-making come to dominate daily life, de facto racism has spread beyond human interactions into the very code that powers our digital lives. When algorithms produce discriminatory outcomes despite being “blind” to race, we are witnessing the digital evolution of de facto racism. For tech leaders, developers, and consumers, understanding this intersection is critical to building a future in which innovation does not inadvertently perpetuate the biases of the past.

The Digital Manifestation: Defining De Facto Racism in Tech

To understand de facto racism within the technology sector, one must first recognize that software is never truly neutral. It is a product of its creators, its data, and the social context in which it operates.

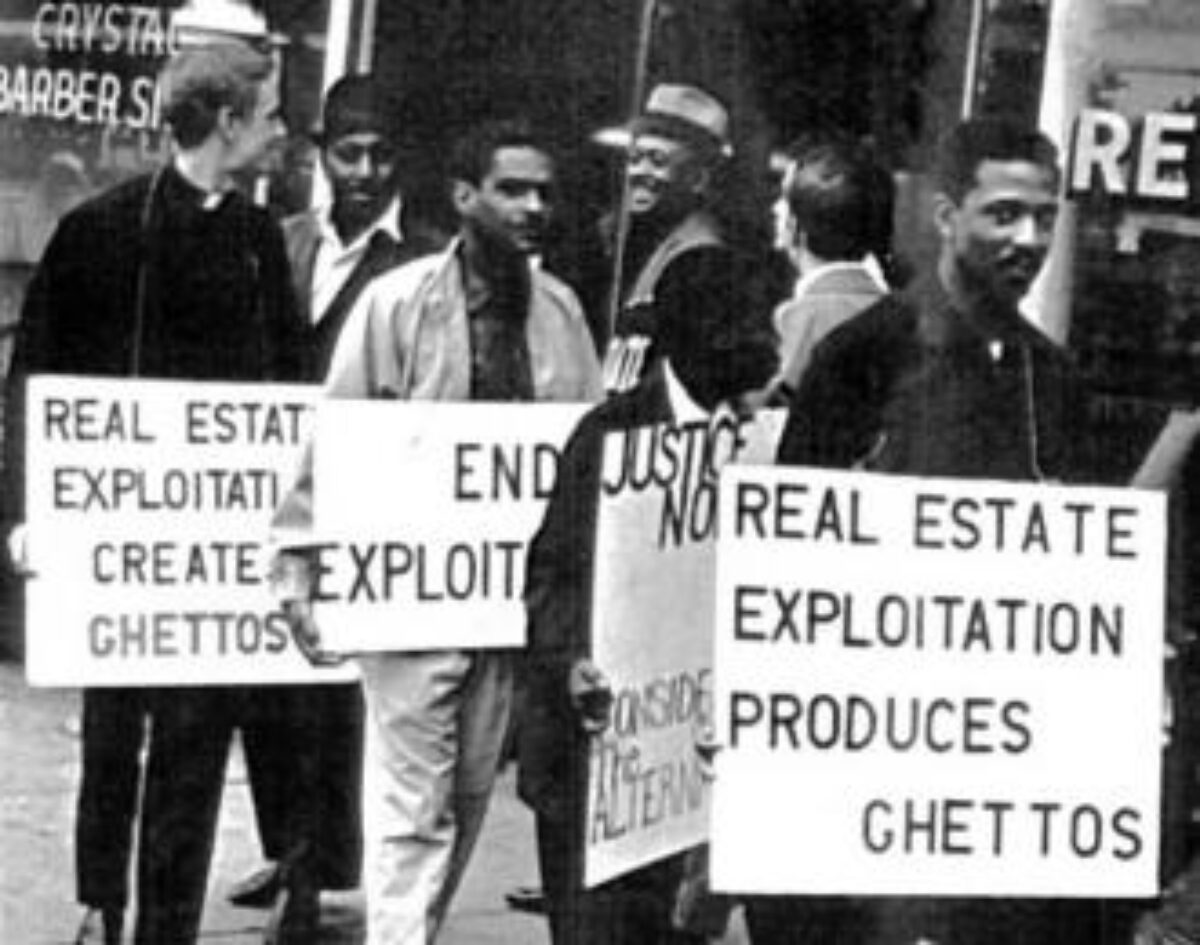

From Legal Statutes to Coded Realities

Modern tech companies rarely, if ever, write code with the explicit intent to discriminate, which would be both illegal and a breach of corporate ethics. Yet the outcomes of their software often mirror historical inequities. This is the essence of de facto racism in tech: discriminatory results that occur without discriminatory intent. For instance, an algorithm designed to score “neighborhood safety” might penalize minority communities because it relies on historical arrest data, which reflects where police have concentrated their patrols (over-policing) as much as where crime actually occurs. The code isn’t “racist” by design, but its output is racist in practice.
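
To make the over-policing mechanism concrete, here is a minimal sketch in Python. Everything in it is hypothetical: the `Neighborhood` class, the `safety_score` function, and all of the numbers are invented for illustration and do not describe any real product. Both neighborhoods are given the same underlying offense rate; only the share of offenses that turn into recorded arrests differs.

```python
from dataclasses import dataclass


@dataclass
class Neighborhood:
    name: str
    residents: int
    offense_rate: float    # true share of residents who offend (never observed directly)
    detection_rate: float  # share of offenses that end up as a recorded arrest

    def recorded_arrests(self) -> float:
        # The historical dataset only contains offenses that police detected.
        return self.residents * self.offense_rate * self.detection_rate


def safety_score(n: Neighborhood) -> float:
    """Hypothetical race-blind 'safety' score: 1 minus recorded arrests per resident."""
    return 1.0 - n.recorded_arrests() / n.residents


# Same true offense rate; neighborhood B is patrolled three times as intensively.
a = Neighborhood("A", residents=10_000, offense_rate=0.02, detection_rate=0.2)
b = Neighborhood("B", residents=10_000, offense_rate=0.02, detection_rate=0.6)

for n in (a, b):
    print(n.name, round(safety_score(n), 3))
```

The heavily patrolled neighborhood B receives the lower score even though residents of both neighborhoods offend at the same rate, and no demographic variable appears anywhere in the calculation.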

The Myth of Objective Code

There is a prevailing myth in Silicon Valley that mathematics is inherently objective: if a computer makes a decision based on data, the reasoning goes, that decision must be fair. This ignores the “de facto” reality that data is a mirror of society. If society has been shaped by decades of systemic exclusion in housing, education, and banking, the data it generates will carry those traces. When developers treat data as objective truth without accounting for its social context, they stop acting as neutral engineers and become conduits for systemic bias.

The Data Problem: Garbage In, Bias Out

The primary engine of de facto racism in technology is the training data used to “teach” machine learning models. If the input is tainted by historical prejudice, the output will inevitably reflect it.

Historical Training Data and the Persistence of the Past

Machine learning models require vast amounts of data to identify patterns. In fields like fintech and insurance, these models typically learn from historical outcomes to predict future risk. If a bank’s historical data shows that a certain demographic was rarely approved for loans, the model will learn, either directly or through proxies such as zip code, to treat that demographic as “high risk.” It cannot see that the historical lack of approvals was caused by redlining or discriminatory lending practices; it simply detects a pattern and replicates it. When the model’s own decisions are then fed back in as new training data, the result is a feedback loop in which the de facto racism of the 20th century is automated and accelerated in the 21st.
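
The feedback loop can be sketched with a deliberately naive “model” that simply memorizes the historical approval rate for each zip code. The records, the zip codes, and the 0.5 approval threshold are all hypothetical; real credit models are far more complex, but the same dynamic applies whenever a system’s past decisions are reused as training labels.

```python
from collections import defaultdict

# Hypothetical historical lending records: (zip_code, approved).
# Zip "90001" stands in for a formerly redlined area. Race never appears as a field.
history = (
    [("10001", True)] * 8 + [("10001", False)] * 2
    + [("90001", True)] * 1 + [("90001", False)] * 9
)


def learn_approval_rates(records):
    """'Train' by memorizing the historical approval rate for each zip code."""
    counts = defaultdict(lambda: [0, 0])  # zip_code -> [approved, total]
    for zip_code, approved in records:
        counts[zip_code][0] += int(approved)
        counts[zip_code][1] += 1
    return {z: approved / total for z, (approved, total) in counts.items()}


def decide(rates, zip_code, threshold=0.5):
    """Approve an applicant only if their zip code's learned rate clears the threshold."""
    return rates[zip_code] >= threshold


# Feedback loop: each round's automated decisions become training data for the next.
records = list(history)
for round_no in range(3):
    rates = learn_approval_rates(records)
    print(round_no, {z: round(r, 2) for z, r in sorted(rates.items())})
    applicants = ["10001"] * 10 + ["90001"] * 10  # equal numbers of applicants per zip code
    records += [(z, decide(rates, z)) for z in applicants]
```

Because applicants from zip code 90001 are never approved, each round adds only denials to their history, and the learned gap widens from 0.8 versus 0.1 in the first round to roughly 0.93 versus 0.03 by the third.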

The Problem of Underrepresentation in Datasets

De facto racism also manifests through omission. The datasets used to develop facial recognition and other biometric software have historically skewed heavily toward lighter-skinned individuals. This was not necessarily a conscious decision to exclude anyone, but rather the result of using available, convenient data (often drawn from tech-heavy, Western populations). The result is “algorithmic de facto racism”: technology that works seamlessly for white users but fails far more often for people of color. When a digital tool, be it a smartphone sensor or an automated passport gate, functions poorly for specific ethnic groups, it reinforces a standard in which white is the “default” and everyone else is an error case.

Case Studies in Technological De Facto Racism

To move beyond the theoretical, we must examine specific sectors where technological de facto racism has had measurable real-world impacts.

Facial Recognition and Demographic Accuracy Gaps

Extensive research has documented large demographic gaps in the accuracy of facial analysis AI. The best-known example is the 2018 “Gender Shades” study by Joy Buolamwini and Timnit Gebru, which audited commercial gender-classification systems and found a maximum error rate of 0.8% for lighter-skinned men but error rates of up to 34.7% for darker-skinned women. In a tech-centric world, such errors are not mere inconveniences: misidentifications by face recognition systems used in policing have led to wrongful arrests, and people can be locked out of digital services that require biometric identity verification. This is de facto racism facilitated by “technological blindness.”
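
Findings like these depend on disaggregated evaluation: reporting error rates for each intersectional subgroup rather than a single overall accuracy figure. The sketch below uses made-up counts (not the study’s data) to show how a headline accuracy of 96% can hide a 30% error rate for one subgroup. The helpers `make_rows` and `error_rate_by_subgroup` are illustrative names, not a library API.

```python
from collections import Counter


def make_rows(skin_type, gender, n, n_errors):
    """Hypothetical evaluation rows: (skin_type, gender, prediction_was_correct)."""
    return [(skin_type, gender, i >= n_errors) for i in range(n)]


# Made-up test set that over-represents lighter-skinned men.
rows = (
    make_rows("lighter", "male", 600, 6)
    + make_rows("lighter", "female", 200, 10)
    + make_rows("darker", "male", 150, 9)
    + make_rows("darker", "female", 50, 15)
)


def error_rate_by_subgroup(eval_rows):
    """Error rate for every (skin_type, gender) combination."""
    totals, errors = Counter(), Counter()
    for skin_type, gender, correct in eval_rows:
        totals[(skin_type, gender)] += 1
        errors[(skin_type, gender)] += int(not correct)
    return {group: errors[group] / totals[group] for group in totals}


overall_accuracy = sum(correct for _, _, correct in rows) / len(rows)
print(f"overall accuracy: {overall_accuracy:.0%}")
for group, rate in error_rate_by_subgroup(rows).items():
    print(group, f"error rate: {rate:.0%}")
```

Because the smallest subgroup contributes only 5% of the test set, its high error rate barely moves the overall figure, which is why audits report each subgroup separately.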

Predictive Policing and Automated Risk Assessments

In the judicial system, tools like COMPAS (Correctional Offender Management Profiling for Alternative Sanctions) have been used to predict the likelihood that a defendant will reoffend. ProPublica’s 2016 analysis of COMPAS scores in Broward County, Florida, found that among defendants who did not go on to reoffend, Black defendants were nearly twice as likely as white defendants to have been labeled higher risk, and that Black defendants received higher scores even after controlling for factors such as criminal history, age, and gender. Race is not an input, but the tool draws on factors that correlate with it, such as “neighborhood,” “employment status,” or “social circle,” so it can reach a discriminatory conclusion without ever “knowing” the defendant’s race. This is a classic example of proxy discrimination, in which technology automates systemic prejudice.
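
ProPublica’s critique centered on the false positive rate: among people who did not reoffend, what share were nevertheless labeled high risk? A minimal sketch of that calculation follows. The group labels, counts, and the `Case` and `make_cases` helpers are hypothetical and do not reproduce ProPublica’s data.

```python
from typing import NamedTuple


class Case(NamedTuple):
    group: str
    labeled_high_risk: bool
    reoffended: bool


def make_cases(group, flagged_non_reoffenders, other_non_reoffenders, reoffenders):
    """Build hypothetical cases; for simplicity, every reoffender was flagged."""
    return (
        [Case(group, True, False)] * flagged_non_reoffenders
        + [Case(group, False, False)] * other_non_reoffenders
        + [Case(group, True, True)] * reoffenders
    )


def false_positive_rate(cases, group):
    """Share of the group's non-reoffenders who were labeled high risk."""
    non_reoffenders = [c for c in cases if c.group == group and not c.reoffended]
    flagged = [c for c in non_reoffenders if c.labeled_high_risk]
    return len(flagged) / len(non_reoffenders)


cases = make_cases("group_a", 20, 80, 50) + make_cases("group_b", 40, 60, 50)
for g in ("group_a", "group_b"):
    print(g, f"false positive rate: {false_positive_rate(cases, g):.0%}")
```

The choice of metric matters: COMPAS’s developer defended the tool on the grounds that its scores were equally predictive for Black and white defendants, and when base rates differ between groups, an imperfect classifier generally cannot meet that criterion while also equalizing both false positive and false negative rates.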

AI in Recruitment and Healthcare Disparities

In the corporate world, AI-driven resume screening tools can penalize candidates for features that correlate with race, such as having attended a historically Black college or living in particular zip codes. Similarly, in healthcare, a 2019 study by Obermeyer and colleagues in Science found that an algorithm widely used to allocate extra care to high-risk patients favored white patients over Black patients who were equally sick. The reason? The algorithm used “healthcare spending” as a proxy for “healthcare need.” Because unequal access to care meant that less money was spent on Black patients with the same level of illness, the algorithm incorrectly concluded that they were “healthier” and required less intervention.
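
The label-choice problem is easy to reproduce in miniature. In this sketch all patients and figures are invented, and the count of chronic conditions is a hypothetical stand-in for true health need; group B’s patients are sicker but have lower recorded spending, mimicking unequal access to care. Ranking by cost, the proxy the algorithm optimized, fills every program slot with group A, while ranking by need does not.

```python
from typing import NamedTuple


class Patient(NamedTuple):
    pid: str
    group: str
    chronic_conditions: int  # stand-in for true health need
    annual_cost: int         # the proxy label: what was actually spent (USD)


# Hypothetical patients: group B is sicker on average but has lower spending.
patients = [
    Patient("a1", "A", 4, 12_000),
    Patient("a2", "A", 3, 10_000),
    Patient("a3", "A", 1, 9_000),
    Patient("b1", "B", 6, 8_000),
    Patient("b2", "B", 5, 7_000),
    Patient("b3", "B", 2, 6_000),
]


def enroll(patients, key, slots=3):
    """Give the limited care-management slots to the highest-ranked patients."""
    return sorted(patients, key=key, reverse=True)[:slots]


by_cost = enroll(patients, key=lambda p: p.annual_cost)
by_need = enroll(patients, key=lambda p: p.chronic_conditions)

for label, chosen in (("by cost", by_cost), ("by need", by_need)):
    groups = [p.group for p in chosen]
    total_need = sum(p.chronic_conditions for p in chosen)
    print(label, groups, "conditions covered:", total_need)
```

Nothing in the cost-based ranking refers to group membership; the disparity enters entirely through the choice of what the model is asked to predict.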

The Business and Ethical Stakes for Tech Leaders

For the tech industry, addressing de facto racism is no longer just a social justice issue—it is a business imperative. The risks of ignoring these biases are manifold, ranging from legal liability to brand erosion.

Regulatory Pressure and the Rise of AI Ethics

Governments are beginning to catch up with the pace of technological bias. The EU’s AI Act, adopted in 2024, imposes risk-management, data-governance, and transparency obligations on “high-risk” systems, including those used in hiring, credit scoring, and law enforcement. In the US, state and local measures such as Colorado’s AI Act and New York City’s bias-audit requirement for automated hiring tools are starting to demand similar scrutiny. Companies that fail to audit their algorithms for de facto racism face significant fines and the possibility of being shut out of certain markets. This has led to the rise of “AI Ethics” departments within major tech firms, tasked with identifying and mitigating bias before a product reaches the market.

Brand Integrity in an Era of Social Accountability

In an increasingly conscious consumer market, a brand’s reputation is tied to its inclusivity. When a tech giant releases a product that doesn’t recognize Black faces or a credit algorithm that discriminates against women of color, the backlash is swift and global. Beyond the PR nightmare, de facto racism in tech represents a failure of product quality. If a tool doesn’t work for a significant portion of the global population, it is a flawed product. Tech leaders are realizing that inclusive design is simply good engineering.

Strategies for Mitigation: Building Equitable Systems

Eliminating de facto racism from technology requires a move away from “colorblind” engineering toward “bias-aware” development.

Implementing Algorithmic Audits and Bias Detection

The first step in fixing the problem is measuring it. Tech companies increasingly use “fairness toolkits,” such as IBM’s AI Fairness 360 or Google’s What-If Tool, to stress-test their models. These tools help developers detect whether a model produces disparate impacts across demographic groups, for example by comparing approval rates or error rates between groups. By conducting regular algorithmic audits, companies can catch de facto bias during development rather than after deployment.
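
One of the simplest audit metrics is the disparate impact ratio: the favorable-outcome rate for one group divided by the rate for a reference group. AI Fairness 360 reports a metric of this form, and the US federal Uniform Guidelines on Employee Selection Procedures generally treat a selection rate below four-fifths (0.8) of the highest group’s rate as evidence of adverse impact. The sketch below computes the ratio in plain Python rather than through either toolkit’s API; the function names and the decision lists are hypothetical.

```python
def selection_rate(decisions):
    """Fraction of favorable outcomes (True = approved/hired) in a list of decisions."""
    return sum(decisions) / len(decisions)


def disparate_impact_ratio(group_decisions, reference_decisions):
    """Selection rate of a group relative to a reference group (1.0 = parity)."""
    return selection_rate(group_decisions) / selection_rate(reference_decisions)


def flags_adverse_impact(ratio, threshold=0.8):
    """Four-fifths rule of thumb: a ratio under 0.8 warrants investigation."""
    return ratio < threshold


# Hypothetical audit of 100 decisions per group.
reference = [True] * 60 + [False] * 40  # 60% approved
group = [True] * 30 + [False] * 70      # 30% approved

ratio = disparate_impact_ratio(group, reference)
print(f"disparate impact ratio: {ratio:.2f}, adverse impact flagged: {flags_adverse_impact(ratio)}")
```

A low ratio does not by itself prove discrimination, and a ratio near 1.0 does not rule it out (error rates can still differ between groups, as the COMPAS case shows), which is why audits usually combine several metrics.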

Diversifying the Engineering Pipeline

A significant driver of de facto racism in tech is the lack of diversity among the people building the tools. When a development team is homogeneous, they are less likely to notice bias in a dataset or anticipate how a feature might negatively impact a marginalized community. Diversity in tech is not just about HR quotas; it is about bringing different life experiences to the table to ensure that software is built with a global perspective.

Human-in-the-Loop and Ethical Frameworks

The “set it and forget it” approach to AI is one of the biggest contributors to de facto racism. To mitigate this, many experts advocate for “human-in-the-loop” systems, where automated decisions are reviewed by human experts, particularly in high-stakes fields like medicine, law, and lending. Furthermore, companies must adopt ethical frameworks that prioritize equity over pure efficiency. This means being willing to sacrifice a small margin of “accuracy” or “speed” if it ensures the system does not produce discriminatory outcomes.
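
A human-in-the-loop policy can start as a routing rule that decides which automated outputs may take effect on their own. The sketch below assumes a model that outputs a probability of a favorable outcome; the domain list, the thresholds, and the rule that high-stakes cases short of a confident approval always go to a person are hypothetical choices for illustration, not an established standard.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AUTO_APPROVE = "auto_approve"
    AUTO_DECLINE = "auto_decline"
    HUMAN_REVIEW = "human_review"


# Hypothetical set of domains where no adverse decision is made without a person.
HIGH_STAKES_DOMAINS = {"medicine", "law", "lending"}


@dataclass
class Decision:
    domain: str
    score: float  # model's estimated probability of a favorable outcome


def route(decision: Decision, approve_at: float = 0.9, decline_at: float = 0.1) -> Route:
    """Decide whether a model output can take effect automatically."""
    if decision.score >= approve_at:
        return Route.AUTO_APPROVE
    if decision.domain in HIGH_STAKES_DOMAINS:
        # High-stakes domain: anything short of a confident approval goes to a human.
        return Route.HUMAN_REVIEW
    if decision.score <= decline_at:
        return Route.AUTO_DECLINE
    # Uncertain middle band in a low-stakes domain: still defer to a human reviewer.
    return Route.HUMAN_REVIEW


for d in (Decision("lending", 0.95), Decision("lending", 0.05), Decision("marketing", 0.05)):
    print(d.domain, d.score, route(d).value)
```

Routing more cases to people costs speed, which is the trade-off described in the paragraph above; reviewers also need the time and authority to overrule the model, or the review becomes a rubber stamp.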

In conclusion, de facto racism in technology is a complex challenge that mirrors the complexities of society itself. As software continues to eat the world, the responsibility of the tech industry grows. By acknowledging that “neutral” code can still produce “biased” results, and by taking proactive steps to audit data and diversify development, the tech world can move toward a future where innovation truly serves everyone, regardless of the patterns of the past.

aViewFromTheCave is a participant in the Amazon Services LLC Associates Program, an affiliate advertising program designed to provide a means for sites to earn advertising fees by advertising and linking to Amazon.com. Amazon, the Amazon logo, AmazonSupply, and the AmazonSupply logo are trademarks of Amazon.com, Inc. or its affiliates. As an Amazon Associate we earn affiliate commissions from qualifying purchases.