The intersection of technology and healthcare has fundamentally altered our understanding of human behavior, particularly regarding the clinical definition of substance abuse. Historically, “substance abuse” was confined to the physical ingestion of chemicals—alcohol, opioids, or stimulants. However, in the contemporary tech landscape, the definition is expanding. Through the lenses of AI-driven diagnostics, digital biomarkers, and the emergence of “digital substances,” we are witnessing a paradigm shift in how we categorize, identify, and treat dependency.

In the tech sector, substance abuse is no longer just a biological question; it is a data-driven one. From software that tracks neurological responses to apps that manage recovery, technology is redefining the boundaries of what constitutes an “abusive” relationship with a substance.

The Digital Definition: How AI and Data Redefine “Abuse”

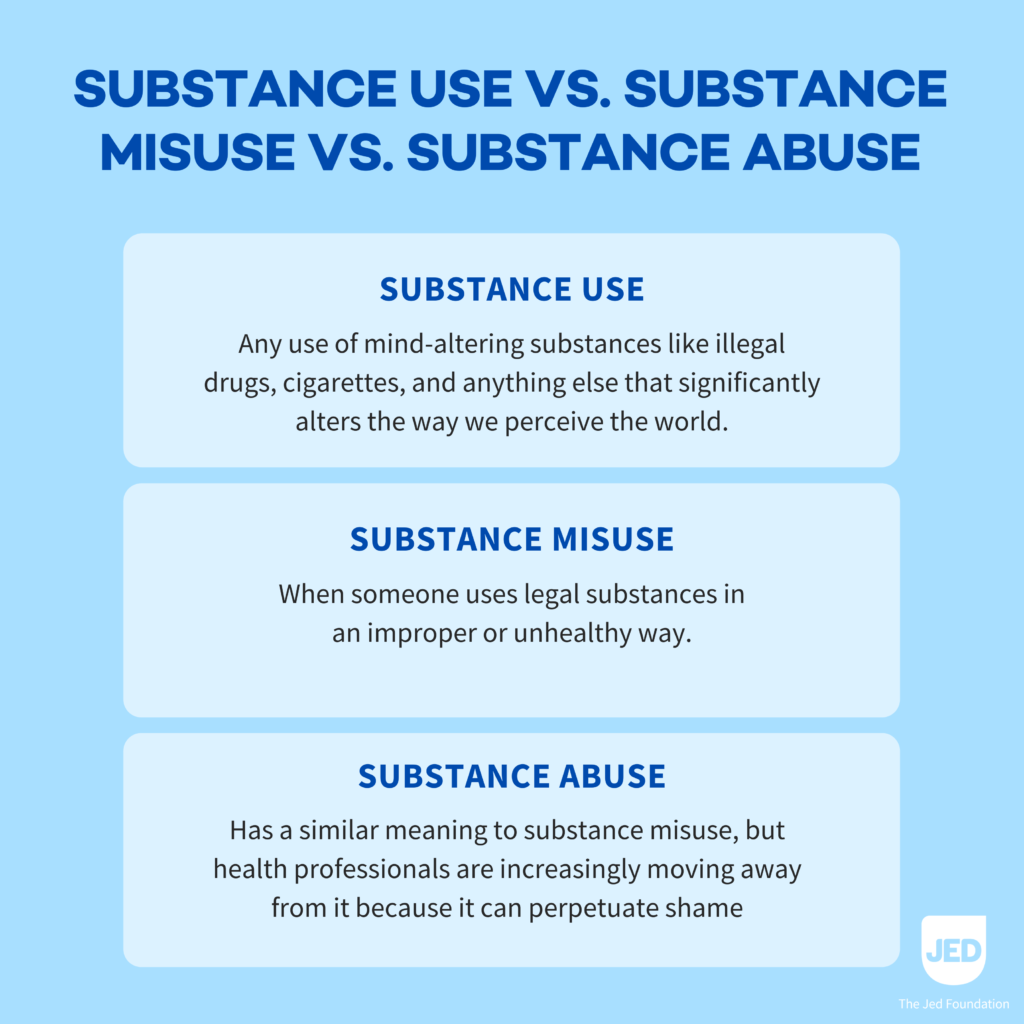

In clinical practice, the reference point is the Diagnostic and Statistical Manual of Mental Disorders (DSM-5). It replaced the older, separate categories of “substance abuse” and “substance dependence” with a single diagnosis, substance use disorder (SUD): a problematic pattern of use that causes clinically significant impairment or distress, including continued use despite the problems it creates. In the tech sector, we view this through the lens of “behavioral analytics.”

From Subjective Reporting to Digital Biomarkers

One of the most significant shifts in defining substance abuse today is the move from subjective patient reporting to objective digital biomarkers. Tech companies are developing wearable sensors and smartphone-based software that monitor physiological signals, such as heart rate variability (HRV), skin conductance, and sleep patterns, to detect the early stages of substance misuse. When these data points deviate from a person’s established baseline, AI algorithms can flag a possible “substance abuse event,” sometimes before the individual is aware that their dependency is escalating.
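A z-score is one of the most basic anomaly-detection techniques. It can make the idea of “deviation from a baseline” concrete. The Python sketch below is not any vendor’s algorithm. The function name `flag_deviations`, the 2.5 threshold, and the HRV values are all hypothetical and chosen only for illustration.

```python
from statistics import mean, stdev


def flag_deviations(baseline, readings, threshold=2.5):
    """Return the indices of readings whose z-score against the baseline
    exceeds the threshold (in either direction). Hypothetical helper."""
    mu = mean(baseline)
    sigma = stdev(baseline)  # sample standard deviation; needs >= 2 values
    if sigma == 0:
        raise ValueError("baseline has no variation; cannot compute z-scores")
    flagged = []
    for i, value in enumerate(readings):
        z = (value - mu) / sigma  # how many standard deviations from normal
        if abs(z) > threshold:
            flagged.append(i)
    return flagged


# Hypothetical nightly HRV values (ms) from a typical week, then four new nights.
baseline_hrv = [62, 65, 60, 63, 64, 61, 66, 63]
new_hrv = [63, 61, 45, 64]
print(flag_deviations(baseline_hrv, new_hrv))  # prints [2]
```

In this example, only the third new reading (45 ms) falls far outside the person’s usual range, so it is the only one flagged. A real system would likely use longer baselines, several signals at once, and clinical review before treating a flag as meaningful.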

AI-Driven Predictive Modeling

Machine learning models can analyze large datasets to estimate substance abuse risk at a population level. By processing electronic health records (EHRs) and even social media activity, AI can identify clusters of behavior that may signal a transition from casual use to clinical abuse. This tech-centric approach allows for a “proactive definition,” in which abuse is flagged through predictive risk scores rather than only after a physical crisis.
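Predictive risk scores are often built on something like logistic regression. Weighted features are summed and then mapped to a probability. The sketch below assumes hypothetical feature names, weights, and a 0.5 review threshold. A real model would learn its weights from labeled health records and would need clinical validation before use.

```python
import math

# Hypothetical features and weights for illustration only; a real model would
# learn these from labeled records and be validated before clinical use.
WEIGHTS = {
    "er_visits_past_year": 0.8,
    "missed_appointments": 0.4,
    "prior_sud_diagnosis": 1.5,
}
BIAS = -3.0


def risk_score(features):
    """Logistic-regression-style score: weighted sum of features plus a bias,
    mapped to a probability in (0, 1) by the sigmoid function."""
    logit = BIAS + sum(w * features.get(name, 0.0) for name, w in WEIGHTS.items())
    return 1.0 / (1.0 + math.exp(-logit))


def triage(features, threshold=0.5):
    """Route high scores to a clinician for review; a flag, not a diagnosis."""
    return "review" if risk_score(features) >= threshold else "routine"


patient = {"er_visits_past_year": 2, "missed_appointments": 1, "prior_sud_diagnosis": 1}
print(round(risk_score(patient), 3))  # prints 0.622
print(triage(patient))                # prints review
print(triage({}))                     # prints routine
```

The output is deliberately a routing decision (“review” or “routine”) rather than a label of “abuse.” That keeps a human in the loop, a point the article returns to under Explainable AI.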

Digital Substances: The New Frontier of Behavioral Addiction

As technology evolves, the very word “substance” is being interrogated. Software developers and neuroscientists are increasingly examining how high-dopamine digital environments, such as social media algorithms or immersive gaming, affect the brain in ways that resemble the effects of chemical substances.

The Dopamine Loop and Software Design

In the tech world, the “substance” isn’t always something you swallow; it can be the interface you interact with. Software engineers use persuasive design techniques, such as intermittent reinforcement schedules and infinite scrolls, that engage some of the same reward pathways as addictive drugs. Some tech-focused clinicians argue that “digital substance abuse” should be categorized under the broader umbrella of substance-related and addictive disorders. Their reasoning is that the neurological “high” and subsequent “withdrawal” associated with screen-based stimuli resemble chemical dependency. There is some precedent: the DSM-5 already places gambling disorder in that category and lists Internet Gaming Disorder as a condition for further study.
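A small simulation shows why intermittent reinforcement is hard to walk away from. In the sketch below, each “refresh” of a feed independently pays off with novel content at a fixed probability. This is a random-ratio schedule, a close cousin of the variable-ratio schedules studied in behavioral psychology. The user can never predict which refresh will be rewarded. The functions `refresh_feed` and `gaps_between_rewards` and the 0.3 probability are hypothetical and do not reflect any real app’s code.

```python
import random


def refresh_feed(num_refreshes, reward_probability, seed=None):
    """Simulate a random-ratio schedule: each refresh independently yields
    novel content with a fixed probability (hypothetical model)."""
    rng = random.Random(seed)  # seeded generator for reproducible runs
    return [rng.random() < reward_probability for _ in range(num_refreshes)]


def gaps_between_rewards(outcomes):
    """Count how many refreshes it took to reach each reward,
    including the rewarded refresh itself."""
    gaps, count = [], 0
    for rewarded in outcomes:
        count += 1
        if rewarded:
            gaps.append(count)
            count = 0
    return gaps


outcomes = refresh_feed(50, reward_probability=0.3, seed=7)
print(gaps_between_rewards(outcomes))
```

The printed gaps vary unpredictably from one reward to the next. That unpredictability, rather than the size of any single reward, is what makes the “just one more refresh” loop so persistent.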

Virtual Reality and Sensory Overload

Virtual Reality (VR) and Augmented Reality (AR) present a unique challenge to the definition of abuse. “Substance” in this context refers to the simulated environment. Tech reviews of immersive hardware often highlight the potential for “escapism,” but when that escapism leads to a neglect of physical health and social obligations, it enters the realm of abuse. The tech industry is currently debating where the line exists between high-end entertainment and “digital toxicity.”

Tech-Enabled Monitoring and Intervention Tools

Identifying what is considered substance abuse is only the first step; the tech niche has pioneered tools to monitor and mitigate these behaviors. These tools represent a fusion of digital security and healthcare, ensuring that recovery is tracked with the same precision as a software development lifecycle.

Software as a Medical Device (SaMD)

Since 2017, the FDA has authorized a handful of prescription “Software as a Medical Device” (SaMD) platforms specifically designed to treat substance use disorders (SUD). These are not basic wellness apps; they are evidence-based therapeutic tools. For instance, reSET® is a prescription digital therapeutic that delivers cognitive behavioral therapy to patients with SUD as an adjunct to outpatient treatment. In this ecosystem, “abuse” is quantified by a user’s interaction with the software, which tracks triggers, cravings, and use patterns in real time.

IoT and Remote Monitoring Gadgets

The Internet of Things (IoT) has introduced smart breathalyzers and wearable biosensors that sync with a user’s smartphone. These gadgets provide a continuous stream of data to clinicians. In this framework, substance abuse is defined by “non-compliance” or “biological spikes” recorded by the hardware. This shifts the focus from an occasional check-up to a 24/7 digital monitoring system, providing a much more granular view of what constitutes “active abuse” versus “remission.”
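The two signals described above, “biological spikes” and “non-compliance,” can be expressed as simple rules over a day of scheduled smart-breathalyzer tests. The following is a minimal sketch under stated assumptions. The `BreathTest` record, the `summarize` function, the test schedule, and the 0.02 g/dL spike threshold are hypothetical placeholders, not clinical cutoffs or any device’s API.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class BreathTest:
    scheduled_hour: int    # hour the test was due (hypothetical schedule)
    bac: Optional[float]   # estimated BAC in g/dL; None if the test was skipped


def summarize(tests: List[BreathTest], spike_threshold: float = 0.02) -> dict:
    """Separate readings at or above the threshold ("spikes") from skipped
    tests ("missed"), and mark the day for review if either occurred."""
    spikes = [t.scheduled_hour for t in tests
              if t.bac is not None and t.bac >= spike_threshold]
    missed = [t.scheduled_hour for t in tests if t.bac is None]
    status = "review" if spikes or missed else "on track"
    return {"spikes": spikes, "missed": missed, "status": status}


day = [BreathTest(8, 0.0), BreathTest(14, None), BreathTest(20, 0.05)]
print(summarize(day))
# prints {'spikes': [20], 'missed': [14], 'status': 'review'}
```

Keeping spikes and missed tests as separate lists preserves the distinction the clinician cares about. A skipped test is a compliance question, not evidence of use.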

The Ethics of Big Data in Addiction Profiling

As we rely more on technology to define substance abuse, we encounter significant questions regarding digital security, privacy, and the ethical implications of “profiling” individuals based on their digital footprint.

Data Privacy and the Stigma of the “Digital Mark”

If an algorithm determines that a user’s behavior is “consistent with substance abuse,” where does that data go? The tech industry faces a massive challenge in securing sensitive health data. In the era of big data, being “flagged” by an AI as having a substance abuse problem could have repercussions for insurance premiums or employment, even if the user has not sought a formal medical diagnosis. Ensuring digital security and the right to “algorithmic privacy” is paramount.

Transparency in Algorithmic Diagnosis

There is a growing demand for “Explainable AI” (XAI) in the medical tech sector. If a software tool identifies a pattern as “substance abuse,” clinicians and patients need to understand why. The “black box” nature of some AI tools can lead to false positives, where a user’s irregular lifestyle (due to night-shift work or stress) might be mischaracterized as substance misuse. Ensuring that tech tools remain an aid to—and not a replacement for—human judgment is a central theme in modern digital health.
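For simple linear risk models, one form of explainability comes almost for free. Each feature’s weight times its value is its exact contribution to the log-odds, so the contributions can be ranked and shown to a clinician. The sketch below assumes hypothetical features and weights (not a clinical model) and replays the night-shift scenario from the paragraph above.

```python
import math

# Hypothetical weights for illustration only (not a clinical model).
WEIGHTS = {"irregular_sleep": 1.2, "late_night_activity": 0.9, "prior_sud_diagnosis": 1.5}
BIAS = -2.5


def explain(features):
    """Return the risk probability plus each feature's contribution to the
    log-odds, ranked by absolute size. In a linear model these terms sum to
    the logit once the bias is added, so the explanation is faithful."""
    contributions = {name: w * features.get(name, 0.0) for name, w in WEIGHTS.items()}
    logit = BIAS + sum(contributions.values())
    probability = 1.0 / (1.0 + math.exp(-logit))
    ranked = sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True)
    return probability, ranked


# A night-shift worker with no substance-related history.
night_shift_worker = {"irregular_sleep": 1.0, "late_night_activity": 1.0}
probability, reasons = explain(night_shift_worker)
print(round(probability, 2))  # prints 0.4
for name, contribution in reasons:
    print(name, contribution)
```

Both top contributors describe the person’s schedule rather than any substance-related evidence. That is exactly the kind of detail a clinician could use to recognize a shift-work false positive. For non-linear “black box” models, attribution methods such as SHAP play a similar role.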

The Future of Digital Sobriety and Tech Wellness

The conversation around what is considered substance abuse is ultimately leading to the rise of “Digital Wellness” and “Digital Sobriety” as recognized tech categories. As the industry matures, we are seeing a shift toward building tools that prevent abuse rather than just identifying it after the fact.

Incorporating “Friction” into App Design

In response to the “digital substance” crisis, some developers are intentionally building “friction” into their apps to prevent addictive loops. This includes features like “Zen Mode,” app timers, and grayscale display options. By acknowledging that their products can be “abused,” tech companies are taking a page from the pharmaceutical industry’s playbook, implementing safety mechanisms to ensure their “substance” (the software) is used responsibly.
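Friction can be as simple as an app timer that escalates gradually instead of cutting the user off. The sketch below is a hypothetical design, not any platform’s screen-time API. The `UsageTimer` class, the 15-minute grace period, and the action names are assumptions made for illustration. Once the daily limit is reached, the app switches to grayscale and asks for confirmation, and after the grace period it locks until the next day.

```python
class UsageTimer:
    """Hypothetical daily app timer that adds escalating friction instead of
    cutting the user off abruptly."""

    def __init__(self, daily_limit_minutes: int, grace_minutes: int = 15):
        self.daily_limit = daily_limit_minutes
        self.grace = grace_minutes
        self.used = 0

    def record(self, minutes: int) -> None:
        """Add minutes of foreground use to today's total."""
        self.used += minutes

    def reset_for_new_day(self) -> None:
        """Clear the running total at the start of a new day."""
        self.used = 0

    def next_action(self) -> str:
        """Decide how the app should respond given today's usage."""
        if self.used < self.daily_limit:
            return "allow"
        if self.used < self.daily_limit + self.grace:
            # Friction: grayscale display plus an explicit "continue?" prompt.
            return "grayscale_and_confirm"
        return "lock_until_tomorrow"


timer = UsageTimer(daily_limit_minutes=60)
for session in (30, 30, 15):
    timer.record(session)
    print(timer.used, timer.next_action())
```

The middle “grayscale and confirm” stage is the friction itself. The user stays in control but has to make a deliberate choice to keep going, which interrupts the automatic loop that persuasive design relies on.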

The Role of Decentralized Health Records (Web3)

Looking forward, Web3 and blockchain technology may offer a solution to the privacy concerns surrounding substance abuse data. By using decentralized identifiers, individuals could own their own “recovery data,” choosing which doctors or apps can access their history. This would allow for a more holistic and secure definition of their health status, where the individual—not the platform—defines their journey through and beyond substance abuse.

Conclusion: A Multi-Dimensional Framework

In the tech-driven world of 2024, what is considered substance abuse is no longer a simple binary of “using” or “not using.” It is a complex, multi-dimensional framework that includes:

- Chemical Abuse: Monitored via IoT and AI-driven digital biomarkers.

- Behavioral/Digital Abuse: Characterized by dependency on high-dopamine software environments.

- Data-Defined Risks: Identified through predictive modeling and large-scale data analytics.

As our gadgets become more integrated into our biology, the distinction between a “substance” and a “software” continues to blur. The tech niche is not just providing the tools to treat addiction; it is fundamentally rewriting the code for how we define the human experience of dependency. By leveraging AI, wearable hardware, and secure data practices, we are moving toward a future where “substance abuse” is identified early, treated precisely, and managed with a level of digital sophistication that was once the stuff of science fiction.

aViewFromTheCave is a participant in the Amazon Services LLC Associates Program, an affiliate advertising program designed to provide a means for sites to earn advertising fees by advertising and linking to Amazon.com. Amazon, the Amazon logo, AmazonSupply, and the AmazonSupply logo are trademarks of Amazon.com, Inc. or its affiliates. As an Amazon Associate we earn affiliate commissions from qualifying purchases.