In the traditional sense, a music bar (or measure) is a segment of time defined by a specific number of beats. However, in the rapidly evolving landscape of technology, the “music bar” has transitioned from a mere notation on a paper score to a sophisticated digital construct. It is now a fundamental unit of data in software development, a critical element of User Interface (UI) design, and a cornerstone of artificial intelligence in generative audio.

Understanding the music bar through a technical lens requires an exploration of how software interprets rhythm, how developers visualize sound for users, and how modern hardware synchronizes complex audio signals. Whether you are a developer building a Digital Audio Workstation (DAW), a UI/UX designer crafting a streaming app, or a tech enthusiast curious about the mechanics of digital media, the music bar represents the intersection of mathematical precision and creative expression.

The Digital Foundation: The Bar as a Unit of Measurement in DAWs

At the heart of most music production software, from Ableton Live to FL Studio, lies the “grid.” This grid is the digital manifestation of music bars. For software to process sound, it must translate the fluid nature of rhythm into a rigid, manageable framework of data.

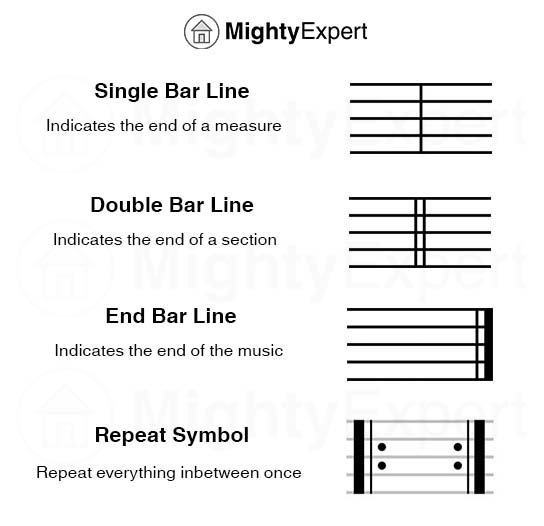

From Sheet Music to MIDI Grids

In the analog world, a bar is a visual guide for musicians. In software, a bar is a data container. When a producer inputs a MIDI (Musical Instrument Digital Interface) note, the software assigns it a coordinate based on the bar, the beat, and the tick, where a tick is a fixed fraction of a beat set by the project’s resolution in pulses per quarter note (PPQ). “Snapping to grid” rounds that coordinate to the nearest grid line, which is what keeps parts tightly synchronized. The technical challenge for developers is keeping the software’s internal clock sample-accurate. If the digital representation of a bar drifts by even a few milliseconds against the audio, layered parts audibly fall out of time.
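The bar, beat, and tick coordinate and the snap-to-grid rounding can be sketched in a few lines of Python. This is a minimal illustration rather than how any particular DAW stores positions: the resolution of 480 ticks per quarter note, the `Position` type, and both function names are assumptions made for the example, and the beat is assumed to be a quarter note.

```python
from dataclasses import dataclass

PPQ = 480  # ticks per quarter note; an assumed resolution, real DAWs vary


@dataclass(frozen=True)
class Position:
    bar: int   # 1-based, as shown in most transport displays
    beat: int  # 1-based
    tick: int  # 0-based offset inside the beat


def ticks_to_position(total_ticks: int, beats_per_bar: int = 4, ppq: int = PPQ) -> Position:
    """Convert an absolute tick count into a bar/beat/tick coordinate."""
    ticks_per_bar = beats_per_bar * ppq
    bar, remainder = divmod(total_ticks, ticks_per_bar)
    beat, tick = divmod(remainder, ppq)
    return Position(bar + 1, beat + 1, tick)


def snap_to_grid(total_ticks: int, grid_ticks: int) -> int:
    """Round an absolute tick position to the nearest grid line (ties round up)."""
    return ((total_ticks + grid_ticks // 2) // grid_ticks) * grid_ticks


print(ticks_to_position(2500))  # Position(bar=2, beat=2, tick=100)
print(snap_to_grid(250, 120))   # 240, the nearest sixteenth-note line at 480 PPQ
```

Keeping positions as integer ticks, and converting to samples or seconds only at playback time, is one way to avoid accumulating floating-point rounding error over a long timeline.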

The Role of Time Signatures in Algorithmic Logic

Modern music software must be flexible enough to handle various time signatures: 4/4, 3/4, odd meters like 7/8, and in some tools several meters running at once (polymeter). This is handled through algorithmic logic that divides the digital timeline into segments. For developers, this involves creating a dynamic interface where the visual “bar” adjusts its width and subdivisions based on the user’s input. This isn’t just a visual change; it alters how the software’s engine triggers samples and processes effects, ensuring that tempo-synced effects (such as delays whose repeat time is set in note values) stay in step with the musical structure.
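The arithmetic behind a bar that resizes with the time signature is small enough to show directly. The sketch below assumes a resolution of 480 ticks per quarter note and a tempo expressed in quarter notes per minute; the function names are illustrative, not taken from any product.

```python
def ticks_per_bar(numerator: int, denominator: int, ppq: int = 480) -> int:
    """Length of one bar in ticks for a numerator/denominator time signature."""
    # A whole note spans 4 quarter notes, so one 1/denominator note
    # lasts ppq * 4 / denominator ticks.
    return numerator * ppq * 4 // denominator


def bar_duration_seconds(numerator: int, denominator: int, bpm: float) -> float:
    """Length of one bar in seconds, assuming bpm counts quarter notes."""
    quarter_notes_per_bar = numerator * 4 / denominator
    return quarter_notes_per_bar * 60.0 / bpm


print(ticks_per_bar(4, 4))              # 1920
print(ticks_per_bar(7, 8))              # 1680
print(bar_duration_seconds(7, 8, 120))  # 1.75
```

A bar of 7/8 is therefore narrower than a bar of 4/4 at the same tempo, which is the width change the interface has to draw.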

The User Interface: Engineering the Modern Playback Progress Bar

For the average consumer, the “music bar” is the horizontal line at the bottom of a screen on Spotify, YouTube, or SoundCloud. While it looks simple, the engineering behind this playback bar—often called a “scrubber”—is a masterclass in UI/UX design and data streaming.

Waveform Visualization and Scrubber Mechanics

A significant leap in music tech occurred when static progress bars were replaced by waveform displays. These waveforms are usually built in the time domain: the software decodes the audio file, splits it into short slices, and records the peak or RMS amplitude of each slice as the height of one visual “bar.” A Fast Fourier Transform (FFT) is needed only when the display shows frequency content, as in a spectrum analyzer or spectrogram. The result lets users “see” the music before they hear it, identifying drops, choruses, and silent bridges. Developing a responsive waveform bar requires balancing visual detail with CPU performance, especially on mobile devices where battery life and rendering speed are paramount.
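One common way to draw such a waveform is to reduce the decoded samples to one peak value per on-screen column. The sketch below does that in plain Python; it is a time-domain reduction, not a spectral analysis. The function name and the assumption that samples are floats in the range -1.0 to 1.0 are made for the example.

```python
def waveform_peaks(samples, num_columns):
    """Reduce audio samples to num_columns peak amplitudes for drawing."""
    if num_columns <= 0:
        raise ValueError("num_columns must be positive")
    total = len(samples)
    peaks = []
    for i in range(num_columns):
        # Integer bounds split the samples into near-equal slices.
        start = i * total // num_columns
        end = (i + 1) * total // num_columns
        chunk = samples[start:end]
        # An empty slice (more columns than samples) is drawn as silence.
        peaks.append(max((abs(s) for s in chunk), default=0.0))
    return peaks


samples = [0.0, 0.5, -1.0, 0.25, 0.1, -0.2, 0.0, 0.0]
print(waveform_peaks(samples, 4))  # [0.5, 1.0, 0.2, 0.0]
```

Because the peaks depend only on the file, an app can compute them once and cache them, which helps with the CPU and battery concerns on mobile devices.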

Dynamic Metadata Integration in Modern Media Players

The contemporary music bar is no longer just a progress indicator; it is a hub for interactive metadata. Modern streaming apps integrate lyrics, artist information, and even “heat maps” into the bar. For instance, YouTube’s progress bar can highlight the “most replayed” parts of a video. This relies on back-end analytics that aggregate playback behavior across very large numbers of viewing sessions. The bar has evolved into a tool for data visualization, linking the listener to the digital assets of the track.
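The idea behind a replay heat map can be illustrated by bucketing logged playback positions into segments of the bar and scaling the counts. This is a hypothetical sketch of the general technique only; it does not describe how YouTube or any other service actually computes its overlay, and the function name and input format are assumptions.

```python
def replay_heatmap(positions, duration, num_segments):
    """Count playback positions (in seconds) per segment, scaled to 0..1."""
    counts = [0] * num_segments
    for pos in positions:
        if 0 <= pos < duration:
            index = int(pos / duration * num_segments)
            # Guard against floating-point rounding at the very end of the track.
            counts[min(index, num_segments - 1)] += 1
    peak = max(counts, default=0)
    return [c / peak if peak else 0.0 for c in counts]


# Five logged positions in a 40-second clip, drawn as four segments.
heat = replay_heatmap([5, 15, 15, 16, 35], duration=40, num_segments=4)
print(heat[1])  # 1.0, the second segment is the most replayed
```

A production system would typically aggregate on the server and smooth the result before drawing it, but the bucketing step is the core of the visualization.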

Hardware Integration: MIDI Controllers and Physical Bar Representation

The concept of the music bar extends beyond the screen and into the physical world of hardware. MIDI controllers and digital consoles use physical indicators to represent the “bar,” providing tactile feedback to performers and engineers.

How DAWs Sync Bars, Beats, and Milliseconds

Hardware-software integration commonly relies on one of two MIDI mechanisms. MIDI Clock is tempo-relative: the sender emits a steady stream of timing pulses at 24 per quarter note, so a receiving device can count its way through beats and bars. MIDI Time Code (MTC) is absolute: it transmits a position in hours, minutes, seconds, and frames, and is used to lock devices to a timeline rather than to a tempo. When a producer uses a drum machine, the device must stay aligned with the “bars” in the software, which usually means following the clock pulses sent from the computer. The technical hurdles here are latency and jitter. High-performance audio and MIDI interfaces are designed to minimize the delay between the software’s internal bar count and the hardware’s physical response, so that the “bar” remains a unified concept across the entire ecosystem.
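The timing of those clock pulses follows directly from the tempo. The sketch below works out the interval between pulses and the number of pulses in a bar, using the 24 pulses per quarter note that MIDI Clock defines; the function names are illustrative.

```python
MIDI_CLOCK_PPQN = 24  # MIDI Clock sends 24 timing pulses per quarter note


def clock_interval_seconds(bpm: float) -> float:
    """Time between two consecutive clock pulses at the given tempo."""
    return 60.0 / (bpm * MIDI_CLOCK_PPQN)


def pulses_per_bar(numerator: int, denominator: int) -> int:
    """Clock pulses in one bar of a numerator/denominator time signature."""
    return numerator * MIDI_CLOCK_PPQN * 4 // denominator


print(clock_interval_seconds(125))  # 0.02, one pulse every 20 ms
print(pulses_per_bar(4, 4))         # 96
print(pulses_per_bar(6, 8))         # 72
```

At 125 BPM a pulse that arrives a few milliseconds late is already a noticeable fraction of the 20 ms interval, which is why jitter matters as much as raw latency.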

Buffer Management and Latency Compensation

When a digital system processes audio, it does so in “buffers,” small chunks of samples. A larger buffer adds latency (buffer size divided by sample rate), so if the software does not account for it, the playhead on screen runs ahead of what the user hears. A buffer that is too small leaves the CPU too little time to fill it, and the resulting underruns are heard as crackles and dropouts. Engineers must tune buffer management so that the visual “playhead” moving across the music bar stays aligned with the audio output. This requires delay compensation algorithms that account for the latency each plugin and hardware component introduces and offset the other signal paths to match.
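Both halves of this can be put into numbers. The sketch below computes the latency one buffer adds and the extra delay each track needs so that all tracks line up with the slowest one. It is a simplified model under stated assumptions: latencies are given in samples, every track reports its own figure, and the track names and function names are made up for the example.

```python
def buffer_latency_ms(buffer_size: int, sample_rate: int) -> float:
    """Latency added by one audio buffer, in milliseconds."""
    return 1000.0 * buffer_size / sample_rate


def compensation_delays(track_latencies):
    """Extra delay (in samples) per track so all tracks align with the slowest."""
    longest = max(track_latencies.values(), default=0)
    return {name: longest - latency for name, latency in track_latencies.items()}


print(buffer_latency_ms(480, 48000))  # 10.0
print(compensation_delays({"drums": 0, "vocals": 64, "bass": 256}))
# {'drums': 256, 'vocals': 192, 'bass': 0}
```

The same idea applies to the display: subtracting the known output latency from the engine’s position gives a playhead that matches what is actually audible.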

The AI Revolution: How Machine Learning Decodes Musical Measures

The most recent frontier for the music bar is Artificial Intelligence. As AI tools like Suno, Udio, and Google’s MusicLM gain popularity, the “bar” has become a vital parameter for machine learning models.

Pattern Recognition in Generative Audio Tools

AI doesn’t “hear” music the way humans do; it identifies patterns in data. To generate a coherent song, a model must capture structure such as a four-bar phrase or an eight-bar chorus. This is achieved through neural networks trained on large collections of music. The music is first converted into a sequence of discrete tokens, and in symbolic (note-based) representations those tokens can mark bar boundaries explicitly; the model then predicts what should come next in the sequence. If the preceding bars of a dance track are high-energy, the model’s prediction for the following bar will tend to continue that pattern, maintaining the rhythmic and melodic flow that a human listener expects.
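For note-based (symbolic) music, one simple way to expose bars to a sequence model is to insert an explicit bar token into the event stream. The sketch below is a toy illustration of that idea; the token names, the input format, and the 1920 ticks per bar are assumptions, and it does not describe the internals of Suno, Udio, MusicLM, or any other product.

```python
def tokenize_by_bar(notes, ticks_per_bar=1920):
    """Turn (start_tick, midi_pitch) pairs into a flat list of tokens."""
    tokens = []
    current_bar = -1
    for start, pitch in sorted(notes):
        bar, offset = divmod(start, ticks_per_bar)
        # Emit one BAR token per bar line crossed, including empty bars.
        while current_bar < bar:
            current_bar += 1
            tokens.append("BAR")
        tokens.append(f"POS_{offset}")
        tokens.append(f"PITCH_{pitch}")
    return tokens


print(tokenize_by_bar([(0, 60), (960, 64), (1920, 67)]))
# ['BAR', 'POS_0', 'PITCH_60', 'POS_960', 'PITCH_64', 'BAR', 'POS_0', 'PITCH_67']
```

With bar tokens in the sequence, “predict the next bar” becomes “predict tokens until the next BAR,” which gives the model a direct handle on phrase length.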

Quantization and the Human Element in Digital Rhythms

One of the most complex tasks in music tech is “humanizing” the bar. While computers excel at perfect mathematical timing, human musicians naturally play slightly ahead of or behind the beat. Modern software can analyze a live recording and align it to the music bar without stripping away its “soul,” for example by moving each note only part of the way toward the grid. More advanced, AI-assisted tools aim to distinguish between a mistake and a deliberate stylistic choice (like “swing”), allowing technology to support human creativity rather than replacing it with robotic perfection.
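The non-AI building blocks of this are partial quantization and swing, and both are plain arithmetic. The sketch below is not the AI-driven analysis mentioned above, only the simpler technique it builds on; the `strength` and `swing` parameters and the function names are assumptions chosen for the example.

```python
def quantize(ticks: float, grid: int, strength: float = 1.0) -> float:
    """Move a note toward the nearest grid line.

    strength 1.0 snaps fully to the grid; 0.0 leaves the note where it was.
    """
    nearest = round(ticks / grid) * grid
    return ticks + strength * (nearest - ticks)


def swung_position(step_index: int, grid: int, swing: float = 0.0) -> float:
    """Position of a grid step with every second step delayed by swing * grid."""
    offset = swing * grid if step_index % 2 == 1 else 0.0
    return step_index * grid + offset


print(quantize(250, 240, strength=1.0))  # 240.0
print(quantize(250, 240, strength=0.5))  # 245.0
print(swung_position(1, 240, swing=0.25))  # 300.0
```

A strength below 1.0 tightens a performance while keeping some of the player’s original push and pull against the beat.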

The Future of the Digital Music Bar

As we move toward more immersive technologies like Spatial Audio and the Metaverse, the definition of a music bar will continue to expand. In a 3D environment, the music bar might not be a line on a screen, but a spatial trigger that interacts with the user’s movement.

The transition of the music bar from a simple musical term to a complex technical framework highlights the incredible progress of the digital age. It is a testament to how software, hardware, and AI have converged to transform our relationship with sound. For the technologist, the music bar is more than just a measure of time; it is the fundamental architecture of the digital symphony, a data-driven pulse that powers the global entertainment industry. Through meticulous UI design, rigorous hardware synchronization, and cutting-edge machine learning, the music bar remains the most vital “metronome” in the world of technology.
