The release of The Last of Us Part II marked a watershed moment in the history of interactive media. While narrative discourse often dominates the conversation surrounding the title, the underlying technological framework that brings the protagonist, Ellie, to life represents a pinnacle of software engineering, motion synthesis, and digital artistry. When we ask “what happens to Ellie” in this sequel, the answer is as much a matter of code and hardware optimization as it is a matter of scriptwriting. From the implementation of motion matching to the groundbreaking advancements in facial performance capture, Ellie’s transformation is a masterclass in how technology can bridge the “uncanny valley” to deliver profound emotional resonance.

Performance Capture and Facial Animation: The Soul in the Machine

The most immediate change to Ellie in the sequel is her visual fidelity. This was not merely an increase in polygon count but a fundamental shift in how character data is captured and processed. To achieve the level of nuance required for a character undergoing extreme psychological trauma, Naughty Dog utilized a highly advanced proprietary performance capture pipeline.

The Meticulous Art of High-Fidelity Performance Capture

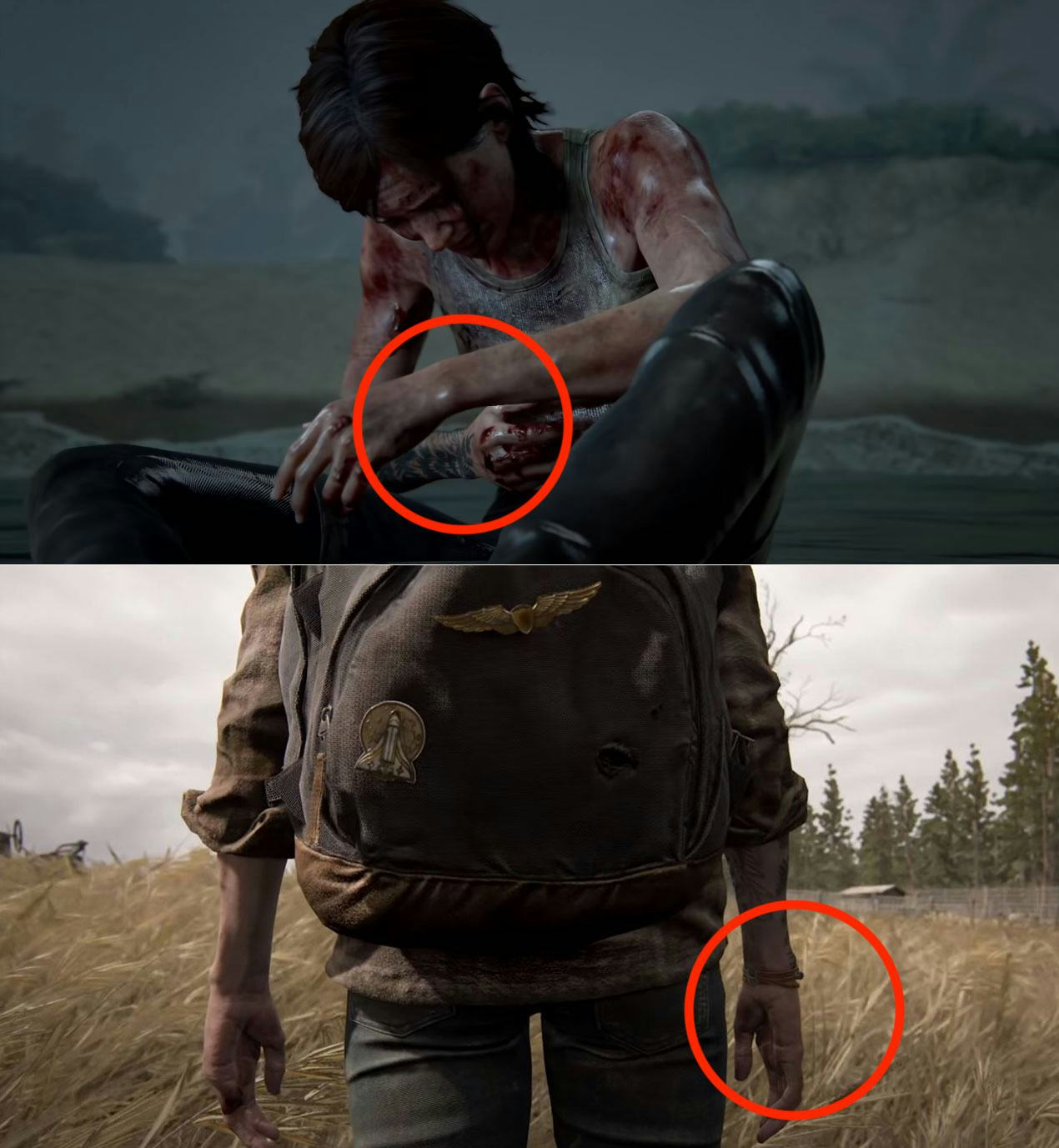

In the original game, body movement was motion-captured, but facial animation was largely hand-keyed by animators working from reference footage. In The Last of Us Part II, the “happening” of Ellie is rooted in a far more direct translation of human emotion. The technology involved high-definition head-mounted camera rigs that tracked a dense set of points on actress Ashley Johnson’s face.

This data was then fed into a facial animation system that accounts for the “internal” workings of the human face—including the way skin slides over muscle and how blood flow changes during moments of exertion or distress. For the first time, developers could simulate “blush” and “pallor,” allowing Ellie to physically redden in anger or turn pale in fear without manual artist intervention. This technical layer ensures that the character’s internal state is always visible to the player, creating a seamless link between the player’s inputs and Ellie’s emotional output.

Proprietary Animation Systems and Micro-Expressions

Beyond the broad strokes of a scream or a sob, what happens to Ellie technically is the introduction of micro-expressions. Naughty Dog’s engine utilized a system that layered small, involuntary movements—eye jitters (saccades), lip quivers, and pupil dilation—onto the primary animation. These features are processed in real-time, reacting to the lighting and the proximity of threats within the game world. By automating these micro-expressions through sophisticated software triggers, the developers ensured that Ellie never feels like a static “game object,” but rather a sentient entity reacting to her digital environment.
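The layering idea described above can be illustrated with a small sketch. It is not Naughty Dog's system; the channel names, weights, and the `layer_pose` helper are all hypothetical, and the only assumption is that a pose is a set of named values between 0 and 1 onto which small offsets are added.

```python
def layer_pose(base_pose, layers):
    """Add weighted offsets from each layer onto the base pose, clamped to 0..1."""
    result = dict(base_pose)  # copy so the primary animation is left untouched
    for weight, offsets in layers:
        for channel, delta in offsets.items():
            result[channel] = result.get(channel, 0.0) + weight * delta
    return {channel: min(1.0, max(0.0, value)) for channel, value in result.items()}


# Hypothetical primary animation for one frame.
base = {"brow_raise": 0.5, "lip_press": 0.9}

# Hypothetical micro-expression layers: (weight, offsets per channel).
layers = [
    (1.0, {"lip_press": 0.3}),       # lip quiver pushes past the limit and is clamped
    (0.5, {"pupil_dilation": 0.4}),  # a channel the base pose does not drive
]

pose = layer_pose(base, layers)
print(pose)
```

Keeping the micro-expressions as separate additive layers means they can be scaled or switched off without re-authoring the primary performance.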

Combat AI and the “Threat Proximity” System: Simulating Human Instinct

In terms of gameplay mechanics, Ellie’s evolution is defined by a complete overhaul of the Artificial Intelligence (AI) and movement systems. The technical goal was to move away from the “tank-like” controls of the previous generation and toward a system that felt agile, desperate, and reactive.

The Impact of Motion Matching on Fluid Movement

One of the most significant technical leaps in The Last of Us Part II is the implementation of “Motion Matching.” In traditional game development, an animator creates a “run” animation, a “turn” animation, and a “stop” animation, and the engine blends them together. This often results in “foot sliding” or robotic transitions.

Motion Matching works differently: the developers recorded a massive library of movements (limping, crouching, squeezing through gaps, lunging) and stored them in a searchable database. The game engine then constantly “looks ahead” at the player’s controller input, predicts where the character should be heading, and picks the database frame whose pose and upcoming trajectory most closely match that prediction. For Ellie, this means her gait can change based on the slope of the terrain, her fatigue level, and her proximity to cover. What “happens” to her movement is a transition from pre-baked cycles to a fluid, data-driven simulation of human locomotion.
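To make the database search concrete, here is a minimal sketch of the core lookup in motion matching: a weighted nearest-neighbour search over per-frame features. The four-value feature layout, the weights, and the tiny database are assumptions made for illustration; a production system would use far richer features and an accelerated search.

```python
def feature_cost(candidate, query, weights):
    """Weighted squared distance between two feature vectors."""
    return sum(w * (c - q) ** 2 for c, q, w in zip(candidate, query, weights))


def best_frame(database, query, weights):
    """Return the index of the database frame whose features best match the query."""
    return min(range(len(database)),
               key=lambda i: feature_cost(database[i], query, weights))


# Hypothetical features per frame:
# (current speed, current turn rate, predicted speed, predicted turn rate)
database = [
    (0.0, 0.0, 0.0, 0.0),  # 0: idle
    (1.5, 0.0, 1.5, 0.0),  # 1: walking straight
    (4.0, 0.0, 4.0, 0.0),  # 2: running straight
    (4.0, 0.0, 1.0, 0.0),  # 3: running, about to stop
    (1.5, 0.0, 1.5, 0.8),  # 4: walking, about to turn
]

# The predicted (future) features are weighted more heavily than the current ones.
weights = (1.0, 1.0, 2.0, 2.0)

# The player is running and has just released the stick, so the predicted speed drops.
query = (3.8, 0.0, 0.9, 0.0)
print(best_frame(database, query, weights))
```

In this sketch the query selects the "running, about to stop" frame because its predicted speed is closest to what the input implies, which is the behaviour that avoids the abrupt blends of a hand-built state machine.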

Sophisticated NPC Interactions and Stealth Tech

The tech behind Ellie’s interaction with the world also saw a massive upgrade through the “Threat Proximity” system. This AI framework allows Ellie to acknowledge enemies not just as targets, but as physical presences. If a bullet passes near her head, the system triggers a specific “near-miss” animation and an acoustic response.

Furthermore, the stealth mechanics were rewritten to include “prone” states and “neural noise” in the AI detection. This means enemies (and Ellie) have variable levels of perception based on foliage density, light levels, and even the sound of their own footsteps. This technical complexity forces the player to inhabit Ellie’s vulnerability, making the “survival” aspect of her character a product of complex mathematical probability and environmental scanning.
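As a rough illustration of perception that depends on foliage, light, and sound, the sketch below combines those inputs into a single detection chance. The formula, the `detection_chance` name, and the 30-unit range are assumptions chosen for clarity, not the game's actual detection model.

```python
def detection_chance(distance, light, foliage, noise, max_range=30.0):
    """Return a 0..1 chance that an observer notices a target.

    light, foliage, and noise are each expected in the range 0..1.
    """
    if distance >= max_range:
        return 0.0
    proximity = 1.0 - distance / max_range  # closer targets are easier to notice
    visibility = light * (1.0 - foliage)    # darkness and foliage both hide the target
    awareness = max(visibility, noise)      # the observer reacts to sight or sound
    return max(0.0, min(1.0, proximity * awareness))


# Hypothetical situations at the same distance from an enemy.
standing_in_open = detection_chance(distance=15.0, light=1.0, foliage=0.0, noise=0.2)
prone_in_grass = detection_chance(distance=15.0, light=1.0, foliage=0.8, noise=0.1)
print(standing_in_open, prone_in_grass)
```

Taking the maximum of the visual and audible terms means a well-hidden character can still give themselves away by making noise, which matches the trade-off the paragraph describes.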

Environmental Interactivity and Hardware Optimization

What happens to Ellie is also a product of the world she inhabits. The technical achievement of The Last of Us Part II lies in its ability to push the aging PlayStation 4 hardware to its absolute limit, utilizing every cycle of the GPU to render a world that feels tactile and reactive.

Pushing the Limits of the PlayStation 4 Hardware

The optimization required to run Ellie’s high-detail model alongside complex environments was immense. Naughty Dog employed “asynchronous compute” and “tiled resources” to manage memory more efficiently. This allowed for larger, more open levels—such as the downtown Seattle sequence—without the need for visible loading screens. The technical evolution here is the “streaming” of assets; as Ellie moves through the world, the engine is constantly decompressing data in the background, ensuring that her journey feels like a continuous, unbroken shot.
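The streaming idea can be sketched as a bookkeeping problem: keep the chunks of the world near the player resident, and work out which ones to load or evict as she moves. The square grid of chunks, the radius, and the `update_resident_chunks` helper are hypothetical simplifications; the sketch ignores decompression, priorities, and memory budgets.

```python
def update_resident_chunks(resident, player_chunk, radius):
    """Return (wanted, to_load, to_evict) for a player standing in player_chunk."""
    px, py = player_chunk
    wanted = {
        (px + dx, py + dy)
        for dx in range(-radius, radius + 1)
        for dy in range(-radius, radius + 1)
    }
    to_load = wanted - resident   # chunks that must be streamed in
    to_evict = resident - wanted  # chunks that can be released
    return wanted, to_load, to_evict


# Hypothetical walk: the player starts in chunk (1, 1) and moves two chunks east.
resident, to_load, to_evict = update_resident_chunks(set(), (1, 1), radius=1)
print(len(to_load), len(to_evict))

resident, to_load, to_evict = update_resident_chunks(resident, (3, 1), radius=1)
print(len(to_load), len(to_evict))
```

In a real engine the load requests would be issued in the background ahead of the player's arrival so that the new data is ready before it becomes visible.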

Physically Based Rendering and Material Interaction

The “tactile” feel of Ellie’s journey is largely due to Physically Based Rendering (PBR). This technique models how light interacts with different materials using physically plausible rules rather than hand-tuned lighting tricks. Whether it is the way light filters through Ellie’s ears (subsurface scattering) or how mud clings to her clothes and dries over time, the tech ensures a constant state of environmental “wear and tear.”
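Two building blocks that appear in many PBR shading models are Lambertian diffuse reflection and Schlick's approximation of Fresnel reflectance. The sketch below shows both for a single colour channel. It is a generic illustration of the technique, not the shading model used in the game, and the sample values are assumptions.

```python
import math


def lambert_diffuse(albedo, n_dot_l):
    """Diffuse reflection: proportional to the cosine between normal and light."""
    return albedo / math.pi * max(0.0, n_dot_l)


def schlick_fresnel(cos_theta, f0):
    """Schlick's approximation: reflectance rises toward 1 at grazing angles."""
    return f0 + (1.0 - f0) * (1.0 - cos_theta) ** 5


# f0 = 0.04 is a commonly used base reflectance for non-metallic surfaces.
head_on = schlick_fresnel(cos_theta=1.0, f0=0.04)
grazing = schlick_fresnel(cos_theta=0.1, f0=0.04)
print(head_on, grazing)

# A surface facing away from the light receives no diffuse contribution.
print(lambert_diffuse(albedo=0.8, n_dot_l=-0.5))
```

The same surface reflects far more light when viewed at a shallow angle than head-on, which is one reason wet or smooth materials look convincing under PBR without per-scene tweaking.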

The physics engine also manages the “rope tech” and “glass shattering” systems, which were touted as some of the most advanced of the console generation. When Ellie breaks a window or throws a rope, the physics calculations are performed with high precision, allowing for emergent gameplay moments that aren’t scripted, but are instead a result of the robust physics software.

Accessibility as a Technical Milestone

Perhaps the most important thing that “happened” to Ellie in the sequel was her democratization. Naughty Dog transformed the game into one of the most accessible pieces of software ever created. From a tech perspective, this involved building a massive suite of features that could overlay and modify the core engine.

Designing for Universal Playability

The technical architecture of The Last of Us Part II includes over 60 accessibility settings. This isn’t just a menu of options; it is a deep integration into the game’s code. For visually impaired players, the “High Contrast Mode” strips away textures and applies solid shaders to the world, highlighting Ellie and her enemies in distinct colors. This required a fundamental redesign of the rendering pipeline to allow for a simplified, secondary visual output that doesn’t sacrifice performance.
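Conceptually, a high-contrast pass replaces each object's normal material with a flat colour chosen by category. The sketch below shows that mapping in isolation; the category names, RGB values, and the `flatten_scene` helper are hypothetical, and a real renderer would apply the substitution on the GPU rather than over a Python list.

```python
# Hypothetical category-to-colour table for the simplified output.
HIGH_CONTRAST_COLORS = {
    "player": (0, 0, 255),
    "ally": (0, 0, 255),
    "enemy": (255, 0, 0),
    "item": (255, 255, 0),
}
BACKGROUND = (128, 128, 128)  # everything else is drawn in a muted flat tone


def high_contrast_color(category):
    """Return the flat colour for a category, falling back to the background tone."""
    return HIGH_CONTRAST_COLORS.get(category, BACKGROUND)


def flatten_scene(objects):
    """Map (name, category) pairs to (name, flat colour) pairs."""
    return [(name, high_contrast_color(category)) for name, category in objects]


scene = [("Ellie", "player"), ("Runner", "enemy"), ("Brick", "item"), ("Wall", "environment")]
for name, color in flatten_scene(scene):
    print(name, color)
```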

Audio Cues and Visual Aids as Software Innovations

For players with visual, hearing, or motor impairments, the developers implemented text-to-speech support and “combat accessibility” toggles. The engine can translate on-screen text into synthesized audio in real time, so menus and prompts can be read aloud as they appear. Additionally, the “navigation assistance” feature uses the game’s pathfinding AI (originally designed for NPCs) to guide the player toward the next objective. By repurposing NPC technology for player accessibility, Naughty Dog showed that technical innovation can be used to make complex narrative experiences available to a much wider audience.
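The idea of reusing pathfinding to guide the player can be sketched with a breadth-first search on a small grid: find the shortest route to the objective, then report only the direction of the first step. The grid representation and the `shortest_path` and `guidance_direction` helpers are assumptions for illustration; the game's own navigation data is not described in this article.

```python
from collections import deque


def shortest_path(grid, start, goal):
    """Breadth-first search on a grid where 0 is walkable and 1 is blocked."""
    rows, cols = len(grid), len(grid[0])
    parents = {start: None}
    queue = deque([start])
    while queue:
        cell = queue.popleft()
        if cell == goal:
            path = []
            while cell is not None:  # walk the parent links back to the start
                path.append(cell)
                cell = parents[cell]
            return path[::-1]
        r, c = cell
        for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            inside = 0 <= nr < rows and 0 <= nc < cols
            if inside and grid[nr][nc] == 0 and (nr, nc) not in parents:
                parents[(nr, nc)] = cell
                queue.append((nr, nc))
    return None  # the goal cannot be reached


def guidance_direction(grid, start, goal):
    """Return the (row, col) step the player should take next, or None."""
    path = shortest_path(grid, start, goal)
    if path is None or len(path) < 2:
        return None
    (r0, c0), (r1, c1) = path[0], path[1]
    return (r1 - r0, c1 - c0)


# Hypothetical level: a wall forces a detour around the right-hand side.
grid = [
    [0, 0, 0],
    [1, 1, 0],
    [0, 0, 0],
]
print(guidance_direction(grid, start=(0, 0), goal=(2, 0)))
```

Only the first step is surfaced to the player, which mirrors how a guidance prompt points the way without revealing the whole route.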

Conclusion: The Digital Legacy of a Character

In analyzing what happens to Ellie in The Last of Us Part II, we find a character who has been elevated by the most sophisticated technology available in the modern gaming era. She is no longer just a collection of pixels and voice lines; she is a complex intersection of motion matching, PBR materials, advanced AI, and industry-leading accessibility software.

The technical journey of Ellie reflects a broader trend in the tech industry: the move toward “organic” digital experiences. By leveraging hardware to its breaking point and developing proprietary animation systems, Naughty Dog has set a new benchmark for what is possible in character-driven software. As we move further into the current generation of hardware, the innovations pioneered through Ellie’s development will undoubtedly serve as the blueprint for the future of digital storytelling and human-centric UI design. Through the lens of technology, Ellie’s story is not just one of survival, but one of unprecedented technical achievement.
