The rapid integration of Large Language Models (LLMs) into our daily workflows has transformed how we approach coding, writing, and data analysis. At the forefront of this revolution is ChatGPT, a tool so versatile that it has become synonymous with generative AI. However, with great power comes a complex framework of governance. OpenAI, the architect of ChatGPT, maintains a rigorous set of Usage Policies and Terms of Service designed to ensure the safe, legal, and ethical deployment of its technology.

For the casual user, a policy violation might seem like a distant technicality. For developers, researchers, and tech-focused professionals, however, understanding the nuances of these boundaries is critical. Violating these policies doesn’t just result in a polite error message; it can lead to tiered enforcement actions ranging from content redaction to permanent platform bans. This article explores the technical and systemic consequences of crossing the line with ChatGPT.

Understanding the OpenAI Usage Policy Framework

OpenAI’s policies are not arbitrary; they are a synthesis of legal requirements, safety research, and ethical considerations. These guidelines are designed to prevent the weaponization of AI while fostering a creative environment. To understand the consequences of a violation, one must first understand the categories of restricted behavior.

Prohibited Content Categories

The most common violations occur within the content generation layer. OpenAI explicitly prohibits the use of its models to generate “Disallowed Content.” This includes, but is not limited to, hate speech, harassment, sexually explicit material, and content that promotes self-harm or violence. From a technical perspective, these categories are enforced in part by separate classifier models that scan inputs and outputs in real time. OpenAI exposes one such classifier to developers as its Moderation endpoint.
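To make the input-and-output scanning concrete, here is a minimal Python sketch of the pattern, assuming a classifier that returns the set of policy categories a piece of text violates. The names `screened_completion`, `generate`, `classify`, and `ScreenResult` are hypothetical; this illustrates the general technique, not OpenAI’s internal implementation.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical classifier: returns the set of policy categories a text violates.
Classifier = Callable[[str], set[str]]

REFUSAL = "I can't help with that request."


@dataclass
class ScreenResult:
    allowed: bool
    stage: str  # "input", "output", or "none"
    categories: set[str]
    text: str


def screened_completion(prompt: str,
                        generate: Callable[[str], str],
                        classify: Classifier) -> ScreenResult:
    """Screen the prompt, generate a reply, then screen the reply too."""
    # Input check: a flagged prompt never reaches the model.
    hits = classify(prompt)
    if hits:
        return ScreenResult(False, "input", hits, REFUSAL)
    # Output check: the model's reply is scanned before it is shown.
    reply = generate(prompt)
    hits = classify(reply)
    if hits:
        return ScreenResult(False, "output", hits, REFUSAL)
    return ScreenResult(True, "none", set(), reply)
```

For a quick experiment, a toy keyword stub such as `lambda text: {"violence"} if "attack" in text.lower() else set()` can stand in for `classify`; a real deployment would call an actual moderation model instead.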

Deceptive and Fraudulent Activities

Beyond the nature of the text itself, the intent of the usage matters. Using ChatGPT to create malware, orchestrate phishing campaigns, or generate large-scale misinformation for political influence is a severe breach of policy. Tech-savvy users who try to bypass these filters with “jailbreaking” prompts (essentially social engineering the model into ignoring its safety training) risk being flagged for systematic policy abuse.

Regulated Industries and High-Stakes Decisions

OpenAI restricts the use of ChatGPT in specific sectors where the “hallucination” of facts could lead to physical or financial harm. This includes providing tailored legal, medical, or financial advice without a qualified human in the loop. While the AI can discuss general concepts in these fields, using it to diagnose a disease or draft a binding legal contract for a client without professional review can trigger policy warnings, because the model’s output is not reliable enough to act on without that oversight.

The Enforcement Hierarchy: From Flags to Bans

OpenAI does not employ a “one size fits all” punishment. Instead, it uses a tiered enforcement strategy that scales with the severity and frequency of the violation.

Immediate Content Redaction and Warning Labels

The first line of enforcement is the automated “Refusal.” When a user submits a prompt that triggers a safety filter, ChatGPT responds with a short refusal, such as “I can’t help with that request”; the exact wording varies. In more subtle cases, the system may still generate the response but flag it with a red or orange warning label in the UI, signaling that the content may violate policies. At this stage, the violation is logged, but the account usually remains in good standing.

Temporary Account Suspension

Repeated jailbreak attempts can escalate the situation. If the automated systems detect a pattern of a user trying to force the model into prohibited territory (such as generating code for a cyberattack), OpenAI may issue a temporary suspension. During this period, the user is locked out of their account, including access to Plus features and custom GPTs. This serves as a “cooling-off” period and a final warning.

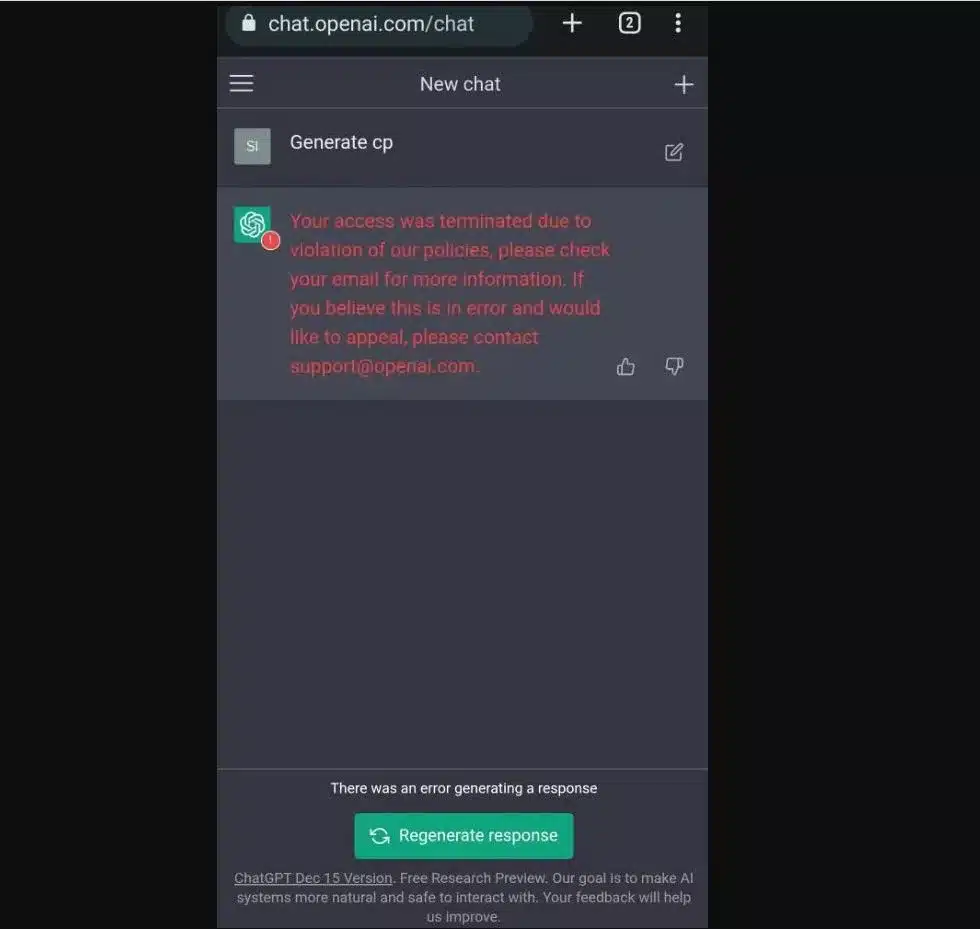

Permanent Termination and API Revocation

The most severe consequence is the permanent termination of the OpenAI account. This is usually reserved for egregious violations, such as using the platform for illegal acts, large-scale spamming, or violating the “Service Terms” regarding data scraping. For developers, this is particularly devastating. A permanent ban often extends to the API (Application Programming Interface) key. If a developer has built an entire software ecosystem around the GPT API and their access is revoked, their application will immediately cease to function, potentially leading to catastrophic business failure.
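The escalation logic described in this section, where responses scale with severity and frequency, can be modeled as a simple function. The sketch below is purely illustrative: the violation types, severity weights, and count thresholds are hypothetical, because OpenAI does not publish how its enforcement decisions are actually made.

```python
from enum import IntEnum


class Action(IntEnum):
    NONE = 0
    REFUSE = 1      # block the single request
    WARN = 2        # warn the account holder
    SUSPEND = 3     # temporary lockout
    TERMINATE = 4   # permanent ban


# Hypothetical severity weights; OpenAI does not publish real ones.
SEVERITY = {
    "borderline": 1,
    "jailbreak_attempt": 2,
    "malware": 3,
    "illegal_activity": 4,
}


def enforcement_action(history: list[str]) -> Action:
    """Map an account's logged violation types to an escalation level."""
    if not history:
        return Action.NONE
    worst = max(SEVERITY[v] for v in history)
    count = len(history)
    if worst >= 4 or count >= 10:
        return Action.TERMINATE   # egregious or persistent abuse
    if worst >= 3 or count >= 5:
        return Action.SUSPEND     # severe or repeated violations
    if count >= 2:
        return Action.WARN        # a pattern is forming
    return Action.REFUSE          # first, minor offense: refusal only
```

Under these made-up weights, a single borderline request maps to `Action.REFUSE`, while ten logged violations of any kind map to `Action.TERMINATE`.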

Technical Detection Mechanisms: How OpenAI Identifies Violations

OpenAI utilizes a sophisticated, multi-layered tech stack to monitor compliance. Understanding these mechanisms helps users realize that policy enforcement is not just a manual review process but an automated, algorithmic one.

The Moderation API

OpenAI provides a dedicated “Moderation API,” free for API users, that classifies text into categories (hate, harassment, self-harm, sexual, violence, and others) and returns a score between 0 and 1 for each category along with an overall “flagged” verdict. OpenAI has not published exactly how ChatGPT’s own screening is wired, but a classifier of this kind can trigger a refusal automatically whenever a score exceeds a threshold. Because the check completes in a fraction of a second, enforcement can happen before a response is shown rather than after the fact.
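The same thresholding idea can be applied on the client side. The function below reads a response shaped like the Moderation API’s JSON body (a `results` list whose first entry holds a `categories` map of booleans and a `category_scores` map of scores) and adds stricter, application-specific thresholds on top of the model’s own verdicts. The threshold values and the `violations` helper are assumptions for illustration, not OpenAI defaults; if you use an SDK, convert its response object to this dictionary shape first.

```python
# Hypothetical application-specific thresholds; tune for your own use case.
CUSTOM_THRESHOLDS = {"harassment": 0.4, "self-harm": 0.2, "violence": 0.5}
DEFAULT_THRESHOLD = 0.5


def violations(moderation_response: dict,
               thresholds: dict[str, float] = CUSTOM_THRESHOLDS,
               default: float = DEFAULT_THRESHOLD) -> list[str]:
    """Return the sorted categories that are flagged or exceed our thresholds."""
    result = moderation_response["results"][0]
    # Start with the categories the moderation model itself flagged.
    hits = {name for name, flagged in result["categories"].items() if flagged}
    # Add any category whose score crosses our own threshold.
    for name, score in result["category_scores"].items():
        if score >= thresholds.get(name, default):
            hits.add(name)
    return sorted(hits)
```

Keeping your own thresholds separate from the model’s `flagged` verdict lets you be more conservative in sensitive categories without discarding the provider’s judgment.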

Human-in-the-Loop (HITL) and RLHF

While automation handles the bulk of the work, human feedback is the foundation. Through Reinforcement Learning from Human Feedback (RLHF), OpenAI trains the model to understand the nuances of policy. When users click the “thumbs down” icon on a response or report a violation, that feedback may be used to refine the model’s refusal boundaries. OpenAI’s safety staff may also review flagged activity to identify sophisticated patterns of abuse that automated filters might miss.

Behavioral Heuristics and Metadata Analysis

OpenAI also monitors “meta-behaviors.” This includes tracking the rate of requests (to prevent DDoS-like behavior), the geographic origin of the traffic, and the consistency of the user’s prompt history. If a single account suddenly starts generating thousands of prompts related to political deepfakes from a suspicious IP address, the system can treat this as a “systemic risk” rather than a singular content violation, which may lead to a swift account lockdown.
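Request-rate monitoring of this kind is commonly implemented with a sliding window. The sketch below flags an account that exceeds a request limit within a time window. The class name, limit, and window size are hypothetical, since OpenAI does not disclose its actual heuristics, and a real system would combine many such signals.

```python
from collections import deque


class BurstDetector:
    """Flag an account whose request count in a sliding window exceeds a limit."""

    def __init__(self, max_requests: int, window_seconds: float):
        self.max_requests = max_requests
        self.window = window_seconds
        self.timestamps: deque[float] = deque()

    def record(self, t: float) -> bool:
        """Log a request at time t (seconds); return True if over the limit."""
        self.timestamps.append(t)
        # Evict requests that are older than the window.
        while self.timestamps and self.timestamps[0] <= t - self.window:
            self.timestamps.popleft()
        return len(self.timestamps) > self.max_requests
```

In practice you would keep one detector per account (for example, in a dict keyed by account ID) and feed it `time.monotonic()` values.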

Implications for Developers and Enterprise Users

For those using ChatGPT at an enterprise or developer level, the stakes of a policy violation are significantly higher. Software built on these models depends on stable access, so a single violation can disrupt every product and customer downstream of the affected account.

The Risk of Platform Dependency

Many modern startups are “wrappers” or sophisticated interfaces built on top of OpenAI’s models. If a developer fails to implement their own moderation layer and allows their end-users to generate prohibited content through their API key, the developer is held responsible by OpenAI. This “cascading liability” means that a developer’s entire business is only as secure as their most malicious user.
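One way a wrapper developer can limit this exposure is to track flagged requests per end user and cut off repeat offenders before they endanger the shared API key. This is a minimal sketch under stated assumptions: `EndUserGate`, the strike limit, and the secret key are hypothetical, and user IDs are pseudonymized with an HMAC so raw identifiers never need to be stored.

```python
import hashlib
import hmac
from collections import defaultdict


class EndUserGate:
    """Block individual end users after repeated flagged requests."""

    def __init__(self, secret: bytes, max_strikes: int = 3):
        self.secret = secret
        self.max_strikes = max_strikes
        self.strikes: defaultdict[str, int] = defaultdict(int)

    def pseudonymize(self, user_id: str) -> str:
        # Keyed one-way hash: stable per user, without storing the raw ID.
        return hmac.new(self.secret, user_id.encode("utf-8"), hashlib.sha256).hexdigest()

    def is_blocked(self, user_id: str) -> bool:
        return self.strikes.get(self.pseudonymize(user_id), 0) >= self.max_strikes

    def record_flag(self, user_id: str) -> None:
        """Call this whenever moderation flags a request from user_id."""
        self.strikes[self.pseudonymize(user_id)] += 1
```

A request handler would check `is_blocked` before forwarding a prompt upstream and call `record_flag` whenever its own moderation layer rejects one.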

Loss of Custom Models and Fine-Tuning Data

OpenAI allows users to create “Custom GPTs” in ChatGPT and to fine-tune models on specific datasets through the API. A policy violation that leads to an account ban results in the immediate loss of all proprietary configurations, custom instructions, and access to fine-tuned models (the fine-tuned weights are hosted by OpenAI and cannot be downloaded). In the tech world, where data is an asset, this is equivalent to a total loss of digital property. Recovering this work after a ban can be very difficult, because OpenAI’s support channels prioritize safety over data recovery.

Tiered API Access and Reputation

OpenAI uses a “Usage Tier” system for its API, in which accounts move up tiers based on how much they have spent and how long they have been paying customers. Higher tiers offer higher rate limits and, at times, earlier access to new models (o1, for example, was initially available through the API only to the highest tier). Policy violations can result in reduced limits or restricted access, effectively throttling an application’s performance. Furthermore, a history of violations may make it harder to secure enterprise-grade SLAs (Service Level Agreements) in the future.
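When rate limits tighten, an application starts receiving HTTP 429 (“Too Many Requests”) errors more often. OpenAI’s rate-limit guidance recommends retrying with exponential backoff, and the sketch below shows that technique with full jitter. `RateLimited` is a hypothetical stand-in for whatever exception your HTTP client or SDK raises on a 429, and the delay parameters are illustrative defaults.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class RateLimited(Exception):
    """Hypothetical stand-in for your client's HTTP 429 exception."""


def with_backoff(call: Callable[[], T],
                 max_retries: int = 5,
                 base_delay: float = 1.0,
                 max_delay: float = 30.0,
                 sleep: Callable[[float], None] = time.sleep) -> T:
    """Retry `call` on RateLimited, waiting longer after each failure."""
    for attempt in range(max_retries + 1):
        try:
            return call()
        except RateLimited:
            if attempt == max_retries:
                raise  # out of retries: surface the error to the caller
            # Full jitter: wait a random time up to the capped exponential delay.
            delay = min(max_delay, base_delay * 2 ** attempt)
            sleep(random.uniform(0, delay))
    raise AssertionError("unreachable")
```

Injecting `sleep` keeps the function testable. Backoff smooths over bursts, but it cannot compensate for a permanently lowered limit.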

Best Practices for Maintaining a Compliant Tech Environment

Staying within the lines of OpenAI’s policy is not just about avoiding “bad words”; it’s about architecting a responsible interaction model.

Implementing Local Moderation Layers

For developers, the best defense is a “Defense-in-Depth” strategy. Before sending a user’s prompt to the OpenAI API, run it through a local, open-weight moderation model (such as Meta’s Llama Guard). By filtering out prohibited content on your own servers, you greatly reduce the chance that a policy-violating prompt ever reaches OpenAI, which helps protect your API key and account standing.
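The sketch below illustrates that first layer, assuming a locally hosted guard model that replies with `safe`, or with `unsafe` followed by a line of comma-separated category codes (the format described on Llama Guard’s model cards). `local_guard` and `call_openai` are hypothetical callables you would wire to your own model server and API client. Unparseable verdicts are treated as unsafe so the layer fails closed.

```python
from typing import Callable


def parse_guard_verdict(raw: str) -> tuple[bool, list[str]]:
    """Parse 'safe' or 'unsafe\\nS1,S10' (assumed Llama Guard-style output)."""
    lines = [ln.strip() for ln in raw.strip().splitlines() if ln.strip()]
    if not lines or lines[0].lower() not in {"safe", "unsafe"}:
        return False, ["unparseable"]  # fail closed on unexpected output
    if lines[0].lower() == "safe":
        return True, []
    codes = [c.strip() for c in lines[1].split(",")] if len(lines) > 1 else []
    return False, codes


def guarded_call(prompt: str,
                 local_guard: Callable[[str], str],
                 call_openai: Callable[[str], str]) -> str:
    """Only forward prompts that the local guard model judges safe."""
    is_safe, codes = parse_guard_verdict(local_guard(prompt))
    if not is_safe:
        # Rejected on our own servers; the prompt is never sent upstream.
        return f"Request blocked by local policy ({', '.join(codes) or 'unspecified'})."
    return call_openai(prompt)
```

Failing closed costs some false positives, but it keeps a malformed guard response from silently letting a prompt through.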

Transparent Use Cases and Disclosures

OpenAI’s policies require users to be transparent about the use of AI. If you are deploying a chatbot for customer service, it should be clearly identified as an AI. In the tech community, adhering to these disclosure requirements is a sign of professional maturity and significantly reduces the likelihood of being flagged for deceptive practices.

Regular Policy Audits

The AI landscape moves fast, and OpenAI updates its Usage Policies frequently. What was a “gray area” six months ago might be strictly prohibited today. Tech leads should conduct quarterly reviews of their AI integration to ensure that their use cases still align with the current documentation. This proactive approach ensures that your technology stack remains compliant and your access to cutting-edge AI tools remains uninterrupted.

In conclusion, violating ChatGPT’s policy is a high-stakes gamble that can result in the loss of tools, data, and access to some of the most advanced AI infrastructure available. By understanding the technical enforcement mechanisms and the specific categories of prohibited behavior, users and developers can harness the power of LLMs while maintaining a secure and professional digital footprint.

aViewFromTheCave is a participant in the Amazon Services LLC Associates Program, an affiliate advertising program designed to provide a means for sites to earn advertising fees by advertising and linking to Amazon.com. Amazon, the Amazon logo, AmazonSupply, and the AmazonSupply logo are trademarks of Amazon.com, Inc. or its affiliates. As an Amazon Associate we earn affiliate commissions from qualifying purchases.