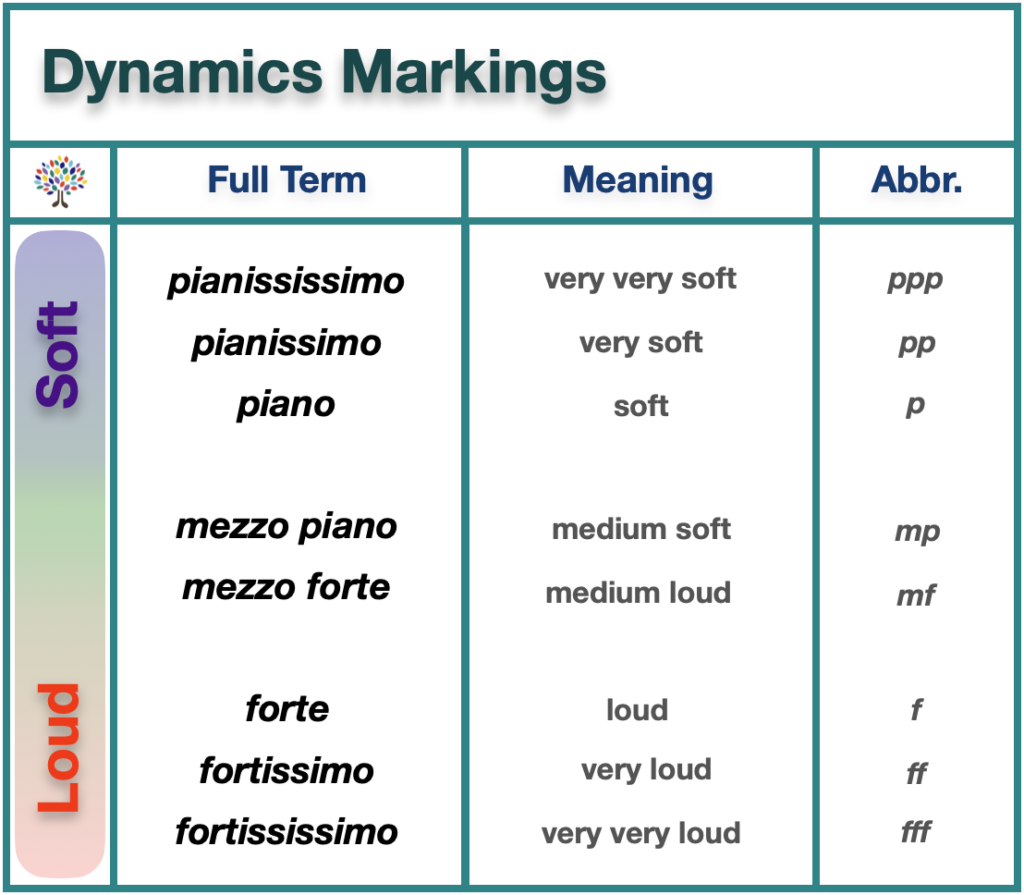

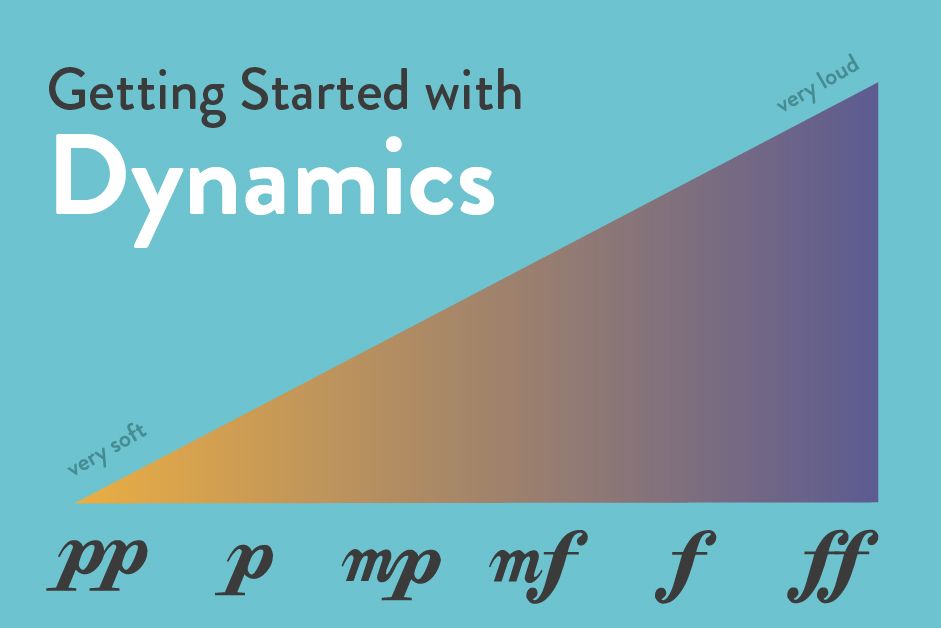

In the traditional world of music theory, the notation “mf” stands for mezzo-forte, an Italian term meaning “moderately loud.” For centuries, this instruction was a subjective guide for performers, etched in ink on paper. However, as music has migrated from the manuscript to the digital workstation, the meaning of “mf” has undergone a technological transformation. In the context of modern music technology, software engineering, and AI-driven composition, “mf” is no longer just a stylistic suggestion; it is a critical data point that dictates how algorithms, digital audio workstations (DAWs), and virtual instruments interact to create human-like sound.

Understanding what “mf” means in music today requires a deep dive into the intersection of acoustic physics and digital signal processing. As we transition into an era dominated by high-fidelity streaming and AI-generated scores, the technical representation of dynamics has become the backbone of modern audio production.

1. The Digitization of Expression: Translating “mf” into MIDI Data

When a composer writes “mf” in a digital environment, the computer must translate that instruction into a language it understands: binary code and MIDI (Musical Instrument Digital Interface) parameters. This translation is the foundation of all virtual orchestration and digital music production.

MIDI Velocity and the 127-Step Scale

In music technology, the dynamic “mf” is most commonly represented through MIDI velocity. When you press a key on a MIDI controller or program a note in a DAW like Ableton Live or Logic Pro, the software assigns the note a velocity between 0 and 127. In practice a sounding note uses 1 to 127, because a Note On message with velocity 0 is conventionally treated as a Note Off.

There is no universal standard that maps dynamic markings to velocities, but “mf” typically occupies the middle-upper range, usually falling between 64 and 80. Unlike a human pianist, who relies on muscle memory to achieve a moderately loud tone, an instrument developer must calibrate a digital instrument so that a velocity of, say, 75 triggers a sample layer that mimics the harmonic richness of a mezzo-forte performance.
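As a minimal sketch of this mapping, the table below assigns a velocity range to each dynamic marking. Only the 64 to 80 range for “mf” comes from the text above; the other ranges and the names `DYNAMIC_VELOCITY_RANGES`, `velocity_for`, and `dynamic_for` are assumptions made for illustration, not a standard.

```python
# Hypothetical dynamic-to-velocity table. Only the "mf" range (64-80)
# comes from the article; every other range is illustrative.
DYNAMIC_VELOCITY_RANGES = {
    "pp": (16, 31),
    "p": (32, 47),
    "mp": (48, 63),
    "mf": (64, 80),
    "f": (81, 103),
    "ff": (104, 127),
}


def velocity_for(dynamic: str) -> int:
    """Return the midpoint velocity of the range assigned to a marking."""
    low, high = DYNAMIC_VELOCITY_RANGES[dynamic]
    return (low + high) // 2


def dynamic_for(velocity: int) -> str:
    """Return the marking whose range contains the given velocity."""
    if not 1 <= velocity <= 127:
        raise ValueError("note-on velocity must be in 1..127")
    for name, (low, high) in DYNAMIC_VELOCITY_RANGES.items():
        if low <= velocity <= high:
            return name
    return "ppp"  # anything below the quietest listed range


print(velocity_for("mf"))  # midpoint of 64..80
print(dynamic_for(75))
```

A real DAW or notation program may use different split points, and many let the user edit them.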

Multisampled Libraries and Dynamic Layering

Advanced software instruments, such as those found in Kontakt or EastWest libraries, use “dynamic layering” to give “mf” its character. Recording engineers capture the same note played at several intensities. When a producer enters an “mf” note, the software doesn’t just turn up the volume; it triggers a recording of the instrument actually being played at a moderate intensity. This matters because the timbre (the “color” of the sound) changes depending on how hard an instrument is struck. Sampling technology lets developers map these subtle acoustic shifts to specific velocity ranges.
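The layer lookup described above can be sketched as a search over velocity split points. The file names and split points here are hypothetical and do not come from any real sample library.

```python
from bisect import bisect_left

# Hypothetical layer map: (highest velocity served by the layer, sample file).
LAYERS = [
    (47, "piano_C4_soft.wav"),
    (63, "piano_C4_mp.wav"),
    (80, "piano_C4_mf.wav"),
    (127, "piano_C4_loud.wav"),
]
UPPER_BOUNDS = [upper for upper, _ in LAYERS]


def sample_for(velocity: int) -> str:
    """Pick the recording whose velocity range contains the incoming note."""
    if not 1 <= velocity <= 127:
        raise ValueError("note-on velocity must be in 1..127")
    # bisect_left finds the first layer whose upper bound is >= velocity.
    index = bisect_left(UPPER_BOUNDS, velocity)
    return LAYERS[index][1]


print(sample_for(75))
```

Commercial samplers typically add crossfades between neighboring layers and several alternate takes per layer, which this sketch leaves out.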

2. AI and the Evolution of Dynamic Humanization

One of the greatest challenges in music technology is making digital sequences sound “human.” Static dynamics are a giveaway of “robotic” music. Today, Artificial Intelligence and Machine Learning are being used to redefine how “mf” and other dynamic markings are applied in automated composition.

Predictive Modeling for Expressive Performance

AI-driven tools, such as those built on Google’s Magenta project or various AI MIDI enhancers, learn from large collections of recorded human performances to model the nuances of mezzo-forte. These tools reflect the fact that a human playing “mf” doesn’t hit every note at a perfect velocity of 75. Instead, there are micro-fluctuations. Even a simple algorithm can “humanize” a track by drawing velocities from a Gaussian distribution centered on the “mf” value, so that the digital output retains some of the emotional resonance of a live performer.
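The Gaussian approach can be sketched in a few lines. This is plain random jitter, not a trained model, and the function name `humanize` and the default spread of 4 velocity units are assumptions chosen for illustration.

```python
import random


def humanize(velocities, spread=4.0, seed=None):
    """Jitter each velocity with Gaussian noise and clamp it to 1..127."""
    rng = random.Random(seed)  # a fixed seed makes the result repeatable
    result = []
    for velocity in velocities:
        jittered = round(rng.gauss(velocity, spread))
        result.append(max(1, min(127, jittered)))
    return result


# Eight notes written at a flat "mf" of 75 come back slightly uneven.
print(humanize([75] * 8, spread=4.0, seed=1))
```

A learned model would go further and condition each velocity on phrasing, meter, and neighboring notes, where this sketch treats every note independently.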

Intelligent Notation Software

Modern notation programs like Sibelius, Dorico, and MuseScore 4 have integrated sophisticated playback engines. When a user places an “mf” symbol on a digital score, the software does not simply apply one fixed loudness; it interprets the marking during playback. Depending on the program, this can take the surrounding musical context into account (for example the instrument and its range, the tempo, and neighboring markings) to decide how “moderately loud” that “mf” should be. This bridge between visual notation and sonic output is a triumph of modern software engineering.

3. Loudness Normalization and the Tech of Streaming Algorithms

Once a piece of music is produced, the concept of “mf” enters the world of digital distribution and signal processing. In the age of Spotify, Apple Music, and YouTube, the way “moderately loud” passages are handled is governed by strict “Loudness Normalization” algorithms.

LUFS and Dynamic Range Management

In the analog era, “mf” was relative to the loudest point of a song. In streaming, loudness is measured in LUFS (Loudness Units relative to Full Scale), averaged over the whole track as integrated loudness. Streaming platforms use automated software to normalize tracks to a reference level, typically around -14 LUFS; the exact target varies by platform and playback setting.
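The arithmetic behind normalization is simple: the platform applies one gain value to the whole track, equal to the target loudness minus the measured loudness. The sketch below assumes the -14 LUFS target mentioned above and uses hypothetical function names.

```python
def normalization_gain_db(measured_lufs: float, target_lufs: float = -14.0) -> float:
    """Gain in dB that moves a track from its measured loudness to the target."""
    return target_lufs - measured_lufs


def db_to_linear(gain_db: float) -> float:
    """Convert a gain in decibels to a linear amplitude multiplier."""
    return 10 ** (gain_db / 20)


# A heavily compressed master measured at -8 LUFS is turned down by 6 dB,
# which roughly halves its amplitude.
gain = normalization_gain_db(-8.0)
print(gain, round(db_to_linear(gain), 3))
```

Because the same gain applies to every sample, the contrast between quiet and “mf” passages inside the track is unchanged; only the overall level moves.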

This has a large impact on how “mf” sections are heard. Normalization itself applies one gain value to the whole track, so it preserves the contrast between sections. The damage comes earlier: if a track is over-compressed (a byproduct of the “Loudness Wars”), the distinction between piano (quiet) and mezzo-forte (moderately loud) vanishes, and normalization simply turns the flattened result down. Audio engineers use mastering tools such as FabFilter Pro-L 2 or iZotope Ozone to control how much limiting is applied, so that “mf” passages stay distinct from the peaks and the dynamic integrity of the music survives the streaming pipeline.

The Role of Digital Signal Processing (DSP)

Digital Signal Processing (DSP) makes loudness-aware gain staging possible. Because the human ear is more sensitive to some frequencies (roughly 2 kHz to 5 kHz) than to others, loudness meters and mixing tools weight the frequency spectrum of an “mf” passage before estimating how loud it will seem. This helps a passage sound “moderately loud” to the listener across different playback hardware, whether that is a pair of high-end Sennheiser headphones or a smartphone speaker, although no tool can fully compensate for the differences between devices.
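One way to see frequency-dependent loudness in numbers is a weighting curve. The sketch below uses the standard A-weighting formula as a stand-in; streaming loudness meters use the related K-weighting filter from ITU-R BS.1770 instead, so treat this as an illustration of the idea, not of any platform's actual processing.

```python
import math


def a_weighting_db(freq_hz: float) -> float:
    """A-weighting gain in dB for a frequency in Hz (about 0 dB at 1 kHz)."""
    f2 = freq_hz ** 2
    numerator = (12194.0 ** 2) * f2 ** 2
    denominator = (
        (f2 + 20.6 ** 2)
        * math.sqrt((f2 + 107.7 ** 2) * (f2 + 737.9 ** 2))
        * (f2 + 12194.0 ** 2)
    )
    return 20 * math.log10(numerator / denominator) + 2.0


# Low frequencies are weighted down heavily; the 2-5 kHz region is not.
for freq in (100, 1000, 3000):
    print(freq, round(a_weighting_db(freq), 1))
```

The curve gives energy around 3 kHz slightly more weight than energy at 1 kHz and far more than energy at 100 Hz, which is why two passages with the same peak level can differ in perceived loudness.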

4. Hardware Integration: Controllers and the Tactile “mf”

The intersection of hardware and software is where the physical sensation of “mf” is recaptured for the digital creator. The evolution of sensor technology has revolutionized how we input dynamic data into our devices.

MPE (MIDI Polyphonic Expression)

A significant trend in music tech is MPE, supported by hardware like the ROLI Seaboard or the Ableton Push 3. On a traditional MIDI controller, the dynamic of a note is captured mainly by a single strike velocity, and continuous controls such as channel pressure affect every note on the channel at once. MPE gives each note its own channel, so pressure and other expression data can stream continuously per note. This means a producer can start a note at mezzo-piano, swell into an “mf”, and fade out, all through tactile pressure sensors, while other held notes stay unaffected. The software interprets this continuous stream of data to create a fluid, organic dynamic shift that was difficult to achieve per note in the early days of digital music.
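At the byte level, such a swell is a Note On followed by a stream of Channel Pressure messages on that note's own channel. The sketch below builds those raw messages; the function name `swell_messages`, the step count, and the assumption that the receiving synth maps pressure to loudness are all illustrative.

```python
NOTE_OFF = 0x80
NOTE_ON = 0x90
CHANNEL_PRESSURE = 0xD0


def swell_messages(note, channel, start, peak, steps=8):
    """Build raw MIDI bytes: strike, pressure rise to peak, fade to 0, release.

    `channel` is the zero-based channel carrying this one note, as in MPE.
    """
    if not 0 <= channel <= 15:
        raise ValueError("channel must be in 0..15")
    messages = [bytes([NOTE_ON | channel, note, start])]
    rise = [round(start + (peak - start) * i / steps) for i in range(1, steps + 1)]
    fall = [round(peak * (steps - i) / steps) for i in range(1, steps + 1)]
    for pressure in rise + fall:
        messages.append(bytes([CHANNEL_PRESSURE | channel, pressure]))
    messages.append(bytes([NOTE_OFF | channel, note, 0]))
    return messages


# Middle C on zero-based channel 1, from an assumed "mp" of 56 up to an "mf" of 72.
for message in swell_messages(60, 1, 56, 72):
    print(message.hex(" "))
```

Sending the bytes to a device would need a MIDI library, and a real MPE controller also streams per-note pitch bend and timbre data, which this sketch leaves out.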

Haptic Feedback and Virtual Interfaces

As music production moves toward VR and AR environments, “mf” may also be translated into haptic feedback. In principle, a musician working in a spatial audio environment could “feel” the resistance of a virtual string or key: the software drives a haptic engine to provide a physical “kickback” that corresponds to the “mf” marking, allowing for a more intuitive connection between the digital tool and the creator’s intent.

The Future of “mf”: From Symbols to Intelligent Soundscapes

As we look toward the future of music technology, the definition of “mf” will continue to expand beyond its 18th-century roots. We are moving into a period where “moderately loud” is a dynamic variable in a broader ecosystem of smart metadata.

In the world of “Adaptive Audio” for video games and apps, the “mf” of a soundtrack isn’t fixed. It is reactive code. Using middleware like Wwise or FMOD, developers program music that scales its dynamics based on user interaction. If a player enters a moderately tense area, the game updates an intensity parameter and the engine moves the music to its “mf” state. This need not be a simple volume change; the engine can switch or crossfade between musical assets performed at different intensities, so the score reflects a specific state of intensity in real time.
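One common way to get more than a volume change is to crossfade between stems performed at different intensities. The sketch below is plain Python rather than the Wwise or FMOD API; the stem names and the 0.0 to 1.0 intensity parameter are assumptions for illustration.

```python
def layer_gains(intensity: float) -> dict[str, float]:
    """Map a game intensity value in 0.0..1.0 to gains for three stems.

    The "mf" stem is loudest at intensity 0.5 and fades out toward both
    extremes, where the "calm" or "intense" stem takes over.
    """
    x = max(0.0, min(1.0, intensity))  # clamp out-of-range input
    return {
        "calm": max(0.0, 1.0 - 2.0 * x),
        "mf": 1.0 - abs(2.0 * x - 1.0),
        "intense": max(0.0, 2.0 * x - 1.0),
    }


# A moderately tense area might set the parameter near the middle.
print(layer_gains(0.5))
print(layer_gains(0.75))
```

In a game, the engine would evaluate a mapping like this every frame as the parameter changes, so the music moves into and out of its “mf” state without a hard cut.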

In conclusion, “mf” in music—when viewed through the lens of technology—is a masterclass in data translation. It represents the successful bridge between human emotion and machine precision. Whether it is a MIDI velocity value, a layer in a multisampled VST, a target in a streaming normalization algorithm, or a pressure point on an MPE controller, “mf” is a vital component of the digital toolkit. As software and AI continue to evolve, our ability to program, manipulate, and experience these “moderately loud” moments will only become more sophisticated, ensuring that the soul of the music remains intact, even when it is delivered through a line of code.
