For over a century, the Mantoux Tuberculin Skin Test (TST) has been the cornerstone of tuberculosis screening. Traditionally, identifying “what a positive skin TB test looks like” relied on the subjective assessment of a healthcare professional—a physical measurement of skin induration (swelling) using a simple plastic ruler. However, the intersection of medical science and advanced technology is currently revolutionizing this process. As we move into an era defined by artificial intelligence (AI), computer vision, and mobile health (mHealth), the definition of a “positive look” is shifting from a human observation to a data-driven digital signature.

This transition from manual measurement to tech-integrated diagnostics is not merely a matter of convenience; it is a critical advancement in global health security, data accuracy, and diagnostic accessibility.

The Evolution of Diagnostic Visualization: From Ruler to Algorithm

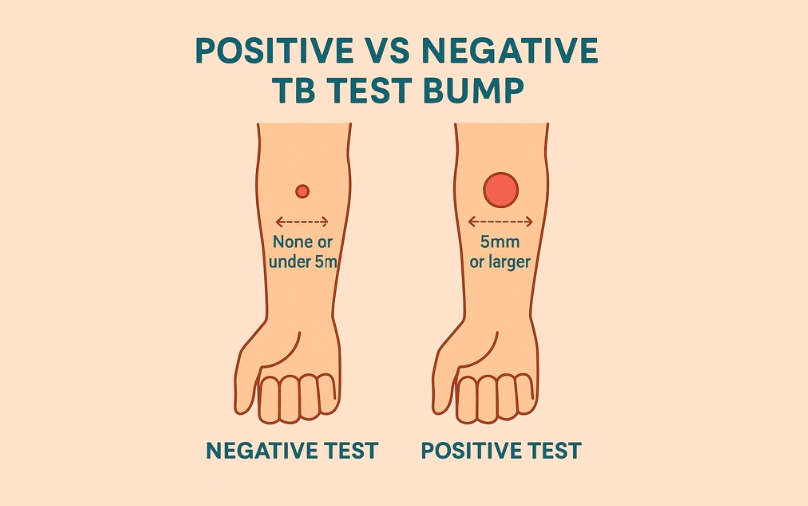

The traditional TB skin test begins with an intradermal injection of a small amount of PPD (purified protein derivative) into the forearm. After 48 to 72 hours, a clinician feels for induration at the injection site and measures its diameter in millimeters. However, the human eye is notoriously fallible when distinguishing between simple redness (erythema) and actual swelling (induration), leading to significant inter-observer variability.

The Limitations of the Human Eye in Mantoux Readings

In clinical settings, the margin for error in a TST reading can be the difference between a patient receiving unnecessary treatment and a latent infection going undetected. Human fatigue, lighting conditions, and the tactile nature of “feeling” for a bump introduce inconsistencies. Tech-driven solutions aim to reduce this subjectivity by standardizing the visual input. High-resolution sensors can capture topographical nuances of the skin surface that the human finger might miss.

Digital Transformation in Point-of-Care Diagnostics

The digitization of the TST reading process involves transforming a physical biological reaction into a set of quantifiable pixels. Digital health platforms are now integrating specialized hardware, such as 3D optical scanners, that can create a volumetric map of the injection site. This shift represents a move from “qualitative” observation to “quantitative” analysis. Clinical cutoffs are still expressed as the diameter of the induration in millimeters, but a 3D scan can add a measured height and a volume in cubic millimeters to what was previously a rough estimate on a ruler.
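As a rough illustration of what a volumetric map makes possible, the sketch below sums elevation over a grid of height samples to approximate swelling volume. The function name `induration_volume_mm3`, the grid layout, and the synthetic bump are assumptions made for this example, not the output format of any particular scanner.

```python
def induration_volume_mm3(height_map_mm, pixel_size_mm, baseline_mm=0.0):
    """Approximate volume as the sum of (elevation above baseline) x (pixel area)."""
    pixel_area = pixel_size_mm ** 2
    total = 0.0
    for row in height_map_mm:
        for height in row:
            # Ignore samples at or below the surrounding skin level.
            total += max(height - baseline_mm, 0.0) * pixel_area
    return total


# Synthetic data (assumption): a flat-topped bump 1 mm high covering
# 20 x 20 samples, scanned at 0.5 mm per sample, i.e. a 10 mm x 10 mm area.
height_map = [
    [1.0 if 10 <= r < 30 and 10 <= c < 30 else 0.0 for c in range(40)]
    for r in range(40)
]
volume = induration_volume_mm3(height_map, pixel_size_mm=0.5)
print(f"Estimated volume: {volume:.1f} mm^3")
```

A real reaction is dome-shaped rather than flat-topped, so the baseline estimate and sampling resolution would matter far more than they do in this toy case.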

Computer Vision: Identifying Induration through High-Resolution Imaging

At the heart of modern diagnostic tech is computer vision (CV). When we ask what a positive skin TB test looks like in a technological context, we are looking at how an algorithm perceives light, shadow, and texture on the human dermis.

The Role of Edge Detection in Measuring Skin Reactions

Computer vision algorithms utilize “edge detection” to identify the boundaries of an induration. While a human might struggle to see where a subtle bump begins and ends, AI can analyze the gradient of shadows cast by the swelling under specific light wavelengths. By processing these images, software can isolate the induration from the surrounding erythema. This is crucial because, in the world of TB testing, redness does not equal a positive result; only the hard, raised area (the induration) counts. Advanced tech can “filter out” the redness to focus exclusively on the physical elevation of the skin.
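To make the idea of edge detection concrete, here is a minimal sketch that computes a central-difference gradient over a small 2D grid and reports where the strongest changes occur. It assumes the input is already an elevation or shading map with the redness removed; the grid, the threshold of 0.4, and the helper names are hypothetical, and production systems would typically use a library operator such as Sobel or Canny instead.

```python
def gradient_magnitude(grid):
    """Central-difference gradient magnitude for the interior cells of a 2D grid."""
    rows, cols = len(grid), len(grid[0])
    magnitude = [[0.0] * cols for _ in range(rows)]
    for r in range(1, rows - 1):
        for c in range(1, cols - 1):
            gx = (grid[r][c + 1] - grid[r][c - 1]) / 2.0
            gy = (grid[r + 1][c] - grid[r - 1][c]) / 2.0
            magnitude[r][c] = (gx * gx + gy * gy) ** 0.5
    return magnitude


def edge_column_span(magnitude, threshold):
    """Return (leftmost, rightmost) column holding an edge cell, or None."""
    columns = [
        c for row in magnitude for c, value in enumerate(row) if value >= threshold
    ]
    return (min(columns), max(columns)) if columns else None


# Synthetic elevation map (assumption): flat skin at 0.0 with a raised
# plateau at 1.0 occupying rows and columns 8 through 15.
elevation = [
    [1.0 if 8 <= r <= 15 and 8 <= c <= 15 else 0.0 for c in range(24)]
    for r in range(24)
]
edges = gradient_magnitude(elevation)
left, right = edge_column_span(edges, threshold=0.4)
print(f"Edge cells span columns {left} to {right}")
```

Because a central difference looks one cell to either side, the detected edge band straddles the true boundary by one cell on each side, which is why the span is slightly wider than the plateau itself.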

Overcoming Subjectivity with Machine Learning Models

Machine learning (ML) models are trained on tens of thousands of images of confirmed positive and negative TB tests. These models learn to recognize patterns in skin texture and density that correlate with a positive immune response. When a smartphone photo is fed into an app built on such a model, the software can return a probability score for a positive result. This “digital look” is more nuanced than a binary “yes or no,” providing a spectrum of data that helps clinicians make more informed decisions about follow-up chest X-rays or interferon-gamma release assay (IGRA) blood tests.
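The notion of a probability score can be sketched with a tiny logistic model. This is a deliberate simplification: the models described above learn from raw images, whereas this example scores two hand-picked measurements. The feature names, weights, and bias are invented for illustration and have no clinical validity.

```python
import math

# Hypothetical parameters (assumption). A real model would learn its
# parameters from labeled images rather than use hand-set numbers.
WEIGHTS = {"diameter_mm": 0.6, "elevation_mm": 1.5}
BIAS = -6.0


def positive_probability(features):
    """Map a weighted sum of features to a score between 0 and 1."""
    z = BIAS + sum(WEIGHTS[name] * features[name] for name in WEIGHTS)
    return 1.0 / (1.0 + math.exp(-z))


small_reaction = positive_probability({"diameter_mm": 3.0, "elevation_mm": 0.2})
large_reaction = positive_probability({"diameter_mm": 16.0, "elevation_mm": 1.5})
print(f"small: {small_reaction:.3f}, large: {large_reaction:.3f}")
```

The useful property is that the output is graded rather than binary, so a borderline reaction yields a score near the middle and can be routed to a clinician for review.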

The Mobile Health Revolution: Apps for Remote TST Interpretation

One of the most significant barriers to TB eradication is the “return visit” requirement. Patients must return to the clinic 48–72 hours after the injection to have the test read. Technology is solving this through smartphone-based remote monitoring, effectively changing the location and the medium of the diagnostic process.

Tele-Health Integration for Global Disease Monitoring

New mHealth apps allow patients to photograph their own skin reactions. These apps often utilize an “optical calibration” sticker placed on the arm to provide the software with a reference for size and color. Once the photo is taken, it is uploaded to a secure cloud server where a computer vision algorithm—or a remote clinician—interprets the result. This technological bridge is vital in rural or underserved areas where traveling back to a clinic is a financial or physical burden.
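The size reference that a calibration sticker provides comes down to a simple scale conversion. The sketch below assumes a sticker of known physical width whose width in pixels has already been measured in the photo; the function names and the numbers are hypothetical.

```python
def mm_per_pixel(sticker_width_px, sticker_width_mm):
    """Derive the image scale from a reference object of known size."""
    if sticker_width_px <= 0:
        raise ValueError("sticker width in pixels must be positive")
    return sticker_width_mm / sticker_width_px


def to_millimeters(length_px, scale_mm_per_px):
    """Convert a length measured in pixels to millimeters."""
    return length_px * scale_mm_per_px


# Assumed measurements: a 20 mm sticker spans 200 pixels in the photo,
# and the detected induration spans 120 pixels.
scale = mm_per_pixel(sticker_width_px=200, sticker_width_mm=20.0)
induration_mm = to_millimeters(length_px=120, scale_mm_per_px=scale)
print(f"Induration is roughly {induration_mm:.1f} mm across")
```

This only holds when the sticker and the reaction lie in roughly the same plane and at the same distance from the camera, which is one reason such apps constrain how the photo is taken.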

Patient-Centric Tech: Empowering Self-Monitoring and Data Accuracy

By putting the “reading” technology in the hands of the patient, healthcare systems can increase compliance rates. These apps do more than just look at the bump; they time-stamp the entry, check that the photo is in focus and taken at a consistent distance, and use the smartphone’s flash to standardize lighting. This creates a standardized environment for identifying a positive test that was previously only possible in a controlled clinical setting. The “look” of a positive test becomes a verified, high-fidelity digital record that can be integrated directly into an Electronic Health Record (EHR).
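One practical use of the time stamp is checking that a photo falls inside the 48 to 72 hour reading window. The sketch below shows one way such a check could look; the function name and the example dates are assumptions for illustration.

```python
from datetime import datetime, timedelta, timezone

READ_WINDOW_START = timedelta(hours=48)
READ_WINDOW_END = timedelta(hours=72)


def within_reading_window(injected_at, photographed_at):
    """Return True if the photo was taken 48 to 72 hours after injection."""
    elapsed = photographed_at - injected_at
    return READ_WINDOW_START <= elapsed <= READ_WINDOW_END


# Example timestamps (assumption), stored in UTC to avoid time zone ambiguity.
injected = datetime(2024, 3, 1, 9, 0, tzinfo=timezone.utc)
on_time = within_reading_window(injected, injected + timedelta(hours=50))
too_early = within_reading_window(injected, injected + timedelta(hours=24))
too_late = within_reading_window(injected, injected + timedelta(hours=80))
print(on_time, too_early, too_late)
```

Using timezone-aware timestamps matters here, since a patient may travel or a phone clock may change zones between the injection and the photo.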

Data Security and AI Ethics in Automated TB Diagnostics

As we rely more on technology to identify positive TB tests, we must address the underlying infrastructure that supports these tools. Moving diagnostic imagery into the digital realm introduces complex challenges regarding data privacy and algorithmic fairness.

Protecting Biometric Health Data in the Cloud

A digital image of a patient’s arm, especially when linked to their health status, is sensitive health data. Tech companies developing TB diagnostic tools must employ end-to-end encryption and comply with regulations such as HIPAA or GDPR. The “look” of a positive test is now a piece of data that must be shielded from unauthorized access. Secure APIs (Application Programming Interfaces) transmit these images from a mobile device to a diagnostic server, and the system should be designed so that the patient’s identity remains decoupled from the diagnostic image whenever possible.
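One common way to keep identity decoupled from an image is to replace the patient identifier with a keyed pseudonym before upload. The sketch below uses Python's standard `hmac` and `hashlib` modules; the record layout, the identifier format, and the key handling are assumptions, and this is pseudonymization only, not a substitute for encrypting the image in transit and at rest.

```python
import hashlib
import hmac
import secrets


def pseudonymize(patient_id, secret_key):
    """Derive a stable token from a patient ID using HMAC-SHA256."""
    return hmac.new(secret_key, patient_id.encode("utf-8"), hashlib.sha256).hexdigest()


# In practice the key would live in a key management system; generating it
# inline here is purely for demonstration.
key = secrets.token_bytes(32)
token = pseudonymize("patient-0001", key)

# Hypothetical upload record: the image travels with the token, not the ID.
upload_record = {"subject_token": token, "image_file": "reaction.jpg"}
print(upload_record["subject_token"][:16], "...")
```

Only a party holding the key can recompute the token from an identifier, so the diagnostic server can group images from the same patient without ever seeing who that patient is.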

Addressing Algorithmic Bias in Skin Tone Recognition

A critical tech-centric challenge in identifying TB tests is ensuring that AI works across all skin phototypes. Historically, many medical imaging algorithms were trained on limited datasets, leading to inaccuracies when analyzing darker skin tones. Because TB disproportionately affects populations in the Global South, it is a technological imperative that computer vision models are trained on diverse datasets. A “positive look” on Fitzpatrick Type VI skin may present different contrast levels than on Type I. Modern tech developers are now using “synthetic data” and diverse training sets so that the AI’s “eye” is as equitable as it is accurate.
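A basic check for this kind of bias is to compute a model's sensitivity separately for each skin type and compare the results. The sketch below does that over a handful of made-up evaluation records; the record format and the data are invented for illustration and do not describe any real model.

```python
from collections import defaultdict


def sensitivity_by_group(records):
    """Compute true-positive rate per group.

    records: iterable of (group, actually_positive, predicted_positive).
    """
    true_positives = defaultdict(int)
    actual_positives = defaultdict(int)
    for group, actual, predicted in records:
        if actual:
            actual_positives[group] += 1
            if predicted:
                true_positives[group] += 1
    return {g: true_positives[g] / actual_positives[g] for g in actual_positives}


# Made-up evaluation records keyed by Fitzpatrick type (assumption).
records = [
    ("I", True, True),
    ("I", True, True),
    ("I", False, False),
    ("VI", True, True),
    ("VI", True, False),
    ("VI", False, False),
]
rates = sensitivity_by_group(records)
for group, rate in rates.items():
    print(f"Type {group}: sensitivity {rate:.2f}")
```

A large gap between groups, as in this toy data, is a signal that the training set underrepresents some skin types, even when overall accuracy looks acceptable.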

The Future: Integrating Wearables and Biosensors

Looking forward, the definition of what a positive skin TB test looks like might move away from visual imaging entirely and toward wearable biosensors. Research is underway into “smart bandages” equipped with sensors that could detect specific biomarkers of a TB immune response in real time.

These devices would monitor local skin temperature, interstitial fluid changes, and cytokine levels at the injection site. Instead of a red bump, a positive test would look like a data spike on a clinician’s dashboard or a notification on a patient’s smartwatch. This represents the ultimate shift in the niche of health technology: the movement from external observation to internal, continuous data streaming.

In conclusion, while the biological reality of a positive TB skin test remains a physical reaction on the arm, the technology we use to interpret that reaction has transformed it into a sophisticated digital asset. Through computer vision, machine learning, and mobile connectivity, we are reducing human error and bringing high-level diagnostics to the furthest reaches of the globe. The “look” of a positive test is no longer just a measurement on a ruler; it is a complex, encrypted, and more consistent data point in the digital fight against one of history’s most resilient diseases.
