In the realm of acoustics and digital signal processing (DSP), the concept of pitch is often simplified as the “highness” or “lowness” of a sound. However, when we look through the lens of modern technology, pitch is a sophisticated variable determined by complex physical properties and interpreted through advanced software algorithms. Whether it is the crystalline high notes of a digital synthesizer or the deep bass of a home theater system, the determination of pitch is the result of a precise interaction between frequency, wave modulation, and technological interpretation.

Understanding what determines the pitch of a sound is no longer just a concern for musicians or physicists; it is a foundational pillar for audio engineers, AI developers, and hardware designers who are shaping the next generation of digital experiences.

The Fundamental Physics of Sound Waves in Digital Environments

At its core, the pitch of a sound is determined by the frequency of the sound wave. Frequency refers to the number of vibrations or cycles a sound wave completes in one second, measured in Hertz (Hz). In the world of technology, capturing and recreating these vibrations requires a deep understanding of how physical waves translate into digital data.

Frequency and Periodicity: The Heart of Pitch

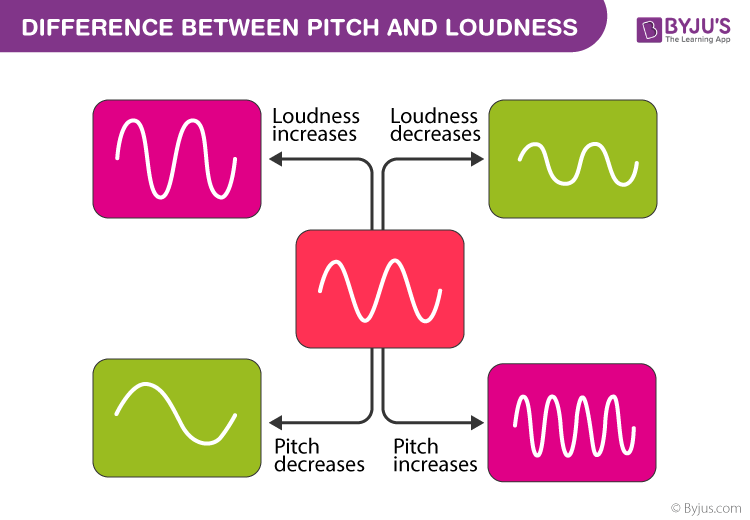

The most direct determinant of pitch is the rate of oscillation. A higher frequency—meaning more cycles per second—results in a higher perceived pitch, while a lower frequency results in a lower pitch. In digital synthesis, engineers use oscillators to generate periodic waveforms. These waveforms (sine, square, sawtooth) are the building blocks of sound. By manipulating the rate at which these waveforms repeat, tech tools can create a vast spectrum of pitches that are used in everything from smartphone notifications to cinematic soundscapes.
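As a rough illustration of the oscillator idea, the sketch below generates the three waveforms named above and counts how many cycles each completes. The function names and the 44.1 kHz default are assumptions made for this example, and the square and sawtooth shapes are the naive versions rather than the band-limited ones a production synthesizer would use.

```python
import math

def oscillator(shape, freq_hz, duration_s, sample_rate=44100):
    """Generate a periodic waveform as a list of samples in [-1, 1]."""
    out = []
    for i in range(int(duration_s * sample_rate)):
        # Position inside the current cycle, from 0.0 up to (not including) 1.0.
        phase = (freq_hz * i / sample_rate) % 1.0
        if shape == "sine":
            out.append(math.sin(2 * math.pi * phase))
        elif shape == "square":
            out.append(1.0 if phase < 0.5 else -1.0)
        elif shape == "sawtooth":
            out.append(2.0 * phase - 1.0)
        else:
            raise ValueError(f"unknown shape: {shape}")
    return out

def count_cycles(samples):
    """Count upward zero crossings, which happen once per cycle."""
    return sum(1 for a, b in zip(samples, samples[1:]) if a < 0 <= b)

if __name__ == "__main__":
    for hz in (220, 440, 880):
        print(hz, "Hz ->", count_cycles(oscillator("sine", hz, 1.0)), "crossings in 1 s")
```

Doubling the frequency argument doubles the number of cycles per second, which is heard as a jump of one octave.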

Sampling Rates and the Nyquist Theorem

In digital audio recording, pitch accuracy depends heavily on the sampling rate. To capture a frequency accurately, the hardware must sample the audio at more than twice the highest frequency present in the signal. This requirement comes from the Nyquist-Shannon sampling theorem, and half the sampling rate is known as the Nyquist frequency. Most digital audio workstations (DAWs) default to 44.1 kHz or 48 kHz, which places the Nyquist frequency just above the roughly 20 kHz upper limit of human hearing. Content above the Nyquist frequency does not simply disappear: it “aliases,” folding back into the audible range as a false, lower-frequency tone. For this reason, converters filter out that content before sampling. If the sampling rate is too low for the material, the recorded pitch information is corrupted and fidelity is lost.
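The folding behavior can be worked out with a few lines of arithmetic. The helper below is a minimal sketch (the name `alias_frequency` is made up for this example) that reports the frequency at which a pure tone would appear after sampling, assuming no anti-aliasing filter is applied first.

```python
def alias_frequency(freq_hz, sample_rate):
    """Apparent frequency of a pure tone after sampling without filtering."""
    nyquist = sample_rate / 2
    folded = freq_hz % sample_rate
    # Anything above the Nyquist frequency mirrors back below it.
    return folded if folded <= nyquist else sample_rate - folded

if __name__ == "__main__":
    print(alias_frequency(1000, 44100))   # below Nyquist: unchanged
    print(alias_frequency(30000, 44100))  # above Nyquist: folds back
```

A 30 kHz tone sampled at 44.1 kHz lands at 14.1 kHz, well inside the audible range, which is why the input is filtered before conversion.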

Signal Processing and Software-Driven Pitch Manipulation

While frequency is the physical driver of pitch, modern software allows us to decouple pitch from other sound characteristics like duration. This is where the intersection of technology and acoustics becomes truly transformative.

Fast Fourier Transform (FFT) and Spectral Analysis

To determine pitch within a digital file, software commonly relies on the Fast Fourier Transform (FFT), an efficient algorithm for computing the discrete Fourier transform. It breaks a complex audio signal down into its individual frequency components. By analyzing these components, software can estimate the “fundamental frequency,” the lowest frequency component of a periodic waveform, which listeners usually perceive as the pitch of the sound. The strongest component is not always the fundamental, so practical pitch detectors add further logic on top of the raw spectrum. Spectral analysis of this kind is the backbone of tuning software and spectrum analyzers, and it also underlies the audio fingerprints used by Shazam-like song recognition apps.
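To make the idea concrete, here is a small pure-Python sketch: a textbook recursive radix-2 FFT followed by a search for the strongest bin. The function names are invented for this example, the input length is assumed to be a power of two, and picking the largest peak is a simplification that only finds the fundamental when it is the loudest component. Real tools would normally call an optimized FFT library instead.

```python
import cmath
import math

def fft(x):
    """Recursive radix-2 Cooley-Tukey FFT; len(x) must be a power of two."""
    n = len(x)
    if n == 1:
        return [complex(x[0])]
    even = fft(x[0::2])
    odd = fft(x[1::2])
    out = [0j] * n
    for k in range(n // 2):
        twiddle = cmath.exp(-2j * cmath.pi * k / n) * odd[k]
        out[k] = even[k] + twiddle
        out[k + n // 2] = even[k] - twiddle
    return out

def strongest_frequency(samples, sample_rate):
    """Return the center frequency (Hz) of the largest-magnitude FFT bin."""
    n = len(samples)
    if n < 2 or n & (n - 1):
        raise ValueError("length must be a power of two")
    # A Hann window reduces leakage from tones that fall between bins.
    window = [0.5 - 0.5 * math.cos(2 * math.pi * i / (n - 1)) for i in range(n)]
    spectrum = fft([s * w for s, w in zip(samples, window)])
    mags = [abs(c) for c in spectrum[: n // 2]]
    peak = max(range(1, n // 2), key=lambda k: mags[k])  # skip the DC bin
    return peak * sample_rate / n

if __name__ == "__main__":
    fs, n = 8000, 4096
    tone = [math.sin(2 * math.pi * 220 * i / fs)
            + 0.5 * math.sin(2 * math.pi * 440 * i / fs) for i in range(n)]
    print(strongest_frequency(tone, fs))
```

The result is quantized to the bin spacing (`sample_rate / n`, just under 2 Hz here), so tuners usually interpolate between bins or combine the FFT with a time-domain method.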

Time-Stretching vs. Pitch-Shifting Algorithms

Historically, changing the pitch of a recording meant speeding it up or slowing it down (like a vinyl record), so pitch and duration changed together. Today, DSP technology uses phase vocoders and granular synthesis to shift pitch independently of time. Granular methods slice audio into tiny “grains” and overlap them at a new spacing, while phase vocoders adjust the phase of each frequency component between overlapping analysis frames. Either way, software can change the pitch of a voice or instrument without altering its speed. This capability is essential for modern music production, podcast editing, and film post-production.
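The granular approach can be sketched in two steps: stretch the audio in time by overlapping windowed grains, then resample it back to the original length. The code below is a deliberately naive version with hypothetical function names and a fixed grain size. It makes no attempt to align the phase of neighboring grains, so it will sound rough on real material, and it assumes the input is at least one grain long.

```python
import math

def time_stretch_ola(samples, stretch, grain=1024):
    """Naive granular overlap-add: about `stretch` times longer, same pitch."""
    hop_out = grain // 2                 # grains overlap by 50% in the output
    hop_in = hop_out / stretch           # the read position moves at a different rate
    # Periodic Hann window: copies spaced half a grain apart sum to 1.
    window = [0.5 - 0.5 * math.cos(2 * math.pi * i / grain) for i in range(grain)]
    n_grains = int((len(samples) - grain) / hop_in) + 1
    out = [0.0] * ((n_grains - 1) * hop_out + grain)
    for g in range(n_grains):
        start_in = int(g * hop_in)
        start_out = g * hop_out
        for i in range(grain):
            out[start_out + i] += samples[start_in + i] * window[i]
    return out

def resample(samples, ratio):
    """Read `ratio` times faster with linear interpolation (the vinyl-style change)."""
    out = []
    for i in range(int(len(samples) / ratio)):
        pos = i * ratio
        j = int(pos)
        frac = pos - j
        nxt = samples[min(j + 1, len(samples) - 1)]
        out.append(samples[j] * (1 - frac) + nxt * frac)
    return out

def pitch_shift(samples, semitones, grain=1024):
    """Shift pitch while keeping roughly the original duration."""
    ratio = 2 ** (semitones / 12)        # 12 semitones doubles the frequency
    return resample(time_stretch_ola(samples, ratio, grain), ratio)
```

Calling `resample` on its own reproduces the historical behavior, where an octave up also halves the duration. Chaining it after the stretch cancels the change in length and leaves only the change in pitch.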

AI and Neural Networks in Pitch Synthesis

The rise of Artificial Intelligence has introduced a new dimension to how pitch is determined and generated. We are moving away from simple wave generation toward “intelligent” sound synthesis that mimics human nuances.

Generative AI and Deep Learning for Voice Cloning

What determines the pitch of a human voice is a combination of vocal cord tension and laryngeal anatomy. AI tools, such as those used in “Deepfake” audio or high-end text-to-speech (TTS) engines, use neural networks to model these physical characteristics. These models analyze thousands of hours of audio to understand the “prosody”—the patterns of stress and intonation—that dictate pitch. By using WaveNet or similar architectures, AI can predict the precise frequency needed to make a synthetic voice sound empathetic, authoritative, or excited.

Improving Human-Computer Interaction (HCI) through Prosody

In the tech world, the “pitch” of an AI assistant like Alexa or Siri is carefully engineered to enhance user experience. Research in Human-Computer Interaction suggests that higher-pitched synthetic voices are often perceived as more helpful, while lower-pitched voices are seen as more authoritative. Engineers use specialized software to fine-tune the pitch variance in these AI models, ensuring they don’t sound “robotic.” This involves modulating the fundamental frequency (F0) in real-time to match the context of the conversation.
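One simple way to picture F0 modulation is a tone whose frequency follows a contour over time. The sketch below is only an illustration with made-up function names, not how any particular assistant is built. It accumulates phase sample by sample so the pitch can glide without clicks.

```python
import math

def rising_contour(start_hz, end_hz, n):
    """Linear F0 contour with one value per output sample (n must be at least 2)."""
    return [start_hz + (end_hz - start_hz) * i / (n - 1) for i in range(n)]

def synthesize_contour(f0_contour, sample_rate=16000):
    """Render a sine whose instantaneous frequency follows f0_contour."""
    phase = 0.0
    out = []
    for f0 in f0_contour:
        out.append(math.sin(phase))
        # Advance the phase by this sample's share of a cycle.
        phase += 2 * math.pi * f0 / sample_rate
    return out

if __name__ == "__main__":
    # A one-second glide from 100 Hz to 200 Hz, similar to a rising question intonation.
    glide = synthesize_contour(rising_contour(100.0, 200.0, 16000))
    print(len(glide), "samples")
```

A speech synthesizer applies the same principle to a far richer waveform, with the contour predicted from the text and context rather than hard-coded.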

Essential Tools for Pitch Control and Monitoring

For professionals working in the tech industry, controlling pitch is a daily task that requires a specific suite of hardware and software tools. These tools provide the interface through which frequency is visualized and manipulated.

Digital Audio Workstations (DAWs) and VST Plugins

The DAW (such as Ableton Live, Logic Pro, or FL Studio) is the central hub for pitch management. Within these environments, plugins such as Melodyne or Antares Auto-Tune, available in formats like Virtual Studio Technology (VST) and Audio Units (the format Logic Pro uses), display pitch on a “piano roll” style interface. These tools let users see the detected frequency of a note in Hertz and snap it to a specific musical scale. This precision depends on the software estimating the fundamental frequency quickly and separating it from its overtones.
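The mapping from a frequency in Hertz to a note on the piano roll is a short calculation. The sketch below assumes twelve-tone equal temperament with A4 tuned to 440 Hz and snaps to the nearest chromatic note. The function name is invented here, and commercial plugins add scale-aware snapping on top of this.

```python
import math

NOTE_NAMES = ["C", "C#", "D", "D#", "E", "F", "F#", "G", "G#", "A", "A#", "B"]

def nearest_note(freq_hz, a4_hz=440.0):
    """Return (note name, offset in cents, snapped frequency in Hz)."""
    # MIDI note numbers place A4 at 69, with one unit per semitone.
    midi = 69 + 12 * math.log2(freq_hz / a4_hz)
    nearest = round(midi)
    cents = 100 * (midi - nearest)       # positive means the input is sharp
    name = NOTE_NAMES[nearest % 12] + str(nearest // 12 - 1)
    snapped_hz = a4_hz * 2 ** ((nearest - 69) / 12)
    return name, cents, snapped_hz

if __name__ == "__main__":
    print(nearest_note(450.0))  # a slightly sharp A4
```

An input of 450 Hz is reported as A4 running about 39 cents sharp, and the snapped value of 440 Hz is what a corrector would aim for.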

Real-Time Auto-Tune and Pitch Correction Hardware

Beyond software, dedicated hardware processors are used in live broadcasting and concerts. These devices use low-latency DSP chips to detect the pitch of an incoming signal and shift it to the nearest semitone in milliseconds. This technology relies on high-speed pitch-detection algorithms that can differentiate between the intended pitch and background noise, a significant challenge in signal processing.
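A classic time-domain way to detect pitch is autocorrelation: compare the signal with delayed copies of itself and find the delay at which it best repeats. The sketch below is a simplified, unoptimized version with hypothetical names and thresholds. It is not the algorithm of any specific product, and real devices add noise gating and smoothing around this core.

```python
import math

def detect_pitch(samples, sample_rate, fmin=80.0, fmax=1000.0):
    """Estimate the fundamental via autocorrelation; returns Hz or None."""
    min_lag = int(sample_rate / fmax)
    max_lag = int(sample_rate / fmin)
    span = len(samples) - max_lag - 1
    if span <= 0:
        return None
    scores = {}
    for lag in range(min_lag, max_lag + 2):
        scores[lag] = sum(samples[i] * samples[i + lag] for i in range(span))
    best = max(scores.values())
    if best <= 0:
        return None                      # silence or no repeating structure
    # Take the shortest lag that is a strong local peak. Longer lags at
    # multiples of the period score similarly and would give octave errors.
    for lag in range(min_lag + 1, max_lag + 1):
        if (scores[lag] >= 0.9 * best
                and scores[lag] >= scores[lag - 1]
                and scores[lag] >= scores[lag + 1]):
            return sample_rate / lag
    return None

def correction_ratio(freq_hz, a4_hz=440.0):
    """Ratio that moves freq_hz onto the nearest equal-tempered semitone."""
    semitones = 12 * math.log2(freq_hz / a4_hz)
    return 2 ** ((round(semitones) - semitones) / 12)

if __name__ == "__main__":
    fs = 8000
    voice = [math.sin(2 * math.pi * 220 * i / fs) for i in range(2048)]
    f0 = detect_pitch(voice, fs)
    print(f0, correction_ratio(f0))
```

Because the lag is a whole number of samples, the estimate is slightly coarse at low sampling rates. The correction ratio is what a pitch shifter would then apply to the signal.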

The Future of Sound Pitch in Virtual and Augmented Reality

As we move toward more immersive technologies like the Metaverse and spatial computing, the determination of pitch becomes even more complex, involving the user’s physical position in a virtual space.

Spatial Audio and HRTF Mapping

In a 3D digital environment, the “perceived” pitch of a sound can change because of the Doppler effect: the frequency a listener hears rises while a source approaches and falls while it moves away. VR headsets also use Head-Related Transfer Functions (HRTFs) to simulate how our ears receive sound from different directions; HRTFs shape the direction cues, while the Doppler shift is a separate calculation. Tech developers must program these systems to dynamically shift the pitch of virtual objects based on their velocity relative to the user, and to adjust level and filtering with distance, creating a realistic sense of presence.
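For a stationary listener and a moving source, the Doppler shift follows the standard formula f' = f · c / (c − v), where c is the speed of sound and v is the speed of the source toward the listener. The helper below is a minimal sketch of that formula. Its name and the 343 m/s default are choices made for this example, and a game engine would typically compute the radial speed from position vectors each frame.

```python
SPEED_OF_SOUND = 343.0  # meters per second in air at about 20 degrees Celsius

def doppler_frequency(source_hz, radial_speed_mps, c=SPEED_OF_SOUND):
    """Frequency heard by a stationary listener.

    radial_speed_mps is positive when the source approaches and
    negative when it moves away.
    """
    if radial_speed_mps >= c:
        raise ValueError("source speed must be below the speed of sound")
    return source_hz * c / (c - radial_speed_mps)

if __name__ == "__main__":
    print(doppler_frequency(440.0, 34.3))   # approaching: pitch rises
    print(doppler_frequency(440.0, -34.3))  # receding: pitch falls
```

At a tenth of the speed of sound, a 440 Hz source is heard near 489 Hz on approach and at 400 Hz once it has passed.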

Adaptive Soundscapes in Gaming and AI Assistants

Modern gaming engines like Unreal Engine 5 utilize adaptive audio tech to determine pitch based on gameplay states. If a player’s health is low, the system might shift the pitch of the background music or environmental sounds to create a sense of tension. This real-time manipulation is guided by the game’s logic, showcasing how pitch is no longer a static element of a file, but a dynamic variable controlled by software in response to human interaction.
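In code, this kind of rule can be as simple as mapping a gameplay value to a playback pitch ratio. The function below is a hypothetical sketch and not part of the Unreal Engine API. The parameter names, the choice to raise pitch as health drops, and the two-semitone range are all assumptions for illustration.

```python
def tension_pitch_ratio(health, max_semitones=2.0):
    """Map health in [0, 1] to a pitch multiplier; lower health gives higher pitch."""
    health = min(1.0, max(0.0, health))          # clamp out-of-range input
    semitones = (1.0 - health) * max_semitones
    return 2 ** (semitones / 12)

if __name__ == "__main__":
    for health in (1.0, 0.5, 0.1):
        print(health, round(tension_pitch_ratio(health), 4))
```

The audio system would feed the returned ratio to whatever pitch parameter its music player exposes, usually with some smoothing so the change is not abrupt.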

In conclusion, while the physical determination of pitch remains rooted in the frequency of sound waves, the technological determination of pitch involves a sophisticated array of sampling, mathematical transformations, and artificial intelligence. From the minute adjustments of a vocal tuner to the immersive Doppler shifts in a VR headset, technology has mastered the science of frequency to create the rich, auditory world we navigate today.
