For years, the question “What celebrity do I look like?” was a playful conversation starter at social gatherings. Today, that question is answered in milliseconds by sophisticated algorithms. The rise of “celebrity look-alike” picture upload tools represents more than just a viral social media trend; it is a showcase of the rapid advancements in computer vision, machine learning, and artificial intelligence. What started as a novelty has evolved into a complex ecosystem of digital image processing that impacts everything from biometric security to personalized marketing.

To understand how these tools function, one must look past the interface and into the underlying technology. These applications rely on a combination of deep learning frameworks and extensive datasets to map human features with precision that often rivals human perception.

The Architecture of Facial Recognition: How AI Processes Your Image

When a user uploads a photo to a look-alike application, the software does not simply “look” at the picture the way a human does. Instead, it treats the image as a grid of numbers (the brightness and color values of each pixel) and transforms those numbers into a compact set of measurements that describe the face. This process draws on linear algebra, statistics, and digital signal processing.

Convolutional Neural Networks (CNNs)

At the heart of modern facial recognition is the Convolutional Neural Network (CNN), a class of deep neural networks most commonly applied to analyzing visual imagery. When you upload a picture, the software typically first detects and crops the face, then passes it through the CNN’s stack of layers, each of which slides small learned filters across the image. The initial layers respond to simple features like edges and shadows. As the data moves deeper into the network, later layers combine these into complex patterns such as the curve of an eyebrow, the bridge of a nose, or the specific contour of a jawline.
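To make the “edges and shadows” idea concrete, here is a minimal Python sketch of what a single convolutional filter does. It uses a hand-written Sobel edge kernel as a stand-in for the weights a real CNN would learn during training, followed by the ReLU activation common in CNNs. The tiny 5×5 “image” is an assumption for illustration only.

```python
# Hand-written Sobel kernel (a stand-in for learned CNN weights):
# it responds where brightness increases from left to right, i.e. vertical edges.
SOBEL_X = [[-1, 0, 1],
           [-2, 0, 2],
           [-1, 0, 1]]

def convolve2d(image, kernel):
    """'Valid' 2-D cross-correlation (no padding), the operation CNN conv layers perform."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    out = [[0] * out_w for _ in range(out_h)]
    for y in range(out_h):
        for x in range(out_w):
            # Weighted sum of the kernel-sized window whose top-left corner is (y, x)
            out[y][x] = sum(kernel[dy][dx] * image[y + dy][x + dx]
                            for dy in range(kh) for dx in range(kw))
    return out

def relu(feature_map):
    """ReLU activation: keep positive responses, zero out the rest."""
    return [[max(0, v) for v in row] for row in feature_map]

# Toy 5x5 image: dark left columns, bright right columns (a vertical edge)
image = [[0, 0, 10, 10, 10] for _ in range(5)]
print(relu(convolve2d(image, SOBEL_X)))  # -> [[40, 40, 0], [40, 40, 0], [40, 40, 0]]
```

The filter fires wherever its window straddles the dark-to-bright boundary and stays at 0 over the uniform bright region. A real face-recognition CNN stacks many such filters, with weights learned from data, so deeper layers respond to combinations of edges rather than edges alone.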

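By the time the image reaches the final layers, the network has produced a compact mathematical representation, often called a “face print” or an embedding. This embedding is a vector of numbers (commonly 128 or 512 of them) that encodes the distinctive characteristics of the face: photos of the same person produce vectors that sit close together, while photos of different people produce vectors that sit farther apart.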

Landmark Detection and Feature Mapping

To ensure accuracy despite different head poses and camera angles, these tools rely on “facial landmarking.” This involves locating specific points on the face, such as the corners of the eyes, the tip of the nose, and the boundary of the lips. Common models locate 5 or 68 points, while dense face-mesh models track several hundred. From the positions of these landmarks, the software computes a transformation (rotation, scaling, and translation) that normalizes the face, effectively “straightening” it so it can be compared against a database of celebrity images taken from many different professional angles.
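The “straightening” step can be sketched with just two landmarks. The Python below is a simplified illustration, not any particular library’s method: it rotates a set of landmark coordinates so the eyes sit on a horizontal line, scales them so the eyes are a fixed distance apart, and centers them on the midpoint between the eyes. The 64-pixel target distance and the function name are assumptions; production pipelines typically fit a similarity or affine transform to five or more landmarks and then warp the image pixels, not just the points.

```python
import math

def align_by_eyes(landmarks, left_eye, right_eye, target_eye_dist=64.0):
    """Rotate, scale, and translate (x, y) landmarks so the eyes end up on a
    horizontal line, target_eye_dist apart, centred on the origin."""
    dx = right_eye[0] - left_eye[0]
    dy = right_eye[1] - left_eye[1]
    angle = math.atan2(dy, dx)                      # current tilt of the eye line
    scale = target_eye_dist / math.hypot(dx, dy)    # bring eye distance to the target
    cx = (left_eye[0] + right_eye[0]) / 2           # midpoint between the eyes
    cy = (left_eye[1] + right_eye[1]) / 2
    cos_a, sin_a = math.cos(-angle), math.sin(-angle)
    aligned = []
    for x, y in landmarks:
        tx, ty = x - cx, y - cy                     # move the eye midpoint to the origin
        rx = tx * cos_a - ty * sin_a                # rotate by -angle to undo the tilt
        ry = tx * sin_a + ty * cos_a
        aligned.append((rx * scale, ry * scale))
    return aligned

# A face tilted 45 degrees: eyes at (0, 0) and (10, 10), nose tip at (2, 8)
print(align_by_eyes([(0, 0), (10, 10), (2, 8)], (0, 0), (10, 10)))
```

After alignment, every face has its eyes in the same place, so differences between embeddings reflect the face itself rather than how the camera was held.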

Vector Comparison and Database Searching

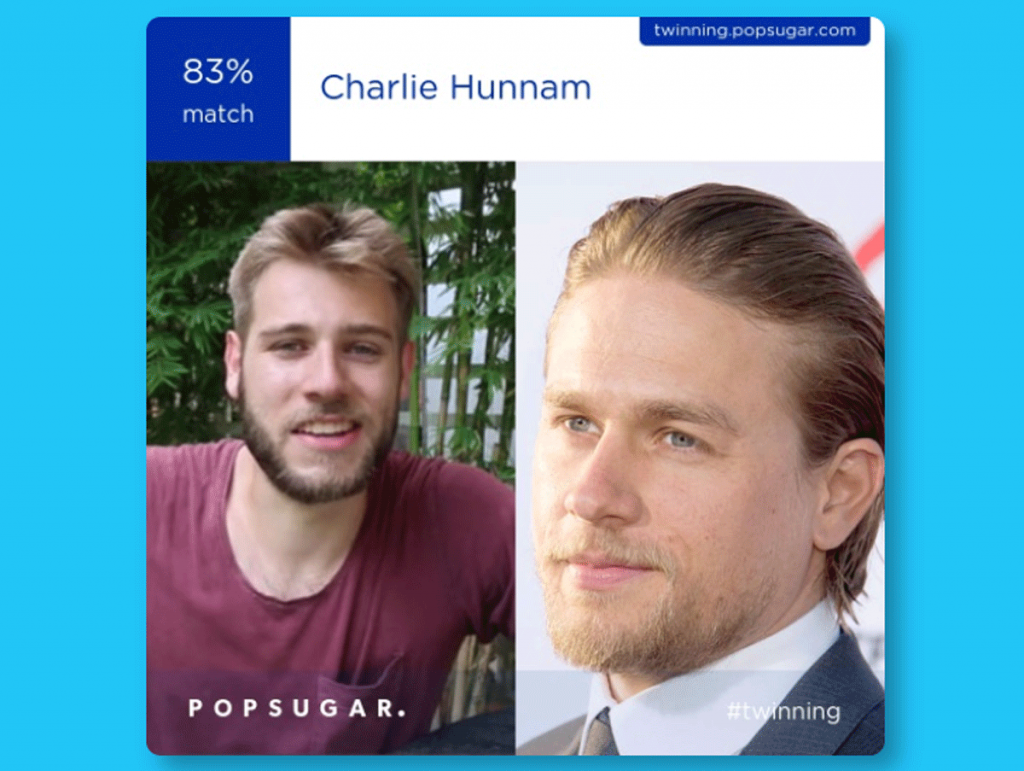

Once your face print is generated, the system performs a nearest-neighbor search within a large database of pre-computed celebrity face prints. It measures the distance between your vector and the vectors of thousands of celebrities, typically using Euclidean distance or the closely related cosine similarity. The smaller the distance, the higher the similarity score. Apps then rescale that distance into a percentage, which is how they report how closely your face print matches that of a Hollywood actor or a world-renowned athlete. Because each app chooses its own model and rescaling, the same face can receive different percentages from different apps.
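A brute-force version of this nearest-neighbor search fits in a few lines of Python. This sketch assumes the embeddings are L2-normalized (so any two are at most 2 apart) and uses a simple, hypothetical linear mapping from distance to a percentage; real apps use their own, usually undisclosed, scoring. The `index` dictionary stands in for a database of pre-computed celebrity face prints.

```python
import math

def l2_normalize(v):
    """Scale a vector to unit length so distances are comparable across faces."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]

def nearest_celebrities(query, index, k=3):
    """Brute-force k-nearest-neighbour search over a {name: embedding} dict.
    Returns (name, distance, similarity_percent) tuples, closest first."""
    q = l2_normalize(query)
    scored = sorted(
        (math.dist(q, l2_normalize(emb)), name) for name, emb in index.items()
    )
    results = []
    for dist, name in scored[:k]:
        # Hypothetical scoring: unit vectors are 0..2 apart, mapped linearly to 100..0 %.
        similarity = 100.0 * (1.0 - dist / 2.0)
        results.append((name, round(dist, 3), round(similarity, 1)))
    return results
```

Scanning every entry is fine for a few thousand celebrities. For millions of vectors, services typically switch to an approximate nearest-neighbor index (libraries such as FAISS exist for this), trading a little accuracy for much faster lookups.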

Leading AI Tools and the Integration of Modern Image Processing

The landscape of celebrity look-alike technology is populated by a variety of platforms, ranging from simple web-based tools to high-performance mobile applications that utilize on-device processing.

Mobile Applications and Cloud Computing

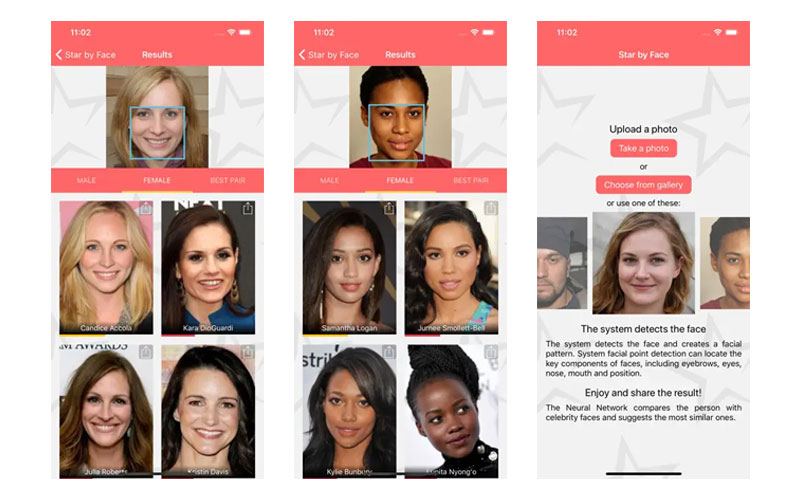

Apps such as Gradient and StarByFace helped popularize the “picture upload” trend by leveraging cloud computing. While the user interface lives on your smartphone, the heavy lifting (the actual neural network processing) often happens on powerful remote servers. This allows fast processing without taxing the phone’s own hardware. However, there is a growing shift toward “Edge AI,” in which newer smartphones with dedicated AI hardware (such as Apple’s Neural Engine or Google’s Tensor chips) run these facial comparisons locally, which can cut network delays and keeps the photo on the device, improving privacy.

Generative AI and Style Transfer

Recent iterations of these tools have moved beyond simple matching. Many now incorporate “Style Transfer” and Generative Adversarial Networks (GANs). These technologies don’t just tell you who you look like; they can morph your features toward a specific celebrity or restyle your photo in the look of a different era of cinema. A GAN consists of two neural networks trained against each other: a generator that produces images and a discriminator that tries to tell generated images from real ones. Over the course of training, the generator learns to produce output realistic enough to fool the discriminator, which is what allows the finished tool to blend the user’s features with a celebrity’s aesthetic convincingly.
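For readers who want the formal version, the two-network contest is usually written as the minimax objective from the original GAN paper (Goodfellow et al., 2014). Here D(x) is the discriminator’s estimated probability that image x is real, G(z) is the image the generator produces from random noise z, p_data is the distribution of real images, and p_z is the noise distribution. This is the generic formulation; the losses used by any specific look-alike app are not public and may be more elaborate variants.

```latex
\min_{G}\,\max_{D}\; V(D, G)
  = \mathbb{E}_{x \sim p_{\text{data}}}\big[\log D(x)\big]
  + \mathbb{E}_{z \sim p_{z}}\big[\log\big(1 - D(G(z))\big)\big]
```

The discriminator tries to maximize V by scoring real images near 1 and generated ones near 0, while the generator tries to minimize it by pushing D(G(z)) toward 1.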

APIs and the Democratization of Facial Analysis

The technology behind these tools is no longer exclusive to giant tech firms. Through Application Programming Interfaces (APIs) such as Amazon Rekognition, Google Cloud Vision, and Microsoft Azure Face API, developers can integrate high-level facial analysis into their own apps. The capabilities differ by provider, and some are restricted: Microsoft, for example, requires customers to apply for access before using its face identification features. This democratization has led to an explosion of niche “look-alike” services, each optimized for different demographics, artistic styles, or historical databases.
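As an illustration of how little code such an API call takes, the Python sketch below queries Amazon Rekognition’s `SearchFacesByImage` operation through the `boto3` SDK. It assumes a developer has already built a face collection of celebrity images with `IndexFaces`, tagging each face with an `ExternalImageId` such as `Celebrity_A`; the collection name, threshold, and helper function names are hypothetical. Rekognition also offers a `RecognizeCelebrities` operation, but that one reports whether a face belongs to a known celebrity rather than whom a non-celebrity resembles, so a look-alike service would more plausibly search its own collection as shown here.

```python
def ranked_lookalikes(response):
    """Turn a SearchFacesByImage response into (label, similarity) pairs, best first.
    Falls back to the FaceId when no ExternalImageId was set at indexing time."""
    pairs = [
        (m["Face"].get("ExternalImageId", m["Face"]["FaceId"]), m["Similarity"])
        for m in response.get("FaceMatches", [])
    ]
    return sorted(pairs, key=lambda pair: pair[1], reverse=True)


def search_lookalikes(path, collection_id, threshold=50.0, max_faces=5):
    """Query a Rekognition face collection with a local photo.
    Requires boto3, AWS credentials, and a configured AWS region."""
    import boto3  # imported lazily so ranked_lookalikes works without the AWS SDK

    client = boto3.client("rekognition")
    with open(path, "rb") as f:
        response = client.search_faces_by_image(
            CollectionId=collection_id,    # hypothetical collection of celebrity faces
            Image={"Bytes": f.read()},
            MaxFaces=max_faces,
            FaceMatchThreshold=threshold,  # lower value returns more, weaker candidates
        )
    return ranked_lookalikes(response)
```

`SearchFacesByImage` searches using the largest face it detects in the photo and only returns matches whose similarity meets `FaceMatchThreshold`, so lowering the threshold yields more (but weaker) look-alike candidates.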

Privacy, Security, and Data Integrity in Image Uploads

While the primary use case for these tools is entertainment, the act of uploading high-resolution biometric data to a third-party server raises significant technological and ethical questions regarding digital security and data privacy.

The Ethics of Biometric Data Storage

A “face print” is a form of biometric data, similar to a fingerprint. When a user uploads a photo, they are essentially providing a digital key to their identity. The primary technical concern is whether this data is stored or discarded. Professional-grade applications should ideally utilize “transient processing,” where the facial vector is generated, compared, and then immediately deleted. However, some platforms may use uploaded images to further train their machine learning models, leading to questions about informed consent and the “right to be forgotten.”
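“Transient processing” is mostly an architectural choice: the uploaded bytes and the derived face print stay in memory for the duration of one request, and only the match result leaves the function. The Python sketch below illustrates that shape; `embed` is a hypothetical stand-in for whatever model produces the face print, and the index is a plain dictionary. Python’s `del` only drops a reference and does not guarantee the memory is wiped, so the privacy property here comes from never writing the image or vector to disk, logs, or a database.

```python
import math
from typing import Callable, Dict, List, Sequence

# Hypothetical type: any function that turns image bytes into a face print.
Embedder = Callable[[bytes], List[float]]

def match_transiently(image_bytes: bytes, embed: Embedder,
                      index: Dict[str, Sequence[float]]) -> dict:
    """Embed, compare, and return only the match; nothing is persisted."""
    vector = embed(image_bytes)          # exists only inside this call
    best_name, best_dist = None, math.inf
    for name, ref in index.items():
        d = math.dist(vector, ref)       # Euclidean distance to each reference
        if d < best_dist:
            best_name, best_dist = name, d
    del vector                           # drop the reference; no copy was stored
    # The response deliberately excludes both the image and the face print.
    return {"match": best_name, "distance": best_dist}
```

A platform that instead wants to reuse uploads for training would need to store exactly what this function discards, which is why that choice should be disclosed and consented to.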

Metadata and Hidden Data Leaks

Beyond the facial image itself, most photos taken with a phone or camera carry embedded metadata, most commonly EXIF data. This can include the exact GPS coordinates of where the photo was taken, the device model used, and the timestamp. Responsible platforms should implement “data scrubbing” to remove this sensitive information before the image is processed by the AI, protecting the user from inadvertent location tracking or device fingerprinting.
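Scrubbing can be done without touching the pixels. In JPEG files, EXIF data (including any GPS tags) lives in an “APP1” segment near the start of the file, so a server can simply skip that segment while copying the rest. The Python sketch below does this with the standard library only. It assumes a JPEG whose metadata sits before the first scan, as is typical of camera output, and it does not handle PNG or HEIC files. Because it drops every APP1 segment, it also removes XMP metadata, which can likewise carry location data; and since the EXIF orientation tag is removed too, a production pipeline would usually rotate the image upright first.

```python
def strip_app1_segments(jpeg: bytes) -> bytes:
    """Return a copy of a JPEG file with every APP1 segment (Exif incl. GPS, and XMP)
    removed. The compressed image data is copied unchanged, so nothing is re-encoded."""
    if jpeg[:2] != b"\xff\xd8":
        raise ValueError("not a JPEG: missing SOI marker")
    out = bytearray(b"\xff\xd8")
    i = 2
    while i + 1 < len(jpeg):
        if jpeg[i] != 0xFF:
            raise ValueError(f"expected a marker at byte {i}")
        if jpeg[i + 1] == 0xFF:          # optional fill byte before a marker
            i += 1
            continue
        marker = jpeg[i + 1]
        if marker in (0xDA, 0xD9):       # Start of Scan or End of Image:
            out += jpeg[i:]              # copy everything from here on verbatim
            break
        # Segment length is big-endian and counts its own two bytes
        length = int.from_bytes(jpeg[i + 2:i + 4], "big")
        if marker != 0xE1:               # keep every segment except APP1
            out += jpeg[i:i + 2 + length]
        i += 2 + length
    return bytes(out)
```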

Terms of Service and Data Ownership

In the tech industry, the “Terms of Service” (ToS) act as the legal framework for data handling. From a technical and legal standpoint, users must be aware of whether they are granting the platform a “perpetual, royalty-free license” to their image. As facial recognition technology becomes more prevalent in banking and security, the protection of one’s digital likeness becomes a critical component of personal cybersecurity.

The Future of Image Recognition: From Entertainment to Utility

The “what celebrity do I look like” trend is merely the tip of the iceberg for facial analysis technology. The engineering behind these tools is being repurposed for more significant technological shifts.

Hyper-Realistic Avatars and the Metaverse

The techniques used to map a user’s face for celebrity matching are now being applied to create hyper-realistic 3D avatars for virtual and augmented reality. By tracking the same kind of facial landmarks frame by frame, AI can construct a digital twin that mimics a user’s real-time expressions. This technology is foundational for the next generation of social interaction and digital presence in the “Metaverse.”
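One well-known landmark-based building block for expression tracking is the eye aspect ratio (EAR) from Soukupová and Čech’s blink-detection work: the eye’s height divided by its width, which drops toward zero as the eye closes. The Python sketch below computes EAR from six eye landmarks and maps it to a 0-to-1 “eye closed” weight that could drive an avatar’s eyelids. The open/closed thresholds (0.30 and 0.10), the linear mapping, and the `blink_weight` name are illustrative assumptions, not values from any particular avatar system.

```python
import math

def eye_aspect_ratio(p1, p2, p3, p4, p5, p6):
    """EAR from six (x, y) eye landmarks: p1 and p4 are the eye corners,
    p2/p3 lie on the upper lid and p6/p5 directly below them on the lower lid."""
    vertical = math.dist(p2, p6) + math.dist(p3, p5)   # two eyelid openings
    horizontal = math.dist(p1, p4)                     # eye width
    return vertical / (2.0 * horizontal)

def blink_weight(ear, open_ear=0.30, closed_ear=0.10):
    """Hypothetical mapping of EAR to an avatar 'eye closed' weight in [0, 1]."""
    w = (open_ear - ear) / (open_ear - closed_ear)
    return min(1.0, max(0.0, w))                       # clamp to the valid range

# An open eye 10 units wide and 3 units tall
ear = eye_aspect_ratio((0, 0), (3, -1.5), (7, -1.5), (10, 0), (7, 1.5), (3, 1.5))
print(ear, blink_weight(ear))
```

Running this per video frame, for both eyes, gives a smoothly varying signal that an avatar renderer can apply to its eyelid animation.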

Personalized Retail and Health Tech

In the retail sector, look-alike technology is being used for “virtual try-ons.” If an AI knows your facial structure and which celebrities have similar features, it can recommend eyewear, makeup, or hairstyles that are computationally predicted to suit your specific geometry. Furthermore, in the medical tech field, researchers are looking at how facial symmetry and feature shifts (the same metrics used in look-alike apps) can be monitored over time to detect early signs of neurological or genetic conditions.
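A simple way to quantify facial symmetry from landmarks is to mirror each left-side point across the face’s vertical midline and measure how far it lands from its right-side counterpart. The Python sketch below assumes an already-aligned, upright face (as produced by the landmark normalization step described earlier), takes the midline position and inter-ocular distance as inputs, and divides by the inter-ocular distance so scores are comparable across photo sizes. It is an illustrative metric, not a validated clinical measure.

```python
import math

def asymmetry_score(pairs, midline_x, interocular):
    """Mean distance between each right-side landmark and its mirrored left-side
    partner, normalised by inter-ocular distance (0.0 means perfectly symmetric).
    `pairs` is a list of ((left_x, left_y), (right_x, right_y)) landmark pairs;
    assumes an upright face whose midline is the vertical line x = midline_x."""
    total = 0.0
    for (lx, ly), (rx, ry) in pairs:
        mirrored = (2 * midline_x - lx, ly)      # reflect the left point across the midline
        total += math.dist(mirrored, (rx, ry))
    return total / (len(pairs) * interocular)

# Eye corners and mouth corners; the right mouth corner sits 6 units lower
pairs = [((-30, 0), (30, 0)), ((-20, 40), (20, 46))]
print(asymmetry_score(pairs, midline_x=0.0, interocular=60.0))
```

Tracking such a score across photos taken over months, rather than judging a single photo, is the kind of change monitoring the research described above is exploring.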

Advancements in Computer Vision Robustness

The future of this technology lies in overcoming current limitations, such as “occlusion” (when part of the face is covered) and low-light degradation. Engineers are increasingly training Vision Transformers, a newer type of AI model that relates every region of an image to every other region, to better understand the context of an image. The goal is accurate celebrity matching even from grainy, partially obscured, or stylized photos.
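Vision Transformers begin by cutting an image into a grid of small square patches and treating each patch as a “token,” much as a language model treats words. Self-attention then lets every patch draw information from every other patch, which can help the model cope when some patches are hidden. The Python sketch below shows only that first patching step on a toy 4×4 grid; a real model would then project each flattened patch into an embedding and add position information. For scale, the original ViT design used 16×16 patches on 224×224 images, giving 196 tokens.

```python
def to_patches(image, patch):
    """Split an H x W grid into non-overlapping patch x patch tiles, returned in
    raster order, each flattened row by row into a single token (list of values)."""
    h, w = len(image), len(image[0])
    if h % patch or w % patch:
        raise ValueError("image size must be divisible by the patch size")
    tokens = []
    for top in range(0, h, patch):
        for left in range(0, w, patch):
            tokens.append([image[top + dy][left + dx]
                           for dy in range(patch) for dx in range(patch)])
    return tokens

# Toy 4x4 "image" whose pixel values are 0..15
image = [[4 * r + c for c in range(4)] for r in range(4)]
print(to_patches(image, 2))  # -> [[0, 1, 4, 5], [2, 3, 6, 7], [8, 9, 12, 13], [10, 11, 14, 15]]
```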

Conclusion

The “celebrity look-alike” picture upload is a fascinating intersection of entertainment and high-level computer science. While it serves as a fun digital distraction, it is powered by the same convolutional neural networks, facial landmarking techniques, and biometric data pipelines that are shaping the future of global technology. As these tools become more integrated into our digital lives, the focus will shift from simple identification to complex interaction, making the technology behind the “look-alike” one of the most significant pillars of modern artificial intelligence. For the end user, staying informed about how these tools work is not just about finding a Hollywood twin; it is about understanding the digital footprint of one’s own identity in an increasingly automated world.

aViewFromTheCave is a participant in the Amazon Services LLC Associates Program, an affiliate advertising program designed to provide a means for sites to earn advertising fees by advertising and linking to Amazon.com. Amazon, the Amazon logo, AmazonSupply, and the AmazonSupply logo are trademarks of Amazon.com, Inc. or its affiliates. As an Amazon Associate we earn affiliate commissions from qualifying purchases.