In the realm of modern technology, precision is not merely a preference; it is a fundamental requirement. Whether you are developing an algorithm for an autonomous vehicle, calibrating sensors for an industrial Internet of Things (IoT) network, or designing the user interface for a high-frequency trading platform, the way you handle numerical data determines the reliability of your system. At the heart of this numerical integrity lies the concept of significant digits—often referred to as “significant figures.”

Significant digits are the digits in a number that carry meaningful information about the precision of a measurement. In a world increasingly driven by Big Data and Artificial Intelligence (AI), understanding the rules for significant digits is essential for preventing cumulative errors that can lead to software glitches, hardware failures, or misleading analytical insights.

The Foundational Rules of Significant Digits in Technical Computing

Before a software engineer can write a single line of code involving complex calculations, they must understand the mathematical constraints of the data they are processing. Significant digits tell us how much “certainty” we have in a measurement. In technology, where we often deal with physical measurements or scientific simulations, these rules prevent us from claiming more precision than our hardware can actually provide.

1. Identifying Non-Zero Digits and Trapped Zeros

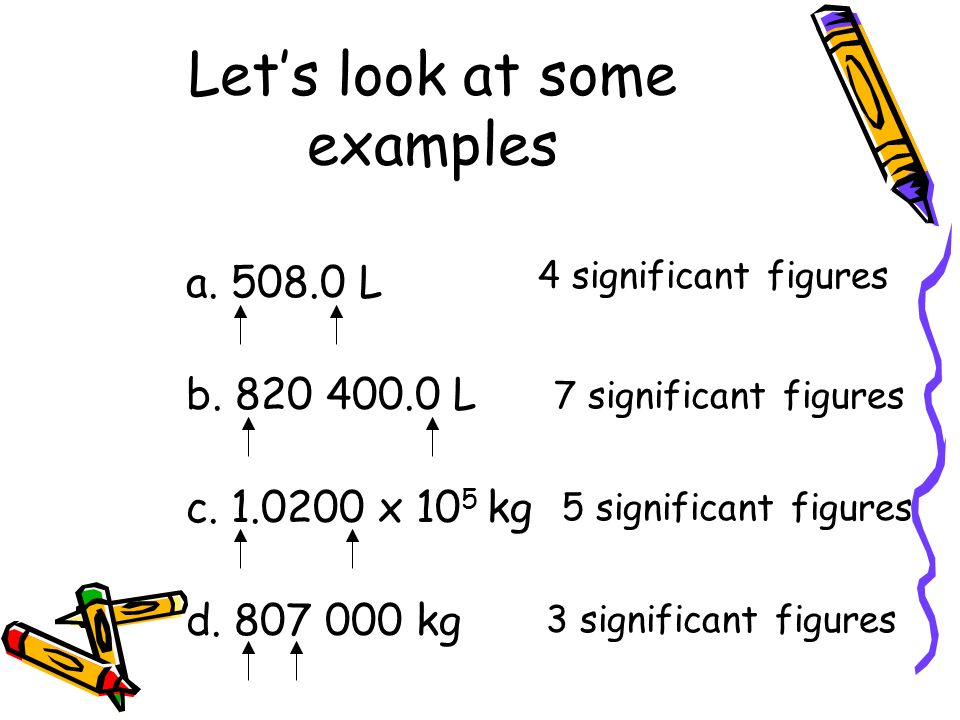

The first and most basic rule is that all non-zero digits are always significant. For instance, if a sensor reads 124.5 units, all four digits contribute to the precision of that measurement.

“Trapped zeros” (zeros that appear between two non-zero digits) are also always significant. In a digital reading of 10.005, the zeros are essential because they record a measured value of zero at those specific decimal places. In software, treating these zeros as mere placeholders can lead to a value like 10.005 being rounded to 10, an error that compounds when the value is reused inside iterative loops.

2. The Nuance of Leading and Trailing Zeros

Leading zeros are never significant. They act merely as placeholders that fix the position of the decimal point. For example, the value 0.00045 has only two significant digits (4 and 5). This distinction matters when choosing numeric types in languages like C++ or Java: in a binary floating-point type such as double, the exponent field absorbs those placeholder zeros, so 0.00045 occupies the same 64 bits and the same significand width as 4.5. What limits a value’s precision is how many significant digits it needs, not where its decimal point falls.
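Python’s standard decimal module makes the placeholder role of leading zeros visible. This minimal sketch uses Decimal.as_tuple(), which splits a value into its coefficient digits and a power-of-ten exponent; 0.00045 and 4.5 end up with the same two digits and differ only in the exponent.

```python
from decimal import Decimal

# Decompose each value into (sign, coefficient digits, base-10 exponent).
small = Decimal("0.00045").as_tuple()
large = Decimal("4.5").as_tuple()

print(small)  # DecimalTuple(sign=0, digits=(4, 5), exponent=-5)
print(large)  # DecimalTuple(sign=0, digits=(4, 5), exponent=-1)

# The leading zeros of 0.00045 never reach the coefficient: they are
# absorbed into the exponent, so both values carry two significant digits.
```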

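Trailing zeros are more subtle. By the usual convention, they are significant only if the number contains a decimal point; a value written as 1500 is ambiguous, which is why scientific notation (1.500 × 10³) is used to state its precision explicitly. If a digital thermometer reports a temperature of 37.00°C, those two trailing zeros indicate that the device resolves temperature to the hundredths place; a reading of 37°C would imply resolution only to whole degrees. In data science, dropping trailing zeros after a decimal point discards metadata about the instrument’s resolution, and this happens silently whenever such readings are parsed as floating-point numbers, because 37.00 and 37 become the same binary value.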
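The rules so far can be captured in a short counting function. This is a sketch under stated assumptions: the hypothetical count_sig_figs helper works on the original text of a reading (converting "37.00" to a float would discard the trailing zeros), treats trailing zeros in a number without a decimal point as not significant, and ignores any scientific-notation exponent.

```python
def count_sig_figs(reading: str) -> int:
    """Count significant digits in a decimal reading given as text.

    Hypothetical helper. Assumes the common textbook convention that
    trailing zeros without a decimal point (e.g. "1500") are not significant.
    """
    text = reading.strip().lstrip("+-")
    # Only the mantissa matters; an exponent such as "e3" just moves the point.
    mantissa = text.lower().split("e")[0]
    has_point = "." in mantissa
    digits = mantissa.replace(".", "", 1)
    if not digits or any(c not in "0123456789" for c in digits):
        raise ValueError(f"not a plain decimal number: {reading!r}")

    # Leading zeros are placeholders, never significant.
    digits = digits.lstrip("0")
    # Trailing zeros count only when a decimal point is present.
    if not has_point:
        digits = digits.rstrip("0")
    # Every remaining digit, including trapped zeros, is significant.
    # (An all-zero reading such as "0.00" returns 0; conventions for zero vary.)
    return len(digits)


for reading in ("124.5", "10.005", "0.00045", "37.00", "1500", "1500."):
    print(reading, count_sig_figs(reading))
```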

3. Exact Numbers and Computational Constants

In technology, we frequently encounter “exact numbers,” which are values known with complete certainty. These include counts (e.g., 8 CPU cores) or defined constants (e.g., 1 byte = 8 bits). These numbers are considered to have an infinite number of significant digits. When performing calculations in a tech environment, it is crucial to distinguish between these absolute integers and measured floating-point values to ensure the final output reflects the correct degree of uncertainty.
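A small worked example shows why exact numbers do not limit precision. Assume a hypothetical measured power draw of 4.35 W per core (three significant digits) multiplied by an exact count of 8 cores: only the measured value constrains the result, so the product is rounded to three significant digits. The round_sig helper is illustrative, built on the standard decimal module.

```python
from decimal import Decimal, ROUND_HALF_UP


def round_sig(value: Decimal, sig: int) -> Decimal:
    """Round a nonzero Decimal to `sig` significant digits (illustrative helper)."""
    # adjusted() is the power of ten of the leading significant digit.
    step = Decimal(1).scaleb(value.adjusted() - sig + 1)
    return value.quantize(step, rounding=ROUND_HALF_UP)


watts_per_core = Decimal("4.35")  # measured: three significant digits
cores = 8                         # exact count: no limit on precision

raw_total = watts_per_core * cores  # Decimal("34.80")
# Only the measured factor sets the precision of the result.
sig = len(watts_per_core.as_tuple().digits)
total = round_sig(raw_total, sig)
print(total)  # 34.8
```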

Implementing Precision in Software Development and AI

The transition from theoretical mathematics to practical software implementation is where the rules for significant digits meet the reality of binary logic. Computers do not see numbers the way humans do; they see bits. This discrepancy often introduces challenges in maintaining numerical fidelity.

Floating-Point Arithmetic and the IEEE 754 Standard

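Most modern software relies on the IEEE 754 standard for floating-point arithmetic. This standard defines how real numbers are approximated in binary formats such as 32-bit single precision and 64-bit double precision. However, because a finite binary fraction cannot exactly represent most decimal fractions (0.1, for instance, repeats forever in binary), rounding errors occur.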
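Python’s standard library can expose exactly what a 64-bit double stores for 0.1. Converting a float to Decimal or Fraction is exact, so this short sketch prints the true stored value rather than the shortened form Python displays by default.

```python
from decimal import Decimal
from fractions import Fraction

x = 0.1  # parsed into the nearest IEEE 754 double

# Hexadecimal float: binary significand and exponent, no decimal rounding.
print(x.hex())      # 0x1.999999999999ap-4
# Exact decimal expansion of the stored binary value.
print(Decimal(x))   # 0.1000000000000000055511151231257827021181583404541015625
# The stored value as an exact ratio whose denominator is 2**55.
print(Fraction(x))  # 3602879701896397/36028797018963968
```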

When working with floating-point values, developers must be aware that a number like 0.1 is stored only approximately: in 32-bit single precision it becomes roughly 0.10000000149, and in 64-bit double precision roughly 0.10000000000000000555. As a result, 0.1 + 0.2 does not compare exactly equal to 0.3 in double precision. Rather than testing for exact equality, developers compare floating-point numbers within a tolerance, often called an “epsilon” value, chosen to reflect how many digits of the result are actually meaningful. In mission-critical software, such as flight control systems, this kind of careful precision management helps keep tiny representation errors from compounding into catastrophic failures.
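The comparison problem and the tolerance-based fix can be shown with the standard library. This sketch uses math.isclose, whose rel_tol and abs_tol parameters play the role of the “epsilon”; the tolerance values here are illustrative assumptions, and a real system should derive them from how many digits of its results are meaningful. It also uses struct to round-trip 0.1 through 32-bit single precision.

```python
import math
import struct

total = 0.1 + 0.2
print(total)         # 0.30000000000000004
print(total == 0.3)  # False: exact equality fails on binary rounding error

# Compare within a relative tolerance (about nine significant digits here),
# plus an absolute floor so values near zero can also compare equal.
print(math.isclose(total, 0.3, rel_tol=1e-9, abs_tol=1e-12))  # True

# Round-trip 0.1 through IEEE 754 single precision ("f" = 32-bit float).
single = struct.unpack("f", struct.pack("f", 0.1))[0]
print(f"{single:.11f}")  # 0.10000000149
```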

Significant Digits in Machine Learning and AI

In the world of Machine Learning (ML), significant digits play a specialized role in model weights and biases. Training a neural network can involve billions of calculations. A 32-bit float (FP32) carries a 24-bit significand, roughly seven significant decimal digits, while a 16-bit float (FP16) carries an 11-bit significand, roughly three. If a model needs only FP16 precision, the additional digits stored in FP32 add little to its accuracy while consuming extra computational power and memory.
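The gap between FP32 and FP16 can be made concrete with Python’s struct module, whose "e" and "f" format codes pack a value as IEEE 754 half and single precision. This sketch rounds π through each format and estimates decimal digits from significand width (significand bits × log₁₀ 2); it illustrates storage precision only, not the accuracy of any particular model.

```python
import math
import struct


def roundtrip(value: float, fmt: str) -> float:
    """Store `value` in the given struct float format and read it back."""
    return struct.unpack(fmt, struct.pack(fmt, value))[0]


def decimal_digits(significand_bits: int) -> float:
    """Approximate significant decimal digits for a binary significand."""
    return significand_bits * math.log10(2)


fp16 = roundtrip(math.pi, "e")  # binary16: 11-bit significand (10 stored + 1 implicit)
fp32 = roundtrip(math.pi, "f")  # binary32: 24-bit significand (23 stored + 1 implicit)

print(fp16, round(decimal_digits(11), 2))  # 3.140625 3.31
print(fp32, round(decimal_digits(24), 2))  # 3.1415927410125732 7.22
```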

“Quantization” is a technique in which numerical precision is deliberately reduced, for example from FP32 to 8-bit integers, to make AI models smaller and faster on mobile devices. By identifying which digits actually matter to a model’s accuracy, engineers can often compress models with little loss in performance, a process that has become central to “Edge AI” deployments.
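A minimal sketch of one common scheme, symmetric per-tensor 8-bit quantization, shows the idea: each weight is mapped to an integer in [-127, 127] using a single scale factor. Production frameworks use more elaborate variants (per-channel scales, zero points, calibration data), so the function names and sample weights here are illustrative.

```python
def quantize_int8(weights: list[float]) -> tuple[list[int], float]:
    """Symmetric per-tensor quantization to signed 8-bit integers."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    # One scale maps the largest magnitude onto 127; guard all-zero input.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q: list[int], scale: float) -> list[float]:
    """Map the integers back to approximate float weights."""
    return [v * scale for v in q]


weights = [0.8123, -1.0, 0.0042, 0.4999]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)

# Each restored weight should lie within half a quantization step of the original.
errors = [abs(a - b) for a, b in zip(weights, restored)]
print(q, max(errors) <= scale / 2 + 1e-12)
```

Each weight now needs one byte instead of four, at the cost of an error bounded by half the scale step.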

Data Validation and Input Sanitization

Software integrity starts with the input. When a system receives data from an external API or a user, it must validate the precision. If a financial technology (FinTech) application receives a transaction amount with five decimal places when the currency supports only two, the system needs an explicit validation rule, based on the currency’s permitted precision, that either rejects the excess digits or rounds them under a documented rounding policy. Proper sanitization ensures that the backend databases maintain a consistent level of precision, preventing “drift” in financial reporting or scientific logs.
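A sketch of such a validation rule, using Python’s decimal module so amounts are never routed through binary floats. The sanitize_amount name, the strict flag, and the choice of banker’s rounding (ROUND_HALF_EVEN) for the lenient mode are assumptions for illustration, not a prescribed policy.

```python
from decimal import Decimal, InvalidOperation, ROUND_HALF_EVEN


def sanitize_amount(raw: str, places: int = 2, strict: bool = True) -> Decimal:
    """Validate an incoming amount against a currency's permitted decimal places.

    Hypothetical helper: strict=True rejects excess digits; strict=False
    rounds them with banker's rounding (an assumed policy).
    """
    try:
        amount = Decimal(raw)
    except InvalidOperation:
        raise ValueError(f"not a number: {raw!r}") from None
    if not amount.is_finite():
        raise ValueError(f"not a finite amount: {raw!r}")

    step = Decimal(1).scaleb(-places)  # a step of 0.01 for two places
    rounded = amount.quantize(step, rounding=ROUND_HALF_EVEN)
    if strict and rounded != amount:
        raise ValueError(f"{raw!r} has more than {places} decimal places")
    return rounded


print(sanitize_amount("19.9"))                  # 19.90
print(sanitize_amount("10.125", strict=False))  # 10.12
```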

The Role of Significant Digits in Hardware Engineering and IoT

The physical layer of technology—the hardware—is where the rules for significant digits are most strictly enforced by the laws of physics. Every sensor, from the GPS in a smartphone to the pressure gauge in an industrial plant, has a limit to its resolution.

Sensor Accuracy and Digital Resolution

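An Analog-to-Digital Converter (ADC) translates real-world signals into binary data. The “bit-depth” of the ADC sets an upper bound on the number of significant digits the hardware can produce: a 12-bit converter distinguishes 4,096 levels (about 3.6 decimal digits), while a 24-bit converter distinguishes 16,777,216 levels (about 7.2 decimal digits). In practice, electrical noise lowers the effective number of bits (ENOB), so the usable precision is often smaller than the nominal bit-depth suggests.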
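The arithmetic behind those figures is short. This sketch assumes a hypothetical 3.3 V full-scale input and the common convention that one step (LSB) equals full scale divided by 2 to the power of the bit-depth; it computes nominal resolution only and does not model noise or ENOB.

```python
import math


def adc_resolution(bits: int, full_scale_volts: float) -> tuple[int, float, float]:
    """Return (levels, volts per step, approximate decimal digits)."""
    levels = 2 ** bits
    # Assumes the convention LSB = full scale / 2**bits.
    lsb_volts = full_scale_volts / levels
    digits = bits * math.log10(2)
    return levels, lsb_volts, digits


for bits in (12, 24):
    levels, lsb, digits = adc_resolution(bits, full_scale_volts=3.3)
    print(f"{bits}-bit: {levels} levels, {lsb * 1e6:.3f} µV/step, ~{digits:.1f} digits")
```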

A common mistake in tech documentation is “false precision.” This happens when a sensor with only two significant digits of accuracy provides data that is then displayed in a software dashboard with eight decimal places. This is misleading and potentially dangerous in engineering contexts. Professional tech systems must align their software display logic with the hardware’s actual significant digit capacity to maintain “data fidelity.”
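One way to align display logic with hardware capacity is to round every value to the sensor’s rated number of significant digits before it reaches the dashboard. The format_reading function below is a hypothetical sketch; the sig argument stands in for whatever precision the sensor’s datasheet supports.

```python
from decimal import Decimal, ROUND_HALF_UP


def format_reading(value: float, sig: int) -> str:
    """Format a sensor value with no more than `sig` significant digits."""
    d = Decimal(repr(value))  # shortest decimal text that round-trips the float
    if d == 0:
        return "0"
    # adjusted() is the power of ten of the leading significant digit.
    step = Decimal(1).scaleb(d.adjusted() - sig + 1)
    # Fixed-point output keeps meaningful trailing zeros, e.g. "37.00".
    return format(d.quantize(step, rounding=ROUND_HALF_UP), "f")


raw = 23.4567891234  # what the software computed
print(format_reading(raw, 3))          # 23.5
print(format_reading(37.0, 4))         # 37.00
print(format_reading(0.000451234, 2))  # 0.00045
```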

Propagating Uncertainty in Automated Systems

In automated manufacturing and robotics, one calculation’s output is often the next calculation’s input. This is known as the propagation of uncertainty. The rule of thumb in engineering is that your final result cannot be more precise than your least precise measurement.

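If a robotic arm moves 10.5 meters (known to one decimal place) and that distance is combined with a sensor reading of 2.1234 meters (known to four decimal places), the result must be rounded to match the least precise input. For addition and subtraction, the rule is applied to decimal places rather than significant figures: 10.5 + 2.1234 = 12.6234, which is reported as 12.6 meters. (For multiplication and division, the result keeps the fewest significant figures among the inputs.) Technology stacks that fail to account for this propagation risk “mechanical drift,” where a robot’s movements become increasingly inaccurate over time because the software overestimates its own precision.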
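For sums and differences, the textbook propagation rule keeps only as many decimal places as the least precise input. The hypothetical add_measurements helper below sketches that rule with Python’s decimal module; inputs are passed as strings so their stated precision survives, and quantize falls back on Decimal’s default ROUND_HALF_EVEN rounding.

```python
from decimal import Decimal


def add_measurements(*values: str) -> Decimal:
    """Sum measured values, keeping the least precise input's decimal places."""
    decimals = [Decimal(v) for v in values]
    # The least precise input has the largest (least negative) exponent.
    coarsest = max(d.as_tuple().exponent for d in decimals)
    total = sum(decimals, Decimal(0))
    return total.quantize(Decimal(1).scaleb(coarsest))


print(add_measurements("10.5", "2.1234"))   # 12.6
print(add_measurements("10.5", "-2.1234"))  # 8.4
```

The subtraction case shows why the rule is stated in decimal places: 8.4 has only two significant figures, even though both inputs had three or more.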

UI/UX Design: Communicating Precision to the User

While the “under-the-hood” calculations require rigorous adherence to significant digit rules, the way this information is presented to a human user requires a different strategy. UI/UX design is about the balance between technical accuracy and cognitive load.

When to Show More Digits: Scientific and Specialized Software

In specialized fields like bioinformatics, cryptography, or astronomical modeling, the user needs to see every significant digit. In these tech niches, truncated data is useless data. Cryptographic keys are a special case: they are built from very large prime numbers that are exact integers rather than measurements, so every digit matters and a single altered digit invalidates the key. Such values must be handled with exact, arbitrary-precision integer arithmetic rather than floating point, and the UI must be designed to display long numerical strings without obscuring the most critical information.
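The difference between exact integers and floating-point values is easy to demonstrate in Python, whose built-in int has arbitrary precision. This sketch uses the Mersenne prime 2**61 - 1 as a small stand-in; real cryptographic primes are far larger, and this is not a key-generation routine.

```python
# 2**61 - 1 is a known Mersenne prime: an exact integer, not a measurement.
m = 2**61 - 1

# A 64-bit float has a 53-bit significand, so it cannot hold all 61 bits.
as_float = float(m)
print(int(as_float) == m)  # False: the float rounded to 2**61

# Python ints are arbitrary precision, so modular arithmetic stays exact.
# Fermat's little theorem: for prime m, pow(3, m - 1, m) == 1.
print(pow(3, m - 1, m))  # 1
```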

Simplifying Data for Consumer Apps

Conversely, for a consumer-facing app, such as a fitness tracker or a weather app, displaying too many significant digits can be counterproductive. If a smartwatch tells a user they burned 342.18943 calories, it is conveying a false sense of precision: the heart-rate and motion sensors behind that estimate cannot support anywhere near eight significant digits.

Effective UX design involves “graceful degradation” of precision. The software should perform the calculations with the appropriate significant digits in the backend but present a rounded, readable number to the user. This maintains the professional integrity of the tool while ensuring the data is actionable for a non-technical audience.

Conclusion: The Strategic Importance of Precision

Understanding and applying the rules for significant digits is a hallmark of professional technology development. It is the bridge between the messy, continuous variables of the physical world and the discrete, logical world of digital systems.

By adhering to these rules, tech professionals can:

- Ensure Computational Integrity: Preventing rounding errors in complex software.

- Optimize Resource Allocation: Reducing the bit-depth of data where high precision is not significant (as seen in AI quantization).

- Enhance System Safety: Ensuring that hardware and robotics operate within the realistic bounds of their sensor accuracy.

- Improve User Trust: Providing data that is accurate, honest, and easy to interpret.

In an era where we rely on algorithms to make life-altering decisions—from medical diagnoses to autonomous navigation—the humble significant digit remains one of the most important tools in our technological toolkit. Precision is not just a number; it is the foundation of digital trust.

aViewFromTheCave is a participant in the Amazon Services LLC Associates Program, an affiliate advertising program designed to provide a means for sites to earn advertising fees by advertising and linking to Amazon.com. Amazon, the Amazon logo, AmazonSupply, and the AmazonSupply logo are trademarks of Amazon.com, Inc. or its affiliates. As an Amazon Associate we earn affiliate commissions from qualifying purchases.