In the rapidly evolving landscape of technology, innovation is rarely the result of mere guesswork. Whether a software engineer is optimizing a search algorithm, a product manager is launching a new feature, or a data scientist is refining a machine learning model, every change is a high-stakes experiment. At the heart of these experiments lies the scientific method, specifically the framework of statistical inference. To navigate this framework, tech professionals must master two fundamental concepts: the null hypothesis and the alternative hypothesis.

These concepts are not just academic abstractions; they are the gatekeepers of digital progress. They provide a structured way to determine if a new update, a security patch, or a UI change truly provides a significant benefit or if the observed results are simply the product of random noise. Understanding these hypotheses is essential for anyone involved in data-driven decision-making within the tech sector.

The Core Framework: Defining Null and Alternative Hypotheses

Before a single line of code is written for an A/B test or a predictive model is deployed, researchers must define what they are trying to prove. This is where the null and alternative hypotheses come into play. They are mutually exclusive statements that represent two different interpretations of the data.

The Null Hypothesis ($H_0$): The Status Quo

The null hypothesis, denoted as $H_0$, is the baseline assumption. In the context of technology, the null hypothesis typically posits that there is no effect, no relationship, or no difference between two groups. It represents the “status quo.” If you are testing a new compression algorithm for a cloud storage service, your null hypothesis would be that the new algorithm does not reduce file size more effectively than the current one.

In the tech world, the null hypothesis acts as a “skeptic.” It assumes that any perceived improvement in a software’s performance or a user’s engagement is merely a coincidence or a result of sampling error. The goal of testing is to determine whether there is enough evidence to reject this skepticism in favor of something new.

The Alternative Hypothesis ($H_a$): The Challenger

The alternative hypothesis, denoted as $Ha$ or $H1$, is the statement that challenges the status quo. It represents what the technologist or researcher actually hopes to find: that there is a significant effect or difference. If the null hypothesis says “nothing has changed,” the alternative hypothesis says “something significant has happened.”

Using our compression algorithm example, the alternative hypothesis would state that the new algorithm significantly reduces file sizes compared to the existing standard. In software development, the alternative hypothesis is the “innovation” we are trying to validate. It is the driving force behind research and development, asserting that a new tool, toolset, or methodology provides a measurable advantage.

Implementing Hypothesis Testing in Software Development and A/B Testing

In the tech industry, the most common application of these hypotheses is in A/B testing—a process where two versions of a digital product are compared to see which performs better. This methodology is the backbone of platforms like Netflix, Amazon, and Google.

Optimizing User Interface (UI) and Experience (UX)

When a social media platform considers changing the color of its “Sign Up” button, it isn’t making a choice based on aesthetics alone. It is conducting a rigorous statistical test.

- $H_0$: The new button color has no effect on the user click-through rate.

- $H_a$: The new button color increases the click-through rate significantly.

By deploying the new color to a small subset of users (the “test” group) while keeping the original for others (the “control” group), data scientists can collect performance metrics. They use the null and alternative hypotheses to filter out statistical “flukes.” Without this structure, a tech company might spend millions of dollars rolling out a feature that actually provides zero utility, simply because they misinterpreted a temporary spike in data.

Algorithm Validation: Is Your AI Actually Better?

The development of Artificial Intelligence and Machine Learning (ML) relies heavily on hypothesis testing. When a developer builds a new recommendation engine, they must prove it outperforms the old one.

The null hypothesis might state that the new ML model’s accuracy is less than or equal to the current model’s accuracy. The alternative hypothesis would state that the new model’s accuracy is superior. By testing these hypotheses against a validation dataset, engineers can ensure that the “intelligence” they are adding to the software is real and reproducible, rather than a result of over-fitting or biased data.

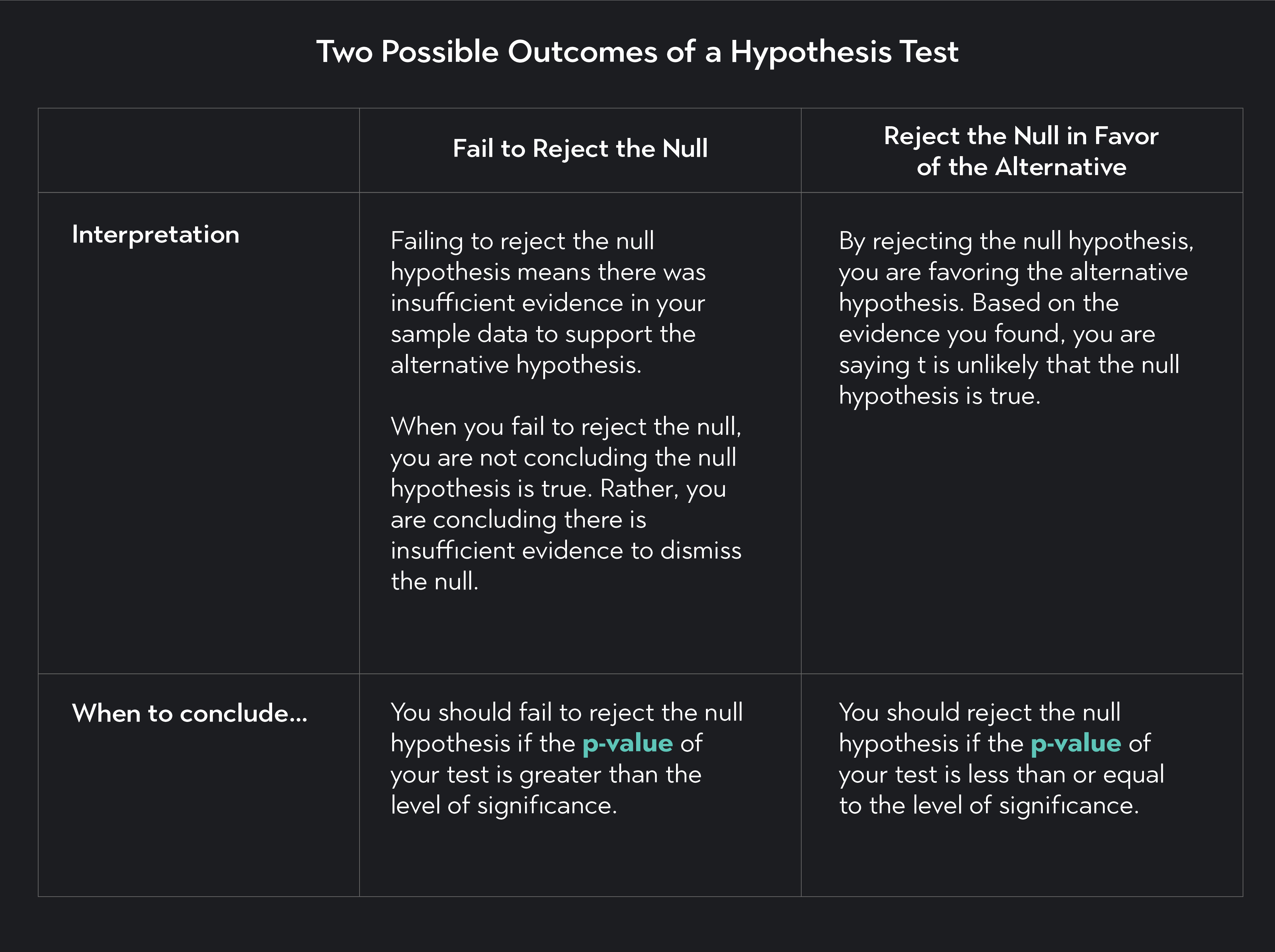

The Mechanics of Decision Making: P-Values and Significance Levels

Defining the hypotheses is only half the battle. The next step is determining whether the data provides enough evidence to ditch the null hypothesis. This is where the technical metrics of P-values and alpha levels ($alpha$) come into play.

Understanding the P-Value in Tech Applications

The P-value is a numerical representation of how likely it is that you would see the observed results if the null hypothesis were true. In tech, a low P-value suggests that the results are not just a random occurrence.

For instance, if a cybersecurity firm is testing a new threat-detection script and finds a P-value of 0.02, it means there is only a 2% chance that the increase in detected threats happened by accident. For tech leaders, the P-value is the “confidence score” of their innovation. It helps stakeholders decide whether a new software version is ready for a global rollout or if it needs more refinement in the sandbox environment.

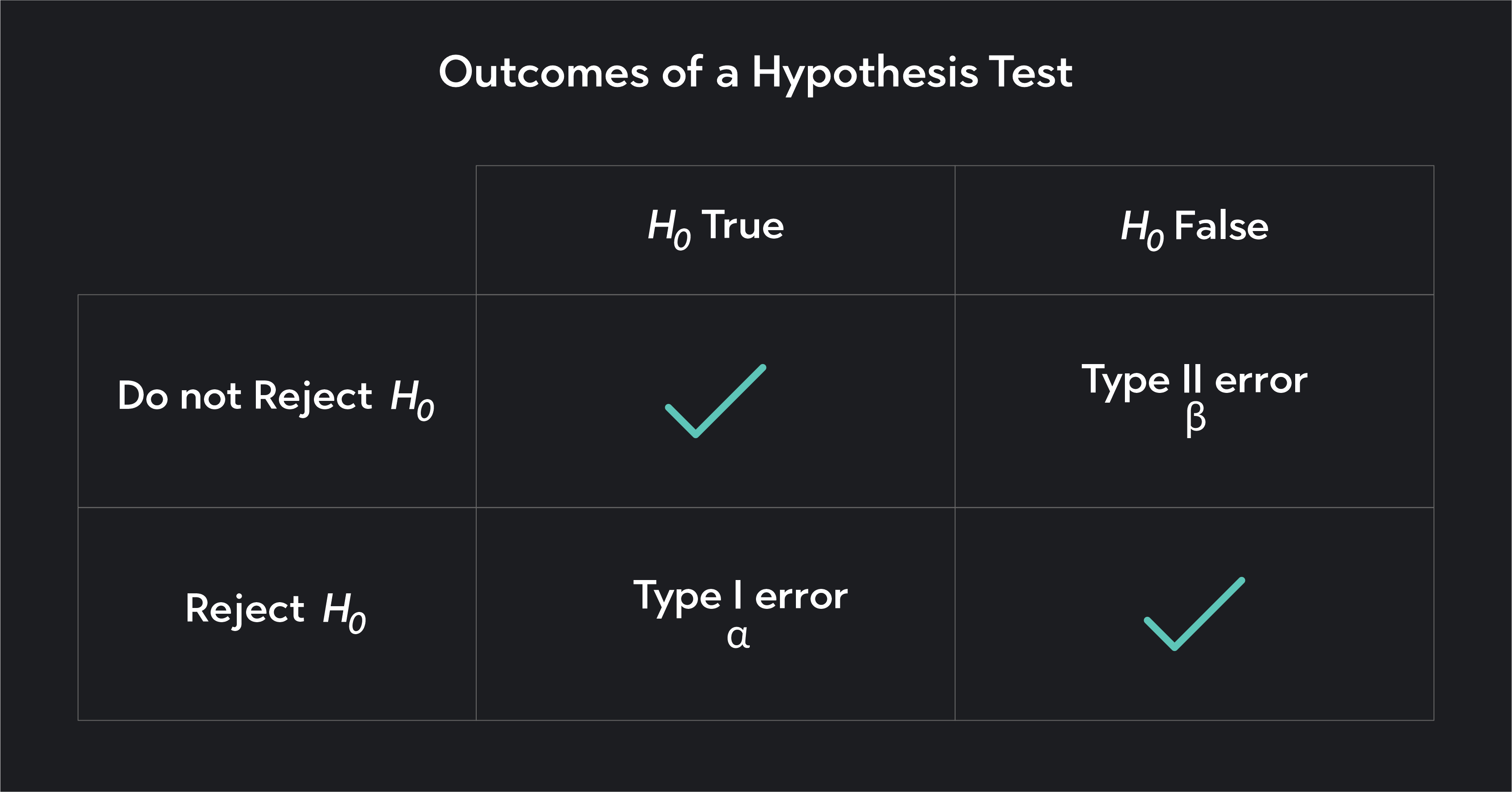

Type I and Type II Errors in Software Deployment

In the high-speed world of tech, mistakes happen. Hypothesis testing acknowledges this through the concepts of Type I and Type II errors.

- Type I Error (False Positive): This occurs when we reject the null hypothesis even though it is true. In software terms, this is like deploying a “performance-enhancing” patch that doesn’t actually do anything, costing the company time and resources for zero gain.

- Type II Error (False Negative): This occurs when we fail to reject a false null hypothesis. This is the “missed opportunity” error—when an engineer develops a truly revolutionary algorithm, but the test fails to recognize its significance, leading the company to scrap a potentially game-changing innovation.

Balancing these errors is critical for digital security and system reliability. A security tool with too many Type I errors (false alarms) leads to “alert fatigue,” while too many Type II errors (missed threats) leads to catastrophic breaches.

Real-World Tech Scenarios: From Cloud Infrastructure to Cybersecurity

To fully appreciate the utility of null and alternative hypotheses, one must look at their application across various technical disciplines.

Latency Testing in Distributed Systems

For cloud service providers like AWS or Azure, latency is a critical metric. When engineers implement a new load-balancing protocol, they use hypothesis testing to ensure the change actually speeds up data delivery across different geographic regions.

The null hypothesis would state that the new protocol does not decrease the average response time. The alternative hypothesis would state that the response time is significantly reduced. By testing this across millions of requests, they can ensure that their infrastructure remains competitive and reliable.

Security Protocol Efficacy

In cybersecurity, hypothesis testing is used to evaluate the strength of encryption and the efficacy of firewalls. If a tech team introduces a new biometric authentication layer, they set their hypotheses to test the “False Acceptance Rate” (FAR).

- $H_0$: The new biometric system has a FAR equal to or higher than the current password system.

- $H_a$: The new biometric system has a significantly lower FAR than the current system.

Strict statistical testing ensures that the “security” added to an app isn’t just “security theater,” but a robust, measurable improvement in the digital defense perimeter.

Conclusion: Why Statistical Rigor is the Backbone of Tech

In an era defined by Big Data and AI, the ability to distinguish between meaningful trends and random fluctuations is what separates successful tech giants from failing startups. The null and alternative hypotheses provide the logical framework necessary for this distinction.

By starting with a skeptical baseline (the null) and requiring a high standard of evidence to support a new claim (the alternative), the tech industry maintains a rigorous standard of truth. This scientific approach ensures that software updates improve user lives, AI models provide accurate insights, and digital infrastructure remains fast and secure. For any tech professional, mastering the art of hypothesis testing is not just about understanding statistics—it’s about building a better, more reliable digital future.

aViewFromTheCave is a participant in the Amazon Services LLC Associates Program, an affiliate advertising program designed to provide a means for sites to earn advertising fees by advertising and linking to Amazon.com. Amazon, the Amazon logo, AmazonSupply, and the AmazonSupply logo are trademarks of Amazon.com, Inc. or its affiliates. As an Amazon Associate we earn affiliate commissions from qualifying purchases.