In the rapidly evolving landscape of information technology, the most sophisticated hardware often takes its cues from the natural world. The term “glutamate” is most often heard in culinary circles or biology labs, but the signaling it performs in the brain has become a useful reference point for neuromorphic computing and brain-inspired artificial intelligence (AI). To understand what glutamates mean in a technological context, we must look beyond the amino acid itself and toward the “Excitatory Signal”: the biological mechanism that informs how engineers design event-driven software architectures and low-power hardware.

As transistor scaling approaches physical limits and the pace predicted by Moore’s Law slows, engineers and data scientists are looking to the brain’s primary excitatory neurotransmitter system, the glutamate pathway, to build the next generation of “brain-inspired” tech. This article explores the intersection of biochemistry and computing, detailing how the study of glutamates is shaping the future of digital security, AI tools, and hardware efficiency.

1. The Biological OS: What Are Glutamates in Computational Terms?

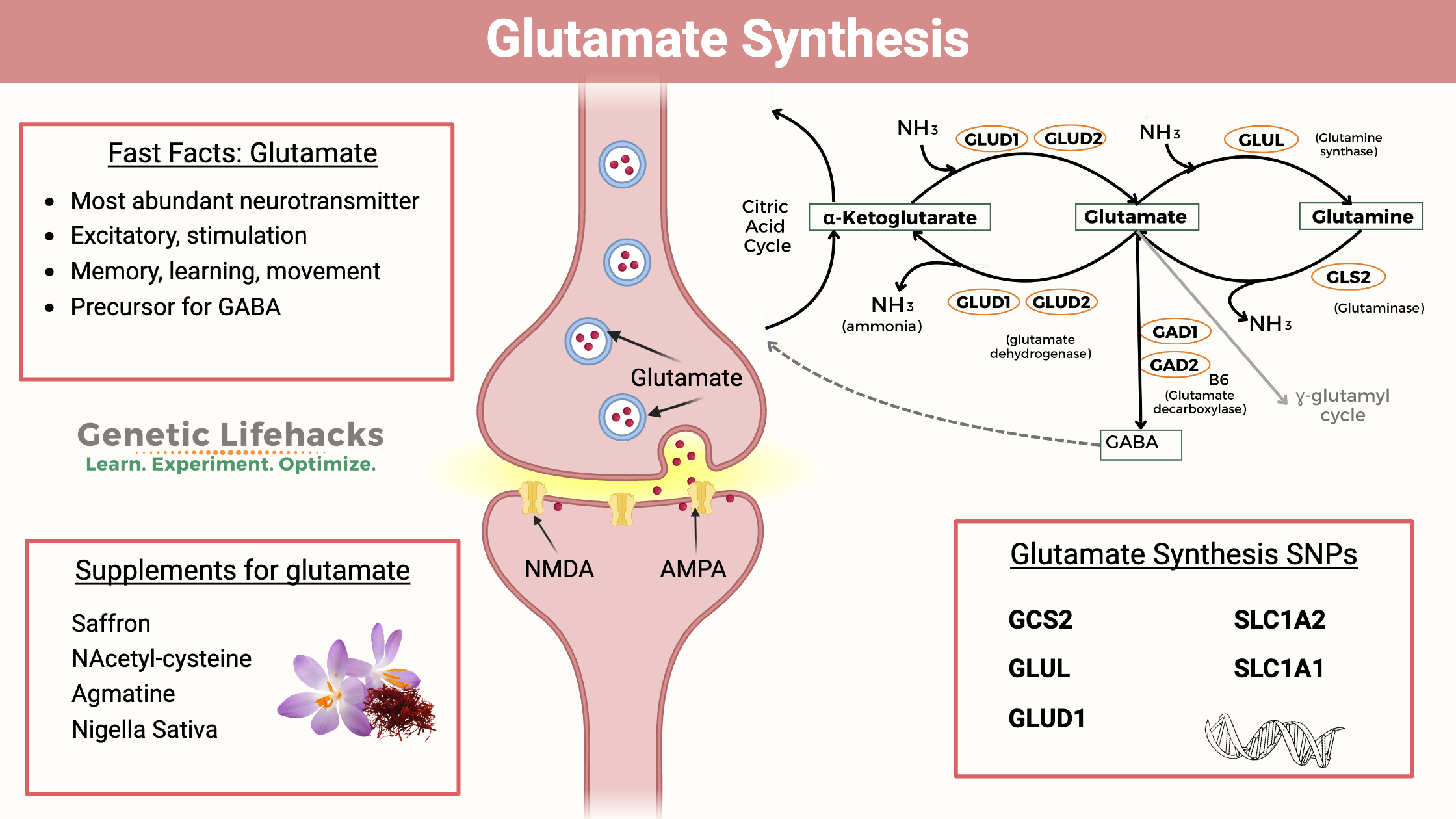

In human biology, glutamate is the most abundant excitatory neurotransmitter in the central nervous system. In the language of technology, think of glutamate as the “high-priority interrupt” or the “activation function” of the human operating system. It is responsible for sending signals between nerve cells, playing a vital role in learning, memory, and cognitive processing.

The Brain’s Primary Logic Gate

In a digital circuit, information is processed through binary logic gates (AND, OR, NOT). In the human brain, glutamate plays a comparable gating role: when it binds to receptors on a receiving neuron, it depolarizes the cell and pushes it toward its firing threshold. Without enough excitatory input, the “circuit” remains idle. By studying how glutamate-driven synapses pass signals only when there is something to signal, tech developers are learning how to create “sparse” neural networks that activate only when necessary, which can substantially reduce the energy consumption of modern AI models.
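
The threshold idea above can be sketched in a few lines of Python. This is a minimal illustration, not a model of real neurons: the names `fires` and `active_fraction`, the threshold of 1.0, and the input values are all assumptions made for the example.

```python
def fires(excitatory_inputs, threshold=1.0):
    """Return True when the summed excitatory drive reaches the threshold."""
    return sum(excitatory_inputs) >= threshold


def active_fraction(layer_inputs, threshold=1.0):
    """Fraction of units in a layer that fire for the given inputs."""
    fired = [fires(inputs, threshold) for inputs in layer_inputs]
    return sum(fired) / len(fired)


# Each inner list is the (made-up) excitatory input arriving at one unit.
layer = [
    [0.2, 0.1],        # weak drive: stays idle
    [0.6, 0.7],        # strong drive: fires
    [0.0, 0.3, 0.2],   # weak drive: stays idle
    [0.9, 0.4],        # strong drive: fires
]
print(active_fraction(layer))  # 0.5
```

Only half of the units do any work for this input, which is the sense in which a “sparse” network saves energy.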

Synaptic Plasticity and Machine Learning

One of the most critical concepts in AI is “weighting”: each connection in a machine learning model carries a numeric weight that sets how strongly one unit influences another, and training adjusts those weights. This is loosely analogous to Long-Term Potentiation (LTP), a process that depends on glutamate receptors. LTP strengthens the connection between neurons that are repeatedly active together. In tech, this translates to the optimization of algorithms; just as glutamate helps the brain “hardwire” a memory, learning rules inspired by it help AI systems refine their predictive accuracy over time by reinforcing the pathways that prove useful.
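
A Hebbian-style update is one simple way to picture LTP-like strengthening in code. The sketch below is an assumption-laden toy: `hebbian_update`, the learning rate, and the weight cap are hypothetical, and most production models are trained with gradient descent rather than a rule like this.

```python
def hebbian_update(weight, pre, post, learning_rate=0.1, max_weight=1.0):
    """Strengthen a connection when both sides are active together.

    pre and post are activity levels in [0, 1]; the cap keeps the weight bounded.
    """
    new_weight = weight + learning_rate * pre * post
    return min(new_weight, max_weight)


w = 0.2
for _ in range(5):
    # Both neurons are fully active on every step, so the weight keeps growing.
    w = hebbian_update(w, pre=1.0, post=1.0)
print(round(w, 2))  # 0.7
```

If either side is silent (`pre=0.0` or `post=0.0`), the weight is left unchanged, which mirrors the activity-dependent nature of LTP.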

2. From Biology to Silicon: Mimicking Glutamate Pathways in AI Hardware

A major constraint in today’s computers is the “Von Neumann bottleneck”: because the processor and memory are separate, data must shuttle between them, which costs time and energy. To address this, parts of the tech industry are investing in neuromorphic engineering, creating chips that behave more like networks of brain cells. Here, the concept of the glutamate signal is translated into hardware through “memristors” and spiking neural networks (SNNs).

Neuromorphic Engineering and Spiking Neural Networks (SNNs)

Traditional AI, like the Large Language Models (LLMs) we see today, requires massive amounts of power in part because most of the “neurons” in a dense model take part in every calculation. Neuromorphic chips, such as Intel’s Loihi or IBM’s TrueNorth, operate differently. They run Spiking Neural Networks that mimic the “all-or-nothing” firing of a neuron once glutamate has pushed it past threshold. These chips consume most of their dynamic power only when a signal (a “spike”) occurs. Borrowing this event-driven efficiency, hardware designers have reported processors that are up to 1,000 times more energy-efficient than conventional processors on specific workloads, although the gains depend heavily on the task.
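
The spiking behavior described above is commonly modeled with a leaky integrate-and-fire (LIF) neuron. The following is a minimal discrete-time sketch; the function name `simulate_lif` and the threshold, leak, and input values are illustrative assumptions, not parameters of Loihi or TrueNorth.

```python
def simulate_lif(input_current, threshold=1.0, leak=0.9, reset=0.0):
    """Simulate a discrete-time leaky integrate-and-fire neuron.

    Returns a list of 0/1 spikes, one per input step.
    """
    potential = reset
    spikes = []
    for current in input_current:
        potential = leak * potential + current  # leak, then integrate
        if potential >= threshold:
            spikes.append(1)   # all-or-nothing spike
            potential = reset  # membrane resets after firing
        else:
            spikes.append(0)
    return spikes


inputs = [0.0, 0.6, 0.6, 0.0, 0.0, 0.3, 0.9]
spikes = simulate_lif(inputs)
print(spikes)  # [0, 0, 1, 0, 0, 0, 1]
```

The neuron is silent on five of the seven steps. On event-driven hardware, those silent steps are where the energy savings come from.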

Memristors: The Hardware Equivalent of Glutamate Release

A memristor is a type of electronic component whose resistance depends on the amount of charge that has previously flowed through it, so it “remembers” its history even when power is removed. This makes it one of the closest hardware analogues to glutamate-mediated synaptic strength in the human brain. In experimental AI accelerators and edge computing devices, memristors let the hardware store and update weights at the circuit level. This reduces the need to shuttle data between memory and processor, which could allow faster, more autonomous processing in everything from self-driving cars to real-time language translation devices.
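
To make the “remembers charge” idea concrete, here is a toy model in which resistance moves linearly between an “off” and an “on” value as charge passes through. The class `ToyMemristor` and every numeric value in it are assumptions chosen for illustration; real devices are nonlinear and considerably messier.

```python
class ToyMemristor:
    """Toy memristor: resistance depends on the net charge that has flowed."""

    def __init__(self, r_on=100.0, r_off=16000.0, state=0.0, charge_scale=1e-3):
        self.r_on = r_on                  # resistance when fully "on" (ohms)
        self.r_off = r_off                # resistance when fully "off" (ohms)
        self.state = state                # internal state in [0, 1]
        self.charge_scale = charge_scale  # charge (coulombs) for a full sweep

    def resistance(self):
        """Blend between r_off and r_on according to the internal state."""
        return self.r_on * self.state + self.r_off * (1.0 - self.state)

    def apply_current(self, current, duration):
        """Pass a current (amps) for a duration (seconds) and update the state."""
        charge = current * duration
        self.state = min(1.0, max(0.0, self.state + charge / self.charge_scale))


m = ToyMemristor()
before = m.resistance()
m.apply_current(current=1e-3, duration=0.5)  # half of a full sweep of charge
after = m.resistance()
print(before, after)  # resistance is lower after the charge has flowed
```

The stored `state` plays the role of a synaptic weight: it persists between calls and only changes when current flows, and a reverse current moves it back.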

3. Tech Applications: Why Glutamate Research Matters for Software and Security

The implications of glutamate-inspired tech extend beyond laboratory experiments. Related ideas are beginning to influence the software we use daily, the digital security protocols that protect our data, and the apps that manage our lives.

Enhancing Energy Efficiency in Large Language Models

One of the biggest criticisms of modern AI is its carbon footprint. Published estimates for some very large models put a single training run on the order of a thousand megawatt-hours of electricity, comparable to the annual electricity use of roughly a hundred U.S. households. By implementing “Glutamate-Driven Sparsity” (known in practice as sparse activation or mixture-of-experts routing) in software design, developers can create models that activate only the specific “clusters” of parameters relevant to an input, much like how the brain doesn’t engage every region to perform a simple task. This “sparse activation” is one key to bringing powerful AI tools to mobile devices and gadgets without draining their batteries quickly.
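
Sparse activation can be sketched as top-k routing: score every “cluster,” then run only the best few. In the example below, the function names, the four toy experts, and the gate scores are all hypothetical; real mixture-of-experts layers learn the gate and usually combine expert outputs with learned weights rather than a plain average.

```python
def top_k_indices(scores, k):
    """Indices of the k highest scores, highest first."""
    return sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)[:k]


def sparse_forward(x, experts, gate_scores, k=2):
    """Run only the k best-scoring experts and average their outputs."""
    chosen = top_k_indices(gate_scores, k)
    outputs = [experts[i](x) for i in chosen]
    return sum(outputs) / len(outputs), chosen


# Four hypothetical "experts"; each is just a simple function here.
experts = [
    lambda x: x + 1,
    lambda x: x * 2,
    lambda x: x - 3,
    lambda x: x * x,
]
gate_scores = [0.1, 0.7, 0.05, 0.15]  # assumed relevance of each expert

result, used = sparse_forward(3.0, experts, gate_scores, k=2)
print(result, used)  # 7.5 [1, 3]
```

Two of the four experts are never evaluated for this input, so the compute cost scales with `k` rather than with the total number of experts.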

Digital Security and Pattern Recognition

In the realm of digital security, the ability to identify an anomaly, such as a cyberattack or a fraudulent transaction, requires fast pattern recognition. Glutamate pathways support “high-fidelity” signal transmission, helping the brain filter out noise and focus on threats. Security tooling can apply the same principle, which this article calls “Synthetic Glutamate Signaling.” Such AI-driven tools use bio-mimetic filters to ignore “white noise” network traffic and trigger an immediate response when a pattern matches a known threat vector or departs sharply from normal behavior, with the aim of reducing the “Time to Detect” (TTD) in data breaches.
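
A very simple version of “ignore the noise, fire on the outlier” is a z-score filter over a traffic metric. The sketch below uses only Python’s standard `statistics` module; the function `find_anomalies`, the threshold of three standard deviations, and the traffic numbers are assumptions for illustration, not a description of any real security product.

```python
from statistics import mean, stdev


def find_anomalies(baseline, observations, z_threshold=3.0):
    """Return observations that deviate strongly from the baseline.

    baseline: values considered normal (e.g. requests per second).
    """
    mu = mean(baseline)
    sigma = stdev(baseline)
    return [x for x in observations if abs(x - mu) > z_threshold * sigma]


baseline = [100, 102, 98, 101, 99, 100, 103, 97]   # made-up normal traffic
observations = [101, 99, 250, 100, 96]             # one obvious spike
print(find_anomalies(baseline, observations))  # [250]
```

Small fluctuations stay below the threshold and produce no output, while the spike triggers immediately, much like a neuron that only fires on strong input.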

Real-Time Edge Computing and Sensory Processing

Edge computing refers to processing data on the device itself (like a drone or a smart camera) rather than sending it to a distant cloud server. This requires extreme efficiency. By using what we might call “Glutamate-Logic” (event-driven processing that stays quiet until something changes), sensors can process visual or auditory data in real time. For instance, a smart security camera using this tech wouldn’t just record video; it would “perceive” motion using a spiking neural network, only “firing” a notification when the movement matches a specific, learned pattern, such as a human face versus a swaying tree branch.
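
The event-driven part of the camera example can be approximated with plain frame differencing. This sketch covers only the trigger, not the learned pattern matching (telling a face from a branch would need a trained model); the function names, thresholds, and tiny 3x3 “frames” are assumptions.

```python
def changed_pixels(previous, current, pixel_delta=30):
    """Count pixels whose brightness changed by more than pixel_delta."""
    return sum(
        1
        for prev_row, cur_row in zip(previous, current)
        for p, c in zip(prev_row, cur_row)
        if abs(c - p) > pixel_delta
    )


def should_notify(previous, current, min_changed=3):
    """Fire only when enough pixels changed between two frames."""
    return changed_pixels(previous, current) >= min_changed


still = [[10, 10, 10], [10, 10, 10], [10, 10, 10]]
flicker = [[10, 12, 10], [10, 10, 11], [10, 10, 10]]       # small noise
intruder = [[10, 200, 200], [10, 200, 200], [10, 10, 10]]  # large change

print(should_notify(still, flicker), should_notify(still, intruder))  # False True
```

Because nothing downstream runs unless `should_notify` returns `True`, the device spends almost no effort on an unchanging scene.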

4. The Future of Biocomputing and Synthetic Intelligence

As we look toward the 2030s, the boundary between biological systems and digital systems is blurring. The study of glutamates is leading us toward “Wetware”—a field where biological components are integrated with silicon to create hybrid computing systems.

Wetware: Integrating Biological Signals with Digital Circuits

Research is currently underway into “Brain-on-a-Chip” technology, where live neurons (which communicate via glutamate) are grown on top of microelectrode arrays. Early experiments are testing whether these systems can learn simple tasks and whether they might eventually rival traditional AI in complex, unpredictable environments. If perfected, this could lead to a new era of “Organic AI,” where the glutamate-driven efficiency of biological life is harnessed to solve computational problems that are currently too complex for even the most powerful supercomputers.

Ethical Considerations in Bio-Mimetic Technology

As we create technology that mimics the fundamental building blocks of human thought and memory, we face new ethical frontiers in digital security and AI governance. If a machine uses a “glutamate-style” architecture to achieve advanced learning, or even some form of synthetic consciousness, how do we regulate its “training”? The tech industry is currently debating frameworks for “Neuro-ethics” in AI, with the goal of ensuring that as our tools become more like us, they remain under human control and operate within safe parameters.

Conclusion: The Silicon Synapse

When we ask “what are glutamates” in the context of modern technology, the answer is clear: they are the architectural inspiration for the next century of innovation. By moving away from the rigid, power-hungry models of the past and toward the fluid, excitatory signaling of the glutamate system, the tech industry is unlocking new levels of efficiency and intelligence.

From the development of neuromorphic chips that power our gadgets to the sparse neural networks that make our software smarter and our digital security more robust, the glutamate model is the key. As we continue to bridge the gap between biology and technology, we are not just building faster computers; we are building a digital ecosystem that thinks, learns, and reacts with the elegance of the human mind. The future of tech isn’t just in the code—it’s in the chemistry of the signal.
