In the rapidly evolving landscape of technology, from software engineering to artificial intelligence, the difference between a successful product and a failed launch often hinges on a single factor: evidence. As tech companies transition away from “gut-feeling” development toward data-driven decision-making, the scientific method has become the backbone of the industry. At the heart of this method lies a fundamental framework—the distinction between experimental and control groups.

Whether you are a product manager optimizing a mobile app’s user interface, a data scientist refining a machine learning model, or a DevOps engineer testing server performance, understanding these two groups is essential. This framework allows technical teams to isolate variables, measure impact accurately, and ensure that every update brings genuine value to the ecosystem.

The Core Framework: Defining Groups in Digital Environments

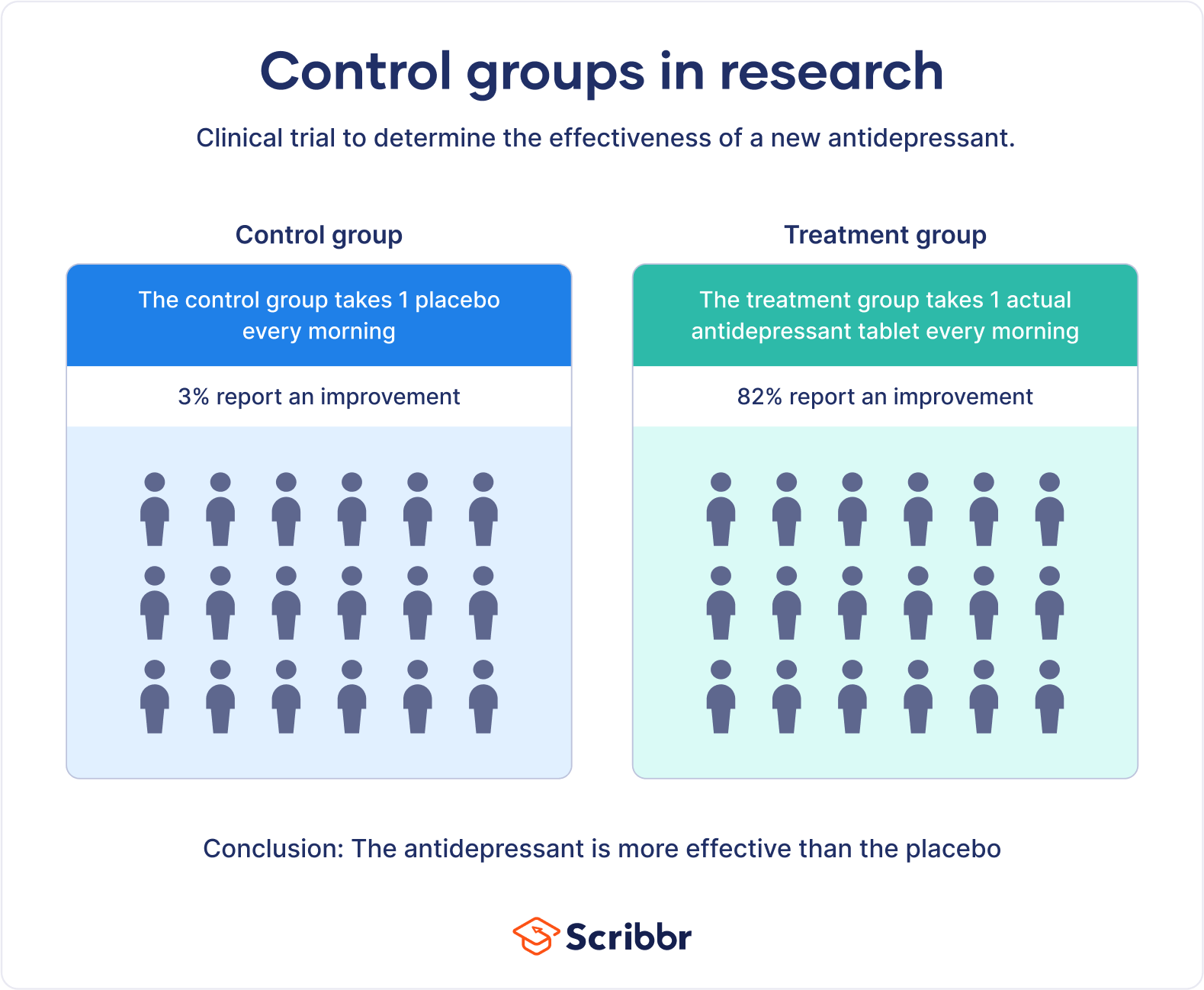

In any technical experiment, the goal is to determine cause and effect. If a developer changes a line of code and the system speeds up, was it the code change that caused the improvement, or was it a decrease in network traffic at that specific moment? To answer this, we utilize two distinct groups to isolate the “treatment.”

The Control Group: Your Baseline for Stability

The control group serves as the benchmark. In the context of software development, the control group consists of users or processes that continue to interact with the system in its current, “as-is” state. This group receives no changes, no new features, and no experimental patches.

The primary purpose of the control group is to provide a baseline for comparison. Without it, developers have no way of knowing if changes in metrics—such as latency, click-through rates, or churn—are the result of the new intervention or external factors like seasonal trends, hardware fluctuations, or user behavior shifts. For instance, if a fintech app sees a spike in logins during tax season, a control group helps the team realize the spike is external, rather than a result of their new login screen design.
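The tax-season example above can be worked through with a few lines of arithmetic. This is only a sketch: the login counts are made up for illustration, and `login_change` is a hypothetical helper, not part of any analytics library.

```python
def login_change(before: int, after: int) -> float:
    """Relative change in logins between two periods."""
    return (after - before) / before


# Hypothetical weekly login counts, before and during tax season.
control_change = login_change(before=10_000, after=13_000)       # old login screen
experimental_change = login_change(before=10_000, after=13_400)  # new login screen

# Without a control group, the whole spike would be credited to the redesign.
naive_effect = experimental_change

# With a control group, the seasonal spike shared by both groups is subtracted out.
adjusted_effect = experimental_change - control_change

print(f"naive estimate:    {naive_effect:.1%}")
print(f"adjusted estimate: {adjusted_effect:.1%}")
```

Under these assumed numbers, most of the apparent improvement is the seasonal spike that both groups share, and only the remainder can be attributed to the new design.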

The Experimental Group: Testing the Innovation

The experimental group (sometimes referred to as the treatment group) is the cohort that is exposed to the independent variable—the new feature, the updated algorithm, or the experimental UI. This is where the “test” actually happens.

In a tech environment, the experimental group is carefully monitored. Every interaction is logged and measured against the same metrics applied to the control group. The key is to ensure that the only difference between the two groups is the specific change being tested. If the experimental group shows a statistically significant improvement in performance or engagement compared to the control group, the development team can confidently conclude that the change was effective.

A/B Testing and Product Optimization

In the tech industry, the most common application of experimental and control groups is A/B testing (or split testing). This methodology is used by giants like Google, Amazon, and Netflix to make thousands of incremental improvements to their platforms every day.

Optimizing User Experience (UX)

User experience is rarely a matter of subjective beauty; it is a matter of measurable utility. When a tech team wants to improve a user journey, such as the checkout process on an e-commerce platform, they use experimental and control groups to test hypotheses.

For example, a team might hypothesize that changing a “Buy Now” button from blue to green will increase conversions.

- Control Group: 50% of users see the original blue button.

- Experimental Group: 50% of users see the new green button.

By tracking the conversion rate of both groups, the team can determine if the color change actually influences behavior. This removes the subjectivity of design and replaces it with empirical data.
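As a rough sketch of what "tracking the conversion rate of both groups" might look like in code, the snippet below compares the two buttons. The visitor and conversion counts are invented for illustration, and `GroupStats` and `relative_lift` are hypothetical names rather than an established API.

```python
from dataclasses import dataclass


@dataclass
class GroupStats:
    """Raw counts for one arm of the test."""
    visitors: int
    conversions: int

    @property
    def conversion_rate(self) -> float:
        # Guard against an empty group so the division is always defined.
        return self.conversions / self.visitors if self.visitors else 0.0


def relative_lift(control: GroupStats, experimental: GroupStats) -> float:
    """Relative change of the experimental rate versus the control rate."""
    if control.conversion_rate == 0:
        raise ValueError("control conversion rate is zero; lift is undefined")
    return (experimental.conversion_rate - control.conversion_rate) / control.conversion_rate


# Hypothetical counts, made up for illustration only.
blue = GroupStats(visitors=10_000, conversions=500)    # control: original button
green = GroupStats(visitors=10_000, conversions=550)   # experimental: new button

print(f"control rate:      {blue.conversion_rate:.2%}")
print(f"experimental rate: {green.conversion_rate:.2%}")
print(f"relative lift:     {relative_lift(blue, green):.1%}")
```

A raw lift like this is only a starting point; whether it reflects a real effect or random variation still has to be checked statistically.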

Measuring Conversion Rates and Engagement

Beyond visual changes, experimental groups are vital for testing backend logic. Subscription-based apps often test different “paywall” timings. Does showing the pricing page after three minutes of use result in more sign-ups than showing it after ten minutes? By assigning new users to different experimental groups, data analysts can find the “sweet spot” of engagement where users feel the value of the product before being asked to pay, thus maximizing Lifetime Value (LTV).

The Role of Controlled Experiments in Artificial Intelligence

The importance of experimental and control groups extends deep into the realm of Artificial Intelligence (AI) and Machine Learning (ML). In these fields, the “groups” often refer to datasets and model versions rather than just human users.

Training vs. Validation Sets

While not identical to the traditional definition of experimental groups, the logic of “split testing” is foundational to training AI. When developing a neural network, data is split into training sets and validation/test sets.

The “control” in this sense can be viewed as the baseline model (the current champion model), while the “experimental” version is the new model with adjusted hyperparameters or a different architecture. By running the same “hold-out” dataset, which neither model saw during training, through both models, engineers can see which model generalizes better to unseen information.
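The champion-versus-challenger comparison can be sketched in a few lines. Everything here is a toy assumption: the two "models" are simple threshold rules standing in for real trained models, and the hold-out data is invented. The point is only that both models are scored on the same held-out examples.

```python
from typing import Callable, Sequence

Model = Callable[[float], int]


def accuracy(model: Model, inputs: Sequence[float], labels: Sequence[int]) -> float:
    """Fraction of hold-out examples the model labels correctly."""
    correct = sum(1 for x, y in zip(inputs, labels) if model(x) == y)
    return correct / len(labels)


# Toy hold-out set (hypothetical): neither model was fitted on these points.
holdout_x = [0.10, 0.35, 0.40, 0.42, 0.45, 0.55, 0.60, 0.80]
holdout_y = [0, 0, 1, 0, 1, 1, 1, 1]


def champion(x: float) -> int:
    # Baseline ("control") model: current decision threshold.
    return int(x >= 0.5)


def challenger(x: float) -> int:
    # Candidate ("experimental") model: adjusted threshold.
    return int(x >= 0.4)


champion_acc = accuracy(champion, holdout_x, holdout_y)
challenger_acc = accuracy(challenger, holdout_x, holdout_y)
print(f"champion accuracy:   {champion_acc:.3f}")
print(f"challenger accuracy: {challenger_acc:.3f}")
```

With a hold-out set this small, a difference of one example is not meaningful on its own; a real comparison would use far more data and more than one metric.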

Benchmarking Model Performance

In the deployment of Large Language Models (LLMs), developers often use “A/B deployments” or “Canary releases.” When a new version of an AI model is ready, it isn’t rolled out to everyone at once. Instead, a small percentage of queries (the experimental group) is routed to the new model, while the majority (the control group) stays on the stable version.

Engineers monitor the experimental group for “hallucinations,” latency issues, or decreased accuracy. If the experimental group outperforms the control group across key performance indicators (KPIs), the new model is gradually promoted to the entire user base.
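One way to picture the gradual promotion described above is a small decision function that either widens the rollout or pulls it back. This is a minimal sketch under stated assumptions: the rollout steps, the two KPIs, and the 10% latency tolerance are hypothetical choices, and `Kpis` and `next_rollout_percent` are illustrative names, not part of any deployment tool.

```python
from dataclasses import dataclass


@dataclass
class Kpis:
    error_rate: float       # fraction of responses flagged as incorrect
    p95_latency_ms: float   # 95th-percentile response time


# Hypothetical rollout schedule, as a percentage of queries sent to the new model.
ROLLOUT_STEPS = [1, 5, 25, 50, 100]


def next_rollout_percent(
    current: int,
    stable: Kpis,
    candidate: Kpis,
    max_latency_regression: float = 1.10,
) -> int:
    """Return the next traffic share for the candidate model, or 0 to roll back."""
    healthy = (
        candidate.error_rate <= stable.error_rate
        and candidate.p95_latency_ms <= stable.p95_latency_ms * max_latency_regression
    )
    if not healthy:
        return 0  # send all traffic back to the stable model
    for step in ROLLOUT_STEPS:
        if step > current:
            return step  # promote to the next stage
    return 100  # already fully rolled out


stable = Kpis(error_rate=0.020, p95_latency_ms=800.0)
candidate = Kpis(error_rate=0.015, p95_latency_ms=820.0)
print(next_rollout_percent(5, stable, candidate))
```

A production check would also confirm that the KPI differences are statistically meaningful before promoting, since a small canary group produces noisy measurements.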

Best Practices for Tech-Driven Experiments

Setting up experimental and control groups sounds straightforward, but in complex digital ecosystems, “noise” can easily corrupt the data. Following rigorous standards is necessary to ensure the results are actionable.

Randomization and Eliminating Bias

The most critical element of a valid experiment is randomization. In tech, this means ensuring that users are assigned to either the control or experimental group purely by chance.

If a streaming service tests a new recommendation algorithm but accidentally puts all its “power users” into the experimental group, the results will be skewed. The experimental group might show higher engagement, not because the algorithm is better, but because those users were already more active. Effective tech experiments assign users by hashing their IDs or by drawing random numbers, so that both groups are demographically and behaviorally similar, on average, before the test begins.
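Hash-based assignment can be sketched as follows. This is one possible approach, not a description of any particular company's system: the function name `assign_group`, the experiment label, and the use of SHA-256 are all assumptions made for the example.

```python
import hashlib


def assign_group(user_id: str, experiment: str, treatment_share: float = 0.5) -> str:
    """Deterministically assign a user to 'control' or 'experimental'.

    The experiment name is mixed into the hash so the same user can land
    in different groups across unrelated experiments.
    """
    key = f"{experiment}:{user_id}".encode("utf-8")
    digest = hashlib.sha256(key).digest()
    # Map the first 8 bytes of the digest to a number in [0, 1).
    fraction = int.from_bytes(digest[:8], "big") / 2**64
    return "experimental" if fraction < treatment_share else "control"


# Hypothetical user IDs and experiment name.
groups = [assign_group(f"user-{i}", "new-recommendations") for i in range(10_000)]
share = groups.count("experimental") / len(groups)
print(f"experimental share: {share:.1%}")
```

Because the assignment depends only on the user ID and the experiment name, a returning user always sees the same variant, and the split does not depend on how active that user is.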

Statistical Significance: Knowing When Results Matter

A common pitfall in tech experiments is “peeking” at the data too early. If the experimental group shows a 5% lead after one hour, a developer might be tempted to declare victory. However, small sample sizes lead to high variance, and repeatedly checking results and stopping at the first favorable reading inflates the rate of false positives.

Professional tech teams rely on statistical significance. This is usually expressed as a p-value: the probability of seeing a difference at least as large as the one observed if the change actually had no effect. Many tech companies require a 95% confidence level (a p-value below 0.05) before implementing a change. This reduces the risk of spending resources on “improvements” that are actually just statistical noise.
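For conversion-style metrics, one common way to obtain a p-value is a two-proportion z-test. The sketch below uses only the Python standard library. The counts are hypothetical, and the choice of a pooled, two-sided z-test is an assumption for the example; other tests may suit other metrics better.

```python
import math


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int) -> tuple[float, float]:
    """Two-sided z-test for a difference between two conversion rates.

    Returns (z, p_value). Group A is the control, group B the experimental group.
    """
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled rate under the null hypothesis that both groups convert equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("standard error is zero; the test is undefined")
    z = (p_b - p_a) / se
    # Two-sided tail probability of the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical counts: 5.0% vs 5.5% conversion with 10,000 users per group.
z, p_value = two_proportion_z_test(conv_a=500, n_a=10_000, conv_b=550, n_b=10_000)
print(f"z = {z:.2f}, p = {p_value:.3f}")
print("significant at 0.05" if p_value < 0.05 else "not significant at 0.05")
```

With these assumed counts, a 10% relative lift does not clear the 0.05 threshold, which illustrates why a lead that looks impressive can still be noise at this sample size.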

Future Trends: Automated Testing and Machine Learning Loops

As we move toward more autonomous systems, the way we manage experimental and control groups is evolving. We are entering an era of “Continuous Experimentation.”

Multi-Armed Bandit Algorithms

Traditional A/B testing can be “wasteful” because, during the test, 50% of your users are stuck in the “losing” group (whichever one performs worse). To solve this, advanced tech companies are moving toward Multi-Armed Bandit (MAB) testing.

In this model, the algorithm automatically shifts more traffic toward whichever group is performing better, in real time. If the experimental group starts showing better results, the system gradually decreases the size of the control group, maximizing the benefit of the innovation while the test is still running.
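The traffic-shifting behavior can be illustrated with Thompson sampling, one of several bandit strategies. This is a simulation sketch, not a production allocator: the "true" conversion rates are invented and would be unknown in a real test, and `thompson_sampling` is a hypothetical function name.

```python
import random


def thompson_sampling(true_rates: dict[str, float], rounds: int, seed: int = 0) -> dict[str, int]:
    """Simulate a bandit test and return how many users each group received."""
    rng = random.Random(seed)
    successes = {arm: 0 for arm in true_rates}
    failures = {arm: 0 for arm in true_rates}
    pulls = {arm: 0 for arm in true_rates}

    for _ in range(rounds):
        # Draw a plausible conversion rate for each group from its Beta posterior.
        sampled = {
            arm: rng.betavariate(successes[arm] + 1, failures[arm] + 1)
            for arm in true_rates
        }
        # Send this user to the group with the highest sampled rate.
        chosen = max(sampled, key=sampled.get)
        pulls[chosen] += 1
        # Simulate whether the user converts (real systems observe this instead).
        if rng.random() < true_rates[chosen]:
            successes[chosen] += 1
        else:
            failures[chosen] += 1
    return pulls


# Hypothetical true conversion rates, used only to drive the simulation.
pulls = thompson_sampling({"control": 0.05, "experimental": 0.10}, rounds=5_000)
print(pulls)
```

Early on, both groups receive traffic because the posteriors are wide; as evidence accumulates, the better-performing group is chosen more and more often, which is the shift described above.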

Hyper-Personalization

In the future of tech, there may no longer be a single “control group” for an entire platform. Instead, AI will allow for “N=1” experimentation, where the experimental and control variables are tailored to individual user profiles. What works for a user in Tokyo might not work for a user in London. The concept of the experimental group will shift from “broad segments” to “individualized optimization,” creating a highly personalized digital experience driven by constant, micro-level controlled testing.

Conclusion

The use of experimental and control groups is what separates a professional tech enterprise from a speculative one. By maintaining a stable control group and introducing variables to a randomized experimental group, tech companies can navigate the complexities of software and AI with clarity.

From the color of a button to the architecture of a billion-parameter AI model, these groups provide the empirical truth needed to innovate safely and effectively. In an industry defined by rapid change, the scientific discipline of controlled experimentation remains the most reliable compass for progress.
