In the rapidly evolving landscape of social media, the battle against misinformation has become one of the most significant technical and ethical challenges for platform architects. On X (formerly Twitter), the response to this challenge is “Community Notes”—a unique, decentralized approach to fact-checking that relies on the collective intelligence of the user base rather than a centralized board of moderators. Originally launched as “Birdwatch” before the platform’s high-profile acquisition and rebranding, Community Notes represents a fundamental shift in how digital truth is negotiated in a hyper-connected world.

The Architecture of Community Notes: From Birdwatch to X

To understand what Community Notes are today, one must first look at their technical lineage. The project began as a pilot program designed to address the limitations of traditional top-down moderation. In the past, social media platforms relied on “trust and safety” teams or third-party fact-checking organizations to label or remove content. However, this method often faced criticisms of bias, lack of transparency, and an inability to scale with the millions of posts generated every hour.

The Shift to Open-Source Transparency

One of the most significant features of Community Notes in its current form is its commitment to transparency. Unlike the ranking systems behind most social feeds, the code that scores Community Notes is open source, and the notes and ratings it operates on are published as downloadable data. This allows developers and researchers to inspect, and even rerun, the logic that decides which notes are shown. By publishing the scoring code on GitHub, X permits a level of technical scrutiny that is rare in the tech industry. This transparency is intended to build trust, so that the system is seen as a tool for accuracy rather than a weapon for censorship.

How the Algorithm Ensures Diverse Perspectives

At the heart of Community Notes is a ranking algorithm designed to resist “brigading,” in which a coordinated group of users attempts to push a specific narrative. Traditional voting systems are susceptible to majority rule, where the most popular opinion wins regardless of its factual accuracy. Community Notes instead uses a “bridge-based” ranking system: for a note to be displayed publicly, it must be rated “Helpful” by contributors who have tended to disagree in their past ratings. This approach looks for consensus across ideological divides, which makes it more likely, though not certain, that a displayed note is accurate and useful to readers with different viewpoints.
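To make the contrast between majority voting and bridging concrete, here is a toy sketch in Python. It is not X’s algorithm: the production scorer infers viewpoints from rating history, whereas this sketch assumes raters have already been sorted into two hypothetical clusters, "A" and "B". The functions `majority_helpful` and `bridged_helpful` and the 0.6 agreement threshold are illustrative assumptions.

```python
from collections import defaultdict

# Each rating is (rater_cluster, rated_helpful). The clusters are a
# hypothetical stand-in for "raters who have historically disagreed".
Rating = tuple[str, bool]


def majority_helpful(ratings: list[Rating]) -> bool:
    """Simple majority: helpful if more than half of all ratings say so."""
    helpful = sum(1 for _, h in ratings if h)
    return helpful * 2 > len(ratings)


def bridged_helpful(ratings: list[Rating], min_share: float = 0.6) -> bool:
    """Toy bridging rule: every cluster must independently reach min_share."""
    by_cluster: dict[str, list[bool]] = defaultdict(list)
    for cluster, h in ratings:
        by_cluster[cluster].append(h)
    if len(by_cluster) < 2:
        return False  # no cross-perspective agreement is possible
    return all(sum(v) / len(v) >= min_share for v in by_cluster.values())


# A brigaded note: cluster A piles on, cluster B mostly objects.
brigaded = [("A", True)] * 8 + [("B", False)] * 3 + [("B", True)]
# A bridging note: both clusters mostly agree it is helpful.
bridging = [("A", True)] * 4 + [("A", False)] + [("B", True)] * 4 + [("B", False)]

print(majority_helpful(brigaded), bridged_helpful(brigaded))  # True False
print(majority_helpful(bridging), bridged_helpful(bridging))  # True True
```

A note pushed by one coordinated cluster clears a simple majority but fails the bridging rule, while a note that both clusters support passes both.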

How Community Notes Work: The Technical Process

The functionality of Community Notes is a multi-stage process involving recruitment, contribution, and evaluation. It is not a simple comment section; it is a structured data-gathering exercise that utilizes the community to curate a more accurate digital record.

Becoming a Contributor: The Onboarding Phase

Not every user on X can immediately write Community Notes. To maintain the integrity of the system, the platform requires a period of “onboarding”: new contributors must first earn the right to write notes by rating existing notes accurately. Progress is tracked by a “Rating Impact” score. If a user consistently rates notes in a way that aligns with the eventual consensus of the community, their score increases. Only after demonstrating a track record of helpfulness are users granted the ability to author their own notes. This gamified, merit-based entry system is meant to ensure that those participating in the fact-checking process are familiar with the community’s standards.

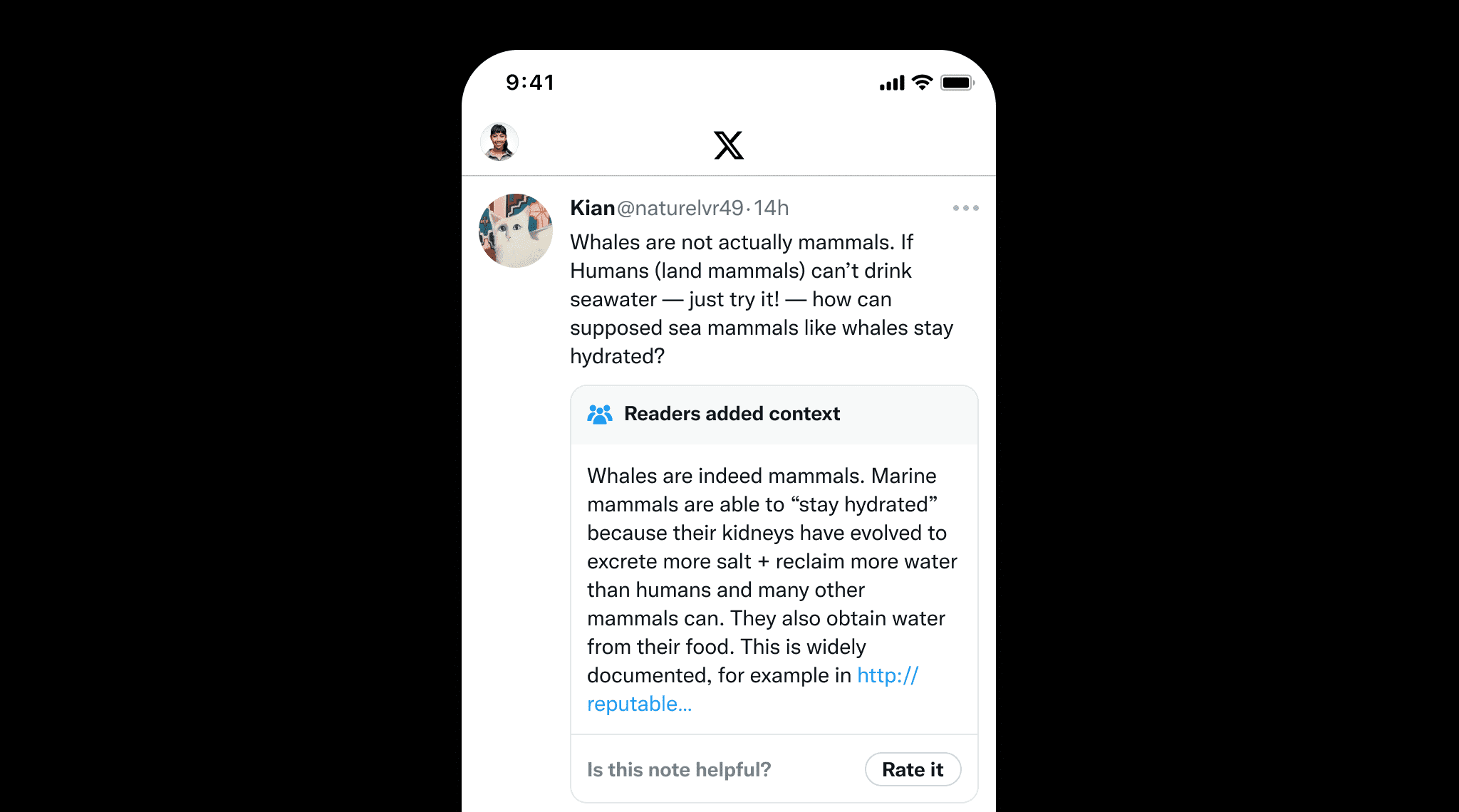
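The onboarding loop can be sketched as a small state update. This is a simplified model under stated assumptions: a rating earns +1 Rating Impact when it matches the status a note eventually reaches and -1 when it contradicts it, and writing unlocks at a hypothetical threshold of 5. The `Contributor` class and its methods are illustrative names, and X’s actual scoring rules may differ in detail.

```python
from dataclasses import dataclass, field
from enum import Enum


class Status(Enum):
    NEEDS_MORE_RATINGS = "Needs More Ratings"
    HELPFUL = "Helpful"
    NOT_HELPFUL = "Not Helpful"


WRITE_THRESHOLD = 5  # hypothetical unlock threshold for this sketch


@dataclass
class Contributor:
    # Ratings still awaiting a final note status: note_id -> rated helpful?
    pending: dict[str, bool] = field(default_factory=dict)
    rating_impact: int = 0

    def rate(self, note_id: str, helpful: bool) -> None:
        self.pending[note_id] = helpful

    def settle(self, note_id: str, final: Status) -> None:
        """Score a rating once its note leaves the pending state."""
        if final is Status.NEEDS_MORE_RATINGS or note_id not in self.pending:
            return
        agreed = self.pending.pop(note_id) == (final is Status.HELPFUL)
        self.rating_impact += 1 if agreed else -1

    @property
    def can_write(self) -> bool:
        return self.rating_impact >= WRITE_THRESHOLD


c = Contributor()
for i in range(6):
    c.rate(f"n{i}", helpful=True)
for i in range(5):
    c.settle(f"n{i}", Status.HELPFUL)  # five ratings matched the outcome
c.settle("n5", Status.NOT_HELPFUL)     # one rating did not
print(c.rating_impact, c.can_write)    # 4 False
```

Because each rating is removed from `pending` once it is scored, settling the same note twice cannot inflate the score.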

The Rating System: Helpful vs. Unhelpful

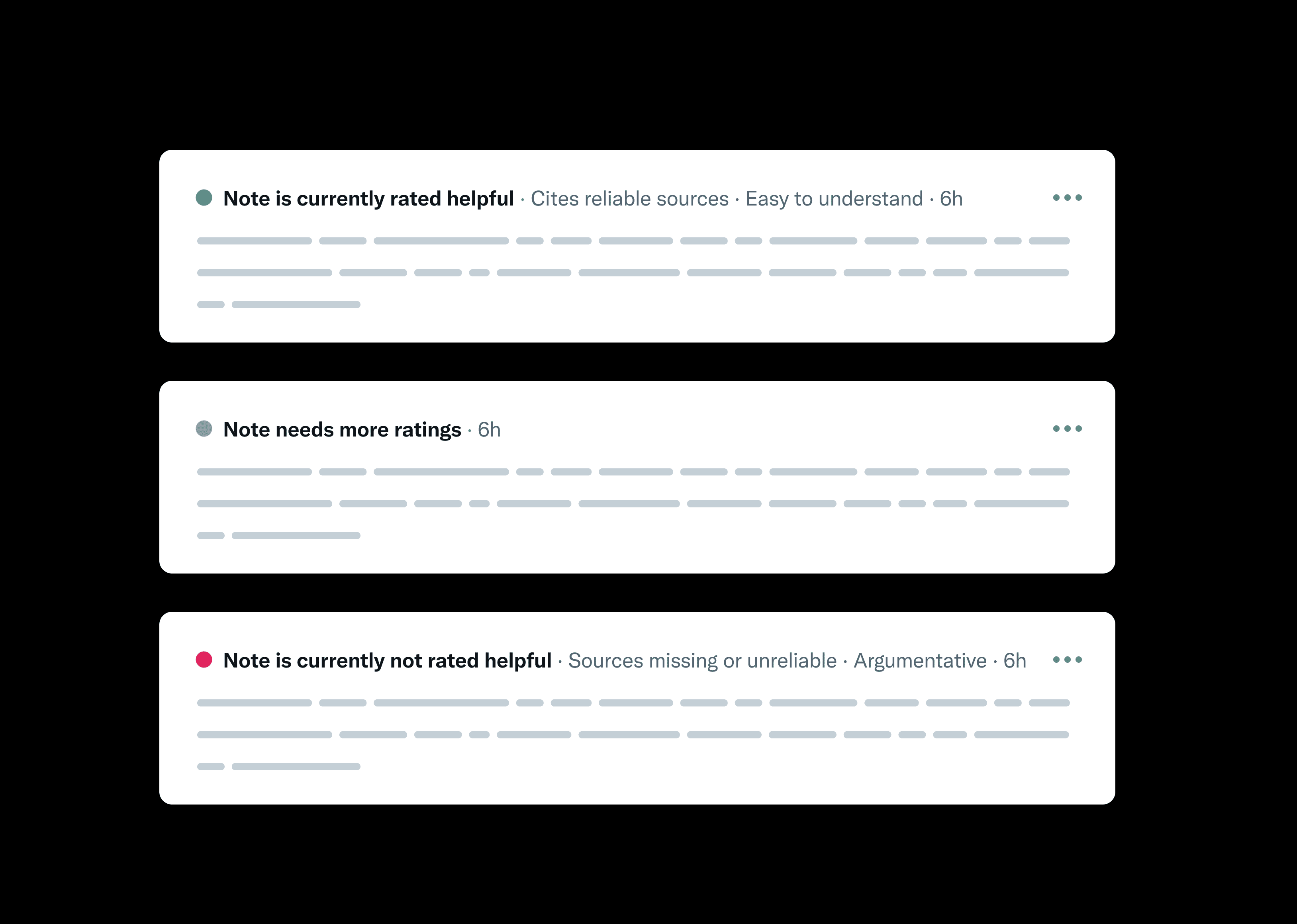

When a contributor writes a note, it enters a “pending” state and is not visible to the general public until it meets the algorithm’s threshold for helpfulness. Other contributors then rate the note, weighing questions such as: Is it supported by reliable sources? Is it written in a neutral tone? Does it address the specific claim made in the post? If a note receives high marks from a diverse group of raters, it is promoted to “Helpful” status and becomes visible to all users under the original post. If it is instead rated “Unhelpful,” or simply never gathers enough agreement, it stays hidden, which keeps low-quality or retaliatory “fact-checks” from spreading.
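The pending-to-visible lifecycle amounts to a status decision over each note’s aggregate score. The sketch below assumes that score, plus a “polarization” value describing how strongly a note’s support comes from one side, has already been computed. The names `classify` and `MIN_RATINGS` and all numeric cutoffs are illustrative assumptions, not authoritative production values; notes that clear neither cutoff stay pending, labeled here “Needs More Ratings.”

```python
from enum import Enum


class NoteStatus(Enum):
    NEEDS_MORE_RATINGS = "Needs More Ratings"  # the "pending" state
    HELPFUL = "Helpful"
    NOT_HELPFUL = "Not Helpful"


# Illustrative cutoffs for this sketch, not authoritative production values.
MIN_RATINGS = 5
HELPFUL_CUTOFF = 0.40
NOT_HELPFUL_CUTOFF = -0.05
MAX_POLARIZATION = 0.5


def classify(score: float, polarization: float, n_ratings: int) -> NoteStatus:
    """Map a note's cross-viewpoint score to a display status.

    score: helpfulness after accounting for raters' viewpoints.
    polarization: how strongly support comes from one side (0 = balanced).
    """
    if n_ratings < MIN_RATINGS:
        return NoteStatus.NEEDS_MORE_RATINGS
    if score >= HELPFUL_CUTOFF and abs(polarization) < MAX_POLARIZATION:
        return NoteStatus.HELPFUL
    # Lopsided notes need an even lower score before being ruled unhelpful.
    if score < NOT_HELPFUL_CUTOFF - 0.8 * abs(polarization):
        return NoteStatus.NOT_HELPFUL
    return NoteStatus.NEEDS_MORE_RATINGS


print(classify(0.45, 0.1, 12).value)   # Helpful
print(classify(0.45, 0.9, 12).value)   # Needs More Ratings (one-sided support)
print(classify(-0.30, 0.1, 12).value)  # Not Helpful
print(classify(0.60, 0.0, 3).value)    # Needs More Ratings (too few ratings)
```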

Bridging the Divide: Why Consensus Matters

The key design choice in the Community Notes algorithm is its rejection of the “51% majority.” In a polarized digital environment, a simple majority often reflects tribalism rather than truth. By requiring agreement from users who typically disagree (measured with a latent factor model, a form of matrix factorization fitted to contributors’ past ratings), the system identifies the “bridge” between opposing viewpoints. The goal is that the notes reaching the public feed are those that transcend partisan bickering, providing a layer of context that is acceptable to a wide range of observers.
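The latent factor idea can be sketched as a one-dimensional matrix factorization. Each rating is modeled as r ≈ μ + i_u + i_n + f_u · f_n: a global mean, a rater intercept, a note intercept, and the product of a rater factor and a note factor. Support that splits along a single viewpoint axis is absorbed by the factor term, so only support that cuts across viewpoints raises the note intercept i_n, which plays the role of a “bridged” helpfulness score. This is a minimal stochastic-gradient sketch in plain Python; the function name, learning rate, epoch count, and regularization weights are assumptions chosen for the toy data, and the open-source scorer is considerably more elaborate.

```python
import random


def fit_bridging(ratings, n_users, n_notes, epochs=2000, lr=0.05,
                 reg_intercept=0.15, reg_factor=0.03, seed=0):
    """Fit r ~ mu + i_u + i_n + f_u * f_n with 1-D factors by SGD.

    ratings: list of (user, note, value); 1.0 = helpful, 0.0 = not helpful.
    Returns (note_intercepts, note_factors).
    """
    rng = random.Random(seed)
    mu = 0.0
    i_user, i_note = [0.0] * n_users, [0.0] * n_notes
    f_user = [rng.uniform(-0.1, 0.1) for _ in range(n_users)]
    f_note = [rng.uniform(-0.1, 0.1) for _ in range(n_notes)]
    data = list(ratings)
    for _ in range(epochs):
        rng.shuffle(data)
        for u, n, r in data:
            err = r - (mu + i_user[u] + i_note[n] + f_user[u] * f_note[n])
            mu += lr * err
            # Heavier intercept regularization makes the model prefer the
            # viewpoint factor when explaining support; the intercept keeps
            # what the factor cannot account for.
            i_user[u] += lr * (err - reg_intercept * i_user[u])
            i_note[n] += lr * (err - reg_intercept * i_note[n])
            f_user[u], f_note[n] = (
                f_user[u] + lr * (err * f_note[n] - reg_factor * f_user[u]),
                f_note[n] + lr * (err * f_user[u] - reg_factor * f_note[n]),
            )
    return i_note, f_note


# Users 0-2 and 3-5 are two hypothetical camps that usually disagree.
camp_a = {0, 1, 2}
ratings = []
for u in range(6):
    ratings.append((u, 0, 1.0))                          # note 0: both camps approve
    ratings.append((u, 1, 1.0 if u in camp_a else 0.0))  # note 1: camp A only
    ratings.append((u, 2, 0.0 if u in camp_a else 1.0))  # note 2: camp B only
    ratings.append((u, 3, 0.0))                          # note 3: both reject

intercepts, factors = fit_bridging(ratings, n_users=6, n_notes=4)
for n in range(4):
    print(f"note {n}: intercept={intercepts[n]:+.2f} factor={factors[n]:+.2f}")
```

With this data, note 0 (approved by both camps) should finish with a clearly higher intercept than notes 1 and 2, whose one-sided support is explained mostly by the factor term.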

The Impact on Digital Security and Misinformation

In the age of AI-generated content and sophisticated disinformation campaigns, the speed and accuracy of content moderation are vital components of digital security. Community Notes serves as a first line of defense against the weaponization of information.

Combating Deepfakes and AI-Generated Content

With the rise of generative AI, the internet has seen a surge in “deepfakes”—hyper-realistic images and videos that can easily deceive the average user. Community Notes provides a mechanism for rapid debunking. For example, if an AI-generated image of a world leader is circulated, the community can quickly attach a note pointing to the original source or identifying the technical artifacts that prove the image is synthetic. Because the community operates 24/7 and spans the globe, these notes often appear much faster than a formal statement from a news organization or government body could.

Real-Time Fact-Checking in a High-Velocity Feed

One of the primary technical challenges for X is the speed of information. A post can go viral and reach millions of people in minutes, while traditional fact-checking can take days. Community Notes is designed to narrow this “velocity gap”: because thousands of active contributors can work simultaneously, context can be written and rated in close to real time. Furthermore, X has implemented “Media Notes,” a feature where a single note attached to a specific image or video can automatically appear on other posts containing the same media. This “hash-matching” technology means that once a piece of misleading media has been identified, the context can follow its copies across the network instead of being written again for every post.
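The exact matching method behind Media Notes is not described here, so the following sketch swaps in a well-known stand-in: the “average hash,” a perceptual hash under which near-identical images produce hashes that differ in only a few bits. It assumes images have already been decoded and downscaled to 8×8 grayscale grids; the class `MediaNoteIndex`, its 5-bit tolerance, and the note ID are hypothetical.

```python
Grid = list[list[int]]  # 8x8 grayscale pixels (0-255), already downscaled


def average_hash(pixels: Grid) -> int:
    """64-bit average hash: a bit is 1 where a pixel is brighter than the mean."""
    flat = [p for row in pixels for p in row]
    mean = sum(flat) / len(flat)
    bits = 0
    for p in flat:
        bits = (bits << 1) | (1 if p > mean else 0)
    return bits


def hamming(a: int, b: int) -> int:
    """Number of differing bits between two hashes."""
    return bin(a ^ b).count("1")


class MediaNoteIndex:
    """Hypothetical index mapping media hashes to the notes attached to them."""

    def __init__(self, max_distance: int = 5):
        self.max_distance = max_distance  # tolerance in bits (an assumption)
        self.entries: list[tuple[int, str]] = []

    def attach(self, pixels: Grid, note: str) -> None:
        self.entries.append((average_hash(pixels), note))

    def lookup(self, pixels: Grid) -> list[str]:
        """Return notes whose media is a near-duplicate of these pixels."""
        h = average_hash(pixels)
        return [note for eh, note in self.entries if hamming(h, eh) <= self.max_distance]


original = [[x * 30 + y * 2 for x in range(8)] for y in range(8)]
brighter = [[p + 3 for p in row] for row in original]  # stand-in for a lightly edited re-upload
unrelated = [[y * 30 + x * 2 for x in range(8)] for y in range(8)]

index = MediaNoteIndex()
index.attach(original, "note-123")
print(index.lookup(brighter))   # ['note-123']
print(index.lookup(unrelated))  # []
```

The uniformly brightened copy still matches, while the unrelated image differs in half of its 64 bits. A perceptual hash is used rather than a cryptographic one because re-encoding or resizing changes nearly every byte of a file but barely changes its coarse brightness pattern.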

Community Notes as a Model for Decentralized Governance

The success of Community Notes has sparked a broader conversation in the tech world about the future of platform governance. Is it possible to manage a global communication network without a centralized “arbiter of truth”?

Moving Beyond Traditional Fact-Checkers

For years, social media platforms have been caught in a “no-win” situation regarding content moderation. If they remove too much, they are accused of stifling free speech; if they remove too little, they are blamed for the spread of harmful lies. Community Notes offers a middle path. By providing “more speech” rather than “less speech,” the platform avoids the heavy-handedness of deletions and bans. The original post remains visible, but it is accompanied by the necessary context, allowing the reader to make an informed decision. This shift from censorship to contextualization is a significant evolution in digital communication policy.

Challenges and Limitations of the Crowdsourced Model

Despite its technical merits, the system is not without flaws. The reliance on volunteer labor means that not every piece of misinformation will be addressed. The system can also be “gamed” by a group patient enough to build up its members’ Rating Impact scores. Furthermore, there is the risk of the “bystander effect,” where users assume someone else will provide the necessary context. From a technical perspective, the challenge is to keep refining the algorithm to stay ahead of those who wish to exploit it. Keeping the system resilient against sophisticated bot networks and state-sponsored influence operations is an ongoing battle for the X engineering team.

The Future of Collaborative Information on Social Media

As we look toward the future of the internet, the principles behind Community Notes—transparency, decentralization, and algorithmic consensus—are likely to influence other platforms and tools.

Integration with AI and Machine Learning

The next frontier for Community Notes is the deeper integration of AI. While the human element is essential for nuance and judgment, AI can assist contributors by suggesting relevant sources, identifying similar claims made in the past, or flagging potential instances of media manipulation for human review. By combining the scale of machine learning with the critical thinking of a global community, X could create a factual infrastructure that is both robust and adaptable.
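As an illustration of the “identifying similar claims made in the past” idea, here is a minimal retrieval sketch. It uses plain bag-of-words cosine similarity as a stand-in; a production assistant would more likely use learned text embeddings. The function `similar_past_notes`, its `k` and `min_score` parameters, and the sample notes are all hypothetical. The output is a shortlist for a human contributor to review, not a verdict.

```python
import math
import re
from collections import Counter


def tokens(text: str) -> Counter:
    """Lowercased word counts; a crude stand-in for a learned embedding."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def similar_past_notes(post: str, past: dict[str, str], k: int = 3,
                       min_score: float = 0.2) -> list[str]:
    """Shortlist up to k earlier notes whose wording overlaps the new post."""
    query = tokens(post)
    scored = [(cosine(query, tokens(text)), note_id) for note_id, text in past.items()]
    scored.sort(reverse=True)
    return [note_id for score, note_id in scored[:k] if score >= min_score]


past_notes = {
    "n1": "This photo of the flooded airport is from 2017, not this week.",
    "n2": "The quoted statistic comes from a retracted study.",
}
print(similar_past_notes("Flooded airport photo shows this week's storm", past_notes))
# ['n1']
```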

Potential Applications Beyond X

The “bridge-based” consensus model developed for Community Notes has applications far beyond social media. It could be used in collaborative software development, digital encyclopedias, or even within corporate environments to facilitate decision-making among diverse stakeholders. As a technical tool, it provides a blueprint for how we might solve the “crisis of truth” in the digital age—not by relying on a few powerful gatekeepers, but by empowering the many to hold each other accountable through transparent, data-driven systems.

In conclusion, Community Notes on X represents more than just a feature; it is a bold experiment in tech-enabled democracy. By leveraging open-source algorithms and a unique consensus-driven methodology, it offers a scalable solution to the complexities of modern digital discourse. While the system continues to evolve, its impact on how we perceive and verify information in the digital square is undeniable. For the tech world, it serves as a compelling case study in how decentralized systems can be used to foster a more informed and resilient digital society.

aViewFromTheCave is a participant in the Amazon Services LLC Associates Program, an affiliate advertising program designed to provide a means for sites to earn advertising fees by advertising and linking to Amazon.com. Amazon, the Amazon logo, AmazonSupply, and the AmazonSupply logo are trademarks of Amazon.com, Inc. or its affiliates. As an Amazon Associate we earn affiliate commissions from qualifying purchases.